More on Marketing

Ivona Hirschi

3 years ago

7 LinkedIn Tips That Will Help in Audience Growth

In 8 months, I doubled my audience with them.

LinkedIn's buzz isn't over.

People dream of social proof every day. They want clients, interesting jobs, and field recognition.

LinkedIn coaches will benefit greatly. Sell learning? Probably. Can you use it?

Consistency has been key in my eight months of studying LinkedIn. Beyond that, I'll share seven of my tips. They took me from 700 to 4,500 followers.

1. Communication, communication, communication

LinkedIn is a social network. I like to think of it as a cafe. Here, you can share your thoughts, meet friends, and discuss life and work.

Do not treat LinkedIn as if it were a board for your post-its.

More socializing improves relationships. It's about people, like any network.

Consider interactions. Three main areas:

Respond to comments left on your posts.

Comment on other people's posts.

Start and maintain conversations through direct messages.

Engage people. You spend too much time on Facebook if you only read your wall. Keeping in touch and having meaningful conversations helps build your network.

Every day, start a new conversation to make new friends.

2. Stick with those you admire

Interact thoughtfully.

Choose your contacts. "Build your tribe" is the term for it. Network respectfully.

I only had past colleagues, family, and friends in my network at the start of this year. Not business-friendly. Since then, I've sought out people I admire or can learn from.

Finding a few will help you, as they connect you to their networks. Friendships can lead to clients.

Don't underestimate the power of a network. Think cafe-style: meet people at each table. But avoid people who sell SEO, web redesign, VAs, mysterious job opportunities, etc.

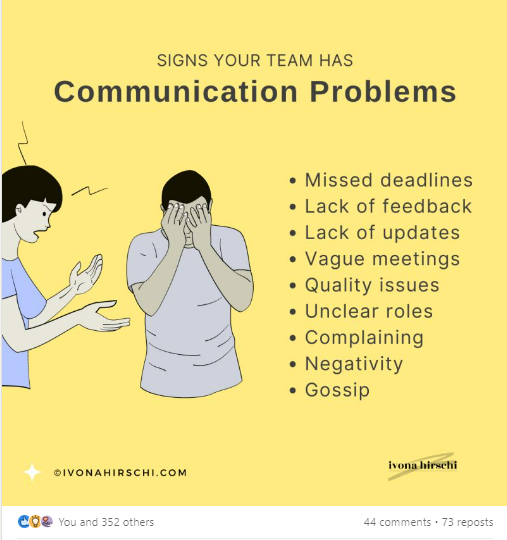

3. Share eye-catching infographics

Daily infographics flood LinkedIn. Visuals are popular. Use Canva's free templates if you can't draw them.

Last week's:

It's a fun way to visualize your topic.

You can repost and comment on infographics. Involve your network. I prefer making my own because I build my brand around certain designs.

My friend posted infographics consistently for four months and grew his network to 30,000.

If you start by reposting, credit the authors. Otherwise, you are stealing someone's work.

4. Invite some friends over

LinkedIn alone can be lonely. Having a few friends who support your work daily will boost your growth.

I was lucky to be invited to a group of networkers. We share knowledge and advice.

Having a few regulars who can discuss your posts is helpful. It's artificial, but it works and engages others.

Consider who you'd support if they were in your shoes.

You can pay for an engagement group, but you risk supporting unrelated people with rubbish posts.

Help each other out.

5. Don't let your feed or algorithm divert you

LinkedIn's algorithm is magical.

Which time is best? How fast do you need to comment? Which days are best?

Algorithms are overemphasized. Consider the user. No need to worry about the best time.

Remember to spend time on LinkedIn actively. Not passively. That is what Facebook is for.

Surely someone will find a recipe for LinkedIn. Don't try to beat the algorithm, though. Consider your audience.

6. The more personal, the better

Personalization isn't limited to selfies. Share your successes and failures.

The more personality you show, the better.

People relate to others, not theories or quotes. Why should they follow you if everyone posts the same content?

Consider your friends. What's their appeal?

Because they show their work and identity. It's simple. Medium and LinkedIn are your platforms. Find out what works.

You can copy others' hooks and structures. You decide how simple to make it, though.

7. Have fun with various post structures

I like writing, infographics, videos, and carousels. Because you can:

Repurpose your content!

Out of one blog post I make:

Newsletter

Infographics (positive and negative points of view)

Carousel

Personal stories

Listicle

Create less, but with more variety. Since LinkedIn posts last 24 hours, you can rotate the same topics for weeks without anyone noticing.

Effective!

The final LI snippet to think about

LinkedIn is about consistency. Some say 15 minutes. If you're serious about networking, spend more time there.

The good news is that it is worth it. The bad news is that it takes time.

Guillaume Dumortier

2 years ago

Mastering the Art of Rhetoric: A Guide to Rhetorical Devices in Successful Headlines and Titles

Unleash the power of persuasion and captivate your audience with compelling headlines.

As the old adage goes, "You never get a second chance to make a first impression."

In the world of content creation and social ads, headlines and titles play a critical role in making that first impression.

A well-crafted headline can make the difference between an article being read or ignored, a video being clicked on or bypassed, or a product being purchased or passed over.

To make an impact with your headlines, mastering the art of rhetoric is essential. In this post, we'll explore various rhetorical devices and techniques that can help you create headlines that captivate your audience and drive engagement.

tl;dr: Headline Magician will help you craft the ultimate headlines and titles, powered by rhetorical devices

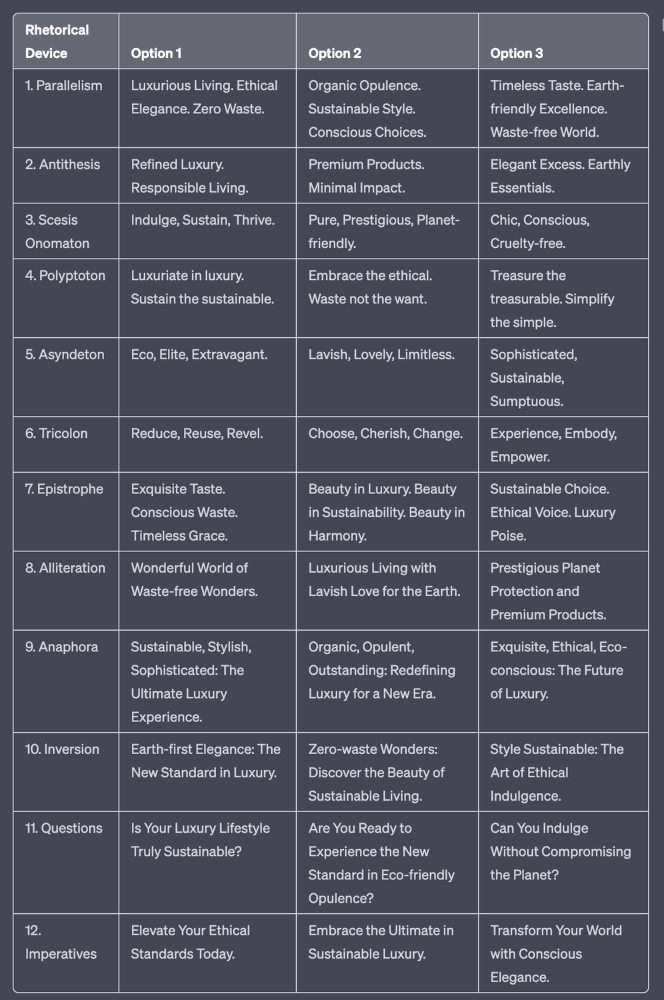

Example with a high-end luxury organic zero-waste skincare brand

✍️ The Power of Alliteration

Alliteration is the repetition of the same consonant sound at the beginning of words in close proximity. This rhetorical device lends itself well to headlines, as it creates a memorable, rhythmic quality that can catch a reader's attention.

By using alliteration, you can make your headlines more engaging and easier to remember.

Examples:

"Crafting Compelling Content: A Comprehensive Course"

"Mastering the Art of Memorable Marketing"

🔁 The Appeal of Anaphora

Anaphora is the repetition of a word or phrase at the beginning of successive clauses. This rhetorical device emphasizes a particular idea or theme, making it more memorable and persuasive.

In headlines, anaphora can be used to create a sense of unity and coherence, which can draw readers in and pique their interest.

Examples:

"Create, Curate, Captivate: Your Guide to Social Media Success"

"Innovation, Inspiration, and Insight: The Future of AI"

🔄 The Intrigue of Inversion

Inversion is a rhetorical device where the normal order of words is reversed, often to create an emphasis or achieve a specific effect.

In headlines, inversion can generate curiosity and surprise, compelling readers to explore further.

Examples:

"Beneath the Surface: A Deep Dive into Ocean Conservation"

"Beyond the Stars: The Quest for Extraterrestrial Life"

⚖️ The Persuasive Power of Parallelism

Parallelism is a rhetorical device that involves using similar grammatical structures or patterns to create a sense of balance and symmetry.

In headlines, parallelism can make your message more memorable and impactful, as it creates a pleasing rhythm and flow that can resonate with readers.

Examples:

"Eat Well, Live Well, Be Well: The Ultimate Guide to Wellness"

"Learn, Lead, and Launch: A Blueprint for Entrepreneurial Success"

⏭️ The Emphasis of Ellipsis

Ellipsis is the omission of words, typically indicated by three periods (...), which suggests that there is more to the story.

In headlines, ellipses can create a sense of mystery and intrigue, enticing readers to click and discover what lies behind the headline.

Examples:

"The Secret to Success... Revealed"

"Unlocking the Power of Your Mind... A Step-by-Step Guide"

🎭 The Drama of Hyperbole

Hyperbole is a rhetorical device that involves exaggeration for emphasis or effect.

In headlines, hyperbole can grab the reader's attention by making bold, provocative claims that stand out from the competition. Be cautious with hyperbole, however, as overuse or excessive exaggeration can damage your credibility.

Examples:

"The Ultimate Guide to Mastering Any Skill in Record Time"

"Discover the Revolutionary Technique That Will Transform Your Life"

❓ The Curiosity of Questions

Posing questions in your headlines can be an effective way to pique the reader's curiosity and encourage engagement.

Questions compel the reader to seek answers, making them more likely to click on your content. Additionally, questions can create a sense of connection between the content creator and the audience, fostering a sense of dialogue and discussion.

Examples:

"Are You Making These Common Mistakes in Your Marketing Strategy?"

"What's the Secret to Unlocking Your Creative Potential?"

💥 The Impact of Imperatives

Imperatives are commands or instructions that urge the reader to take action. By using imperatives in your headlines, you can create a sense of urgency and importance, making your content more compelling and actionable.

Examples:

"Master Your Time Management Skills Today"

"Transform Your Business with These Innovative Strategies"

💢 The Emotion of Exclamations

Exclamations are powerful rhetorical devices that can evoke strong emotions and convey a sense of excitement or urgency.

Including exclamations in your headlines can make them more attention-grabbing and shareable, increasing the chances of your content being read and circulated.

Examples:

"Unlock Your True Potential: Find Your Passion and Thrive!"

"Experience the Adventure of a Lifetime: Travel the World on a Budget!"

🎀 The Effectiveness of Euphemisms

Euphemisms are polite or indirect expressions used in place of harsher, more direct language.

In headlines, euphemisms can make your message more appealing and relatable, helping to soften potentially controversial or sensitive topics.

Examples:

"Navigating the Challenges of Modern Parenting"

"Redefining Success in a Fast-Paced World"

⚡ Antithesis: The Power of Opposites

Antithesis involves placing two opposite words side-by-side, emphasizing their contrasts. This device can create a sense of tension and intrigue in headlines.

Examples:

"Once a day. Every day"

"Soft on skin. Kill germs"

"Mega power. Mini size."

To utilize antithesis, identify two opposing concepts related to your content and present them in a balanced manner.

🎨 Scesis Onomaton: The Art of Verbless Copy

Scesis onomaton is a rhetorical device that involves writing verbless copy, which quickens the pace and adds emphasis.

Example:

"7 days. 7 dollars. Full access."

To use scesis onomaton, remove verbs and focus on the essential elements of your headline.

🌟 Polyptoton: The Charm of Shared Roots

Polyptoton is the repeated use of words that share the same root, bewitching words into memorable phrases.

Examples:

"Real bread isn't made in factories. It's baked in bakeries"

"Lose your knack for losing things."

To employ polyptoton, identify words with shared roots that are relevant to your content.

✨ Asyndeton: The Elegance of Omission

Asyndeton involves the intentional omission of conjunctions, adding crispness, conviction, and elegance to your headlines.

Examples:

"You, Me, Sushi?"

"All the latte art, none of the environmental impact."

To use asyndeton, eliminate conjunctions and focus on the core message of your headline.

🔮 Tricolon: The Magic of Threes

Tricolon is a rhetorical device that uses the power of three, creating memorable and impactful headlines.

Examples:

"Show it, say it, send it"

"Eat Well, Live Well, Be Well."

To use tricolon, craft a headline with three key elements that emphasize your content's main message.

🔔 Epistrophe: The Chime of Repetition

Epistrophe involves the repetition of words or phrases at the end of successive clauses, adding a chime to your headlines.

Examples:

"Catch it. Bin it. Kill it."

"Joint friendly. Climate friendly. Family friendly."

To employ epistrophe, repeat a key phrase or word at the end of each clause.

obimy.app

3 years ago

How TikTok helped us grow to 6 million users

This is what led to obimy's new audience.

Hi! This is obimy's official account, where we write for app developers and marketers. In 2022, our downloads increased dramatically, so we'll share what we learned.

obimy is what we call a ‘senseger’. It's a new way to communicate digitally. Instead of text, obimy users connect through senses and moods. Feeling playful? Flirt with your partner, pat a pal, or dump water on a classmate. Each feeling is an interactive animation with vibration. It's a wordless app. obimy is available on the App Store and Google Play.

We had 20,000 users in 2022. Two to five thousand of them opened the app monthly. Our DAU metric was 500.

We have 6 million users after 6 months. 500,000 individuals use obimy daily. obimy was the top lifestyle app this week in the U.S.

And TikTok helped.

TikTok fuels obimy's growth. It's why our app exploded. How and what did we learn? Our Head of Marketing, Anastasia Avramenko, knows.

Our actions prior to TikTok

We wanted to achieve product-market fit through organic expansion. Quora, Reddit, Facebook Groups, Facebook Ads, Google Ads, Apple Search Ads, and social media activity were tested. Nothing worked. Our CPI was sometimes $4, so unit economics didn't work.

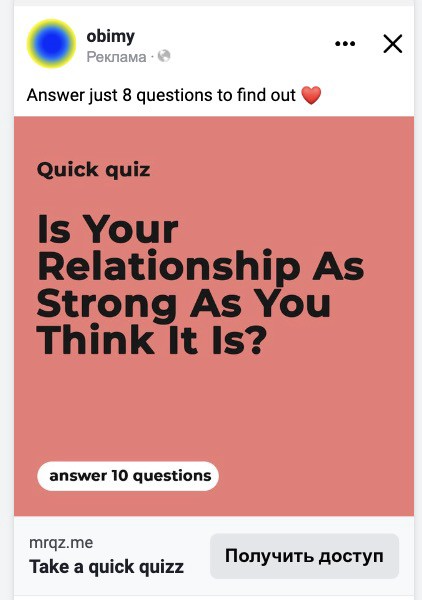

We studied our markets and made audience hypotheses. We promoted our product and studied our audience through social media quizzes. Our target demographic was Americans in long-distance relationships. I designed quizzes like "Test the Strength of Your Relationship" to better understand the user base. After each quiz, we encouraged users to download the app to enhance their connection and bridge the distance.

We got 1,000 responses for $50. This helped us comprehend the audience's grief and coping strategies (aka our rivals). I based action items on answers given. If you can't embrace a loved one, use obimy.

We also tried Facebook and Google ads. From the start, we knew it wouldn't work.

We were desperate to discover a free way to get more users.

Our journey to TikTok

TikTok is a great venue for emerging creators. It also helped reach people. Before obimy, my TikTok videos garnered 12 million views without sponsored promotion.

We had to act. TikTok was required.

I wasn't a TikTok user before obimy. Initially, I uploaded promotional content. Calls to action look strange next to dancing challenges and "My money don't jiggle jiggle." So I studied TikTok. "Watch TikTok for an hour" was on my to-do list. What a dream job!

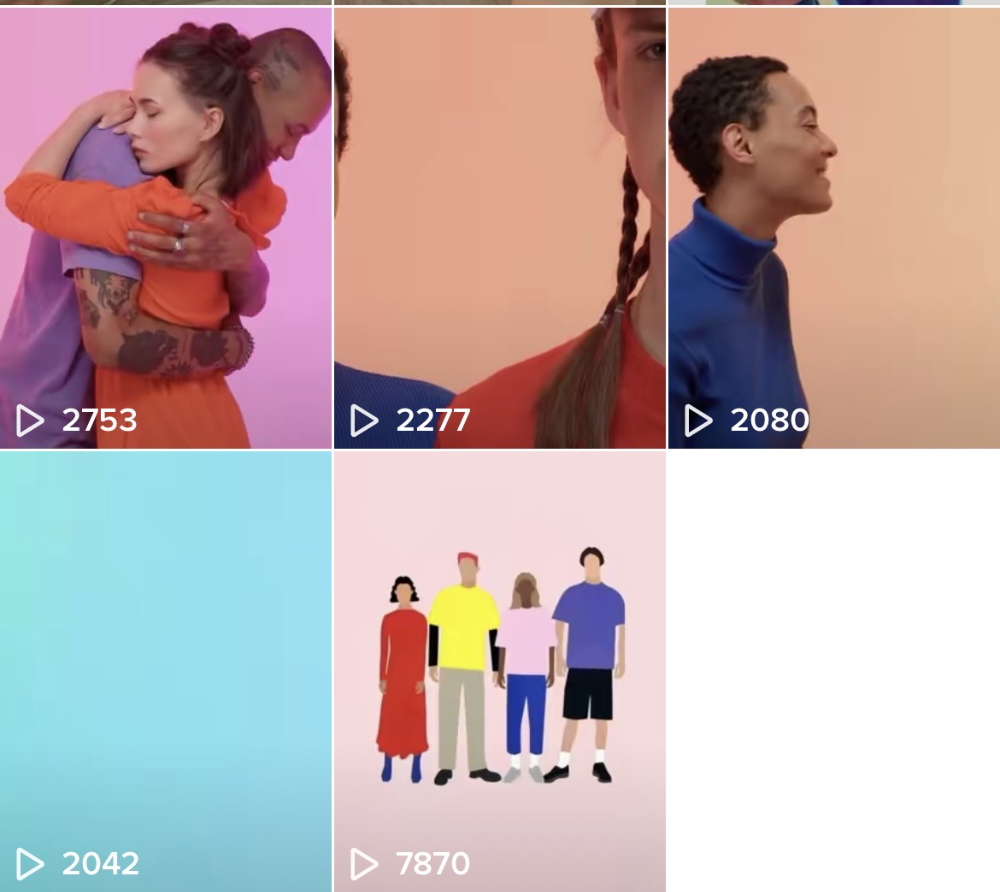

Our most popular videos presented the app alongside text outlining what it does. We started promoting them in Europe and the U.S. and got a 16% CTR and $1 CPI, an improvement over our previous efforts.

Somehow, we were expanding. So we came up with new hypotheses, calls to action, and content.

Four months passed, yet we saw no organic growth.

Russia attacked Ukraine.

Our app aimed to be helpful. For now, we're focusing on our Ukrainian audience. I posted sloppy TikToks illustrating how obimy can help during shelling or air raids.

Two hours later, Kostia sent me our visitor count. Our servers had crashed.

Initially, we had several thousand daily users. Over 200,000 users joined obimy in a week. They posted obimy videos on TikTok, drawing additional users. We've also resumed U.S. video promotion.

We gained 2,000,000 new members with less than $100 in ads, primarily in the U.S. and U.K.

TikTok helped.
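For readers who like to see the unit economics spelled out, here is a rough back-of-the-envelope sketch. It uses only figures quoted in this post ($50 for 1,000 quiz responses, a CPI that was sometimes $4 on the early paid channels, and less than $100 in ads for 2,000,000 new members). The helper name `cost_per_unit` is hypothetical and just for illustration. Because "less than $100" is an upper bound, the TikTok figure is a ceiling, not an exact cost, and treating a "new member" as equivalent to an install is an assumption.

```python
def cost_per_unit(spend_usd: float, units: int) -> float:
    """Average spend per acquired unit (install, quiz response, new member)."""
    if units <= 0:
        raise ValueError("units must be positive")
    return spend_usd / units


# Figures quoted in the post.
quiz_cost_per_response = cost_per_unit(50, 1_000)

# "Less than $100" is an upper bound, so this is a ceiling on the real cost.
tiktok_cost_ceiling = cost_per_unit(100, 2_000_000)

# "Our CPI was sometimes $4" on the early paid channels.
early_cpi = 4.0

# Assumption: a "new member" is comparable to an install.
ratio = early_cpi / tiktok_cost_ceiling

print(f"Quiz: ${quiz_cost_per_response:.2f} per response")
print(f"TikTok: under ${tiktok_cost_ceiling:.5f} per new member")
print(f"At a $4 CPI, paid channels cost at least {ratio:,.0f}x more per user")
```

The point of the comparison is the order of magnitude, not the exact ratio.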

The figures

We were confident we'd chosen the ideal tool for organic growth.

Over 45 million people have viewed our own videos plus a ton of user-generated content with the hashtag #obimy.

Our own videos have about 375 thousand likes in total.

The number of downloads and the virality of videos are directly correlated.

Where are we now?

TikTok fuels our organic growth. We post 56 videos every week and pay to promote viral content.

We use UGC and influencers. We worked with Universal Music Italy on Eurovision. They offered to promote us through their million-follower TikTok influencers. We thought their followers would improve our audience, but it didn't matter. Integration didn't help us. Users that share obimy videos with their followers can reach several million views, which affects our download rate.

After the dust settled, we determined our key audience was 13-18-year-olds. They want to express themselves, but it's sometimes difficult. We're searching for methods to better engage with our users. We opened a Discord server to discuss anime and video games and gather app and content feedback.

TikTok helps us test product updates and hypotheses. Example: I once thought we might raise MAU by prompting users to add strangers as friends. Instead of asking our team to construct it, I made a TikTok urging users to share invite URLs. Users share links under every video we upload, embracing people worldwide.

Key lessons

Don't direct-sell. TikTok isn't for Instagram, Facebook, or YouTube promo videos. Conventional advertisements don't fit. Most users will swipe up and watch humorous doggos.

More product videos are better. Finally. So what?

Encourage interaction. Tagging friends in comments or making videos with the app promotes it more than any marketing spend.

Be odd and risqué. A user mistakenly sent a French kiss to their mom in one of our most popular videos.

TikTok helps test hypotheses and build your user base. It also helps develop apps. In our upcoming blog, we'll guide you through obimy's design revisions based on TikTok. Follow us on Twitter, Instagram, and TikTok.

You might also like

Johnny Harris

3 years ago

The REAL Reason Putin is Invading Ukraine [video with transcript]

Transcript:

[Reporter] The Russian invasion of Ukraine.

Momentum is building for a war between Ukraine and Russia.

[Reporter] Tensions between Russia and the West

are growing rapidly.

[Reporter] President Biden considering deploying

thousands of troops to Eastern Europe.

There are now 100,000 troops

on the Eastern border of Ukraine.

Russia is setting up field hospitals on this border.

Like this is what preparation for war looks like.

A legitimate war.

Ukrainian troops are watching and waiting,

saying they are preparing for a fight.

The U.S. has ordered the families of embassy staff

to leave Ukraine.

Britain has sent all of their nonessential staff home.

And now the U.S. is sending tons of weapons and munitions

to Ukraine's army.

And we're even considering deploying

our own troops to the region.

I mean, this thing is heating up.

Meanwhile, Russia and the West have been in Geneva

and Brussels trying to talk it out,

and sort of getting nowhere.

The message is very clear.

Should Russia take further aggressive actions

against Ukraine the costs will be severe

and the consequences serious.

It's a scary, grim momentum that is unpredictable.

And the chances of miscalculation

and escalation are growing.

I want to explain what's going on here,

but I want to show you that this isn't just

typical geopolitical behavior.

Stuff that can just be explained on the map.

Instead, to understand why 100,000 troops are camped out

on Ukraine's Eastern border, ready for war,

you have to understand Russia

and how it's been cut down over the ages

from the Slavic empire that dominated this whole region

to then the Soviet Union,

which was defeated in the nineties.

And what you really have to understand here

is how that history is transposed

onto the brain of one man.

This guy, Vladimir Putin.

This is a story about regional domination

and struggles between big powers,

but really it's the story about

what Vladimir Putin really wants.

[Reporter] Russian troops moving swiftly

to take control of military bases in Crimea.

[Reporter] Russia has amassed more than 100,000 troops

and a lot of military hardware

at the border with Ukraine.

Let's dive back in.

Okay. Let's get up to speed on what's happening here.

And I'm just going to quickly give you the highlight version

of like the news that's happening,

because I want to get into the juicy part,

which is like why, the roots of all of this.

So let's go.

A few months ago, Russia started sending

more and more troops to this border.

It's this massive border between Ukraine and Russia.

They said they were doing a military exercise,

but the rest of the world was like,

"Yeah, we totally believe you Russia. Pshaw."

This was right before this big meeting

where North American and European countries

were coming together to talk about a lot

of different things, like these countries often do

in these diplomatic summits.

But soon, because of Russia's aggressive behavior

coming in and setting up 100,000 troops

on the border with Ukraine,

the entire summit turned into a whole, "WTF Russia,

what are you doing on the border of Ukraine," meeting.

Before the meeting Putin comes out and says,

"Listen, I have some demands for the West."

And everyone's like, "Okay, Russia, what are your demands?

You know, we have like, COVID-19 right now.

And like, that's like surging.

So like, we don't need your like,

bluster about what your demands are."

And Putin's like, "No, here's my list of demands."

Putin's demands for the summit were this:

number one, that NATO, which is this big military alliance

between U.S., Canada, and Europe stop expanding,

meaning they don't let any new members in, okay.

So, Russia is like, "No more new members to your, like,

cool military club that I don't like.

You can't have any more members."

Number two, that NATO withdraw all of their troops

from anywhere in Eastern Europe.

Basically Putin is saying,

"I can veto any military cooperation

or troops going between countries

that have to do with Eastern Europe,

the place that used to be the Soviet Union."

Okay, and number three, Putin demands that America vow

not to protect its allies in Eastern Europe

with nuclear weapons.

"LOL," said all of the other countries,

"You're literally nuts, Vladimir Putin.

Like these are the most ridiculous demands, ever."

But there he is, Putin, with these demands.

These very, very aggressive demands.

And he sort of is implying that if his demands aren't met,

he's going to invade Ukraine.

I mean, it doesn't work like this.

This is not how international relations work.

You don't just show up and say like,

"I'm not gonna allow other countries to join your alliance

because it makes me feel uncomfortable."

But what I love about this list of demands

from Vladimir Putin for this summit

is that it gives us a clue

on what Vladimir Putin really wants.

What he's after here.

You read them closely and you can grasp his intentions.

But to grasp those intentions

you have to understand what NATO is

and what Russia and Ukraine used to be.

(dramatic music)

Okay, so a while back I made this video

about why Russia is so damn big,

where I explain how modern day Russia started here in Kiev,

which is actually modern day Ukraine.

In other words, modern day Russia, as we know it,

has its original roots in Ukraine.

These places grew up together

and they eventually became a part

of the same mega empire called the Soviet Union.

They were deeply intertwined,

not just in their history and their culture,

but also in their economy and their politics.

So it's after World War II,

it's like the '50s, '60s, '70s, and NATO was formed,

the North Atlantic Treaty Organization.

This was a military alliance between all of these countries,

that was meant to sort of deter the Soviet Union

from expanding and taking over the world.

But as we all know, the Soviet Union,

which was Russia and all of these other countries,

collapsed in 1991.

And all of these Soviet republics,

including Ukraine, became independent,

meaning they were not now a part

of one big block of countries anymore.

But just because the border's all split up,

it doesn't mean that these cultural ties actually broke.

Like for example, the Soviet leader at the time

of the collapse of the Soviet Union, this guy, Gorbachev,

he was the son of a Ukrainian mother and a Russian father.

Like he grew up with his mother singing him

Ukrainian folk songs.

In his mind, Ukraine and Russia were like one thing.

So there was a major reluctance to accept Ukraine

as a separate thing from Russia.

In so many ways, they are one.

There was another Russian at the time

who did not accept this new division.

This young intelligence officer, Vladimir Putin,

who was starting to rise up in the ranks

of post-Soviet Russia.

There's this amazing quote from 2005

where Putin is giving this state-of-the-union-like address,

where Putin declares the collapse of the Soviet Union,

quote, "The greatest catastrophe of the 20th century.

And as for the Russian people, it became a genuine tragedy.

Tens of millions of fellow citizens and countrymen

found themselves beyond the fringes of Russian territory."

Do you see how he frames this?

The Soviet Union were all one people in his mind.

And after it collapsed, all of these people

who are a part of the motherland were now outside

of the fringes or the boundaries of Russian territory.

First off, fact check.

Greatest catastrophe of the 20th century?

Like, do you remember what else happened

in the 20th century, Vladimir?

(ominous music)

Putin's worry about the collapse of this one people

starts to get way worse when the West, his enemy,

starts showing up to his neighborhood

to all these ex-Soviet countries that are now independent.

The West starts selling their ideology

of democracy and capitalism and inviting them

to join their military alliance called NATO.

And guess what?

These countries are totally buying it.

All these ex-Soviet countries are now joining NATO.

And some of them, the EU.

And Putin is hating this.

He's like not only did the Soviet Union divide

and all of these people are now outside

of the Russia motherland,

but now they're being persuaded by the West

to join their military alliance.

This is terrible news.

Over the years, this continues to happen,

while Putin himself starts to chip away

at Russian institutions, making them weaker and weaker.

He's silencing his rivals

and he's consolidating power in himself.

(triumphant music)

And in the past few years,

he's effectively silenced anyone who can challenge him;

any institution, any court,

or any political rival have all been silenced.

It's been decades since the Soviet Union fell,

but as Putin gains more power,

he still sees the region through the lens

of the old Cold War, Soviet, Slavic empire view.

He sees this region as one big block

that has been torn apart by outside forces.

"The greatest catastrophe of the 20th century."

And the worst situation of all of these,

according to Putin, is Ukraine,

which was like the gem of the Soviet Union.

There was tons of cultural heritage.

Again, Russia sort of started in Ukraine,

not to mention it was a very populous

and industrious, resource-rich place.

And over the years Ukraine has been drifting west.

It hasn't joined NATO yet, but more and more,

it's been electing pro-Western presidents.

It's been flirting with membership in NATO.

It's becoming less and less attached

to the Russian heritage that Putin so adores.

And more than half of Ukrainians say

that they'd be down to join the EU.

64% of them say that it would be cool joining NATO.

But Putin can't handle this. He is in total denial.

Like an ex-boyfriend who can't handle his ex-girlfriend

starting to date someone else,

Putin can't let Ukraine go.

He won't let go.

So for the past decade,

he's been trying to keep the West out

and bring Ukraine back into the motherland of Russia.

This usually takes the form of Putin sending

secret soldiers from Russia into Ukraine

to help the people in Ukraine who want to like separate

from Ukraine and join Russia.

It also takes the form of, oh yeah,

stealing entire parts of Ukraine for Russia.

Russian troops moving swiftly to take control

of military bases in Crimea.

Like in 2014, Putin just did this.

To what America is officially calling

a Russian invasion of Ukraine.

He went down and just snatched this bit of Ukraine

and folded it into Russia.

So you're starting to see what's going on here.

Putin's life's work is to salvage what he calls

the greatest catastrophe of the 20th century,

the division and the separation

of the Soviet republics from Russia.

So let's get to present day. It's 2022.

Putin is at it again.

And honestly, if you really want to understand

the mind of Vladimir Putin and his whole view on this,

you have to read this.

"On the History of Unity of Russians and Ukrainians,"

by Vladimir Putin.

A blog post that kind of sounds

like a ninth grade history essay.

In this essay, Vladimir Putin argues

that Russia and Ukraine are one people.

He calls them essentially the same historical

and spiritual space.

Kind of beautiful writing, honestly.

Anyway, he argues that the division

between the two countries is due to quote,

"a deliberate effort by those forces

that have always sought to undermine our unity."

And that the formula they use, these outside forces,

is a classic one: divide and rule.

And then he launches into this super in-depth,

like 10-page argument, as to every single historical beat

of Ukraine and Russia's history

to make this argument that like,

this is one people and the division is totally because

of outside powers, i.e. the West.

Okay, but listen, there's this moment

at the end of the post,

that actually kind of hit me in a big way.

He says this, "Just have a look at Austria and Germany,

or the U.S. and Canada, how they live next to each other.

Close in ethnic composition, culture,

and in fact, sharing one language,

they remain sovereign states with their own interests,

with their own foreign policy.

But this does not prevent them

from the closest integration or allied relations.

They have very conditional, transparent borders.

And when crossing them citizens feel at home.

They create families, study, work, do business.

Incidentally, so do millions of those born in Ukraine

who now live in Russia.

We see them as our own close people."

I mean, listen, like,

I'm not in support of what Putin is doing,

but like that, it's like a pretty solid like analogy.

If China suddenly showed up and started like

coaxing Canada into being a part of its alliance,

I would be a little bit like, "What's going on here?"

That's what Putin feels.

And so I kind of get what he means there.

There's a deep heritage and connection between these people.

And he's seen that falter and dissolve

and he doesn't like it.

He clearly genuinely feels a brotherhood

and this deep heritage connection

with the people of Ukraine.

Okay, okay, okay, okay. Putin, I get it.

Your essay is compelling there at the end.

You're clearly very smart and well-read.

But this does not justify what you've been up to. Okay?

It doesn't justify sending 100,000 troops to the border

or sending cyber soldiers to sabotage

the Ukrainian government, or annexing territory,

fueling a conflict that has killed

tens of thousands of people in Eastern Ukraine.

No. Okay.

No matter how much affection you feel for Ukrainian heritage

and its connection to Russia, this is not okay.

Again, it's like the boyfriend

who genuinely loves his girlfriend.

They had a great relationship,

but they broke up and she's free to see whomever she wants.

But Putin is not ready to let go.

[Man In Blue Shirt] What the hell's wrong with you?

I love you, Jessica.

What the hell is wrong with you?

Dude, don't fucking touch me.

I love you. Worldstar!

What is wrong with you? Just stop!

Putin has constructed his own reality here.

One in which Ukraine is actually being controlled

by shadowy Western forces

who are holding the people of Ukraine hostage.

And that if he invades, it will be a swift victory

because Ukrainians will accept him with open arms.

The great liberator.

(triumphant music)

Like, this guy's a total romantic.

He's a history buff and a romantic.

And he has a hill to die on here.

And it is liberating the people

who have been taken from the Russian motherland.

Kind of like the abusive boyfriend, who's like,

"She actually really loves me,

but it's her annoying friends

who were planting all these ideas in her head.

That's why she broke up with me."

And it's like, "No, dude, she's over you."

[Man In Blue Shirt] What the hell is wrong with you?

I love you, Jessica.

I mean, maybe this video should be called

Putin is just like your abusive ex-boyfriend.

[Man In Blue Shirt] What the hell is wrong with you?

I love you, Jessica!

Worldstar! What's wrong with you?

Okay. So where does this leave us?

It's 2022, Putin is showing up to these meetings in Europe

to tell them where he stands.

He says, "NATO, you cannot expand anymore. No new members.

And you need to withdraw all your troops

from Eastern Europe, my neighborhood."

He knows these demands will never be accepted

because they're ludicrous.

But what he's doing is showing a false effort to say,

"Well, we tried to negotiate with the West,

but they didn't want to."

Hence giving a little bit more justification

to a Russian invasion.

So will Russia invade? Is there war coming?

Maybe; it's impossible to know

because it's all inside of the head of this guy.

But, if I were to make the best argument

that war is not coming tomorrow,

I would look at a few things.

Number one, war in Ukraine would be incredibly costly

for Vladimir Putin.

Russia has a far superior army to Ukraine's,

but still, Ukraine has a very good army

that is supported by the West

and would give Putin a pretty bad bloody nose

in any invasion.

Controlling territory in Ukraine would be very hard.

Ukraine is a giant country.

They would fight back and it would be very hard

to actually conquer and take over territory.

Another major point here is that if Russia invades Ukraine,

this gives NATO new purpose.

If you remember, NATO was created because of the Cold War,

because the Soviet Union was big and nuclear powered.

Once the Soviet Union fell,

NATO sort of has been looking for a new purpose

over the past couple of decades.

If Russia invades Ukraine,

NATO suddenly has a brand new purpose to unite

and to invest in becoming more powerful than ever.

Putin knows that.

And it would be very bad news for him if that happened.

But most importantly, perhaps the easiest clue

for me to believe that war isn't coming tomorrow

is the Russian propaganda machine

is not preparing the Russian people for an invasion.

In 2014, when Russia was about to invade

and take over Crimea, this part of Ukraine,

there was a barrage of state propaganda

that prepared the Russian people

that this was a justified attack.

So when it happened, it wasn't a surprise

and it felt very normal.

That isn't happening right now in Russia.

At least for now. It may start happening tomorrow.

But for now, I think Putin is showing up to the border,

flexing his muscles and showing the West that he is earnest.

I'm not sure that he's going to invade tomorrow,

but he very well could.

I mean, read the guy's blog post

and you'll realize that he is a romantic about this.

He is incredibly idealistic about the glory days

of the Slavic empires, and he wants to get it back.

So there is dangerous momentum towards war.

And the way war works is even a small little, like, fight,

can turn into the other guy

doing something bigger and crazier.

And then the other person has to respond

with something a little bit bigger.

That's called escalation.

And there's not really a ceiling

to how much that momentum can spin out of control.

That is why it's so scary when two nuclear countries

go to war with each other,

because there's kind of no ceiling.

So yeah, it's dangerous. This is scary.

I'm not sure what happens next here,

but the best we can do is keep an eye on this.

At least for now, we better understand

what Putin really wants out of all of this.

Thanks for watching.

Max Chafkin

3 years ago

Elon Musk Bets $44 Billion on Free Speech's Future

Musk’s purchase of Twitter has sealed his bond with the American right—whether the platform’s left-leaning employees and users like it or not.

Elon Musk's pursuit of Twitter Inc. began earlier this month as a joke. It started slowly, then spiraled out of control, culminating on April 25 with the world's richest man agreeing to spend $44 billion on one of the most politically significant technology companies ever. There have been bigger financial acquisitions, but Twitter's significance has always outpaced its balance sheet. This is a unique Silicon Valley deal.

To recap: Musk announced in early April that he had bought a stake in Twitter, citing the company's alleged suppression of free speech. His complaints were vague, relying heavily on the dog whistles of the ultra-right. A week later, he announced he'd buy the company for $54.20 per share, four days after initially pledging to join Twitter's board. Twitter's directors noticed the 420 reference as well, and responded with a “shareholder rights” plan (i.e., a poison pill) that included a 420 joke.

Musk - Patrick Pleul/Getty Images

No one knew if the bid was genuine. Musk's Twitter plans seemed implausible or insincere. In a tweet, he referred to automated accounts that use his name to promote cryptocurrency. He enraged his prospective employees by suggesting that Twitter's San Francisco headquarters be turned into a homeless shelter, renaming the company Titter, and expressing solidarity with his growing conservative fan base. “The woke mind virus is making Netflix unwatchable,” he tweeted on April 19.

But Musk got funding, and after a frantic weekend of negotiations, Twitter said yes. Unlike most buyouts, Musk will personally fund the deal, putting up as much as $21 billion in cash and borrowing another $12.5 billion against his Tesla stock.

Chart: Free Speech and Partisanship (percentage of respondents who agree with the following)

The deal is expected to replatform accounts that were banned by Twitter for harassing others, spreading misinformation, or inciting violence, such as former President Donald Trump's account. As a result, Musk is at odds with his own left-leaning employees, users, and advertisers, who would prefer more content moderation rather than less.

Dorsey - Photographer: Joe Raedle/Getty Images

Previously, the company's leadership had similar issues. Founder Jack Dorsey stepped down last year amid concerns about slowing growth and product development, as well as his dual role as CEO of payments processor Block Inc. Compared to Musk, a father of seven who already runs four companies (including Tesla and SpaceX), Dorsey is laser-focused.

Musk's motivation to buy Twitter may be political: spending $44 billion on “free speech” affirms the American far right. Since Trump was banned from major social media platforms for encouraging rioters at the US Capitol on Jan. 6, 2021, right-wing activists have promoted a series of upstart Twitter competitors: Parler, Gettr, and Trump's own effort, Truth Social. But Musk can give them a social network with lax content moderation and a real user base. After the deal was announced, Trump said he wouldn't return to Twitter, but he wouldn't be the first to do so.

Trump - Eli Hiller/Bloomberg

Conservative activists and lawmakers are already ecstatic. “A great day for free speech in America,” said Missouri Republican Josh Hawley. The day the deal was announced, Tucker Carlson opened his nightly Fox show with a 10-minute laudatory monologue. “The single biggest political development since Donald Trump's election in 2016,” he gushed over Musk.

But Musk's supporters and detractors misunderstand how much his business interests influence his political ideology. During George W. Bush's presidency, he marketed Tesla's cars as carbon-saving machines that were faster and cooler than gas-powered luxury cars. During Barack Obama's presidency, Musk gained a huge following among wealthy environmentalists who reserved hundreds of thousands of Tesla sedans years before they were made. In the Trump era, Musk advocated for a carbon tax, but he also fought local officials (and his own workers) over Covid rules that slowed the reopening of his Bay Area factory.

Teslas at the Las Vegas Convention Center Loop Central Station in April 2021. The Las Vegas Convention Center Loop was Musk's first commercial project. Ethan Miller/Getty Images

Musk's rightward shift matched the rise of the nationalist-populist right and the desire to serve a growing EV market. In 2019, he unveiled the Cybertruck, a Tesla pickup, and in 2020, he announced plans to manufacture it at a new plant outside Austin. In 2021, he decided to move Tesla's headquarters there, calling California the "land of over-regulation." After Ford and General Motors beat him to the electric truck market, Musk reframed Tesla as a company for pickup-driving dudes.

Similarly, his purchase of Twitter will be entwined with his other business interests. Tesla has a factory in China and is friendly with Beijing. This could be seen as a conflict of interest when Musk's Twitter decides how to treat Chinese-backed disinformation, as Amazon.com Inc. founder Jeff Bezos noted.

Musk has focused on Twitter's product and social impact, but the company's biggest challenges are financial: Either increase cash flow or cut costs to comfortably service his new debt. Even if Musk can't do that, he can still benefit from the deal. He has recently used the increased attention to promote other business interests: Boring has hyperloops and Neuralink brain implants on the way, Musk tweeted. Remember Tesla's long-promised robotaxis!

Musk may be comfortable saying he has no expectation of profit because it benefits his other businesses. At the TED conference on April 14, Musk insisted that his interest in Twitter was solely charitable. “I don't care about money.”

The rockets and weed jokes make it easy to see Musk as unique—and his crazy buyout will undoubtedly add to that narrative. However, he is a megabillionaire who is risking a small amount of money (approximately 13% of his net worth) to gain potentially enormous influence. Musk makes everything seem new, but this is a rehash of an old media story.

INTΞGRITY team

3 years ago

Privacy Policy

Effective date: August 31, 2022

This Privacy Statement describes how INTΞGRITY ("we," or "us") collects, uses, and discloses your personal information. This Privacy Statement applies when you use our websites, mobile applications, and other online products and services that link to this Privacy Statement (collectively, our "Services"), communicate with our customer care team, interact with us on social media, or otherwise interact with us.

This Privacy Policy may be modified from time to time. If we make modifications, we will update the date at the top of this policy and, in certain instances, we may give you extra notice (such as adding a statement to our website or providing you with a notification). We encourage you to routinely review this Privacy Statement to remain informed about our information practices and available options.

INFORMATION COLLECTION

The Data You Provide to Us

We collect information that you directly supply to us. When you register an account, fill out a form, submit or post material through our Services, contact us via third-party platforms, request customer assistance, or otherwise communicate with us, you provide us with information directly. We may collect your name, display name, username, bio, email address, company information, your published content, including your avatar image, photos, posts, responses, and any other information you voluntarily give.

In certain instances, we may collect the information you submit about third parties. We will use your information to fulfill your request and will not send emails to your contacts unrelated to your request unless they separately opt to receive such communications or connect with us in some other way.

We do not collect payment details via the Services.

Automatically Collected Information When You Communicate with Us

In certain cases, we automatically collect the following information:

We gather data regarding your behavior on our Services, such as your reading history and when you share links, follow users, highlight posts, and like posts.

Device and Usage Information: We gather information about the device and network you use to access our Services, such as your hardware model, operating system version, mobile network, IP address, unique device identifiers, browser type, and app version. We also collect information regarding your activities on our Services, including access times, pages viewed, links clicked, and the page you visited immediately prior to accessing our Services.

Information Obtained Through Cookies and Comparable Tracking Technologies: We collect information about you through tracking technologies including cookies and web beacons. Cookies are small data files kept on your computer's hard disk or device's memory that assist us in enhancing our Services and your experience, determining which areas and features of our Services are the most popular, and tracking the number of visitors. Web beacons (also known as "pixel tags" or "clear GIFs") are electronic images that we employ on our Services and in our communications to assist with cookie delivery, session tracking, and usage analysis. We also partner with third-party analytics providers who use cookies, web beacons, device identifiers, and other technologies to collect information regarding your use of our Services and other websites and applications, including your IP address, web browser, mobile network information, pages viewed, time spent on pages or in mobile apps, and links clicked. INTΞGRITY and others may use your information to, among other things, analyze and track data, evaluate the popularity of certain content, present content tailored to your interests on our Services, and better comprehend your online activities. See Your Settings for additional information on cookies and how to disable them.

Information Obtained from Outside Sources

We acquire information from external sources. We may collect information about you, for instance, through social networks, accounting service providers, and data analytics service providers. In addition, if you create or log into your INTΞGRITY account via a third-party platform (such as Apple, Facebook, Google, or Twitter), we will have access to certain information from that platform, including your name, lists of friends or followers, birthday, and profile picture, in accordance with the authorization procedures determined by that platform.

We may derive information about you or make assumptions based on the data we gather. We may deduce your location based on your IP address or your reading interests based on your reading history, for instance.

USAGE OF INFORMATION

We use the information we collect to deliver, maintain, and enhance our Services, including publishing and distributing user-generated content, and customizing the posts you see. Additionally, we utilize collected information to:

Create and administer your INTΞGRITY account;

Send transaction-related information, including confirmations, receipts, and user satisfaction surveys;

Send you technical notices, security alerts, and administrative and support messages;

Respond to your comments and queries and offer support;

Communicate with you about new INTΞGRITY content, goods, services, and features, as well as other news and information that we believe may be of interest to you (see Your Settings for details on how to opt out of these communications at any time);

Monitor and evaluate usage, trends, and activities associated with our Services;

Detect, investigate, and prevent security incidents and other harmful, misleading, fraudulent, or illegal conduct, and safeguard INTΞGRITY’s and others' rights and property;

Comply with our legal and financial requirements; and

Carry out any other purpose specified to you at the time the information was obtained.

SHARING OF INFORMATION

We share personal information where required by law or as otherwise specified in this policy:

Personal information is shared with other Service users. If you use our Services to publish content, make comments, or send private messages, for instance, certain information about you, such as your name, photo, bio, and other account information you may supply, as well as information about your activity on our Services, will be available to others (e.g., your followers and who you follow, recent posts, likes, highlights, and responses).

We share personal information with vendors, service providers, and consultants who require access to such information to perform services on our behalf, such as companies that assist us with web hosting, storage, and other infrastructure, analytics, fraud prevention, and security, customer service, communications, and marketing.

We may release personally identifiable information if we think that doing so is in line with or required by any relevant law or legal process, including authorized demands from public authorities to meet national security or law enforcement obligations. If we intend to disclose your personal information in response to a court order, we will provide you with prior notice so that you may contest the disclosure (for example, by seeking court intervention), unless we are prohibited by law or believe that doing so could endanger others or lead to illegal conduct. We shall object to inappropriate legal requests for information regarding users of our Services.

If we believe your actions are inconsistent with our user agreements or policies, if we suspect you have violated the law, or if we believe it is necessary to defend the rights, property, and safety of INTΞGRITY, our users, the public, or others, we may disclose your personal information.

We share personal information with our attorneys and other professional advisers when necessary for obtaining counsel or otherwise protecting and managing our business interests.

We may disclose personal information in conjunction with or during talks for any merger, sale of corporate assets, financing, or purchase of all or part of our business by another firm.

Personal information is transferred between and among INTΞGRITY, its current and future parents, affiliates, subsidiaries, and other companies under common ownership and management.

We share your personal information with your permission or at your instruction.

We also disclose aggregated or anonymized data that cannot be used to identify you.

IMPLEMENTATIONS FROM THIRD PARTIES

Some of the content shown on our Services is not hosted by INTΞGRITY. Users are able to publish content hosted by a third party but embedded in our pages ("Embed"). When you interact with an Embed, it can send information to the hosting third party just as if you had visited the hosting third party's website directly. When you load an INTΞGRITY post page with a YouTube video Embed and view the video, for instance, YouTube collects information about your behavior, such as your IP address and how much of the video you watch. INTΞGRITY has no control over the information that third parties acquire via Embeds or what they do with it. This Privacy Statement does not apply to data gathered via Embeds. Before interacting with the Embed, it is recommended that you review the privacy policy of the third party hosting the Embed, which governs any information the Embed gathers.

INFORMATION TRANSFER TO THE UNITED STATES AND OTHER NATIONS

INTΞGRITY’s headquarters are located in the United States, and we have operations and service suppliers in other nations. Therefore, we and our service providers may transmit, store, or access your personal information in jurisdictions that may not provide a similar degree of data protection to your home jurisdiction. For instance, we transfer personal data to Amazon Web Services, one of our service providers that processes personal information on our behalf in numerous data centers throughout the world, including those indicated above. We shall take measures to guarantee that your personal information is adequately protected in the jurisdictions where it is processed.

YOUR SETTINGS

Account Specifics

You can access, modify, delete, and export your account information at any time by logging into the Services and visiting the Settings page. Please be aware that if you delete your account, we may preserve certain information about you as needed by law or for our legitimate business purposes.

Cookies

The majority of web browsers accept cookies by default. You can often configure your browser to delete or refuse cookies if you wish. Please be aware that removing or rejecting cookies may impact the accessibility and performance of our services.

Communications

You may opt out of getting certain messages from us, such as digests, newsletters, and activity notifications, by following the instructions contained within those communications or by visiting the Settings page of your account. Even if you opt out, we may still send you emails regarding your account or our ongoing business relationships.

Mobile Push Notifications

We may send push notifications to your mobile device with your permission. You can cancel these messages at any time by modifying your mobile device's notification settings.

YOUR CALIFORNIA PRIVACY RIGHTS

The California Consumer Privacy Act, or "CCPA" (Cal. Civ. Code 1798.100 et seq.), grants California residents some rights regarding their personal data. If you are a California resident, this section applies to you.

We have collected the following categories of personal information over the past year: identifiers, commercial information, internet or other electronic network activity information, and inferences. Please refer to the section titled "Information Collection" for specifics regarding the data points we gather and the sorts of sources from which we acquire them. We collect personal information for the business and marketing purposes outlined in the section titled "Usage of Information." In the past 12 months, we have shared the following types of personal information with the following groups of recipients for business purposes:

Category of Personal Information: Identifiers

Categories of Recipients: Analytics Providers, Communication Providers, Customer Service Providers, Fraud Prevention and Security Providers, Infrastructure Providers, Marketing Providers, Payment Processors

Category of Personal Information: Commercial Information

Categories of Recipients: Analytics Providers, Infrastructure Providers, Payment Processors

Category of Personal Information: Internet or Other Electronic Network Activity Information

Categories of Recipients: Analytics Providers, Infrastructure Providers

Category of Personal Information: Inferences

Categories of Recipients: Analytics Providers, Infrastructure Providers

INTΞGRITY does not sell personally identifiable information.

You have the right, subject to certain limitations: (1) to request more information about the categories and specific pieces of personal information we collect, use, and disclose about you; (2) to request the deletion of your personal information; (3) to opt out of any future sales of your personal information; and (4) to not be discriminated against for exercising these rights. You may submit these requests by email to hello@int3grity.com. We shall not treat you differently if you exercise your rights under the CCPA.

If we receive your request from an authorized agent, we may request proof that you have granted the agent a valid power of attorney or that the agent otherwise possesses valid written authorization to submit requests on your behalf. This may involve requiring identity verification. Please contact us if you are an authorized agent wishing to make a request.

ADDITIONAL DISCLOSURES FOR INDIVIDUALS IN EUROPE

This section applies to you if you are based in the European Economic Area ("EEA"), the United Kingdom, or Switzerland and have specific rights and safeguards regarding the processing of your personal data under relevant law.

Legal Justification for Processing

We will process your personal information based on the following legal grounds:

To fulfill our obligations under our agreement with you (e.g., providing the products and services you requested).

When we have a legitimate interest in processing your personal information to operate our business or to safeguard our legitimate interests, we will do so (e.g., to provide, maintain, and improve our products and services, conduct data analytics, and communicate with you).

To meet our legal responsibilities (e.g., to maintain a record of your consents and track those who have opted out of non-administrative communications).

If we have your permission to do so (e.g., when you opt in to receive non-administrative communications from us). When consent is the legal basis for our processing of your personal information, you may at any time withdraw your consent.

Data Retention

We retain the personal information associated with your account so long as your account is active. If you close your account, your account information will be deleted within 14 days. We retain other personal data for as long as is required to fulfill the objectives for which it was obtained and for other legitimate business purposes, such as to meet our legal, regulatory, or other compliance responsibilities.

Data Access Requests

You have the right to request access to the personal data we hold on you and to get your data in a portable format, to request that your personal data be rectified or erased, and to object to or request that we restrict particular processing, subject to certain limitations. To assert your legal rights:

If you sign up for an INTΞGRITY account, you can request an export of your personal information at any time via the Settings page, or by visiting Settings and selecting Account from inside our app.

You can edit the information linked with your account on the Settings page, or by navigating to Settings and then Account in our app, and on the Customize Your Interests page.

You may withdraw consent at any time by deleting your account via the Settings page, or by visiting Settings and then selecting Account within our app (except to the extent INTΞGRITY is prevented by law from deleting your information).

You may object to the use of your personal information at any time by contacting hello@int3grity.com.

Questions or Complaints

If we are unable to settle your concern over our processing of personal data, you have the right to file a complaint with the Data Protection Authority in your country. The links below provide access to the contact information for your Data Protection Authority.

For people in the EEA, please visit https://edpb.europa.eu/about-edpb/board/members_en.

For persons in the United Kingdom, please visit https://ico.org.uk/global/contact-us.

For people in Switzerland: https://www.edoeb.admin.ch/edoeb/en/home/the-fdpic/contact.html

CONTACT US

Please contact us at hello@int3grity.com if you have any queries regarding this Privacy Statement.