More on Technology

M.G. Siegler

3 years ago

G3nerative

Generative AI hype: some thoughts

The sudden surge in "generative AI" startups and projects feels like the inverse of the recent "web3" boom. Both were born of hype. But while web3 hyped idealistic tech and an easy way to make money, generative AI hypes unsettling tech and raises the question of whether it can be used to make money at all.

Web3 is technology looking for problems to solve, while generative AI is technology creating almost too many solutions. Web3 has been evangelists trying to solve old problems with new technology. As generative AI evolves, users are solving old problems in stunning new ways.

It's a jab at web3, but it's true. Web3's hype, including crypto, was unhealthy. I always expected a crash and a shakeout, followed by tech that won't look like "web3" but will enhance "web2".

But that doesn't mean AI hype is healthy. There'll be plenty of bullshit here, too. Hype attracts charlatans like moths to a flame. Again, the difference is the starting point: people want to use it and try it.

With the beta launch of Dall-E 2 earlier this year, a new class of consumer product took off. Midjourney followed suit (despite having to jump through the Discord server hoops). A dozen more generative art projects followed. Lensa, the generative AI self-portrait project from Prisma Labs (a startup which has actually been going after this general space for quite a while), may have topped the hype. This week, ChatGPT went off-topic.

This has a "fake-it-till-you-make-it" vibe. We give these projects too much credit because they create easy illusions. This also unlocks new forms of creativity. And faith in new possibilities.

As a user, it's thrilling. We're just getting started. These projects are not only fun to play with, but each week brings a new breakthrough. As an investor, it's all happening so fast, with so much hype (and so many ethical and societal questions), that no one knows how it will turn out. Unlike with web3, demand won't be the issue. Too much demand may cause servers to melt down, sending costs soaring. Companies will try to combine rapidly evolving tech to meet user demand and build businesses. That will be frustratingly difficult.

Anyway, I wanted an excuse to post some Lensa selfies.

These are really weird. I recognize them as me or a version of me, but I have no memory of them being taken. It's surreal, out-of-body. Uncanny Valley.

Gareth Willey

3 years ago

I've had these five apps on my phone for a long time.

TOP APPS

Who survives spring cleaning?

Relax. Notion is off-limits. This topic is popular.

(I wrote about it 2 years ago, before everyone else did.)

These apps are probably new to you. I hope you find a new phone app after reading this.

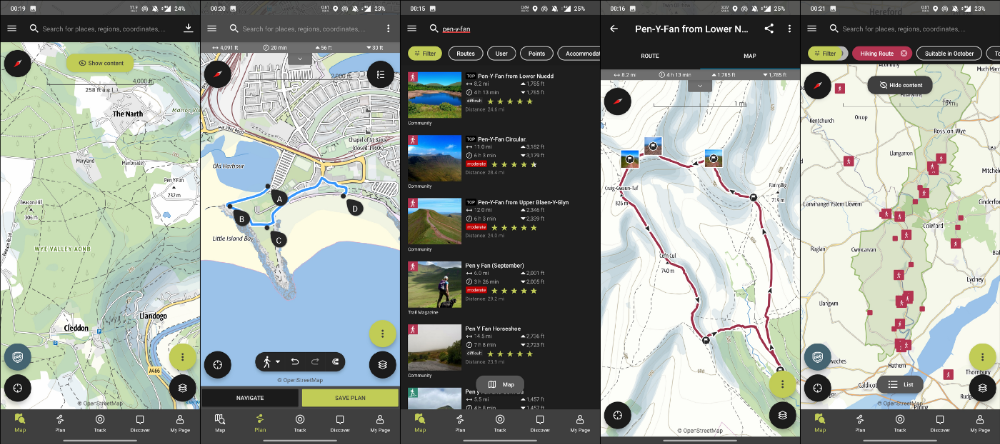

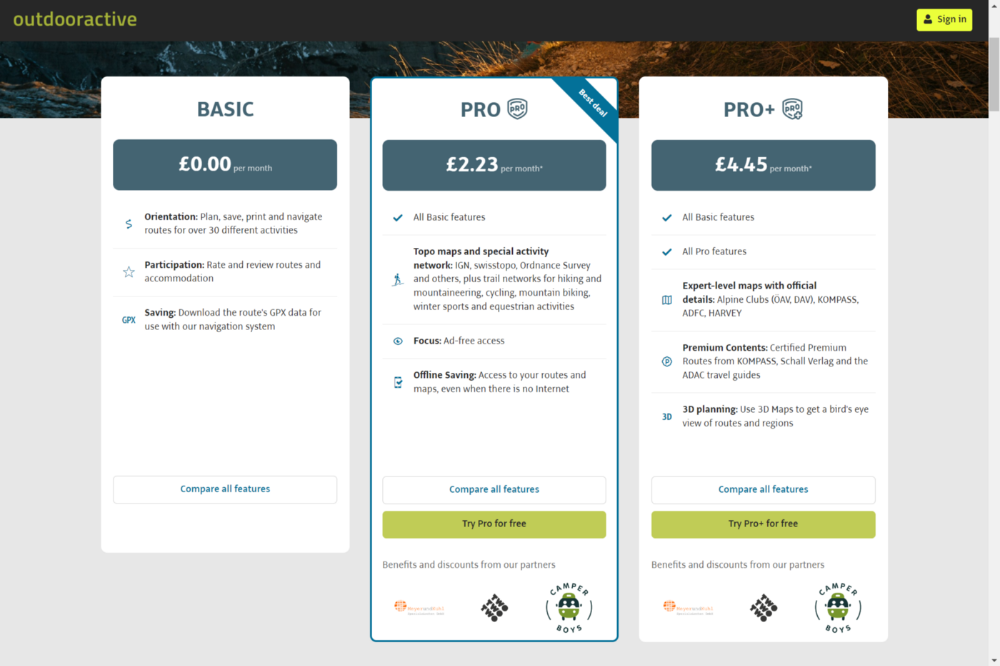

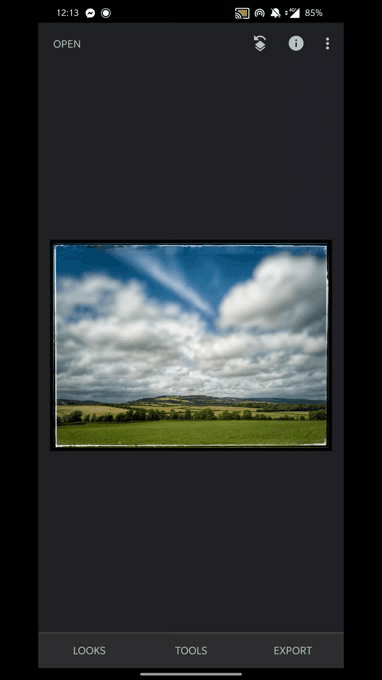

Outdooractive

Formerly ViewRanger, Outdooractive is Google Maps for outdoor enthusiasts.

This app has been so important to me as a freedom-loving long-distance walker and hiker.

This app shows nearby trails and rights of way on top of an OpenStreetMap base map.

It offers helpful detail and data, such as any route's distance.

You can download and follow tons of routes planned by app users.

This has helped me find new routes and places a fellow explorer has tried.

Free with non-intrusive ads. Years passed before I subscribed. Pro costs £2.23/month.

This app is for outdoor lovers.

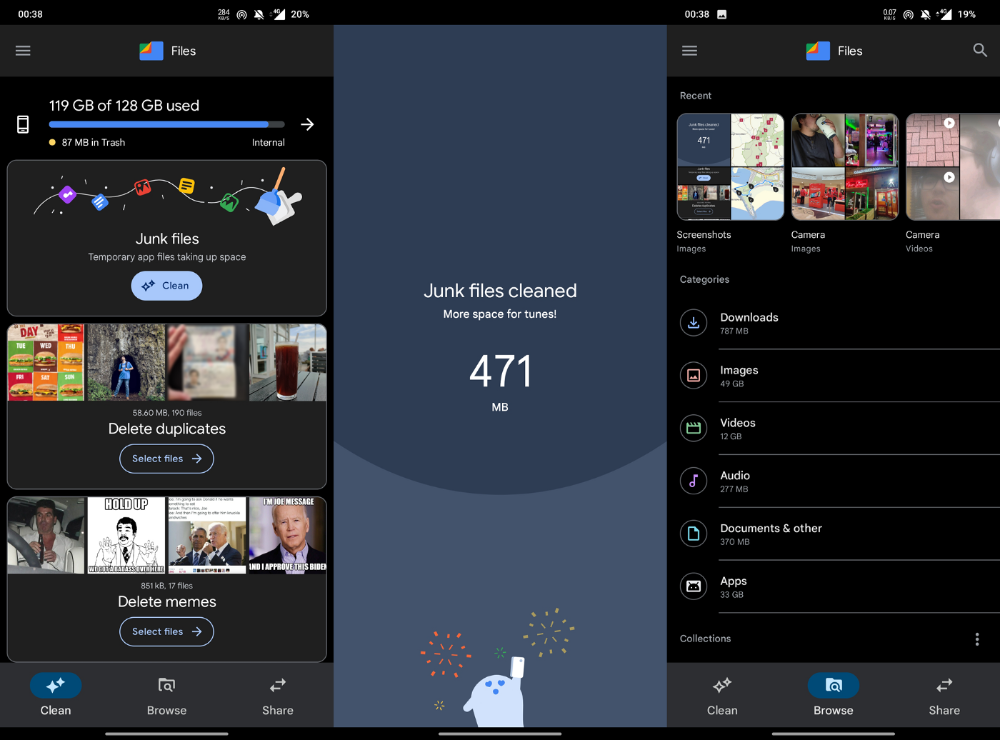

Google Files

New phones come with bloatware. These rushed apps are frustrating.

We must replace these apps. 2017 was Google's year.

Files is a file manager. It's quick, innovative, and clean. They've given people what they want.

It's easy to organize files, clear space, and clear cache.

I recommend Gallery by Google as a gallery app alternative. It's quick and easy.

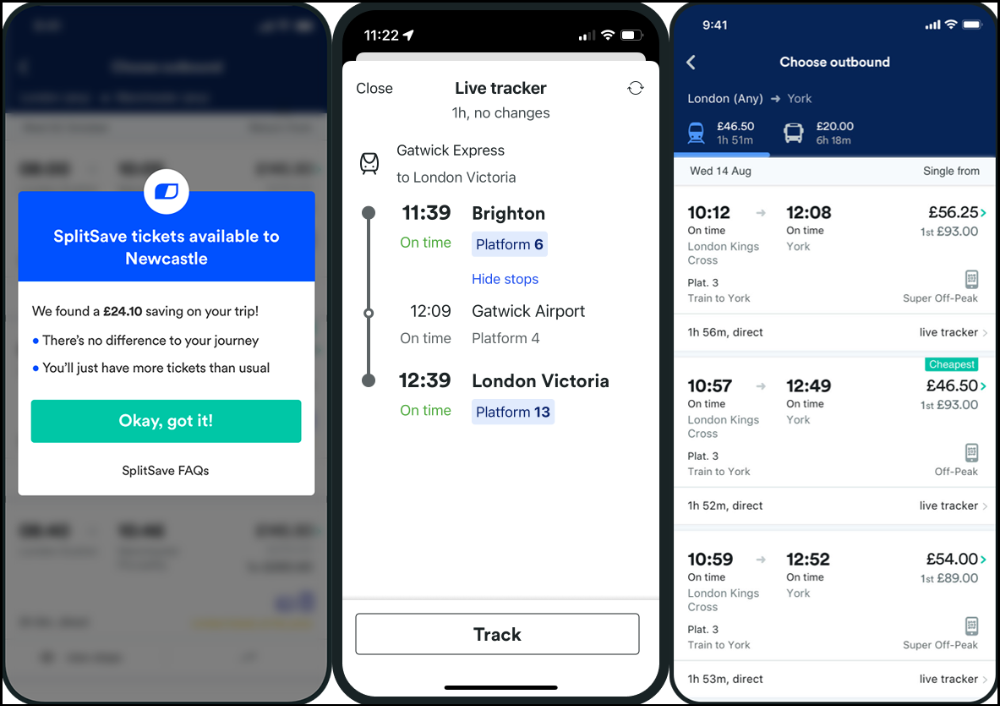

Trainline

App for trains, buses, and coaches.

I've used this app for years. It did the basics well when I first used it.

Since then, it's improved. It's constantly adding features to make traveling easier and less stressful.

Split-ticketing helps me save hundreds a year on train fares. This app is only available in the UK and Europe.

This service doesn't link to a third-party site. Their app handles everything.

Not all train and coach companies use this app. All the big names are there, though.

Here's more on the app.

Call of Duty: Mobile

Play Store has 478,000 games. Few can turn my phone into a console.

Call of Duty Mobile and Asphalt 8/9 are examples.

Asphalt's loot boxes and ads make it unplayable. Call of Duty opens with a few ads. Close them to play without hassle.

This game uses all your phone's features to provide a high-quality, seamless experience. If my internet connection is good, I never experience lag or glitches.

The gameplay is energizing and intense, just like on consoles. Sometimes I'm too involved. I've thrown my phone in anger. I'm totally absorbed.

Customizability is my favorite. Since phones have limited screen space, we should only have the buttons we need, placed conveniently.

Size, opacity, and position are modifiable. Adjust audio, graphics, and textures. It's customizable.

This game has been on my phone for three years. It began well and has gotten better. When I think the creators can't do more, they do.

If you play, read my tips for winning a Battle Royale.

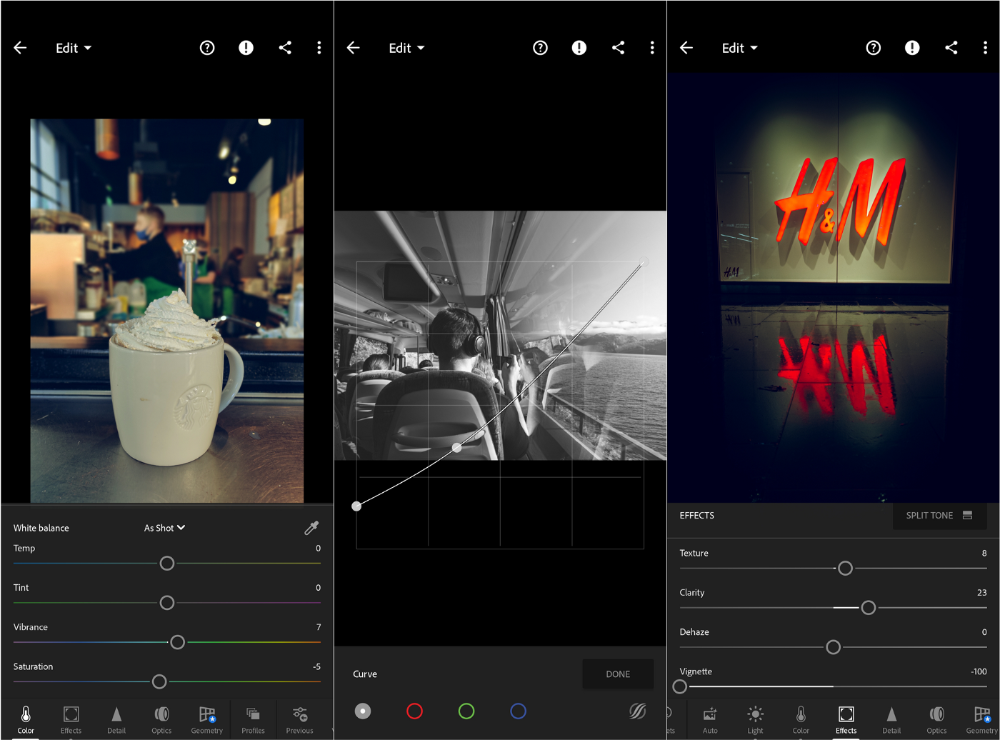

Lightroom

As a photographer, I believe the best camera is the one you have on you: your phone.

2017 was a big year for this app. I've tried many photo-editing apps since then. This always wins.

The app looks dull, but I've never seen better photo editing on a phone.

Adjusting settings and sliders doesn't damage or compress photos. It's detailed.

This is important for phone photos, which are lower quality than professional ones.

Some tools are behind a £4.49/month paywall. Adobe must charge a subscription fee instead of selling licenses. (I'm still bitter about Creative Cloud's price)

Snapseed is my pick. Lightroom is where I do basic editing before moving to Snapseed.

These apps are great. They cover basic and complex editing needs while traveling.

Final Reflections

I hope you downloaded one of these. Share your favorite apps. Good ones like these are scarce.

Amelia Winger-Bearskin

3 years ago

Reasons Why AI-Generated Images Remind Me of Nightmares

AI images are like funhouse mirrors.

Google's AI Blog introduced the puppy-slug in the summer of 2015.

Puppy-slug isn't a single image or character. "Puppy-slug" refers to the unsettling psychedelia of Google's DeepDream. The tool builds on convolutional neural networks, models trained to recognize the entities in a dataset. If researchers feed the model millions of dog pictures, the network will learn to recognize a dog.

DeepDream used neural networks to analyze and classify image data as well as generate its own images. DeepDream's early examples were created by training a convolutional network on dog images and asking it to add "dog-ness" to other images. The models analyzed images to find dog-like pixels and modified surrounding pixels to highlight them.
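To make the "add dog-ness" idea concrete, here is a minimal toy sketch in plain Python. It is not DeepDream's actual code: the real system works on a deep convolutional network and full images, while this stand-in assumes a single hand-picked three-value filter (`KERNEL`, hypothetical) sliding over a short list of numbers (`SIGNAL`, also hypothetical). What it shares with the approach described above is the core move: measure how strongly the detector responds, then repeatedly nudge the input values in the direction that makes the response stronger.

```python
def activations(signal, kernel):
    """Slide the kernel across the signal and record its response at each position."""
    n, k = len(signal), len(kernel)
    return [sum(kernel[j] * signal[i + j] for j in range(k)) for i in range(n - k + 1)]


def score(signal, kernel):
    """Half the sum of squared responses: how strongly the pattern shows up overall."""
    return 0.5 * sum(a * a for a in activations(signal, kernel))


def gradient(signal, kernel):
    """Gradient of the score with respect to every input value."""
    grad = [0.0] * len(signal)
    for i, a in enumerate(activations(signal, kernel)):
        for j, w in enumerate(kernel):
            grad[i + j] += a * w
    return grad


def dream(signal, kernel, steps=20, step_size=0.05):
    """Gradient ascent on the input: change the 'pixels', not the detector."""
    current = list(signal)
    for _ in range(steps):
        grad = gradient(current, kernel)
        current = [x + step_size * g for x, g in zip(current, grad)]
    return current


# Hypothetical stand-ins: a tiny "feature detector" and a tiny "image".
KERNEL = [-1.0, 2.0, -1.0]
SIGNAL = [0.0, 0.1, 0.0, 0.3, 0.2, 0.0, 0.1, 0.0]

dreamed = dream(SIGNAL, KERNEL)
print("score before:", score(SIGNAL, KERNEL))
print("score after: ", score(dreamed, KERNEL))
```

The detector never changes here; only the input does. Faint hints of the pattern get amplified until they dominate, which is roughly why dog faces seem to bloom out of clouds and pasta in DeepDream's output.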

Puppy-slugs and other DeepDream images are ugly. Even when they don't trigger my trypophobia, they give me vertigo when my mind tries to reconcile familiar features and forms in unnatural, physically impossible arrangements. I feel like I've been poisoned by a forbidden mushroom or a noxious toad. I'm a Lovecraft character going mad from extradimensional exposure. They're gross!

Is this really how AIs see the world? This is possibly an even more unsettling question raised by DeepDream than the blatant abjection of the images.

When these images originally circulated online, many friends were startled and scandalized. People imagined a computer's imagination would be literal, accurate, and boring. We didn't expect vivid hallucinations and organic-looking formations.

DeepDream's images didn't really show the machines' imaginations, at least not in the way that scared some people. DeepDream displays data visualizations. DeepDream reveals the "black box" of convolutional network training.

Some of these images look scary because the models don't "know" anything, at least not in the way we do.

These images are the result of advanced algorithms and calculators that compare pixel values. They can spot and reproduce trends from training data, but can't interpret it. If they could, they'd know dogs have two eyes and one face per head. If machines can think creatively, they're keeping it quiet.

You could be forgiven for thinking otherwise, given the impressive results of OpenAI's Dall-E. From a technological perspective, it's incredible.

Arthur C. Clarke once said, "Any sufficiently advanced technology is indistinguishable from magic." Dall-E's magic requires a lot of math, computer science, processing power, and research. OpenAI did a great job, and we should applaud them.

Dall-E and similar tools match words and phrases to image data to train generative models. Matching text to images requires sorting and defining the images. Untold millions of low-wage data entry workers, content creators optimizing images for SEO, and anyone who has used a Captcha to access a website make these decisions. These people could live and die without receiving credit for their work, even though the project wouldn't exist without them.

This technique produces images that are less like paintings and more like mirrors that reflect our own beliefs and ideals back at us, albeit via a very complex prism. Due to the limitations and biases that these models portray, we must exercise caution when viewing these images.

The issue was succinctly articulated by artist Mimi Onuoha in her piece "On Algorithmic Violence":

As we continue to see the rise of algorithms being used for civic, social, and cultural decision-making, it becomes that much more important that we name the reality that we are seeing. Not because it is exceptional, but because it is ubiquitous. Not because it creates new inequities, but because it has the power to cloak and amplify existing ones. Not because it is on the horizon, but because it is already here.

You might also like

Sanjay Priyadarshi

3 years ago

Meet a Programmer Who Turned Down Microsoft's $10,000,000,000 Acquisition Offer

Failures inspire young developers

Jason Citron created many products.

These products flopped.

Microsoft offered $10 billion for one of these products.

He rejected the offer since he was so confident in his success.

Let’s find out how he built a product that is currently valued at $15 billion.

Early in his youth, Jason began learning to code.

Jason's father taught him programming and IT.

His father wanted to help him earn money when he needed it.

Jason created video games and websites in high school.

Jason realized early on that his IT and programming skills could make him money.

Jason's parents misjudged his aptitude for programming.

Jason frequented online programming communities.

He looked for web developers. He created websites for those people.

His parents suspected Jason sold drugs online. When he said he used programming to make money, they were shocked.

They helped him set up a PayPal account.

Studying video game design in Florida

Jason never attended an expensive university.

He studied game design in Florida.

“Higher Education is an interesting part of society… When I work with people, the school they went to never comes up… only thing that matters is what can you do…At the end of the day, the beauty of silicon valley is that if you have a great idea and you can bring it to the life, you can convince a total stranger to give you money and join your project… This notion that you have to go to a great school didn’t end up being a thing for me.”

Jason's life was altered by Steve Jobs' keynote address.

After graduating, Jason joined an incubator.

Jason created a video-dating site first.

Bad idea.

Nobody wanted to use it when it was released, so they shut it down.

He made a multiplayer game.

It was released on Bebo. 10,000 people played it.

When Steve Jobs unveiled Apple's App Store, Jason moved on from it.

The introduction of the app store resembled that of a new gaming console.

Jason's life changed after Steve Jobs' 2008 address.

“Whenever a new video game console is launched, that’s the opportunity for a new video game studio to get started, it’s because there aren’t too many games available…When a new PlayStation comes out, since it’s a new system, there’s only a handful of titles available… If you can be a launch title you can get a lot of distribution.”

Apple's app store provided a chance to start a video game company.

They released an app after 5 months of work.

The game was Aurora Feint.

Jason believed 1000 players in a week would be wonderful. A thousand players joined in the first hour.

Over time, though, Aurora Feint didn't gain traction. It didn't make enough money to keep the team going.

They had only enough money for one more month.

Selling technology instead of video games

Jason saw that they had built a leaderboard, chat rooms, and multiplayer capabilities, and believed other developers would want to use these.

They opted to sell the prior game's technology.

OpenFeint.

Assisting other game developers

They had no money in the bank to create everything needed to make the technology user-friendly.

Jason and Daniel designed a website saying:

“If you’re making a video game and want to have a drop in multiplayer support, you can use our system”

TechCrunch covered their website launch, and they gained a few hundred mailing list subscribers.

They raised seed funding with the mailing list.

Nearly all iPhone game developers started adopting the OpenFeint logo.

“It was pretty wild… It was really like a whole social platform for people to play with their friends.”

What kind of a business model was it?

OpenFeint originally planned to make the software free for all games and to charge once a game gained popularity.

They later concluded it wasn't a good business concept.

It became free eventually.

Acquired for $104 million

OpenFeint's users and employees grew tremendously.

GREE bought OpenFeint for $104 million in April 2011.

GREE initially committed to helping Jason and his team build a fantastic company.

Three or four months after the acquisition, Jason recognized they had a different vision.

He quit.

Jason's Original Vision for the iPad

Jason focused on distribution in 2012 to help businesses stand out.

The iPad market and user base were growing tremendously.

Jason said the iPad may replace mobile gadgets.

iPad gamers behaved differently than mobile gamers.

People sat longer and experienced more using an iPad.

“The idea I had was what if we built a gaming business that was more like traditional video games but played on tablets as opposed to some kind of mobile game that I’ve been doing before.”

Unexpected insight after researching the video game industry

Jason learned from studying the gaming industry that long-standing companies had advantages beyond a single release.

Previously, long-standing video game firms had their own distribution system. This distribution strategy could buffer time between successful titles.

Sony, Microsoft, and Valve all have gaming consoles and online stores.

So he built a distribution system.

He created a group chat app for gamers.

He envisioned a team-based multiplayer game with text and voice interaction.

His objective was to develop a communication network, release more games, and start a game distribution business.

Remaking the video game League of Legends

Jason and his crew reimagined a League of Legends game mode for 12-inch glass.

They adapted the game for tablets.

League of Legends was PC-only.

So they rebuilt it.

They overhauled the game and included native mobile experiences to stand out.

Hammer and Chisel was the company's name.

18 people worked on the game.

The game was funded. The game took 2.5 years to make.

Was the game a success?

July 2014 marked the game's release. The team's hopes were dashed.

Critics initially praised the game.

Initial installation was widespread.

The game failed.

As time passed, the team realized iPad gaming wouldn't increase much and mobile would win.

Jason was given a fresh idea by Stan Vishnevskiy.

Stan Vishnevskiy was a corporate engineer.

He told Jason about his plan to design a communication app without a game.

This concept was the seed of today's Discord.

“The insight that he really had was to put a couple of dots together… we’re seeing our customers communicating around our own game with all these different apps and also ourselves when we’re playing on PC… We should solve that problem directly rather than needing to build a new game…we should start making it on PC.”

So began Discord.

Online socializing with pals was the newest trend.

Jason grew up playing video games with his friends.

He never played outside.

Jason had many great moments playing video games with his closest buddy, wife, and brother.

Discord was about providing a location for you and your group to speak and hang out.

Like a private cafe, bedroom, or living room.

Discord was developed for you and your friends on computers and phones.

You can quickly call your buddies during a game to conduct a conference call. Put the call on speaker and talk while playing.

Discord wanted to give every player a unique experience. Because coordinating across apps was a headache.

The entire team started concentrating on Discord.

Jason decided Hammer and Chisel would focus on their chat app.

Jason didn't want to make a video game.

How Discord attracted the appropriate attention

During the first five months, the entire team worked on the app and got feedback from friends.

This helped improve the product. As a result, some teammates' friends started using Discord.

The team knew it would become something, but the result was buggy. The app occasionally crashed.

Jason persuaded a gamer friend to write on Reddit about the software.

New people would find Discord. Why not?

Reddit users discovered Discord and 50 started using it frequently.

Discord was launched.

Rejecting the $10 billion acquisition proposal

Discord has grown in recent years.

It sends billions of messages.

Discord's users aren't tracked. They're privacy-focused.

Purchase offer

Covid boosted Discord's user base.

Weekly, billions of messages were transmitted.

Microsoft offered $10 billion for Discord in 2021.

Jason sold OpenFeint for $104m in 2011.

This time, he believed in the product so much that he rejected Microsoft's offer.

“I was talking to some people in the team about which way we could go… The good thing was that most of the team wanted to continue building.”

At its last valuation, Discord was worth $15 billion.

Discord raised money on March 12, 2022.

The $15 billion corporation raised $500 million in 2021.

Luke Plunkett

4 years ago

Gran Turismo 7 Update Eases Up On The Grind After Fan Outrage

Polyphony Digital has changed the game after apologizing in March.

To make amends for some disastrous downtime, Gran Turismo 7 director Kazunori Yamauchi announced a credits handout and promised to “dramatically change GT7's car economy to help make amends” last month. The first of these has arrived.

The game's 1.11 update includes the following concessions to players frustrated by the economy and its subsequent grind:

- The last half of the World Circuits events have increased in-game credit rewards.

- Modified Arcade and Custom Race rewards

- Clearing all circuit layouts with Gold or Bronze now rewards In-game Credits. Exiting the Sector selection screen with the Exit button will award Credits if an event has already been cleared.

- Increased Credits Rewards in Lobby and Daily Races

- Increased the free in-game Credits cap from 20,000,000 to 100,000,000.

Additionally, “The Human Comedy” missions are one-hour endurance races that award “up to 1,200,000” credits per event.

This isn't everything Yamauchi promised last month; he said it would take several patches and updates to fully implement the changes. Here's a list of everything he said would happen, some of which has already happened (like the World Circuits rewards and credit cap):

- Increase rewards in the latter half of the World Circuits by roughly 100%.

- Added high rewards for all Gold/Bronze results clearing the Circuit Experience.

- Online Races rewards increase.

- Add 8 new 1-hour Endurance Race events to Missions. So expect higher rewards.

- Increase the non-paid credit limit in player wallets from 20M to 100M.

- Expand the number of Used and Legend cars available at any time.

- With time, we will increase the payout value of limited time rewards.

- New World Circuit events.

- Missions now include 24-hour endurance races.

- Online Time Trials added, with rewards based on the player's time difference from the leader.

- Make cars sellable.

The full list of updates and changes can be found here.


Eve Arnold

3 years ago

Your Ideal Position As a Part-Time Creator

Inspired by someone I never met

Inspiration is good and bad.

Paul Jarvis inspires me. He's a web person and writer who created his own category by being himself.

Paul said no thank you when everyone else was developing, building, and assuming greater responsibilities. This isn't success. He rewrote the rules. Working for himself, expanding at his own speed, and doing what he loves were his definitions of success.

Play with a problem that you have

The biggest problem can be not recognizing a problem.

Acceptance without question is deception. When you don't push limits, you forget how. You start thinking everything must be as it is.

For example: working. Paul worked a 9-5 agency job with little autonomy. He questioned whether the 9-5 was a way to live, not the way.

Another option existed. So he chipped away at how to live in this new environment.

Don't simply jump

Internet writers tell people considering quitting 9-5 to just quit. To throw in the towel. To do what you like.

The advice is harmful, despite the good intentions. People think quitting is hard. Like courage is the issue. Like handing your boss a resignation letter.

Nope. The tough part comes after. It’s easy to jump. Landing is difficult.

The landing

Paul didn't quit. Intelligent individuals don't. Smart folks focus on landing. They imagine life after 9-5.

Paul had been a web developer for a long time, had solid clients, and was respected. So if he pushed the limits and discovered another route, he had the means to execute.

Working on the side

Society loves polarization. It’s left or right. Either way. Or chaos. It's 9-5 or entrepreneurship.

But like Paul, you can stretch polarization's limits. In-between exists.

You can work a 9-5 and side jobs (as I do). A mix of your favorites. The 9-5's stability and creativity. Fire and routine.

Remember: you can't have everything, but you can have anything. You can create and work part-time.

My hybrid lifestyle

Not selling books doesn't destroy my world. My world keeps spinning if my new business fails or if people don't like my Tweets. Unhappy algorithm? Cool. I'm not bothered (okay maybe a little).

The mix gives me the best of both worlds. To create, hone my skill, and grasp big-business basics. I like routine, but I also appreciate spending 4 hours on Saturdays writing.

Some days I adore leaving work at 5 pm and disconnecting. Other days, I adore having a place to write if inspiration strikes during a run or a discussion.

I’m a part-time creator

I’m a part-time creator. No, I'm not trying to quit. I don't work 5 pm - 2 am on the side. No, I'm not at $10,000 MRR.

I work part-time but enjoy my 9-5. My 9-5 has goodies. My side job as well.

It combines both to meet my lifestyle. I'm satisfied.

Join the Part-time Creators Club for free here. I’ll send you tips to enhance your creative game.