More on Entrepreneurship/Creators

Alex Mathers

25 years ago

400 articles later, nobody bothered to read them.

Writing for readers:

14 years of daily writing.

I post practically everything on social media. I authored hundreds of articles, thousands of tweets, and numerous volumes to almost no one.

Tens of thousands of readers regularly praise me.

I despised writing. I'm stuck now.

I've learned what readers like and what doesn't.

Here are some essential guidelines for writing with impact:

Readers won't understand your work if you can't.

Though obvious, this slipped me up. Share your truths.

Stories engage human brains.

Showing the journey of a person from worm to butterfly inspires the human spirit.

Overthinking hinders powerful writing.

The best ideas come from inner understanding in between thoughts.

Avoid writing to find it. Write.

Writing a masterpiece isn't motivating.

Write for five minutes to simplify. Step-by-step, entertaining, easy steps.

Good writing requires a willingness to make mistakes.

So write loads of garbage that you can edit into a good piece.

Courageous writing.

A courageous story will move readers. Personal experience is best.

Go where few dare.

Templates, outlines, and boundaries help.

Limitations enhance writing.

Excellent writing is straightforward and readable, removing all the unnecessary fat.

Use five words instead of nine.

Use ordinary words instead of uncommon ones.

Readers desire relatability.

Too much perfection will turn it off.

Write to solve an issue if you can't think of anything to write.

Instead, read to inspire. Best authors read.

Every tweet, thread, and novel must have a central idea.

What's its point?

This can make writing confusing.

️ Don't direct your reader.

Readers quit reading. Demonstrate, describe, and relate.

Even if no one responds, have fun. If you hate writing it, the reader will too.

Mangu Solutions

3 years ago

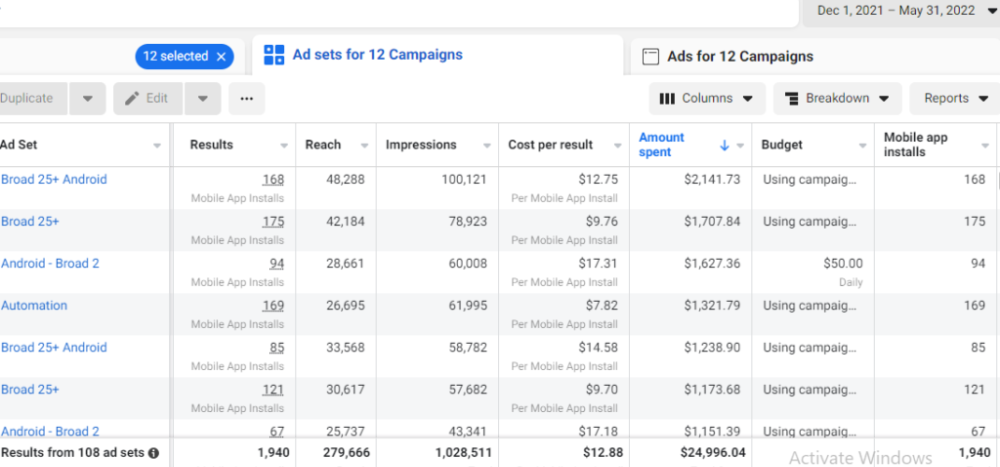

Growing a New App to $15K/mo in 6 Months [SaaS Case Study]

Discover How We Used Facebook Ads to Grow a New Mobile App from $0 to $15K MRR in Just 6 Months and Our Strategy to Hit $100K a Month.

Our client introduced a mobile app for Poshmark resellers in December and wanted as many to experience it and subscribe to the monthly plan.

An Error We Committed

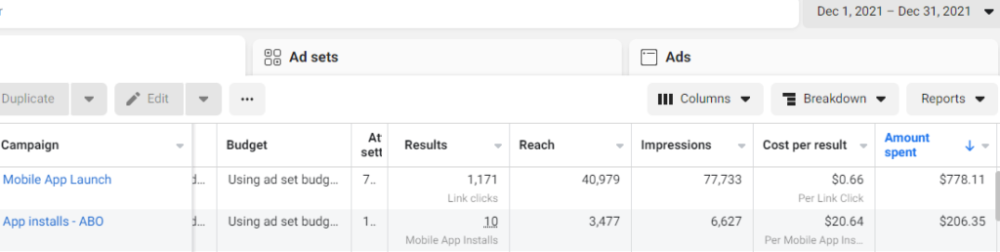

We initiated a Facebook ad campaign with a "awareness" goal, not "installs." This sent them to a landing page that linked to the iPhone App Store and Android Play Store. Smart, right?

We got some installs, but we couldn't tell how many came from the ad versus organic/other channels because the objective we chose only reported landing page clicks, not app installs.

We didn't know which interest groups/audiences had the best cost per install (CPI) to optimize and scale our budget.

After spending $700 without adequate data (installs and trials report), we stopped the campaign and worked with our client's app developer to set up app events tracking.

This allowed us to create an installs campaign and track installs, trials, and purchases (in some cases).

Finding a Successful Audience

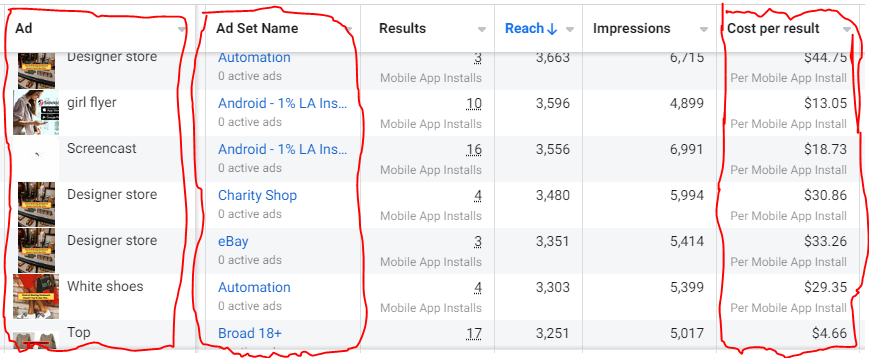

Once we knew what ad sets brought in what installs at what cost, we began optimizing and testing other interest groups and audiences, growing the profitable low CPI ones and eliminating the high CPI ones.

We did all our audience testing using an ABO campaign (Ad Set Budget Optimization), spending $10 to $30 on each ad set for three days and optimizing afterward. All ad sets under $30 were moved to a CBO campaign (Campaign Budget Optimization).

We let Facebook's AI decide how much to spend on each ad set, usually the one most likely to convert at the lowest cost.

If the CBO campaign maintains a nice CPI, we keep increasing the budget by $50 every few days or duplicating it sometimes in order to double the budget. This is how we've scaled to $400/day profitably.

Finding Successful Creatives

Per campaign, we tested 2-6 images/videos. Same ad copy and CTA. There was no clear winner because some images did better with some interest groups.

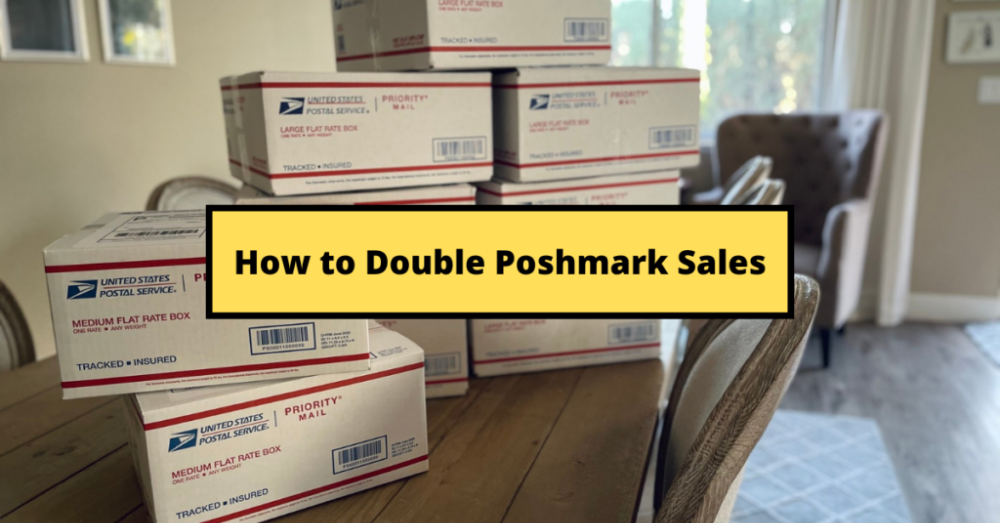

The image above with mail packages, for example, got us a cheap CPI of $9.71 from our Goodwill Stores interest group but, a high $48 CPI from our lookalike audience. Once we had statistically significant data, we turned off the high-cost ad.

New marketers who are just discovering A/B testing may assume it's black and white — winner and loser. However, Facebook ads' machine learning and reporting has gotten so sophisticated that it's hard to call a creative a flat-out loser, but rather a 'bad fit' for some audiences, and perfect for others.

You can see how each creative performs across age groups and optimize.

How Many Installs Did It Take Us to Earn $15K Per Month?

Six months after paying $25K, we got 1,940 app installs, 681 free trials, and 522 $30 monthly subscriptions. 522 * $30 gives us $15,660 in monthly recurring revenue (MRR).

Next, what? $100K per month

The conversation above is with the app's owner. We got on a 30-minute call where I shared how I plan to get the app to be making $100K a month like I’ve done for other businesses.

Reverse Engineering $100K

Formula:

For $100K/month, we need 3,334 people to pay $30/month. 522 people pay that. We need 2,812 more paid users.

522 paid users from 1,940 installs is a 27% conversion rate. To hit $100K/month, we need 10,415 more installs. Assuming...

With a $400 daily ad spend, we average 40 installs per day. This means that if everything stays the same, it would take us 260 days (around 9 months) to get to $100K a month (MRR).

Conclusion

You must market your goods to reach your income objective (without waiting forever). Paid ads is the way to go if you hate knocking on doors or irritating friends and family (who aren’t scalable anyways).

You must also test and optimize different angles, audiences, interest groups, and creatives.

Aaron Dinin, PhD

3 years ago

Are You Unintentionally Creating the Second Difficult Startup Type?

Most don't understand the issue until it's too late.

My first startup was what entrepreneurs call the hardest. A two-sided marketplace.

Two-sided marketplaces are the hardest startups because founders must solve the chicken or the egg conundrum.

A two-sided marketplace needs suppliers and buyers. Without suppliers, buyers won't come. Without buyers, suppliers won't come. An empty marketplace and a founder striving to gain momentum result.

My first venture made me a struggling founder seeking to achieve traction for a two-sided marketplace. The company failed, and I vowed never to start another like it.

I didn’t. Unfortunately, my second venture was almost as hard. It failed like the second-hardest startup.

What kind of startup is the second-hardest?

The second-hardest startup, which is almost as hard to develop, is rarely discussed in the startup community. Because of this, I predict more founders fail each year trying to develop the second-toughest startup than the hardest.

Fairly, I have no proof. I see many startups, so I have enough of firsthand experience. From what I've seen, for every entrepreneur developing a two-sided marketplace, I'll meet at least 10 building this other challenging startup.

I'll describe a startup I just met with its two co-founders to explain the second hardest sort of startup and why it's so hard. They created a financial literacy software for parents of high schoolers.

The issue appears plausible. Children struggle with money. Parents must teach financial responsibility. Problems?

It's possible.

Buyers and users are different.

Buyer-user mismatch.

The financial literacy app I described above targets parents. The parent doesn't utilize the app. Child is end-user. That may not seem like much, but it makes customer and user acquisition and onboarding difficult for founders.

The difficulty of a buyer-user imbalance

The company developing a product faces a substantial operational burden when the buyer and end customer are different. Consider classic firms where the buyer is the end user to appreciate that responsibility.

Entrepreneurs selling directly to end users must educate them about the product's benefits and use. Each demands a lot of time, effort, and resources.

Imagine selling a financial literacy app where the buyer and user are different. To make the first sale, the entrepreneur must establish all the items I mentioned above. After selling, the entrepreneur must supply a fresh set of resources to teach, educate, or train end-users.

Thus, a startup with a buyer-user mismatch must market, sell, and train two organizations at once, requiring twice the work with the same resources.

The second hardest startup is hard for reasons other than the chicken-or-the-egg conundrum. It takes a lot of creativity and luck to solve the chicken-or-egg conundrum.

The buyer-user mismatch problem cannot be overcome by innovation or luck. Buyer-user mismatches must be solved by force. Simply said, when a product buyer is different from an end-user, founders have a lot more work. If they can't work extra, their companies fail.

You might also like

Jack Shepherd

3 years ago

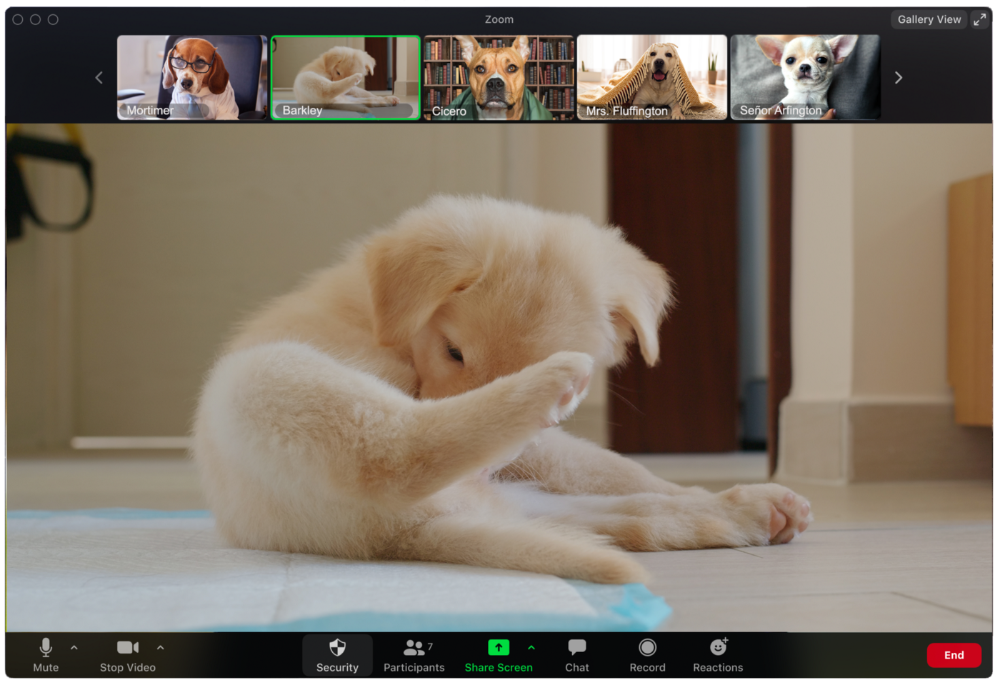

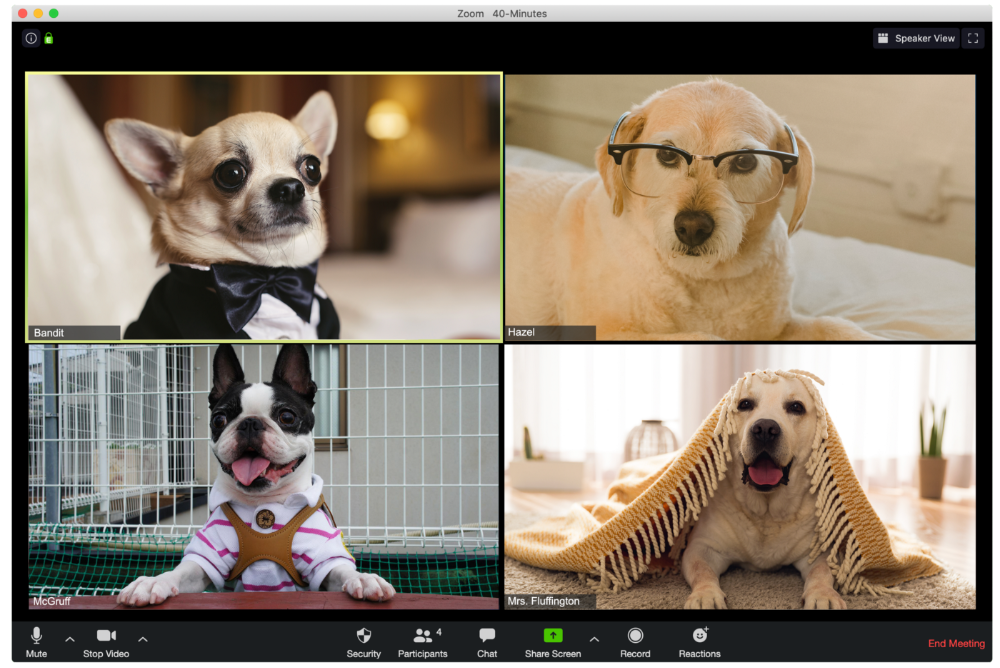

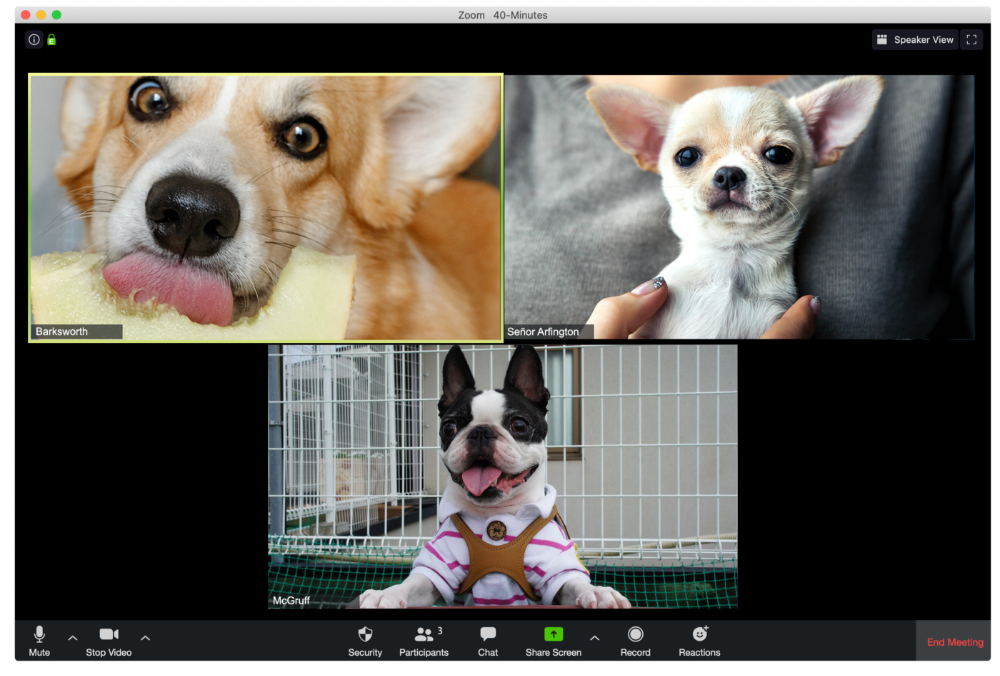

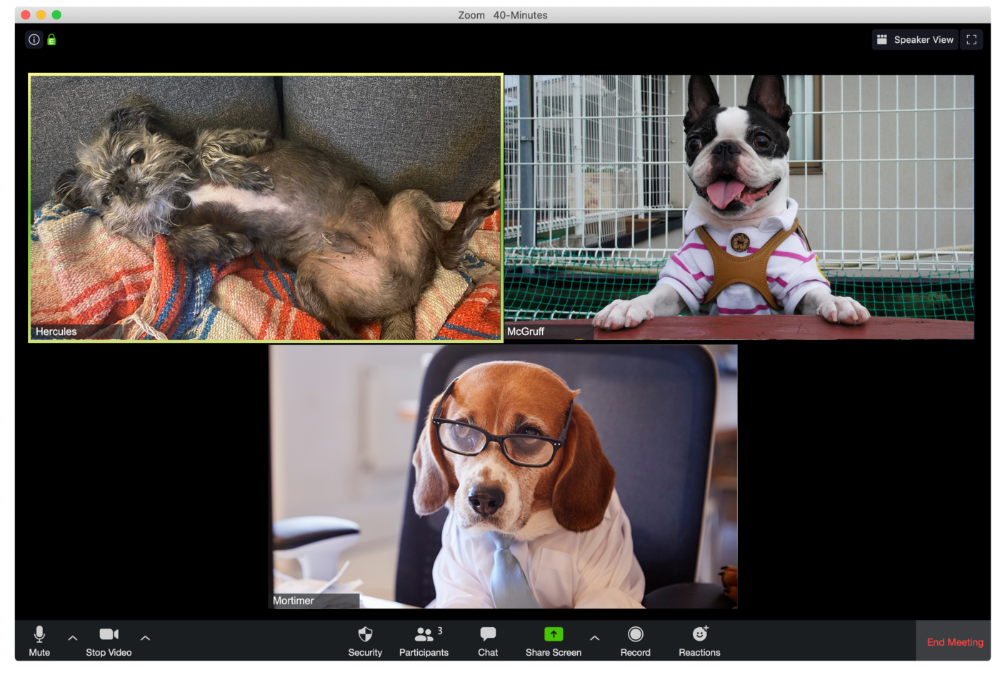

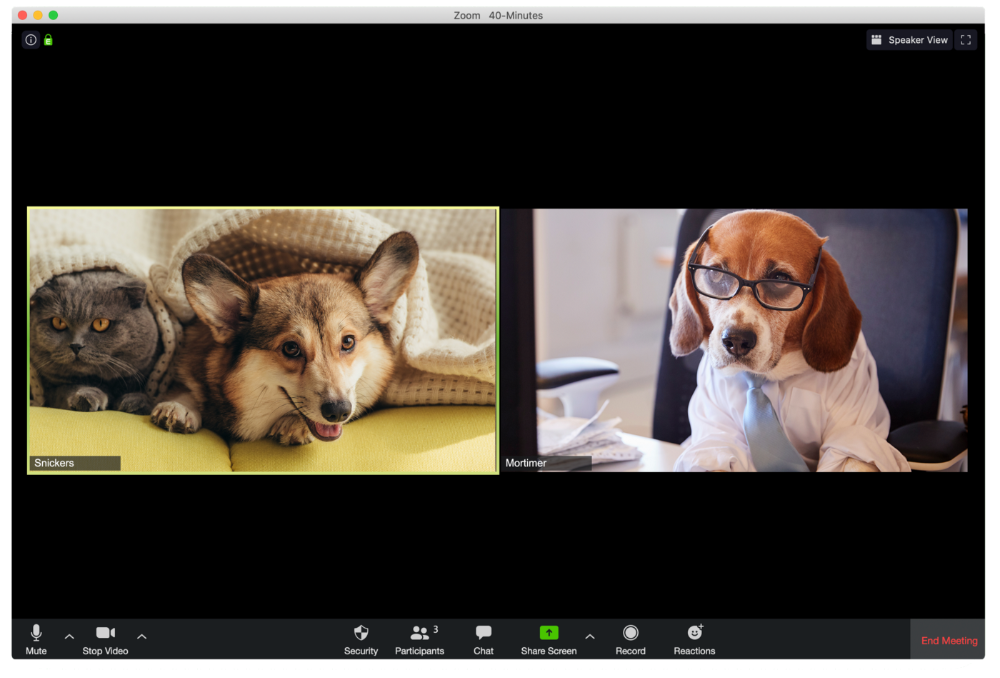

A Dog's Guide to Every Type of Zoom Call Participant

Are you one of these Zoom dogs?

The Person Who Is Apparently Always on Mute

Waffles thinks he can overpower the mute button by shouting loudly.

The person who believed their camera to be off

Barkley's used to remote work, but he hasn't mastered the "Stop Video" button. Everyone is affected.

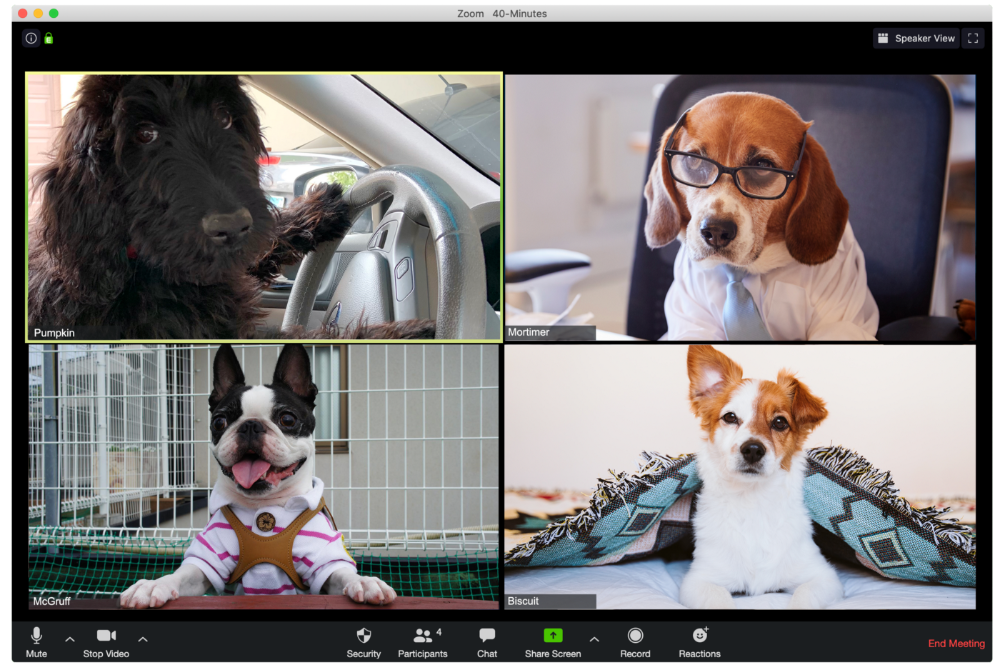

Who is driving for some reason, exactly?

Why is Pumpkin always late? Who knows? Shouldn't she be driving? If you could hear her over the freeway, she'd answer these questions.

The Person With the Amazing Bookcase

Cicero likes to use SAT-words like "leverage" and "robust" in Zoom sessions, presumably from all the books he wants you to see behind him.

The Individual Who Is Unnecessarily Dressed

We hope Bandit is going somewhere beautiful after this meeting, or else he neglected the quarterly earnings report and is overcompensating to distract us.

The person who works through lunch in between zoom calls

Barksworth has back-to-back meetings all day, so you can watch her eat while she talks.

The Person Who Is A Little Too Comfy

Hercules thinks Zoom meetings happen between sleeps. He'd appreciate everyone speaking more quietly.

The Person Who Answered the Phone Outside

Frisbee has a gorgeous backyard and lives in a place with great weather year-round, and she wants you to think about that during the daily team huddle.

Who Wants You to Pay Attention to Their Pet

Snickers hasn't listened to you in 20 minutes unless you tell her how cute her kitten is.

One who is, for some reason, positioned incorrectly on the screen

Nelson's meetings consist primarily of attempting to figure out how he positioned his laptop so absurdly.

The person who says too many goodbyes

Zeus waves farewell like it's your first day of school while everyone else searches for the "Leave Meeting" button. It's nice.

He who has a poor internet connection

Ziggy's connectivity problems continue... She gives a long speech as everyone waits awkwardly to inform her they missed it.

The Clearly Multitasking Person

Tinkerbell can play fetch during the monthly staff meeting if she works from home, but that's not a good idea.

The Person Using Zoom as a Makeup and Hair Mirror

If Gail and Bob knew Zoom had a "hide self view" option, they'd be distraught.

The person who feels at ease with simply leaving

Rusty bails when a Zoom conference is over. Rusty's concept is decent.

Sam Hickmann

3 years ago

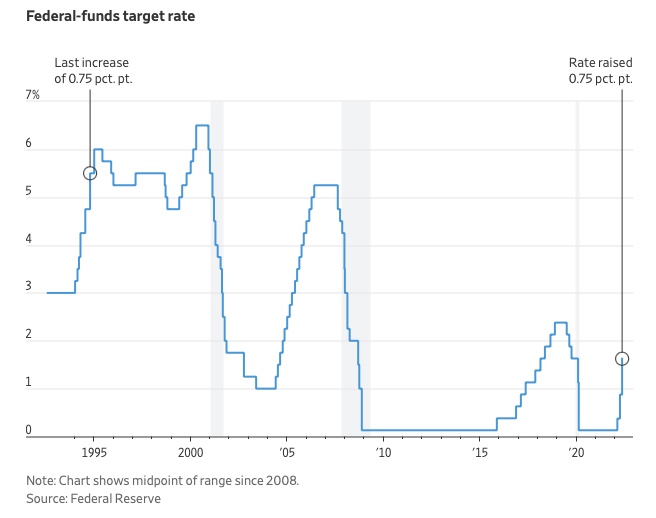

What is this Fed interest rate everybody is talking about that makes or breaks the stock market?

The Federal Funds Rate (FFR) is the target interest rate set by the Federal Reserve System (Fed)'s policy-making body (FOMC). This target is the rate at which the Fed suggests commercial banks borrow and lend their excess reserves overnight to each other.

The FOMC meets 8 times a year to set the target FFR. This is supposed to promote economic growth. The overnight lending market sets the actual rate based on commercial banks' short-term reserves. If the market strays too far, the Fed intervenes.

Banks must keep a certain percentage of their deposits in a Federal Reserve account. A bank's reserve requirement is a percentage of its total deposits. End-of-day bank account balances averaged over two-week reserve maintenance periods are used to determine reserve requirements.

If a bank expects to have end-of-day balances above what's needed, it can lend the excess to another institution.

The FOMC adjusts interest rates based on economic indicators that show inflation, recession, or other issues that affect economic growth. Core inflation and durable goods orders are indicators.

In response to economic conditions, the FFR target has changed over time. In the early 1980s, inflation pushed it to 20%. During the Great Recession of 2007-2009, the rate was slashed to 0.15 percent to encourage growth.

Inflation picked up in May 2022 despite earlier rate hikes, prompting today's 0.75 percent point increase. The largest increase since 1994. It might rise to around 3.375% this year and 3.1% by the end of 2024.

Taher Batterywala

3 years ago

Do You Have Focus Issues? Use These 5 Simple Habits

Many can't concentrate. The first 20% of the day isn't optimized.

Elon Musk, Tony Robbins, and Bill Gates share something:

Morning Routines.

A repeatable morning ritual saves time.

The result?

Time for hobbies.

I'll discuss 5 easy morning routines you can use.

1. Stop pressing snooze

Waking up starts the day. You disrupt your routine by hitting snooze.

One sleep becomes three. Your morning routine gets derailed.

Fix it:

Hide your phone. This disables snooze and wakes you up.

Once awake, staying awake is 10x easier. Simple trick, big results.

2. Drink water

Chronic dehydration is common. Mostly urban, air-conditioned workers/residents.

2% cerebral dehydration causes short-term memory loss.

Dehydration shrinks brain cells.

Drink 3-4 liters of water daily to avoid this.

3. Improve your focus

How to focus better?

Meditation.

Improve your mood

Enhance your memory

increase mental clarity

Reduce blood pressure and stress

Headspace helps with the habit.

Here's a meditation guide.

Sit comfortably

Shut your eyes.

Concentrate on your breathing

Breathe in through your nose

Breathe out your mouth.

5 in, 5 out.

Repeat for 1 to 20 minutes.

Here's a beginner's video:

4. Workout

Exercise raises:

Mental Health

Effort levels

focus and memory

15-60 minutes of fun:

Exercise Lifting

Running

Walking

Stretching and yoga

This helps you now and later.

5. Keep a journal

You have countless thoughts daily. Many quietly steal your focus.

Here’s how to clear these:

Write for 5-10 minutes.

You'll gain 2x more mental clarity.

Recap

5 morning practices for 5x more productivity:

Say no to snoozing

Hydrate

Improve your focus

Exercise

Journaling

Conclusion

One step starts a thousand-mile journey. Try these easy yet effective behaviors if you have trouble concentrating or have too many thoughts.

Start with one of these behaviors, then add the others. Its astonishing results are instant.