More on Technology

Colin Faife

3 years ago

The brand-new USB Rubber Ducky is much riskier than before.

The brand-new USB Rubber Ducky is much riskier than before.

With its own programming language, the well-liked hacking tool may now pwn you.

With a vengeance, the USB Rubber Ducky is back.

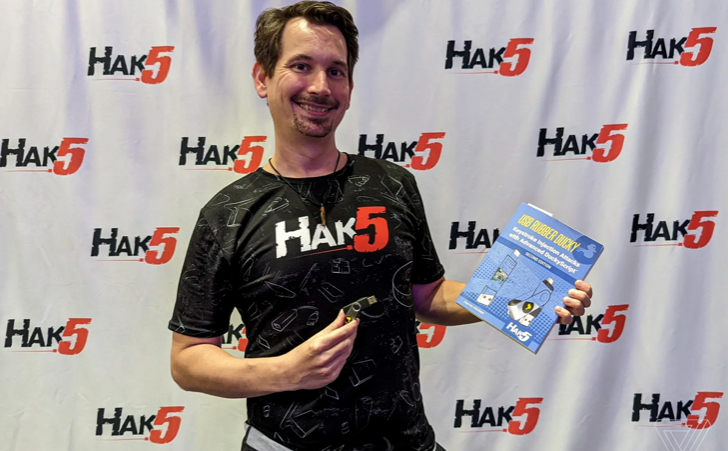

This year's Def Con hacking conference saw the release of a new version of the well-liked hacking tool, and its author, Darren Kitchen, was on hand to explain it. We put a few of the new features to the test and discovered that the most recent version is riskier than ever.

WHAT IS IT?

The USB Rubber Ducky seems to the untrained eye to be an ordinary USB flash drive. However, when you connect it to a computer, the computer recognizes it as a USB keyboard and will accept keystroke commands from the device exactly like a person would type them in.

Kitchen explained to me, "It takes use of the trust model built in, where computers have been taught to trust a human, in that anything it types is trusted to the same degree as the user is trusted. And a computer is aware that clicks and keystrokes are how people generally connect with it.

Over ten years ago, the first Rubber Ducky was published, quickly becoming a hacker favorite (it was even featured in a Mr. Robot scene). Since then, there have been a number of small upgrades, but the most recent Rubber Ducky takes a giant step ahead with a number of new features that significantly increase its flexibility and capability.

WHERE IS ITS USE?

The options are nearly unlimited with the proper strategy.

The Rubber Ducky has already been used to launch attacks including making a phony Windows pop-up window to collect a user's login information or tricking Chrome into sending all saved passwords to an attacker's web server. However, these attacks lacked the adaptability to operate across platforms and had to be specifically designed for particular operating systems and software versions.

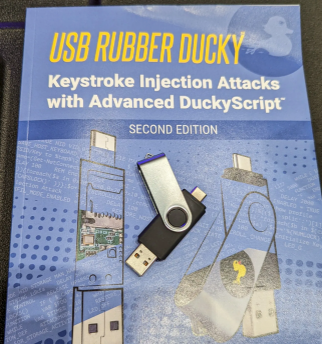

The nuances of DuckyScript 3.0 are described in a new manual.

The most recent Rubber Ducky seeks to get around these restrictions. The DuckyScript programming language, which is used to construct the commands that the Rubber Ducky will enter into a target machine, receives a significant improvement with it. DuckyScript 3.0 is a feature-rich language that allows users to write functions, store variables, and apply logic flow controls, in contrast to earlier versions that were primarily limited to scripting keystroke sequences (i.e., if this... then that).

This implies that, for instance, the new Ducky can check to see if it is hooked into a Windows or Mac computer and then conditionally run code specific to each one, or it can disable itself if it has been attached to the incorrect target. In order to provide a more human effect, it can also generate pseudorandom numbers and utilize them to add a configurable delay between keystrokes.

The ability to steal data from a target computer by encoding it in binary code and transferring it through the signals intended to instruct a keyboard when the CapsLock or NumLock LEDs should light up is perhaps its most astounding feature. By using this technique, a hacker may plug it in for a brief period of time, excuse themselves by saying, "Sorry, I think that USB drive is faulty," and then take it away with all the credentials stored on it.

HOW SERIOUS IS THE RISK?

In other words, it may be a significant one, but because physical device access is required, the majority of people aren't at risk of being a target.

The 500 or so new Rubber Duckies that Hak5 brought to Def Con, according to Kitchen, were his company's most popular item at the convention, and they were all gone on the first day. It's safe to suppose that hundreds of hackers already possess one, and demand is likely to persist for some time.

Additionally, it has an online development toolkit that can be used to create attack payloads, compile them, and then load them onto the target device. A "payload hub" part of the website makes it simple for hackers to share what they've generated, and the Hak5 Discord is also busy with conversation and helpful advice. This makes it simple for users of the product to connect with a larger community.

It's too expensive for most individuals to distribute in volume, so unless your favorite cafe is renowned for being a hangout among vulnerable targets, it's doubtful that someone will leave a few of them there. To that end, if you intend to plug in a USB device that you discovered outside in a public area, pause to consider your decision.

WOULD IT WORK FOR ME?

Although the device is quite straightforward to use, there are a few things that could cause you trouble if you have no prior expertise writing or debugging code. For a while, during testing on a Mac, I was unable to get the Ducky to press the F4 key to activate the launchpad, but after forcing it to identify itself using an alternative Apple keyboard device ID, the problem was resolved.

From there, I was able to create a script that, when the Ducky was plugged in, would instantly run Chrome, open a new browser tab, and then immediately close it once more without requiring any action from the laptop user. Not bad for only a few hours of testing, and something that could be readily changed to perform duties other than reading technology news.

Jussi Luukkonen, MBA

3 years ago

Is Apple Secretly Building A Disruptive Tsunami?

A TECHNICAL THOUGHT

The IT giant is seeding the digital Great Renaissance.

Recently, technology has been dull.

We're still fascinated by processing speeds. Wearables are no longer an engineer's dream.

Apple has been quiet and avoided huge announcements. Slowness speaks something. Everything in the spaceship HQ seems to be turning slowly, unlike competitors around buzzwords.

Is this a sign of the impending storm?

Metas stock has fallen while Google milks dumb people. Microsoft steals money from corporations and annexes platforms like Linkedin.

Just surface bubbles?

Is Apple, one of the technology continents, pushing against all others to create a paradigm shift?

The fundamental human right to privacy

Apple's unusual remarks emphasize privacy. They incorporate it into their business models and judgments.

Apple believes privacy is a human right. There are no compromises.

This makes it hard for other participants to gain Apple's ecosystem's efficiencies.

Other players without hardware platforms lose.

Apple delivers new kidneys without rejection, unlike other software vendors. Nothing compromises your privacy.

Corporate citizenship will become more popular.

Apples have full coffers. They've started using that flow to better communities, which is great.

Apple's $2.5B home investment is one example. Google and Facebook are building or proposing to build workforce housing.

Apple's funding helps marginalized populations in more than 25 California counties, not just Apple employees.

Is this a trend, and does Apple keep giving back? Hope so.

I'm not cynical enough to suspect these investments have malicious motives.

The last frontier is the environment.

Climate change is a battle-to-win.

Long-term winners will be companies that protect the environment, turning climate change dystopia into sustainable growth.

Apple has been quietly changing its supply chain to be carbon-neutral by 2030.

“Apple is dedicated to protecting the planet we all share with solutions that are supporting the communities where we work.” Lisa Jackson, Apple’s vice president of environment.

Apple's $4.7 billion Green Bond investment will produce 1.2 gigawatts of green energy for the corporation and US communities. Apple invests $2.2 billion in Europe's green energy. In the Philippines, Thailand, Nigeria, Vietnam, Colombia, Israel, and South Africa, solar installations are helping communities obtain sustainable energy.

Apple is already carbon neutral today for its global corporate operations, and this new commitment means that by 2030, every Apple device sold will have net zero climate impact. -Apple.

Apple invests in green energy and forests to reduce its paper footprint in China and the US. Apple and the Conservation Fund are safeguarding 36,000 acres of US working forest, according to GreenBiz.

Apple's packaging paper is recycled or from sustainably managed forests.

What matters is the scale.

$1 billion is a rounding error for Apple.

These small investments originate from a tree with deep, spreading roots.

Apple's genes are anchored in building the finest products possible to improve consumers' lives.

I felt it when I switched to my iPhone while waiting for a train and had to pack my Macbook. iOS 16 dictation makes writing more enjoyable. Small change boosts productivity. Smooth transition from laptop to small screen and dictation.

Apples' tiny, well-planned steps have great growth potential for all consumers in everything they do.

There is clearly disruption, but it doesn't have to be violent

Digital channels, methods, and technologies have globalized human consciousness. One person's responsibility affects many.

Apple gives us tools to be privately connected. These technologies foster creativity, innovation, fulfillment, and safety.

Apple has invented a mountain of technologies, services, and channels to assist us adapt to the good future or combat evil forces who cynically aim to control us and ruin the environment and communities. Apple has quietly disrupted sectors for decades.

Google, Microsoft, and Meta, among others, should ride this wave. It's a tsunami, but it doesn't have to be devastating if we care, share, and cooperate with political decision-makers and community leaders worldwide.

A fresh Renaissance

Renaissance geniuses Michelangelo and Da Vinci. Different but seeing something no one else could yet see. Both were talented in many areas and could discover art in science and science in art.

These geniuses exemplified a period that changed humanity for the better. They created, used, and applied new, valuable things. It lives on.

Apple is a digital genius orchard. Wozniak and Jobs offered us fertile ground for the digital renaissance. We'll build on their legacy.

We may put our seeds there and see them bloom despite corporate greed and political ignorance.

I think the coming tsunami will illuminate our planet like the Renaissance.

Gareth Willey

3 years ago

I've had these five apps on my phone for a long time.

TOP APPS

Who survives spring cleaning?

Relax. Notion is off-limits. This topic is popular.

(I wrote about it 2 years ago, before everyone else did.) So).

These apps are probably new to you. I hope you find a new phone app after reading this.

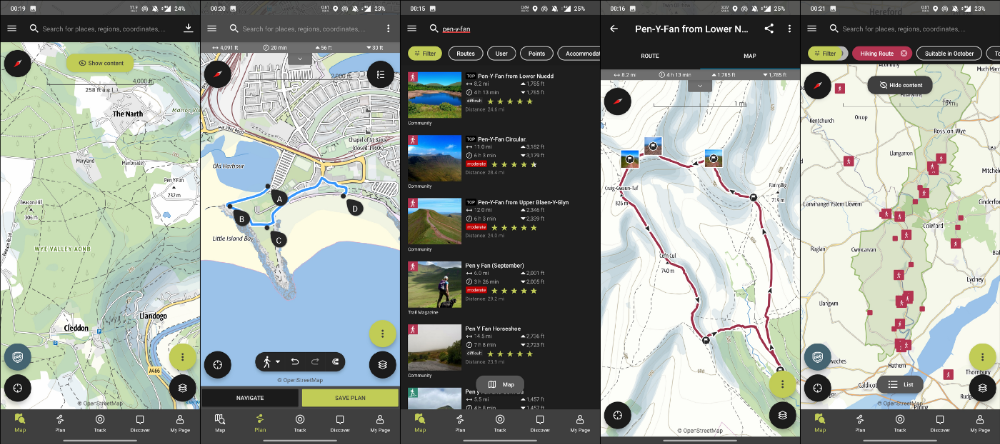

Outdooractive

ViewRanger is Google Maps for outdoor enthusiasts.

This app has been so important to me as a freedom-loving long-distance walker and hiker.

This app shows nearby trails and right-of-ways on top of an Open Street Map.

Helpful detail and data. Any route's distance,

You can download and follow tons of routes planned by app users.

This has helped me find new routes and places a fellow explorer has tried.

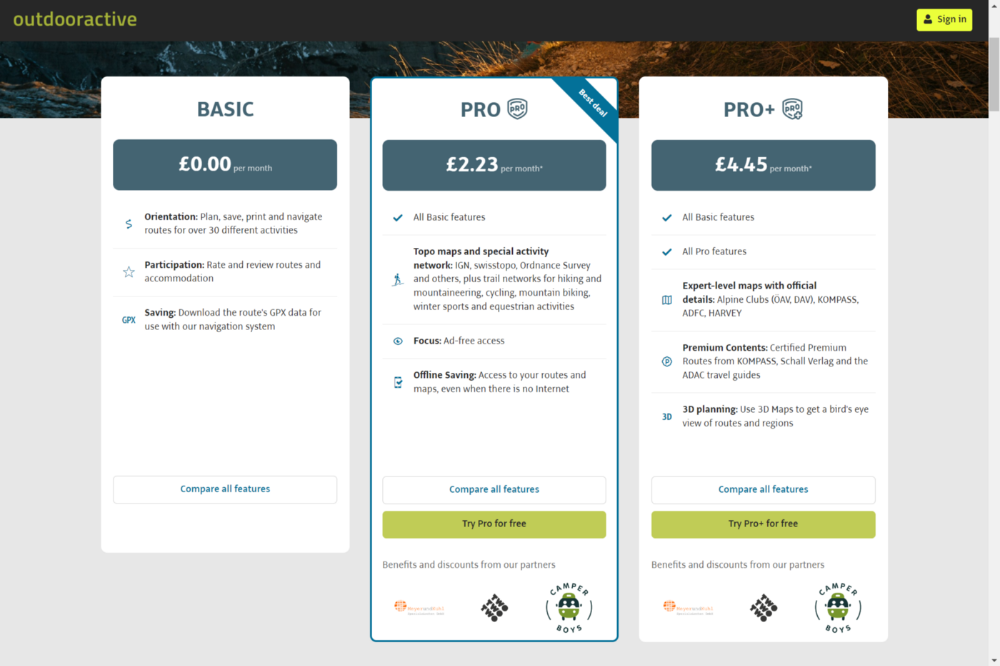

Free with non-intrusive ads. Years passed before I subscribed. Pro costs £2.23/month.

This app is for outdoor lovers.

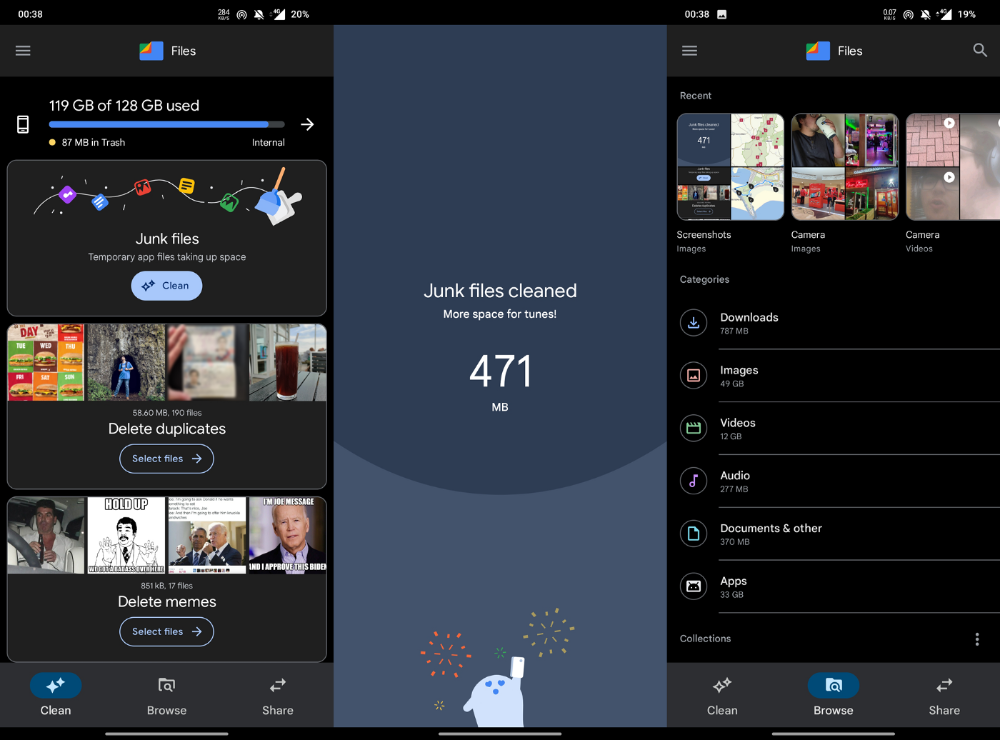

Google Files

New phones come with bloatware. These rushed apps are frustrating.

We must replace these apps. 2017 was Google's year.

Files is a file manager. It's quick, innovative, and clean. They've given people what they want.

It's easy to organize files, clear space, and clear cache.

I recommend Gallery by Google as a gallery app alternative. It's quick and easy.

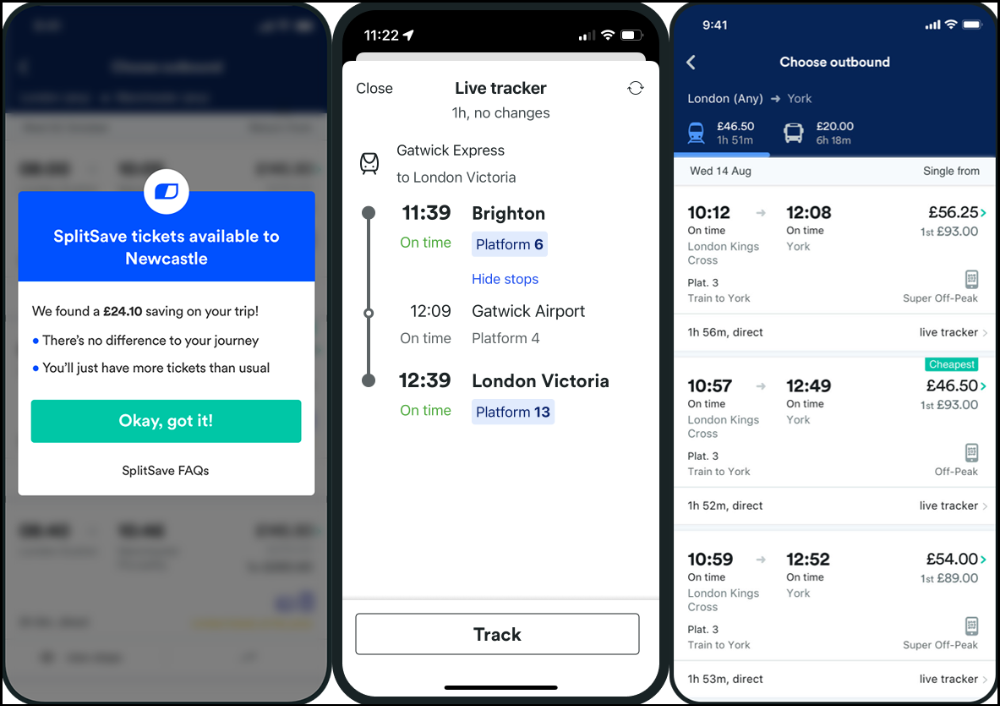

Trainline

App for trains, buses, and coaches.

I've used this app for years. It did the basics well when I first used it.

Since then, it's improved. It's constantly adding features to make traveling easier and less stressful.

Split-ticketing helps me save hundreds a year on train fares. This app is only available in the UK and Europe.

This service doesn't link to a third-party site. Their app handles everything.

Not all train and coach companies use this app. All the big names are there, though.

Here's more on the app.

Battlefield: Mobile

Play Store has 478,000 games. Few can turn my phone into a console.

Call of Duty Mobile and Asphalt 8/9 are examples.

Asphalt's loot boxes and ads make it unplayable. Call of Duty opens with a few ads. Close them to play without hassle.

This game uses all your phone's features to provide a high-quality, seamless experience. If my internet connection is good, I never experience lag or glitches.

The gameplay is energizing and intense, just like on consoles. Sometimes I'm too involved. I've thrown my phone in anger. I'm totally absorbed.

Customizability is my favorite. Since phones have limited screen space, we should only have the buttons we need, placed conveniently.

Size, opacity, and position are modifiable. Adjust audio, graphics, and textures. It's customizable.

This game has been on my phone for three years. It began well and has gotten better. When I think the creators can't do more, they do.

If you play, read my tips for winning a Battle Royale.

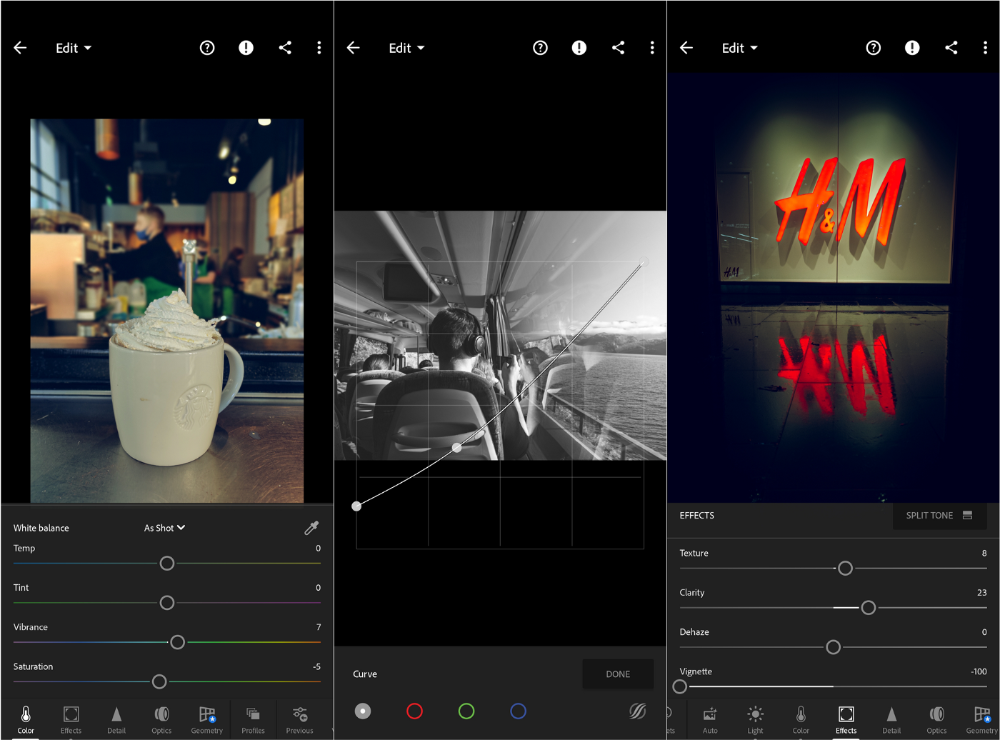

Lightroom

As a photographer, I believe your best camera is on you. The phone.

2017 was a big year for this app. I've tried many photo-editing apps since then. This always wins.

The app is dull. I've never seen better photo editing on a phone.

Adjusting settings and sliders doesn't damage or compress photos. It's detailed.

This is important for phone photos, which are lower quality than professional ones.

Some tools are behind a £4.49/month paywall. Adobe must charge a subscription fee instead of selling licenses. (I'm still bitter about Creative Cloud's price)

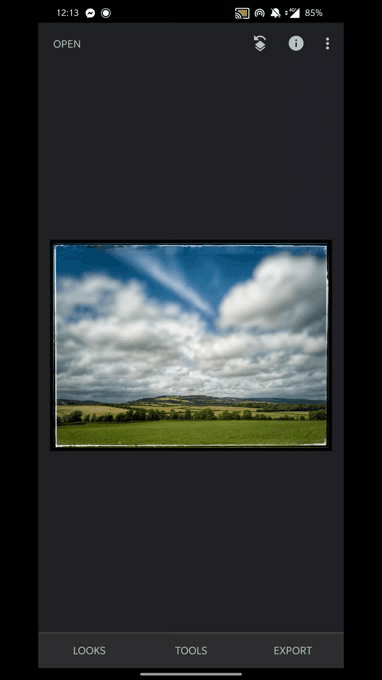

Snapseed is my pick. Lightroom is where I do basic editing before moving to Snapseed. Snapseed review:

These apps are great. They cover basic and complex editing needs while traveling.

Final Reflections

I hope you downloaded one of these. Share your favorite apps. These apps are scarce.

You might also like

Tim Denning

3 years ago

I gave up climbing the corporate ladder once I realized how deeply unhappy everyone at the top was.

Restructuring and layoffs cause career reevaluation. Your career can benefit.

Once you become institutionalized, the corporate ladder is all you know.

You're bubbled. Extremists term it the corporate Matrix. I'm not so severe because the business world brainwashed me, too.

This boosted my corporate career.

Until I hit bottom.

15 months later, I view my corporate life differently. You may wish to advance professionally. Read this before you do.

Your happiness in the workplace may be deceptive.

I've been fortunate to spend time with corporate aces.

Working for 2.5 years in banking social media gave me some of these experiences. Earlier in my career, I recorded interviews with business leaders.

These people have titles like Chief General Manager and Head Of. New titles brought life-changing salaries.

They seemed happy.

I’d pass them in the hallway and they’d smile or shake my hand. I dreamt of having their life.

The ominous pattern

Unfiltered talks with some of them revealed a different world.

They acted well. They were skilled at smiling and saying the correct things. All had the same dark pattern, though.

Something felt off.

I found my conversations with them were generally for their benefit. They hoped my online antics as a writer/coach would shed light on their dilemma.

They'd tell me they wanted more. When you're one position away from CEO, it's hard not to wonder if this next move will matter.

What really displeased corporate ladder chasers

Before ascending further, consider these.

Zero autonomy

As you rise in a company, your days get busier.

Many people and initiatives need supervision. Everyone expects you to know business details. Weak when you don't. A poor leader is fired during the next restructuring and left to pursue their corporate ambition.

Full calendars leave no time for reflection. You can't have a coffee with a friend or waste a day.

You’re always on call. It’s a roll call kinda life.

Unable to express oneself freely

My 8 years of LinkedIn writing helped me meet these leaders.

I didn't think they'd care. Mistake.

Corporate leaders envied me because they wanted to talk freely again without corporate comms or a PR firm directing them what to say.

They couldn't share their flaws or inspiring experiences.

They wanted to.

Every day they were muzzled eroded by their business dream.

Limited family time

Top leaders had families.

They've climbed the corporate ladder. Nothing excellent happens overnight.

Corporate dreamers rarely saw their families.

Late meetings, customer functions, expos, training, leadership days, team days, town halls, and product demos regularly occurred after work.

Or they had to travel interstate or internationally for work events. They used bags and motel showers.

Initially, they said business class flights and hotels were nice. They'd get bored. 5-star hotels become monotonous.

No hotel beats home.

One leader said he hadn't seen his daughter much. They used to Facetime, but now that he's been gone so long, she rarely wants to talk to him.

So they iPad-parented.

You're miserable without your family.

Held captive by other job titles

Going up the business ladder seems like a battle.

Leaders compete for business gains and corporate advancement.

I saw shocking filthy tricks. Leaders would lie to seem nice.

Captives included top officials.

A different section every week. If they ran technology, the Head of Sales would argue their CRM cost millions. Or an Operations chief would battle a product team over support requests.

After one conflict, another began.

Corporate echelons are antagonistic. Huge pay and bonuses guarantee bad behavior.

Overly centered on revenue

As you rise, revenue becomes more prevalent. Most days, you'd believe revenue was everything. Here’s the problem…

Numbers drain us.

Unless you're a closet math nerd, contemplating and talking about numbers drains your creativity.

Revenue will never substitute impact.

Incapable of taking risks

Corporate success requires taking fewer risks.

Risks can cause dismissal. Risks can interrupt business. Keep things moving so you may keep getting paid your enormous salary and bonus.

Restructuring or layoffs are inevitable. All corporate climbers experience it.

On this fateful day, a small few realize the game they’ve been trapped in and escape. Most return to play for a new company, but it takes time.

Addiction keeps them trapped. You know nothing else. The rest is strange.

You start to think “I’m getting old” or “it’s nearly retirement.” So you settle yet again for the trappings of the corporate ladder game to nowhere.

Should you climb the corporate ladder?

Let me end on a surprising note.

Young people should ascend the corporate ladder. It teaches you business skills and helps support your side gig and (potential) online business.

Don't get trapped, shackled, or muzzled.

Your ideas and creativity become stifled after too much gaming play.

Corporate success won't bring happiness.

Find fulfilling employment that matters. That's it.

DC Palter

3 years ago

How Will You Generate $100 Million in Revenue? The Startup Business Plan

A top-down company plan facilitates decision-making and impresses investors.

A startup business plan starts with the product, the target customers, how to reach them, and how to grow the business.

Bottom-up is terrific unless venture investors fund it.

If it can prove how it can exceed $100M in sales, investors will invest. If not, the business may be wonderful, but it's not venture capital-investable.

As a rule, venture investors only fund firms that expect to reach $100M within 5 years.

Investors get nothing until an acquisition or IPO. To make up for 90% of failed investments and still generate 20% annual returns, portfolio successes must exit with a 25x return. A $20M-valued company must be acquired for $500M or more.

This requires $100M in sales (or being on a nearly vertical trajectory to get there). The company has 5 years to attain that milestone and create the requisite ROI.

This motivates venture investors (venture funds and angel investors) to hunt for $100M firms within 5 years. When you pitch investors, you outline how you'll achieve that aim.

I'm wary of pitches after seeing a million hockey sticks predicting $5M to $100M in year 5 that never materialized. Doubtful.

Startups fail because they don't have enough clients, not because they don't produce a great product. That jump from $5M to $100M never happens. The company reaches $5M or $10M, growing at 10% or 20% per year. That's great, but not enough for a $500 million deal.

Once it becomes clear the company won’t reach orbit, investors write it off as a loss. When a corporation runs out of money, it's shut down or sold in a fire sale. The company can survive if expenses are trimmed to match revenues, but investors lose everything.

When I hear a pitch, I'm not looking for bright income projections but a viable plan to achieve them. Answer these questions in your pitch.

Is the market size sufficient to generate $100 million in revenue?

Will the initial beachhead market serve as a springboard to the larger market or as quicksand that hinders progress?

What marketing plan will bring in $100 million in revenue? Is the market diffuse and will cost millions of dollars in advertising, or is it one, focused market that can be tackled with a team of salespeople?

Will the business be able to bridge the gap from a small but fervent set of early adopters to a larger user base and avoid lock-in with their current solution?

Will the team be able to manage a $100 million company with hundreds of people, or will hypergrowth force the organization to collapse into chaos?

Once the company starts stealing market share from the industry giants, how will it deter copycats?

The requirement to reach $100M may be onerous, but it provides a context for difficult decisions: What should the product be? Where should we concentrate? who should we hire? Every strategic choice must consider how to reach $100M in 5 years.

Focusing on $100M streamlines investor pitches. Instead of explaining everything, focus on how you'll attain $100M.

As an investor, I know I'll lose my money if the startup doesn't reach this milestone, so the revenue prediction is the first thing I look at in a pitch deck.

Reaching the $100M goal needs to be the first thing the entrepreneur thinks about when putting together the business plan, the central story of the pitch, and the criteria for every important decision the company makes.

Katherine Kornei

3 years ago

The InSight lander from NASA has recorded the greatest tremor ever felt on Mars.

The magnitude 5 earthquake was responsible for the discharge of energy that was 10 times greater than the previous record holder.

Any Martians who happen to be reading this should quickly learn how to duck and cover.

NASA's Jet Propulsion Laboratory in Pasadena, California, reported that on May 4, the planet Mars was shaken by an earthquake of around magnitude 5, making it the greatest Marsquake ever detected to this point. The shaking persisted for more than six hours and unleashed more than ten times as much energy as the earthquake that had previously held the record for strongest.

The event was captured on record by the InSight lander, which is operated by the United States Space Agency and has been researching the innards of Mars ever since it touched down on the planet in 2018 (SN: 11/26/18). The epicenter of the earthquake was probably located in the vicinity of Cerberus Fossae, which is located more than 1,000 kilometers away from the lander.

The surface of Cerberus Fossae is notorious for being broken up and experiencing periodic rockfalls. According to geophysicist Philippe Lognonné, who is the lead investigator of the Seismic Experiment for Interior Structure, the seismometer that is onboard the InSight lander, it is reasonable to assume that the ground is moving in that area. "This is an old crater from a volcanic eruption."

Marsquakes, which are similar to earthquakes in that they give information about the interior structure of our planet, can be utilized to investigate what lies beneath the surface of Mars (SN: 7/22/21). And according to Lognonné, who works at the Institut de Physique du Globe in Paris, there is a great deal that can be gleaned from analyzing this massive earthquake. Because the quality of the signal is so high, we will be able to focus on the specifics.