Why I quit a $500K job at Amazon to work for myself

I quit my 8-year Amazon job last week. I wasn't motivated to do another year despite promotions, pay, recognition, and praise.

In AWS, I built developer tools. I could have worked in that field forever.

I joined Amazon as a developer. Within 3.5 years, I was promoted twice to senior engineer, and I would have been promoted to principal engineer if I had stayed. The company said I had great potential.

Over time, I became a recognized expert and leader within the company. I was respected.

In my first year I made $75K; last year I made $511K. If I had stayed another two years, I could have made $1M.

Despite Amazon's reputation, my work–life balance was good. I no longer needed to prove myself and could do everything in 40 hours a week. My team worked from home once a week, and I rarely opened my laptop nights or weekends.

My coworkers were great. I had three generous, empathetic managers. I’m very grateful to everyone I worked with.

Everything was going well and getting better. Yet my motivation to go to work each morning was declining despite my career and income growth.

Another promotion, pay raise, or big project wouldn't have boosted my motivation. Something else was waning too: my freedom.

Demotivation

My motivation was high in the beginning. I worked with one other person on an internal tool that drew little scrutiny. I had more freedom to choose how and what to work on than in recent years. The two of us improved it, tested it, released updates, and talked to users. Whatever we wanted, we did. We did our best and were mostly self-directed.

In recent years, things changed. My department's most important project had many stakeholders and complex goals. What I could do depended on my ability to convince others it was the best way to achieve our goals.

At Amazon, I was always working on someone else's terms. The terms started out simple (keep fixing it), but became more complex over time (maximize all goals; satisfy all stakeholders). Working in a large organization imposed restrictions on how to do the work, what to do, what goals to set, and what business to pursue. This situation forced me to do things I didn't want to do.

Finding New Motivation

What would I do forever? Not something I did until I reached a milestone (an exit), but something I'd do until I'm 80. What could I do for the next 45 years that would make me excited to wake up and pay my bills? Is that too unambitious? Nope. Because I'm motivated by two things.

One is external: a carrot or a stick. I don't enjoy filing my taxes every April, but I do it because I don't want to go to jail. Or I may not like something but do it anyway because I need to pay the bills or want a nice car. This is extrinsic motivation.

The other is internal. It is what motivates me when there's no carrot or stick. It fuels hobbies. This is intrinsic motivation, and I wanted work that I was intrinsically motivated to do.

Is this too low-key? Extrinsic motivation isn't sustainable. Getting promoted felt good for a week, then it was over. When I hit $100K, I admired my W2 for a few days, but then it wore off. Same thing happened at $200K, $300K, $400K, and $500K. Earning $1M or $10M wouldn't change anything. I feel the same about every material reward or possession. Getting them feels good at first, but quickly fades.

Things I've done since I was a kid, when no one forced me to, don't wear off. Coding, selling my creations, charting my own path, and being honest. Why not always use my strengths and motivation? I'm lucky to live in a time when I can work independently in my field without large investments. So that’s what I’m doing.

What’s Next?

I'm going all-in on independence and will try to make a living from scratch. I won't get to do only what I like, but I will be working on my terms. My goal is to cover my family's expenses before my savings run out while doing something I enjoy. What more could I want from my work?

You can now follow me on Twitter as I continue to document my journey.

This post is a summary. Read full article here

More on Personal Growth

Alex Mathers

3 years ago

12 practices of the calmest people I know

Calmness is a vital life skill.

It aids communication. It boosts creativity and performance.

I've studied calm people's habits for years. Commonalities:

Have learned to laugh at themselves.

Those who have something to protect can’t help but make it a very serious business, which drains the energy out of the room.

They are fixated on positive pursuits like making cool things, building a strong physique, and having fun with others rather than on depressing influences like the news and gossip.

Every day, spend at least 20 minutes moving, whether it's walking, yoga, or lifting weights.

Discover ways to take pleasure in life's challenges.

Since perspective is malleable, they change their view.

Set your own needs first.

Stressed people neglect themselves and wonder why they struggle.

Prioritize self-care.

Don't ruin your life to please others.

Make something.

Calm people create more than react.

They love creating beautiful things—paintings, children, relationships, and projects.

Don’t hold their breath.

If you're stressed or angry, you may be surprised how much time you spend holding your breath and tightening your belly.

Release, breathe, and relax to find calm.

Stopped rushing.

Rushing is disadvantageous.

Calm people handle life better.

Are attuned to their personal dietary needs.

They avoid junk food and eat foods that keep them healthy, happy, and calm.

Don’t take anything personally.

Stressed people try to control everything.

They are self-conscious.

Calm people put others and their work first.

Keep their surroundings neat.

Maintaining an uplifting and clutter-free environment daily calms the mind.

Minimise negative people.

Calm people are ruthless with their boundaries and avoid negative and drama-prone people.

Jari Roomer

3 years ago

After 240 articles and 2.5M views on Medium, 9 Raw Writing Tips

Late in 2018, I published my first Medium article, but I didn't start writing seriously until 2019. Since then, I've written more than 240 articles, earned over $50,000 through Medium's Partner Program, and had over 2.5 million page views.

Write A Lot

Most people don't have the patience and persistence for this simple writing secret:

Write + Write + Write = possible success

Writing more improves your skills.

The more articles you publish, the more likely one will go viral.

If you only publish once a month, you'll get few views. If you publish 10 or 20 articles a month, your odds of success increase roughly 10- or 20-fold.

Consider Tim Denning, Ayodeji Awosika, Megan Holstein, and Zulie Rane. Medium is their jam. What do these authors have in common? They're productive and consistent. They're prolific.

80% is publishable

Many writers battle perfectionism.

To succeed as a writer, you must publish often. You'll never publish if you aim for perfection.

Adopt the 80 percent-is-good-enough mindset to publish more. It sounds terrible, but it'll boost your writing success.

First, your work will never be perfect. There is always something to improve. If you wait for perfection before publishing, you will wait a long time.

Second, readers are your true critics, not you. What you consider "not perfect" may be life-changing for the reader. Don't let perfectionism hinder the reader.

You don't want to publish mediocre articles. But when the article is 80% done, publish it. Don't spend hours editing. Release it. Get feedback. Only this will work.

Make Your Headline Irresistible

We all judge books by their covers, despite the saying. And headlines. Readers, including yourself, judge articles by their titles. We use it to decide if an article is worth reading.

Make your headlines irresistible. Want more article views? Then, whether you like it or not, write an attractive article title.

Many high-quality articles are collecting dust because of dull, vague headlines. They didn't make the reader click.

As a writer, you must do more than produce quality content. You must also make people click on your article. This is a writer's job. How to create irresistible headlines:

Curiosity makes readers click. Here's a tempting example...

Example: What Women Actually Look For in a Guy, According to a Huge Study by Luba Sigaud

Use numbers: list-style headlines attract clicks. I mean, which article would you click first? ‘Some ways to improve your productivity’ or ‘17 ways to improve your productivity’? I know which one I would click.

Example: 9 Uncomfortable Truths You Should Accept Early in Life by Sinem Günel

Most headlines are dull. If you want clicks, get 'sexy'. Use buzzwords. Invoke emotion. Use trendy words.

Example: 20 Realistic Micro-Habits To Live Better Every Day by Amardeep Parmar

Concise paragraphs

Our culture lacks focus. If your headline gets a click, keep paragraphs short to keep readers' attention.

Some writers use 6–8 lines per paragraph, but I prefer 3–4. Longer paragraphs lose readers' interest.

A writer should help the reader finish an article, in my opinion. I consider it a job requirement. You can't force readers to finish an article, but you can make it 'snackable'.

Help readers finish an article with concise paragraphs, interesting subheadings, exciting images, clever formatting, or bold attention grabbers.

Work And Move On

I've learned over the years not to get too attached to my articles. Many writers report a strange phenomenon:

The articles you're most excited about usually bomb, while the ones you're least excited about tend to do well.

This isn't always true, but I've noticed it in my own writing. The higher my hopes for an article, the worse it usually does. The more objective I am, the better an article does.

Let go of a finished article. 40 or 40,000 views, whatever. Now let the article do its job. Onward. Next story. Start another project.

Disregard Haters

Online content creators will encounter haters, whether on YouTube, Instagram, or Medium. More views equal more haters. Fun, right?

As a web content creator, I learned:

Don't debate haters. Never.

It's a mistake I've made several times. It's tempting to prove haters wrong, but they'll always find a way to be 'right'. Your response is their fuel.

I smile and ignore hateful comments. I'm indifferent. I won't enter a negative environment. I have goals, money, and a life to build. "I'm not paid to argue," Drake once said.

Use Grammarly

Grammarly saves me as a non-native English speaker. You know Grammarly. It shows writing errors and makes article suggestions.

As a writer, you need Grammarly. I have a paid plan, but their free version works. It improved my writing greatly.

Put The Reader First, Not Yourself

Many writers write for themselves. They focus on themselves rather than the reader.

Ask yourself:

What does this article teach the reader? How can it entertain or educate them?

Personal examples and experiences improve writing quality, but don't make the article about yourself.

It's not about you, the content creator. It's about the reader. Putting the reader first will change things.

Extreme ownership: Stop blaming others

I remember writing a lot on Medium but not getting many views. I blamed Medium first. Poor algorithm. Poor publishing. All sucked.

Instead of looking at what I could do better, I blamed others.

When you blame others, you lose power. Owning your results gives you power.

As a content creator, you must take full responsibility. Extreme ownership means 100% responsibility for work and results.

You don’t blame others. You don't blame the economy, president, platform, founders, or audience. Instead, you look for ways to improve. Few people can do this.

Blaming is useless. Zero. Taking ownership of your work and results will help you progress. It makes you smarter, better, and stronger.

Instead of blaming others, you'll learn writing, marketing, copywriting, content creation, productivity, and other skills. Game-changer.

You might also like

Nick Nolan

3 years ago

How to Make $1,037,100 in 4 Months with This Weird Website

One great idea might make you rich.

Imagine having an idea in college that made you a million dollars.

That is exactly what happened in 2005.

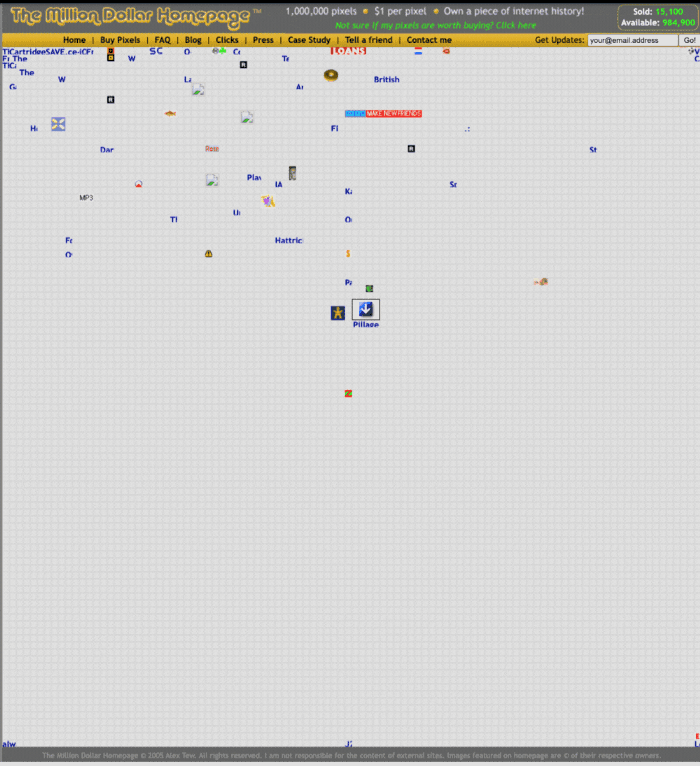

Alex Tew, 21, from Wiltshire, England, created The Million Dollar Homepage in August 2005. The idea is basic but beyond the ordinary, which is why it worked.

Alex built a 1,000,000-pixel webpage.

Each website pixel would cost $1. Since pixels are hard to discern, he sold 10x10 squares for $100.

He'd make a million if all the spots sold.

In a way, he anticipated NFTs and the Metaverse almost two decades early.

MillionDollarHomepage.com launched in 2005.

Businesses and individuals could buy a spot on the website and add their logo, website link, and tagline. You were buying an ad on a website nobody had visited yet.

If a few thousand people visited the website, it could drive traffic to your business's site.

Alex promised buyers the website would be up for 5 years, so it was a safe bet.

Alex's friend with a music website was the first to buy real estate on the site. Within two weeks, 4,700 pixels sold, and a tracker showed how many were sold and available.

Word-of-mouth marketing got the press's attention quickly. Everyone loves reading about new ways to make money, so it was a good news story.

By September, over 250,000 pixels had been sold, according to a BBC press release.

Alex and the website gained more media and public attention, so traffic skyrocketed. Two months after the site launched, 1,400 customers bought more than 500,000 pixels.

Businesses bought online real estate. They heard thousands visited the site, so they could get attention cheaply.

Unless you bought a few squares, I'm not sure how many people would notice your ad or click your link.

A sponge website owner emailed Alex:

“We tried Million Dollar Homepage because we were impressed at the level of ingenuity and the sheer simplicity of it. If we’re honest, we didn’t expect too much from it. Now, as a direct result, we are pitching for £18,000 GBP worth of new clients and have seen our site traffic increase over a hundred-fold. We’re even going to have to upgrade our hosting facility! It’s been exceptional.”

Web.archive.org screenshots show how the website changed.

“The idea is to create something of an internet time capsule: a homepage that is unique and permanent. Everything on the internet keeps changing so fast, it will be nice to have something that stays solid and permanent for many years. You can be a part of that!” Alex Tew, 2005

The last 1,000 pixels were sold on January 1, 2006.

By then, the homepage had hundreds of thousands of monthly visitors. Alex put the last space on eBay due to high demand.

MillionDollarWeightLoss.com won the last pixels for $38,100, bringing revenue to $1,037,100 in 4 months.
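The headline figure can be checked with a few lines of arithmetic. This is only a sketch built from the numbers quoted in the article; the variable names are illustrative, and it assumes every pixel outside the eBay auction sold at the $1 list price.

```python
# Figures taken from the article; names are illustrative only.
GRID_SIDE = 1_000          # the page was 1,000 x 1,000 pixels
PRICE_PER_PIXEL = 1        # dollars
BLOCK_SIDE = 10            # pixels were sold in 10x10 squares
AUCTIONED_PIXELS = 1_000   # the last pixels, auctioned on eBay
AUCTION_PRICE = 38_100     # winning bid in dollars

total_pixels = GRID_SIDE * GRID_SIDE
block_price = BLOCK_SIDE * BLOCK_SIDE * PRICE_PER_PIXEL
total_blocks = total_pixels // (BLOCK_SIDE * BLOCK_SIDE)

# Assumption: every pixel outside the auction sold at the $1 list price.
list_price_revenue = (total_pixels - AUCTIONED_PIXELS) * PRICE_PER_PIXEL
total_revenue = list_price_revenue + AUCTION_PRICE

print(f"{total_blocks:,} blocks at ${block_price} each")
print(f"Total revenue: ${total_revenue:,}")
```

Under that assumption, 999,000 pixels at $1 plus the $38,100 auction gives the $1,037,100 in the title.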

Many have tried to replicate this website's success. They've all failed.

This idea only worked because no one had seen this website before.

This winner won't be repeated, but it should inspire you to try something new and creative.

The site is still popular, and you could buy one of the linked domains. You can't buy pixels, but you can buy an expired domain.

One linked domain I clicked on was listed for $59,888.

You'd own a piece of internet history if you spent that much on a domain.

Someone bought stablesgallery.co.uk after the domain expired and restored it.

Many of the linked websites have expired or been redirected, but some still link to the original. I couldn't find sponge's website. Can you?

This is a great example of how a simple creative idea can go viral.

Comment on this amazing success story.

Jonathan Vanian

4 years ago

What is Terra? Your guide to the hot cryptocurrency

With cryptocurrencies like Bitcoin, Ether, and Dogecoin gyrating in value over the past few months, many people are looking at so-called stablecoins like Terra to invest in because of their more predictable prices.

Terraform Labs, which oversees the Terra cryptocurrency project, has benefited from its rising popularity. The company said recently that investors like Arrington Capital, Lightspeed Venture Partners, and Pantera Capital have pledged $150 million to help it incubate various crypto projects that are connected to Terra.

Terraform Labs and its partners have built apps that operate on the company’s blockchain technology that helps keep a permanent and shared record of the firm’s crypto-related financial transactions.

Here’s what you need to know about Terra and the company behind it.

What is Terra?

Terra is a blockchain project developed by Terraform Labs that powers the startup’s cryptocurrencies and financial apps. These cryptocurrencies include the Terra U.S. Dollar, or UST, that is pegged to the U.S. dollar through an algorithm.

Terra is a stablecoin that is intended to reduce the volatility endemic to cryptocurrencies like Bitcoin. Some stablecoins, like Tether, are pegged to more conventional currencies, like the U.S. dollar, through cash and cash equivalents as opposed to an algorithm and associated reserve token.

To mint new UST tokens, a percentage of another digital token and reserve asset, Luna, is “burned.” If the demand for UST rises with more people using the currency, more Luna will be automatically burned and diverted to a community pool. That balancing act is supposed to help stabilize the price, to a degree.
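To make the mint-and-burn idea concrete, here is a deliberately simplified sketch. It is not Terraform Labs' code: the function name is hypothetical, and it assumes that minting one UST burns one U.S. dollar's worth of Luna at the current Luna price, ignoring fees and the community pool.

```python
def luna_burned_for_mint(ust_to_mint: float, luna_price_usd: float) -> float:
    """Return how much Luna is burned to mint `ust_to_mint` UST.

    Toy assumption: each UST minted burns $1 worth of Luna.
    """
    if ust_to_mint < 0:
        raise ValueError("ust_to_mint must be non-negative")
    if luna_price_usd <= 0:
        raise ValueError("luna_price_usd must be positive")
    return ust_to_mint / luna_price_usd


# Example: minting 1,000 UST while Luna trades at $50 (a made-up price).
burned = luna_burned_for_mint(1_000, 50.0)
print(f"Luna burned: {burned}")
```

In this toy model, rising demand for UST means more minting and therefore more Luna burned, which is the balancing act the paragraph above describes.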

“Luna directly benefits from the economic growth of the Terra economy, and it suffers from contractions of the Terra coin,” Terraform Labs CEO Do Kwon said.

Each time someone buys something—like an ice cream—using UST, that transaction generates a fee, similar to a credit card transaction. That fee is then distributed to people who own Luna tokens, similar to a stock dividend.

Who leads Terra?

The South Korean firm Terraform Labs was founded in 2018 by Daniel Shin and Kwon, who is now the company’s CEO. Kwon is a 29-year-old former Microsoft employee; Shin now heads the Chai online payment service, a Terra partner. Kwon said many Koreans have used the Chai service to buy goods like movie tickets using Terra cryptocurrency.

Terraform Labs does not make money from transactions using its crypto and instead relies on outside funding to operate, Kwon said. It has raised $57 million in funding from investors like HashKey Digital Asset Group, Divergence Digital Currency Fund, and Huobi Capital, according to deal-tracking service PitchBook. The amount raised is in addition to the latest $150 million funding commitment announced on July 16.

What are Terra’s plans?

Terraform Labs plans to use Terra’s blockchain and its associated cryptocurrencies—including one pegged to the Korean won—to create a digital financial system independent of major banks and fintech-app makers. So far, its main source of growth has been in Korea, where people have bought goods at stores, like coffee, using the Chai payment app that’s built on Terra’s blockchain. Kwon said the company’s associated Mirror trading app is experiencing growth in China and Thailand.

Meanwhile, Kwon said Terraform Labs would use its latest $150 million in funding to invest in groups that build financial apps on Terra’s blockchain. He likened scouting and investing in other groups to a “Y Combinator demo day type of situation,” a reference to the popular startup pitch event organized by early-stage investor Y Combinator.

The combination of all these Terra-specific financial apps shows that Terraform Labs is “almost creating a kind of bank,” said Ryan Watkins, a senior research analyst at cryptocurrency consultancy Messari.

In addition to cryptocurrencies, Terraform Labs has a number of other projects including the Anchor app, a high-yield savings account for holders of the group’s digital coins. Meanwhile, people can use the firm’s associated Mirror app to create synthetic financial assets that mimic more conventional ones, like “tokenized” representations of corporate stocks. These synthetic assets are supposed to be helpful to people like “a small retail trader in Thailand” who can more easily buy shares and “get some exposure to the upside” of stocks that they otherwise wouldn’t have been able to obtain, Kwon said. But some critics have said the U.S. Securities and Exchange Commission may eventually crack down on synthetic stocks, which are currently unregulated.

What do critics say?

Terra still has a long way to go to catch up to bigger cryptocurrency projects like Ethereum.

Most financial transactions involving Terra-related cryptocurrencies have originated in Korea, where its founders are based. Although Terra is becoming more popular in Korea thanks to rising interest in its partner Chai, it’s too early to say whether Terra-related currencies will gain traction in other countries.

Terra’s blockchain runs on a “limited number of nodes,” said Messari’s Watkins, referring to the computers that help keep the system running. That helps reduce latency that may otherwise slow processing of financial transactions, he said.

But the tradeoff is that Terra is less “decentralized” than other blockchain platforms like Ethereum, which is powered by thousands of interconnected computing nodes worldwide. That could make Terra less appealing to some blockchain purists.

Jari Roomer

3 years ago

Three Simple Daily Practices That Will Immediately Double Your Output

Most productive people rely on habits.

Early in the day, do important tasks.

In his best-selling book Eat That Frog, Brian Tracy advised starting the day with your hardest, most important activity.

Most individuals work best in the morning. Energy and willpower peak then.

Mornings are also ideal for memory, focus, and problem-solving.

Thus, the morning is ideal for your hardest tasks.

It makes sense to do these things during your peak performance hours.

Additionally, your morning sets the tone for the day. According to Brian Tracy, the first hour of the workday steers the remainder.

After doing your most critical tasks, you may feel accomplished, confident, and motivated for the remainder of the day, which boosts productivity.

Develop Your Essentialism

In Essentialism, Greg McKeown claims that trying to be everything to everyone leads to mediocrity and tiredness.

You'll either burn out, be spread too thin, or compromise your ideals.

Greg McKeown advises Essentialism:

Clarify what’s truly important in your life and eliminate the rest.

Eliminating non-essential duties, activities, and commitments frees up time and energy for what matters most.

According to Greg McKeown, Essentialists live by design, not default.

You'll be happier and more productive if you follow your essentials.

Follow these three steps to live more like an Essentialist.

#1: Know Your Priorities

What matters most clarifies what matters less. List your most significant aims and values.

The clearer your priorities, the more you can focus on them.

In Essentialism, McKeown wrote, “The ultimate form of effectiveness is the ability to deliberately invest our time and energy in the few things that matter most.”

#2: Set Your Priorities in Order

Don't just know your priorities; put them in order.

“If you don’t prioritize your life, someone else will.” — Greg McKeown

Planning each day and allocating enough time for your priorities is the best method to become more purposeful.

#3: Practice saying "no"

If a request or demand conflicts with your aims or principles, you must learn to say no.

Saying no frees up space for our priorities.

Place Sleep Above All Else

Many believe they must forego sleep to be more productive. This is false.

A productive day starts with a good night's sleep.

Matthew Walker (Why We Sleep) says:

“Getting a good night’s sleep can improve cognitive performance, creativity, and overall productivity.”

Sleep helps us learn, remember, and repair.

Unfortunately, 35% of people don't get the recommended 7–9 hours of sleep per night.

Sleep deprivation can cause:

Increased risk of diabetes, heart disease, stroke, and obesity

Increased risk of depression, stress, and anxiety

Decreased overall contentment

Decline in cognitive function

To live an ideal, productive, and healthy life, you must prioritize sleep.

Follow these six sleep optimization strategies to obtain enough sleep:

Establish a nightly ritual to relax and prepare for sleep.

Avoid using screens an hour before bed because the blue light they emit disrupts the generation of melatonin, a necessary hormone for sleep.

Maintain a regular sleep schedule to regulate your body's biological clock (and optimize melatonin production)

Create a peaceful, dark, and cool sleeping environment.

Limit your intake of sweets and caffeine (especially in the hours leading up to bedtime)

Exercise regularly (but not right before you go to bed, because your body temperature will be too high)

Sleep is one of the best ways to boost productivity.

Sleep is crucial, says Matthew Walker. It's the key to good health and longevity.