More on Entrepreneurship/Creators

Tim Denning

3 years ago

One of the biggest publishers in the world offered me a book deal, but I don't feel deserving of it.

My ego is so huge it won't fit through the door.

I don't know how I feel about it. I should be excited. Many of you have this exact dream to publish a book with a well-known book publisher and get a juicy advance.

Let me dissect how I'm thinking about it to help you.

How it happened

An email comes in. A generic "can we put a backlink on your website and get a freebie" email.

Almost deleted it.

Then I noticed the logo. It seemed shady. I found the URL. Check. I searched the employee's LinkedIn. Legit. I avoided middlemen. Check.

Mixed feelings. LinkedIn hasn't valued my writing for years. I'm just a guy in an unironed t-shirt whose content they sell advertising against.

They get big dollars. I get $0 and a few likes, plus some email subscribers.

Still, I felt adrenaline for hours.

I texted a few friends to see how they felt. I wrapped them.

Messages like "No shocker. You're entertaining online." I don't take praise well, so I blushed.

The thrill faded after hours. Who knows?

Most authors desire this chance.

"You entitled piece of crap, Denning!"

You may think so. Okay. My job is to stand on the internet and get bananas thrown at me.

I approached writing backwards. More important than a book deal was a social media audience converted to an email list.

Romantic authors think backward. They hope a fantastic book will land them a deal and an audience.

Rarely occurs. So I never pursued it. It's like permission-seeking or the lottery.

Not being a professional writer, I've never written a good book. I post online for fun and to express my opinions.

Writing is therapeutic. I overcame mental illness and rebuilt my life this way. Without blogging, I'd be dead.

I've always dreamed of staying alive and doing something I love, not getting a book contract. Writing is my passion. I'm a winner without a book deal.

Why I was given a book deal

You may assume I received a book contract because of my views or follows. Nope.

They gave me a deal because they like my writing style. I've heard this for eight years.

Several authors agree. One asked me to improve their writer's voice.

Takeaway: highlight your writer's voice.

What if they discover I'm writing incompetently?

A published book is an edited book.

That concerns me, because I still need to master writing mechanics. I need help with commas and sentence construction.

I must learn what a verb, a noun, and an adjective are. Seriously.

Writing a book may reveal my imposter status to a famous publisher. Imagine the email:

"It happened again. He doesn't even know how to spell. He thinks 'less' is the correct word, not 'fewer.' Are you sure we should publish his book?"

Fears stink.

I'm capable of blogging. Even listicles. So what?

Writing for a major publisher feels advanced.

I only blog. I'm good at listicles. Digital media executives have criticized me for this.

It is allegedly clickbait.

Or it is following trends.

Alternately, growth hacking.

Never. I learned copywriting to improve my writing.

Apple, Amazon, and Tesla utilize copywriting to woo customers. Whoever thinks otherwise is the wisest person in the room.

Old-schoolers loathe copywriters.

Their novels sell nothing.

They assume their elitist version of writing is better and that the TikTok generation will invest time in random writing with no subheadings and massive walls of text they can't read on their phones.

I'm terrified of book proposals.

The feedback on my friend's book proposal was contradictory and made no sense.

They told him to write in a different genre. This book got three Amazon reviews. Is that a good model?

The process disappointed him. I've heard other book proposal horror stories. Tim Ferriss' book "The 4-Hour Workweek" was criticized.

Because he has thick skin, his book came out. He wouldn't be known without that.

I hate book proposals.

An ongoing commitment

Writing a book is time-consuming.

I appreciate time most. I want to focus on my daughter for the next few years. I can't recreate her childhood because of a book.

No idea how parents balance kids and goals.

My silly face in a bookstore. Really?

Genuine thought.

I don't want my face in bookstores. I fear fame. I prefer anonymity.

I want to purchase a property in a bad Australian area, then piss off and play drums. Is bookselling worth it?

Are there even bookstores anymore?

(Except for Ryan Holiday's legendary Painted Porch Bookshop in Texas.)

What's most important about books

Many were duped.

They think tweets and TikTok hopscotch vids are the future. Short-form content creates devoted audiences that buy newsletter subscriptions.

Books=depth.

Depth wins (if you can get people to buy your book). Creating a book will strengthen my reader relationships.

It's cheaper than my classes, so more people can benefit from my life lessons.

A deeper justification for writing a book

Mind wandered.

If I write this book, my daughter will follow it. "Look what you can do, love, when you ignore critics."

That's my favorite.

I'll be her best leader and teacher. If her dad can accomplish this, she can too.

My kid can read my book when I'm gone to remember her loving father.

That last paragraph made me cry.

The positive

This book thing might make me sound like a Karen.

The upside is... Building in public, like I have with online writing, attracts the right people.

Proof-of-work beats proposals, beautiful words, or huge aspirations. If you want a book deal, try writing online instead of going the old route.

Next steps

No idea.

I'm a rural Aussie. Writing a book in the big city is intimidating. Will I do it? Lots to think about. Right now, some level of reflection and gratitude feels most appropriate.

Sometimes when you don't feel worthy, it gives you the greatest lessons. That's how I feel about getting offered this book deal.

Perhaps you can relate.

Alex Mathers

3 years ago

400 articles later, nobody bothered to read them.

Writing for readers:

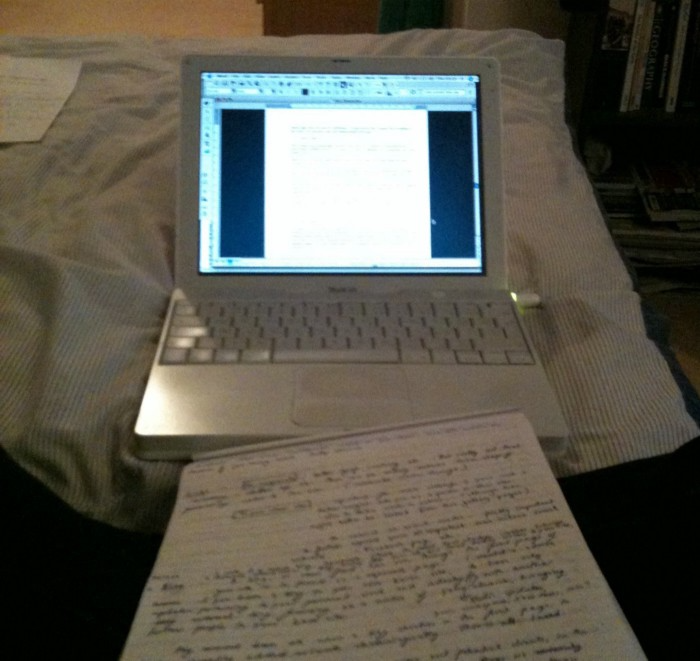

14 years of daily writing.

I post practically everything on social media. For years I wrote hundreds of articles, thousands of tweets, and numerous books for almost no one.

Today, tens of thousands of readers regularly praise my work.

I used to despise writing. Now I'm hooked.

I've learned what readers like and what they don't.

Here are some essential guidelines for writing with impact:

Readers won't understand your work if you can't.

Though obvious, this tripped me up. Share your truths.

Stories engage human brains.

Showing the journey of a person from worm to butterfly inspires the human spirit.

Overthinking hinders powerful writing.

The best ideas come from inner understanding in between thoughts.

Don't think your way to it. Write.

Writing a masterpiece isn't motivating.

Simplify: write for five minutes. Take small, fun, easy steps.

Good writing requires a willingness to make mistakes.

So write loads of garbage that you can edit into a good piece.

Courageous writing.

A courageous story will move readers. Personal experience is best.

Go where few dare.

Templates, outlines, and boundaries help.

Limitations enhance writing.

Excellent writing is straightforward and readable, removing all the unnecessary fat.

Use five words instead of nine.

Use ordinary words instead of uncommon ones.

Readers desire relatability.

Too much perfection will turn them off.

Write to solve an issue if you can't think of anything to write.

Or read for inspiration. The best authors read.

Every tweet, thread, and novel must have a central idea.

What's its point?

Without one, writing gets confusing.

Don't direct your reader.

Readers quit reading. Demonstrate, describe, and relate.

Even if no one responds, have fun. If you hate writing it, the reader will too.

Sammy Abdullah

3 years ago

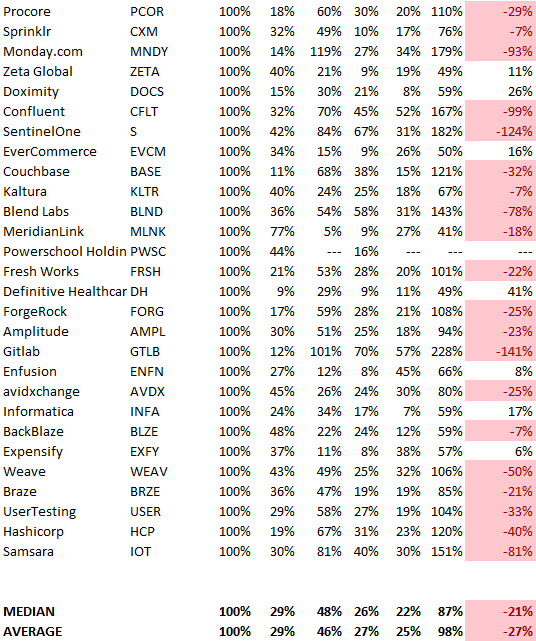

R&D, S&M, and G&A expense ratios for SaaS

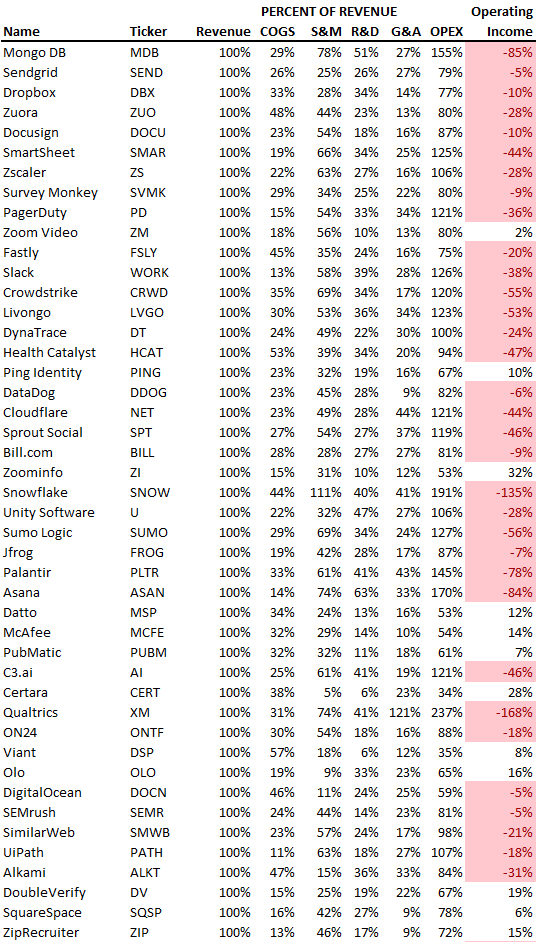

The rule of thumb for SaaS spending is 40/40/20: 40% of operating expenses should go to R&D, 40% to sales and marketing, and 20% to G&A. We wanted to see the statistics behind the rule of thumb, so we looked at the 73 SaaS startups that have gone public since October 2017. The data, shown below, suggests the rule of thumb should perhaps be 30/50/20.

30/50/20. R&D accounts for 26% of opex, sales and marketing 48%, and G&A 22%. We think R&D/S&M/G&A should be 30/50/20.
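To check a company against the split discussed above, you only need each category's share of total operating expenses. The snippet below is a minimal sketch of that arithmetic; the function name `opex_split` and the dollar figures are hypothetical and do not come from the dataset.

```python
def opex_split(rd, sm, ga):
    """Return each category's share of total operating expenses, in percent."""
    total = rd + sm + ga
    if total <= 0:
        raise ValueError("total operating expenses must be positive")
    return {
        "R&D": 100 * rd / total,
        "S&M": 100 * sm / total,
        "G&A": 100 * ga / total,
    }


# Hypothetical company (illustrative numbers only): $30M R&D, $50M S&M, $20M G&A.
split = opex_split(30.0, 50.0, 20.0)
for category, share in split.items():
    print(f"{category}: {share:.1f}% of opex")
```

Shares are computed against total opex rather than revenue, which matches how the rule of thumb is stated.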

There are outliers. There are exceptions to rules of thumb. Dropbox spent 45% on R&D whereas Zoom spent 13%. Zoom spent 73% on S&M, Dropbox 37%, and Bill.com 28%. Snowflake spent 130% of revenue on S&M, while their EBITDA margin is -192%.

G&A shouldn't stand out. Minimize G&A spending. Priorities should be product development and sales. Cloudflare, Sendgrid, Snowflake, and Palantir spend 36%, 34%, 37%, and 43% on G&A, respectively.

Another myth is that COGS is 20% of revenue. The median and average are both 29%.

Where is the profitability? We used a simplified operating income calculation (Revenue - COGS - R&D - S&M - G&A). Only 20 of the 73 IPO businesses reported positive operating income. Median and average operating income margins are -21% and -27%.
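The simplified operating income calculation is revenue minus COGS, R&D, S&M, and G&A, and the margin is that result divided by revenue. Here is a small sketch of it; `operating_margin` is a hypothetical helper, and the inputs are made-up figures for one imaginary company, not data from the 73 IPOs.

```python
def operating_margin(revenue, cogs, rd, sm, ga):
    """Return (operating income, operating margin in percent).

    Uses the simplified definition: revenue - COGS - R&D - S&M - G&A.
    """
    if revenue <= 0:
        raise ValueError("revenue must be positive")
    operating_income = revenue - cogs - rd - sm - ga
    return operating_income, 100 * operating_income / revenue


# Hypothetical company (illustrative numbers only), all figures in $M.
income, margin = operating_margin(
    revenue=100.0, cogs=29.0, rd=25.0, sm=45.0, ga=22.0
)
print(f"Operating income: {income:.1f}M, margin: {margin:.1f}%")
```

A negative margin here simply means the four expense lines add up to more than revenue, which is the unprofitable-but-growing pattern the article describes.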

As long as you're growing fast, have outstanding retention, and marquee clients, you can burn cash since recurring income that doesn't churn is a valuable annuity.

The data was compelling overall. 30/50/20 is the new 40/40/20 for more established SaaS enterprises, unprofitability is alright as long as your business is expanding, and COGS can be somewhat more than 20% of revenue.

You might also like

Christianlauer

3 years ago

Looker Studio Pro is now generally available, according to Google.

Great News about the new Google Business Intelligence Solution

Google has renamed Data Studio to Looker Studio and Looker Studio Pro.

Now, Google releases Looker Studio Pro. Similar to the move from Data Studio to Looker Studio, Looker Studio Pro is basically what Looker was previously, but both solutions will merge. Google says the Pro edition will acquire new enterprise management features, team collaboration capabilities, and SLAs.

![Dashboard Example in Looker Studio Pro — Image Source: Google[2]](https://storage.googleapis.com/int3grity/posts/m9yb4IqJCm7D/images/ZuJudlWT6GUeTKKNVduA5)

Beyond Google's announcement, the new features include:

Looker Studio assets can now have organizational ownership. Customers can link Looker Studio to a Google Cloud project and migrate existing assets once. This provides:

Your users' created Looker Studio assets are all kept in a Google Cloud project.

When the users who own assets leave your organization, the assets won't be removed.

Using IAM, you can grant project-level permissions on every Looker Studio asset in your organization.

Other Cloud services can access Looker Studio assets that are owned by a Google Cloud project.

Looker Studio Pro clients may now manage report and data source access at scale using team workspaces.

It is fascinating that Google is announcing these features for the Pro version. Both products will likely converge, but Google may release many future features only in the premium version. Microsoft already does this with the free and premium variants of Power BI.

Sources and Further Readings

Google, Release Notes (2022)

Google, Looker (2022)

Cody Collins

3 years ago

The direction of the economy is as follows.

What quarterly bank earnings reveal

Big banks know the economy best. Unless we’re talking about a housing crisis in 2007…

Banks are crucial to the U.S. economy. They move money between the Fed, communities, and investments.

An economy depends on money flow. Banks' views on the economy can affect their decision-making.

Most large banks released quarterly earnings and forward guidance last week. Some were optimistic about the future; others were pessimistic.

What Makes Banks Confident

Bank of America's profit decreased 30% year-over-year, but they're optimistic about the economy. Comparatively, they're bullish.

Who banks serve affects what they see. Bank of America serves consumers.

They think consumers' future is bright. They believe this for many reasons.

The average customer has decent credit, unless the system is flawed. Bank of America's new credit card and mortgage borrowers averaged a credit score of 771. New-car loan and home equity borrowers averaged 791 and 797.

2008's housing crisis affected people with scores below 620.

Bank of America and the economy benefit from a robust consumer. Major problems can be avoided if individuals maintain spending.

Reasons Other Banks Are Less Confident

Spending requires income. Many companies, mostly in the computer industry, have announced they will slow or freeze hiring. Layoffs are frequently an indication of poor times ahead.

BOA is positive, but investment banks are bearish.

Jamie Dimon, CEO of JPMorgan, outlined various difficulties our economy could confront.

But geopolitical tension, high inflation, waning consumer confidence, the uncertainty about how high rates have to go and the never-before-seen quantitative tightening and their effects on global liquidity, combined with the war in Ukraine and its harmful effect on global energy and food prices are very likely to have negative consequences on the global economy sometime down the road.

That's more headwinds than tailwinds.

JPMorgan, which helps with mergers and IPOs, is less enthusiastic due to these concerns. Incoming headwinds signal drying liquidity, they say. Less business will be done.

Final Reflections

I don't think we're done. Yes, stocks are up 10% from a month ago. It's a long way from old highs.

I don't think the stock market is a strong economic indicator.

Many executives foresee a 2023 recession. According to the traditional definition, we may be in a recession when Q2 GDP statistics are released next week.

Regardless of criteria, I predict the economy will have a terrible year.

Layoffs are announced weekly. Inflation persists. Will prices return to 2020 levels if inflation cools? Perhaps. Energy is still expensive. Ukraine's war has global repercussions.

I predict BOA's next quarter earnings won't be as bullish about the consumer's strength.

Akshad Singi

3 years ago

Four obnoxious one-minute habits that help me save more than 30 hours each week

These four, when combined, destroy procrastination.

You're not short on time. You waste it on busywork.

You'll accept this eventually.

In 2022, the average user spends 2.5 hours a day on social media.

By 2020, 6 billion hours of video were watched each month by Netflix's customers, who used the service an average of 3.2 hours per day.

When we see these numbers, we think, "Wow! People squander so much time," as though we don't contribute to them. We do. These numbers are yours, too.

We don't lack time; we just waste it. Once you realize this, you can change your habits to save time. This article explains. If you adopt ALL 4 of these simple behaviors, you'll see amazing benefits.

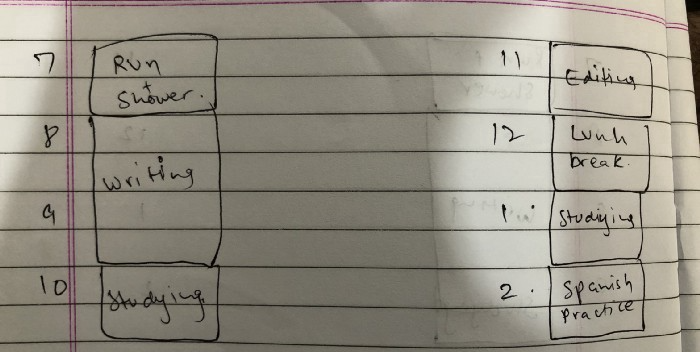

Time-blocking

Cal Newport's time-blocking trick takes a minute but improves your day's clarity.

Divide the next day into 30-minute (or 5-minute, if you're Elon Musk) segments and assign a task to each, as shown above.

Here's why:

The procrastination that results from attempting to determine when to begin working is eliminated. Procrastination is a given if you choose when to begin working in real-time. Even if you may assume you'll start working in five minutes, it won't take you long to realize that five minutes have turned into an hour. But if you've already determined to start working at 2:00 the next day, your odds of procrastinating are greatly decreased, if not eliminated altogether.

You'll also see that you have a lot of time in a day when you plan your day out on paper and assign chores to each hour. Doing this daily will permanently eliminate the lack of time mindset.
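If you'd rather plan on a screen than on paper, the slicing step is easy to automate. The sketch below cuts a time window into fixed-length blocks and assigns tasks in order; `time_blocks`, `plan_day`, and the sample tasks are hypothetical names for illustration, not part of Cal Newport's method.

```python
from datetime import datetime, timedelta


def time_blocks(start, end, minutes=30):
    """Split the window from start to end ("HH:MM") into fixed-length blocks."""
    if minutes <= 0:
        raise ValueError("block length must be positive")
    fmt = "%H:%M"
    current = datetime.strptime(start, fmt)
    stop = datetime.strptime(end, fmt)
    step = timedelta(minutes=minutes)
    blocks = []
    # Only keep blocks that fit entirely inside the window.
    while current + step <= stop:
        blocks.append((current.strftime(fmt), (current + step).strftime(fmt)))
        current += step
    return blocks


def plan_day(blocks, tasks):
    """Assign tasks to blocks in order; blocks without a task stay free."""
    return [
        (begin, finish, tasks[i] if i < len(tasks) else "(free)")
        for i, (begin, finish) in enumerate(blocks)
    ]


# Hypothetical morning: two blocks of writing, then getting ready.
blocks = time_blocks("07:30", "09:30")
plan = plan_day(blocks, ["Write article", "Write article", "Get dressed"])
for begin, finish, task in plan:
    print(f"{begin}-{finish}  {task}")
```

Seeing the unassigned blocks printed as free is a quick way to notice how much time the day actually holds.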

5-4-3-2-1: Have breakfast with the frog!

“If it’s your job to eat a frog, it’s best to do it first thing in the morning. And if it’s your job to eat two frogs, it’s best to eat the biggest one first.”

Eating the frog means accomplishing the day's most difficult chore. It's better to schedule it first thing in the morning when time-blocking the night before. Why?

The day's most difficult task is also the one that causes the most postponement. Because of the stress it causes, the later you schedule it, the more time you risk wasting by procrastinating.

However, if you do it right away in the morning, you'll feel good all day. This is the reason it was set for the morning.

Mel Robbins' 5-second rule can help. Count backward, 5-4-3-2-1, and force yourself to start at 1. If you feel the urge to work on a goal, you must act within 5 seconds or your brain will kill it. If you're scheduled to eat your frog at 9, eat it at 8:59. Start working.

Micro-visualisation

You've heard of visualizing to enhance the future. Visualizing a bright future won't do much if you're not prepared to focus on the now and develop the necessary habits. Alexander said:

People don’t decide their futures. They decide their habits and their habits decide their future.

I visualize the day's schedule every morning. My day looks like this:

“I’ll start writing an article at 7:30 AM. Then, I’ll get dressed up and reach the medicine outpatient department by 9:30 AM. After my duty is over, I’ll have lunch at 2 PM, followed by a nap at 3 PM. Then, I’ll go to the gym at 4…”

etc.

This reinforces the day you planned the night before. This makes following your plan easy.

Set the timer.

The timer is the best productivity app on the iPhone. It's incredible for increasing productivity.

Set a timer for an hour or 40 minutes before starting work. Your call. I don't believe in techniques like the Pomodoro because I can focus for varied amounts of time depending on the time of day, how fatigued I am, and how cognitively demanding the activity is.

I work with a timer. A timer keeps you focused and prevents distractions. Your mind stays concentrated because of the timer. Timers generate accountability.

To pee, I'll pause my timer. When I sit down, I'll continue. Same goes for bottle refills. To use Twitter, I must pause the timer. This creates accountability and focuses work.
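The pause-and-resume habit described above amounts to a stopwatch that only counts time spent working. Below is a rough sketch of one; the `FocusTimer` class is hypothetical, and the `clock` parameter is an assumption added so the example can be driven by a fake clock instead of waiting in real time.

```python
import time


class FocusTimer:
    """Stopwatch that counts only the time between start() and pause()."""

    def __init__(self, clock=time.monotonic):
        self._clock = clock        # injectable so the example needs no real waiting
        self._elapsed = 0.0        # seconds accumulated in finished work spans
        self._started_at = None    # None while the timer is paused

    def start(self):
        if self._started_at is None:
            self._started_at = self._clock()

    def pause(self):
        if self._started_at is not None:
            self._elapsed += self._clock() - self._started_at
            self._started_at = None

    def elapsed(self):
        """Total focused seconds, including the span currently running."""
        running = 0.0
        if self._started_at is not None:
            running = self._clock() - self._started_at
        return self._elapsed + running


# Simulated session using a fake clock (seconds since the session began).
fake_now = [0.0]
timer = FocusTimer(clock=lambda: fake_now[0])
timer.start()
fake_now[0] = 1200.0   # 20 minutes of work
timer.pause()          # bathroom break
fake_now[0] = 1500.0   # 5 minutes away, not counted
timer.start()
fake_now[0] = 2700.0   # 20 more minutes of work
focused_minutes = timer.elapsed() / 60
print(f"Focused for {focused_minutes:.0f} minutes")
```

In everyday use you would create `FocusTimer()` with no arguments, so it reads the real clock through `time.monotonic`.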

Connecting everything

If you do all 4, you won't be disappointed. Here's how:

Plan out your day's schedule the night before.

Next, envision in your mind's eye the same timetable in the morning.

Count 5-4-3-2-1 aloud when it's time to work, then eat the frog! In the morning, devour the largest frog first.

Then set a timer to ensure that you remain focused on the task at hand.