More on Web3 & Crypto

Julie Plavnik

3 years ago

How to Become a Crypto Broker [Complying and Making Money]

Three options exist. The third one is the quickest and most fruitful.

You've mastered crypto trading and want to become a broker.

So you may wonder: Where to begin?

If so, keep reading.

Today I'll compare three different approaches to becoming a cryptocurrency trader.

What are cryptocurrency brokers, and how do they vary from stockbrokers?

A stockbroker implements clients' market orders (retail or institutional ones).

Brokerage firms are regulated, insured, and subject to regulatory monitoring.

Stockbrokers are required between buyers and sellers. They can't trade without a broker. To trade, a trader must open a broker account and deposit money. When a trader shops, he tells his broker what orders to place.

Crypto brokerage is trade intermediation with cryptocurrency.

In crypto trading, however, brokers are optional.

Crypto exchanges offer direct transactions. Open an exchange account (no broker needed) and make a deposit.

Question:

Since crypto allows DIY trading, why use a broker?

Let's compare cryptocurrency exchanges vs. brokers.

Broker versus cryptocurrency exchange

Most existing crypto exchanges are basically brokers.

Examine their primary services:

connecting purchasers and suppliers

having custody of clients' money (with the exception of decentralized cryptocurrency exchanges),

clearance of transactions.

Brokerage is comparable, don't you think?

There are exceptions. I mean a few large crypto exchanges that follow the stock exchange paradigm. They outsource brokerage, custody, and clearing operations. Classic exchange setups are rare in today's bitcoin industry.

Back to our favorite “standard” crypto exchanges. All-in-one exchanges and brokers. And usually, they operate under a broker or a broker-dealer license, save for the exchanges registered somewhere in a free-trade offshore paradise. Those don’t bother with any licensing.

What’s the sense of having two brokers at a time?

Better liquidity and trading convenience.

The crypto business is compartmentalized.

We have CEXs, DEXs, hybrid exchanges, and semi-exchanges (those that aggregate liquidity but do not execute orders on their sides). All have unique regulations and act as sovereign states.

There are about 18k coins and hundreds of blockchain protocols, most of which are heterogeneous (i.e., different in design and not interoperable).

A trader must register many accounts on different exchanges, deposit funds, and manage them all concurrently to access global crypto liquidity.

It’s extremely inconvenient.

Crypto liquidity fragmentation is the largest obstacle and bottleneck blocking crypto from mass adoption.

Crypto brokers help clients solve this challenge by providing one-gate access to deep and diverse crypto liquidity from numerous exchanges and suppliers. Professionals and institutions need it.

Another killer feature of a brokerage may be allowing clients to trade crypto with fiat funds exclusively, without fiat/crypto conversion. It is essential for professional and institutional traders.

Who may work as a cryptocurrency broker?

Apparently, not anyone. Brokerage requires high-powered specialists because it involves other people's money.

Here's the essentials:

excellent knowledge, skills, and years of trading experience

high-quality, quick, and secure infrastructure

highly developed team

outstanding trading capital

High-ROI network: long-standing, trustworthy connections with customers, exchanges, liquidity providers, payment gates, and similar entities

outstanding marketing and commercial development skills.

What about a license for a cryptocurrency broker? Is it necessary?

Complex question.

If you plan to play in white-glove jurisdictions, you may need a license. For example, in the US, as a “money transmitter” or as a CASSP (crypto asset secondary services provider) in Australia.

Even in these jurisdictions, there are no clear, holistic crypto brokerage and licensing policies.

Your lawyer will help you decide if your crypto brokerage needs a license.

Getting a license isn't quick. Two years of patience are needed.

How can you turn into a cryptocurrency broker?

Finally, we got there! 🎉

Three actionable ways exist:

To kickstart a regulated stand-alone crypto broker

To get a crypto broker franchise, and

To become a liquidity network broker.

Let's examine each.

1. Opening a regulated cryptocurrency broker

It's difficult. Especially If you're targeting first-world users.

You must comply with many regulatory, technical, financial, HR, and reporting obligations to keep your organization running. Some are mentioned above.

The licensing process depends on the products you want to offer (spots or derivatives) and the geographic areas you plan to service. There are no general rules for that.

In an overgeneralized way, here are the boxes you will have to check:

capital availability (usually a large amount of capital c is required)

You will have to move some of your team members to the nation providing the license in order to establish an office presence there.

the core team with the necessary professional training (especially applies to CEO, Head of Trading, Assistant to Head of Trading, etc.)

insurance

infrastructure that is trustworthy and secure

adopted proper AML/KYC/financial monitoring policies, etc.

Assuming you passed, what's next?

I bet it won’t be mind-blowing for you that the license is just a part of the deal. It won't attract clients or revenue.

To bring in high-dollar clientele, you must be a killer marketer and seller. It's not easy to convince people to give you money.

You'll need to be a great business developer to form successful, long-term agreements with exchanges (ideally for no fees), liquidity providers, banks, payment gates, etc. Persuade clients.

It's a tough job, isn't it?

I expect a Quora-type question here:

Can I start an unlicensed crypto broker?

Well, there is always a workaround with crypto!

You can register your broker in a free-trade zone like Seychelles to avoid US and other markets with strong watchdogs.

This is neither wise nor sustainable.

First, such experiments are illegal.

Second, you'll have trouble attracting clients and strategic partners.

A license equals trust. That’s it.

Even a pseudo-license from Mauritius matters.

Here are this method's benefits and downsides.

Cons first.

As you navigate this difficult and expensive legal process, you run the risk of missing out on business prospects. It's quite simple to become excellent compliance yet unable to work. Because your competitors are already courting potential customers while you are focusing all of your effort on paperwork.

Only God knows how long it will take you to pass the break-even point when everything with the license has been completed.

It is a money-burning business, especially in the beginning when the majority of your expenses will go toward marketing, sales, and maintaining license requirements. Make sure you have the fortitude and resources necessary to face such a difficult challenge.

Pros

It may eventually develop into a tool for making money. Because big guys who are professionals at trading require a white-glove regulated brokerage. You have every possibility if you work hard in the areas of sales, marketing, business development, and wealth. Simply put, everything must align.

Launching a regulated crypto broker is analogous to launching a crypto exchange. It's ROUGH. Sure you can take it?

2. Franchise for Crypto Broker (Crypto Sub-Brokerage)

A broker franchise is easier and faster than becoming a regulated crypto broker. Not a traditional brokerage.

A broker franchisee, often termed a sub-broker, joins with a broker (a franchisor) to bring them new clients. Sub-brokers market a broker's products and services to clients.

Sub-brokers are the middlemen between a broker and an investor.

Why is sub-brokering easier?

less demanding qualifications and legal complexity. All you need to do is keep a few certificates on hand (each time depends on the jurisdiction).

No significant investment is required

there is no demand that you be a trading member of an exchange, etc.

As a sub-broker, you can do identical duties without as many rights and certifications.

What about the crypto broker franchise?

Sub-brokers aren't common in crypto.

In most existing examples (PayBito, PCEX, etc.), franchises are offered by crypto exchanges, not brokers. Though we remember that crypto exchanges are, in fact, brokers, do we?

Similarly:

For a commission, a franchiser crypto broker receives new leads from a crypto sub-broker.

See above for why enrolling is easy.

Finding clients is difficult. Most crypto traders prefer to buy-sell on their own or through brokers over sub-broker franchises.

3. Broker of the Crypto Trading Network (or a Network Broker)

It's the greatest approach to execute crypto brokerage, based on effort/return.

Network broker isn't an established word. I wrote it for clarity.

Remember how we called crypto liquidity fragmentation the current crypto finance paradigm's main bottleneck?

Where there's a challenge, there's progress.

Several well-funded projects are aiming to fix crypto liquidity fragmentation. Instead of launching another crypto exchange with siloed trading, the greatest minds create trading networks that aggregate crypto liquidity from desynchronized sources and enable quick, safe, and affordable cross-blockchain transactions. Each project offers a distinct option for users.

Crypto liquidity implies:

One-account access to cryptocurrency liquidity pooled from network participants' exchanges and other liquidity sources

compiled price feeds

Cross-chain transactions that are quick and inexpensive, even for HFTs

link between participants of all kinds, and

interoperability among diverse blockchains

Fast, diversified, and cheap global crypto trading from one account.

How does a trading network help cryptocurrency brokers?

I’ll explain it, taking Yellow Network as an example.

Yellow provides decentralized Layer-3 peer-to-peer trading.

trade across chains globally with real-time settlement and

Between cryptocurrency exchanges, brokers, trading companies, and other sorts of network members, there is communication and the exchange of financial information.

Have you ever heard about ECN (electronic communication network)? If not, it's an automated system that automatically matches buy and sell orders. Yellow is a decentralized digital asset ECN.

Brokers can:

Start trading right now without having to meet stringent requirements; all you need to do is integrate with Yellow Protocol and successfully complete some KYC verification.

Access global aggregated crypto liquidity through a single point.

B2B (Broker to Broker) liquidity channels that provide peer liquidity from other brokers. Orders from the other broker will appear in the order book of a broker who is peering with another broker on the market. It will enable a broker to broaden his offer and raise the total amount of liquidity that is available to his clients.

Select a custodian or use non-custodial practices.

Comparing network crypto brokerage to other types:

A licensed stand-alone brokerage business is much more difficult and time-consuming to launch than network brokerage, and

Network brokerage, in contrast to crypto sub-brokerage, is scalable, independent, and offers limitless possibilities for revenue generation.

Yellow Network Whitepaper. has more details on how to start a brokerage business and what rewards you'll obtain.

Final thoughts

There are three ways to become a cryptocurrency broker, including the non-conventional liquidity network brokerage. The last option appears time/cost-effective.

Crypto brokerage isn't crowded yet. Act quickly to find your right place in this market.

Choose the way that works for you best and see you in crypto trading.

Discover Web3 & DeFi with Yellow Network!

Yellow, powered by Openware, is developing a cross-chain P2P liquidity aggregator to unite the crypto sector and provide global remittance services that aid people.

Join the Yellow Community and plunge into this decade's biggest product-oriented crypto project.

Observe Yellow Twitter

Enroll in Yellow Telegram

Visit Yellow Discord.

On Hacker Noon, look us up.

Yellow Network will expose development, technology, developer tools, crypto brokerage nodes software, and community liquidity mining.

The Verge

4 years ago

Bored Ape Yacht Club creator raises $450 million at a $4 billion valuation.

Yuga Labs, owner of three of the biggest NFT brands on the market, announced today a $450 million funding round. The money will be used to create a media empire based on NFTs, starting with games and a metaverse project.

The team's Otherside metaverse project is an MMORPG meant to connect the larger NFT universe. They want to create “an interoperable world” that is “gamified” and “completely decentralized,” says Wylie Aronow, aka Gordon Goner, co-founder of Bored Ape Yacht Club. “We think the real Ready Player One experience will be player run.”

Just a few weeks ago, Yuga Labs announced the acquisition of CryptoPunks and Meebits from Larva Labs. The deal brought together three of the most valuable NFT collections, giving Yuga Labs more IP to work with when developing games and metaverses. Last week, ApeCoin was launched as a cryptocurrency that will be governed independently and used in Yuga Labs properties.

Otherside will be developed by “a few different game studios,” says Yuga Labs CEO Nicole Muniz. The company plans to create development tools that allow NFTs from other projects to work inside their world. “We're welcoming everyone into a walled garden.”

However, Yuga Labs believes that other companies are approaching metaverse projects incorrectly, allowing the startup to stand out. People won't bond spending time in a virtual space with nothing going on, says Yuga Labs co-founder Greg Solano, aka Gargamel. Instead, he says, people bond when forced to work together.

In order to avoid getting smacked, Solano advises making friends. “We don't think a Zoom chat and walking around saying ‘hi' creates a deep social experience.” Yuga Labs refused to provide a release date for Otherside. Later this year, a play-to-win game is planned.

The funding round was led by Andreessen Horowitz, a major investor in the Web3 space. It previously backed OpenSea and Coinbase. Animoca Brands, Coinbase, and MoonPay are among those who have invested. Andreessen Horowitz general partner Chris Lyons will join Yuga Labs' board. The Financial Times broke the story last month.

"META IS A DOMINANT DIGITAL EXPERIENCE PROVIDER IN A DYSTOPIAN FUTURE."

This emerging [Web3] ecosystem is important to me, as it is to companies like Meta,” Chris Dixon, head of Andreessen Horowitz's crypto arm, tells The Verge. “In a dystopian future, Meta is the dominant digital experience provider, and it controls all the money and power.” (Andreessen Horowitz co-founder Marc Andreessen sits on Meta's board and invested early in Facebook.)

Yuga Labs has been profitable so far. According to a leaked pitch deck, the company made $137 million last year, primarily from its NFT brands, with a 95% profit margin. (Yuga Labs declined to comment on deck figures.)

But the company has built little so far. According to OpenSea data, it has only released one game for a limited time. That means Yuga Labs gets hundreds of millions of dollars to build a gaming company from scratch, based on a hugely lucrative art project.

Investors fund Yuga Labs based on its success. That's what they did, says Dixon, “they created a culture phenomenon”. But ultimately, the company is betting on the same thing that so many others are: that a metaverse project will be the next big thing. Now they must construct it.

Vivek Singh

4 years ago

A Warm Welcome to Web3 and the Future of the Internet

Let's take a look back at the internet's history and see where we're going — and why.

Tim Berners Lee had a problem. He was at CERN, the world's largest particle physics factory, at the time. The institute's stated goal was to study the simplest particles with the most sophisticated scientific instruments. The institute completed the LEP Tunnel in 1988, a 27 kilometer ring. This was Europe's largest civil engineering project (to study smaller particles — electrons).

The problem Tim Berners Lee found was information loss, not particle physics. CERN employed a thousand people in 1989. Due to team size and complexity, people often struggled to recall past project information. While these obstacles could be overcome, high turnover was nearly impossible. Berners Lee addressed the issue in a proposal titled ‘Information Management'.

When a typical stay is two years, data is constantly lost. The introduction of new people takes a lot of time from them and others before they understand what is going on. An emergency situation may require a detective investigation to recover technical details of past projects. Often, the data is recorded but cannot be found. — Information Management: A Proposal

He had an idea. Create an information management system that allowed users to access data in a decentralized manner using a new technology called ‘hypertext'.

To quote Berners Lee, his proposal was “vague but exciting...”. The paper eventually evolved into the internet we know today. Here are three popular W3C standards used by billions of people today:

(credit: CERN)

HTML (Hypertext Markup)

A web formatting language.

URI (Unique Resource Identifier)

Each web resource has its own “address”. Known as ‘a URL'.

HTTP (Hypertext Transfer Protocol)

Retrieves linked resources from across the web.

These technologies underpin all computer work. They were the seeds of our quest to reorganize information, a task as fruitful as particle physics.

Tim Berners-Lee would probably think the three decades from 1989 to 2018 were eventful. He'd be amazed by the billions, the inspiring, the novel. Unlocking innovation at CERN through ‘Information Management'.

The fictional character would probably need a drink, walk, and a few deep breaths to fully grasp the internet's impact. He'd be surprised to see a few big names in the mix.

Then he'd say, "Something's wrong here."

We should review the web's history before going there. Was it a success after Berners Lee made it public? Web1 and Web2: What is it about what we are doing now that so many believe we need a new one, web3?

Per Outlier Ventures' Jamie Burke:

Web 1.0 was read-only.

Web 2.0 was the writable

Web 3.0 is a direct-write web.

Let's explore.

Web1: The Read-Only Web

Web1 was the digital age. We put our books, research, and lives ‘online'. The web made information retrieval easier than any filing cabinet ever. Massive amounts of data were stored online. Encyclopedias, medical records, and entire libraries were put away into floppy disks and hard drives.

In 2015, the web had around 305,500,000,000 pages of content (280 million copies of Atlas Shrugged).

Initially, one didn't expect to contribute much to this database. Web1 was an online version of the real world, but not yet a new way of using the invention.

One gets the impression that the web has been underutilized by historians if all we can say about it is that it has become a giant global fax machine. — Daniel Cohen, The Web's Second Decade (2004)

That doesn't mean developers weren't building. The web was being advanced by great minds. Web2 was born as technology advanced.

Web2: Read-Write Web

Remember when you clicked something on a website and the whole page refreshed? Is it too early to call the mid-2000s ‘the good old days'?

Browsers improved gradually, then suddenly. AJAX calls augmented CGI scripts, and applications began sending data back and forth without disrupting the entire web page. One button to ‘digg' a post (see below). Web experiences blossomed.

In 2006, Digg was the most active ‘Web 2.0' site. (Photo: Ethereum Foundation Taylor Gerring)

Interaction was the focus of new applications. Posting, upvoting, hearting, pinning, tweeting, liking, commenting, and clapping became a lexicon of their own. It exploded in 2004. Easy ways to ‘write' on the internet grew, and continue to grow.

Facebook became a Web2 icon, where users created trillions of rows of data. Google and Amazon moved from Web1 to Web2 by better understanding users and building products and services that met their needs.

Business models based on Software-as-a-Service and then managing consumer data within them for a fee have exploded.

Web2 Emerging Issues

Unbelievably, an intriguing dilemma arose. When creating this read-write web, a non-trivial question skirted underneath the covers. Who owns it all?

You have no control over [Web 2] online SaaS. People didn't realize this because SaaS was so new. People have realized this is the real issue in recent years.

Even if these organizations have good intentions, their incentive is not on the users' side.

“You are not their customer, therefore you are their product,” they say. With Laura Shin, Vitalik Buterin, Unchained

A good plot line emerges. Many amazing, world-changing software products quietly lost users' data control.

For example: Facebook owns much of your social graph data. Even if you hate Facebook, you can't leave without giving up that data. There is no ‘export' or ‘exit'. The platform owns ownership.

While many companies can pull data on you, you cannot do so.

On the surface, this isn't an issue. These companies use my data better than I do! A complex group of stakeholders, each with their own goals. One is maximizing shareholder value for public companies. Tim Berners-Lee (and others) dislike the incentives created.

“Show me the incentive and I will show you the outcome.” — Berkshire Hathaway's CEO

It's easy to see what the read-write web has allowed in retrospect. We've been given the keys to create content instead of just consume it. On Facebook and Twitter, anyone with a laptop and internet can participate. But the engagement isn't ours. Platforms own themselves.

Web3: The ‘Unmediated’ Read-Write Web

Tim Berners Lee proposed a decade ago that ‘linked data' could solve the internet's data problem.

However, until recently, the same principles that allowed the Web of documents to thrive were not applied to data...

The Web of Data also allows for new domain-specific applications. Unlike Web 2.0 mashups, Linked Data applications work with an unbound global data space. As new data sources appear on the Web, they can provide more complete answers.

At around the same time as linked data research began, Satoshi Nakamoto created Bitcoin. After ten years, it appears that Berners Lee's ideas ‘link' spiritually with cryptocurrencies.

What should Web 3 do?

Here are some quick predictions for the web's future.

Users' data:

Users own information and provide it to corporations, businesses, or services that will benefit them.

Defying censorship:

No government, company, or institution should control your access to information (1, 2, 3)

Connect users and platforms:

Create symbiotic rather than competitive relationships between users and platform creators.

Open networks:

“First, the cryptonetwork-participant contract is enforced in open source code. Their voices and exits are used to keep them in check.” Dixon, Chris (4)

Global interactivity:

Transacting value, information, or assets with anyone with internet access, anywhere, at low cost

Self-determination:

Giving you the ability to own, see, and understand your entire digital identity.

Not pull, push:

‘Push' your data to trusted sources instead of ‘pulling' it from others.

Where Does This Leave Us?

Change incentives, change the world. Nick Babalola

People believe web3 can help build a better, fairer system. This is not the same as equal pay or outcomes, but more equal opportunity.

It should be noted that some of these advantages have been discussed previously. Will the changes work? Will they make a difference? These unanswered questions are technical, economic, political, and philosophical. Unintended consequences are likely.

We hope Web3 is a more democratic web. And we think incentives help the user. If there’s one thing that’s on our side, it’s that open has always beaten closed, given a long enough timescale.

We are at the start.

You might also like

Sam Hickmann

3 years ago

What is this Fed interest rate everybody is talking about that makes or breaks the stock market?

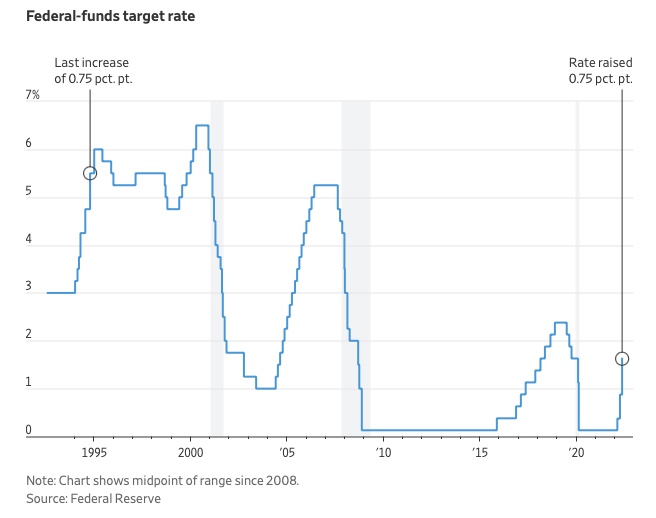

The Federal Funds Rate (FFR) is the target interest rate set by the Federal Reserve System (Fed)'s policy-making body (FOMC). This target is the rate at which the Fed suggests commercial banks borrow and lend their excess reserves overnight to each other.

The FOMC meets 8 times a year to set the target FFR. This is supposed to promote economic growth. The overnight lending market sets the actual rate based on commercial banks' short-term reserves. If the market strays too far, the Fed intervenes.

Banks must keep a certain percentage of their deposits in a Federal Reserve account. A bank's reserve requirement is a percentage of its total deposits. End-of-day bank account balances averaged over two-week reserve maintenance periods are used to determine reserve requirements.

If a bank expects to have end-of-day balances above what's needed, it can lend the excess to another institution.

The FOMC adjusts interest rates based on economic indicators that show inflation, recession, or other issues that affect economic growth. Core inflation and durable goods orders are indicators.

In response to economic conditions, the FFR target has changed over time. In the early 1980s, inflation pushed it to 20%. During the Great Recession of 2007-2009, the rate was slashed to 0.15 percent to encourage growth.

Inflation picked up in May 2022 despite earlier rate hikes, prompting today's 0.75 percent point increase. The largest increase since 1994. It might rise to around 3.375% this year and 3.1% by the end of 2024.

Will Lockett

3 years ago

Tesla recently disclosed its greatest secret.

The VP has revealed a secret that should frighten the rest of the EV world.

Tesla led the EV revolution. Elon Musk's invention offers a viable alternative to gas-guzzlers. Tesla has lost ground in recent years. VW, BMW, Mercedes, and Ford offer EVs with similar ranges, charging speeds, performance, and cost. Tesla's next-generation 4680 battery pack, Roadster, Cybertruck, and Semi were all delayed. CATL offers superior batteries than the 4680. Martin Viecha, Tesla's Vice President, recently told Business Insider something that startled the EV world and will establish Tesla as the EV king.

Viecha mentioned that Tesla's production costs have dropped 57% since 2017. This isn't due to cheaper batteries or devices like Model 3. No, this is due to amazing factory efficiency gains.

Musk wasn't crazy to want a nearly 100% automated production line, and Tesla's strategy of sticking with one model and improving it has paid off. Others change models every several years. This implies they must spend on new R&D, set up factories, and modernize service and parts systems. All of this costs a ton of money and prevents them from refining production to cut expenses.

Meanwhile, Tesla updates its vehicles progressively. Everything from the backseats to the screen has been enhanced in a 2022 Model 3. Tesla can refine, standardize, and cheaply produce every part without changing the production line.

In 2017, Tesla's automobile production averaged $84,000. In 2022, it'll be $36,000.

Mr. Viecha also claimed that new factories in Shanghai and Berlin will be significantly cheaper to operate once fully operating.

Tesla's hand is visible. Tesla selling $36,000 cars for $60,000 This barely beats the competition. Model Y long-range costs just over $60,000. Tesla makes $24,000+ every sale, giving it a 40% profit margin, one of the best in the auto business.

VW I.D4 costs about the same but makes no profit. Tesla's rivals face similar challenges. Their EVs make little or no profit.

Tesla costs the same as other EVs, but they're in a different league.

But don't forget that the battery pack accounts for 40% of an EV's cost. Tesla may soon fully utilize its 4680 battery pack.

The 4680 battery pack has larger cells and a unique internal design. This means fewer cells are needed for a car, making it cheaper to assemble and produce (per kWh). Energy density and charge speeds increase slightly.

Tesla underestimated the difficulty of making this revolutionary new cell. Each time they try to scale up production, quality drops and rejected cells rise.

Tesla recently installed this battery pack in Model Ys and is scaling production. If they succeed, Tesla battery prices will plummet.

Tesla's Model Ys 2170 battery costs $11,000. The same size pack with 4680 cells costs $3,400 less. Once scaled, it could be $5,500 (50%) less. The 4680 battery pack could reduce Tesla production costs by 20%.

With these cost savings, Tesla could sell Model Ys for $40,000 while still making a profit. They could offer a $25,000 car.

Even with new battery technology, it seems like other manufacturers will struggle to make EVs profitable.

Teslas cost about the same as competitors, so don't be fooled. Behind the scenes, they're still years ahead, and the 4680 battery pack and new factories will only increase that lead. Musk faces a first. He could sell Teslas at current prices and make billions while other manufacturers struggle. Or, he could massively undercut everyone and crush the competition once and for all. Tesla and Elon win.

Jan-Patrick Barnert

3 years ago

Wall Street's Bear Market May Stick Around

If history is any guide, this bear market might be long and severe.

This is the S&P 500 Index's fourth such incident in 20 years. The last bear market of 2020 was a "shock trade" caused by the Covid-19 pandemic, although earlier ones in 2000 and 2008 took longer to bottom out and recover.

Peter Garnry, head of equities strategy at Saxo Bank A/S, compares the current selloff to the dotcom bust of 2000 and the 1973-1974 bear market marked by soaring oil prices connected to an OPEC oil embargo. He blamed high tech valuations and the commodity crises.

"This drop might stretch over a year and reach 35%," Garnry wrote.

Here are six bear market charts.

Time/depth

The S&P 500 Index plummeted 51% between 2000 and 2002 and 58% during the global financial crisis; it took more than 1,000 trading days to recover. The former took 638 days to reach a bottom, while the latter took 352 days, suggesting the present selloff is young.

Valuations

Before the tech bubble burst in 2000, valuations were high. The S&P 500's forward P/E was 25 times then. Before the market fell this year, ahead values were near 24. Before the global financial crisis, stocks were relatively inexpensive, but valuations dropped more than 40%, compared to less than 30% now.

Earnings

Every stock crash, especially earlier bear markets, returned stocks to fundamentals. The S&P 500 decouples from earnings trends but eventually recouples.

Support

Central banks won't support equity investors just now. The end of massive monetary easing will terminate a two-year bull run that was among the strongest ever, and equities may struggle without cheap money. After years of "don't fight the Fed," investors must embrace a new strategy.

Bear Haunting Bear

If the past is any indication, rising government bond yields are bad news. After the financial crisis, skyrocketing rates and a falling euro pushed European stock markets back into bear territory in 2011.

Inflation/rates

The current monetary policy climate differs from past bear markets. This is the first time in a while that markets face significant inflation and rising rates.

This post is a summary. Read full article here