More on Entrepreneurship/Creators

Raad Ahmed

3 years ago

How We Just Raised $6M At An $80M Valuation From 100+ Investors Using A Link (Without Pitching)

Lawtrades nearly failed three years ago.

We couldn't raise Series A or enthusiasm from VCs.

We raised $6M (at a $80M valuation) from 100 customers and investors using a link and no pitching.

Step-by-step:

We refocused our business first.

Lawtrades raised $3.7M while Atrium raised $75M. By comparison, we seemed unimportant.

We had to close the company or try something new.

As I've written previously, a pivot saved us. Our initial focus on SMBs attracted many unprofitable customers. SMBs needed one-off legal services, meaning low fees and high turnover.

Tech startups were different. Their General Councels (GCs) needed near-daily support, resulting in higher fees and lower churn than SMBs.

We stopped unprofitable customers and focused on power users. To avoid dilution, we borrowed against receivables. We scaled our revenue 10x, from $70k/mo to $700k/mo.

Then, we reconsidered fundraising (and do it differently)

This time was different. Lawtrades was cash flow positive for most of last year, so we could dictate our own terms. VCs were still wary of legaltech after Atrium's shutdown (though they were thinking about the space).

We neither wanted to rely on VCs nor dilute more than 10% equity. So we didn't compete for in-person pitch meetings.

AngelList Roll-Up Vehicle (RUV). Up to 250 accredited investors can invest in a single RUV. First, we emailed customers the RUV. Why? Because I wanted to help the platform's users.

Imagine if Uber or Airbnb let all drivers or Superhosts invest in an RUV. Humans make the platform, theirs and ours. Giving people a chance to invest increases their loyalty.

We expanded after initial interest.

We created a Journey link, containing everything that would normally go in an investor pitch:

- Slides

- Trailer (from me)

- Testimonials

- Product demo

- Financials

We could also link to our AngelList RUV and send the pitch to an unlimited number of people. Instead of 1:1, we had 1:10,000 pitches-to-investors.

We posted Journey's link in RUV Alliance Discord. 600 accredited investors noticed it immediately. Within days, we raised $250,000 from customers-turned-investors.

Stonks, which live-streamed our pitch to thousands of viewers, was interested in our grassroots enthusiasm. We got $1.4M from people I've never met.

These updates on Pump generated more interest. Facebook, Uber, Netflix, and Robinhood executives all wanted to invest. Sahil Lavingia, who had rejected us, gave us $100k.

We closed the round with public support.

Without a single pitch meeting, we'd raised $2.3M. It was a result of natural enthusiasm: taking care of the people who made us who we are, letting them move first, and leveraging their enthusiasm with VCs, who were interested.

We used network effects to raise $3.7M from a founder-turned-VC, bringing the total to $6M at a $80M valuation (which, by the way, I set myself).

What flipping the fundraising script allowed us to do:

We started with private investors instead of 2–3 VCs to show VCs what we were worth. This gave Lawtrades the ability to:

- Without meetings, share our vision. Many people saw our Journey link. I ended up taking meetings with people who planned to contribute $50k+, but still, the ratio of views-to-meetings was outrageously good for us.

- Leverage ourselves. Instead of us selling ourselves to VCs, they did. Some people with large checks or late arrivals were turned away.

- Maintain voting power. No board seats were lost.

- Utilize viral network effects. People-powered.

- Preemptively halt churn by turning our users into owners. People are more loyal and respectful to things they own. Our users make us who we are — no matter how good our tech is, we need human beings to use it. They deserve to be owners.

I don't blame founders for being hesitant about this approach. Pump and RUVs are new and scary. But it won’t be that way for long. Our approach redistributed some of the power that normally lies entirely with VCs, putting it into our hands and our network’s hands.

This is the future — another way power is shifting from centralized to decentralized.

Aure's Notes

3 years ago

I met a man who in just 18 months scaled his startup to $100 million.

A fascinating business conversation.

This week at Web Summit, I had mentor hour.

Mentor hour connects startups with experienced entrepreneurs.

The YC-selected founder who mentored me had grown his company to $100 million in 18 months.

I had 45 minutes to question him.

I've compiled this.

Context

Founder's name is Zack.

After working in private equity, Zack opted to acquire an MBA.

Surrounded by entrepreneurs at a prominent school, he decided to become one himself.

Unsure how to proceed, he bet on two horses.

On one side, he received an offer from folks who needed help running their startup owing to lack of time. On the other hand, he had an idea for a SaaS to start himself.

He just needed to validate it.

Validating

Since Zack's proposal helped companies, he contacted university entrepreneurs for comments.

He contacted university founders.

Once he knew he'd correctly identified the problem and that people were willing to pay to address it, he started developing.

He earned $100k in a university entrepreneurship competition.

His plan was evident by then.

The other startup's founders saw his potential and granted him $400k to launch his own SaaS.

Hiring

He started looking for a tech co-founder because he lacked IT skills.

He interviewed dozens and picked the finest.

As he didn't want to wait for his program to be ready, he contacted hundreds of potential clients and got 15 letters of intent promising they'd join up when it was available.

YC accepted him by then.

He had enough positive signals to raise.

Raising

He didn't say how many VCs he called, but he indicated 50 were interested.

He jammed meetings into two weeks to generate pressure and encourage them to invest.

Seed raise: $11 million.

Selling

His objective was to contact as many entrepreneurs as possible to promote his product.

He first contacted startups by scraping CrunchBase data.

Once he had more money, he started targeting companies with ZoomInfo.

His VC urged him not to hire salespeople until he closed 50 clients himself.

He closed 100 and hired a CRO through a headhunter.

Scaling

Three persons started the business.

He primarily works in sales.

Coding the product was done by his co-founder.

Another person performing operational duties.

He regretted recruiting the third co-founder, who was ineffective (could have hired an employee instead).

He wanted his company to be big, so he hired two young marketing people from a competing company.

After validating several marketing channels, he chose PR.

$100 Million and under

He developed a sales team and now employs 30 individuals.

He raised a $100 million Series A.

Additionally, he stated

He’s been rejected a lot. Like, a lot.

Two great books to read: Steve Jobs by Isaacson, and Why Startups Fail by Tom Eisenmann.

The best skill to learn for non-tech founders is “telling stories”, which means sales. A founder’s main job is to convince: co-founders, employees, investors, and customers. Learn code, or learn sales.

Conclusion

I often read about these stories but hardly take them seriously.

Zack was amazing.

Three things about him stand out:

His vision. He possessed a certain amount of fire.

His vitality. The man had a lot of enthusiasm and spoke quickly and decisively. He takes no chances and pushes the envelope in all he does.

His Rolex.

He didn't do all this in 18 months.

Not really.

He couldn't launch his company without private equity experience.

These accounts disregard entrepreneurs' original knowledge.

Hormozi will tell you how he founded Gym Launch, but he won't tell you how he had a gym first, how he worked at uni to pay for his gym, or how he went to the gym and learnt about fitness, which gave him the idea to open his own.

Nobody knows nothing. If you scale quickly, it's probable because you gained information early.

Lincoln said, "Give me six hours to chop down a tree, and I'll spend four sharpening the axe."

Sharper axes cut trees faster.

Alex Mathers

3 years ago

400 articles later, nobody bothered to read them.

Writing for readers:

14 years of daily writing.

I post practically everything on social media. I authored hundreds of articles, thousands of tweets, and numerous volumes to almost no one.

Tens of thousands of readers regularly praise me.

I despised writing. I'm stuck now.

I've learned what readers like and what doesn't.

Here are some essential guidelines for writing with impact:

Readers won't understand your work if you can't.

Though obvious, this slipped me up. Share your truths.

Stories engage human brains.

Showing the journey of a person from worm to butterfly inspires the human spirit.

Overthinking hinders powerful writing.

The best ideas come from inner understanding in between thoughts.

Avoid writing to find it. Write.

Writing a masterpiece isn't motivating.

Write for five minutes to simplify. Step-by-step, entertaining, easy steps.

Good writing requires a willingness to make mistakes.

So write loads of garbage that you can edit into a good piece.

Courageous writing.

A courageous story will move readers. Personal experience is best.

Go where few dare.

Templates, outlines, and boundaries help.

Limitations enhance writing.

Excellent writing is straightforward and readable, removing all the unnecessary fat.

Use five words instead of nine.

Use ordinary words instead of uncommon ones.

Readers desire relatability.

Too much perfection will turn it off.

Write to solve an issue if you can't think of anything to write.

Instead, read to inspire. Best authors read.

Every tweet, thread, and novel must have a central idea.

What's its point?

This can make writing confusing.

️ Don't direct your reader.

Readers quit reading. Demonstrate, describe, and relate.

Even if no one responds, have fun. If you hate writing it, the reader will too.

You might also like

Sam Hickmann

4 years ago

A quick guide to formatting your text on INTΞGRITY

[06/20/2022 update] We have now implemented a powerful text editor, but you can still use markdown.

Markdown:

Headers

SYNTAX:

# This is a heading 1

## This is a heading 2

### This is a heading 3

#### This is a heading 4

RESULT:

This is a heading 1

This is a heading 2

This is a heading 3

This is a heading 4

Emphasis

SYNTAX:

**This text will be bold**

~~Strikethrough~~

*You **can** combine them*

RESULT:

This text will be italic

This text will be bold

You can combine them

Images

SYNTAX:

RESULT:

Videos

SYNTAX:

https://www.youtube.com/watch?v=7KXGZAEWzn0

RESULT:

Links

SYNTAX:

[Int3grity website](https://www.int3grity.com)

RESULT:

Tweets

SYNTAX:

https://twitter.com/samhickmann/status/1503800505864130561

RESULT:

Blockquotes

SYNTAX:

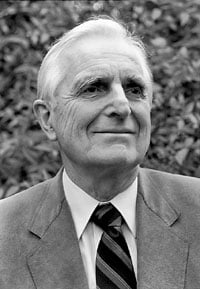

> Human beings face ever more complex and urgent problems, and their effectiveness in dealing with these problems is a matter that is critical to the stability and continued progress of society. \- Doug Engelbart, 1961

RESULT:

Human beings face ever more complex and urgent problems, and their effectiveness in dealing with these problems is a matter that is critical to the stability and continued progress of society. - Doug Engelbart, 1961

Inline code

SYNTAX:

Text inside `backticks` on a line will be formatted like code.

RESULT:

Text inside backticks on a line will be formatted like code.

Code blocks

SYNTAX:

'''js

function fancyAlert(arg) {

if(arg) {

$.facebox({div:'#foo'})

}

}

'''

RESULT:

function fancyAlert(arg) {

if(arg) {

$.facebox({div:'#foo'})

}

}

Maths

We support LaTex to typeset math. We recommend reading the full documentation on the official website

SYNTAX:

$$[x^n+y^n=z^n]$$

RESULT:

[x^n+y^n=z^n]

Tables

SYNTAX:

| header a | header b |

| ---- | ---- |

| row 1 col 1 | row 1 col 2 |

RESULT:

| header a | header b | header c |

|---|---|---|

| row 1 col 1 | row 1 col 2 | row 1 col 3 |

CyberPunkMetalHead

3 years ago

195 countries want Terra Luna founder Do Kwon

Interpol has issued a red alert on Terraform Labs' CEO, South Korean prosecutors said.

After the May crash of Terra Luna revealed tax evasion issues, South Korean officials filed an arrest warrant for Do Kwon, but he is missing.

Do Kwon is now a fugitive in 195 countries after Seoul prosecutors placed him to Interpol's red list. Do Kwon hasn't commented since then. The red list allows any country's local authorities to apprehend Do Kwon.

Do Dwon and Terraform Labs were believed to have moved to Singapore days before the $40 billion wipeout, but Singapore authorities said he fled the country on September 17. Do Kwon tweeted that he wasn't on the run and cited privacy concerns.

Do Kwon was not on the red list at the time and said he wasn't "running," only to reply to his own tweet saying he hasn't jogged in a while and needed to trim calories.

Whether or not it makes sense to read too much into this, the reality is that Do Kwon is now on Interpol red list, despite the firmly asserts on twitter that he does absolutely nothing to hide.

UPDATE:

South Korean authorities are investigating alleged withdrawals of over $60 million U.S. and seeking to freeze these assets. Korean authorities believe a new wallet exchanged over 3000 BTC through OKX and Kucoin.

Do Kwon and the Luna Foundation Guard (of whom Do Kwon is a key member of) have declined all charges and dubbed this disinformation.

Singapore's Luna Foundation Guard (LFG) manages the Terra Ecosystem.

The Legal Situation

Multiple governments are searching for Do Kwon and five other Terraform Labs employees for financial markets legislation crimes.

South Korean authorities arrested a man suspected of tax fraud and Ponzi scheme.

The U.S. SEC is also examining Terraform Labs on how UST was advertised as a stablecoin. No legal precedent exists, so it's unclear what's illegal.

The future of Terraform Labs, Terra, and Terra 2 is unknown, and despite what Twitter shills say about LUNC, the company remains in limbo awaiting a decision that will determine its fate. This project isn't a wise investment.

Will Lockett

2 years ago

There Is A New EV King in Town

McMurtry Spéirling outperforms Tesla in speed and efficiency.

EVs were ridiculously slow for decades. However, the 2008 Tesla Roadster revealed that EVs might go extraordinarily fast. The Tesla Model S Plaid and Rimac Nevera are the fastest-accelerating road vehicles, despite combustion-engined road cars dominating the course. A little-known firm beat Tesla and Rimac in the 0-60 race, beat F1 vehicles on a circuit, and boasts a 350-mile driving range. The McMurtry Spéirling is completely insane.

Mat Watson of CarWow, a YouTube megastar, was recently handed a Spéirling and access to Silverstone Circuit (view video above). Mat ran a quarter-mile on Silverstone straight with former F1 driver Max Chilton. The little pocket-rocket automobile touched 100 mph in 2.7 seconds, completed the quarter mile in 7.97 seconds, and hit 0-60 in 1.4 seconds. When looking at autos quickly, 0-60 times can seem near. The Tesla Model S Plaid does 0-60 in 1.99 seconds, which is comparable to the Spéirling. Despite the meager statistics, the Spéirling is nearly 30% faster than Plaid!

My vintage VW Golf 1.4s has an 8.8-second 0-60 time, whereas a BMW Z4 3.0i is 30% faster (with a 0-60 time of 6 seconds). I tried to beat a Z4 off the lights in my Golf, but the Beamer flew away. If they challenge the Spéirling in a Model S Plaid, they'll feel as I did. Fast!

Insane quarter-mile drag time. Its road car record is 7.97 seconds. A Dodge Demon, meant to run extremely fast quarter miles, finishes so in 9.65 seconds, approximately 20% slower. The Rimac Nevera's 8.582-second quarter-mile record was miles behind drag racing. This run hampered the Spéirling. Because it was employing gearing that limited its top speed to 150 mph, it reached there in a little over 5 seconds without accelerating for most of the quarter mile! McMurtry can easily change the gearing, making the Spéirling run quicker.

McMurtry did this how? First, the Spéirling is a tiny single-seater EV with a 60 kWh battery pack, making it one of the lightest EVs ever. The 1,000-hp Spéirling has more than one horsepower per kg. The Nevera has 0.84 horsepower per kg and the Plaid 0.44.

However, you cannot simply construct a car light and power it. Instead of accelerating, it would spin. This makes the Spéirling a fan car. Its huge fans create massive downforce. These fans provide the Spéirling 2 tonnes of downforce while stationary, so you could park it on the ceiling. Its fast 0-60 time comes from its downforce, which lets it deliver all that power without wheel spin.

It also possesses complete downforce at all speeds, allowing it to tackle turns faster than even race vehicles. Spéirlings overcame VW IDRs and F1 cars to set the Goodwood Hill Climb record (read more here). The Spéirling is a dragstrip winner and track dominator, unlike the Plaid and Nevera.

The Spéirling is astonishing for a single-seater. Fan-generated downforce is more efficient than wings and splitters. It also means the vehicle has very minimal drag without the fan. The Spéirling can go 350 miles per charge (WLTP) or 20-30 minutes at full speed on a track despite its 60 kWh battery pack. The G-forces would hurt your neck before the battery died if you drove around a track for longer. The Spéirling can charge at over 200 kW in about 30 minutes. Thus, driving to track days, having fun, and returning is possible. Unlike other high-performance EVs.

Tesla, Rimac, or Lucid will struggle to defeat the Spéirling. They would need to build a fan automobile because adding power to their current vehicle would make it uncontrollable. The EV and automobile industries now have a new, untouchable performance king.