More on Personal Growth

Simon Ash

3 years ago

The Three Most Effective Questions for Ongoing Development

The Traffic Light Approach to Reviewing Personal, Team and Project Development

What needs improvement? If you want to improve, you need to practice your sport, musical instrument, habit, or work project. You need to assess your progress.

Continuous improvement is the foundation of focused practice and a growth mentality. Not just individually. High-performing teams pursue improvement. Right? Why is it hard?

As a leadership coach, senior manager, and high-level athlete, I've found three key questions that may unlock high performance in individuals and teams.

Problems with Reviews

Reviewing and improving performance is crucial, however I hate seeing review sessions in my diary. I rarely respond to questionnaire pop-ups or emails. Why?

Time constrains. Requests to fill out questionnaires often state they will take 10–15 minutes, but I can think of a million other things to do with that time. Next, review overload. Businesses can easily request comments online. No matter what you buy, someone will ask for your opinion. This bombardment might make feedback seem bad, which is bad.

The problem is that we might feel that way about important things like personal growth and work performance. Managers and team leaders face a greater challenge.

When to Conduct a Review

We must be wise about reviewing things that matter to us. Timing and duration matter. Reviewing the experience as quickly as possible preserves information and sentiments. Time must be brief. The review's importance and size will determine its length. We might only take a few seconds to review our morning coffee, but we might require more time for that six-month work project.

These post-event reviews should be supplemented by periodic reflection. Journaling can help with daily reflections, but I also like to undertake personal reviews every six months on vacation or at a retreat.

As an employee or line manager, you don't want to wait a year for a performance assessment. Little and frequently is best, with a more formal and in-depth assessment (typically with a written report) in 6 and 12 months.

The Easiest Method to Conduct a Review Session

I follow Einstein's review process:

“Make things as simple as possible but no simpler.”

Thus, it should be brief but deliver the necessary feedback. Quality critique is hard to receive if the process is overly complicated or long.

I have led or participated in many review processes, from strategic overhauls of big organizations to personal goal coaching. Three key questions guide the process at either end:

What ought to stop being done?

What should we do going forward?

What should we do first?

Following the Rule of 3, I compare it to traffic lights. Red, amber, and green lights:

Red What ought should we stop?

Amber What ought to we keep up?

Green Where should we begin?

This approach is easy to understand and self-explanatory, however below are some examples under each area.

Red What ought should we stop?

As a team or individually, we must stop doing things to improve.

Sometimes they're bad. If we want to lose weight, we should avoid sweets. If a team culture is bad, we may need to stop unpleasant behavior like gossiping instead of having difficult conversations.

Not all things we should stop are wrong. Time matters. Since it is finite, we sometimes have to stop nice things to focus on the most important. Good to Great author Jim Collins famously said:

“Don’t let the good be the enemy of the great.”

Prioritizing requires this idea. Thus, decide what to stop to prioritize.

Amber What ought to we keep up?

Should we continue with the amber light? It helps us decide what to keep doing during review. Many items fall into this category, so focus on those that make the most progress.

Which activities have the most impact? Which behaviors create the best culture? Success-building habits?

Use these questions to find positive momentum. These are the fly-wheel motions, according to Jim Collins. The Compound Effect author Darren Hardy says:

“Consistency is the key to achieving and maintaining momentum.”

What can you do consistently to reach your goal?

Green Where should we begin?

Finally, green lights indicate new beginnings. Red/amber difficulties may be involved. Stopping a red issue may give you more time to do something helpful (in the amber).

This green space inspires creativity. Kolbs learning cycle requires active exploration to progress. Thus, it's crucial to think of new approaches, try them out, and fail if required.

This notion underpins lean start-build, up's measure, learn approach and agile's trying, testing, and reviewing. Try new things until you find what works. Thomas Edison, the lighting legend, exclaimed:

“There is a way to do it better — find it!”

Failure is acceptable, but if you want to fail forward, look back on what you've done.

John Maxwell concurred with Edison:

“Fail early, fail often, but always fail forward”

A good review procedure lets us accomplish that. To avoid failure, we must act, experiment, and reflect.

Use the traffic light system to prioritize queries. Ask:

Red What needs to stop?

Amber What should continue to occur?

Green What might be initiated?

Take a moment to reflect on your day. Check your priorities with these three questions. Even if merely to confirm your direction, it's a terrific exercise!

Neeramitra Reddy

3 years ago

The best life advice I've ever heard could very well come from 50 Cent.

He built a $40M hip-hop empire from street drug dealing.

50 Cent was nearly killed by 9mm bullets.

Before 50 Cent, Curtis Jackson sold drugs.

He sold coke to worried addicts after being orphaned at 8.

Pursuing police. Murderous hustlers and gangs. Unwitting informers.

Despite his hard life, his hip-hop career was a success.

An assassination attempt ended his career at the start.

What sane producer would want to deal with a man entrenched in crime?

Most would have drowned in self-pity and drank themselves to death.

But 50 Cent isn't most people. Life on the streets had given him fearlessness.

“Having a brush with death, or being reminded in a dramatic way of the shortness of our lives, can have a positive, therapeutic effect. So it is best to make every moment count, to have a sense of urgency about life.” ― 50 Cent, The 50th Law

50 released a series of mixtapes that caught Eminem's attention and earned him a $50 million deal!

50 Cents turned death into life.

Things happen; that is life.

We want problems solved.

Every human has problems, whether it's Jeff Bezos swimming in his billions, Obama in his comfortable retirement home, or Dan Bilzerian with his hired bikini models.

All problems.

Problems churn through life. solve one, another appears.

It's harsh. Life's unfair. We can face reality or run from it.

The latter will worsen your issues.

“The firmer your grasp on reality, the more power you will have to alter it for your purposes.” — 50 Cent, The 50th Law

In a fantasy-obsessed world, 50 Cent loves reality.

Wish for better problem-solving skills rather than problem-free living.

Don't wish, work.

We All Have the True Power of Alchemy

Humans are arrogant enough to think the universe cares about them.

That things happen as if the universe notices our nanosecond existences.

Things simply happen. Period.

By changing our perspective, we can turn good things bad.

The alchemists' search for the philosopher's stone may have symbolized the ability to turn our lead-like perceptions into gold.

Negativity bias tints our perceptions.

Normal sparring broke your elbow? Rest and rethink your training. Fired? You can improve your skills and get a better job.

Consider Curtis if he had fallen into despair.

The legend we call 50 Cent wouldn’t have existed.

The Best Lesson in Life Ever?

Neither avoid nor fear your reality.

That simple sentence contains every self-help tip and life lesson on Earth.

When reality is all there is, why fear it? avoidance?

Or worse, fleeing?

To accept reality, we must eliminate the words should be, could be, wish it were, and hope it will be.

It is. Period.

Only by accepting reality's chaos can you shape your life.

“Behind me is infinite power. Before me is endless possibility, around me is boundless opportunity. My strength is mental, physical and spiritual.” — 50 Cent

Ian Writes

3 years ago

Rich Dad, Poor Dad is a Giant Steaming Pile of Sh*t by Robert Kiyosaki.

Don't promote it.

I rarely read a post on how Rich Dad, Poor Dad motivated someone to grow rich or change their investing/finance attitude. Rich Dad, Poor Dad is a sham, though. This book isn't worth anyone's attention.

Robert Kiyosaki, the author of this garbage, doesn't deserve recognition or attention. This first finance guru wanted to build his own wealth at your expense. These charlatans only care about themselves.

The reason why Rich Dad, Poor Dad is a huge steaming piece of trash

The book's ideas are superficial, apparent, and unsurprising to entrepreneurs and investors. The book's themes may seem profound to first-time readers.

Apparently, starting a business will make you rich.

The book supports founding or buying a business, making it self-sufficient, and being rich through it. Starting a business is time-consuming, tough, and expensive. Entrepreneurship isn't for everyone. Rarely do enterprises succeed.

Robert says we should think like his mentor, a rich parent. Robert never said who or if this guy existed. He was apparently his own father. Robert proposes investing someone else's money in several enterprises and properties. The book proposes investing in:

“have returns of 100 percent to infinity. Investments that for $5,000 are soon turned into $1 million or more.”

In rare cases, a business may provide 200x returns, but 65% of US businesses fail within 10 years. Australia's first-year business failure rate is 60%. A business that lasts 10 years doesn't mean its owner is rich. These statistics only include businesses that survive and pay their owners.

Employees are depressed and broke.

The novel portrays employees as broke and sad. The author degrades workers.

I've owned and worked for a business. I was broke and miserable as a business owner, working 80 hours a week for absolutely little salary. I work 50 hours a week and make over $200,000 a year. My work is hard, intriguing, and I'm surrounded by educated individuals. Self-employed or employee?

Don't listen to a charlatan's tax advice.

From a bad advise perspective, Robert's tax methods were funny. Robert suggests forming a corporation to write off holidays as board meetings or health club costs as business expenses. These actions can land you in serious tax trouble.

Robert dismisses college and traditional schooling. Rich individuals learn by doing or living, while educated people are agitated and destitute, says Robert.

Rich dad says:

“All too often business schools train employees to become sophisticated bean-counters. Heaven forbid a bean counter takes over a business. All they do is look at the numbers, fire people, and kill the business.”

And then says:

“Accounting is possibly the most confusing, boring subject in the world, but if you want to be rich long-term, it could be the most important subject.”

Get rich by avoiding paying your debts to others.

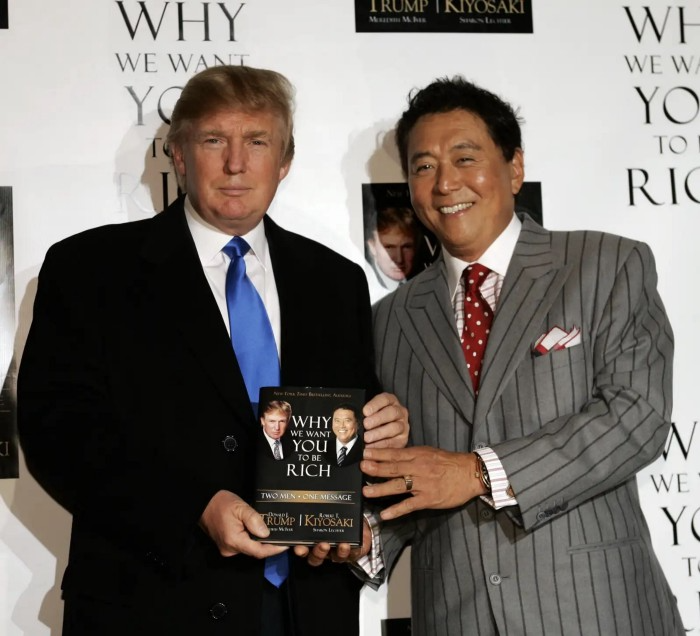

While this book has plenty of bad advice, I'll end with this: Robert advocates paying yourself first. This man's work with Trump isn't surprising.

Rich Dad's book says:

“So you see, after paying myself, the pressure to pay my taxes and the other creditors is so great that it forces me to seek other forms of income. The pressure to pay becomes my motivation. I’ve worked extra jobs, started other companies, traded in the stock market, anything just to make sure those guys don’t start yelling at me […] If I had paid myself last, I would have felt no pressure, but I’d be broke.“

Paying yourself first shouldn't mean ignoring debt, damaging your credit score and reputation, or paying unneeded fees and interest. Good business owners pay employees, creditors, and other costs first. You can pay yourself after everyone else.

If you follow Robert Kiyosaki's financial and business advice, you might as well follow Donald Trump's, the most notoriously ineffective businessman and swindle artist.

This book's popularity is unfortunate. Robert utilized the book's fame to promote paid seminars. At these seminars, he sold more expensive seminars to the gullible. This strategy was utilized by several conmen and Trump University.

It's reasonable that many believed him. It sounded appealing because he was pushing to get rich by thinking like a rich person. Anyway. At a time when most persons addressing wealth development advised early sacrifices (such as eschewing luxury or buying expensive properties), Robert told people to act affluent now and utilize other people's money to construct their fantasy lifestyle. It's exciting and fast.

I often voice my skepticism and scorn for internet gurus now that social media and platforms like Medium make it easier to promote them. Robert Kiyosaki was a guru. Many people still preach his stuff because he was so good at pushing it.

You might also like

Amelia Winger-Bearskin

3 years ago

Reasons Why AI-Generated Images Remind Me of Nightmares

AI images are like funhouse mirrors.

Google's AI Blog introduced the puppy-slug in the summer of 2015.

Puppy-slug isn't a single image or character. "Puppy-slug" refers to Google's DeepDream's unsettling psychedelia. This tool uses convolutional neural networks to train models to recognize dataset entities. If researchers feed the model millions of dog pictures, the network will learn to recognize a dog.

DeepDream used neural networks to analyze and classify image data as well as generate its own images. DeepDream's early examples were created by training a convolutional network on dog images and asking it to add "dog-ness" to other images. The models analyzed images to find dog-like pixels and modified surrounding pixels to highlight them.

Puppy-slugs and other DeepDream images are ugly. Even when they don't trigger my trypophobia, they give me vertigo when my mind tries to reconcile familiar features and forms in unnatural, physically impossible arrangements. I feel like I've been poisoned by a forbidden mushroom or a noxious toad. I'm a Lovecraft character going mad from extradimensional exposure. They're gross!

Is this really how AIs see the world? This is possibly an even more unsettling topic that DeepDream raises than the blatant abjection of the images.

When these photographs originally circulated online, many friends were startled and scandalized. People imagined a computer's imagination would be literal, accurate, and boring. We didn't expect vivid hallucinations and organic-looking formations.

DeepDream's images didn't really show the machines' imaginations, at least not in the way that scared some people. DeepDream displays data visualizations. DeepDream reveals the "black box" of convolutional network training.

Some of these images look scary because the models don't "know" anything, at least not in the way we do.

These images are the result of advanced algorithms and calculators that compare pixel values. They can spot and reproduce trends from training data, but can't interpret it. If so, they'd know dogs have two eyes and one face per head. If machines can think creatively, they're keeping it quiet.

You could be forgiven for thinking otherwise, given OpenAI's Dall-impressive E's results. From a technological perspective, it's incredible.

Arthur C. Clarke once said, "Any sufficiently advanced technology is indistinguishable from magic." Dall-magic E's requires a lot of math, computer science, processing power, and research. OpenAI did a great job, and we should applaud them.

Dall-E and similar tools match words and phrases to image data to train generative models. Matching text to images requires sorting and defining the images. Untold millions of low-wage data entry workers, content creators optimizing images for SEO, and anyone who has used a Captcha to access a website make these decisions. These people could live and die without receiving credit for their work, even though the project wouldn't exist without them.

This technique produces images that are less like paintings and more like mirrors that reflect our own beliefs and ideals back at us, albeit via a very complex prism. Due to the limitations and biases that these models portray, we must exercise caution when viewing these images.

The issue was succinctly articulated by artist Mimi Onuoha in her piece "On Algorithmic Violence":

As we continue to see the rise of algorithms being used for civic, social, and cultural decision-making, it becomes that much more important that we name the reality that we are seeing. Not because it is exceptional, but because it is ubiquitous. Not because it creates new inequities, but because it has the power to cloak and amplify existing ones. Not because it is on the horizon, but because it is already here.

Bastian Hasslinger

3 years ago

Before 2021, most startups had excessive valuations. It is currently causing issues.

Higher startup valuations are often favorable for all parties. High valuations show a business's potential. New customers and talent are attracted. They earn respect.

Everyone benefits if a company's valuation rises.

Founders and investors have always been incentivized to overestimate a company's value.

Post-money valuations were inflated by 2021 market expectations and the valuation model's mechanisms.

Founders must understand both levers to handle a normalizing market.

2021, the year of miracles

2021 must've seemed miraculous to entrepreneurs, employees, and VCs. Valuations rose, and funding resumed after the first Covid-19 epidemic caution.

In 2021, VC investments increased from $335B to $643B. 518 new worldwide unicorns vs. 134 in 2020; 951 US IPOs vs. 431.

Things can change quickly, as 2020-21 showed.

Rising interest rates, geopolitical developments, and normalizing technology conditions drive down share prices and tech company market caps in 2022. Zoom, the poster-child of early lockdown success, is down 37% since 1st Jan.

Once-inflated valuations can become a problem in a normalizing market, especially for founders, employees, and early investors.

the reason why startups are always overvalued

To see why inflated valuations are a problem, consider one of its causes.

Private company values only fluctuate following a new investment round, unlike publicly-traded corporations. The startup's new value is calculated simply:

(Latest round share price) x (total number of company shares)

This is the industry standard Post-Money Valuation model.

Let’s illustrate how it works with an example. If a VC invests $10M for 1M shares (at $10/share), and the company has 10M shares after the round, its Post-Money Valuation is $100M (10/share x 10M shares).

This approach might seem like the most natural way to assess a business, but the model often unintentionally overstates the underlying value of the company even if the share price paid by the investor is fair. All shares aren't equal.

New investors in a corporation will always try to minimize their downside risk, or the amount they lose if things go wrong. New investors will try to negotiate better terms and pay a premium.

How the value of a struggling SpaceX increased

SpaceX's 2008 Series D is an example. Despite the financial crisis and unsuccessful rocket launches, the company's Post-Money Valuation was 36% higher after the investment round. Why?

Series D SpaceX shares were protected. In case of liquidation, Series D investors were guaranteed a 2x return before other shareholders.

Due to downside protection, investors were willing to pay a higher price for this new share class.

The Post-Money Valuation model overpriced SpaceX because it viewed all the shares as equal (they weren't).

Why entrepreneurs, workers, and early investors stand to lose the most

Post-Money Valuation is an effective and sufficient method for assessing a startup's valuation, despite not taking share class disparities into consideration.

In a robust market, where the firm valuation will certainly expand with the next fundraising round or exit, the inflated value is of little significance.

Fairness endures. If a corporation leaves at a greater valuation, each stakeholder will receive a proportional distribution. (i.e., 5% of a $100M corporation yields $5M).

SpaceX's inherent overvaluation was never a problem. Had it been sold for less than its Post-Money Valuation, some shareholders, including founders, staff, and early investors, would have seen their ownership drop.

The unforgiving world of 2022

In 2022, founders, employees, and investors who benefited from inflated values will face below-valuation exits and down-rounds.

For them, 2021 will be a curse, not a blessing.

Some tech giants are worried. Klarna's valuation fell from $45B (Oct 21) to $30B (Jun 22), Canvas from $40B to $27B, and GoPuffs from $17B to $8.3B.

Shazam and Blue Apron have to exit or IPO at a cheaper price. Premium share classes are protected, while others receive less. The same goes for bankrupts.

Those who continue at lower valuations will lose reputation and talent. When their value declines by half, generous employee stock options become less enticing, and their ability to return anything is questioned.

What can we infer about the present situation?

Such techniques to enhance your company's value or stop a normalizing market are fiction.

The current situation is a painful reminder for entrepreneurs and a crucial lesson for future firms.

The devastating market fall of the previous six months has taught us one thing:

Keep in mind that any valuation is speculative. Money Post A startup's valuation is a highly simplified approximation of its true value, particularly in the early phases when it lacks significant income or a cutting-edge product. It is merely a projection of the future and a hypothetical meter. Until it is achieved by an exit, a valuation is nothing more than a number on paper.

Assume the value of your company is lower than it was in the past. Your previous valuation might not be accurate now due to substantial changes in the startup financing markets. There is little reason to think that your company's value will remain the same given the 50%+ decline in many newly listed IT companies. Recognize how the market situation is changing and use caution.

Recognize the importance of the stake you hold. Each share class has a unique value that varies. Know the sort of share class you own and how additional contractual provisions affect the market value of your security. Frameworks have been provided by Metrick and Yasuda (Yale & UC) and Gornall and Strebulaev (Stanford) for comprehending the terms that affect investors' cash-flow rights upon withdrawal. As a result, you will be able to more accurately evaluate your firm and determine the worth of each share class.

Be wary of approving excessively protective share terms.

The trade-offs should be considered while negotiating subsequent rounds. Accepting punitive contractual terms could first seem like a smart option in order to uphold your inflated worth, but you should proceed with caution. Such provisions ALWAYS result in misaligned shareholders, with common shareholders (such as you and your staff) at the bottom of the list.

:max_bytes(150000):strip_icc():format(webp)/adam_hayes-5bfc262a46e0fb005118b414.jpg)

Adam Hayes

3 years ago

Bernard Lawrence "Bernie" Madoff, the largest Ponzi scheme in history

Madoff who?

Bernie Madoff ran the largest Ponzi scheme in history, defrauding thousands of investors over at least 17 years, and possibly longer. He pioneered electronic trading and chaired Nasdaq in the 1990s. On April 14, 2021, he died while serving a 150-year sentence for money laundering, securities fraud, and other crimes.

Understanding Madoff

Madoff claimed to generate large, steady returns through a trading strategy called split-strike conversion, but he simply deposited client funds into a single bank account and paid out existing clients. He funded redemptions by attracting new investors and their capital, but the market crashed in late 2008. He confessed to his sons, who worked at his firm, on Dec. 10, 2008. Next day, they turned him in. The fund reported $64.8 billion in client assets.

Madoff pleaded guilty to 11 federal felony counts, including securities fraud, wire fraud, mail fraud, perjury, and money laundering. Ponzi scheme became a symbol of Wall Street's greed and dishonesty before the financial crisis. Madoff was sentenced to 150 years in prison and ordered to forfeit $170 billion, but no other Wall Street figures faced legal ramifications.

Bernie Madoff's Brief Biography

Bernie Madoff was born in Queens, New York, on April 29, 1938. He began dating Ruth (née Alpern) when they were teenagers. Madoff told a journalist by phone from prison that his father's sporting goods store went bankrupt during the Korean War: "You watch your father, who you idolize, build a big business and then lose everything." Madoff was determined to achieve "lasting success" like his father "whatever it took," but his career had ups and downs.

Early Madoff investments

At 22, he started Bernard L. Madoff Investment Securities LLC. First, he traded penny stocks with $5,000 he earned installing sprinklers and as a lifeguard. Family and friends soon invested with him. Madoff's bets soured after the "Kennedy Slide" in 1962, and his father-in-law had to bail him out.

Madoff felt he wasn't part of the Wall Street in-crowd. "We weren't NYSE members," he told Fishman. "It's obvious." According to Madoff, he was a scrappy market maker. "I was happy to take the crumbs," he told Fishman, citing a client who wanted to sell eight bonds; a bigger firm would turn it down.

Recognition

Success came when he and his brother Peter built electronic trading capabilities, or "artificial intelligence," that attracted massive order flow and provided market insights. "I had all these major banks coming down, entertaining me," Madoff told Fishman. "It was mind-bending."

By the late 1980s, he and four other Wall Street mainstays processed half of the NYSE's order flow. Controversially, he paid for much of it, and by the late 1980s, Madoff was making in the vicinity of $100 million a year. He was Nasdaq chairman from 1990 to 1993.

Madoff's Ponzi scheme

It is not certain exactly when Madoff's Ponzi scheme began. He testified in court that it began in 1991, but his account manager, Frank DiPascali, had been at the firm since 1975.

Why Madoff did the scheme is unclear. "I had enough money to support my family's lifestyle. "I don't know why," he told Fishman." Madoff could have won Wall Street's respect as a market maker and electronic trading pioneer.

Madoff told Fishman he wasn't solely responsible for the fraud. "I let myself be talked into something, and that's my fault," he said, without saying who convinced him. "I thought I could escape eventually. I thought it'd be quick, but I couldn't."

Carl Shapiro, Jeffry Picower, Stanley Chais, and Norm Levy have been linked to Bernard L. Madoff Investment Securities LLC for years. Madoff's scheme made these men hundreds of millions of dollars in the 1960s and 1970s.

Madoff told Fishman, "Everyone was greedy, everyone wanted to go on." He says the Big Four and others who pumped client funds to him, outsourcing their asset management, must have suspected his returns or should have. "How can you make 15%-18% when everyone else is making less?" said Madoff.

How Madoff Got Away with It for So Long

Madoff's high returns made clients look the other way. He deposited their money in a Chase Manhattan Bank account, which merged to become JPMorgan Chase & Co. in 2000. The bank may have made $483 million from those deposits, so it didn't investigate.

When clients redeemed their investments, Madoff funded the payouts with new capital he attracted by promising unbelievable returns and earning his victims' trust. Madoff created an image of exclusivity by turning away clients. This model let half of Madoff's investors profit. These investors must pay into a victims' fund for defrauded investors.

Madoff wooed investors with his philanthropy. He defrauded nonprofits, including the Elie Wiesel Foundation for Peace and Hadassah. He approached congregants through his friendship with J. Ezra Merkin, a synagogue officer. Madoff allegedly stole $1 billion to $2 billion from his investors.

Investors believed Madoff for several reasons:

- His public portfolio seemed to be blue-chip stocks.

- His returns were high (10-20%) but consistent and not outlandish. In a 1992 interview with Madoff, the Wall Street Journal reported: "[Madoff] insists the returns were nothing special, given that the S&P 500-stock index returned 16.3% annually from 1982 to 1992. 'I'd be surprised if anyone thought matching the S&P over 10 years was remarkable,' he says.

- "He said he was using a split-strike collar strategy. A collar protects underlying shares by purchasing an out-of-the-money put option.

SEC inquiry

The Securities and Exchange Commission had been investigating Madoff and his securities firm since 1999, which frustrated many after he was prosecuted because they felt the biggest damage could have been prevented if the initial investigations had been rigorous enough.

Harry Markopolos was a whistleblower. In 1999, he figured Madoff must be lying in an afternoon. The SEC ignored his first Madoff complaint in 2000.

Markopolos wrote to the SEC in 2005: "The largest Ponzi scheme is Madoff Securities. This case has no SEC reward, so I'm turning it in because it's the right thing to do."

Many believed the SEC's initial investigations could have prevented Madoff's worst damage.

Markopolos found irregularities using a "Mosaic Method." Madoff's firm claimed to be profitable even when the S&P fell, which made no mathematical sense given what he was investing in. Markopolos said Madoff Securities' "undisclosed commissions" were the biggest red flag (1 percent of the total plus 20 percent of the profits).

Markopolos concluded that "investors don't know Bernie Madoff manages their money." Markopolos learned Madoff was applying for large loans from European banks (seemingly unnecessary if Madoff's returns were high).

The regulator asked Madoff for trading account documentation in 2005, after he nearly went bankrupt due to redemptions. The SEC drafted letters to two of the firms on his six-page list but didn't send them. Diana Henriques, author of "The Wizard of Lies: Bernie Madoff and the Death of Trust," documents the episode.

In 2008, the SEC was criticized for its slow response to Madoff's fraud.

Confession, sentencing of Bernie Madoff

Bernard L. Madoff Investment Securities LLC reported 5.6% year-to-date returns in November 2008; the S&P 500 fell 39%. As the selling continued, Madoff couldn't keep up with redemption requests, and on Dec. 10, he confessed to his sons Mark and Andy, who worked at his firm. "After I told them, they left, went to a lawyer, who told them to turn in their father, and I never saw them again. 2008-12-11: Bernie Madoff arrested.

Madoff insists he acted alone, but several of his colleagues were jailed. Mark Madoff died two years after his father's fraud was exposed. Madoff's investors committed suicide. Andy Madoff died of cancer in 2014.

2009 saw Madoff's 150-year prison sentence and $170 billion forfeiture. Marshals sold his three homes and yacht. Prisoner 61727-054 at Butner Federal Correctional Institution in North Carolina.

Madoff's lawyers requested early release on February 5, 2020, claiming he has a terminal kidney disease that may kill him in 18 months. Ten years have passed since Madoff's sentencing.

Bernie Madoff's Ponzi scheme aftermath

The paper trail of victims' claims shows Madoff's complexity and size. Documents show Madoff's scam began in the 1960s. His final account statements show $47 billion in "profit" from fake trades and shady accounting.

Thousands of investors lost their life savings, and multiple stories detail their harrowing loss.

Irving Picard, a New York lawyer overseeing Madoff's bankruptcy, has helped investors. By December 2018, Picard had recovered $13.3 billion from Ponzi scheme profiteers.

A Madoff Victim Fund (MVF) was created in 2013 to help compensate Madoff's victims, but the DOJ didn't start paying out the $4 billion until late 2017. Richard Breeden, a former SEC chair who oversees the fund, said thousands of claims were from "indirect investors"

Breeden and his team had to reject many claims because they weren't direct victims. Breeden said he based most of his decisions on one simple rule: Did the person invest more than they withdrew? Breeden estimated 11,000 "feeder" investors.

Breeden wrote in a November 2018 update for the Madoff Victim Fund, "We've paid over 27,300 victims 56.65% of their losses, with thousands more to come." In December 2018, 37,011 Madoff victims in the U.S. and around the world received over $2.7 billion. Breeden said the fund expected to make "at least one more significant distribution in 2019"

This post is a summary. Read full article here