More on Web3 & Crypto

Ben

3 years ago

The Real Value of Carbon Credit (Climate Coin Investment)

Disclaimer: This is not financial advice for any investment.

TL;DR

You might not have realized it, but as we move toward net zero carbon emissions, the globe is already at war.

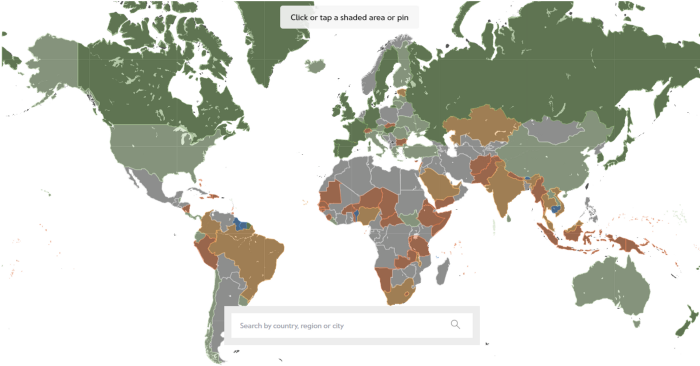

As of COP26, 64% of nations have already declared net zero targets, and carbon reduction has become so important for businesses that it affects their ability to survive. Furthermore, the time when carbon emission standards are defined and controlled on an individual basis is drawing closer.

Since 2017, the market for carbon credits has expanded extraordinarily as carbon credits have entered mainstream discussion. The market is predicted to expand much further once net zero is implemented and carbon emission rules inevitably tighten.

Hello! Ben here from Nonce Classic. Nonce Classic has recently confirmed the tremendous growth potential of the carbon credit market amid the major trend toward the global goal of net zero (carbon emissions caused by humans − carbon reduction by humans = 0). We also came to believe that the problems the carbon credit market has suffered from over the last 30 to 40 years could be perfectly answered through crypto technology, which is why we have added a carbon credit crypto project to the Nonce Classic portfolio.

Many teams have tried to solve environmental problems through crypto, but very few have measurable experience working in the carbon credit scene. We therefore put our efforts into finding projects that were not created for the sake of issuing tokens, but that pragmatically use crypto technology to combat climate change by solving problems of the current carbon credit market. In that process, we came to hear of Climate Coin, a veritable carbon credit crypto project, and we at Nonce Classic, as an accelerator, have begun contributing to its growth and have invested in its tokens.

Starting with this article, we plan to publish a series explaining why we are bullish on the carbon credit market, why we invested in Climate Coin, and what kind of project Climate Coin is. In this first article, let us understand the carbon credit market and look into its growth potential. Let's begin :)

The Unavoidable Entry of the Net Zero Era

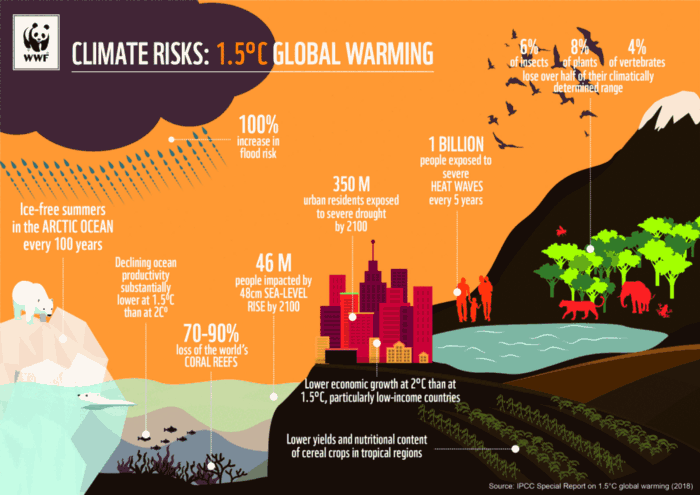

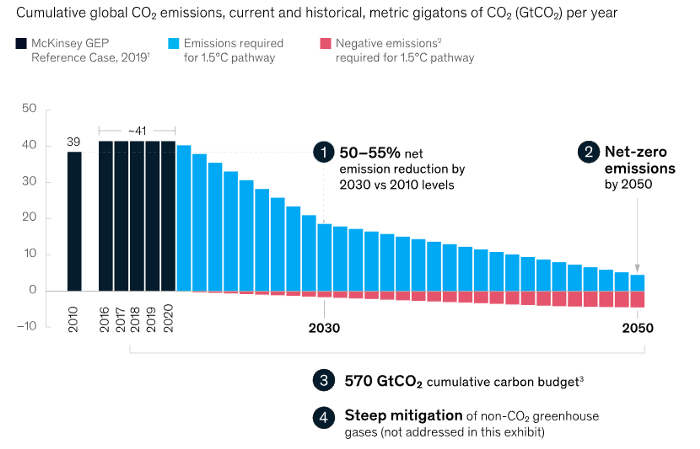

Net zero means that human carbon emissions are balanced by carbon reduction efforts. A non-environmentalist may find it hard to accept that net zero is attainable by 2050, yet global cooperation to save the earth is happening faster than we imagine.

At COP26, which opened in Glasgow, UK on Oct. 31, 2021, nations pledged to reduce worldwide yearly greenhouse gas emissions by more than 50% by 2030 and attain net zero by 2050. Governments throughout the world have pledged net zero at the national level and are holding each other accountable by submitting Nationally Determined Contributions (NDCs) every five years, as set out in the Paris Agreement, to assess implementation. 127 of 198 nations have declared net zero.

Each country's 1.5-degree reduction plans have led to carbon reduction obligations for companies. In places with the strictest environmental regulations, like the EU, companies often face bankruptcy because the cost of buying carbon credits to meet their carbon allowances exceeds their operating profits. In this day and age, minimizing carbon emissions and securing carbon credits are crucial.

Recent SEC actions on climate change may increase companies' concerns about reducing emissions. On March 21, 2022, the SEC proposed a rule requiring all companies listed on U.S. stock markets to disclose their annual greenhouse gas emissions and climate change impact. The SEC had been preparing the proposed regulation since the previous year through in-depth analysis and stakeholder input. Three out of four SEC members agreed that it should pass without major changes. If the regulation passes, it will affect not only US companies, but also countless companies around the world, directly or indirectly.

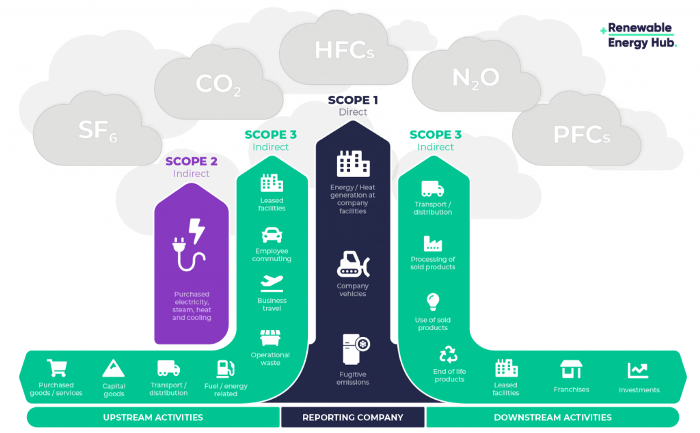

Even companies not listed on the U.S. stock market will be affected and, in most cases, required to disclose emissions. Companies listed on the U.S. stock market with significant greenhouse gas emissions or specific targets are subject to stricter emission standards (Scope 3) and disclosure obligations, which will extend scrutiny to all related companies. Greenhouse gas emissions are accounted for in three scopes. Scope 1 covers direct carbon emissions from a company's own facilities and transportation. Scope 2 covers carbon emissions from the energy a company purchases. Scope 3 covers all other indirect emissions across a company's value chain.

The SEC's proposed carbon emission disclosure mandate and regulations are one example of how carbon credit policies can cross borders and affect all parties. As such incidents will continue throughout the implementation of net zero, even companies that are not immediately obligated to disclose their carbon emissions must be prepared to respond to changes in carbon emission laws and policies.

Carbon reduction obligations will soon become individual. Individual consumption has increased dramatically with improved quality of life and convenience, despite national and corporate efforts to reduce carbon emissions. Since consumption is directly related to carbon emissions, increasing consumption increases carbon emissions. Countries around the world have agreed that to achieve net zero, carbon emissions must be reduced on an individual level. Solutions to individual carbon reduction are being actively discussed and studied under the term Personal Carbon Trading (PCT).

PCT is a system that allows individuals to trade carbon emission quotas in the form of carbon credits. Individuals who emit more carbon than their allotment can buy carbon credits from those who emit less. European cities with well-established carbon credit markets are preparing for net zero by conducting early carbon reduction prototype projects. The era of checking product labels for carbon footprints, choosing low-emissions transportation, and worrying about hot shower emissions is closer than we think.

The Market for Carbon Credits Is Expanding at a Fearsome Pace

Compliance and voluntary carbon markets make up the carbon credit market.

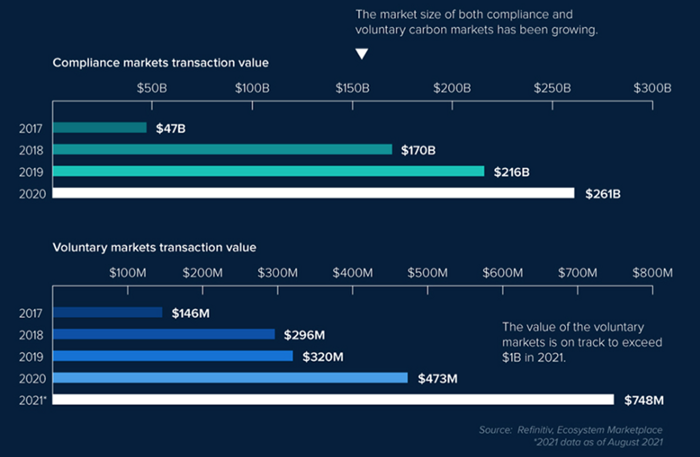

A Compliance Market enforces carbon emission allowances for its participants. Companies in industries that have historically emitted a lot of carbon are included in the mandatory carbon market, and each receives carbon credits from its government every year. If a company's emissions are below its assigned cap and it has carbon credits left over, it can sell them to companies that emit more and need them (Cap and Trade). The number of free emission permits provided to companies is designed to decline each year, so companies' demand for carbon credits will increase. The compliance market's yearly trading volume exceeded $261B in 2020, five times its 2017 level.
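
To make the cap-and-trade mechanism concrete, here is a minimal Python sketch. The company names, allowances, emissions, and the single price of $50 per credit are all made-up assumptions for illustration, not market data, and a real market would match buyers and sellers at varying prices.

```python
from dataclasses import dataclass


@dataclass
class Company:
    name: str
    allowance: float  # free emission permits granted for the year (tCO2e)
    emissions: float  # actual emissions for the year (tCO2e)

    @property
    def balance(self) -> float:
        """Positive: surplus credits to sell. Negative: credits that must be bought."""
        return self.allowance - self.emissions


def settle(companies, price_per_credit):
    """Return each company's cash flow if all credits trade at one assumed price."""
    return {c.name: c.balance * price_per_credit for c in companies}


# Hypothetical figures, for illustration only.
companies = [
    Company("CleanCo", allowance=100_000, emissions=80_000),
    Company("HeavyCo", allowance=100_000, emissions=130_000),
]
cash_flows = settle(companies, price_per_credit=50.0)
print(cash_flows)
```

In this toy example CleanCo earns money from its 20,000 spare credits, while HeavyCo has to pay for the 30,000 credits it is short. As the free allowance shrinks each year, more companies end up on HeavyCo's side of the trade.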

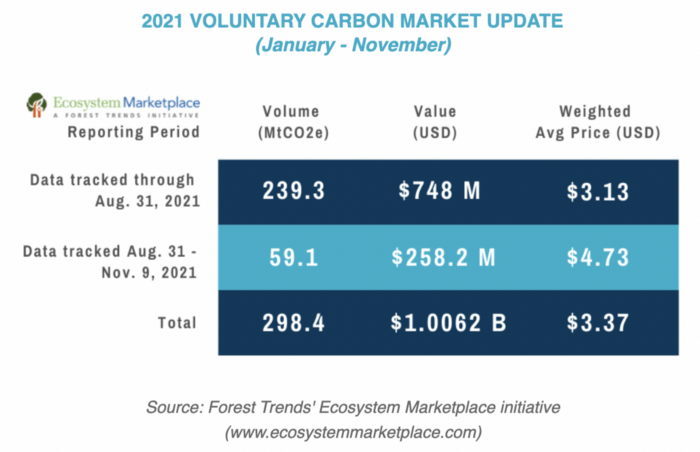

In the Voluntary Market, carbon reduction is voluntary, and carbon credits are traded for personal reasons or to build market participants' eco-friendly reputations. Even if it is not in the compliance market, it is typical for a corporation to be obliged in practice to offset its carbon emissions by acquiring voluntary carbon credits. When a company seeks government or corporate investment, it may be denied because it is not net zero. If a significant shareholder declares net zero, the companies below it must follow. As the world moves toward ESG management, becoming an eco-friendly company is no longer a strategic choice to gain a competitive edge, but an important precaution to avoid falling behind. Due to this eco-friendly trend, the annual market volume of voluntary emission credits approached $1B by November 2021. The voluntary credit market is anticipated to reach $5B to $50B by 2030. (TSVCM 2021 Report)

In conclusion

This article analyzed how net zero, a target promised by countries around the world to combat climate change, has brought changes for governments, companies, and individuals. We discussed how these shifts will become more obvious as we approach net zero, and how the carbon credit market will grow rapidly in response. In the following piece, we will analyze the hurdles impeding the carbon credit market's growth, how the project we invested in tries to tackle these issues, and why we chose Climate Coin. One last thing: Jim Skea, co-chair of the IPCC working group, said,

“It’s now or never, if we want to limit global warming to 1.5°C” — Jim Skea

Join nonceClassic’s community:

Telegram: https://t.me/non_stock

Youtube: https://www.youtube.com/channel/UCqeaLwkZbEfsX35xhnLU2VA

Twitter: @nonceclassic

Mail us : general@nonceclassic.org

Faisal Khan

3 years ago

4 typical methods of crypto market manipulation

Market fraud

Due to its decentralized and fragmented character, the crypto market has integrity problems.

Cryptocurrencies are an immature sector, so market manipulation is a bigger issue than in traditional markets. Many studies have attempted to uncover these abuses. CryptoCompare's newest one highlights some of the industry's most typical scams.

Why are these concerns so common in the crypto market? First, even the largest centralized exchanges remain unregulated due to industry immaturity. Second, low liquidity in a market segment makes an attack more harmful. Finally, the lack of market surveillance solutions reduces transparency.

In CryptoCompare's latest exchange benchmark, 62.4% of assessed exchanges had a market surveillance system, although only 18.1% utilised an external solution. To address market integrity, this measure must improve dramatically. Before discussing the report's malpractices, note that this is not a full list of attacks and hacks.

Wash Trading

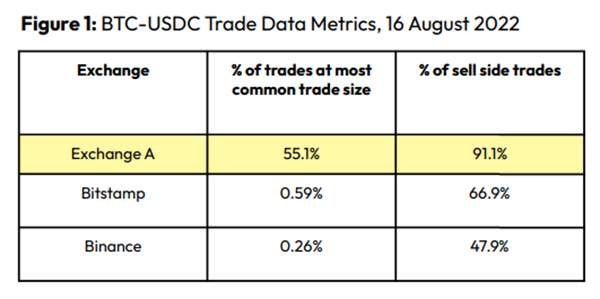

An investor buys and sells the same asset concurrently to inflate its volume and price. This misconduct appears on both centralized and decentralized exchanges. According to CryptoCompare, 23 exchanges had a volume-volatility correlation below 0.1 during the previous 100 days, which suggests their reported volume does not move with real market activity. In August 2022, Exchange A reported $2.5 trillion in artificial and/or erroneous volume, up from $33.8 billion the month before.
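
The volume-volatility check can be sketched in a few lines of Python. This is only an illustration of the idea: the report's exact methodology is not given here, so the plain Pearson correlation, the helper names `pearson` and `looks_suspicious`, and the tiny sample series are all assumptions. Only the 0.1 threshold comes from the text.

```python
from math import sqrt


def pearson(xs, ys):
    """Plain Pearson correlation coefficient of two equal-length series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length with at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat series carries no co-movement information
    return cov / sqrt(var_x * var_y)


def looks_suspicious(daily_volume, daily_volatility, threshold=0.1):
    """Flag an exchange whose volume barely moves with volatility."""
    return pearson(daily_volume, daily_volatility) < threshold


# Made-up daily series. On an organic market, volume tends to rise with volatility.
organic_volume = [10, 20, 30, 40, 50]
organic_volatility = [1, 2, 3, 4, 5]

# Here volume swings on a schedule unrelated to price movement.
odd_volume = [10, 50, 10, 50, 10]
odd_volatility = [1, 1, 5, 5, 3]

print(looks_suspicious(organic_volume, organic_volatility))
print(looks_suspicious(odd_volume, odd_volatility))
```

A low correlation is a warning sign rather than proof. A real screen would use the full 100-day window and additional signals.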

Spoofing

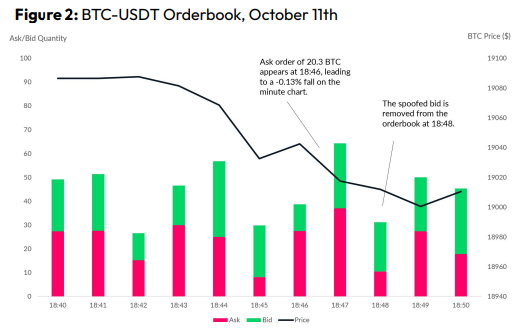

Criminals place fake orders and cancel them before they can be filled. Since manipulators can hide in larger trading volumes, larger exchanges see more spoofing. In one example, a trader placed a 20.8 BTC ask order at $19,036 when BTC was trading at $19,043. Within a minute, BTC declined 0.13% to $19,018. At 18:48, the trader canceled the ask order without it ever being filled.

Front-Running

Most cryptocurrency front-running involves insider trading. Traditional stock markets forbid this. Since most digital asset information is public, it is harder to define and police in crypto. Retail traders could also use bots to front-run.

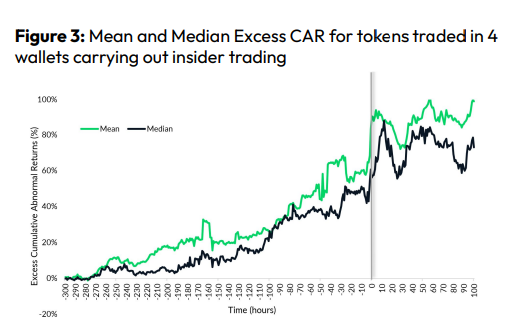

CryptoCompare found digital wallets of people who traded like insiders ahead of exchange listings. The figure shows excess cumulative abnormal returns (CAR) before a coin's listing on an exchange.

Layering

Finally, layering is a sequence of spoofs in which successive orders are placed along a ladder of higher (layering offers) or lower (layering bids) prices.

The paper concludes with recommendations to mitigate market manipulation. Exchange data transparency, market surveillance, and regulatory oversight could reduce manipulative tactics.

Ann

3 years ago

These new DeFi protocols are just amazing.

I've never seen this before.

Focus on native crypto development, not price activity or turmoil.

CT is boring now. Either folks are still angry about FTX or they're distracted by AI. Plus, it's year-end, and people rest for the holidays. 2022 was rough.

So DeFi fans can get inspired by something fresh. Who's building? As I read the Defillama daily roundup, many updates are still on FTX and its contagion.

I've used the same method on their Raises page. Not much happened :(. Maybe my high standards are at fault, or the industry may simply be resting. OK.

The handful I found might last us till the end of the year. (Unless another big blowup occurs.)

Hashflow

An on-chain monitoring account I follow reported a huge transfer of $HFT from Binance to Jump Trading.

I was intrigued. Stacking? So I checked and found out the project was launched through Binance Launchpad, which has introduced many 100x tokens (albeit momentarily) in the past, such as GALA and STEPN.

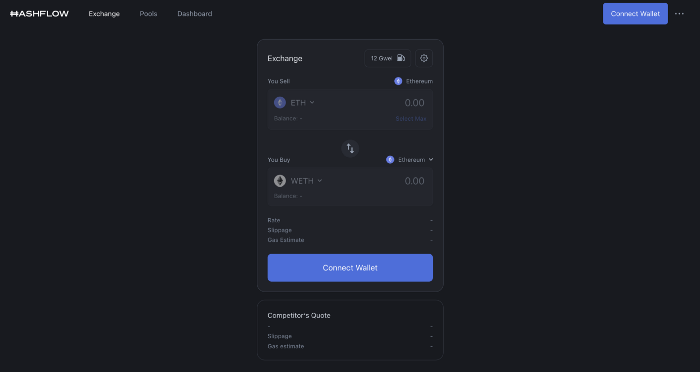

Hashflow appears to be pumpable. Binance launchpad, VC backers, CEX listing immediately. What's the protocol?

Hashflow is intriguing and timely, I discovered. After the FTX collapse, people looked more closely at DEXs.

Hashflow is a decentralized exchange that connects traders with professional market makers, according to its Binance launchpad description. Post-FTX, market makers lost a market-making venue with the collapse of the world's third-largest exchange. Is that why Jump and Wintermute back Hashflow?

Why is that the case? Hashflow doesn't use bonding curves like a standard AMM. On AMMs, you pay more for the following trade because the prior trade reduces liquidity (fewer coins in the pool, higher price). With market maker quotations, you get a CEX-like experience: stable prices, no MEV frontrunning.
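
Here's a rough Python sketch of why a bonding-curve AMM charges more for each following trade. It uses the textbook constant-product rule (x * y = k) with no fees. The pool sizes, trade sizes, and the fixed quote price are made-up numbers, and the "quote" line is only a stand-in for a market maker's firm price, not Hashflow's actual pricing logic.

```python
def swap_constant_product(reserve_in, reserve_out, amount_in):
    """Swap against an x * y = k pool (no fees).

    Returns (amount_out, new_reserve_in, new_reserve_out).
    """
    k = reserve_in * reserve_out
    new_reserve_in = reserve_in + amount_in
    new_reserve_out = k / new_reserve_in
    amount_out = reserve_out - new_reserve_out
    return amount_out, new_reserve_in, new_reserve_out


# Hypothetical pool: 1,000,000 USDC against 1,000 ETH.
usdc, eth = 1_000_000.0, 1_000.0

# Two identical 100,000 USDC buys, one after the other.
first_out, usdc, eth = swap_constant_product(usdc, eth, 100_000.0)
second_out, usdc, eth = swap_constant_product(usdc, eth, 100_000.0)

# Stand-in for a firm market maker quote at an assumed 1,000 USDC per ETH.
quoted_out = 100_000.0 / 1_000.0

print(f"AMM, first trade:  {first_out:.2f} ETH")
print(f"AMM, second trade: {second_out:.2f} ETH")
print(f"Firm quote:        {quoted_out:.2f} ETH per trade")
```

The second buyer gets less ETH for the same USDC because the first trade already drained ETH from the pool. A firm quote doesn't move until the market maker changes it, although in practice the maker builds a spread into the price.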

Hashflow is innovative because...

DEXs gained from the FTX crash, but let's be honest: DEXs aren't as good as CEXs. Hashflow will change this.

Hashflow offers MEV protection, which large traders seek in DEXs. You can trade large amounts without front-running and sandwich attacks.

Hashflow offers a user-friendly swapping platform besides MEV protection. Any chain can be traded smoothly. This is a benefit because DEXs lag CEXs in UX.

Status, timeline:

Wintermute wrote in August that prominent market makers will work on Hashflow. Binance launched a month-long farming session in December. Jump probably participated in this initial sell, therefore we witnessed a significant transfer after the introduction.

Binance began trading the HFT token on November 11 (the day FTX imploded. Coincidence?)

Tokens are used for community rewards. Perhaps they'd copy dYdX. (Airdrop?). Read their documents about their future plans. Tokenomics doesn't impress me. Governance, rewards, and NFT.

Their stat page details their activity. First came Ethereum, then Arbitrum. For a new protocol in a bear market, they handled a lot of unique users daily.

It’s interesting to see their future. Will they be thriving? Not only against DEXs, but also among the CEXs too.

STFX

I forget how I found STFX. Possibly a Twitter thread concerning Arbitrum applications. STFX was the only new protocol I found interesting.

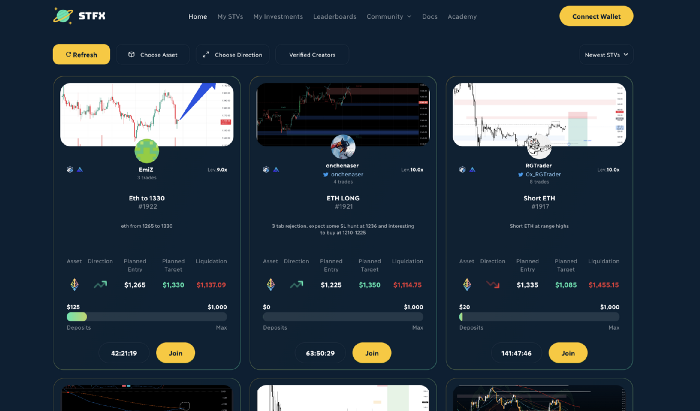

STFX is a new concept and trader problem-solver. I've never seen this protocol.

STFX lets you copy trades. You give someone your money to trade for you.

It's a marketplace. Traders are everywhere. As a trader, you post your entry, exit, liquidation point, and trading thesis. There is a verification system for social accounts such as Twitter. Leaderboards display your trading skill.

This service could be popular. Staying disciplined is the hardest part of trading. Sometimes you take-profit too early or too late, or sell at a loss when an asset dumps, then it soon recovers (often happens in crypto.) It's hard to stick to entry-exit and liquidation plans.

What if you could hire someone to run your trade for a little commission? Set-and-forget.

Handing over money to trade isn't easy. How do you trust them? How do you know they won't steal your money?

Smart contracts.

STFX's trader is a vault maker/manager. One trade = one vault. The manager sets long/short, entry, exit, and liquidation point. Anyone who agrees can join instantly. The smart contract holds the funds during the trade and limits the manager's actions.
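
To picture how a contract can hold funds while limiting the manager, here's a toy Python model. It is not STFX's contract: the class `TradeVault`, its fields, the payout formula (no leverage, no fees), and all numbers are my own assumptions. The thing to notice is what's missing: the manager has no method for withdrawing deposits and can only close at the preset exit or liquidation point.

```python
from dataclasses import dataclass, field


@dataclass
class TradeVault:
    manager: str
    side: str           # "long" or "short"
    entry: float        # planned entry price
    exit_price: float   # planned take-profit price
    liquidation: float  # planned stop price
    deposits: dict = field(default_factory=dict)
    is_open: bool = False

    def deposit(self, investor, amount):
        # Investors can only join before the trade starts.
        if self.is_open:
            raise RuntimeError("trade already open; deposits are closed")
        self.deposits[investor] = self.deposits.get(investor, 0.0) + amount

    def open_trade(self, caller):
        if caller != self.manager:
            raise PermissionError("only the manager can open the trade")
        self.is_open = True

    def close_trade(self, caller, price):
        """Close at a preset level and return each investor's pro rata payout."""
        if caller != self.manager:
            raise PermissionError("only the manager can close the trade")
        if not self.is_open:
            raise RuntimeError("trade is not open")
        if self.side == "long":
            allowed = price >= self.exit_price or price <= self.liquidation
            factor = price / self.entry
        else:
            allowed = price <= self.exit_price or price >= self.liquidation
            factor = 2 - price / self.entry
        if not allowed:
            raise ValueError("price has reached neither the exit nor the liquidation point")
        self.is_open = False
        return {who: amount * factor for who, amount in self.deposits.items()}


# Hypothetical long trade: enter at 100, take profit at 120, stop at 90.
vault = TradeVault("trader_1", "long", entry=100.0, exit_price=120.0, liquidation=90.0)
vault.deposit("alice", 10.0)
vault.deposit("bob", 30.0)
vault.open_trade("trader_1")
payouts = vault.close_trade("trader_1", price=120.0)
print(payouts)
```

A real vault would also take the manager and treasury fees out of the payout and would read the price from the market instead of trusting the caller.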

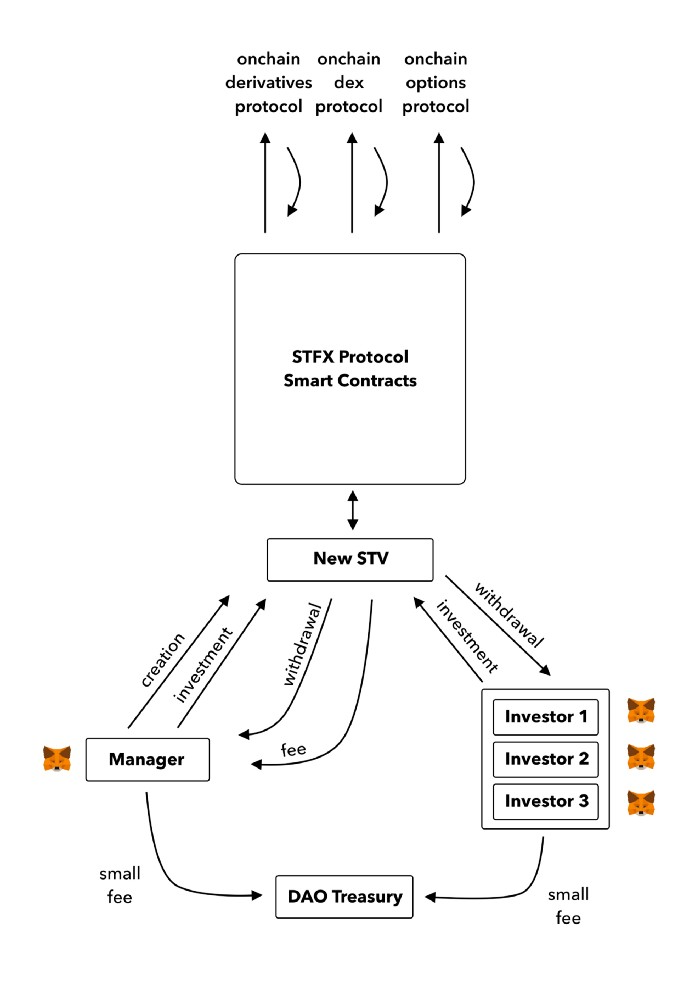

Here's STFX's transaction flow.

Managers and the treasury receive fees. It's a sustainable business strategy that benefits everyone.

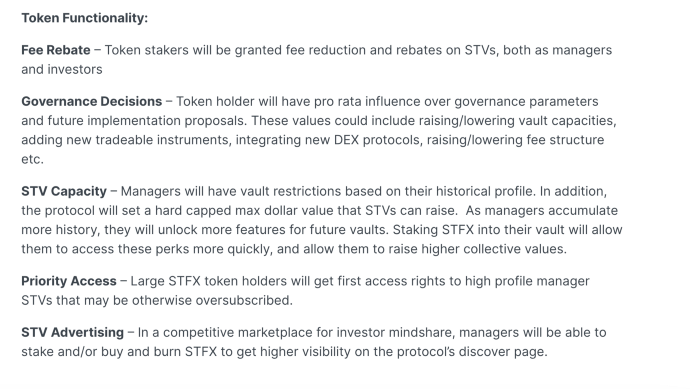

I'm impressed by $STFX's planned use: priority access, which is brilliant. Say a well-known crypto trader opens a vault here. Many would want to join. STFX token holders get VIP access ahead of those without tokens.

STFX provides short-term trading, which is mind-blowing to me. I agree with their platform's purpose. Crypto market price action fosters short-termism. When you trade, the turnover could be larger than long-term holding or trading. 2017 BTC buyers waited 5 years to complete their holdings.

STFX teams simply adapted. Volatility aids trading.

All things about STFX scream Degen. The protocol fully embraces the degen nature of some, if not most, crypto natives.

An enjoyable dApp. Leaderboards are fun for reputation-building. FLEXING COMPETITIONS. You can join for as low as $10. STFX uses Arbitrum, therefore gas costs are low. Alpha procedure completes the degen feeling.

Despite looking like they don't take themselves seriously, I sense a strong business plan below. There is a real demand for the solution STFX offers.

You might also like

Sanjay Priyadarshi

3 years ago

Using Ruby code, a programmer created a $48,000,000,000 product that Elon Musk admired.

Unexpected Success

Shopify CEO and co-founder Tobias Lutke. Shopify is worth $48 billion.

World-renowned entrepreneur Tobi

Tobi never expected his first online snowboard business to become a multimillion-dollar software corporation.

Tobi founded Shopify to establish a 20-person company.

The publicly traded corporation employs over 10,000 people.

Here's Tobi Lutke's incredible story.

Elon Musk tweeted his admiration for the Shopify creator.

On October 30, 2019, Musk praised Shopify founder Tobi Lutke on Twitter.

Explore this programmer's journey.

What difficulties did Tobi experience as a young child?

Tobi was raised in Germany.

Tobi's parents realized he was smart but had trouble learning as a toddler.

Tobi had learning disabilities.

Tobi struggled with school tests.

Tobi's learning impairments were undiagnosed.

Tobi struggled to read as a dyslexic.

Tobi also found school boring.

Germany's curriculum didn't inspire Tobi's curiosity.

“The curriculum in Germany was taught like here are all the solutions you might find useful later in life, spending very little time talking about the problem…If I don’t understand the problem I’m trying to solve, it’s very hard for me to learn about a solution to a problem.”

Studying computer programming

After tenth grade, Tobi decided school wasn't for him and joined a German apprenticeship program.

This program taught Tobi software engineering.

He was an apprentice in a small Siemens subsidiary team.

Tobi worked with rebellious Siemens employees.

Team members impressed Tobi.

Tobi joined the team for this reason.

Tobi was pleased to get paid to write code all day.

His life could not have been better.

Devoted to snowboarding

Tobi loved snowboarding.

He drove 5 hours to ski at his folks' house.

His friends traveled to the US to snowboard when he was older.

However, the cheap dollar conversion rate led them to Canada.

2000.

Tobi originally decided to snowboard instead of ski.

Snowboarding captivated him in Canada.

On the trip to Canada, Tobi encounters his wife.

Tobi meets his wife Fiona McKean on his first Canadian ski trip.

They stayed in touch after the trip.

Fiona moved to Germany after graduating.

Tobi was a startup coder.

Fiona found work in Germany.

Her work included editing, writing, and academics.

“We lived together for 10 months and then she told me that she need to go back for the master's program.”

With Fiona, Tobi immigrated to Canada.

Fiona invites Tobi.

Tobi agreed to move to Canada.

Programming helped Tobi move in with his girlfriend.

Tobi was an excellent programmer, therefore what he did in Germany could be done anywhere.

He worked remotely for his German employer in Canada.

Tobi struggled with remote work.

The cause was poor communication.

There was no Slack, so he used email.

Programmers found it hard to collaborate over email.

Tobi's startup was developing a browser.

After the dot-com crash, individuals left that startup.

It ended.

Tobi didn't intend to work for any major corporations.

Tobi left his startup.

He believed he had important skills for any huge corporation.

He refused to join a huge corporation.

Because of Siemens.

Tobi learned to write professional code and about himself while working at Siemens in Germany.

Siemens culture was odd.

Employees were distrustful.

Siemens' rigorous dress code implied that the corporation didn't trust employees to choose their own attire.

It wasn't Tobi's place.

“There was so much bad with it that it just felt wrong…20-year-old Tobi would not have a career there.”

Focused only on snowboarding

Tobi lived in Ottawa with his girlfriend.

Canada is frigid in winter.

Ottawa's winters last almost half a year.

Tobi wanted to do something worthwhile now.

So he snowboarded.

Tobi began snowboarding seriously.

He sought every snowboarding knowledge.

He researched the greatest snowboarding gear first.

He created big spreadsheets for snowboard-making technologies.

Tobi grew interested in selling snowboards while researching.

He intended to sell snowboards online.

He had no choice but to start his own company.

A small local company offered Tobi a job.

He was interested.

To join the local company, he had to sign paperwork.

Signing the documents required a work permit.

Tobi had no work permit.

He was allowed to stay in Canada while applying for permanent residency.

“I wasn’t illegal in the country, but my state didn’t give me a work permit. I talked to a lawyer and he told me it’s going to take a while until I get a permanent residency.”

Tobi's lawyer told him he cannot get a work visa without permanent residence.

His lawyer said something else intriguing.

Tobi's lawyer advised him to start a business.

Tobi declined this local company's job offer because of this.

Tobi considered opening an internet store with his technical skills.

He sold snowboards online.

“I was thinking of setting up an online store software because I figured that would exist and use it as a way to sell snowboards…make money while snowboarding and hopefully have a good life.”

What brought Tobi and his co-founder together, and how did he support Tobi?

Tobi lived with his girlfriend's parents.

In Ottawa, Tobi encounters Scott Lake.

Scott was a friend of Tobi's girlfriend's family and worked for Tobi's future employer.

Scott and Tobi snowboarded.

Tobi pitched Scott his snowboard sales software idea.

Scott liked the idea.

They planned a business together.

“I was looking after the technology and Scott was dealing with the business side…It was Scott who ended up developing relationships with vendors and doing all the business set-up.”

Issues they ran into when attempting to launch their business online

Neither could afford a long-term lease.

That prompted their online business idea.

They would open an online store.

Tobi anticipated opening an internet store in a week.

Tobi looked for open-source software.

Most existing software was pricey.

Tobi and Scott couldn't afford pricey software.

“In 2004, I was sitting in front of my computer absolutely stunned realising that we hadn’t figured out how to create software for online stores.”

They required software to:

upload snowboard images to the website.

let people search the types of snowboards offered on the website.

accept payments from online customers and deliver them to the merchant.

notify vendors of newly received orders.

No online selling software existed at the time.

Online credit card payments were difficult.

How did they advance the software while keeping expenses down?

Tobi and Scott needed money to start selling snowboards.

Tobi and Scott funded their firm with savings.

“We both put money into the company…I think the capital we had was around CAD 20,000(Canadian Dollars).”

Despite investing their savings.

They minimized costs.

They tried to conserve.

No office rental.

They worked in several coffee shops.

Tobi lived rent-free at his girlfriend's parents.

He wrote software in coffee shops.

How were the software issues handled?

Tobi found no online snowboard sales software.

Two choices remained:

Change your mind and try something else.

Use his programming expertise to produce something that will aid in the expansion of this company.

Tobi knew he was the sole programmer working on such a project from the start.

“I had this realisation that I’m going to be the only programmer who has ever worked on this, so I don’t have to choose something that lots of people know. I can choose just the best tool for the job…There is been this programming language called Ruby which I just absolutely loved ”

Ruby was open-source and only had Japanese documentation.

Latin is the source code.

Tobi used Ruby twice.

He assumed he could pick the tool this time.

Why not build with Ruby?

How did they find their first time operating a business?

Tobi writes applications in Ruby.

He wrote the initial software version in 2.5 months.

Tobi and Scott founded Snowdevil to sell snowboards.

Tobi coded for 16 hours a day.

His lifestyle was unhealthy.

He enjoyed pizza and coke.

“I would never recommend this to anyone, but at the time there was nothing more interesting to me in the world.”

Their initial purchase and encounter with it

Tobi worked in cafes then.

“I was working in a coffee shop at this time and I remember everything about that day…At some time, while I was writing the software, I had to type the email that the software would send to tell me about the order.”

Tobi recalls everything.

He checked the order on his laptop at the coffee shop.

Someone from Pennsylvania had ordered a snowboard.

Tobi walked home and called Scott. Tobi told Scott about their first order.

They loved the order.

How were people made aware of Snowdevil?

2004 was very different.

Tobi and Scott attempted simple website advertising.

Google AdWords was new.

Ad clicks cost 20 cents.

Online snowboard stores were scarce at the time.

Google ads propelled the Snowdevil brand.

Snowdevil prospered.

They swiftly recouped their original investment in the snowboard business thanks to its high profit margin.

Tobi and Scott struggled with inventories.

“Snowboards had really good profit margins…Our biggest problem was keeping inventory and getting it back…We were out of stock all the time.”

Selling snowboards returned their investment and saved them money.

They did not appoint a business manager.

They accomplished everything alone.

Sales dipped in the spring, but something magical happened.

Spring sales plummeted.

They considered stocking different boards.

They naturally wanted to add boards and grow the business.

However, magic occurred.

Tobi coded and improved software while running Snowdevil.

He modified software constantly. He wanted speedier software.

He experimented to make the software more resilient.

Tobi received emails requesting the Snowdevil license.

They intended to create something similar.

“I didn’t stop programming, I was just like Ok now let me try things, let me make it faster and try different approaches…Increasingly I got people sending me emails and asking me If I would like to licence snowdevil to them. People wanted to start something similar.”

Software or skateboards, your choice

Scott and Tobi had to choose a direction in 2005.

They could sell other kinds of boards or focus on the software.

Given the demand, the software was a no-brainer.

Daniel Weinand is invited to join Tobi's business.

Daniel is Tobi's best friend from Germany.

Tobi and Scott chose to use the software.

Tobi and Scott kept the software service.

Tobi called Daniel to invite him to Canada to collaborate.

Scott and Tobi had quit snowboarding by then.

How was Shopify launched, and where did the name come from?

The three chose Shopify.

Named from two words.

First:

Shop

Final part:

Simplify

Shopify

Shopify's crew has always had one goal:

creating software that would make it simple and easy for people to launch online storefronts.

Launched Shopify after raising money for the first time.

Shopify began fundraising in 2005.

First, they borrowed from family and friends.

They needed roughly $200k to run the company efficiently.

$200k was a lot then.

When asked why they needed so much money, Tobi told them to trust him with their goals. The team raised seed money from family and friends.

Shopify.com had a landing page with a demo of what they were building.

In 2006, Shopify had collected about 4,000 email addresses.

Shopify rented an Ottawa office.

“We sent a blast of emails…Some people signed up just to try it out, which was exciting.”

How things developed after Scott left the company

Shopify co-founder Scott Lake left in 2008.

Scott was CEO.

“He(Scott) realized at some point that where the software industry was going, most of the people who were the CEOs were actually the highly technical person on the founding team.”

Scott leaving the company worried Tobi.

Tobi worried about finding a new CEO.

To Tobi:

A great VC will have the network to identify the perfect CEO for your firm.

Tobi started visiting Silicon Valley to meet with venture capitalists to recruit a CEO.

Initially visiting Silicon Valley

Tobi came to Silicon Valley to start a 20-person company.

This company creates eCommerce store software.

Tobi never wanted a big corporation. He desired a fulfilling existence.

“I stayed in a hostel in the Bay Area. I had one roommate who was also a computer programmer. I bought a bicycle on Craiglist. I was there for a week, but ended up staying two and a half weeks.”

Tobi arrived unprepared.

When venture capitalists asked him business questions, he could answer only a few.

Tobi didn't understand the terminology used in VC meetings.

He wrote the terms down and looked them up.

Some were intrigued even though he couldn't answer all their questions.

“I ended up getting the kind of term sheets people dream about…All the offers were conditional on moving our company to Silicon Valley.”

Tobi returned to Canada.

He wanted to consult his team before deciding. Shopify had five employees at the time.

2008.

A global recession greeted Tobi in Canada. The recession hurt the market.

His term sheets were useless.

The economic downturn in the world provided Shopify with a fantastic opportunity.

The global recession caused significant job losses.

Fired employees had several ideas.

They wanted online stores.

Entrepreneurship was desired. They wanted to quit work.

People took risks and tried new things during the global slump.

Shopify subscribers skyrocketed during the recession.

“In 2009, the company reached neutral cash flow for the first time…We were in a position to think about long-term investments, such as infrastructure projects.”

Then, Tobi Lutke became CEO.

How did Tobi perform as the company's CEO?

“I wasn’t good. My team was very patient with me, but I had a lot to learn…It’s a very subtle job.”

2009–2010.

Tobi limited the company's potential.

He deliberately restrained company growth.

Tobi had one costly problem:

Whether Shopify is a venture or a lifestyle business.

The company's annual revenue approached $1 million.

Tobi battled with the firm and himself despite good revenue.

His wife was supportive, but the responsibility was crushing him.

“It’s a crushing responsibility…People had families and kids…I just couldn’t believe what was going on…My father-in-law gave me money to cover the payroll and it was his life-saving.”

Throughout this trip, everyone supported Tobi.

They believed it.

$7 million in investment raised

Tobi couldn't decide if this was a lifestyle or a business.

Shopify struggled with marketing then.

Later, Tobi tried 5 marketing methods.

He told himself that if any marketing method greatly increased their growth, he would call it a venture, otherwise a lifestyle.

The Shopify crew brainstormed and voted on marketing concepts.

Then they tested each one.

“Every single idea worked…We did Adwords, published a book on the concept, sponsored a podcast and all the ones we tracked worked.”

To Silicon Valley once more

Shopify's marketing ideas had worked.

Tobi returned to Silicon Valley to pitch investors.

He raised $7 million, valuing Shopify at $25 million.

All investors had board seats.

“I find it very helpful…I always had a fantastic relationship with everyone who’s invested in my company…I told them straight that I am not going to pretend I know things, I want you to help me.”

Tobi developed skills via running Shopify.

Shopify had 20 employees.

Leaving his wife's parents' home

Tobi moved out of his wife's parents' home in 2014.

Tobi had a child.

Shopify had 80,000 customers and 300 staff in 2013.

Public offering in 2015

Shopify went public in 2015.

Shopify powers 4.1 million e-Commerce sites.

Shopify stores are 65% US-based.

It is currently valued at $48 billion.

Akshad Singi

3 years ago

Four obnoxious one-minute habits that help me save more than 30 hours each week

These four, when combined, destroy procrastination.

You're not short on time. You waste it on busywork.

You'll accept this eventually.

In 2022, the average user spent 2.5 hours a day on social media.

By 2020, 6 billion hours of video were watched each month by Netflix's customers, who used the service an average of 3.2 hours per day.

When we see these numbers, we think, "Wow! People squander so much time," as though we don't contribute to them. We do. These numbers are yours, and mine too.

We don't lack time; we just waste it. Once you realize this, you can change your habits to save time. This article explains how. If you adopt ALL 4 of these simple behaviors, you'll see amazing benefits.

Time-blocking

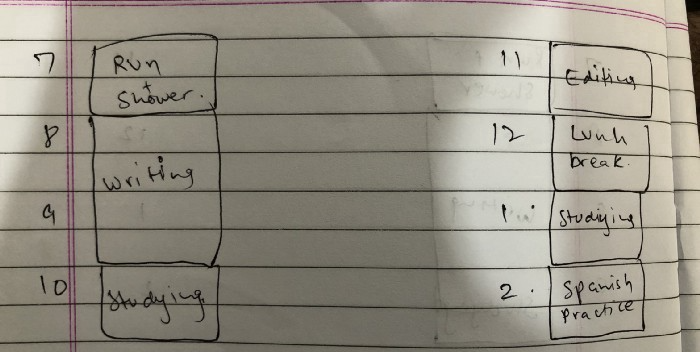

Cal Newport's time-blocking trick takes a minute but improves your day's clarity.

Divide the next day into 30-minute (or 5-minute, if you're Elon Musk) segments and assign a task to each one, as shown above.

Here's why:

The procrastination that results from attempting to determine when to begin working is eliminated. Procrastination is a given if you choose when to begin working in real-time. Even if you may assume you'll start working in five minutes, it won't take you long to realize that five minutes have turned into an hour. But if you've already determined to start working at 2:00 the next day, your odds of procrastinating are greatly decreased, if not eliminated altogether.

You'll also see how much time a day holds once you plan it out on paper and assign tasks to each hour. Doing this daily will permanently eliminate the lack-of-time mindset.

5-4-3-2-1: Eat the frog for breakfast!

“If it’s your job to eat a frog, it’s best to do it first thing in the morning. And If it’s your job to eat two frogs, it’s best to eat the biggest one first.”

Eating the frog means accomplishing the day's most difficult chore. It's better to schedule it first thing in the morning when time-blocking the night before. Why?

The day's most difficult task is also the one that causes the most postponement. Because of the stress it causes, the later you schedule it, the more time you risk wasting by procrastinating.

However, if you do it right away in the morning, you'll feel good all day. That is why you schedule it for the morning.

Mel Robbins' 5-second rule can help. Count backward, 5-4-3-2-1, and force yourself to start when you reach 1. If you feel the urge to act on a goal, you must move within 5 seconds or your brain will kill it. If you're scheduled to eat your frog at 9, eat it at 8:59. Start working.

Micro-visualisation

You've heard of visualizing to enhance the future. Visualizing a bright future won't do much if you're not prepared to focus on the now and develop the necessary habits. Alexander said:

People don’t decide their futures. They decide their habits and their habits decide their future.

Every morning, I visualize the day's schedule. It looks like this:

“I’ll start writing an article at 7:30 AM. Then, I’ll get dressed up and reach the medicine outpatient department by 9:30 AM. After my duty is over, I’ll have lunch at 2 PM, followed by a nap at 3 PM. Then, I’ll go to the gym at 4…”

etc.

This reinforces the day you planned the night before. This makes following your plan easy.

Set the timer.

The timer is the best productivity app on the iPhone. It is incredible for increasing productivity.

Set a timer for an hour or 40 minutes before starting work. Your call. I don't believe in techniques like the Pomodoro because I can focus for varied amounts of time depending on the time of day, how fatigued I am, and how cognitively demanding the activity is.

I work with a timer. A timer keeps you focused and prevents distractions. Your mind stays concentrated because of the timer. Timers generate accountability.

If I need to pee, I pause the timer and resume it when I sit back down. Same goes for bottle refills. To use Twitter, I must pause the timer. This creates accountability and keeps the work focused.

Connecting everything

If you do all 4, you won't be disappointed. Here's how:

Plan out your day's schedule the night before.

Next, envision in your mind's eye the same timetable in the morning.

Count 5-4-3-2-1 aloud when it's time to work, then eat the frog. Eat the largest frog first thing in the morning.

Then set a timer to ensure that you remain focused on the task at hand.

Rita McGrath

3 years ago

Flywheels and Funnels

Traditional sales organizations used the concept of a sales “funnel” to describe the process through which potential customers move, ending up with sales at the end. Winners today have abandoned that way of thinking in favor of building flywheels — business models in which every element reinforces every other.

Ah, the marketing funnel…

Students in business school marketing classes learn that prospective clients go through a predictable set of experiences. It looks like this:

Understanding the funnel helps evaluate sales success indicators. Gail Goodman, former CEO of small business email marketing provider Constant Contact, said managing the pipeline was key to escaping the long, slow SaaS ramp of death.

The funnel concept was appealing. To predict how well your business will do, measure how many potential clients are aware of it (awareness) and how many take each next step. If 1,000 people heard about your offering and 10% showed interest, you'd have 100 at that point. If 50% of these people made buyer-like noises, you'd know how many were likely to buy, and so on. It helped model buying trends.
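The funnel arithmetic above can be sketched in a few lines of Python. This is only an illustration of the 1,000 → 10% → 50% example; the function name `funnel_counts` and the stage label "intent" (standing in for "buyer-like noises") are assumptions made for this sketch, not terms from any marketing standard.

```python
def funnel_counts(entrants, conversion_rates):
    """Return how many prospects remain at each funnel stage.

    entrants: number of people at the top of the funnel (awareness).
    conversion_rates: fraction of the previous stage that moves on,
    one entry per subsequent stage.
    """
    counts = [entrants]
    for rate in conversion_rates:
        # Each stage keeps only a fraction of the stage before it.
        counts.append(round(counts[-1] * rate))
    return counts


# Stage labels are illustrative; "intent" is a hypothetical name.
stages = ["awareness", "interest", "intent"]
counts = funnel_counts(1000, [0.10, 0.50])

for stage, count in zip(stages, counts):
    print(f"{stage}: {count}")
```

Changing a single rate shows why managers watched each stage so closely: every stage below it shrinks or grows in proportion.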

TV, magazine, and radio advertising are pricey for B2C enterprises. Traditional B2B marketing involved armies of sales reps, which was expensive and a barrier to entry.

Cracks in the funnel model

Digital has exposed the funnel's limitations. HubSpot was born at a time when buyers and sellers had huge knowledge asymmetries, according to co-founder Brian Halligan. Those selling a product could use the buyer's lack of information to become a trusted partner.

As the world went digital, getting information and comparing offerings became faster, easier, and cheaper. Buyers didn't need a seller to move through a funnel. Interactions replaced transactions, and the relationship didn't end with a sale.

Instead, buyers and sellers interacted in a constant flow. In many modern models, the sale is midway through the process (particularly true with subscription and software-as-a-service models). Example:

You're creating a winding journey with many touch points (and lots of opportunities for customers to get lost), not a funnel.

From winding journey to flywheel

Beyond this revised view of an interactive customer journey, a company can create what Jim Collins famously called a flywheel. Imagine turning a heavy disc on its axle. The first few turns take a lot of effort for a small response. As the disc gains speed, the same effort yields faster turns. Over time, the flywheel gains momentum and turns without your help.

Modern digital organizations have created flywheel business models, in which any additional force multiplies throughout the business. The flywheel becomes a force multiplier, according to Collins.

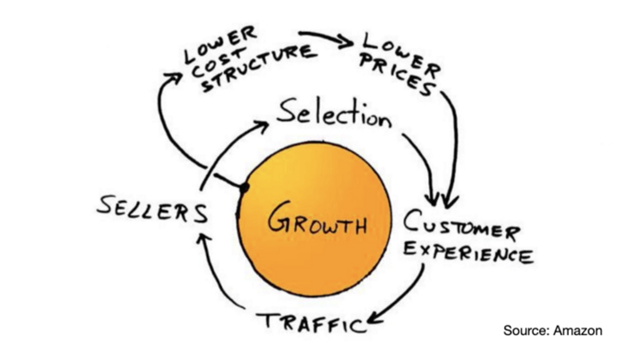

Amazon is a famous flywheel example. Collins explained the concept to Amazon CEO Jeff Bezos at a corporate retreat in 2001. In his book The Everything Store, Brad Stone describes how Bezos immediately understood Amazon's levers.

The result (drawn on a napkin):

Low prices and a large selection of products attracted customers, while a focus on customer service kept them coming back, increasing traffic. Third-party sellers then increased selection. A low-cost structure supports the low-price commitment. It's brilliant! Every turn of the wheel creates acceleration.

Where from here?

Flywheel over sales funnel! Consider these business terms.