
Jamie Ducharme

3 years ago

How monkeypox spreads (and doesn't spread)

Monkeypox was rare until recently. In 2005, researchers described a cluster of six monkeypox cases in the Republic of Congo as "the longest reported chain to date."

That's changed. This year, over 25,000 monkeypox cases have been reported in 83 countries, indicating widespread human-to-human transmission.

How does monkeypox spread? Research on monkeypox transmission is ongoing, and findings may change. But here's what the science says so far.

Most cases used to be linked to animals.

According to the WHO, monkeypox was first diagnosed in humans in 1970, in an infant in the Democratic Republic of the Congo (DRC). After that, cases were infrequent and often tied to animals. In 2003, 47 Americans contracted monkeypox from pet prairie dogs.

In 2017, Nigeria saw a significant outbreak. NPR reported that doctors were diagnosing young men who had genital sores but no exposure to animals. Nigerian researchers raised the possibility of sexual transmission in a 2019 study, but the theory didn't catch on. “People tend to cling on to tradition, and the idea is that monkeypox is transmitted from animals to humans,” says study co-author Dr. Dimie Ogoina.

Most monkeypox cases are sex-related.

Human-to-human transmission of monkeypox occurs, and sexual activity plays a role.

Joseph Osmundson, a clinical assistant professor of biology at NYU, says most transmission occurs in queer and gay sexual networks through sexual or personal contact.

Monkeypox spreads through skin-to-skin contact, especially contact with its blister-like rash, explains Ogoina. Researchers are exploring whether people can be contagious without symptoms, but according to the CDC, people with monkeypox remain infectious until their rash has healed and a fresh layer of skin has formed.

A July study in the New England Journal of Medicine reported that of more than 500 monkeypox cases in 16 countries as of June, 95% were linked to sexual activity and 98% were among men who have sex with men. In July, WHO Director-General Tedros Adhanom Ghebreyesus urged men to temporarily limit their number of male partners.

Is monkeypox a sexually transmitted infection (STI)?

Monkeypox can spread through any skin-to-skin contact, not just sex. Dr. Roy Gulick, chief of infectious diseases at Weill Cornell Medicine and NewYork-Presbyterian, says monkeypox is not a "typical" STI. The CDC does not consider monkeypox an STI.

Most cases in the current outbreak are tied to male sexual behavior, but Osmundson thinks the virus might also spread on sports teams, in spas, or in college dorms.

Can you get monkeypox from surfaces?

Monkeypox can also spread through contact with contaminated clothing or bedding. According to one study, a U.K. health care worker caught monkeypox in 2018 after handling an ill patient's bedding.

Angela Rasmussen, a virologist at the University of Saskatchewan in Canada, says "incidental" contact rarely spreads the virus. “You need enough virus exposure to get infected,” she says. Transmission is conceivable after sharing a bed or towel with an infectious person, but less likely after touching a doorknob, she says.

Dr. Müge Çevik, a clinical lecturer in infectious diseases at the University of St. Andrews in Scotland, says there is a "spectrum" of risk connected with monkeypox. "Every exposure isn't equal," she explains. "People must know where to be cautious. Reducing [sexual] partners may be more useful than cleaning coffee shop seats."

Is monkeypox airborne?

Monkeypox can also spread through exposure to an infectious person's respiratory secretions, but the WHO says this requires close, prolonged face-to-face contact. CDC researchers are still examining how often this happens.

Under controlled laboratory conditions, scientists have shown that monkeypox can spread via aerosols, or tiny airborne particles. But there's no clear evidence that this is happening in the real world, Rasmussen adds. “This is expanding predominantly in communities of males who have sex with men, which suggests skin-to-skin contact,” she explains. If airborne transmission were common, she argues, we'd see more cases in other demographic groups.

In the shadow of COVID-19, people are understandably worried about airborne monkeypox. But Rasmussen notes that these are different viruses, with different epidemiology.

Can kids get monkeypox?

More than 80 children have contracted the virus so far, mainly through household transmission. The CDC says pregnant women can also pass the virus to their fetus.

In the 1970s, monkeypox mostly affected children, but by the 2010s it was more common in adults, according to a February study. The study's authors point out that routine smallpox vaccination (which also protects against monkeypox) stopped once smallpox was eradicated. People born after vaccination ended decades ago were never immunized, so more people are vulnerable now.

Cases in children have raised concerns that schools and day cares could become monkeypox hotspots. Ogoina notes that this hasn't happened in Nigeria's outbreaks, which is encouraging. He says, "I'm not sure if we should worry," but adds that we must be careful and seek evidence.

Sara_Mednick

3 years ago

As a scientist, I oppose biohacking

Understanding your own energy depletion and restoration is how to truly optimize

"Hack" has meant many unflattering things over the centuries. In the 1800s, a hack was a scrawny horse used to haul goods.

Later, the word came to describe the crude chop of a butcher's cleaver or a murderer's ax. The 1980s programming boom distinguished elegant code from "hacks." Both got you to your goal, but the latter made any programmer cringe and mutter about changing the code. From this emerged the hacker trope, the friendless anti-villain living in a murky hovel lit by the computer monitor, eating junk food and breaking into databases to highlight security system failures or steal hotdog money.

Now, start-a-billion-dollar-business-from-your-garage types have shifted their sights from app development to DIY biology, coining the term "bio-hack." The term is now a required keyword and meta tag for every fitness-related podcast, book, conference, app, or device.

Bio-hacking involves bypassing your body and mind's security systems to achieve a goal. Many biohackers' initial goals were reasonable, like lowering blood pressure and weight. Encouraged by their own progress, self-determination, and seemingly exquisite control of their biology, they aimed to outsmart aging and death to live 180 to 1000 years (summarized well in this vox.com article).

With this grandiose north star, the hunt for novel supplements and genetic engineering began.

Companies selling do-it-yourself biological manipulations cite lab studies in mice as proof of their safety and success in reversing age-related diseases or promoting longevity in humans (the goal changes depending on whether a company is talking to the federal government or private donors).

The FDA is slower than science, they say. Why not alter your biochemistry by buying pills online, editing your DNA with a CRISPR kit, or using a sauna delivered to your home? How about a microchip or electrical stimulator?

What could go wrong?

I'm not the neo-police, making citizen's arrests every time someone introduces a new plumbing gadget or extrapolates from animal research on resveratrol or catechins that we should drink more red wine or eat more chocolate. As a scientist who's spent her career asking, "Can we get better?" I've come to view bio-hacking as misguided, profit-driven, and counterproductive to its followers' goals.

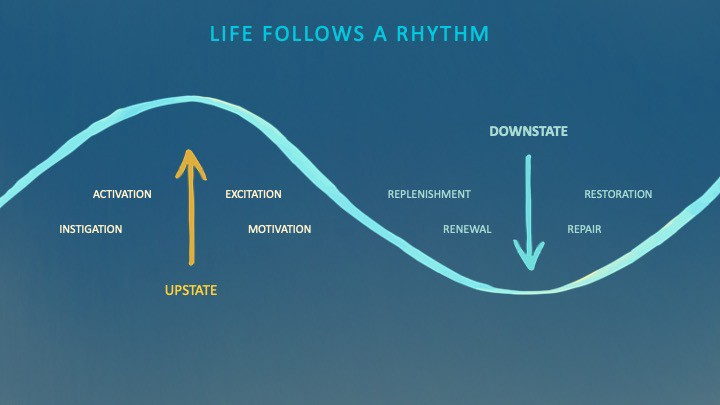

We're creatures of nature. Despite all the new gadgets and bio-hacks, we still use Roman plumbing technology, and the best way to stay fit, sharp, and happy is to follow a recipe passed down since the beginning of time. Bacteria, plants, and all natural beings are rhythmic, with alternating periods of high activity and dormancy, whether measured in seconds, hours, days, or seasons. Nature repeats successful patterns.

During the Upstate, every cell in your body is naturally primed and pumped full of glycogen and ATP (your cells' energy currencies), as well as cortisol, which supports your muscles, heart, metabolism, cognitive prowess, emotional regulation, and general "get 'er done" attitude. This big energy release depletes your batteries and requires the Downstate, when your subsystems recharge at the cellular level.

Downstates are when you give your heart a break from pumping nutrient-rich blood through your body; when you give your metabolism a break from inflammation, oxidative stress, and sympathetic arousal caused by eating fast food — or just eating too fast; or when you give your mind a chance to wander, think bigger thoughts, and come up with new creative solutions. When you're responding to notifications, emails, and fires, you can't relax.

Downstates aren't just for consistently recharging your battery. By spending time in the Downstate, your body and brain get extra energy and nutrients, allowing you to grow smarter, faster, stronger, and more self-regulated. This state supports half-marathon training, exam prep, and meditation. As we age, spending more time in the Downstate is key to mental and physical health, well-being, and longevity.

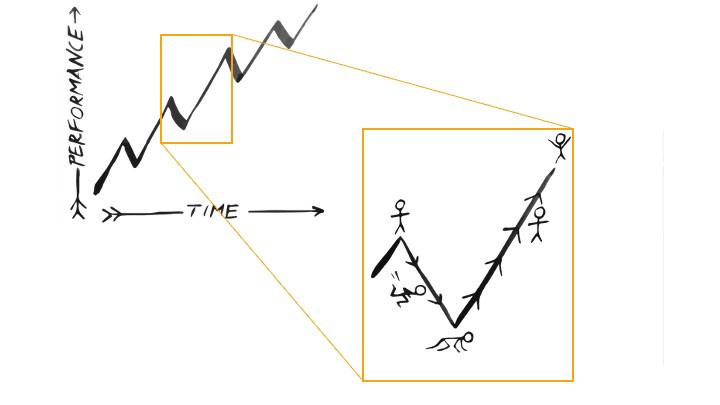

When you prioritize energy-demanding activities during Upstate periods and energy-replenishing activities during Downstate periods, all your subsystems, including cardiovascular, metabolic, muscular, cognitive, and emotional, hum along at their optimal settings. When you synchronize the Upstates and Downstates of these individual rhythms, their functioning improves. A hard workout causes autonomic stress, which triggers Downstate recovery.

By choosing the right timing and type of exercise during the day, you can ensure a deeper recovery and greater readiness for the next workout by working with your natural rhythms and strengthening your autonomic and sleep Downstates.

Morning cardio workouts increase deep sleep more than afternoon workouts do. The timing and type of your meals help determine when your body releases the sleep hormone melatonin, ushering in sleep.

Rhythm isn't a hack. It's not a way to cheat the system or the boss. Nature has honed its optimization wisdom over trillions of days and nights. Stop looking for quick fixes. You're a whole system made of smaller subsystems that must work together to function well. No one pill or subsystem will make it all work. Understanding and coordinating your rhythms is free, easy, and only benefits you.

Dr. Sara C. Mednick is a cognitive neuroscientist at UC Irvine and author of The Power of the Downstate (HachetteGO)

Daniel Clery

3 years ago

Twisted device investigates fusion alternatives

German stellarator revamped to run longer, hotter, compete with tokamaks

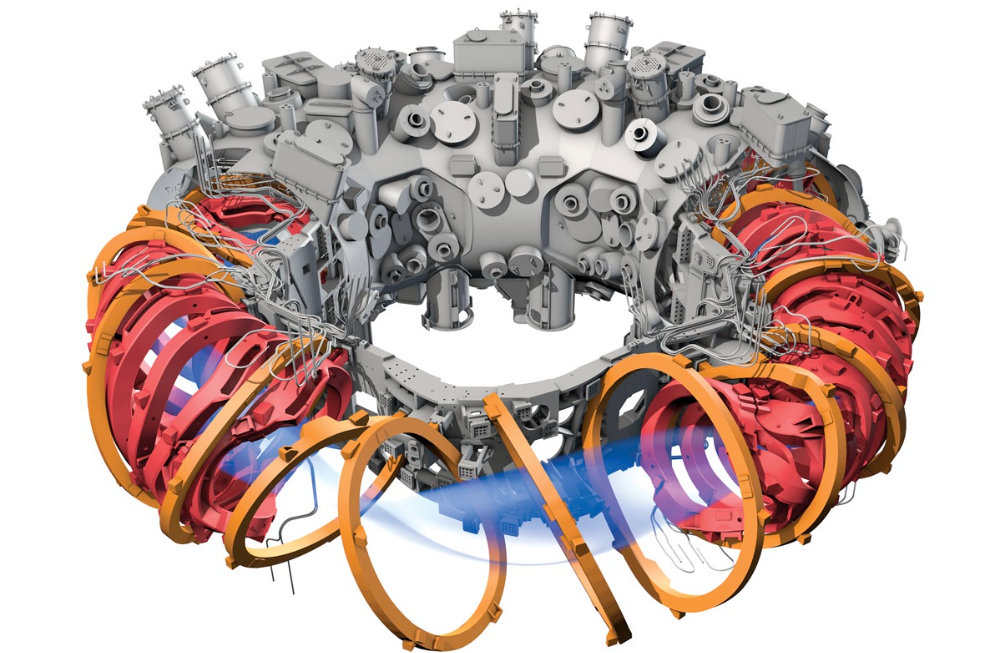

Tokamaks have dominated the search for fusion energy for decades. Just as ITER, the world's largest and most expensive tokamak, nears completion in southern France, a smaller, twistier testbed will start up in Germany.

If the 16-meter-wide stellarator can match or outperform similar-size tokamaks, fusion experts may rethink the field's future. Stellarators can keep their superhot gases stable enough to fuse nuclei and produce energy, and in principle they can run indefinitely, whereas tokamaks must pause periodically to reset their magnet coils.

The €1 billion German machine, Wendelstein 7-X (W7-X), is already achieving "tokamak-like performance" in short runs at keeping particles and heat from escaping the superhot gas, says plasma physicist David Gates. If W7-X can sustain that over long runs, "it will be ahead," he says. "Stellarators are back in the game," says Eindhoven University of Technology theorist Josefine Proll. A few startup companies, including one founded by Gates, who is leaving Princeton Plasma Physics Laboratory, are developing their own stellarators.

W7-X has been running at the Max Planck Institute for Plasma Physics (IPP) in Greifswald, Germany, since 2015, albeit only at low power and for brief runs. W7-X's developers took it down and replaced all inner walls and fittings with water-cooled equivalents, allowing for longer, hotter runs. The team reported at a W7-X board meeting last week that the revised plasma vessel has no leaks. It's expected to restart later this month to show if it can get plasma to fusion-igniting conditions.

Wendelstein 7-X's water-cooled inner surface allows for longer runs.

HOSAN/IPP

Both stellarators and tokamaks use magnetic cages to hold gases hot enough to melt metal. Microwaves or particle beams supply the heat. The extreme temperatures turn the gas into a plasma, a seething mix of separated nuclei and electrons, and cause the nuclei to fuse, releasing energy. A fusion power plant would use deuterium and tritium, which fuse most readily. Research machines like W7-X, which aren't designed to generate energy, avoid tritium and use hydrogen or deuterium instead.

Tokamaks and stellarators both use electromagnetic coils to create ring-shaped magnetic fields that confine the plasma. But the field is stronger near the ring's central hole, and this imbalance causes the plasma to drift toward the reactor's wall.

Tokamaks counter the drift by driving a current through the plasma around the ring. That current creates its own magnetic field, which twists the overall field and stabilizes the ionized plasma. Stellarators twist the field with their magnetic coils instead of with the plasma. Once plasma physicists had powerful enough supercomputers, they could optimize the shapes of stellarator magnets to improve plasma confinement.

W7-X is the first large, optimized stellarator, with 50 superconducting coils weighing 6 tonnes each. Its construction began in the mid-1990s and cost roughly twice the €550 million originally budgeted.

The wait hasn't disappointed researchers. W7-X director Thomas Klinger says, "The machine operated immediately. It's a friendly machine. It did everything we asked." Tokamaks are prone to "instabilities" (plasma bulging or wobbling) or violent "disruptions," sometimes linked to a sudden halt in the plasma current. IPP theorist Sophia Henneberg notes that stellarators don't rely on a plasma current, which "removes an entire branch" of instabilities.

In early stellarators, the magnetic field geometry caused slower particles to follow banana-shaped orbits until they collided with other particles and leaked energy. Gates says W7-X's success in suppressing this effect shows that its optimization works.

W7-X also loses heat through various forms of turbulence, which push particles toward the wall. Only recently have theorists learned to simulate turbulence well. W7-X's upcoming campaign will test those simulations and techniques for fighting turbulence.

A stellarator can run continuously, unlike a tokamak, which operates in pulses. W7-X has run for 100 seconds, long by tokamak standards, but only at low power. Its uncooled microwave and particle heating systems could deliver just 11.5 megawatts. The upgrade doubles the heating power. High temperatures, high plasma density, and long runs will test stellarators' potential for fusion power. Klinger wants to heat ions to 50 million degrees Celsius for 100 seconds. That would make W7-X "a world-class machine," he says. The team will then push toward 30-minute runs. "We'll move step-by-step," he says.
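As a rough illustration of why longer, hotter runs called for water-cooled walls, the sketch below multiplies heating power by run time (E = P × t). The 11.5-megawatt figure and the doubling come from the article; pairing the doubled power with a full 30-minute run is a hypothetical upper bound chosen for illustration, not a stated operating plan, and the helper name is made up.

```python
def heating_energy_gj(power_mw: float, duration_s: float) -> float:
    """Energy delivered by the heating systems, E = P * t.

    power_mw * duration_s gives megajoules; dividing by 1000 converts to gigajoules.
    """
    return power_mw * duration_s / 1000.0


old_power_mw = 11.5               # uncooled heating systems (figure from the article)
new_power_mw = 2 * old_power_mw   # "the upgrade doubles the heating power"

# Hypothetical comparison: a 100-second run at the old power versus a
# 30-minute (1800-second) run at the doubled power.
print(heating_energy_gj(old_power_mw, 100))    # 1.15
print(heating_energy_gj(new_power_mw, 1800))   # 41.4
```

In steady operation, heat put into the plasma has to leave it again, largely onto the plasma-facing surfaces, so under these assumptions the long run would deposit about 36 times as much energy as the short one.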

W7-X's success has inspired venture capitalists to fund entrepreneurs building commercial stellarators. But startups must find simpler ways to make the magnets.

Princeton Stellarators, founded by Gates and colleagues this year, has $3 million to build a prototype reactor without W7-X's twisted magnet coils. Instead, it will use a mosaic of 1000 square high-temperature superconducting (HTS) coils on the outside of the plasma vessel. By adjusting each coil's magnetic field, operators can change the shape of the applied field. "It moves coil complexity to the control system," Gates says. The company's first goal is a reactor that fuses cheap, abundant deuterium to produce neutrons for making radioisotopes. If that succeeds, the company will build another reactor.

Renaissance Fusion, based in Grenoble, France, has raised €16 million and plans to coat segments of its plasma vessel in HTS. Engineers will then use a laser to burn away parts of the superconductor, leaving tracks that form the magnet coils. The company wants to build a meter-long test segment within 2 years and a full prototype by 2027.

Type One Energy in Madison, Wisconsin, won DOE funding to bend HTS cables for stellarator magnets. The company used computer-controlled etching equipment to carve twisting grooves in metal, into which the cables are wound. David Anderson of the University of Wisconsin, Madison, says advanced manufacturing technology is what makes such stellarators possible.

Anderson says W7-X's next phase will give stellarator research a boost. “Half-hour discharges are steady-state,” he says. “This is a big deal.”


Amelia Winger-Bearskin

3 years ago

Hate NFTs? I must break some awful news to you...

If you think NFTs are awful, check out the art market.

The fervor around NFTs has subsided in recent months due to the crypto market crash and the media's short attention span. They were all anyone could talk about earlier this spring. Last semester, when passions were high and field luminaries were discussing "slurp juices," I asked my students and students from over 20 other universities what they thought of NFTs.

According to many, NFTs were either tasteless pyramid schemes or a new way for artists to make money. NFTs contributed to the climate crisis and harmed the environment, but so did air travel, fast fashion, and smartphones. Some students complained that NFTs were cheap, tasteless, algorithmically generated schlock, but others asked how this was different from other art.

I'm not sure what I expected, but the intensity of students' reactions surprised me. They had strong, emotional opinions about a technology I'd always considered administrative. NFTs address ownership and accounting, like most crypto/blockchain projects.


The Fairness Question

Fairness, a deflating moral currency, was the general sentiment (the less of it in circulation, the more ardently we clamor for it). These students, almost all of whom are artists, complained about the mismatch between the quality of the work in some notable NFT collections and the excessive amounts these items were fetching on the market. They can sketch a Bored Ape or Lazy Lion in their sleep. Why should they buy ramen with student loans while certain swindlers get rich?

I understand my students' frustration. Art markets are unfair. Like any market, they can be irrational, arbitrary, and governed by chance and circumstance, and they come with shenanigans all their own.


Some experts estimate that more than 50% of artworks in circulation are fake. Sincere art collectors and institutions are upset by the prevalence of fakes on the market. But not everyone is. Wealthy people and companies use art as an investment. They can use cultural institutions like museums and galleries to increase the value of inherited art collections. People sometimes buy artworks and use family ties or connections to museums or other cultural tastemakers to hype the work in their collection, driving up the price and allowing them to sell for a profit. Money launderers can disguise capital flows by exploiting market whims, hype, and fluctuating asset prices.

Almost every mainstream critique leveled against NFTs applies just as easily to art markets.

Art has always been this way. Edward Kienholz's 1989 print series satirized art markets. He stamped 395 otherwise identical sheets of paper with prices from $1 to $395, and each was initially priced at the amount stamped on it. Kienholz was poking fun at a strange feature of art markets: once the last print in a series sells for $395, all the earlier prints are worth at least that much. Every print in the series is valued at the highest price paid. I don't know what a Kienholz print sells for today (inquire with the gallery), but it's more than $395.
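To put numbers on Kienholz's joke, here is a small Python sketch. It assumes every print sold once at the price stamped on it and that each print is afterward valued at the series' top price; `series_totals` is an illustrative name, not anything from the artist or gallery.

```python
def series_totals(n_prints: int) -> tuple[int, int]:
    """Compare a stamped-price series' initial takings with its marked-up value.

    Print k is assumed to have sold at $k. Once the top-priced print sells,
    every print is valued at that top price.
    """
    if n_prints < 1:
        raise ValueError("a series needs at least one print")
    prices = range(1, n_prints + 1)        # stamped prices $1 .. $n
    initial_total = sum(prices)            # total paid at the stamped prices
    marked_total = max(prices) * n_prints  # every print valued at the top price
    return initial_total, marked_total


initial, marked = series_totals(395)
print(initial)  # 78210
print(marked)   # 156025
```

Under these assumptions, the series that first brought in $78,210 is "worth" at least $156,025 the moment the last print sells, roughly double, without any single buyer paying more than $395.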

I love Lee Lozano's 1969 "Real Money Piece." Lozano put cash in various denominations in a jar in her apartment and offered it to visitors. She wrote, "Offer guests coffee, diet pepsi, bourbon, half-and-half, ice water, grass, and money," and "Offer real money as candy."

Lee Lozano kept track of who she gave money to, how much they took, if any, and how they reacted to the offer of free money without explanation. The reactions varied: some found it funny, others found it strange, and others didn't care. Her notes include entries like these:

Apr 17 Keith Sonnier refused, later screws lid very tightly back on. Apr 27 Kaltenbach takes all the money out of the jar when I offer it, examines all the money & puts it all back in jar. Says he doesn’t need money now. Apr 28 David Parson refused, laughing. May 1 Warren C. Ingersoll refused. He got very upset about my “attitude towards money.” May 4 Keith Sonnier refused, but said he would take money if he needed it which he might in the near future. May 7 Dick Anderson barely glances at the money when I stick it under his nose and says “Oh no thanks, I intend to earn it on my own.” May 8 Billy Bryant Copley didn’t take any but then it was sort of spoiled because I had told him about this piece on the phone & he had time to think about it he said.

Smart Contracts (smart as in fair, not smart as in Blockchain)

Cornell University's Cheryl Finley has done a lot of research on secondary art markets. I first learned about her research when I met her at the University of Florida's Harn Museum, where she spoke about smart contracts (smart as in fair, not smart as in Blockchain) and new protocols that could help artists who are often left out of the economic benefits of their own work, including women and women of color.

Her talk included findings from her ArtNet op-ed with Lauren van Haaften-Schick, Christian Reeder, and Amy Whitaker.


The ArtNet article "The Recent Sale of Amy Sherald's 'Welfare Queen' Symbolizes the Urgent Need for Resale Royalties and Economic Equity for Artists" discussed Sherald's 2012 portrait of a regal woman in a purple dress wearing a sparkling crown and an elegant set of pearls against a vibrant red background.

Amy Sherald sold "Welfare Queen" to Princeton professor Imani Perry. Sherald agreed to a payment plan to accommodate Perry's budget.

Amy Sherald rose to fame for her 2016 portrait of Michelle Obama and her full-length portrait of Breonna Taylor, one of the most famous works of the past decade.

As is common, Sherald's rising star drove up the price of her earlier works. Perry's "Welfare Queen" sold for $3.9 million in 2021.

Imani Perry's early investment paid off big-time. Amy Sherald, whose work directly increased the painting's value and who was on an artist's shoestring budget when she agreed to sell "Welfare Queen" in 2012, did not see any of the 2021 auction money. Perry and the auction house got that money.
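The resale royalties named in the ArtNet op-ed's title are, at bottom, a simple split. The Python sketch below shows what such a clause could have paid on the $3.9 million sale. The 5% rate is a hypothetical value chosen only for illustration, not a figure from the article or any specific law, and auction-house fees and taxes are ignored.

```python
from decimal import Decimal, ROUND_HALF_UP

# Hypothetical royalty rate for illustration only; not a figure from the article.
ASSUMED_ROYALTY_RATE = Decimal("0.05")


def resale_split(sale_price: Decimal, royalty_rate: Decimal) -> dict[str, Decimal]:
    """Split a resale between the artist (royalty) and the seller.

    Auction-house fees and taxes are ignored to keep the sketch minimal.
    """
    if sale_price < 0:
        raise ValueError("sale_price must be non-negative")
    if not Decimal("0") <= royalty_rate <= Decimal("1"):
        raise ValueError("royalty_rate must be between 0 and 1")
    # Round the artist's share to whole cents.
    artist = (sale_price * royalty_rate).quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
    return {"artist": artist, "seller": sale_price - artist}


split = resale_split(Decimal("3900000"), ASSUMED_ROYALTY_RATE)
print(split["artist"])   # 195000.00
print(split["seller"])   # 3705000.00
```

Decimal arithmetic is used instead of floats so that the two shares always add back up to the exact sale price.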

Sherald sold her Breonna Taylor portrait to the Smithsonian and Louisville's Speed Art Museum to fund a $1 million scholarship. This is a great example of what an artist can do for the community if they can amass wealth through their work.

NFTs haven't solved all of the art market's problems — fakes, money laundering, market manipulation — but they didn't create them. Blockchain and NFTs are credited with making these issues more transparent. More ideas emerge daily about what a smart contract should do for artists.

NFTs are a copyright solution. They allow us to hack formal contractual relationships outside a legal system that doesn't serve our community.

Amy Sherald shows the good smart contracts can do (as in, well-considered, self-determined contracts, not necessarily blockchain contracts.) Giving back to our community, deciding where and how our work can be sold or displayed, and ensuring artists share in the equity of our work and the economy our labor creates.

Logan Rane

2 years ago

I asked ChatGPT for the top nonfiction books. Here's what it suggested

You have to use it.

ChatGPT is a revolution.

Everyone on social media is discussing it and how it will affect the future.

True.

I've been using ChatGPT for a few days, and it's a rare kind of revolution. It's amazing and will only improve.

I asked ChatGPT about the best nonfiction books. Here's what it suggested, though the best picks depend on your interests.

The Immortal Life of Henrietta Lacks

by Rebecca Skloot

Science, Biography

In The Immortal Life of Henrietta Lacks, an impoverished tobacco farmer dies of cervical cancer, but her cells live on. Her cell line helped scientists develop the polio vaccine and study other diseases.

Through fascinating and surprising research, Rebecca Skloot uncovers the story of Henrietta and her family, how the medical industry exploited Black Americans, and how Henrietta's cells became immortal.

You ought to read it.

if you want to discover more about the history of medicine.

if you want to discover more about American history.

Bad Blood: Secrets and Lies in a Silicon Valley Startup

by John Carreyrou

Tech, Bio

Bad Blood tells the terrifying story of how a Silicon Valley tech startup's blood-testing device placed millions of lives at risk.

John Carreyrou, a Pulitzer Prize-winning journalist, wrote this book.

Theranos and its wunderkind CEO, Elizabeth Holmes, rose to fame swiftly and then collapsed.

You ought to read it.

if you are a start-up employee.

if you work in medicine.

The Power of Now: A Guide to Spiritual Enlightenment

by Eckhart Tolle

Self-improvement, Spirituality

The Power of Now shows how to stop suffering and find inner peace by focusing on the present moment instead of being ruled by your thoughts.

The book also helps you get rid of your ego, which tries to control your ideas and actions.

If you do this, you may embrace the present, reduce discomfort, strengthen relationships, and live a better life.

You ought to read it.

if you're looking for serenity and illumination.

if you believe you are ruining your life and want to stop.

if you're not happy.

The 7 Habits of Highly Effective People

by Stephen R. Covey

Profession, Success

The 7 Habits of Highly Effective People is an iconic self-help book.

This vital book offers practical guidance for personal and professional success.

This non-fiction book is one of the most popular ever.

You ought to read it.

if you want to reach your full potential.

if you want to discover how to achieve all your objectives.

if you are just beginning your journey toward personal improvement.

Sapiens: A Brief History of Humankind

by Yuval Noah Harari

Science, History

Sapiens explains how our species has evolved from our earliest ancestors to the technology age.

How did we, a species of hairless apes without tails, come to control the whole planet?

It describes the shifts that propelled Homo sapiens to the top.

You ought to read it.

if you're interested in discovering our species' past.

if you want to discover more about the origins of human society and culture.

Jayden Levitt

3 years ago

El Salvador's Bitcoin-obsessed president has lost $61.6 million.

It’s only a loss if you sell, right?

Nayib Bukele proclaimed himself “the world’s coolest dictator”.

It's not clear whether he's joking.

El Salvador's 43rd president, the self-proclaimed “CEO of El Salvador,” couldn't be less presidential.

With his skinny jeans, aviator sunglasses, and baseball caps, he looks more like a cartel boss.

He's popular, though.

Bukele won 53% of the vote by campaigning against violent crime and opposition party corruption.

El Salvador's 6.4 million inhabitants are riding the cryptocurrency volatility wave.

They were powerless.

Their autocratic leader, a former Yamaha Motors salesperson and Bitcoin believer, wants to help the 70% of locals who are unbanked.

He intended to give citizens a way to save money and to cut the $200 million the country pays in remittance costs, that is, transfer and deposit fees.

This makes logical sense when the president’s theatrics don’t blind you.

Bukele announced plans to make bitcoin legal tender.

Remittances total $5.9 billion, or 23% of the country's GDP.

Anything that reduces costs could boost the economy.

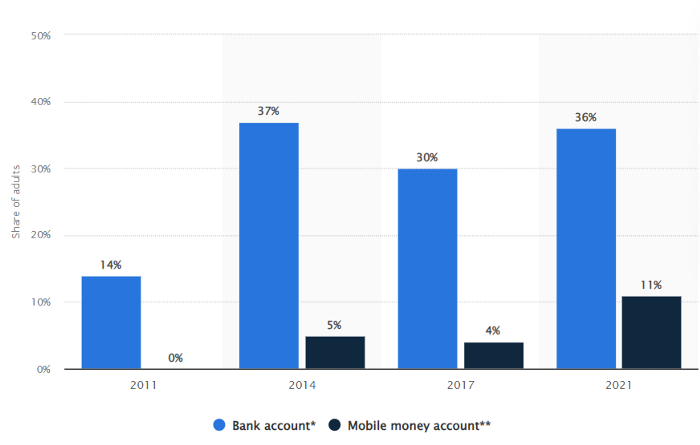

The country’s unbanked population is staggering. Here’s the data by % of people who either have a bank account (Blue) or a mobile money account (Black).

According to Bukele, 46% of the population has downloaded the Chivo Bitcoin Wallet.

In 2021, 36% of Salvadorans had bank accounts.

Largely rural countries like Kenya seem to have solved their unbanked problem.

An economy surfaced where village locals would sell, trade and store network minutes and data as a store of value.

Kenyan phone networks realized unbanked people needed a safe way to accumulate wealth and have an emergency fund.

96% of Kenyans utilize M-PESA, which doesn't require a bank account.

The system relies on human agents who keep cash and a phone on hand.

These people are like ATMs.

You give them cash to deposit into your mobile money account, or they give you cash when you withdraw.

In a country with a faulty banking system, cash availability and a safe place to deposit it are important.

William Jack and Tavneet Suri found that M-PESA brought 194,000 Kenyan households out of poverty by making transactions cheaper and creating a safe store of value.

Mobile money, a service that allows monetary value to be stored on a mobile phone and sent to other users via text messages, has been adopted by most Kenyan households. We estimate that access to the Kenyan mobile money system M-PESA increased per capita consumption levels and lifted 194,000 households, or 2% of Kenyan households, out of poverty.

The impacts, which are more pronounced for female-headed households, appear to be driven by changes in financial behaviour — in particular, increased financial resilience and saving. Mobile money has therefore increased the efficiency of the allocation of consumption over time while allowing a more efficient allocation of labour, resulting in a meaningful reduction of poverty in Kenya.

Currently, El Salvador has 2,301 Bitcoin.

At publication, they're worth $44 million, just under 42% of the $105.6 million Bukele originally spent.
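The headline loss follows directly from these three figures. The Python sketch below derives it, along with the implied current price and average purchase price per coin. It assumes the $105.6 million is the total spent on the 2,301 coins and that none were sold, which the article says is unknown. The function and variable names are illustrative.

```python
# Headline figures from the article (US dollars and whole bitcoins).
BTC_HELD = 2_301
VALUE_AT_PUBLICATION_USD = 44_000_000
AMOUNT_SPENT_USD = 105_600_000


def position_summary(btc_held: int, current_value: int, amount_spent: int) -> dict[str, float]:
    """Derive the paper loss and implied per-coin prices.

    Assumes no coins were sold, so all of amount_spent is still represented
    by btc_held.
    """
    if btc_held <= 0 or amount_spent <= 0:
        raise ValueError("holdings and amount spent must be positive")
    return {
        "paper_loss": amount_spent - current_value,            # unrealized loss
        "fraction_remaining": current_value / amount_spent,    # share of cost still held
        "implied_price_now": current_value / btc_held,         # USD per BTC today
        "implied_avg_cost": amount_spent / btc_held,           # USD per BTC paid on average
    }


summary = position_summary(BTC_HELD, VALUE_AT_PUBLICATION_USD, AMOUNT_SPENT_USD)
print(f"Paper loss:         ${summary['paper_loss']:,}")            # $61,600,000
print(f"Value remaining:    {summary['fraction_remaining']:.1%}")   # 41.7%
print(f"Implied price now:  ${summary['implied_price_now']:,.0f}")  # $19,122
print(f"Implied avg. cost:  ${summary['implied_avg_cost']:,.0f}")   # $45,893
```

Because it's only a paper loss, the same function could be re-run with a future value to see how far the price would have to recover before the position breaks even.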

It's unknown whether the country has sold any Bitcoin, but Bukele keeps buying the dip.

It's still falling.

This might be a fantastic move for the impoverished country over the next five years, if it can stay afloat economically until Bitcoin's price recovers.

The evidence demonstrates that a store of value pulls individuals out of poverty, but others say Bitcoin is premature.

You may regard it as an aggressive attempt to front-run the next wave of adoption, offering El Salvador a financial upside.