When My Remote Leadership Skills Took Off

4 Ways To Manage Remote Teams & Employees

The wheels hit the ground as I landed in Rochester.

Our six-person satellite office was now part of my team.

Their manager only reported to me the day before, but I had my ticket booked ahead of time.

I had managed remote employees before but this was different. Engineers dialed into headquarters for every meeting.

So when I learned about the org chart change, I knew a strong first impression would set the tone for everything else.

I was either their boss, or their boss's boss, and I needed them to know I was committed.

Managing a fleet of satellite freelancers or multiple offices requires treating others as more than just a face behind a screen.

You must comprehend each remote team member's perspective and daily interactions.

The good news is that you can start using these techniques right now to better understand and elevate virtual team members.

1. Make Visits To Other Offices

If budgeted, visit and work from offices where teams and employees report to you. Only by living alongside them can one truly comprehend their problems with communication and other aspects of modern life.

2. Have Others Come to You

• Having remote, distributed, or satellite employees and teams visit headquarters every quarter or semi-quarterly allows the main office culture to rub off on them.

When remote team members visit, more people get to meet them, which builds empathy.

If you can't afford to fly everyone, at least bring remote managers or leaders. Hopefully they can resurrect some culture.

3. Weekly Work From Home

No home office policy?

Make one.

WFH is a team-building, problem-solving, and office-viewing opportunity.

For dial-in meetings, I started working from home on occasion.

It also taught me which teams “forget” or “skip” calls.

As a remote team member, you experience all the issues first hand.

This isn't as accurate for understanding teams in other offices, but it can be done at any time.

4. Increase Contact Even If It’s Just To Chat

Don't underestimate office banter.

Sometimes it's about bonding and trust, other times it's about business.

If you get all this information in real-time, please forward it.

Even if nothing critical is happening, call remote team members to check in and chat.

I guarantee that building relationships and rapport will increase both their job satisfaction and yours.

More on Leadership

Nir Zicherman

3 years ago

The Great Organizational Conundrum

Only two of the following three options can be achieved: consistency, availability, and partition tolerance

Someone told me that growing from 30 to 60 is the biggest adjustment for a team or business.

I remember thinking, That's random. Each company is unique. I've seen teams of all types confront the same issues during development periods. With new enterprises starting every year, we should be better at navigating growing difficulties.

As a team grows, its processes and systems break down, requiring reorganization or declining results. Why always? Why isn't there a perfect scaling model? Why hasn't that been found?

The Three Things Productive Organizations Must Have

Any company should be efficient and productive. Three items are needed:

First, it must verify that no two team members have conflicting information about the roadmap, strategy, or any input that could affect execution. Teamwork is required.

Second, it must ensure that everyone can receive the information they need from everyone else quickly, especially as teams become more specialized (an inevitability in a developing organization). It requires everyone's accessibility.

Third, it must ensure that the organization can operate efficiently even if a piece is unavailable. It's partition-tolerant.

From my experience with the many teams I've been on, invested in, or advised, achieving all three is nearly impossible. Why a perfect organization model cannot exist is clear after analysis.

The CAP Theorem: What is it?

Eric Brewer of Berkeley discovered the CAP Theorem, which argues that a distributed data storage should have three benefits. One can only have two at once.

The three benefits are consistency, availability, and partition tolerance, which implies that even if part of the system is offline, the remainder continues to work.

This notion is usually applied to computer science, but I've realized it's also true for human organizations. In a post-COVID world, many organizations are hiring non-co-located staff as they grow. CAP Theorem is more important than ever. Growing teams sometimes think they can develop ways to bypass this law, dooming themselves to a less-than-optimal team dynamic. They should adopt CAP to maximize productivity.

Path 1: Consistency and availability equal no tolerance for partitions

Let's imagine you want your team to always be in sync (i.e., for someone to be the source of truth for the latest information) and to be able to share information with each other. Only division into domains will do.

Numerous developing organizations do this, especially after the early stage (say, 30 people) when everyone may wear many hats and be aware of all the moving elements. After a certain point, it's tougher to keep generalists aligned than to divide them into specialized tasks.

In a specialized, segmented team, leaders optimize consistency and availability (i.e. every function is up-to-speed on the latest strategy, no one is out of sync, and everyone is able to unblock and inform everyone else).

Partition tolerance suffers. If any component of the organization breaks down (someone goes on vacation, quits, underperforms, or Gmail or Slack goes down), productivity stops. There's no way to give the team stability, availability, and smooth operation during a hiccup.

Path 2: Partition Tolerance and Availability = No Consistency

Some businesses avoid relying too heavily on any one person or sub-team by maximizing availability and partition tolerance (the organization continues to function as a whole even if particular components fail). Only redundancy can do that. Instead of specializing each member, the team spreads expertise so people can work in parallel. I switched from Path 1 to Path 2 because I realized too much reliance on one person is risky.

What happens after redundancy? Unreliable. The more people may run independently and in parallel, the less anyone can be the truth. Lack of alignment or updated information can lead to people executing slightly different strategies. So, resources are squandered on the wrong work.

Path 3: Partition and Consistency "Tolerance" equates to "absence"

The third, least-used path stresses partition tolerance and consistency (meaning answers are always correct and up-to-date). In this organizational style, it's most critical to maintain the system operating and keep everyone aligned. No one is allowed to read anything without an assurance that it's up-to-date (i.e. there’s no availability).

Always short-lived. In my experience, a business that prioritizes quality and scalability over speedy information transmission can get bogged down in heavy processes that hinder production. Large-scale, this is unsustainable.

Accepting CAP

When two puzzle pieces fit, the third won't. I've watched developing teams try to tackle these difficulties, only to find, as their ancestors did, that they can never be entirely solved. Idealized solutions fail in reality, causing lost effort, confusion, and lower production.

As teams develop and change, they should embrace CAP, acknowledge there is a limit to productivity in a scaling business, and choose the best two-out-of-three path.

KonstantinDr

3 years ago

Early Adopters And the Fifth Reason WHY

Product management wizardry.

Early adopters buy a product even if it hasn't hit the market or has flaws.

Who are the early adopters?

Early adopters try a new technology or product first. Early adopters are interested in trying or buying new technologies and products before others. They're risk-tolerant and can provide initial cash flow and product reviews. They help a company's new product or technology gain social proof.

Early adopters are most common in the technology industry, but they're in every industry. They don't follow the crowd. They seek innovation and report product flaws before mass production. If the product works well, the first users become loyal customers, and colleagues value their opinion.

What to do with early adopters?

They can be used to collect feedback and initial product promotion, first sales, and product value validation.

How to find early followers?

Start with your immediate environment and target audience. Communicate with them to see if they're interested in your value proposition.

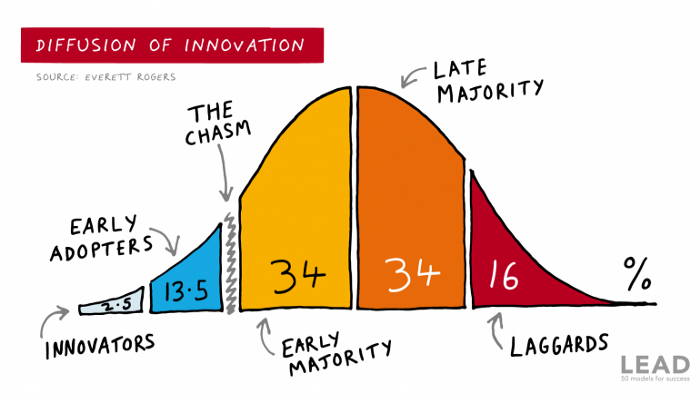

1) Innovators (2.5% of the population) are risk-takers seeking novelty. These people are the first to buy new and trendy items and drive social innovation. However, these people are usually elite;

Early adopters (13.5%) are inclined to accept innovations but are more cautious than innovators; they start using novelties when innovators or famous people do;

3) The early majority (34%) is conservative; they start using new products when many people have mastered them. When the early majority accepted the innovation, it became ingrained in people's minds.

4) Attracting 34% of the population later means the novelty has become a mass-market product. Innovators are using newer products;

5) Laggards (16%) are the most conservative, usually elderly people who use the same products.

Stages of new information acceptance

1. The information is strange and rejected by most. Accepted only by innovators;

2. When early adopters join, more people believe it's not so bad; when a critical mass is reached, the novelty becomes fashionable and most people use it.

3. Fascination with a novelty peaks, then declines; the majority and laggards start using it later; novelty becomes obsolete; innovators master something new.

Problems with early implementation

Early adopter sales have disadvantages.

Higher risk of defects

Selling to first-time users increases the risk of defects. Early adopters are often influential, so this can affect the brand's and its products' long-term perception.

Not what was expected

First-time buyers may be disappointed by the product. Marketing messages can mislead consumers, and if the first users believe the company misrepresented the product, this will affect future sales.

Compatibility issues

Some technological advances cause compatibility issues. Consumers may be disappointed if new technology is incompatible with their electronics.

Method 5 WHY

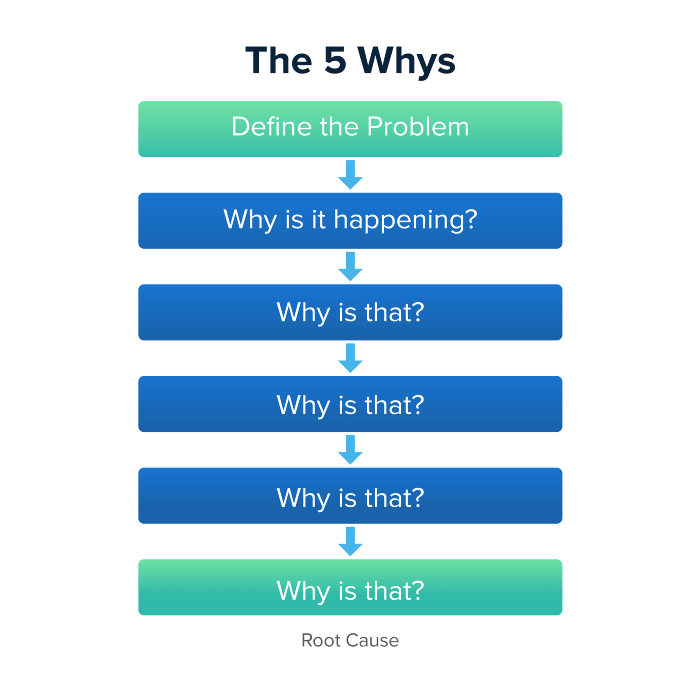

Let's talk about 5 why, a good tool for finding project problems' root causes. This method is also known as the five why rule, method, or questions.

The 5 why technique came from Toyota's lean manufacturing and helps quickly determine a problem's root cause.

On one, two, and three, you simply do this:

We identify and frame the issue for which a solution is sought.

We frequently ponder this question. The first 2-3 responses are frequently very dull, making you want to give up on this pointless exercise. However, after that, things get interesting. And occasionally it's so fascinating that you question whether you really needed to know.

We consider the final response, ponder it, and choose a course of action.

Always do the 5 whys with the customer or team to have a reasonable discussion and better understand what's happening.

And the “five whys” is a wonderful and simplest tool for introspection. With the accumulated practice, it is used almost automatically in any situation like “I can’t force myself to work, the mood is bad in the morning” or “why did I decide that I have no life without this food processor for 20,000 rubles, which will take half of my rather big kitchen.”

An illustration of the five whys

A simple, but real example from my work practice that I think is very indicative, given the participants' low IT skills. Anonymized, of course.

Users spend too long looking for tender documents.

Why? Because they must search through many company tender documents.

Why? Because the system can't filter department-specific bids.

Why? Because our contract management system requirements didn't include a department-tender link. That's it, right? We'll add a filter and be happy. but still…

why? Because we based the system's requirements on regulations for working with paper tender documents (when they still had envelopes and autopsies), not electronic ones, and there was no search mechanism.

Why? We didn't consider how our work would change when switching from paper to electronic tenders when drafting the requirements.

Now I know what to do in the future. We add a filter, enter department data, and teach users to use it. This is tactical, but strategically we review the same forgotten requirements to make all the necessary changes in a package, plus we include it in the checklist for the acceptance of final requirements for the future.

Errors when using 5 why

Five whys seems simple, but it can be misused.

Popular ones:

The accusation of everyone and everything is then introduced. After all, the 5 why method focuses on identifying the underlying causes rather than criticizing others. As a result, at the third step, it is not a good idea to conclude that the system is ineffective because users are stupid and that we can therefore do nothing about it.

to fight with all my might so that the outcome would be exactly 5 reasons, neither more nor less. 5 questions is a typical number (it sounds nice, yes), but there could be 3 or 7 in actuality.

Do not capture in-between responses. It is difficult to overestimate the power of the written or printed word, so the result is so-so when the focus is lost. That's it, I suppose. Simple, quick, and brilliant, like other project management tools.

Conclusion

Today we analyzed important study elements:

Early adopters and 5 WHY We've analyzed cases and live examples of how these methods help with product research and growth point identification. Next, consider the HADI cycle.

Hunter Walk

3 years ago

Is it bad of me to want our portfolio companies to generate greater returns for outside investors than they did for us as venture capitalists?

Wishing for Lasting Companies, Not Penny Stocks or Goodwill Write-Downs

Get me a NASCAR-style company-logoed cremation urn (notice to the executor of my will, theres gonna be a lot of weird requests). I believe in working on projects that would be on your tombstone. As the Homebrew logo is tattooed on my shoulder, expanding the portfolio to my posthumous commemoration is easy. But this isn't an IRR victory lap; it's a hope that the firms we worked for would last beyond my lifetime.

![a little boy planting a dollar bill in the ground and pouring a watering can out on it, digital art [DALL-E]](https://storage.googleapis.com/int3grity/posts/V62hkReDx56S/images/vMwzqrYeXaYnUIMXAdTY9)

Venture investors too often take credit or distance themselves from startups based on circumstances. Successful companies tell stories of crucial introductions, strategy conversations, and other value. Defeats Even whether our term involves Board service or systematic ethical violations, I'm just a little investment, so there's not much I can do. Since I'm guilty, I'm tossing stones from within the glass home (although we try to own our decisions through the lifecycle).

Post-exit company trajectories are usually unconfounded. Off the cap table, no longer a shareholder (or a diminishing one as you sell off/distribute), eventually leaving the Board. You can cheer for the squad or forget about it, but you've freed the corporation and it's back to portfolio work.

As I look at the downward track of most SPACs and other tarnished IPOs from the last few years, I wonder how I would feel if those were my legacy. Is my job done? Yes. When investing in a business, the odds are against it surviving, let alone thriving and being able to find sunlight. SPAC sponsors, institutional buyers, retail investments. Free trade in an open market is their right. Risking and losing capital is the system working! But

We were lead or co-lead investors in our first three funds, but as additional VCs joined the company, we were pushed down the cap table. Voting your shares rarely matters; supporting the firm when they need it does. Being valuable, consistent, and helping the company improve builds trust with the founders.

I hope every startup we sponsor becomes a successful public company before, during, and after we benefit. My perspective of American capitalism. Well, a stock ticker has a lot of garbage, and I support all types of regulation simplification (in addition to being a person investor in the Long-Term Stock Exchange). Yet being owned by a large group of investors and making actual gains for them is great. Likewise does seeing someone you met when they were just starting out become a public company CEO without losing their voice, leadership, or beliefs.

I'm just thinking about what we can do from the start to realize value from our investments and build companies with bright futures. Maybe seed venture financing shouldn't impact those outcomes, but I'm not comfortable giving up that obligation.

You might also like

Matthew Cluff

3 years ago

GTO Poker 101

"GTO" (Game Theory Optimal) has been used a lot in poker recently. To clarify its meaning and application, the aim of this article is to define what it is, when to use it when playing, what strategies to apply for how to play GTO poker, for beginner and more advanced players!

Poker GTO

In poker, you can choose between two main winning strategies:

Exploitative play maximizes expected value (EV) by countering opponents' sub-optimal plays and weaker tendencies. Yes, playing this way opens you up to being exploited, but the weaker opponents you're targeting won't change their game to counteract this, allowing you to reap maximum profits over the long run.

GTO (Game-Theory Optimal): You try to play perfect poker, which forces your opponents to make mistakes (which is where almost all of your profit will be derived from). It mixes bluffs or semi-bluffs with value bets, clarifies bet sizes, and more.

GTO vs. Exploitative: Which is Better in Poker?

Before diving into GTO poker strategy, it's important to know which of these two play styles is more profitable for beginners and advanced players. The simple answer is probably both, but usually more exploitable.

Most players don't play GTO poker and can be exploited in their gameplay and strategy, allowing for more profits to be made using an exploitative approach. In fact, it’s only in some of the largest games at the highest stakes that GTO concepts are fully utilized and seen in practice, and even then, exploitative plays are still sometimes used.

Knowing, understanding, and applying GTO poker basics will create a solid foundation for your poker game. It's also important to understand GTO so you can deviate from it to maximize profits.

GTO Poker Strategy

According to Ed Miller's book "Poker's 1%," the most fundamental concept that only elite poker players understand is frequency, which could be in relation to cbets, bluffs, folds, calls, raises, etc.

GTO poker solvers (downloadable online software) give solutions for how to play optimally in any given spot and often recommend using mixed strategies based on select frequencies.

In a river situation, a solver may tell you to call 70% of the time and fold 30%. It may also suggest calling 50% of the time, folding 35% of the time, and raising 15% of the time (with a certain range of hands).

Frequencies are a fundamental and often unrecognized part of poker, but they run through these 5 GTO concepts.

1. Preflop ranges

To compensate for positional disadvantage, out-of-position players must open tighter hand ranges.

Premium starting hands aren't enough, though. Considering GTO poker ranges and principles, you want a good, balanced starting hand range from each position with at least some hands that can make a strong poker hand regardless of the flop texture (low, mid, high, disconnected, etc).

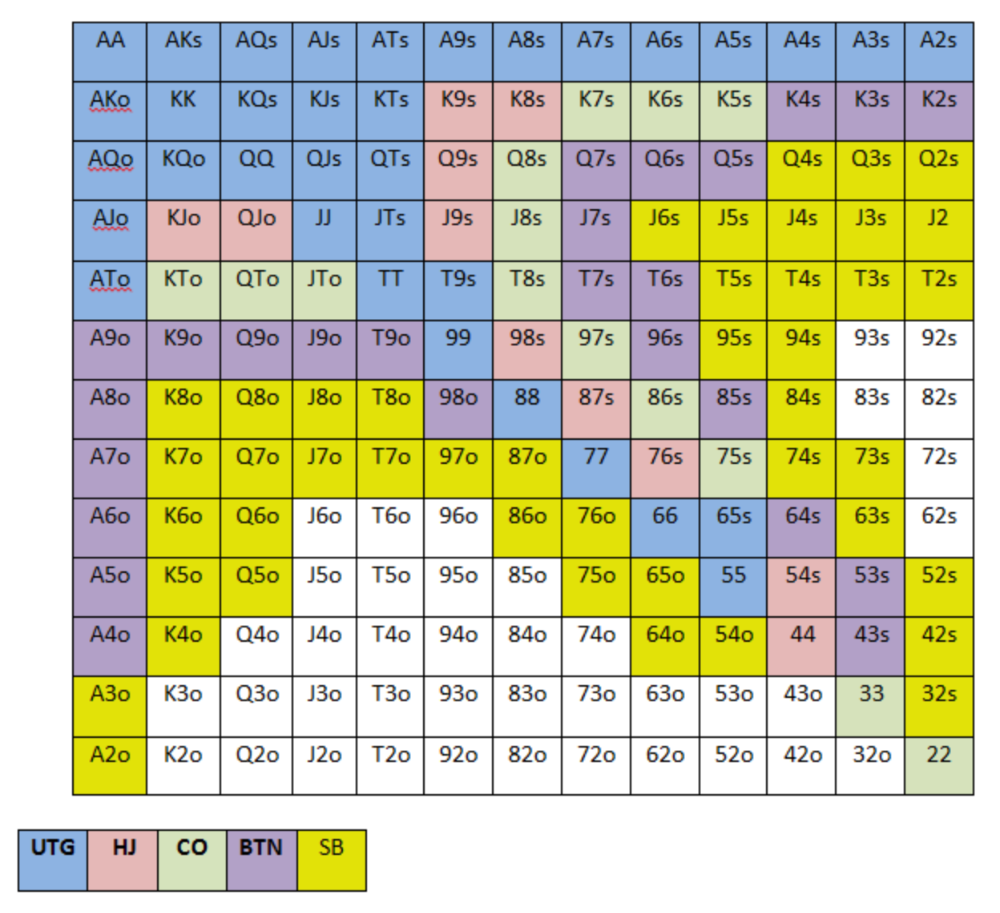

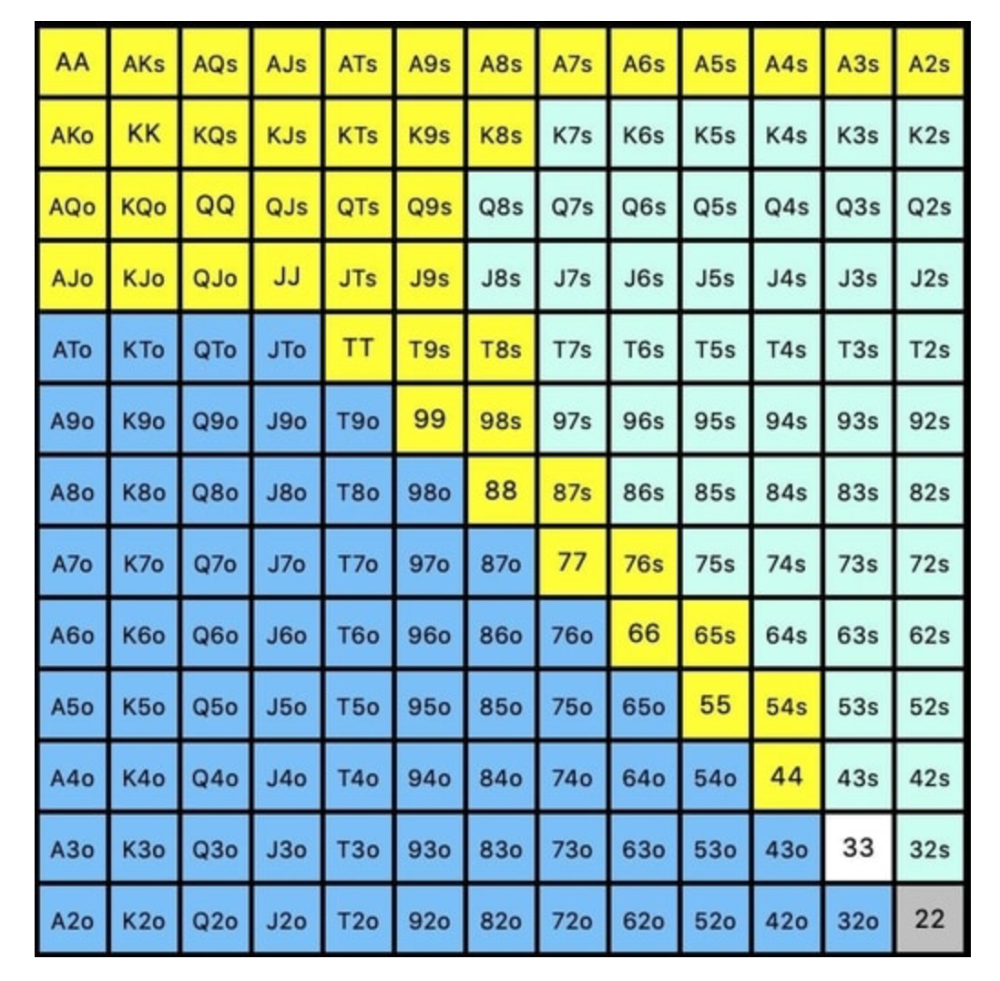

Below is a GTO preflop beginner poker chart for online 6-max play, showing which hand ranges one should open-raise with. Table positions are color-coded (see key below).

NOTE: For GTO play, it's advisable to use a mixed strategy for opening in the small blind, combining open-limps and open-raises for various hands. This cannot be illustrated with the color system used for the chart.

Choosing which hands to play is often a math problem, as discussed below.

Other preflop GTO poker charts include which hands to play after a raise, which to 3bet, etc. Solvers can help you decide which preflop hands to play (call, raise, re-raise, etc.).

2. Pot Odds

Always make +EV decisions that profit you as a poker player. Understanding pot odds (and equity) can help.

Postflop Pot Odds

Let’s say that we have JhTh on a board of 9h8h2s4c (open-ended straight-flush draw). We have $40 left and $50 in the pot. He has you covered and goes all-in. As calling or folding are our only options, playing GTO involves calculating whether a call is +EV or –EV. (The hand was empty.)

Any remaining heart, Queen, or 7 wins the hand. This means we can improve 15 of 46 unknown cards, or 32.6% of the time.

What if our opponent has a set? The 4h or 2h could give us a flush, but it could also give the villain a boat. If we reduce outs from 15 to 14.5, our equity would be 31.5%.

We must now calculate pot odds.

(bet/(our bet+pot)) = pot odds

= $50 / ($40 + $90)

= $40 / $130

= 30.7%

To make a profitable call, we need at least 30.7% equity. This is a profitable call as we have 31.5% equity (even if villain has a set). Yes, we will lose most of the time, but we will make a small profit in the long run, making a call correct.

Pot odds aren't just for draws, either. If an opponent bets 50% pot, you get 3 to 1 odds on a call, so you must win 25% of the time to be profitable. If your current hand has more than 25% equity against your opponent's perceived range, call.

Preflop Pot Odds

Preflop, you raise to 3bb and the button 3bets to 9bb. You must decide how to act. In situations like these, we can actually use pot odds to assist our decision-making.

This pot is:

(our open+3bet size+small blind+big blind)

(3bb+9bb+0.5bb+1bb)

= 13.5

This means we must call 6bb to win a pot of 13.5bb, which requires 30.7% equity against the 3bettor's range.

Three additional factors must be considered:

Being out of position on our opponent makes it harder to realize our hand's equity, as he can use his position to put us in tough spots. To profitably continue against villain's hand range, we should add 7% to our equity.

Implied Odds / Reverse Implied Odds: The ability to win or lose significantly more post-flop (than pre-flop) based on our remaining stack.

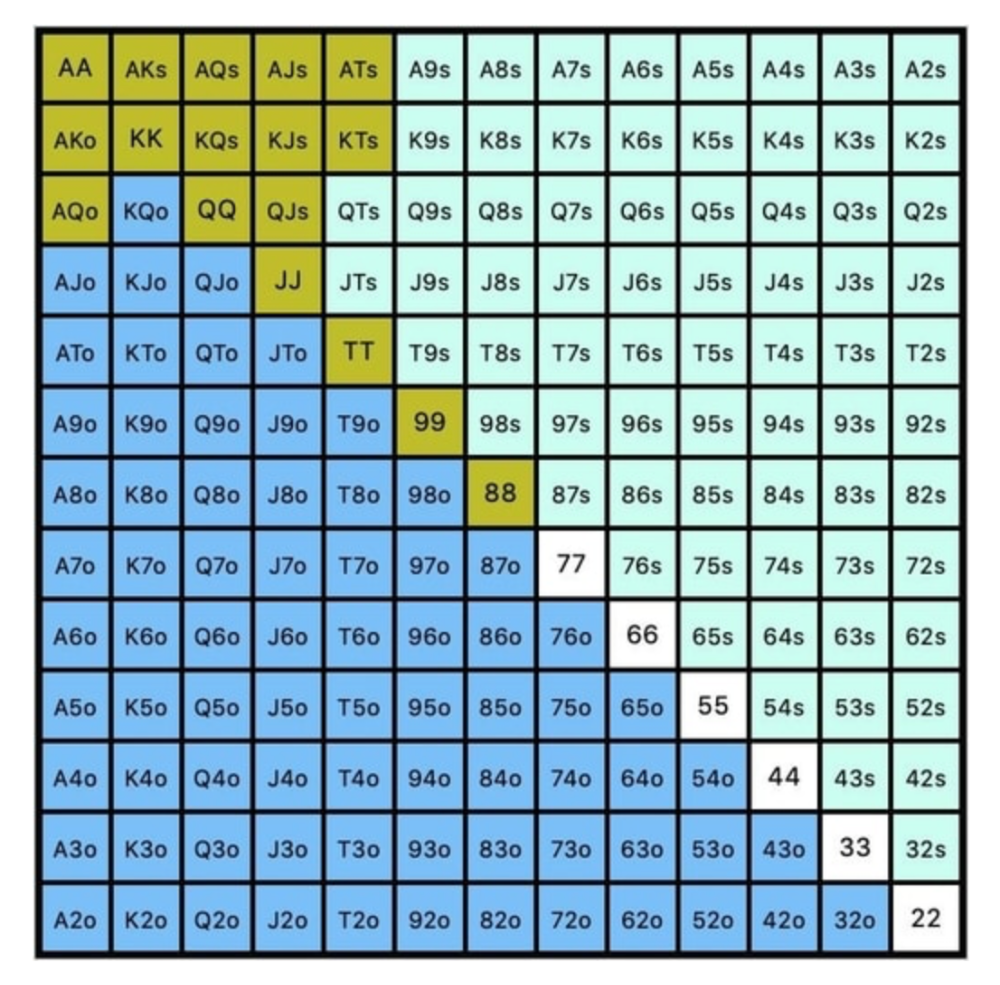

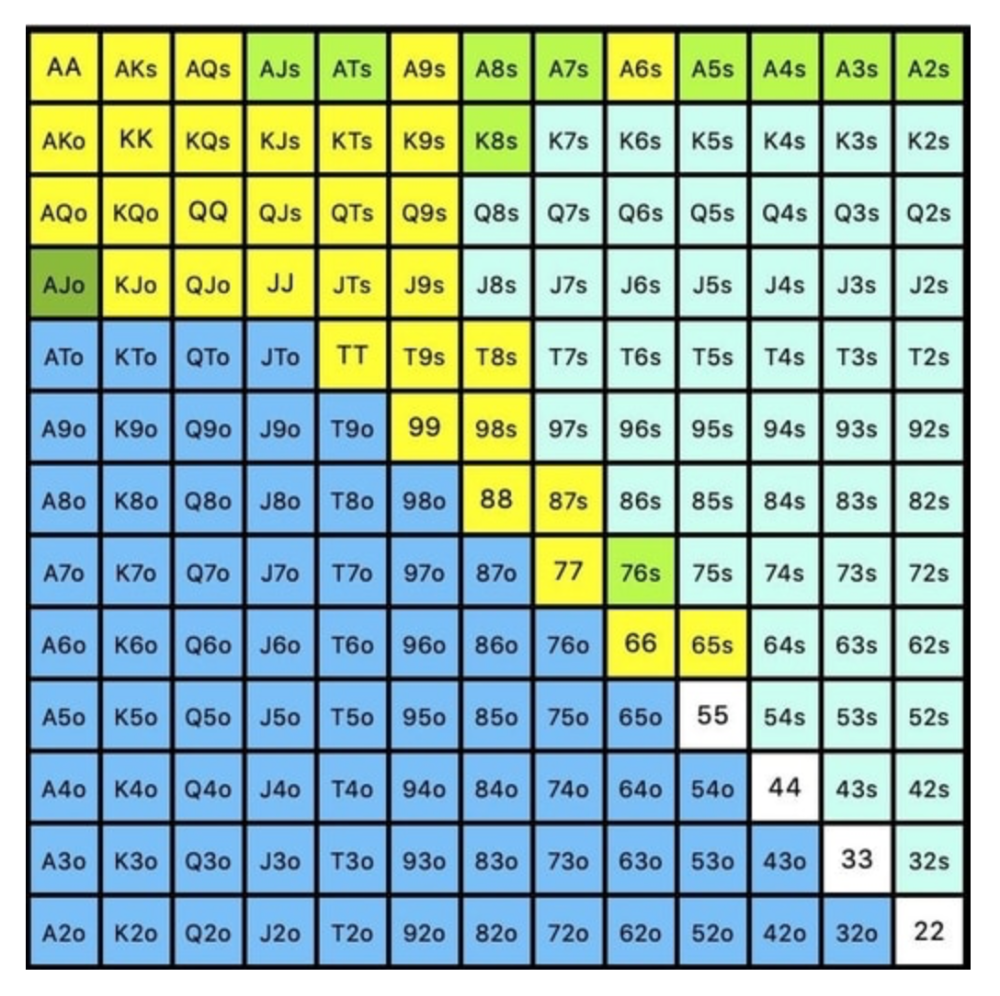

While statistics on 3bet stats can be gained with a large enough sample size (i.e. 8% 3bet stat from button), the numbers don't tell us which 8% of hands villain could be 3betting with. Both polarized and depolarized charts below show 8% of possible hands.

7.4% of hands are depolarized.

Polarized Hand range (7.54%):

Each hand range has different contents. We don't know if he 3bets some hands and calls or folds others.

Using an exploitable strategy can help you play a hand range correctly. The next GTO concept will make things easier.

3. Minimum Defense Frequency:

This concept refers to the % of our range we must continue with (by calling or raising) to avoid being exploited by our opponents. This concept is most often used off-table and is difficult to apply in-game.

These beginner GTO concepts will help your decision-making during a hand, especially against aggressive opponents.

MDF formula:

MDF = POT SIZE/(POT SIZE+BET SIZE)

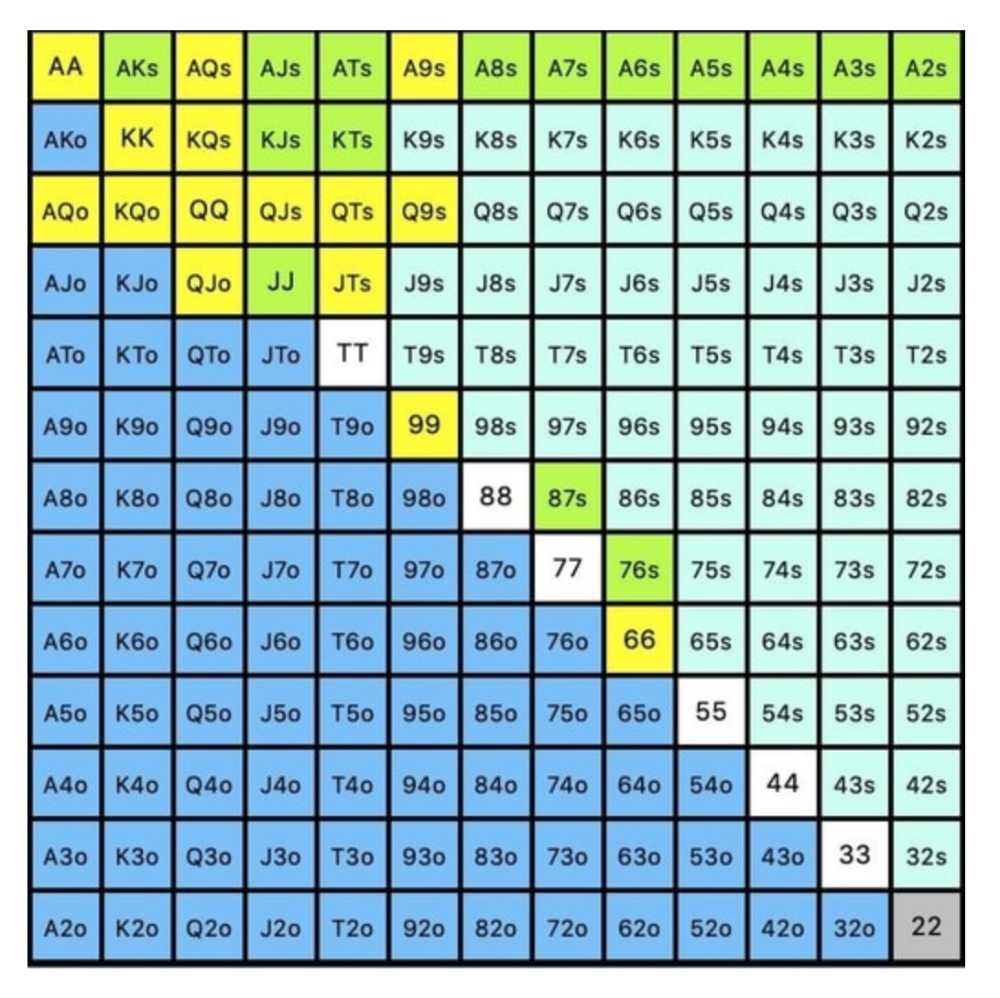

Here's a poker GTO chart of common bet sizes and minimum defense frequency.

Take the number of hand combos in your starting hand range and use the MDF to determine which hands to continue with. Choose hands with the most playability and equity against your opponent's betting range.

Say you open-raise HJ and BB calls. Qh9h6c flop. Your opponent leads you for a half-pot bet. MDF suggests keeping 67% of our range.

Using the above starting hand chart, we can determine that the HJ opens 254 combos:

We must defend 67% of these hands, or 170 combos, according to MDF. Hands we should keep include:

Flush draws

Open-Ended Straight Draws

Gut-Shot Straight Draws

Overcards

Any Pair or better

So, our flop continuing range could be:

Some highlights:

Fours and fives have little chance of improving on the turn or river.

We only continue with AX hearts (with a flush draw) without a pair or better.

We'll also include 4 AJo combos, all of which have the Ace of hearts, and AcJh, which can block a backdoor nut flush combo.

Let's assume all these hands are called and the turn is blank (2 of spades). Opponent bets full-pot. MDF says we must defend 50% of our flop continuing range, or 85 of 170 combos, to be unexploitable. This strategy includes our best flush draws, straight draws, and made hands.

Here, we keep combining:

Nut flush draws

Pair + flush draws

GS + flush draws

Second Pair, Top Kicker+

One combo of JJ that doesn’t block the flush draw or backdoor flush draw.

On the river, we can fold our missed draws and keep our best made hands. When calling with weaker hands, consider blocker effects and card removal to avoid overcalling and decide which combos to continue.

4. Poker GTO Bet Sizing

To avoid being exploited, balance your bluffs and value bets. Your betting range depends on how much you bet (in relation to the pot). This concept only applies on the river, as draws (bluffs) on the flop and turn still have equity (and are therefore total bluffs).

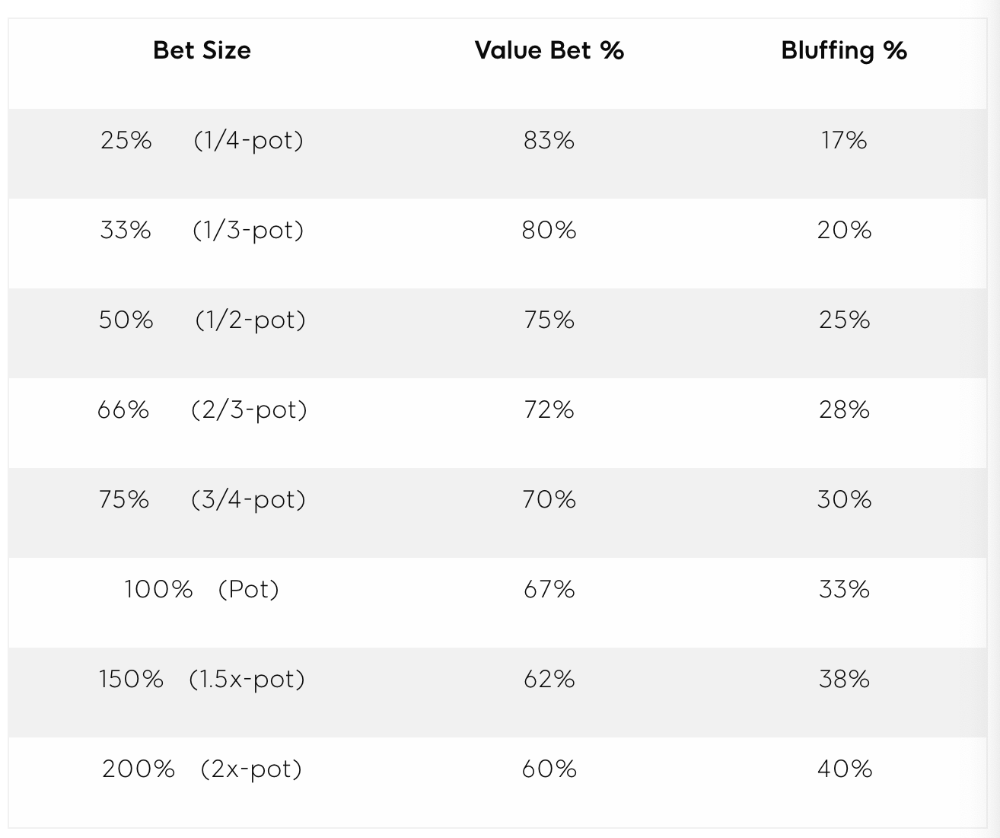

On the flop, you want a 2:1 bluff-to-value-bet ratio. On the flop, there won't be as many made hands as on the river, and your bluffs will usually contain equity. The turn should have a "bluffing" ratio of 1:1. Use the chart below to determine GTO river bluff frequencies (relative to your bet size):

This chart relates to your opponent's pot odds. If you bet 50% pot, your opponent gets 3:1 odds and must win 25% of the time to call. Poker GTO theory suggests including 25% bluff combinations in your betting range so you're indifferent to your opponent calling or folding.

Best river bluffs don't block hands you want your opponent to have (or not have). For example, betting with missed Ace-high flush draws is often a mistake because you block a missed flush draw you want your opponent to have when bluffing on the river (meaning that it would subsequently be less likely he would have it, if you held two of the flush draw cards). Ace-high usually has some river showdown value.

If you had a 3-flush on the river and wanted to raise, you could bluff raise with AX combos holding the bluff suit Ace. Blocking the nut flush prevents your opponent from using that combo.

5. Bet Sizes and Frequency

GTO beginner strategies aren't just bluffs and value bets. They show how often and how much to bet in certain spots. Top players have benefited greatly from poker solvers, which we'll discuss next.

GTO Poker Software

In recent years, various poker GTO solvers have been released to help beginner, intermediate, and advanced players play balanced/GTO poker in various situations.

PokerSnowie and PioSolver are popular GTO and poker study programs.

While you can't compute players' hand ranges and what hands to bet or check with in real time, studying GTO play strategies with these programs will pay off. It will improve your poker thinking and understanding.

Solvers can help you balance ranges, choose optimal bet sizes, and master cbet frequencies.

GTO Poker Tournament

Late-stage tournaments have shorter stacks than cash games. In order to follow GTO poker guidelines, Nash charts have been created, tweaked, and used for many years (and also when to call, depending on what number of big blinds you have when you find yourself shortstacked).

The charts are for heads-up push/fold. In a multi-player game, the "pusher" chart can only be used if play is folded to you in the small blind. The "caller" chart can only be used if you're in the big blind and assumes a small blind "pusher" (with a much wider range than if a player in another position was open-shoving).

Divide the pusher chart's numbers by 2 to see which hand to use from the Button. Divide the original chart numbers by 4 to find the CO's pushing range. Some of the figures will be impossible to calculate accurately for the CO or positions to the right of the blinds because the chart's highest figure is "20+" big blinds, which is also used for a wide range of hands in the push chart.

Both of the GTO charts below are ideal for heads-up play, but exploitable HU shortstack strategies can lead to more +EV decisions against certain opponents. Following the charts will make your play GTO and unexploitable.

Poker pro Max Silver created the GTO push/fold software SnapShove. (It's accessible online at www.snapshove.com or as iOS or Android apps.)

Players can access GTO shove range examples in the full version. (You can customize the number of big blinds you have, your position, the size of the ante, and many other options.)

In Conclusion

Due to the constantly changing poker landscape, players are always improving their skills. Exploitable strategies often yield higher profit margins than GTO-based approaches, but knowing GTO beginner and advanced concepts can give you an edge for a few reasons.

It creates a solid gameplay base.

Having a baseline makes it easier to exploit certain villains.

You can avoid leveling wars with your opponents by making sound poker decisions based on GTO strategy.

It doesn't require assuming opponents' play styles.

Not results-oriented.

This is just the beginning of GTO and poker theory. Consider investing in the GTO poker solver software listed above to improve your game.

joyce shen

4 years ago

Framework to Evaluate Metaverse and Web3

Everywhere we turn, there's a new metaverse or Web3 debut. Microsoft recently announced a $68.7 BILLION cash purchase of Activision.

Like AI in 2013 and blockchain in 2014, NFT growth in 2021 feels like this year's metaverse and Web3 growth. We are all bombarded with information, conflicting signals, and a sensation of FOMO.

How can we evaluate the metaverse and Web3 in a noisy, new world? My framework for evaluating upcoming technologies and themes is shown below. I hope you will also find them helpful.

Understand the “pipes” in a new space.

Whatever people say, Metaverse and Web3 will have to coexist with the current Internet. Companies who host, move, and store data over the Internet have a lot of intriguing use cases in Metaverse and Web3, whether in infrastructure, data analytics, or compliance. Hence the following point.

## Understand the apps layer and their infrastructure.

Gaming, crypto exchanges, and NFT marketplaces would not exist today if not for technology that enables rapid app creation. Yes, according to Chainalysis and other research, 30–40% of Ethereum is self-hosted, with the rest hosted by large cloud providers. For Microsoft to acquire Activision makes strategic sense. It's not only about the games, but also the infrastructure that supports them.

Follow the money

Understanding how money and wealth flow in a complex and dynamic environment helps build clarity. Unless you are exceedingly wealthy, you have limited ability to significantly engage in the Web3 economy today. Few can just buy 10 ETH and spend it in one day. You must comprehend who benefits from the process, and how that 10 ETH circulates now and possibly tomorrow. Major holders and players control supply and liquidity in any market. Today, most Web3 apps are designed to increase capital inflow so existing significant holders can utilize it to create a nascent Web3 economy. When you see a new Metaverse or Web3 application, remember how money flows.

What is the use case?

What does the app do? If there is no clear use case with clear makers and consumers solving a real problem, then the euphoria soon fades, and the only stakeholders who remain enthused are those who have too much to lose.

Time is a major competition that is often overlooked.

We're only busier, but each day is still 24 hours. Using new apps may mean that time is lost doing other things. The user must be eager to learn. Metaverse and Web3 vs. our time? I don't think we know the answer yet (at least for working adults whose cost of time is higher).

I don't think we know the answer yet (at least for working adults whose cost of time is higher).

People and organizations need security and transparency.

For new technologies or apps to be widely used, they must be safe, transparent, and trustworthy. What does secure Metaverse and Web3 mean? This is an intriguing subject for both the business and public sectors. Cloud adoption grew in part due to improved security and data protection regulations.

The following frameworks can help analyze and understand new technologies and emerging technological topics, unless you are a significant investment fund with the financial ability to gamble on numerous initiatives and essentially form your own “index fund”.

I write on VC, startups, and leadership.

More on https://www.linkedin.com/in/joycejshen/ and https://joyceshen.substack.com/

This writing is my own opinion and does not represent investment advice.

Khyati Jain

3 years ago

By Engaging in these 5 Duplicitous Daily Activities, You Rapidly Kill Your Brain Cells

No, it’s not smartphones, overeating, or sugar.

Everyday practices affect brain health. Good brain practices increase memory and cognition.

Bad behaviors increase stress, which destroys brain cells.

Bad behaviors can reverse evolution and diminish the brain. So, avoid these practices for brain health.

1. The silent assassin

Introverts appreciated quarantine.

Before the pandemic, they needed excuses to remain home; thereafter, they had enough.

I am an introvert, and I didn’t hate quarantine. There are billions of people like me who avoid people.

Social relationships are important for brain health. Social anxiety harms your brain.

Antisocial behavior changes brains. It lowers IQ and increases drug abuse risk.

What you can do is as follows:

Make a daily commitment to engage in conversation with a stranger. Who knows, you might turn out to be your lone mate.

Get outside for at least 30 minutes each day.

Shop for food locally rather than online.

Make a call to a friend you haven't spoken to in a while.

2. Try not to rush things.

People love hustle culture. This economy requires a side gig to save money.

Long hours reduce brain health. A side gig is great until you burn out.

Work ages your wallet and intellect. Overworked brains age faster and lose cognitive function.

Working longer hours can help you make extra money, but it can harm your brain.

Side hustle but don't overwork.

What you can do is as follows:

Decide what hour you are not permitted to work after.

Three hours prior to night, turn off your laptop.

Put down your phone and work.

Assign due dates to each task.

3. Location is everything!

The environment may cause brain fog. High pollution can cause brain damage.

Air pollution raises Alzheimer's risk. Air pollution causes cognitive and behavioral abnormalities.

Polluted air can trigger early development of incurable brain illnesses, not simply lung harm.

Your city's air quality is uncontrollable. You may take steps to improve air quality.

In Delhi, schools and colleges are closed to protect pupils from polluted air. So I've adapted.

What you can do is as follows:

To keep your mind healthy and young, make an investment in a high-quality air purifier.

Enclose your windows during the day.

Use a N95 mask every day.

4. Don't skip this meal.

Fasting intermittently is trendy. Delaying breakfast to finish fasting is frequent.

Some skip breakfast and have a hefty lunch instead.

Skipping breakfast might affect memory and focus. Skipping breakfast causes low cognition, delayed responsiveness, and irritation.

Breakfast affects mood and productivity.

Intermittent fasting doesn't prevent healthy breakfasts.

What you can do is as follows:

Try to fast for 14 hours, then break it with a nutritious breakfast.

So that you can have breakfast in the morning, eat dinner early.

Make sure your breakfast is heavy in fiber and protein.

5. The quickest way to damage the health of your brain

Brain health requires water. 1% dehydration can reduce cognitive ability by 5%.

Cerebral fog and mental clarity might result from 2% brain dehydration. Dehydration shrinks brain cells.

Dehydration causes midday slumps and unproductivity. Water improves work performance.

Dehydration can harm your brain, so drink water throughout the day.

What you can do is as follows:

Always keep a water bottle at your desk.

Enjoy some tasty herbal teas.

With a big glass of water, begin your day.

Bring your own water bottle when you travel.

Conclusion

Bad habits can harm brain health. Low cognition reduces focus and productivity.

Unproductive work leads to procrastination, failure, and low self-esteem.

Avoid these harmful habits to optimize brain health and function.