DAOs are legal entities in the Marshall Islands.

The Pacific island state recognizes decentralized autonomous organizations.

The Republic of the Marshall Islands has recognized decentralized autonomous organizations (DAOs) as legal entities, giving collectively owned and managed blockchain projects global recognition.

The Marshall Islands amended the Non-Profit Entities Act 2021, which now recognizes DAOs, blockchain-based entities governed by self-organizing communities. The amendment made it possible to incorporate Admiralty LLC, the island country's first DAO. MIDAO Directory Services Inc., a domestic organization established to assist DAOs in the Marshall Islands, assisted in the incorporation.

The new law currently allows any DAO to register and operate in the Marshall Islands.

“This is a unique moment to lead,” said Bobby Muller, former Marshall Islands chief secretary and co-founder of MIDAO. He believes DAOs will help create “more efficient and less hierarchical” organizations.

The Marshall Islands hopes to become a global hub for DAO registration, domicile, use cases, and mass adoption. He added:

"This includes low-cost incorporation, a supportive government with internationally recognized courts, and a technologically open environment."

According to the World Bank, the Marshall Islands is an independent island state in the Pacific Ocean near the Equator. The island state has been actively exploring use cases for digital assets since at least 2018, when it set out to create a blockchain-based cryptocurrency that would be legal tender alongside the US dollar.

In February 2018, the Marshall Islands approved the creation of a new cryptocurrency, Sovereign (SOV). As expected, the IMF has criticized the plan, citing concerns that a digital sovereign currency would jeopardize the state's financial stability. The IMF has also criticized El Salvador, the first country to recognize Bitcoin (BTC) as legal tender.

Marshall Islands senator David Paul said the DAO legislation does not pose the same issues as a government-backed cryptocurrency. “A sovereign digital currency is financial and raises concerns about money laundering,” he said. This is more about giving DAOs legal recognition to make their case to regulators, investors, and consumers.

More on Web3 & Crypto

Modern Eremite

3 years ago

The complete, easy-to-understand guide to bitcoin

Introduction

Markets rely on knowledge.

The internet provided practically endless knowledge and wisdom. Humanity has never seen such leverage. Technology's progress drives us to adapt to a changing world, changing our routines and behaviors.

In a digital age, people may struggle to live in the analogue world of their upbringing. Can those who can't adapt change their lives? I won't answer. We should teach those who are willing to learn, nevertheless. Unravel the modern world's riddles and give them wisdom.

Adapt or die. Accept the future or remain behind.

This essay will help you comprehend Bitcoin better than most market participants and the general public. Let's dig into Bitcoin.

Join me.

Ascension

Bitcoin.org was registered in August 2008. The Bitcoin whitepaper was published on 31 October 2008. The document intrigued and motivated people around the world, including technical engineers and sovereignty seekers. Since then, Bitcoin's whitepaper has been read and researched to comprehend its essential concept.

I recommend reading the whitepaper yourself. You'll be able to say you read the Bitcoin whitepaper instead of simply Googling "what is Bitcoin" and reading the fundamental definition without knowing the revolution's scope. The article links to Bitcoin's whitepaper. To avoid being overwhelmed by the whitepaper, read the following article first.

Bitcoin isn't the first attempt at peer-to-peer digital currency. Hashcash, a proof-of-work scheme, and Bit Gold, a digital currency proposal, came earlier. These two Bitcoin precursors failed to gain traction and produce the network effect needed for general adoption. After many struggles, Bitcoin emerged as the most successful cryptocurrency, leading the way for others.

Satoshi Nakamoto, an active bitcointalk.org user, created Bitcoin. Satoshi's identity remains unknown. Satoshi's last bitcointalk.org login was 12 December 2010. Since then, he has disappeared. Thus, conspiracies and riddles surround Bitcoin's creator. I've heard many theories, some insane and others well-thought-out.

It's not about who created it; it's about knowing its potential. Since its start, Satoshi's legacy has changed the world and will continue to.

Block-by-block blockchain

Bitcoin is a distributed ledger. What's the meaning?

Everyone can view all blockchain transactions, but no one can undo or delete them.

Imagine you and your friends routinely eat out, but only one pays. You're careful with money and what others owe you. How can everyone access the info without it being changed?

You'll keep a notebook of your evening's transactions. Everyone will take a page home. If one of you changed the page's data, the group would notice and reject it. The majority will establish consensus and offer official facts.

Miners add a new Bitcoin block to the main blockchain roughly every 10 minutes. The appended block contains miner-verified transactions. Once that block has been added, miners move on to the next set of user transactions.
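The notebook analogy can be sketched in a few lines of Python. This is a toy illustration, not Bitcoin's real block format: the field names, the JSON encoding, and the helper functions `block_hash`, `build_chain`, and `is_valid` are all assumptions made for the example. The point is only that each page stores the fingerprint of the page before it, so a changed entry is noticed.

```python
import hashlib
import json

GENESIS_PREV_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index, prev_hash, transactions):
    """Fingerprint of one block (toy encoding, not Bitcoin's header format)."""
    payload = json.dumps(
        {"index": index, "prev_hash": prev_hash, "transactions": transactions},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode()).hexdigest()


def build_chain(batches):
    """Turn a list of transaction batches into a list of linked blocks."""
    chain, prev_hash = [], GENESIS_PREV_HASH
    for index, transactions in enumerate(batches):
        digest = block_hash(index, prev_hash, transactions)
        chain.append({"index": index, "prev_hash": prev_hash,
                      "transactions": transactions, "hash": digest})
        prev_hash = digest
    return chain


def is_valid(chain):
    """Recompute every fingerprint and check that each block links to the last."""
    prev_hash = GENESIS_PREV_HASH
    for block in chain:
        recomputed = block_hash(block["index"], block["prev_hash"], block["transactions"])
        if block["prev_hash"] != prev_hash or recomputed != block["hash"]:
            return False
        prev_hash = block["hash"]
    return True


chain = build_chain([["Alice paid 30 for dinner"], ["Bob owes Alice 10"]])
print(is_valid(chain))   # True
chain[0]["transactions"][0] = "Alice paid 300 for dinner"  # someone edits their page
print(is_valid(chain))   # False
```

In the real network, every participant runs a check like `is_valid` on their own copy, which is why a single edited copy gets rejected by the rest of the group.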

Bitcoin Proof of Work—prove you earned it

Any firm needs hardworking personnel to expand and serve clients. Bitcoin isn't that different.

Bitcoin's Proof of Work consensus system needs individuals to validate and create new blocks and check for malicious actors. I'll discuss Bitcoin's blockchain consensus method.

Proof of Work helps Bitcoin reach network consensus. The network is checked and safeguarded by Bitcoin-mining machines built on CPUs, GPUs, or ASICs (Application-Specific Integrated Circuits).
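To get a feel for what those machines are doing, here is a minimal sketch of a proof-of-work search. It is a simplification under stated assumptions: real Bitcoin mining hashes an 80-byte block header twice with SHA-256 and compares the result against a numeric target, while this toy `mine` function (a hypothetical helper) hashes a string once and looks for a number of leading zero hex digits.

```python
import hashlib


def mine(block_data, difficulty):
    """Try nonces until the hash starts with `difficulty` zero hex digits."""
    prefix = "0" * difficulty
    nonce = 0
    while True:
        digest = hashlib.sha256(f"{block_data}{nonce}".encode()).hexdigest()
        if digest.startswith(prefix):
            return nonce, digest
        nonce += 1


nonce, digest = mine("block 1: Alice pays Bob 1 BTC", difficulty=4)
print(nonce, digest)

# Anyone can check the work with a single hash, which is what makes it a "proof".
check = hashlib.sha256(f"block 1: Alice pays Bob 1 BTC{nonce}".encode()).hexdigest()
print(check == digest)
```

Finding the nonce takes many attempts, but verifying it takes one. Each extra zero digit in this toy version makes the search about 16 times longer.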

Roughly every 10 minutes, the miner who produces the next block is rewarded in Bitcoin for securing and verifying the network. As a solo miner, you're unlikely to find a block yourself, so miners form pools that combine their processing power to increase their chances of winning.

In the early days of Bitcoin, individual mining systems were more popular due to low maintenance costs and larger earnings prospects. Over time, people created larger and larger Bitcoin mining facilities that required a lot of space and sophisticated cooling systems to keep machines from overheating.

Proof of Work is a vital part of the Bitcoin network, as network security requires the processing power of devices purchased with fiat currency. Miners must invest in mining facilities, which creates a new business branch, mining facilities ownership. Bitcoin mining is a topic for a future article.

More mining, less reward

Bitcoin is scarce by design.

Why is it rare? It all comes down to 21,000,000 Bitcoins.

Were all Bitcoins mined? Nope. Bitcoin's supply grows until it hits 21 million coins. Initially, 50 BTC was mined with each block, and each block took about 10 minutes. Around 2140, the last Bitcoin will be mined.

But 50 BTC every 10 minutes does not give me the year 2140. Indeed, careful reader. That is why Bitcoin's halving process is so important.

What is halving?

The block reward is halved every 210,000 blocks, which takes around 4 years. The initial payout was 50 BTC per block and was reduced to 25 BTC after the first 210,000 blocks. The first halving occurred on November 28, 2012, when 10,500,000 BTC (50%) had been mined. As of April 2022, the block reward is 6.25 BTC, and it is expected to drop to 3.125 BTC at the next halving, estimated for 19 March 2024.
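The halving schedule is simple enough to check with a few lines of arithmetic. The sketch below assumes amounts are counted in satoshis (1 BTC = 100,000,000 satoshis) and that each halving rounds down to a whole satoshi; the function names are made up for this example.

```python
BLOCKS_PER_HALVING = 210_000
SATS_PER_BTC = 100_000_000
INITIAL_REWARD_SATS = 50 * SATS_PER_BTC  # 50 BTC per block at launch


def block_reward_sats(height):
    """Reward for the block at a given height, halved every 210,000 blocks."""
    halvings = height // BLOCKS_PER_HALVING
    return INITIAL_REWARD_SATS >> halvings  # integer halving, rounds down


def total_supply_sats():
    """Add up the rewards of every halving period until the reward hits zero."""
    total, reward = 0, INITIAL_REWARD_SATS
    while reward > 0:
        total += reward * BLOCKS_PER_HALVING
        reward //= 2
    return total


print(block_reward_sats(0) / SATS_PER_BTC)        # 50.0
print(block_reward_sats(210_000) / SATS_PER_BTC)  # 25.0
print(block_reward_sats(630_000) / SATS_PER_BTC)  # 6.25
print(total_supply_sats() / SATS_PER_BTC)         # just under 21 million
```

The first period alone pays out 210,000 x 50 = 10,500,000 BTC, which matches the 50% figure above. Every later period pays half of the one before, so the total creeps toward 21 million without ever quite reaching it.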

The halving method is tied to Bitcoin's hashrate. Here's what "hashrate" means.

What if we increased the number of miners and hashrate they provide to produce a block every 10 minutes? Wouldn't we manufacture blocks faster?

Blocks are generated roughly every 10 minutes, with little deviation. Due to the built-in adaptive difficulty algorithm, the overall hashrate does not affect block production time for long. With increased hashrate, it becomes harder to construct a block. We can estimate when the next halving will occur because the 10-minute target per block is held steady.
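Here is a rough sketch of how such an adaptive difficulty rule can work. It assumes Bitcoin's commonly documented parameters, which the text above does not spell out: a retarget every 2,016 blocks and an adjustment capped at a factor of 4 in either direction. The `retarget` function is a hypothetical helper working on a plain difficulty number, not on the compact target format real nodes use.

```python
TARGET_BLOCK_SECONDS = 600   # the 10-minute goal
RETARGET_INTERVAL = 2016     # blocks between adjustments (assumed, see above)
EXPECTED_SECONDS = TARGET_BLOCK_SECONDS * RETARGET_INTERVAL  # about two weeks


def retarget(old_difficulty, actual_seconds):
    """Scale difficulty by how fast the last interval was mined."""
    # Cap the measured time so one adjustment never exceeds a factor of 4.
    clamped = min(max(actual_seconds, EXPECTED_SECONDS / 4), EXPECTED_SECONDS * 4)
    return old_difficulty * EXPECTED_SECONDS / clamped


# Hashrate doubled, so the interval took one week instead of two:
print(retarget(100.0, EXPECTED_SECONDS / 2))  # 200.0
# Hashrate halved, so the interval took four weeks:
print(retarget(100.0, EXPECTED_SECONDS * 2))  # 50.0
```

More miners make blocks arrive faster for a while, the difficulty rises in response, and block time drifts back toward 10 minutes.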

Building with nodes and blocks

For someone new to crypto, the unusual terms and words may be overwhelming. You'll also find everyday words whose meaning is easy to guess, even if you have only a vague idea of how they work and what they do. Consider blockchain technology.

Nodes and blocks: Think about that for a moment. What is your first idea?

The blockchain is a chain of validated blocks added to the main chain. What's a "block"? What's inside?

The block is another page in the blockchain book that has been filled with transaction information and accepted by the majority.

We won't go into detail about what each block includes and how it's built, as long as you understand its purpose.

What about nodes?

Nodes, along with miners, verify the blockchain's state independently. But why?

To create a full blockchain node, you must download the whole Bitcoin blockchain and check every transaction against Bitcoin's consensus criteria.

What's Bitcoin's size?

In April 2022, the Bitcoin blockchain was 389.72GB.

Bitcoin's blockchain has miners and node runners.

Let's revisit the US gold rush. Miners mine gold with their own power (physical and monetary resources) and are rewarded with gold (Bitcoin). All become richer with more gold, and so does the country.

Nodes are like sheriffs, ensuring everything is done according to consensus rules and that there are no rogue miners or network users.

Lost and held bitcoin

Does the Bitcoin exchange price match each coin's price? How many coins remain after 21,000,000? 21 million or less?

Common sense suggests a 21 million-coin supply.

What if I lost 1BTC from a cold wallet?

What if I saved 1000BTC on paper in 2010 and it was damaged?

What if I mined Bitcoin in 2010 and lost the keys?

Satoshi Nakamoto's coins? Since then, those coins haven't moved.

How many BTC are truly in circulation?

Many people are trying to answer this question, and you may discover a variety of studies and individual research on the topic. Be cautious of the findings because they can't be evaluated and the statistics are hazy guesses.

On the other hand, we have long-term investors who won't sell their Bitcoin or will sell only small amounts to cover mining or living needs.

The price of Bitcoin is determined by supply and demand on exchanges using liquid BTC. How many BTC are left after subtracting lost and non-custodial BTC?

We have significantly less Bitcoin in circulation than you think, thus the price may not reflect demand if we knew the exact quantity of coins available.

True HODLers and diamond-hand investors won't sell you their coins, no matter the market.

What's UTXO?

Unspent (U) Transaction (TX) Output (O)

Imagine taking a $100 bill to a store. After choosing a drink and munchies, you walk to the checkout to pay. The cashier takes your $100 bill and gives you $25.50 in change. It's in your wallet.

Is it simply 100$? No way.

The $25.50 in your wallet is unrelated to the $100 bill you used. Your wallet's $25.50 is just bills and coins. Your wallet may contain these coins and bills:

2x $10, 1x $5, and 1x $0.50

or

1x $10, 3x $5, and 2x $0.25

Any combination of coins and bills can equal $25.50. You don't care, and I'd wager you've never ever considered it.

That is UTXO. Now, I'll detail the Bitcoin blockchain and how UTXO works, as it's crucial to know what coins you have in your (hopefully) cold wallet.

You purchased 1 BTC. Is it a single coin? No. It's a set of UTXOs that add up to 1 BTC. Then you send the BTC to a cold wallet. Say you pay a 0.001 BTC fee and send 0.999 BTC to your cold wallet. Is it the 1 BTC you got before? Well, yes and no. The value is the same as before, minus the fee, but the old UTXOs have been spent and new ones now sit at a different blockchain address. It's like handing someone a wallet: they remove the coins needed for a network charge, then return the rest of the coins and notes.

UTXO is a simple concept, but it's crucial to grasp how it works to comprehend dangers like dust attacks and how coins may be tracked.
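The wallet-and-change idea can be sketched as follows. This is a toy model: real wallets track UTXOs by transaction ID and output index and use smarter coin-selection strategies, while the hypothetical `spend` function below treats a wallet as a plain list of amounts in satoshis (1 BTC = 100,000,000 satoshis) and picks the largest coins first.

```python
def spend(utxos, amount, fee):
    """Pick UTXOs (largest first) to cover amount + fee and compute the change."""
    needed = amount + fee
    selected, total = [], 0
    for value in sorted(utxos, reverse=True):
        if total >= needed:
            break
        selected.append(value)
        total += value
    if total < needed:
        raise ValueError("not enough funds to cover amount plus fee")

    # Spent UTXOs disappear; any leftover comes back as one new change UTXO.
    remaining = list(utxos)
    for value in selected:
        remaining.remove(value)
    change = total - needed
    if change > 0:
        remaining.append(change)
    return selected, change, remaining


wallet = [50_000_000, 30_000_000, 20_000_000]  # three UTXOs adding up to 1 BTC
inputs, change, wallet_after = spend(wallet, amount=60_000_000, fee=100_000)
print(inputs)        # [50000000, 30000000]
print(change)        # 19900000
print(wallet_after)  # [20000000, 19900000]
```

Just like the $100 bill at the checkout, the two coins that were spent are gone, and the change is a brand-new coin. This is also why a tiny "dust" UTXO sent to your address can later be used to link your coins together when it is spent alongside them.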

Lightning Network: fast cash

You've probably heard of "Layer 2 blockchain" projects.

What does it mean?

Layer 2 on a blockchain is an additional layer that increases the speed and quantity of transactions per minute and reduces transaction fees.

Imagine going to an obsolete bank to transfer money to another account and having to pay a charge and wait. Alternatively, you can transfer funds via your bank account or a mobile app without paying a fee, or with a low fee, and the cash appears almost instantly. Layer 1 and Layer 2 payment systems differ in a similar way.

Layer 1 is not obsolete; it merely has more essential things to focus on, including providing the blockchain with new, validated blocks, whereas Layer 2 solutions aim to hand Layer 1 transactions that have already been processed and verified. The primary blockchain, Bitcoin, will only receive the wallets' final state. All transactions made inside a channel before it is closed and settled are irrelevant to the main chain.

Layer 2 and the Lightning Network's goal are now clear. Most Layer 2 solutions on multiple blockchains are created as blockchains, however Lightning Network is not. Remember the following remark, as it best describes Lightning.

Lightning Network connects public and private Bitcoin wallets.

Opening a private channel with another wallet notifies just two parties. The creation and opening of a public channel tells the network that anyone can use it.
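The idea that only the final state reaches the main chain can be sketched with a toy channel. This leaves out everything that makes the real Lightning Network safe (signed commitment transactions, HTLCs, routing, penalties), and the `Channel` class and its method names are invented for this illustration.

```python
class Channel:
    """Toy two-party payment channel: many off-chain updates, one final state."""

    def __init__(self, alice_sats, bob_sats):
        # Opening the channel is the first on-chain event.
        self.balances = {"alice": alice_sats, "bob": bob_sats}
        self.off_chain_updates = 0
        self.is_open = True

    def pay(self, sender, receiver, amount):
        """Shift balance inside the channel; nothing is written to the chain."""
        if not self.is_open:
            raise RuntimeError("channel is closed")
        if amount <= 0 or self.balances[sender] < amount:
            raise ValueError("invalid or unaffordable payment")
        self.balances[sender] -= amount
        self.balances[receiver] += amount
        self.off_chain_updates += 1

    def close(self):
        """Closing is the second on-chain event: only final balances are settled."""
        self.is_open = False
        return dict(self.balances)


channel = Channel(alice_sats=100_000, bob_sats=0)
channel.pay("alice", "bob", 30_000)
channel.pay("bob", "alice", 5_000)
channel.pay("alice", "bob", 10_000)
print(channel.off_chain_updates)  # 3
print(channel.close())            # {'alice': 65000, 'bob': 35000}
```

Three payments happened, but the main chain would only ever see two events: the channel opening and the final balances at closing.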

Why create a public Lightning Network channel?

Every transaction through your channel generates fees.

Money, if you don't know.

See who benefits when in doubt.

Anonymity, huh?

Bitcoin anonymity? Bitcoin's anonymity was utilized to launder money.

Well… You've heard similar stories. When you ask why or how it permits people to remain anonymous, the conversation ends as if it were just a story someone heard.

Bitcoin isn't private. It's pseudonymous.

What if someone tracks your transactions and discovers your wallet address? Where is your anonymity then?

Bitcoin is like bulletproof glass storage; you can't take or change the money. If you dig and analyze the data, you can see what's inside.

Every online action leaves a trace, and traces may be tracked. People often forget this guideline.

A tool like that can help you observe what the major players, or whales, are doing with their coins when the market is uncertain. Many people spend time analyzing on-chain data. Worth it?

Ask yourself a question. What are the big players' options? Do you think they're letting you see their wallets for a small on-chain data fee?

Instead of short-term behaviors, focus on long-term trends.

More wallet transactions leave traces. Having nothing to conceal isn't a defect. Can it lead to regulating Bitcoin so every transaction is tracked like in banks today?

But wait. How can criminals pay out Bitcoin? They're doing it, aren't they?

Mixers can anonymize your coins, letting you use them freely. This is not a guide on how to make your coins anonymous; it could do more harm than good if you don't know what you're doing.

Remember, being anonymous attracts greater attention.

Bitcoin isn't the only cryptocurrency we can use to buy things. Using cryptocurrency appropriately can provide usability and anonymity. Monero (XMR), Zcash (ZEC), and Litecoin (LTC) following the Mimblewimble upgrade are examples.

Summary

Congratulations! You've reached the conclusion of the article and learned about Bitcoin and cryptocurrency. You've entered the future.

You know what Bitcoin is, how its blockchain works, and why it's not anonymous. I bet you can explain Lightning Network and UTXO to your buddies.

Markets rely on knowledge. Prepare yourself for success before taking the first step. Let your expertise be your edge.

This article is a summary of this one.

Juxtathinka

3 years ago

Why Is Blockchain So Popular?

What is Blockchain?

The blockchain is a shared, immutable ledger that helps businesses record transactions and track assets. The blockchain can track tangible assets like cars, houses, and land. Intangible assets like intellectual property can also be tracked on the blockchain.

Imagine a blockchain as a distributed database split among computer nodes. A blockchain stores data in blocks. When a block is full, it is closed and linked to the previously filled block. All subsequent information is then compiled into a new block that will be added to the chain once it is filled.

The blockchain is designed so that adding a transaction requires consensus. That means a majority of network nodes must approve a transaction. No single authority can control transactions on the blockchain. The network nodes use cryptographic keys and passwords to validate each other's transactions.

Blockchain History

The blockchain was not as popular in 1991 when Stuart Haber and W. Scott Stornetta worked on it. The blocks were designed to prevent tampering with document timestamps. Stuart Haber and W. Scott Stornetta improved their work in 1992 by using Merkle trees to increase efficiency and collect more documents on a single block.

In 2004, Hal Finney developed Reusable Proof of Work. This system allows users to verify token transfers in real time. Satoshi Nakamoto invented distributed blockchains in 2008. He improved the blockchain design so that new blocks could be added to the chain without being signed by trusted parties.

Satoshi Nakamoto mined the first Bitcoin block in 2009, earning 50 Bitcoins. Then, in 2013, Vitalik Buterin stated that Bitcoin needed a scripting language for building decentralized applications. He then created Ethereum, a new blockchain-based platform for decentralized apps. Since the Ethereum launch in 2015, different blockchain platforms have been launched, including Hyperledger by the Linux Foundation, EOS.IO by block.one, IOTA, NEO, Monero, and Dash. The blockchain industry is still growing, and so are the businesses built on it.

Blockchain Components

The Blockchain is made up of many parts:

1. Node: Nodes come in two types: full and partial. A full node has the authority to validate, accept, or reject any transaction. Partial nodes, or lightweight nodes, only keep the transaction's hash value. They don't keep a full copy of the blockchain, so they need only limited storage and processing power.

2. Ledger: A public database of information. A ledger can be public, decentralized, or distributed. Anyone on the blockchain can access the public ledger and add data to it. It allows each node to participate in every transaction. The distributed ledger copies the database to all nodes. A group of nodes can verify transactions or add data blocks to the blockchain.

3. Wallet: A blockchain wallet allows users to send, receive, store, and exchange digital assets, as well as monitor and manage their value. Wallets come in two flavors: hardware and software. Online or offline wallets exist. Online or hot wallets are used when online. Without an internet connection, offline wallets like paper and hardware wallets can store private keys and sign transactions. Wallets generally secure transactions with a private key and wallet address.

4. Nonce: A nonce is short for "number used once". It describes a unique random number. Nonces are frequently generated to modify cryptographic results. A nonce is a number that changes over time and is used to prevent value reuse. To prevent document reproduction, it can be a timestamp. A cryptographic hash function can also use it to vary input. Nonces can be used for authentication, hashing, or even electronic signatures.

5. Hash: A hash function is a mathematical function that converts inputs of arbitrary length to outputs of fixed length. That is, regardless of file size, the hash always has the same length, and in practice each file gets its own distinct hash. The input cannot be recovered from the hashed output, but the hash can identify a file. Hashes can be used to verify message integrity and authenticate data. Cryptographic hash functions add security to standard hash functions, making it difficult to decipher message contents or track senders.
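A short sketch makes the hash properties concrete. It uses SHA-256 from Python's standard `hashlib` module as one example of a cryptographic hash function; the sample messages and the `fingerprint` helper are made up for the illustration.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """Return the SHA-256 hash of the input as a hex string."""
    return hashlib.sha256(data).hexdigest()


short_input = b"pay Bob 1 BTC"
long_input = b"pay Bob 1 BTC" * 10_000   # a much larger "file"
tweaked_input = b"pay Bob 2 BTC"         # a single character changed

# Fixed-length output: always 64 hex characters, whatever the input size.
print(len(fingerprint(short_input)), len(fingerprint(long_input)))  # 64 64

# A tiny change in the input gives a completely different hash.
print(fingerprint(short_input) == fingerprint(tweaked_input))  # False

# The same input always gives the same hash, so it can identify a file.
print(fingerprint(short_input) == fingerprint(b"pay Bob 1 BTC"))  # True
```

Combining a hash with a nonce, as described in item 4, simply means hashing the data together with a changing number so that the output changes on every attempt.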

Blockchain: Pros and Cons

The blockchain provides a trustworthy, secure, and trackable platform for business transactions quickly and affordably. The blockchain reduces paperwork, documentation errors, and the need for third parties to verify transactions.

Blockchain security relies on a system of unaltered transaction records with end-to-end encryption, reducing fraud and unauthorized activity. The blockchain also helps verify the authenticity of items like farm food, medicines, and even employee certification. The ability to control data gives users a level of privacy that no other platform can match.

In the case of Bitcoin, the blockchain can only handle seven transactions per second, unlike Hyperledger and Visa, which can handle ten thousand transactions per second. Also, each participant node must verify and approve transactions, slowing down exchanges and limiting scalability.

The blockchain requires a lot of energy to run. In addition, the blockchain is not a hugely distributable system, and it is destructible. The security of the blockchain can be compromised by hackers; it is not completely foolproof. Also, since blockchain entries are immutable, data cannot be removed. The blockchain's high energy consumption and limited scalability reduce its efficiency.

Why Is Blockchain So Popular?

The blockchain is a technology giant. In 2018, 90% of US and European banks began exploring blockchain's potential. In 2021, 24% of companies are expected to invest $5 million to $10 million in blockchain. By the end of 2024, it is expected that corporations will spend $20 billion annually on blockchain technical services.

Blockchain is used in cryptocurrency, medical records storage, identity verification, election voting, security, agriculture, business, and many other fields. The blockchain offers a more secure, decentralized, and less corrupt system of making global payments, which cryptocurrency enthusiasts love. Users who want to save time and energy prefer it because it is faster and less bureaucratic than banking and healthcare systems.

Most organizations have jumped on the blockchain bandwagon, and for good reason: the blockchain industry has never had more potential. The launch of IBM's Blockchain Wire, Paystack, Aza Finance and Bloom are visible proof of the wonders that the blockchain has done. The blockchain's cryptocurrency segment may not be as popular in the future as the blockchain's other segments, as evidenced by the various industries where it is used. The blockchain is here to stay, and it will be discussed for a long time, not just in tech, but in many industries.

Read original post here

Henrique Centieiro

3 years ago

DAO 101: Everything you need to know

Maybe you'll work for a DAO next! There is over $1 billion in NFTs in the Flamingo DAO. Another DAO tried to buy the NFL team Denver Broncos. The UkraineDAO raised over $7 million for Ukraine. The PleasrDAO paid $4 million for a Wu-Tang Clan album that belonged to the “pharma bro.”

DAOs move billions and employ thousands. So learn what a DAO is, how it works, and how to create one!

DAO? So, what? Why is it better?

DAO stands for Decentralized Autonomous Organization. Some people also like to refer to it as a Digital Autonomous Organization, but I prefer the former.

They are virtual organizations. In the real world, you have organizations or companies right? These firms have shareholders and a board. Usually, anyone with authority makes decisions. It could be the CEO, the Board, or the HIPPO. If you own stock in that company, you may also be able to influence decisions. It's now possible to do something similar but much better and more equitable in the cryptocurrency world.

This article informs you:

What is a DAO, and what are the most common types?

What are the advantages and disadvantages of DAOs, if any, compared with traditional companies?

Is a DAO legally recognized?

How secure is a DAO?

I’m ready whenever you are!

A DAO is a type of company that is operated by smart contracts on the blockchain. Smart contracts are computer code that self-executes our commands. Those contracts can encode any kind of agreement. Most second-generation blockchains support smart contracts. Examples are Ethereum, Solana, Polygon, Binance Smart Chain, EOS, etc. I think I've gone off topic. Back on track. Now let's go!

Unlike traditional corporations, DAOs are governed by smart contracts. Unlike traditional company governance, DAO governance is fully transparent and auditable. That's one of the things that sets it apart. The clarity!

A DAO differs from a traditional company in one major way: it is decentralized. DAOs are more ‘democratic' than traditional companies because anyone can vote on decisions. Anyone! In a DAO, we (you and I) make the decisions, not the top-shots. We are the CEO and investors. A DAO gives its community members power. We get to decide.

As long as you are a stakeholder, i.e. own a portion of the DAO tokens, you can participate in the DAO. Tokens are open to all. It's just a matter of exchanging it. Ownership of DAO tokens entitles you to exclusive benefits such as governance, voting, and so on. You can vote for a move, a plan, or the DAO's next investment. You can even pitch for funding. Any ‘big' decision in a DAO requires a vote from all stakeholders. In this case, ‘token-holders'! In other words, they function like stock.
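To make token voting concrete, here is a minimal sketch of a token-weighted tally. Real DAOs run this logic inside smart contracts, and each one sets its own rules. The `tally` function, the 50% quorum, and the holder names below are all assumptions made up for the example.

```python
def tally(holdings, votes, quorum=0.5):
    """Count votes weighted by token balance and apply a turnout quorum."""
    total_supply = sum(holdings.values())
    weight = {"yes": 0, "no": 0}
    for voter, choice in votes.items():
        # A voter's say equals the number of tokens they hold.
        weight[choice] += holdings.get(voter, 0)
    turnout = (weight["yes"] + weight["no"]) / total_supply
    passed = turnout >= quorum and weight["yes"] > weight["no"]
    return passed, weight, turnout


holdings = {"ana": 60, "ben": 30, "cy": 10}     # DAO tokens per holder
votes = {"ana": "no", "ben": "yes", "cy": "yes"}

passed, weight, turnout = tally(holdings, votes)
print(passed)   # False
print(weight)   # {'yes': 40, 'no': 60}
print(turnout)  # 1.0
```

Two of the three holders voted yes, yet the proposal fails because votes are counted per token, not per person. That is the sense in which DAO tokens function like stock.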

What are the 5 DAO types?

Different DAOs exist. We will categorize decentralized autonomous organizations based on their mode of operation, structure, and even technology. Here are a few. You've probably heard of them:

1. DeFi DAO

These DAOs offer DeFi (decentralized finance) services via smart contract protocols. They use tokens to vote on protocol and financial changes. Uniswap, Aave, Maker DAO, and Olympus DAO are some examples. Most of these DAOs manage billions.

Maker DAO was one of the first DeFi protocols ever created. It is a decentralized organization on the Ethereum blockchain that allows cryptocurrency lending and borrowing without a middleman.

Maker DAO issues DAI, a stable coin. DAI is a top-rated USD-pegged stable coin.

Maker DAO has an MKR token. These token holders are in charge of adjusting the Dai stable coin policy. Simply put, MKR tokens represent DAO “shares”.

2. Investment DAO

Investors pool their funds and make investment decisions. Investing in new businesses or art is one example. Investment DAOs help DeFi operations pool capital. The Meta Cartel DAO is a community of people who want to invest in new projects built on the Ethereum blockchain. Instead of investing one by one, they want to pool their resources and share ideas on how to make better financial decisions.

Other investment DAOs include the LAO and Friends with Benefits.

3. Grant/Launchpad DAO

In a grant DAO, community members contribute funds to a grant pool and vote on how to allocate and distribute them. These DAOs fund new DeFi projects. Those in need only need to apply. The Moloch DAO is a great Grant DAO. The tokens are used to allocate capital. Also see Gitcoin and Seedify.

4. Collector DAO

I debated whether to put it under ‘Investment DAO' or leave it alone. It's a subset of investment DAOs. This group buys non-fungible tokens, artwork, and collectibles. The market for NFTs has recently exploded, and it's time to investigate. The Pleasr DAO is a collector DAO. One copy of Wu-Tang Clan's "Once Upon a Time in Shaolin" cost the Pleasr DAO $4 million. Pleasr DAO is also known for buying the Doge meme NFT. Other collector DAOs include the Flamingo DAO, Mutant Cats DAO, and Constitution DAO. Don't underestimate their websites' "childish" style. They have millions.

5. Social DAO

These are social networking and interaction platforms. For example, Decentraland DAO and Friends With Benefits DAO.

What are the DAO Benefits?

Here are some of the benefits of a decentralized autonomous organization:

- They are trustless. You don’t need to trust a CEO or management team

- It can’t be shut down unless a majority of the token holders agree. The government can't shut it down because it isn't centralized.

- It's fully democratic

- It is open-source and fully transparent.

What about DAO drawbacks?

We've been saying DAOs are the bomb? But are they really the shit? What could go wrong with DAO?

DAOs may contain bugs. If they are hacked, the results can be catastrophic.

No trade secrets exist. Because the smart contract is transparent and coded on the blockchain, it can be copied. It may be used by another organization without credit. Maybe DAOs should use Secret, Oasis, or Horizen blockchain networks.

Are DAOs legally recognized?

In most countries, DAO regulation is nonexistent or unclear. Most DAOs don’t have a legal personality. In the US, the Howey Test and the Securities Act of 1933 determine whether DAO tokens are securities. Although most countries follow the US, this applies only to the US. Wyoming became the first state to recognize DAOs as legal entities in July 2021 after passing a DAO bill. DAOs registered in Wyoming are thus legally recognized as business entities in the US and receive the same legal protections as a Limited Liability Company.

In terms of cyber-security, how secure is a DAO?

Blockchains are secure. However, smart contracts may have security flaws or bugs. This can be avoided by third-party smart contract reviews, testing, and auditing.

Finally, Decentralized Autonomous Organizations are timeless. Let us examine the current situation: the invasion of Ukraine. A DAO was formed to help Ukrainian troops fighting the Russians. It was named Ukraine DAO. Pleasr DAO, NFT studio Trippy Labs, and Russian art collective Pussy Riot organized this fundraiser. Coindesk reports that over $3 million has been raised in Ethereum-based tokens. AidForUkraine, a DAO aimed at supporting Ukraine's defense efforts, has also launched and accepts Solana token donations. They are fully transparent, uncensorable, and can’t be shut down or sanctioned.

DAOs are undeniably the future of blockchain. Everyone is paying attention. Personally, I believe traditional companies will soon have to choose between adapting or being left behind.

Long version of this post: https://medium.datadriveninvestor.com/dao-101-all-you-need-to-know-about-daos-275060016663

You might also like

SAHIL SAPRU

3 years ago

Growth tactics that grew businesses from 1 to 100

Everyone wants a scalable startup.

Innovation helps launch a startup. The secret to a scalable business is growth trials (from 1 to 100).

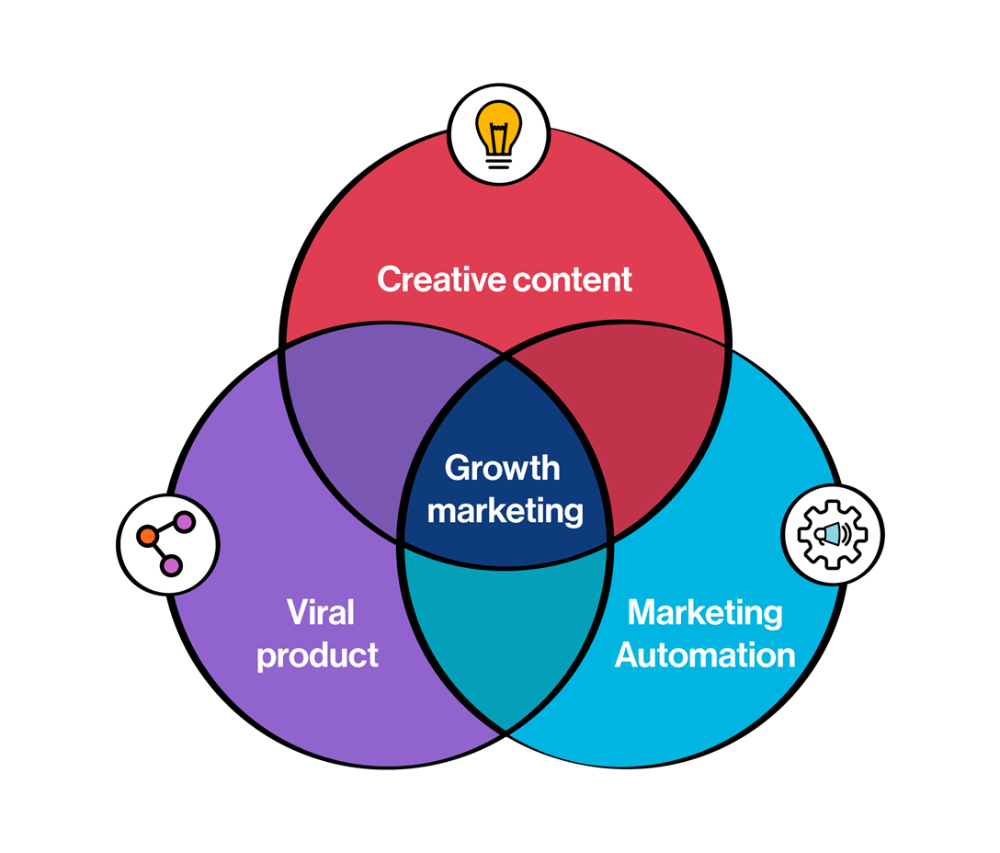

Growth marketing combines marketing and product development for long-term growth.

Today, I'll explain growth hacking strategies popular startups used to scale.

1/ Facebook - A user's social value is proportional to their friends.

Facebook built its user base using content marketing and paid ads. Mark and his investors grew worried in 2007 when Facebook's growth stalled at 90 million users.

Chamath Palihapitiya was brought in by Mark.

The team tested SEO keywords and MAU chasing. The growth team introduced “people you may know.”

This feature reunited long-lost friends and family. Casual users became power users as the retention curve flattened.

Growth Hack Insights: With social network effect the value of your product or platform increases exponentially if you have users you know or can relate with.

2/ Airbnb - Focus on your value propositions

Airbnb nearly failed in 2009. The company's weekly revenue was $200 and they had less than 2 months of runway.

Enter Paul Graham. The team noticed a pattern in 40 listings. Their website's property photos sucked.

Why?

Because these photos were taken with regular smartphones. Users didn't like the first impression.

Graham suggested traveling to New York to rent a camera, meet with property owners, and replace amateur photos with high-resolution ones.

A week later, the team's weekly revenue doubled to $400, indicating they were on track.

Growth Hack Insights: When selling an “online experience” ensure that your value proposition is aesthetic enough for users to enjoy being associated with them.

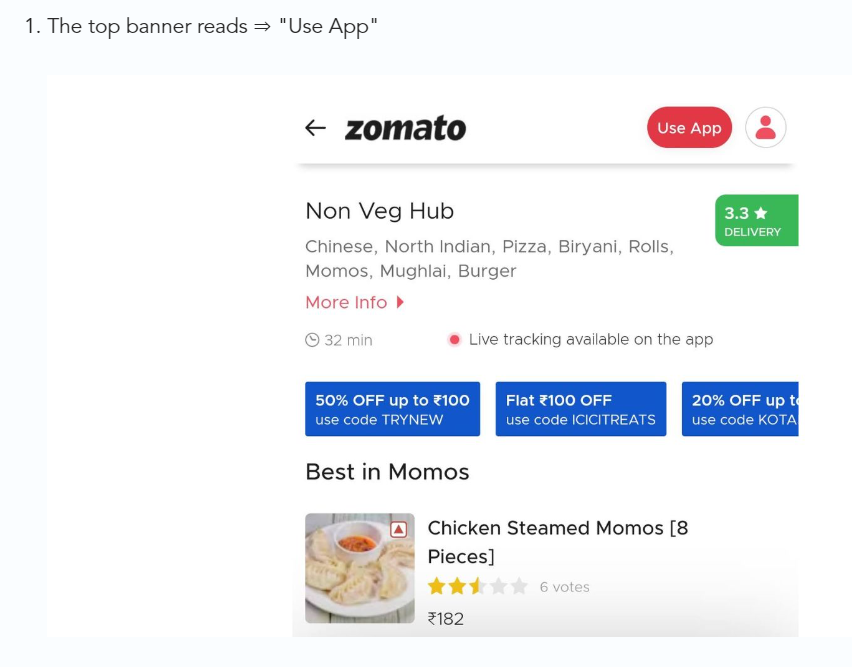

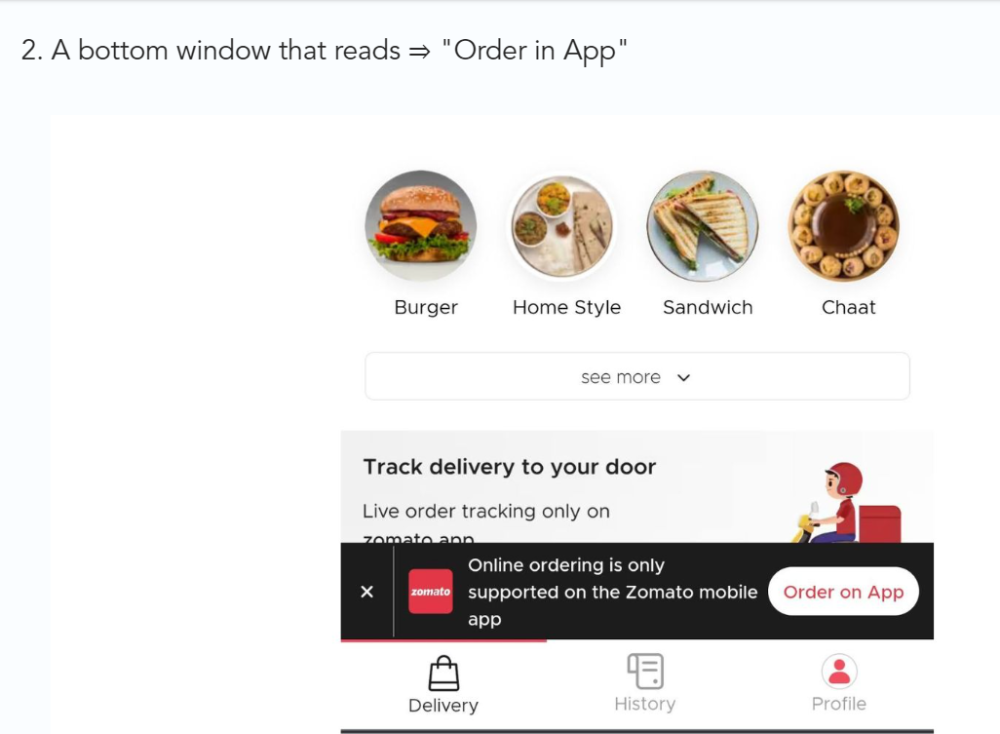

3/ Zomato - A company's smartphone push ensured growth.

Zomato delivers food. User retention was a challenge for the founders. Indian food customers are notorious for switching brands at the drop of a hat.

Zomato wanted users to order food online and repeat orders throughout the week.

Zomato created an attractive website with “near me” keywords for SEO indexing.

Zomato gambled to increase repeat orders. They only allowed mobile app food orders.

Zomato thought mobile apps were stickier. Product innovations in search/discovery/ordering or marketing campaigns like discounts/in-app notifications/nudges can improve user experience.

Zomato went public in 2021 after users kept ordering food online.

Growth Hack Insights: To improve user retention, build platforms that are sticky by design. Your product and marketing teams will do the rest.

4/ Hotmail - Signaling helps build premium users.

Ever sent or received an email or tweet with a signature like “Sent from iPhone”?

Hotmail did it first! One investor suggested Hotmail add a signature to every email.

Overnight, thousands joined the company. Six months later, the company had 1 million users.

When serving an existing customer, improve their social standing. Signaling helps keep the top 1% of users.

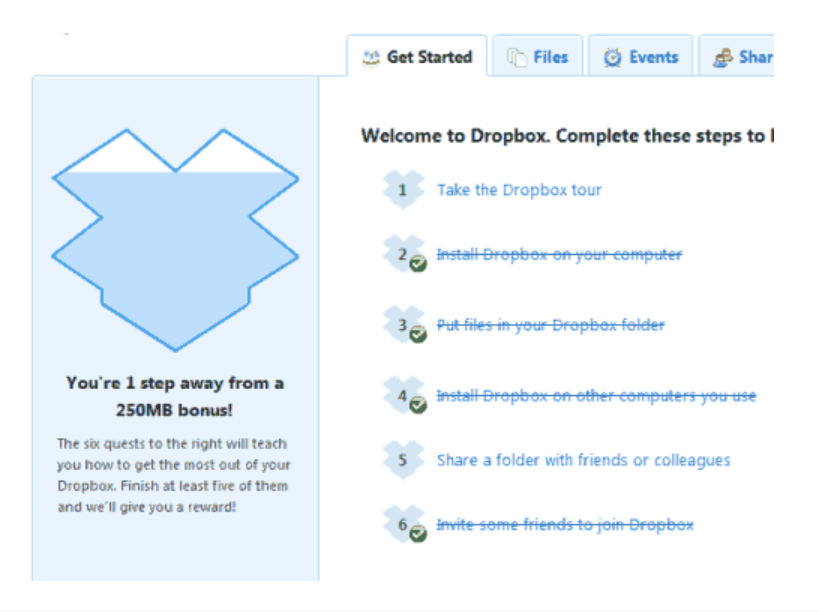

5/ Dropbox - Respect loyal customers

Dropbox is a company that puts people over profits. The company prioritized existing users.

Dropbox rewarded loyal users by offering 250 MB of free storage to anyone who referred a friend. The referral hack helped Dropbox get millions of downloads in its first few months.

Growth Hack Insights: When you are serving an existing customer, think of ways to improve their social positioning. Signaling goes a long way in persuading the top 1% to stay.

These experiments weren’t hacks. Hundreds of failed experiments and a lot of user research lay behind them. Scaling up experiments is difficult.

Contact me if you want to grow your startup's user base.

Daniel Vassallo

3 years ago

Why I quit a $500K job at Amazon to work for myself

I quit my 8-year Amazon job last week. I wasn't motivated to do another year despite promotions, pay, recognition, and praise.

In AWS, I built developer tools. I could have worked in that field forever.

I became an Amazon developer. Within 3.5 years, I was promoted twice to senior engineer and would have been promoted to principal engineer if I stayed. The company said I had great potential.

Over time, I became a recognized expert and leader within the company. I was respected.

First year I made $75K, last year $511K. If I stayed another two years, I could have made $1M.

Despite Amazon's reputation, my work–life balance was good. I no longer needed to prove myself and could do everything in 40 hours a week. My team worked from home once a week, and I rarely opened my laptop nights or weekends.

My coworkers were great. I had three generous, empathetic managers. I’m very grateful to everyone I worked with.

Everything was going well and getting better. Yet despite my career and income growth, my motivation to go to work each morning was declining.

Another promotion, pay raise, or big project wouldn't have boosted my motivation. And motivation wasn't the only thing waning. So was my freedom.

Demotivation

My motivation was high in the beginning. I worked with one other person on an internal tool that drew little scrutiny. I had more freedom to choose how and what to work on than in recent years. The two of us improved the tool, talked to users, released updates, and tested it. Whatever we wanted, we did. We did our best and were mostly self-directed.

In recent years, things changed. I was working on my department's most important project, which had many stakeholders and complex goals. What I could do depended on my ability to convince others it was the best way to achieve our goals.

At Amazon, I was always working on someone else's terms. The terms started out simple (keep fixing it), but became more complex over time (maximize all goals; satisfy all stakeholders). Working in a large organization imposed restrictions on how to do the work, what to do, what goals to set, and what business to pursue. This situation forced me to do things I didn't want to do.

Finding New Motivation

What would I do forever? Not something I did until I reached a milestone (an exit), but something I'd do until I'm 80. What could I do for the next 45 years that would make me excited to wake up and pay my bills? Is that too unambitious? Nope. Because I'm motivated by two things.

One is an external carrot or stick. I'm not forced to file my taxes every April, but I do because I don't want to go to jail. Or I may not like something but do it anyway because I need to pay the bills or want a nice car. This is extrinsic motivation.

The other is internal. When there's no carrot or stick, this is what motivates me. It fuels hobbies. I wanted work that was intrinsically motivating.

Is this too low-key? Extrinsic motivation isn't sustainable. Getting promoted felt good for a week, then it was over. When I hit $100K, I admired my W2 for a few days, but then it wore off. Same thing happened at $200K, $300K, $400K, and $500K. Earning $1M or $10M wouldn't change anything. I feel the same about every material reward or possession. Getting them feels good at first, but quickly fades.

Things I've done since I was a kid, when no one forced me to, don't wear off. Coding, selling my creations, charting my own path, and being honest. Why not always use my strengths and motivation? I'm lucky to live in a time when I can work independently in my field without large investments. So that’s what I’m doing.

What’s Next?

I'm going all-in on independence and will make a living from scratch. I won't only do what I like, but whatever I do will be on my terms. My goal is to cover my family's expenses before my savings run out while doing something I enjoy. What more could I want from my work?

You can now follow me on Twitter as I continue to document my journey.

This post is a summary. Read full article here

Saskia Ketz

2 years ago

I hate marketing for my business, but here's how I push myself to keep going

Start now.

When it comes to building my business, I’m passionate about a lot of things. I love creating user experiences that simplify branding essentials. I love creating new typefaces and color combinations to inspire logo designers. I love fixing problems to improve my product.

Business marketing isn't my thing.

I'm not alone in this. Many solopreneurs, like me, struggle to advertise their business and to push themselves to work on it.

Without a lot of promotion, no company will succeed. Marketing is 80% of developing a firm, and when you're starting out, it's even more. Some believe that you shouldn't build anything until you've begun marketing your idea and found enough buyers.

Marketing your business without marketing experience is difficult. There are various outlets and techniques to learn. Instead of figuring out where to start, it's easier to return to your area of expertise, whether that's writing, designing product features, or improving your site's back end. Right?

First, realize that your role as a founder is to market your firm. Even as a product-focused founder, I rarely get to work on the product itself.

Secondly, use these basic methods that have helped me dedicate adequate time and focus to marketing. They're all simple to apply, and they've increased my business's visibility and success.

1. Establish buckets for every task

You've probably heard the advice to schedule tasks you don't like. As simple as it sounds, blocking a substantial piece of my workday for marketing duties like LinkedIn or Twitter outreach, AppSumo customer support, or SEO has forced me to spend time on them.

Giving myself plenty of room to focus on product development has helped even more. Sure, this means scheduling time to work on product enhancements after my four-hour marketing sprint.

It also involves making space to store product inspiration and ideas throughout the day so I don't get distracted. This is like the advice to keep a notebook beside your bed to write down your insomniac ideas. I keep fonts, color palettes, and product ideas in folders on my desktop. Knowing these concepts won't be lost lets me focus on marketing in the moment. And when I have limited time to work on the product, the research is already collected, so I can get more done faster.

2. Look for various accountability systems

Accountability is essential for self-discipline. To keep focused on my marketing tasks, I've needed various streams of accountability, big and little.

Accountability groups are great for bigger things. Mine is SaaS Camp, a sales outreach coaching program. We discuss marketing duties and results every week. This motivates me to do enough each week to be proud of my accomplishments. Hearing what works (or doesn't) for others also gives me benchmarks for my own marketing outcomes and plenty of fresh techniques to try.

Even the best accountability group can't watch you 24/7. I use a friend's simple method that shouldn't work (but it does). When I have a lot of marketing chores, like DMing 50 Twitter users about my product, I put that many Q-tips in a pen holder on my desk. After each task, I move one Q-tip to an empty pen holder. When you have a lot of minor jobs to perform, it helps to see your progress. You might use toothpicks, M&Ms, or anything else you have a lot of.

3. Continue to monitor your feedback loops

Knowing which marketing methods work best requires monitoring results. As an entrepreneur with little go-to-market expertise, every tactic I pursue is an experiment. I need to know how each trial is doing to maximize my time.

I put Google and Facebook ads on hold because they took too much time and money to produce a return. LinkedIn outreach, by contrast, has been invaluable to me. I feel that talking to potential customers one-on-one is the fastest way to grasp their pain points, figure out my messaging, and find product-market fit.

Staying close to the data offers another benefit. Seeing positive results makes it easier to keep doing work you don't like. That's why every fitness program tracks progress.

Marketing's goal is to increase customers and revenue, so I've found it helpful to track those metrics and celebrate monthly gains. I share these updates for extra accountability.

Finding faster feedback loops is also motivating. Marketing brings more clients and feedback, in my opinion. Product-focused founders love that feedback. Positive reviews make me proud that my product is benefitting others, while negative ones provide me with suggestions for product changes that can improve my business.

The best advice I can give a lone creator who's afraid of marketing is to just start. Start early to learn by doing and reduce marketing stress. Start early to develop habits and successes that will keep you going. The sooner you start, the sooner you'll have enough consumers to return to your favorite work.