10 Ways to Make Money Online in 2022

As a tech-savvy person (and software engineer) or just a casual technology user, I'm sure you've had this same question countless times: How do I make money online? and how do I make money with my PC/Mac?

You're in luck! Today, I will list the top 5 easiest ways to make money online. Maybe a top ten in the future? Top 5 tips for 2022.

1. Using the gig economy

There are many websites on the internet that allow you to earn extra money using skills and equipment that you already own.

I'm referring to the gig economy. It's a great way to earn a steady passive income from the comfort of your own home. For some sites, premium subscriptions are available to increase sales and access features like bidding on more proposals.

Some of these are:

- Freelancer

- Upwork

- Fiverr (⭐ my personal favorite)

- TaskRabbit

2. Mineprize

MINEPRIZE is a great way to make money online. What's more, You need not do anything! You earn money by lending your idle CPU power to MINEPRIZE.

To register with MINEPRIZE, all you need is an email address and a password. Let MINEPRIZE use your resources, and watch the money roll in! You can earn up to $100 per month by letting your computer calculate. That's insane.

3. Writing

“O Romeo, Romeo, why art thou Romeo?” Okay, I admit that not all writing is Shakespearean. To be a copywriter, you'll need to be fluent in English. Thankfully, we don't have to use typewriters anymore.

Writing is a skill that can earn you a lot of money (claps for the rhyme).

Here are a few ways you can make money typing on your fancy keyboard:

Self-publish a book

Write scripts for video creators

Write for social media

Book-checking

Content marketing help

What a list within a list!

4. Coding

Yes, kids. You've probably coded before if you understand

You've probably coded before if you understand

print("hello world");

Computational thinking (or coding) is one of the most lucrative ways to earn extra money, or even as a main source of income.

Of course, there are hardcode coders (like me) who write everything line by line, binary di — okay, that last part is a bit exaggerated.

But you can also make money by writing websites or apps or creating low code or no code platforms.

But you can also make money by writing websites or apps or creating low code or no code platforms.

Some low-code platforms

Sheet : spreadsheets to apps :

Loading... We'll install your new app... No-Code Your team can create apps and automate tasks. Agile…

www.appsheet.com

Low-code platform | Business app creator - Zoho Creator

Work is going digital, and businesses of all sizes must adapt quickly. Zoho Creator is a...

www.zoho.com

Sell your data with TrueSource. NO CODE NEEDED

Upload data, configure your product, and earn in minutes.

www.truesource.io

Cool, huh?

5. Created Content

If we use the internet correctly, we can gain unfathomable wealth and extra money. But this one is a bit more difficult. Unlike some of the other items on this list, it takes a lot of time up front.

I'm referring to sites like YouTube and Medium. It's a great way to earn money both passively and actively. With the likes of Jake- and Logan Paul, PewDiePie (a.k.a. Felix Kjellberg) and others, it's never too late to become a millionaire on YouTube. YouTubers are always rising to the top with great content.

6. NFTs and Cryptocurrency

It is now possible to amass large sums of money by buying and selling digital assets on NFTs and cryptocurrency exchanges. Binance's Initial Game Offer rewards early investors who produce the best results.

One awesome game sold a piece of its plot for US$7.2 million! It's Axie Infinity. It's free and available on Google Play and Apple Store.

7. Affiliate Marketing

Affiliate marketing is a form of advertising where businesses pay others (like bloggers) to promote their goods and services. Here's an example. I write a blog (like this one) and post an affiliate link to an item I recommend buying — say, a camera — and if you buy the camera, I get a commission!

These programs pay well:

- Elementor

- AWeber

- Sendinblue

- ConvertKit\sLeadpages

- GetResponse

- SEMRush\sFiverr

- Pabbly

8. Start a blog

Now, if you're a writer or just really passionate about something or a niche, blogging could potentially monetize that passion!

Create a blog about anything you can think of. It's okay to start right here on Medium, as I did.

9. Dropshipping

And I mean that in the best possible way — drop shopping is ridiculously easy to set up, but difficult to maintain for some.

Luckily, Shopify has made setting up an online store a breeze. Drop-shipping from Alibaba and DHGate is quite common. You've got a winner if you can find a local distributor willing to let you drop ship their product!

10. Set up an Online Course

If you have a skill and can articulate it, online education is for you.

Skillshare, Pluralsight, and Coursera have all made inroads in recent years, upskilling people with courses that YOU can create and earn from.

That's it for today! Please share if you liked this post. If not, well —

More on Web3 & Crypto

Langston Thomas

3 years ago

A Simple Guide to NFT Blockchains

Ethereum's blockchain rules NFTs. Many consider it the one-stop shop for NFTs, and it's become the most talked-about and trafficked blockchain in existence.

Other blockchains are becoming popular in NFTs. Crypto-artists and NFT enthusiasts have sought new places to mint and trade NFTs due to Ethereum's high transaction costs and environmental impact.

When choosing a blockchain to mint on, there are several factors to consider. Size, creator costs, consumer spending habits, security, and community input are important. We've created a high-level summary of blockchains for NFTs to help clarify the fast-paced world of web3 tech.

Ethereum

Ethereum currently has the most NFTs. It's decentralized and provides financial and legal services without intermediaries. It houses popular NFT marketplaces (OpenSea), projects (CryptoPunks and the Bored Ape Yacht Club), and artists (Pak and Beeple).

It's also expensive and energy-intensive. This is because Ethereum works using a Proof-of-Work (PoW) mechanism. PoW requires computers to solve puzzles to add blocks and transactions to the blockchain. Solving these puzzles requires a lot of computer power, resulting in astronomical energy loss.

You should consider this blockchain first due to its popularity, security, decentralization, and ease of use.

Solana

Solana is a fast programmable blockchain. Its proof-of-history and proof-of-stake (PoS) consensus mechanisms eliminate complex puzzles. Reduced validation times and fees result.

PoS users stake their cryptocurrency to become a block validator. Validators get SOL. This encourages and rewards users to become stakers. PoH works with PoS to cryptographically verify time between events. Solana blockchain ensures transactions are in order and found by the correct leader (validator).

Solana's PoS and PoH mechanisms keep transaction fees and times low. Solana isn't as popular as Ethereum, so there are fewer NFT marketplaces and blockchain traders.

Tezos

Tezos is a greener blockchain. Tezos rose in 2021. Hic et Nunc was hailed as an economic alternative to Ethereum-centric marketplaces until Nov. 14, 2021.

Similar to Solana, Tezos uses a PoS consensus mechanism and only a PoS mechanism to reduce computational work. This blockchain uses two million times less energy than Ethereum. It's cheaper than Ethereum (but does cost more than Solana).

Tezos is a good place to start minting NFTs in bulk. Objkt is the largest Tezos marketplace.

Flow

Flow is a high-performance blockchain for NFTs, games, and decentralized apps (dApps). Flow is built with scalability in mind, so billions of people could interact with NFTs on the blockchain.

Flow became the NBA's blockchain partner in 2019. Flow, a product of Dapper labs (the team behind CryptoKitties), launched and hosts NBA Top Shot, making the blockchain integral to the popularity of non-fungible tokens.

Flow uses PoS to verify transactions, like Tezos. Developers are working on a model to handle 10,000 transactions per second on the blockchain. Low transaction fees.

Flow NFTs are tradeable on Blocktobay, OpenSea, Rarible, Foundation, and other platforms. NBA, NFL, UFC, and others have launched NFT marketplaces on Flow. Flow isn't as popular as Ethereum, resulting in fewer NFT marketplaces and blockchain traders.

Asset Exchange (WAX)

WAX is king of virtual collectibles. WAX is popular for digitalized versions of legacy collectibles like trading cards, figurines, memorabilia, etc.

Wax uses a PoS mechanism, but also creates carbon offset NFTs and partners with Climate Care. Like Flow, WAX transaction fees are low, and network fees are redistributed to the WAX community as an incentive to collectors.

WAX marketplaces host Topps, NASCAR, Hot Wheels, and cult classic film franchises like Godzilla, The Princess Bride, and Spiderman.

Binance Smart Chain

BSC is another good option for balancing fees and performance. High-speed transactions and low fees hurt decentralization. BSC is most centralized.

Binance Smart Chain uses Proof of Staked Authority (PoSA) to support a short block time and low fees. The 21 validators needed to run the exchange switch every 24 hours. 11 of the 21 validators are directly connected to the Binance Crypto Exchange, according to reports.

While many in the crypto and NFT ecosystems dislike centralization, the BSC NFT market picked up speed in 2021. OpenBiSea, AirNFTs, JuggerWorld, and others are gaining popularity despite not having as robust an ecosystem as Ethereum.

Ashraful Islam

4 years ago

Clean API Call With React Hooks

| Photo by Juanjo Jaramillo on Unsplash |

Calling APIs is the most common thing to do in any modern web application. When it comes to talking with an API then most of the time we need to do a lot of repetitive things like getting data from an API call, handling the success or error case, and so on.

When calling tens of hundreds of API calls we always have to do those tedious tasks. We can handle those things efficiently by putting a higher level of abstraction over those barebone API calls, whereas in some small applications, sometimes we don’t even care.

The problem comes when we start adding new features on top of the existing features without handling the API calls in an efficient and reusable manner. In that case for all of those API calls related repetitions, we end up with a lot of repetitive code across the whole application.

In React, we have different approaches for calling an API. Nowadays mostly we use React hooks. With React hooks, it’s possible to handle API calls in a very clean and consistent way throughout the application in spite of whatever the application size is. So let’s see how we can make a clean and reusable API calling layer using React hooks for a simple web application.

I’m using a code sandbox for this blog which you can get here.

import "./styles.css";

import React, { useEffect, useState } from "react";

import axios from "axios";

export default function App() {

const [posts, setPosts] = useState(null);

const [error, setError] = useState("");

const [loading, setLoading] = useState(false);

useEffect(() => {

handlePosts();

}, []);

const handlePosts = async () => {

setLoading(true);

try {

const result = await axios.get(

"https://jsonplaceholder.typicode.com/posts"

);

setPosts(result.data);

} catch (err) {

setError(err.message || "Unexpected Error!");

} finally {

setLoading(false);

}

};

return (

<div className="App">

<div>

<h1>Posts</h1>

{loading && <p>Posts are loading!</p>}

{error && <p>{error}</p>}

<ul>

{posts?.map((post) => (

<li key={post.id}>{post.title}</li>

))}

</ul>

</div>

</div>

);

}

I know the example above isn’t the best code but at least it’s working and it’s valid code. I will try to improve that later. For now, we can just focus on the bare minimum things for calling an API.

Here, you can try to get posts data from JsonPlaceholer. Those are the most common steps we follow for calling an API like requesting data, handling loading, success, and error cases.

If we try to call another API from the same component then how that would gonna look? Let’s see.

500: Internal Server Error

Now it’s going insane! For calling two simple APIs we’ve done a lot of duplication. On a top-level view, the component is doing nothing but just making two GET requests and handling the success and error cases. For each request, it’s maintaining three states which will periodically increase later if we’ve more calls.

Let’s refactor to make the code more reusable with fewer repetitions.

Step 1: Create a Hook for the Redundant API Request Codes

Most of the repetitions we have done so far are about requesting data, handing the async things, handling errors, success, and loading states. How about encapsulating those things inside a hook?

The only unique things we are doing inside handleComments and handlePosts are calling different endpoints. The rest of the things are pretty much the same. So we can create a hook that will handle the redundant works for us and from outside we’ll let it know which API to call.

500: Internal Server Error

Here, this request function is identical to what we were doing on the handlePosts and handleComments. The only difference is, it’s calling an async function apiFunc which we will provide as a parameter with this hook. This apiFunc is the only independent thing among any of the API calls we need.

With hooks in action, let’s change our old codes in App component, like this:

500: Internal Server Error

How about the current code? Isn’t it beautiful without any repetitions and duplicate API call handling things?

Let’s continue our journey from the current code. We can make App component more elegant. Now it knows a lot of details about the underlying library for the API call. It shouldn’t know that. So, here’s the next step…

Step 2: One Component Should Take Just One Responsibility

Our App component knows too much about the API calling mechanism. Its responsibility should just request the data. How the data will be requested under the hood, it shouldn’t care about that.

We will extract the API client-related codes from the App component. Also, we will group all the API request-related codes based on the API resource. Now, this is our API client:

import axios from "axios";

const apiClient = axios.create({

// Later read this URL from an environment variable

baseURL: "https://jsonplaceholder.typicode.com"

});

export default apiClient;

All API calls for comments resource will be in the following file:

import client from "./client";

const getComments = () => client.get("/comments");

export default {

getComments

};

All API calls for posts resource are placed in the following file:

import client from "./client";

const getPosts = () => client.get("/posts");

export default {

getPosts

};

Finally, the App component looks like the following:

import "./styles.css";

import React, { useEffect } from "react";

import commentsApi from "./api/comments";

import postsApi from "./api/posts";

import useApi from "./hooks/useApi";

export default function App() {

const getPostsApi = useApi(postsApi.getPosts);

const getCommentsApi = useApi(commentsApi.getComments);

useEffect(() => {

getPostsApi.request();

getCommentsApi.request();

}, []);

return (

<div className="App">

{/* Post List */}

<div>

<h1>Posts</h1>

{getPostsApi.loading && <p>Posts are loading!</p>}

{getPostsApi.error && <p>{getPostsApi.error}</p>}

<ul>

{getPostsApi.data?.map((post) => (

<li key={post.id}>{post.title}</li>

))}

</ul>

</div>

{/* Comment List */}

<div>

<h1>Comments</h1>

{getCommentsApi.loading && <p>Comments are loading!</p>}

{getCommentsApi.error && <p>{getCommentsApi.error}</p>}

<ul>

{getCommentsApi.data?.map((comment) => (

<li key={comment.id}>{comment.name}</li>

))}

</ul>

</div>

</div>

);

}

Now it doesn’t know anything about how the APIs get called. Tomorrow if we want to change the API calling library from axios to fetch or anything else, our App component code will not get affected. We can just change the codes form client.js This is the beauty of abstraction.

Apart from the abstraction of API calls, Appcomponent isn’t right the place to show the list of the posts and comments. It’s a high-level component. It shouldn’t handle such low-level data interpolation things.

So we should move this data display-related things to another low-level component. Here I placed those directly in the App component just for the demonstration purpose and not to distract with component composition-related things.

Final Thoughts

The React library gives the flexibility for using any kind of third-party library based on the application’s needs. As it doesn’t have any predefined architecture so different teams/developers adopted different approaches to developing applications with React. There’s nothing good or bad. We choose the development practice based on our needs/choices. One thing that is there beyond any choices is writing clean and maintainable codes.

Scott Hickmann

4 years ago

YouTube

This is a YouTube video:

You might also like

Jenn Leach

3 years ago

This clever Instagram marketing technique increased my sales to $30,000 per month.

No Paid Ads Required

I had an online store. After a year of running the company alongside my 9-to-5, I made enough to resign.

That day was amazing.

This Instagram marketing plan helped the store succeed.

How did I increase my sales to five figures a month without using any paid advertising?

I used customer event marketing.

I'm not sure this term exists. I invented it to describe what I was doing.

Instagram word-of-mouth, fan engagement, and interaction drove sales.

If a customer liked or disliked a product, the buzz would drive attention to the store.

I used customer-based events to increase engagement and store sales.

Success!

Here are the weekly Instagram customer events I coordinated while running my business:

Be the Buyer Days

Flash sales

Mystery boxes

Be the Buyer Days: How do they work?

Be the Buyer Days are exactly that.

You choose a day to share stock selections with social media followers.

This is an easy approach to engaging customers and getting fans enthusiastic about new releases.

First, pick a handful of items you’re considering ordering. I’d usually pick around 3 for Be the Buyer Day.

Then I'd poll the crowd on Instagram to vote on their favorites.

This was before Instagram stories, polls, and all the other cool features Instagram offers today. I think using these tools now would make this event even better.

I'd ask customers their favorite back then.

The growing comments excited customers.

Then I'd declare the winner, acquire the products, and start selling it.

How do flash sales work?

I mostly ran flash sales.

You choose a limited number of itemsdd for a few-hour sale.

We wanted most sales to result in sold-out items.

When an item sells out, it contributes to the sensation of scarcity and can inspire customers to visit your store to buy a comparable product, join your email list, become a fan, etc.

We hoped they'd act quickly.

I'd hold flash deals twice a week, which generated scarcity and boosted sales.

The store had a few thousand Instagram followers when I started flash deals.

Each flash sale item would make $400 to $600.

$400 x 3= $1,200

That's $1,200 on social media!

Twice a week, you'll make roughly $10K a month from Instagram.

$1,200/day x 8 events/month=$9,600

Flash sales did great.

We held weekly flash deals and sent social media and email reminders. That’s about it!

How are mystery boxes put together?

All you do is package a box of store products and sell it as a mystery box on TikTok or retail websites.

A $100 mystery box would cost $30.

You're discounting high-value boxes.

This is a clever approach to get rid of excess inventory and makes customers happy.

It worked!

Be the Buyer Days, flash deals, and mystery boxes helped build my company without paid advertisements.

All companies can use customer event marketing. Involving customers and providing an engaging environment can boost sales.

Try it!

Stephen Moore

3 years ago

Adam Neumanns is working to create the future of living in a classic example of a guy failing upward.

The comeback tour continues…

First, he founded a $47 billion co-working company (sorry, a “tech company”).

He established WeLive to disrupt apartment life.

Then he created WeGrow, a school that tossed aside the usual curriculum to feed children's souls and release their potential.

He raised the world’s consciousness.

Then he blew it all up (without raising the world’s consciousness). (He bought a wave pool.)

Adam Neumann's WeWork business burned investors' money. The founder sailed off with unimaginable riches, leaving long-time employees with worthless stocks and the company bleeding money. His track record, which includes a failing baby clothing company, should have stopped investors cold.

Once the dust settled, folks went on. We forgot about the Neumanns! We forgot about the private jets, company retreats, many houses, and WeWork's crippling. In that moment, the prodigal son of entrepreneurship returned, choosing the blockchain as his industry. His homecoming tour began with Flowcarbon, which sold Goddess Nature Tokens to lessen companies' carbon footprints.

Did it work?

Of course not.

Despite receiving $70 million from Andreessen Horowitz's a16z, the project has been halted just two months after its announcement.

This triumph should lower his grade.

Neumann seems to have moved on and has another revolutionary idea for the future of living. Flow (not Flowcarbon) aims to help people live in flow and will launch in 2023. It's the classic Neumann pitch: lofty goals, yogababble, and charisma to attract investors.

It's a winning formula for one investment fund. a16z has backed the project with its largest single check, $350 million. It has a splash page and 3,000 rental units, but is valued at over $1 billion. The blog post praised Neumann for reimagining the office and leading a paradigm-shifting global company.

Flow's mission is to solve the nation's housing crisis. How? Idk. It involves offering community-centric services in apartment properties to the same remote workforce he once wooed with free beer and a pingpong table. Revolutionary! It seems the goal is to apply WeWork's goals of transforming physical spaces and building community to apartments to solve many of today's housing problems.

The elevator pitch probably sounded great.

At least a16z knows it's a near-impossible task, calling it a seismic shift. Marc Andreessen opposes affordable housing in his wealthy Silicon Valley town. As details of the project emerge, more investors will likely throw ethics and morals out the window to go with the flow, throwing money at a man known for burning through it while building toxic companies, hoping he can bank another fantasy valuation before it all crashes.

Insanity is repeating the same action and expecting a different result. Everyone on the Neumann hype train needs to sober up.

Like WeWork, this venture Won’tWork.

Like before, it'll cause a shitstorm.

Dmytro Spilka

3 years ago

Why NFTs Have a Bright Future Away from Collectible Art After Punks and Apes

After a crazy second half of 2021 and significant trade volumes into 2022, the market for NFT artworks like Bored Ape Yacht Club, CryptoPunks, and Pudgy Penguins has begun a sharp collapse as market downturns hit token values.

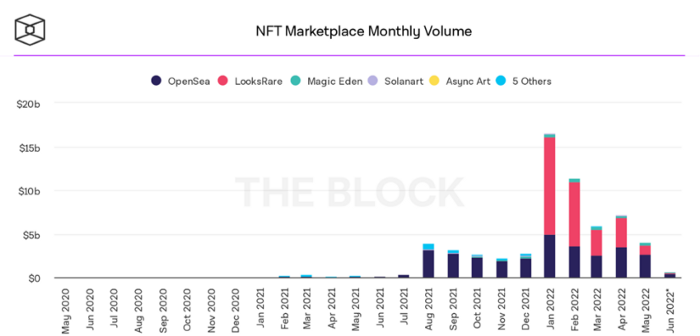

DappRadar data shows NFT monthly sales have fallen below $1 billion since June 2021. OpenSea, the world's largest NFT exchange, has seen sales volume decline 75% since May and is trading like July 2021.

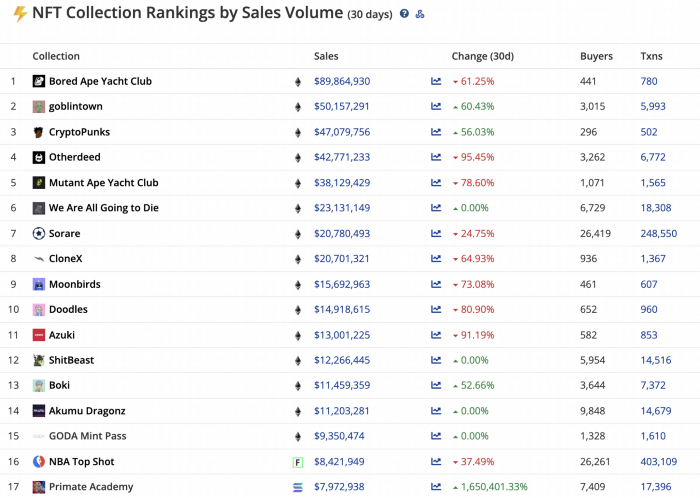

Prices of popular non-fungible tokens have also decreased. Bored Ape Yacht Club (BAYC) has witnessed volume and sales drop 63% and 15%, respectively, in the past month.

BeInCrypto analysis shows market decline. May 2022 cryptocurrency marketplace volume was $4 billion, according to a news platform. This is a sharp drop from April's $7.18 billion.

OpenSea, a big marketplace, contributed $2.6 billion, while LooksRare, Magic Eden, and Solanart also contributed.

NFT markets are digital platforms for buying and selling tokens, similar stock trading platforms. Although some of the world's largest exchanges offer NFT wallets, most users store their NFTs on their favorite marketplaces.

In January 2022, overall NFT sales volume was $16.57 billion, with LooksRare contributing $11.1 billion. May 2022's volume was $12.57 less than January, a 75% drop, and June's is expected to be considerably smaller.

A World Based on Utility

Despite declines in NFT trading volumes, not all investors are negative on NFTs. Although there are uncertainties about the sustainability of NFT-based art collections, there are fewer reservations about utility-based tokens and their significance in technology's future.

In June, business CEO Christof Straub said NFTs may help artists monetize unreleased content, resuscitate catalogs, establish deeper fan connections, and make processes more efficient through technology.

We all know NFTs can't be JPEGs. Straub noted that NFT music rights can offer more equitable rewards to musicians.

Music NFTs are here to stay if they have real value, solve real problems, are trusted and lawful, and have fair and sustainable business models.

NFTs can transform numerous industries, including music. Market opinion is shifting towards tokens with more utility than the social media artworks we're used to seeing.

While the major NFT names remain dominant in terms of volume, new utility-based initiatives are emerging as top 20 collections.

Otherdeed, Sorare, and NBA Top Shot are NFT-based games that rank above Bored Ape Yacht Club and Cryptopunks.

Users can switch video NFTs of basketball players in NBA Top Shot. Similar efforts are emerging in the non-fungible landscape.

Sorare shows how NFTs can support a new way of playing fantasy football, where participants buy and swap trading cards to create a 5-player team that wins rewards based on real-life performances.

Sorare raised 579.7 million in one of Europe's largest Series B financing deals in September 2021. Recently, the platform revealed plans to expand into Major League Baseball.

Strong growth indications suggest a promising future for NFTs. The value of art-based collections like BAYC and CryptoPunks may be questioned as markets become diluted by new limited collections, but the potential for NFTs to become intrinsically linked to tangible utility like online gaming, music and art, and even corporate reward schemes shows the industry has a bright future.