LCX is the latest CEX to have suffered a private key exploit.

The attack began around 10:30 PM +UTC on January 8th.

Peckshield spotted it first, then an official announcement came shortly after.

We’ve said it before; if established companies holding millions of dollars of users’ funds can’t manage their own hot wallet security, what purpose do they serve?

The Unique Selling Proposition (USP) of centralised finance grows smaller by the day.

The official incident report states that 7.94M USD were stolen in total, and that deposits and withdrawals to the platform have been paused.

LCX hot wallet: 0x4631018f63d5e31680fb53c11c9e1b11f1503e6f

Hacker’s wallet: 0x165402279f2c081c54b00f0e08812f3fd4560a05

Stolen funds:

- 162.68 ETH (502,671 USD)

- 3,437,783.23 USDC (3,437,783 USD)

- 761,236.94 EURe (864,840 USD)

- 101,249.71 SAND Token (485,995 USD)

- 1,847.65 LINK (48,557 USD)

- 17,251,192.30 LCX Token (2,466,558 USD)

- 669.00 QNT (115,609 USD)

- 4,819.74 ENJ (10,890 USD)

- 4.76 MKR (9,885 USD)

**~$1M worth of $LCX remains in the address, along with 611k EURe which has been frozen by Monerium.

The rest, a total of 1891 ETH (~$6M) was sent to Tornado Cash.**

Why can’t they keep private keys private?

Is it really that difficult for a traditional corporate structure to maintain good practice?

CeFi hacks leave us with little to say - we can only go on what the team chooses to tell us.

Next time, they can write this article themselves.

See below for a template.

More on Web3 & Crypto

Jonathan Vanian

4 years ago

What is Terra? Your guide to the hot cryptocurrency

With cryptocurrencies like Bitcoin, Ether, and Dogecoin gyrating in value over the past few months, many people are looking at so-called stablecoins like Terra to invest in because of their more predictable prices.

Terraform Labs, which oversees the Terra cryptocurrency project, has benefited from its rising popularity. The company said recently that investors like Arrington Capital, Lightspeed Venture Partners, and Pantera Capital have pledged $150 million to help it incubate various crypto projects that are connected to Terra.

Terraform Labs and its partners have built apps that operate on the company’s blockchain technology that helps keep a permanent and shared record of the firm’s crypto-related financial transactions.

Here’s what you need to know about Terra and the company behind it.

What is Terra?

Terra is a blockchain project developed by Terraform Labs that powers the startup’s cryptocurrencies and financial apps. These cryptocurrencies include the Terra U.S. Dollar, or UST, that is pegged to the U.S. dollar through an algorithm.

Terra is a stablecoin that is intended to reduce the volatility endemic to cryptocurrencies like Bitcoin. Some stablecoins, like Tether, are pegged to more conventional currencies, like the U.S. dollar, through cash and cash equivalents as opposed to an algorithm and associated reserve token.

To mint new UST tokens, a percentage of another digital token and reserve asset, Luna, is “burned.” If the demand for UST rises with more people using the currency, more Luna will be automatically burned and diverted to a community pool. That balancing act is supposed to help stabilize the price, to a degree.

“Luna directly benefits from the economic growth of the Terra economy, and it suffers from contractions of the Terra coin,” Terraform Labs CEO Do Kwon said.

Each time someone buys something—like an ice cream—using UST, that transaction generates a fee, similar to a credit card transaction. That fee is then distributed to people who own Luna tokens, similar to a stock dividend.

Who leads Terra?

The South Korean firm Terraform Labs was founded in 2018 by Daniel Shin and Kwon, who is now the company’s CEO. Kwon is a 29-year-old former Microsoft employee; Shin now heads the Chai online payment service, a Terra partner. Kwon said many Koreans have used the Chai service to buy goods like movie tickets using Terra cryptocurrency.

Terraform Labs does not make money from transactions using its crypto and instead relies on outside funding to operate, Kwon said. It has raised $57 million in funding from investors like HashKey Digital Asset Group, Divergence Digital Currency Fund, and Huobi Capital, according to deal-tracking service PitchBook. The amount raised is in addition to the latest $150 million funding commitment announced on July 16.

What are Terra’s plans?

Terraform Labs plans to use Terra’s blockchain and its associated cryptocurrencies—including one pegged to the Korean won—to create a digital financial system independent of major banks and fintech-app makers. So far, its main source of growth has been in Korea, where people have bought goods at stores, like coffee, using the Chai payment app that’s built on Terra’s blockchain. Kwon said the company’s associated Mirror trading app is experiencing growth in China and Thailand.

Meanwhile, Kwon said Terraform Labs would use its latest $150 million in funding to invest in groups that build financial apps on Terra’s blockchain. He likened the scouting and investing in other groups as akin to a “Y Combinator demo day type of situation,” a reference to the popular startup pitch event organized by early-stage investor Y Combinator.

The combination of all these Terra-specific financial apps shows that Terraform Labs is “almost creating a kind of bank,” said Ryan Watkins, a senior research analyst at cryptocurrency consultancy Messari.

In addition to cryptocurrencies, Terraform Labs has a number of other projects including the Anchor app, a high-yield savings account for holders of the group’s digital coins. Meanwhile, people can use the firm’s associated Mirror app to create synthetic financial assets that mimic more conventional ones, like “tokenized” representations of corporate stocks. These synthetic assets are supposed to be helpful to people like “a small retail trader in Thailand” who can more easily buy shares and “get some exposure to the upside” of stocks that they otherwise wouldn’t have been able to obtain, Kwon said. But some critics have said the U.S. Securities and Exchange Commission may eventually crack down on synthetic stocks, which are currently unregulated.

What do critics say?

Terra still has a long way to go to catch up to bigger cryptocurrency projects like Ethereum.

Most financial transactions involving Terra-related cryptocurrencies have originated in Korea, where its founders are based. Although Terra is becoming more popular in Korea thanks to rising interest in its partner Chai, it’s too early to say whether Terra-related currencies will gain traction in other countries.

Terra’s blockchain runs on a “limited number of nodes,” said Messari’s Watkins, referring to the computers that help keep the system running. That helps reduce latency that may otherwise slow processing of financial transactions, he said.

But the tradeoff is that Terra is less “decentralized” than other blockchain platforms like Ethereum, which is powered by thousands of interconnected computing nodes worldwide. That could make Terra less appealing to some blockchain purists.

Amelie Carver

3 years ago

Web3 Needs More Writers to Educate Us About It

WRITE FOR THE WEB3

Why web3’s messaging is lost and how crypto winter is growing growth seeds

People interested in crypto, blockchain, and web3 typically read Bitcoin and Ethereum's white papers. It's a good idea. Documents produced for developers and academia aren't always the ideal resource for beginners.

Given the surge of extremely technical material and the number of fly-by-nights, rug pulls, and other scams, it's little wonder mainstream audiences regard the blockchain sector as an expensive sideshow act.

What's the solution?

Web3 needs more than just builders.

After joining TikTok, I followed Amy Suto of SutoScience. Amy switched from TV scriptwriting to IT copywriting years ago. She concentrates on web3 now. Decentralized autonomous organizations (DAOs) are seeking skilled copywriters for web3.

Amy has found that web3's basics are easy to grasp; you don't need technical knowledge. There's a paradigm shift in knowing the basics; be persistent and patient.

Apple is positioning itself as a data privacy advocate, leveraging web3's zero-trust ethos on data ownership.

Finn Lobsien, who writes about web3 copywriting for the Mirror and Twitter, agrees: acronyms and abstractions won't do.

Web3 preached to the choir. Curious newcomers have only found whitepapers and scams when trying to learn why the community loves it. No wonder people resist education and buy-in.

Due to the gender gap in crypto (Crypto Bro is not just a stereotype), it attracts people singing to the choir or trying to cash in on the next big thing.

Last year, the industry was booming, so writing wasn't necessary. Now that the bear market has returned (for everyone, but especially web3), holding readers' attention is a valuable skill.

White papers and the Web3

Why does web3 rely so much on non-growth content?

Businesses must polish and improve their messaging moving into the 2022 recession. The 2021 tech boom provided such a sense of affluence and (unsustainable) growth that no one needed great marketing material. The market found them.

This was especially true for web3 and the first-time crypto believers. Obviously. If they knew which was good.

White papers help. White papers are highly technical texts that walk a reader through a product's details. How Does a White Paper Help Your Business and That White Paper Guy discuss them.

They're meant for knowledgeable readers. Investors and the technical (academic/developer) community read web3 white papers. White papers are used when a product is extremely technical or difficult to assist an informed reader to a conclusion. Web3 uses them most often for ICOs (initial coin offerings).

White papers for web3 education help newcomers learn about the web3 industry's components. It's like sending a first-grader to the Annotated Oxford English Dictionary to learn to read. It's a reference, not a learning tool, for words.

Newcomers can use platforms that teach the basics. These included Coinbase's Crypto Basics tutorials or Cryptochicks Academy, founded by the mother of Ethereum's inventor to get more women utilizing and working in crypto.

Discord and Web3 communities

Discord communities are web3's opposite. Discord communities involve personal communications and group involvement.

Online audience growth begins with community building. User personas prefer 1000 dedicated admirers over 1 million lukewarm followers, and the language is much more easygoing. Discord groups are renowned for phishing scams, compromised wallets, and incorrect information, especially since the crypto crisis.

White papers and Discord increase industry insularity. White papers are complicated, and Discord has a high risk threshold.

Web3 and writing ads

Copywriting is emotional, but white papers are logical. It uses the brain's quick-decision centers. It's meant to make the reader invest immediately.

Not bad. People think sales are sleazy, but they can spot the poor things.

Ethical copywriting helps you reach the correct audience. People who gain a following on Medium are likely to have copywriting training and a readership (or three) in mind when they publish. Tim Denning and Sinem Günel know how to identify a target audience and make them want to learn more.

In a fast-moving market, copywriting is less about long-form content like sales pages or blogs, but many organizations do. Instead, the copy is concise, individualized, and high-value. Tweets, email marketing, and IM apps (Discord, Telegram, Slack to a lesser extent) keep engagement high.

What does web3's messaging lack? As DAOs add stricter copyrighting, narrative and connecting tales seem to be missing.

Web3 is passionate about constructing the next internet. Now, they can connect their passion to a specific audience so newcomers understand why.

OnChain Wizard

3 years ago

How to make a >800 million dollars in crypto attacking the once 3rd largest stablecoin, Soros style

Everyone is talking about the $UST attack right now, including Janet Yellen. But no one is talking about how much money the attacker made (or how brilliant it was). Lets dig in.

Our story starts in late March, when the Luna Foundation Guard (or LFG) starts buying BTC to help back $UST. LFG started accumulating BTC on 3/22, and by March 26th had a $1bn+ BTC position. This is leg #1 that made this trade (or attack) brilliant.

The second leg comes in the form of the 4pool Frax announcement for $UST on April 1st. This added the second leg needed to help execute the strategy in a capital efficient way (liquidity will be lower and then the attack is on).

We don't know when the attacker borrowed 100k BTC to start the position, other than that it was sold into Kwon's buying (still speculation). LFG bought 15k BTC between March 27th and April 11th, so lets just take the average price between these dates ($42k).

So you have a ~$4.2bn short position built. Over the same time, the attacker builds a $1bn OTC position in $UST. The stage is now set to create a run on the bank and get paid on your BTC short. In anticipation of the 4pool, LFG initially removes $150mm from 3pool liquidity.

The liquidity was pulled on 5/8 and then the attacker uses $350mm of UST to drain curve liquidity (and LFG pulls another $100mm of liquidity).

But this only starts the de-pegging (down to 0.972 at the lows). LFG begins selling $BTC to defend the peg, causing downward pressure on BTC while the run on $UST was just getting started.

With the Curve liquidity drained, the attacker used the remainder of their $1b OTC $UST position ($650mm or so) to start offloading on Binance. As withdrawals from Anchor turned from concern into panic, this caused a real de-peg as people fled for the exits

So LFG is selling $BTC to restore the peg while the attacker is selling $UST on Binance. Eventually the chain gets congested and the CEXs suspend withdrawals of $UST, fueling the bank run panic. $UST de-pegs to 60c at the bottom, while $BTC bleeds out.

The crypto community panics as they wonder how much $BTC will be sold to keep the peg. There are liquidations across the board and LUNA pukes because of its redemption mechanism (the attacker very well could have shorted LUNA as well). BTC fell 25% from $42k on 4/11 to $31.3k

So how much did our attacker make? There aren't details on where they covered obviously, but if they are able to cover (or buy back) the entire position at ~$32k, that means they made $952mm on the short.

On the $350mm of $UST curve dumps I don't think they took much of a loss, lets assume 3% or just $11m. And lets assume that all the Binance dumps were done at 80c, thats another $125mm cost of doing business. For a grand total profit of $815mm (bf borrow cost).

BTC was the perfect playground for the trade, as the liquidity was there to pull it off. While having LFG involved in BTC, and foreseeing they would sell to keep the peg (and prevent LUNA from dying) was the kicker.

Lastly, the liquidity being low on 3pool in advance of 4pool allowed the attacker to drain it with only $350mm, causing the broader panic in both BTC and $UST. Any shorts on LUNA would've added a lot of P&L here as well, with it falling -65% since 5/7.

And for the reply guys, yes I know a lot of this involves some speculation & assumptions. But a lot of money was made here either way, and I thought it would be cool to dive into how they did it.

You might also like

Joanna Henderson

3 years ago

An Average Day in the Life of a 25-Year-Old -A Rich Man's At-Home Unemployed Girlfriend

And morning water bottle struggles.

Welcome to my TikTok, where I share my stay-at-home life! I'll show you my usual day from morning to night.

I rise early to prepare my guy iced coffee. I make matcha, my favorite drink. I also fill our water bottles, which takes time and effort, so I record and describe the procedure. As you see me perform the unthinkable by putting a water bottle in a soda machine, you'll see my magnificent but unowned condo. My lover has everything, including:

In the living room, a sizable velvet alabaster divan. I was unable to use the words white or sofa in place of alabaster or a divan since they are insufficiently elegant and do not adequately convey how opulent the item is. The price tag on the divan was another huge feature; I'm sure my lover wouldn't purchase any furniture for less than $20k because it would be beneath him.

A plush Swiss coffee-colored Tabriz carpet. Once more, white is a color associated with the underclass; for us, the wealthy, it's alabaster or swiss coffee. Sorry, my boyfriend is wealthy; I'm truly in the same situation. And yet, I’m the one whos freeloading off of him, not you haha!

Soft translucent powder is the hue of the vinyl wallcoverings. I merely made up the name of that hue, but I have to maintain the online character I've established. There is no room for adopting language typical of peasant people; I must reiterate that I am wealthy while they are not.

I rest after filling our water bottles. I'm really fatigued from chores. My boyfriend is skeptical about hiring a housekeeper and cook. Does he assume I'm a servant or maid? I can't be overly demanding or throw a tantrum since he may replace me with a younger version. Leonardo Di Caprio's fault!

After the break, I bring my lover a water bottle. He's off to work with my best wishes. After cleaning the shower, I text my BF saying I broke a nail. He charged $675 for a crystal-topped shellac manicure. Lucky me!

After this morning's crazy choirs, especially the water bottle one, I'm famished. I dress quickly and go to the neighborhood organic-vegan-gluten-free-sugar-free-plasma-free-GMO-free-HBO-free breakfast place. Most folks can't afford $17.99 for a caffeine-free-mushroom-plus-mud-and-electrolytes morning beverage. It goes nicely with my matcha. Eggs Benedict cost $68. English muffins are off-limits. I can't make myself obese. My partner said he'd swap me for a 19-year-old Eastern European if I keep eating bacon.

I leave no tip since tipping is too much pressure and math for me, so I go shopping.

My shopping adventures have gotten monotonous. 47 designer bags and 114 bag covers Birkins need their own luggage. My babies! I've never caught my BF with a baby. I have sleeping medications and a turkey baster. Tatiana is much younger and thinner than me, so I can't lose him to her. The goal is to become a stay-at-home wife shortly. A turkey baster is essential.

After spending $955 on La Mer lotions and getting a crystal manicure, I nap. Before my boyfriend's return, I can nap for 5 hours.

I wake up around 4 pm — it’s time to prepare dinner. Yes, I said “prepare for dinner,” not “prepare dinner.” I have crystals on my nails! Do you really think I would cook? No way.

My husband's arrival still requires much work. I clean the kitchen, get cutlery and napkins. I order UberEats while my BF is 30-45 minutes away.

Wagyu steaks with Matsutake mushroom soup today. I pick desserts for my lover but not myself. Eastern European threat?

When my BF gets home from work, we eat. I don't believe in tipping UberEats drivers. If he wants to appreciate life's finer things, he should locate a rich woman.

After eating, we plan our getaway. I requested Aruba's fanciest hotel for winter and expect a butler. We're bickering over who gets the butler. We may need two.

Day's end, I'm exhausted. Stay-at-home girlfriends put in a lot of time and work. Work and duties are never-ending.

Before bed, I shower and use a liquid gold mask in my 27-step makeup procedure. It's a French luxury brand, not La Mer.

Here's my day.

Note: I like satire and absurd trends. Stay-at-home-girlfriend TikTok videos have become popular recently.

I don't shame or support such agreements; I'm just an observer. Thanks for reading.

Sammy Abdullah

3 years ago

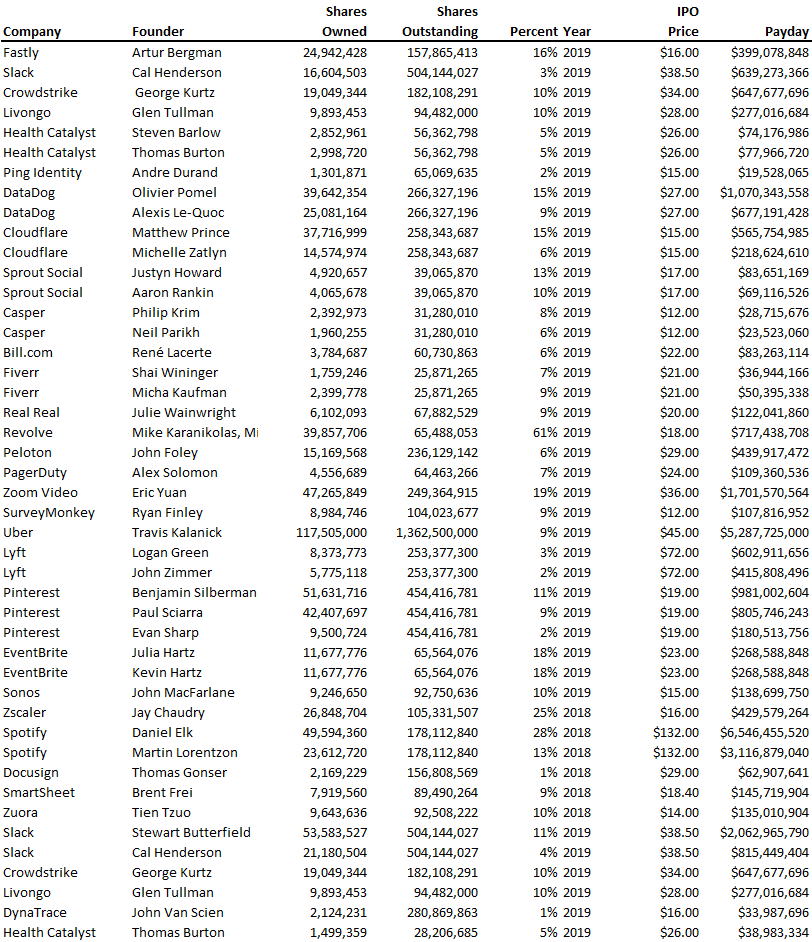

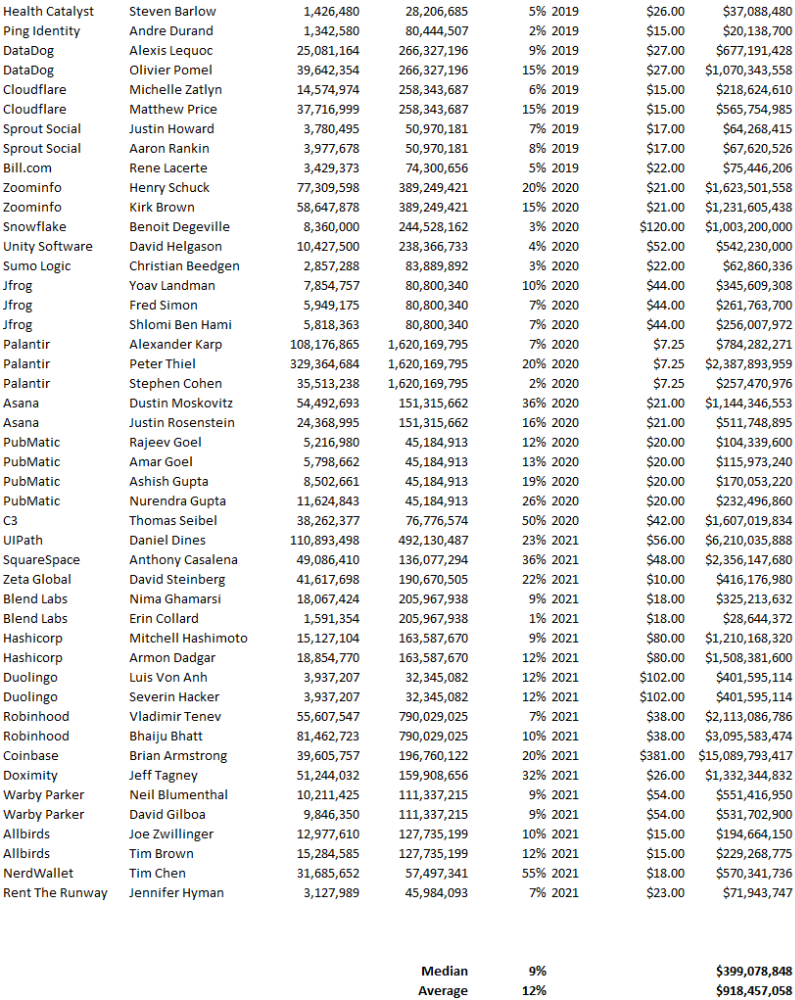

Payouts to founders at IPO

How much do startup founders make after an IPO? We looked at 2018's major tech IPOs. Paydays aren't what founders took home at the IPO (shares are normally locked up for 6 months), but what they were worth at the IPO price on the day the firm went public. It's not cash, but it's nice. Here's the data.

Several points are noteworthy.

Huge payoffs. Median and average pay were $399m and $918m. Average and median homeownership were 9% and 12%.

Coinbase, Uber, UI Path. Uber, Zoom, Spotify, UI Path, and Coinbase founders raised billions. Zoom's founder owned 19% and Spotify's 28% and 13%. Brian Armstrong controlled 20% of Coinbase at IPO and was worth $15bn. Preserving as much equity as possible by staying cash-efficient or raising at high valuations also helps.

The smallest was Ping. Ping's compensation was the smallest. Andre Duand owned 2% but was worth $20m at IPO. That's less than some billion-dollar paydays, but still good.

IPOs can be lucrative, as you can see. Preserving equity could be the difference between a $20mm and $15bln payday (Coinbase).

Tech With Dom

3 years ago

6 Awesome Desk Accessories You Must Have!

I'm gadget-obsessed. So I shared my top 6 desk gadgets.

These gadgets improve my workflow and are handy for working from home.

Without further ado...

Computer light bar Xiaomi Mi

I've previously recommended the Xiaomi Mi Light Bar, and I still do. It's stylish and convenient.

The Mi bar is a monitor-mounted desk lamp. The lamp's hue and brightness can be changed with a stylish wireless remote.

Changeable hue and brightness make it ideal for late-night work.

Desk Mat 2.

I wasn't planning to include a desk surface in this article, but I find it improves computer use.

The mouse feels smoother and is a better palm rest than wood or glass.

I'm currently using the overkill Razer Goliathus Extended Chroma RGB Gaming Surface, but I like RGB.

Using a desk surface or mat makes computer use more comfortable, and it's not expensive.

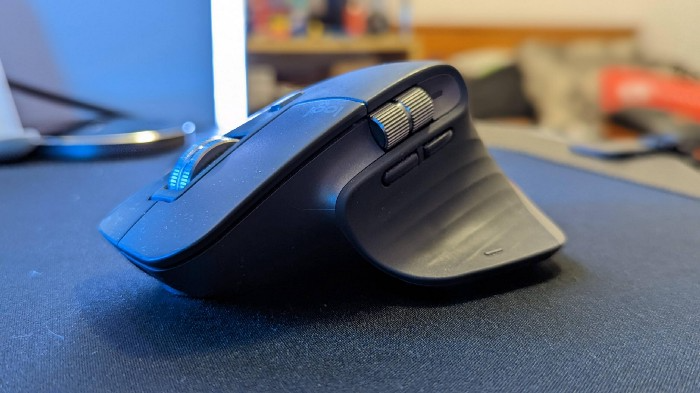

Third, the Logitech MX Master 3 Mouse

The Logitech MX Master 3 or any from the MX Master series is my favorite mouse.

The side scroll wheel on these mice is a feature I've never seen on another mouse.

Side scroll wheels are great for spreadsheets and video editing. It would be hard for me to switch from my Logitech MX Master 3 to another mouse. Only gaming is off-limits.

Google Nest 4.

Without a smart assistant, my desk is useless. I'm currently using the second-generation Google Nest Hub, but I've also used the Amazon Echo Dot, Echo Spot, and Apple HomePod Mini.

As a Pixel 6 Pro user, the Nest Hub works best with my phone.

My Nest Hub plays news, music, and calendar events. It also lets me control lights and switches with my smartphone. It plays YouTube videos.

Google Pixel Stand, No. 5

A wireless charger on my desk is convenient for charging my phone and other devices while I work. My desk has two wireless chargers. I have a Satechi aluminum fast charger and a second-generation Google Pixel Stand.

If I need to charge my phone and earbuds simultaneously, I use two wireless chargers. Satechi chargers are well-made and fast. Micro-USB is my only complaint.

The Pixel Stand converts compatible devices into a smart display for adjusting charging speeds and controlling other smart devices. My Pixel 6 Pro charges quickly. Here's my video review.

6. Anker Power Bank

Anker's 65W charger is my final recommendation. This online find was a must-have. This can charge my laptop and several non-wireless devices, perfect for any techie!

The charger has two USB-A ports and two USB-C ports, one with 45W and the other with 20W, so it can charge my iPad Pro and Pixel 6 Pro simultaneously.

Summary

These are some of my favorite office gadgets. My kit page has an updated list.

Links to the products mentioned in this article are in the appropriate sections. These are affiliate links.

You're up! Share the one desk gadget you can't live without and why.