More on Technology

Clive Thompson

3 years ago

Small Pieces of Code That Revolutionized the World

Few sentences can have global significance.

Ethan Zuckerman invented the pop-up commercial in 1997.

He was working for Tripod.com, an online service that let people make little web pages for free. Tripod offered advertising to make money. Advertisers didn't enjoy seeing their advertising next to filthy content, like a user's anal sex website.

Zuckerman's boss wanted a solution. Wasn't there a way to move the ads away from user-generated content?

When you visited a Tripod page, a pop-up ad page appeared. So, the ad isn't officially tied to any user page. It'd float onscreen.

Here’s the thing, though: Zuckerman’s bit of Javascript, that created the popup ad? It was incredibly short — a single line of code:

window.open('http://tripod.com/navbar.html'

"width=200, height=400, toolbar=no, scrollbars=no, resizable=no, target=_top");Javascript tells the browser to open a 200-by-400-pixel window on top of any other open web pages, without a scrollbar or toolbar.

Simple yet harmful! Soon, commercial websites mimicked Zuckerman's concept, infesting the Internet with pop-up advertising. In the early 2000s, a coder for a download site told me that most of their revenue came from porn pop-up ads.

Pop-up advertising are everywhere. You despise them. Hopefully, your browser blocks them.

Zuckerman wrote a single line of code that made the world worse.

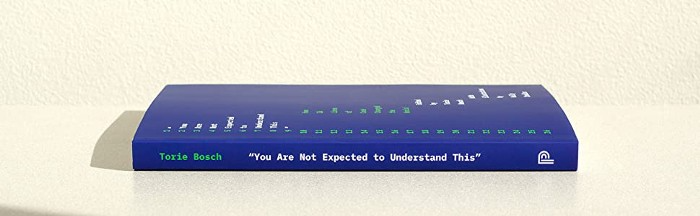

I read Zuckerman's story in How 26 Lines of Code Changed the World. Torie Bosch compiled a humorous anthology of short writings about code that tipped the world.

Most of these samples are quite short. Pop-cultural preconceptions about coding say that important code is vast and expansive. Hollywood depicts programmers as blurs spouting out Niagaras of code. Google's success was formerly attributed to its 2 billion lines of code.

It's usually not true. Google's original breakthrough, the piece of code that propelled Google above its search-engine counterparts, was its PageRank algorithm, which determined a web page's value based on how many other pages connected to it and the quality of those connecting pages. People have written their own Python versions; it's only a few dozen lines.

Google's operations, like any large tech company's, comprise thousands of procedures. So their code base grows. The most impactful code can be brief.

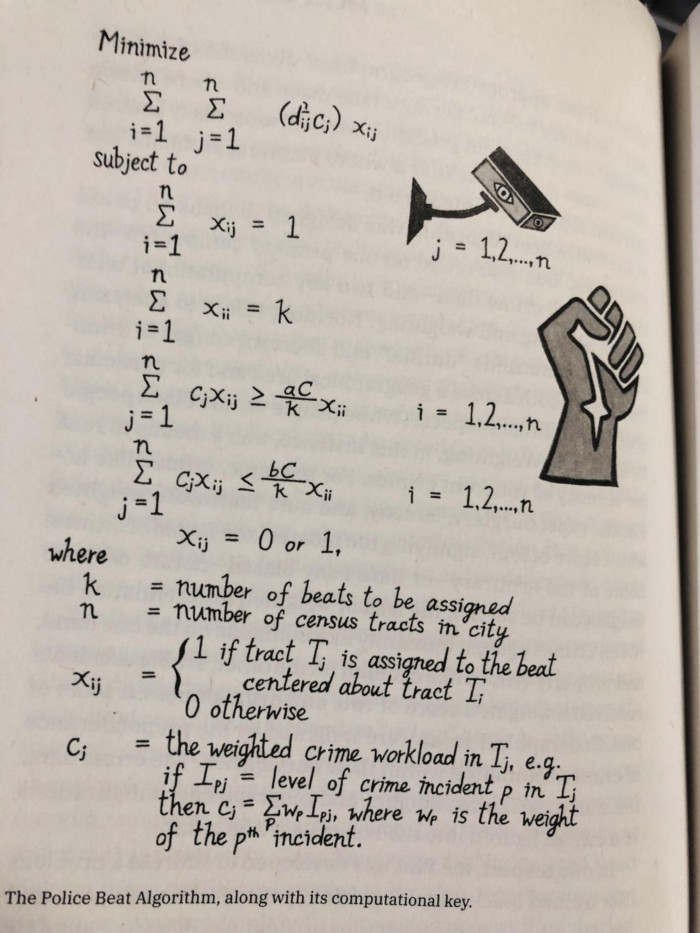

The examples are fascinating and wide-ranging, so read the whole book (or give it to nerds as a present). Charlton McIlwain wrote a chapter on the police beat algorithm developed in the late 1960s to anticipate crime hotspots so law enforcement could dispatch more officers there. It created a racial feedback loop. Since poor Black neighborhoods were already overpoliced compared to white ones, the algorithm directed more policing there, resulting in more arrests, which convinced it to send more police; rinse and repeat.

Kelly Chudler's You Are Not Expected To Understand This depicts the police-beat algorithm.

Even shorter code changed the world: the tracking pixel.

Lily Hay Newman's chapter on monitoring pixels says you probably interact with this code every day. It's a snippet of HTML that embeds a single tiny pixel in an email. Getting an email with a tracking code spies on me. As follows: My browser requests the single-pixel image as soon as I open the mail. My email sender checks to see if Clives browser has requested that pixel. My email sender can tell when I open it.

Adding a tracking pixel to an email is easy:

<img src="URL LINKING TO THE PIXEL ONLINE" width="0" height="0">An older example: Ellen R. Stofan and Nick Partridge wrote a chapter on Apollo 11's lunar module bailout code. This bailout code operated on the lunar module's tiny on-board computer and was designed to prioritize: If the computer grew overloaded, it would discard all but the most vital work.

When the lunar module approached the moon, the computer became overloaded. The bailout code shut down anything non-essential to landing the module. It shut down certain lunar module display systems, scaring the astronauts. Module landed safely.

22-line code

POODOO INHINT

CA Q

TS ALMCADR

TC BANKCALL

CADR VAC5STOR # STORE ERASABLES FOR DEBUGGING PURPOSES.

INDEX ALMCADR

CAF 0

ABORT2 TC BORTENT

OCT77770 OCT 77770 # DONT MOVE

CA V37FLBIT # IS AVERAGE G ON

MASK FLAGWRD7

CCS A

TC WHIMPER -1 # YES. DONT DO POODOO. DO BAILOUT.

TC DOWNFLAG

ADRES STATEFLG

TC DOWNFLAG

ADRES REINTFLG

TC DOWNFLAG

ADRES NODOFLAG

TC BANKCALL

CADR MR.KLEAN

TC WHIMPERThis fun book is worth reading.

I'm a contributor to the New York Times Magazine, Wired, and Mother Jones. I've also written Coders: The Making of a New Tribe and the Remaking of the World and Smarter Than You Think: How Technology is Changing Our Minds. Twitter and Instagram: @pomeranian99; Mastodon: @clive@saturation.social.

Asha Barbaschow

3 years ago

Apple WWDC 2022 Announcements

WWDC 2022 began early Tuesday morning. WWDC brought a ton of new features (which went for just shy of two hours).

With so many announcements, we thought we'd compile them. And now...

WWDC?

WWDC is Apple's developer conference. This includes iOS, macOS, watchOS, and iPadOS (all of its iPads). It's where Apple announces new features for developers to use. It's also where Apple previews new software.

Virtual WWDC runs June 6-10. You can rewatch the stream on Apple's website.

WWDC 2022 news:

Completely everything. Really. iOS 16 first.

iOS 16.

iOS 16 is a major iPhone update. iOS 16 adds the ability to customize the Lock Screen's color/theme. And widgets. It also organizes notifications and pairs Lock Screen with Focus themes. Edit or recall recently sent messages, recover recently deleted messages, and mark conversations as unread. Apple gives us yet another reason to stay in its walled garden with iMessage.

New iOS includes family sharing. Parents can set up a child's account with parental controls to restrict apps, movies, books, and music. iOS 16 lets large families and friend pods share iCloud photos. Up to six people can contribute photos to a separate iCloud library.

Live Text is getting creepier. Users can interact with text in any video frame. Touch and hold an image's subject to remove it from its background and place it in apps like messages. Dictation offers a new on-device voice-and-touch experience. Siri can run app shortcuts without setup in iOS 16. Apple also unveiled a new iOS 16 feature to help people break up with abusive partners who track their locations or read their messages. Safety Check.

Apple Pay Later allows iPhone users to buy products and pay for them later. iOS 16 pushes Mail. Users can schedule emails and cancel delivery before it reaches a recipient's inbox (be quick!). Mail now detects if you forgot an attachment, as Gmail has for years. iOS 16's Maps app gets "Multi-Stop Routing," .

Apple News also gets an iOS 16 update. Apple News adds My Sports. With iOS 16, the Apple Watch's Fitness app is also coming to iOS and the iPhone, using motion-sensing tech to track metrics and performance (as long as an athlete is wearing or carrying the device on their person).

iOS 16 includes accessibility updates like Door Detection.

watchOS9

Many of Apple's software updates are designed to take advantage of the larger screens in recent models, but they also improve health and fitness tracking.

The most obvious reason to upgrade watchOS every year is to get new watch faces from Apple. WatchOS 9 will add four new faces.

Runners' workout metrics improve.

Apple quickly realized that fitness tracking would be the Apple Watch's main feature, even though it's been the killer app for wearables since their debut. For watchOS 9, the Apple Watch will use its accelerometer and gyroscope to track a runner's form, stride length, and ground contact time. It also introduces the ability to specify heart rate zones, distance, and time intervals, with vibrating haptic feedback and voice alerts.

The Apple Watch's Fitness app is coming to iOS and the iPhone, using the smartphone's motion-sensing tech to track metrics and performance (as long as an athlete is wearing or carrying the device on their person).

We'll get sleep tracking, medication reminders, and drug interaction alerts. Your watch can create calendar events. A new Week view shows what meetings or responsibilities stand between you and the weekend.

iPadOS16

WWDC 2022 introduced iPad updates. iPadOS 16 is similar to iOS for the iPhone, but has features for larger screens and tablet accessories. The software update gives it many iPhone-like features.

iPadOS 16's Home app, like iOS 16, will have a new design language. iPad users who want to blame it on the rain finally have a Weather app. iPadOS 16 will have iCloud's Shared Photo Library, Live Text and Visual Look Up upgrades, and FaceTime Handoff, so you can switch between devices during a call.

Apple highlighted iPadOS 16's multitasking at WWDC 2022. iPad's Stage Manager sounds like a community theater app. It's a powerful multitasking tool for tablets and brings them closer to emulating laptops. Apple's iPadOS 16 supports multi-user collaboration. You can share content from Files, Keynote, Numbers, Pages, Notes, Reminders, Safari, and other third-party apps in Apple Messages.

M2-chip

WWDC 2022 revealed Apple's M2 chip. Apple has started the next generation of Apple Silicon for the Mac with M2. Apple says this device improves M1's performance.

M2's second-generation 5nm chip has 25% more transistors than M1's. 100GB/s memory bandwidth (50 per cent more than M1). M2 has 24GB of unified memory, up from 16GB but less than some ultraportable PCs' 32GB. The M2 chip has 10% better multi-core CPU performance than the M2, and it's nearly twice as fast as the latest 10-core PC laptop chip at the same power level (CPU performance is 18 per cent greater than M1).

New MacBooks

Apple introduced the M2-powered MacBook Air. Apple's entry-level laptop has a larger display, a new processor, new colors, and a notch.

M2 also powers the 13-inch MacBook Pro. The 13-inch MacBook Pro has 24GB of unified memory and 50% more memory bandwidth. New MacBook Pro batteries last 20 hours. As I type on the 2021 MacBook Pro, I can only imagine how much power the M2 will add.

macOS 13.0 (or, macOS Ventura)

macOS Ventura will take full advantage of M2 with new features like Stage Manager and Continuity Camera and Handoff for FaceTime. Safari, Mail, Messages, Spotlight, and more get updates in macOS Ventura.

Apple hasn't run out of California landmarks to name its OS after yet. macOS 13 will be called Ventura when it's released in a few months, but it's more than a name change and new wallpapers.

Stage Manager organizes windows

Stage Manager is a new macOS tool that organizes open windows and applications so they're still visible while focusing on a specific task. The main app sits in the middle of the desktop, while other apps and documents are organized and piled up to the side.

Improved Searching

Spotlight is one of macOS's least appreciated features, but with Ventura, it's becoming even more useful. Live Text lets you extract text from Spotlight results without leaving the window, including images from the photo library and the web.

Mail lets you schedule or unsend emails.

We've all sent an email we regret, whether it contained regrettable words or was sent at the wrong time. In macOS Ventura, Mail users can cancel or reschedule a message after sending it. Mail will now intelligently determine if a person was forgotten from a CC list or if a promised attachment wasn't included. Procrastinators can set a reminder to read a message later.

Safari adds tab sharing and password passkeys

Apple is updating Safari to make it more user-friendly... mostly. Users can share a group of tabs with friends or family, a useful feature when researching a topic with too many tabs. Passkeys will replace passwords in Safari's next version. Instead of entering random gibberish when creating a new account, macOS users can use TouchID to create an on-device passkey. Using an iPhone's camera and a QR system, Passkey syncs and works across all Apple devices and Windows computers.

Continuity adds Facetime device switching and iPhone webcam.

With macOS Ventura, iPhone users can transfer a FaceTime call from their phone to their desktop or laptop using Handoff, or vice versa if they started a call at their desk and need to continue it elsewhere. Apple finally admits its laptop and monitor webcams aren't the best. Continuity makes the iPhone a webcam. Apple demonstrated a feature where the wide-angle lens could provide a live stream of the desk below, while the standard zoom lens could focus on the speaker's face. New iPhone laptop mounts are coming.

System Preferences

System Preferences is Now System Settings and Looks Like iOS

Ventura's System Preferences has been renamed System Settings and is much more similar in appearance to iOS and iPadOS. As the iPhone and iPad are gateway devices into Apple's hardware ecosystem, new Mac users should find it easier to adjust.

This post is a summary. Read full article here

Shawn Mordecai

3 years ago

The Apple iPhone 14 Pill is Easier to Swallow

Is iPhone's Dynamic Island invention or a marketing ploy?

First of all, why the notch?

When Apple debuted the iPhone X with the notch, some were surprised, confused, and amused by the goof. Let the Brits keep the new meaning of top-notch.

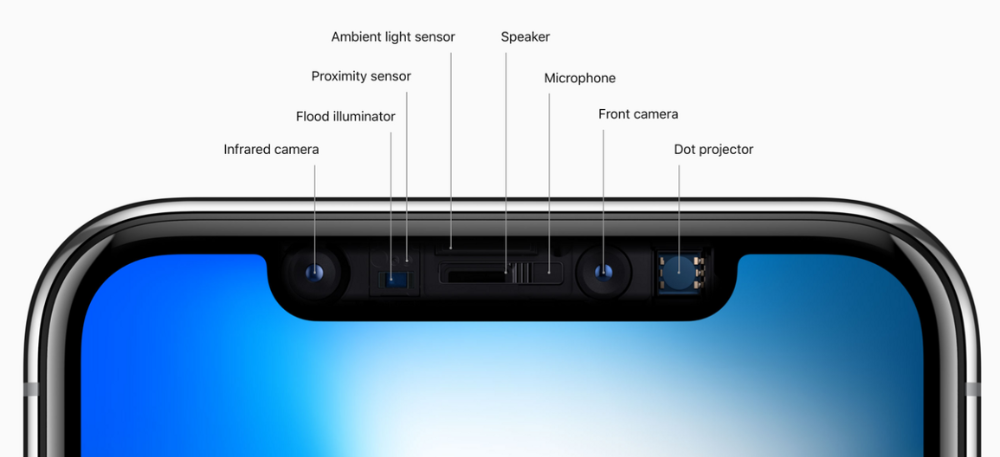

Apple removed the bottom home button to enhance screen space. The tides couldn't overtake part of the top. This section contained sensors, a speaker, a microphone, and cameras for facial recognition. A town resisted Apple's new iPhone design.

From iPhone X to 13, the notch has gotten smaller. We expected this as technology and engineering progressed, but we hated the notch. Apple approved. They attached it to their other gadgets.

Apple accepted, owned, and ran with the iPhone notch, it has become iconic (or infamous); and that’s intentional.

The Island Where Apple Is

Apple needs to separate itself, but they know how to do it well. The iPhone 14 Pro finally has us oohing and aahing. Life-changing, not just higher pixel density or longer battery.

Dynamic Island turned a visual differentiation into great usefulness, which may not be life-changing. Apple always welcomes the controversy, whether it's $700 for iMac wheels, no charging block with a new phone, or removing the headphone jack.

Apple knows its customers will be loyal, even if they're irritated. Their odd design choices often cause controversy. It's calculated that people blog, review, and criticize Apple's products. We accept what works for them.

While the competition zigs, Apple zags. Sometimes they zag too hard and smash into a wall, but we talk about it anyways, and that’s great publicity for them.

Getting Dependent on the drug

The notch became a crop. Dynamic Island's design is helpful, intuitive, elegant, and useful. It increases iPhone usability, productivity (slightly), and joy. No longer unsightly.

The medication helps with multitasking. It's a compact version of the iPhone's Live Activities lock screen function. Dynamic Island enhances apps and activities with visual effects and animations whether you engage with it or not. As you use the pill, its usefulness lessens. It lowers user notifications and consolidates them with live and permanent feeds, delivering quick app statuses. It uses the black pixels on the iPhone 14's display, which looked like a poor haircut.

The pill may be a gimmick to entice customers to use more Apple products and services. Apps may promote to their users like a live billboard.

Be prepared to get a huge dose of Dynamic Island’s “pill” like you never had before with the notch. It might become so satisfying and addicting to use, that every interaction with it will become habit-forming, and you’re going to forget that it ever existed.

WARNING: A Few Potential Side Effects

Vision blurred Dynamic Island's proximity to the front-facing camera may leave behind grease that blurs photos. Before taking a selfie, wipe the camera clean.

Strained thumb To fully use Dynamic Island, extend your thumb's reach 6.7 inches beyond your typical, comfortable range.

Happiness, contentment The Dynamic Island may enhance Endorphins and Dopamine. Multitasking, interactions, animations, and haptic feedback make you want to use this function again and again.

Motion-sickness Dynamic Island's motions and effects may make some people dizzy. If you can disable animations, you can avoid motion sickness.

I'm not a doctor, therefore they aren't established adverse effects.

Does Dynamic Island Include Multiple Tasks?

Dynamic Islands is a placebo for multitasking. Apple might have compromised on iPhone multitasking. It won't make you super productive, but it's a step up.

iPhone is primarily for personal use, like watching videos, messaging friends, sending money to friends, calling friends about the money you were supposed to send them, taking 50 photos of the same leaf, investing in crypto, driving for Uber because you lost all your money investing in crypto, listening to music and hailing an Uber from a deserted crop field because while you were driving for Uber your passenger stole your car and left you stranded, so you used Apple’s new SOS satellite feature to message your friend, who still didn’t receive their money, to hail you an Uber; now you owe them more money… karma?

We won't be watching videos on iPhones while perusing 10,000-row spreadsheets anytime soon. True multitasking and productivity aren't priorities for Apple's iPhone. Apple doesn't to preserve the iPhone's experience. Like why there's no iPad calculator. Apple doesn't want iPad users to do math, but isn't essential for productivity?

Digressing.

Apple will block certain functions so you must buy and use their gadgets and services, immersing yourself in their ecosystem and dictating how to use their goods.

Dynamic Island is a poor man’s multi-task for iPhone, and that’s fine it works for most iPhone users. For substantial productivity Apple prefers you to get an iPad or a MacBook. That’s part of the reason for restrictive features on certain Apple devices, but sometimes it’s based on principles to preserve the integrity of the product, according to Apple’s definition.

Is Apple using deception?

Dynamic Island may be distracting you from a design decision. The answer is kind of. Elegant distraction

When you pull down a smartphone webpage to refresh it or minimize an app, you get seamless animations. It's not simply because it appears better; it's due to iPhone and smartphone processing speeds. Such limits reduce the system's response to your activity, slowing the experience. Designers and developers use animations and effects to distract us from the time lag (most of the time) and sometimes because it looks cooler and smoother.

Dynamic Island makes apps more useable and interactive. It shows system states visually. Turn signal audio and visual cues, voice assistance, physical and digital haptic feedbacks, heads-up displays, fuel and battery level gauges, and gear shift indicators helped us overcome vehicle design problems.

Dynamic Island is a wonderfully delightful (and temporary) solution to a design “problem” until Apple or other companies can figure out a way to sink the cameras under the smartphone screen.

Apple Has Returned to Being an Innovative & Exciting Company

Now Apple's products are exciting. Next, bring back real Apple events, not pre-recorded demos.

Dynamic Island integrates hardware and software. What will this new tech do? How would this affect device use? Or is it just hype?

Dynamic Island may be an insignificant improvement to the iPhone, but it sure is promising for the future of bridging the human and computer interaction gap.

You might also like

Eitan Levy

3 years ago

The Top 8 Growth Hacking Techniques for Startups

The Top 8 Growth Hacking Techniques for Startups

These startups, and how they used growth-hack marketing to flourish, are some of the more ethical ones, while others are less so.

Before the 1970 World Cup began, Puma paid footballer Pele $120,000 to tie his shoes. The cameras naturally focused on Pele and his Pumas, causing people to realize that Puma was the top football brand in the world.

Early workers of Uber canceled over 5,000 taxi orders made on competing applications in an effort to financially hurt any of their rivals.

PayPal developed a bot that advertised cheap goods on eBay, purchased them, and paid for them with PayPal, fooling eBay into believing that customers preferred this payment option. Naturally, Paypal became eBay's primary method of payment.

Anyone renting a space on Craigslist had their emails collected by AirBnB, who then urged them to use their service instead. A one-click interface was also created to list immediately on AirBnB from Craigslist.

To entice potential single people looking for love, Tinder developed hundreds of bogus accounts of attractive people. Additionally, for at least a year, users were "accidentally" linked.

Reddit initially created a huge number of phony accounts and forced them all to communicate with one another. It eventually attracted actual users—the real meaning of "fake it 'til you make it"! Additionally, this gave Reddit control over the tone of voice they wanted for their site, which is still present today.

To disrupt the conferences of their main rival, Salesforce recruited fictitious protestors. The founder then took over all of the event's taxis and gave a 45-minute pitch for his startup. No place to hide!

When a wholesaler required a minimum purchase of 10, Amazon CEO Jeff Bezos wanted a way to purchase only one book from them. A wholesaler would deliver the one book he ordered along with an apology for the other eight books after he discovered a loophole and bought the one book before ordering nine books about lichens. On Amazon, he increased this across all of the users.

Original post available here

Jeff John Roberts

3 years ago

Jack Dorsey and Jay-Z Launch 'Bitcoin Academy' in Brooklyn rapper's home

The new Bitcoin Academy will teach Jay-Marcy Z's Houses neighbors "What is Cryptocurrency."

Jay-Z grew up in Brooklyn's Marcy Houses. The rapper and Block CEO Jack Dorsey are giving back to his hometown by creating the Bitcoin Academy.

The Bitcoin Academy will offer online and in-person classes, including "What is Money?" and "What is Blockchain?"

The program will provide participants with a mobile hotspot and a small amount of Bitcoin for hands-on learning.

Students will receive dinner and two evenings of instruction until early September. The Shawn Carter Foundation will help with on-the-ground instruction.

Jay-Z and Dorsey announced the program Thursday morning. It will begin at Marcy Houses but may be expanded.

Crypto Blockchain Plug and Black Bitcoin Billionaire, which has received a grant from Block, will teach the classes.

Jay-Z, Dorsey reunite

Jay-Z and Dorsey have previously worked together to promote a Bitcoin and crypto-based future.

In 2021, Dorsey's Block (then Square) acquired the rapper's streaming music service Tidal, which they propose using for NFT distribution.

Dorsey and Jay-Z launched an endowment in 2021 to fund Bitcoin development in Africa and India.

Dorsey is funding the new Bitcoin Academy out of his own pocket (as is Jay-Z), but he's also pushed crypto-related charitable endeavors at Block, including a $5 million fund backed by corporate Bitcoin interest.

This post is a summary. Read full article here

Miguel Saldana

3 years ago

Crypto Inheritance's Catch-22

Security, privacy, and a strategy!

How to manage digital assets in worst-case scenarios is a perennial crypto concern. Since blockchain and bitcoin technology is very new, this hasn't been a major issue. Many early developers are still around, and many groups created around this technology are young and feel they have a lot of life remaining. This is why inheritance and estate planning in crypto should be handled promptly. As cryptocurrency's intrinsic worth rises, many people in the ecosystem are holding on to assets that might represent generational riches. With that much value, it's crucial to have a plan. Creating a solid plan entails several challenges.

the initial hesitation in coming up with a plan

The technical obstacles to ensuring the assets' security and privacy

the passing of assets from a deceased or incompetent person

Legal experts' lack of comprehension and/or understanding of how to handle and treat cryptocurrency.

This article highlights several challenges, a possible web3-native solution, and how to learn more.

The Challenge of Inheritance:

One of the biggest hurdles to inheritance planning is starting the conversation. As humans, we don't like to think about dying. Early adopters will experience crazy gains as cryptocurrencies become more popular. Creating a plan is crucial if you wish to pass on your riches to loved ones. Without a plan, the technical and legal issues I barely mentioned above would erode value by requiring costly legal fees and/or taxes, and you could lose everything if wallets and assets are not distributed appropriately (associated with the private keys). Raising awareness of the consequences of not having a plan should motivate people to make one.

Controlling Change:

Having an inheritance plan for your digital assets is crucial, but managing the guts and bolts poses a new set of difficulties. Privacy and security provided by maintaining your own wallet provide different issues than traditional finances and assets. Traditional finance is centralized (say a stock brokerage firm). You can assign another person to handle the transfer of your assets. In crypto, asset transfer is reimagined. One may suppose future transaction management is doable, but the user must consent, creating an impossible loop.

I passed away and must send a transaction to the person I intended to deliver it to.

I have to confirm or authorize the transaction, but I'm dead.

In crypto, scheduling a future transaction wouldn't function. To transfer the wallet and its contents, we'd need the private keys and/or seed phrase. Minimizing private key exposure is crucial to protecting your crypto from hackers, social engineering, and phishing. People have lost private keys after utilizing Life Hack-type tactics to secure them. People that break and hide their keys, lose them, or make them unreadable won't help with managing and/or transferring. This will require a derived solution.

Legal Challenges and Implications

Unlike routine cryptocurrency transfers and transactions, local laws may require special considerations. Even in the traditional world, estate/inheritance taxes, how assets will be split, and who executes the will must be considered. Many lawyers aren't crypto-savvy, which complicates the matter. There will be many hoops to jump through to safeguard your crypto and traditional assets and give them to loved ones.

Knowing RUFADAA/UFADAA, depending on your state, is vital for Americans. UFADAA offers executors and trustees access to online accounts (which crypto wallets would fall into). RUFADAA was changed to limit access to the executor to protect assets. RUFADAA outlines how digital assets are administered following death and incapacity in the US.

A Succession Solution

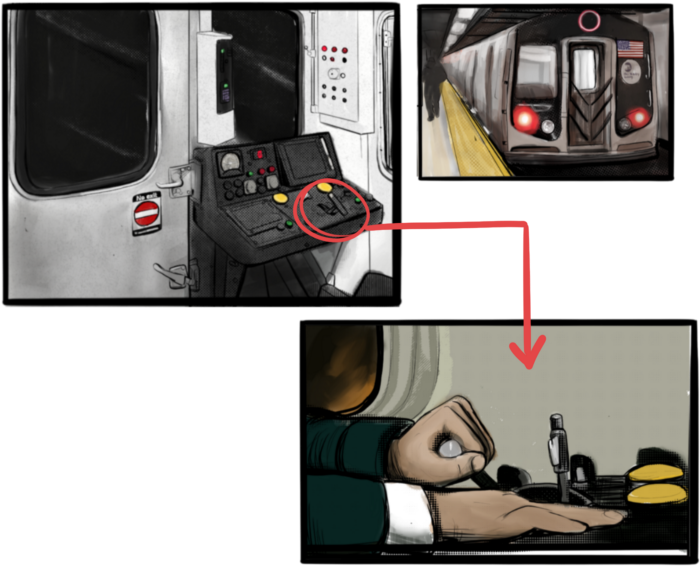

Having a will and talking about who would get what is the first step to having a solution, but using a Dad Mans Switch is a perfect tool for such unforeseen circumstances. As long as the switch's controller has control, nothing happens. Losing control of the switch initiates a state transition.

Subway or railway operations are examples. Modern control systems need the conductor to hold a switch to keep the train going. If they can't, the train stops.

Enter Sarcophagus

Sarcophagus is a decentralized dead man's switch built on Ethereum and Arweave. Sarcophagus allows actors to maintain control of their possessions even while physically unable to do so. Using a programmable dead man's switch and dual encryption, anything can be kept and passed on. This covers assets, secrets, seed phrases, and other use cases to provide authority and control back to the user and release trustworthy services from this work. Sarcophagus is built on a decentralized, transparent open source codebase. Sarcophagus is there if you're unprepared.