What is Terra? Your guide to the hot cryptocurrency

With cryptocurrencies like Bitcoin, Ether, and Dogecoin gyrating in value over the past few months, many people are looking at so-called stablecoins like Terra to invest in because of their more predictable prices.

Terraform Labs, which oversees the Terra cryptocurrency project, has benefited from its rising popularity. The company said recently that investors like Arrington Capital, Lightspeed Venture Partners, and Pantera Capital have pledged $150 million to help it incubate various crypto projects that are connected to Terra.

Terraform Labs and its partners have built apps that run on the company’s blockchain technology, which keeps a permanent, shared record of the firm’s crypto-related financial transactions.

Here’s what you need to know about Terra and the company behind it.

What is Terra?

Terra is a blockchain project developed by Terraform Labs that powers the startup’s cryptocurrencies and financial apps. These cryptocurrencies include the Terra U.S. Dollar, or UST, that is pegged to the U.S. dollar through an algorithm.

UST is a stablecoin, a type of token intended to reduce the volatility endemic to cryptocurrencies like Bitcoin. Some stablecoins, like Tether, are pegged to more conventional currencies, like the U.S. dollar, through cash and cash equivalents as opposed to an algorithm and an associated reserve token.

To mint new UST tokens, a percentage of another digital token and reserve asset, Luna, is “burned.” If the demand for UST rises with more people using the currency, more Luna will be automatically burned and diverted to a community pool. That balancing act is supposed to help stabilize the price, to a degree.
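The mint-and-burn relationship can be sketched in a few lines. This is a minimal illustration, not Terraform Labs' code: it assumes the commonly described rule that minting one UST burns one dollar's worth of Luna, and the function name, state shape, and prices are hypothetical.

```javascript
// Hypothetical sketch of UST minting; not Terraform Labs' actual code.
// Assumption: minting 1 UST burns 1 USD worth of Luna at the current price.
function mintUst(state, ustAmount, lunaPriceUsd) {
  if (ustAmount <= 0 || lunaPriceUsd <= 0) {
    throw new Error("amount and price must be positive");
  }
  // Amount of Luna that is worth `ustAmount` dollars right now.
  const lunaToBurn = ustAmount / lunaPriceUsd;
  if (lunaToBurn > state.lunaSupply) {
    throw new Error("not enough Luna to burn");
  }
  // Luna supply shrinks while UST supply grows by the minted amount.
  return {
    lunaSupply: state.lunaSupply - lunaToBurn,
    ustSupply: state.ustSupply + ustAmount,
    lunaBurned: lunaToBurn,
  };
}

const before = { lunaSupply: 1000, ustSupply: 0 };
const after = mintUst(before, 100, 50); // mint 100 UST with Luna at $50
console.log(after);
```

In this toy model, rising demand for UST shrinks the Luna supply, which is the balancing act the paragraph above describes.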

“Luna directly benefits from the economic growth of the Terra economy, and it suffers from contractions of the Terra coin,” Terraform Labs CEO Do Kwon said.

Each time someone buys something—like an ice cream—using UST, that transaction generates a fee, similar to a credit card transaction. That fee is then distributed to people who own Luna tokens, similar to a stock dividend.

Who leads Terra?

The South Korean firm Terraform Labs was founded in 2018 by Daniel Shin and Kwon, who is now the company’s CEO. Kwon is a 29-year-old former Microsoft employee; Shin now heads the Chai online payment service, a Terra partner. Kwon said many Koreans have used the Chai service to buy goods like movie tickets using Terra cryptocurrency.

Terraform Labs does not make money from transactions using its crypto and instead relies on outside funding to operate, Kwon said. It has raised $57 million in funding from investors like HashKey Digital Asset Group, Divergence Digital Currency Fund, and Huobi Capital, according to deal-tracking service PitchBook. The amount raised is in addition to the latest $150 million funding commitment announced on July 16.

What are Terra’s plans?

Terraform Labs plans to use Terra’s blockchain and its associated cryptocurrencies—including one pegged to the Korean won—to create a digital financial system independent of major banks and fintech-app makers. So far, its main source of growth has been in Korea, where people have bought goods at stores, like coffee, using the Chai payment app that’s built on Terra’s blockchain. Kwon said the company’s associated Mirror trading app is experiencing growth in China and Thailand.

Meanwhile, Kwon said Terraform Labs would use its latest $150 million in funding to invest in groups that build financial apps on Terra’s blockchain. He likened scouting and investing in other groups to a “Y Combinator demo day type of situation,” a reference to the popular startup pitch event organized by early-stage investor Y Combinator.

The combination of all these Terra-specific financial apps shows that Terraform Labs is “almost creating a kind of bank,” said Ryan Watkins, a senior research analyst at cryptocurrency consultancy Messari.

In addition to cryptocurrencies, Terraform Labs has a number of other projects including the Anchor app, a high-yield savings account for holders of the group’s digital coins. Meanwhile, people can use the firm’s associated Mirror app to create synthetic financial assets that mimic more conventional ones, like “tokenized” representations of corporate stocks. These synthetic assets are supposed to be helpful to people like “a small retail trader in Thailand” who can more easily buy shares and “get some exposure to the upside” of stocks that they otherwise wouldn’t have been able to obtain, Kwon said. But some critics have said the U.S. Securities and Exchange Commission may eventually crack down on synthetic stocks, which are currently unregulated.

What do critics say?

Terra still has a long way to go to catch up to bigger cryptocurrency projects like Ethereum.

Most financial transactions involving Terra-related cryptocurrencies have originated in Korea, where its founders are based. Although Terra is becoming more popular in Korea thanks to rising interest in its partner Chai, it’s too early to say whether Terra-related currencies will gain traction in other countries.

Terra’s blockchain runs on a “limited number of nodes,” said Messari’s Watkins, referring to the computers that help keep the system running. That helps reduce latency that may otherwise slow processing of financial transactions, he said.

But the tradeoff is that Terra is less “decentralized” than other blockchain platforms like Ethereum, which is powered by thousands of interconnected computing nodes worldwide. That could make Terra less appealing to some blockchain purists.

More on Web3 & Crypto

Shan Vernekar

3 years ago

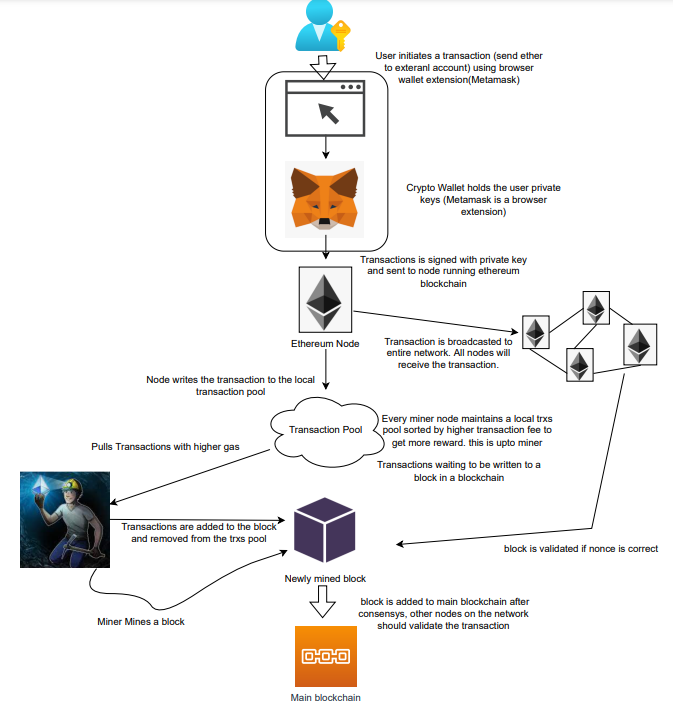

How the Ethereum blockchain's transactions are carried out

Overview

The Ethereum blockchain is a network of nodes that validate transactions. Any network node can be queried for blockchain data for free. Writing data as a transaction, however, requires processing and a write to each network node's storage, so it carries a fee. The fee is paid in ether and is also called gas.

We'll examine how user-initiated transactions flow across the network and into the blockchain.

Flow of transactions

A user wishes to move some ether from one external account to another. He utilizes a cryptocurrency wallet for this (like Metamask), which is a browser extension.

The user enters the desired transfer amount and the external account's address. He has the option to choose the transaction cost he is ready to pay.

The wallet takes this data, signs it with the user's private key, and submits it to an Ethereum node. Services such as Infura offer APIs for submitting data to nodes; Metamask uses one of these services. An example transaction is shown below. Notice the “to” address and value fields.

    var rawTxn = {
        nonce: web3.toHex(txnCount),          // sender's transaction count
        gasPrice: web3.toHex(100000000000),   // price per unit of gas, in wei
        gasLimit: web3.toHex(140000),         // maximum units of gas to spend
        to: '0x633296baebc20f33ac2e1c1b105d7cd1f6a0718b', // recipient address
        value: web3.toHex(0),                 // amount of ether to send, in wei
        data: '0xcc9ab24952616d6100000000000000000000000000000000000000000000000000000000' // optional payload
    };

The transaction is written to the target Ethereum node's local transaction pool. That node informs its neighboring nodes of the new transaction, and those nodes relay it in turn. Eventually, the transaction is received by and written to each node's local transaction pool.
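For the example transaction above, the most the sender can pay is the gas limit multiplied by the gas price. The sketch below works that out with `BigInt`, since wei amounts exceed the safe integer range; the helper names are hypothetical. The actual fee depends on how much gas the transaction ends up using, so this is only a ceiling.

```javascript
// Upper bound on the fee for the example transaction: gasLimit * gasPrice.
const WEI_PER_ETHER = 10n ** 18n;

function maxFeeWei(gasPriceWei, gasLimit) {
  return gasPriceWei * gasLimit;
}

// Format a wei amount as ether with a fixed number of decimals (truncating).
function weiToEtherString(wei, decimals = 6) {
  const scale = 10n ** BigInt(decimals);
  const scaled = (wei * scale) / WEI_PER_ETHER;
  const whole = scaled / scale;
  const frac = (scaled % scale).toString().padStart(decimals, "0");
  return `${whole}.${frac}`;
}

// Values taken from the example: gasPrice 100000000000 wei, gasLimit 140000.
const fee = maxFeeWei(100000000000n, 140000n);
console.log(fee.toString(), "wei =", weiToEtherString(fee), "ether");
```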

The miner who finds the next block first adds pending transactions from its local transaction pool to the block, favoring those that offer a higher gas price.

The transactions written to the new block are verified by other network nodes.

A block is added to the main blockchain once there is consensus and it is determined to be genuine. The other nodes then update their local copies of the blockchain with the new block as well.

Mining of the next block then begins.
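The selection step in this flow can be illustrated with a simplified model. The sketch below is hypothetical and leaves out much of what a real client does (per-account nonce ordering, validation, consensus): it only sorts a local pool by gas price and fills a block until the block's gas limit is reached.

```javascript
// Simplified, hypothetical model of how a miner fills a block from its pool.
// Real clients also respect per-account nonce order and validate each transaction.
function selectTransactions(pool, blockGasLimit) {
  // Highest gas price first; copy the array so the pool itself is not reordered.
  const byPrice = [...pool].sort((a, b) => b.gasPrice - a.gasPrice);
  const selected = [];
  let gasUsed = 0;
  for (const tx of byPrice) {
    // Skip any transaction that would push the block over its gas limit.
    if (gasUsed + tx.gasLimit <= blockGasLimit) {
      selected.push(tx);
      gasUsed += tx.gasLimit;
    }
  }
  return selected;
}

const pool = [
  { id: "a", gasPrice: 20, gasLimit: 21000 },
  { id: "b", gasPrice: 100, gasLimit: 140000 },
  { id: "c", gasPrice: 50, gasLimit: 21000 },
];
console.log(selectTransactions(pool, 170000).map((tx) => tx.id));
```

With a block gas limit of 170,000, the two best-paying transactions fit and the cheapest one waits in the pool for a later block.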

The image above shows how transactions travel through the network and what is needed to get them into the main blockchain.

References

ethereum.org/transactions: How Ethereum transactions function, their data structure, and how to send them via app.

ANDREW SINGER

4 years ago

Crypto seen as the ‘future of money’ in inflation-mired countries

Citizens of countries with devalued currencies “need” crypto; in the developed world it is a “nice to have.”

According to Gemini's 2022 Global State of Crypto report, cryptocurrencies “evolved from what many considered a niche investment into an established asset class” last year.

More than half of crypto owners in Brazil (51%), Hong Kong (51%), and India (54%), according to the report, bought cryptocurrency for the first time in 2021.

The study found that inflation and currency devaluation are powerful drivers of crypto adoption, especially in emerging market (EM) countries:

“Respondents in countries that have seen a 50% or greater devaluation of their currency against the USD over the last decade were more than 5 times as likely to plan to purchase crypto in the coming year.”

Between 2011 and 2021, the real devalued 218 percent against the dollar, and 45 percent of Brazilians surveyed by Gemini said they planned to buy crypto in the coming year.

The rand (South Africa's currency) devalued 103 percent over the last decade, second only to the Brazilian real, and 32 percent of South Africans expect to own crypto in the coming year. Mexico and India, the countries with the third- and fourth-highest devaluation, followed suit.
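A currency cannot lose more than 100 percent of its value, so figures like 218 percent are most plausibly read as the rise in the dollar's price measured in local currency. Under that assumption, which the text does not state, the share of value actually lost against the dollar can be worked out as follows (the function name is hypothetical):

```javascript
// Assumption: "devaluation" means the percentage rise in the dollar's price
// in local currency. The value lost against the dollar is then rise / (1 + rise).
function valueLostAgainstDollar(devaluationPercent) {
  const rise = devaluationPercent / 100;
  return rise / (1 + rise);
}

for (const [name, pct] of [["real", 218], ["rand", 103]]) {
  const lost = (valueLostAgainstDollar(pct) * 100).toFixed(1);
  console.log(`${name}: ${pct}% devaluation ~ ${lost}% of value lost`);
}
```

On this reading, the real lost roughly two-thirds of its dollar value over the decade and the rand about half.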

Compared to the US dollar, Hong Kong and the UK currencies have not devalued in the last decade. Meanwhile, only 5% and 8% of those surveyed in those countries expressed interest in buying crypto.

What can be concluded? Noah Perlman, COO of Gemini, sees various crypto use cases depending on one's location.

Crypto is a ‘need to have' investment in countries where the local currency has devalued against the dollar, whereas in the developed world it is still seen as a ‘nice to have'.

Crypto as money substitute

Winston Ma, an adjunct professor at New York University School of Law, distinguishes between an asset used as an inflation hedge and one used as a currency replacement.

Unlike gold, he believes Bitcoin (BTC) is not an “inflation hedge”. Cryptocurrencies acted more like growth stocks in 2022. “Bitcoin correlated more closely with the S&P 500 index — and Ether with the NASDAQ — than gold,” he told Cointelegraph. But in the developing world, things are different:

“Inflation may be a primary driver of cryptocurrency adoption in emerging markets like Brazil, India, and Mexico.”

According to Justin d'Anethan, institutional sales director at the Amber Group, a Singapore-based digital asset firm, early adoption was driven by countries where currency stability and/or access to proper banking services were issues. Simply put, he said, developing countries want alternatives to easily debased fiat currencies.

“The larger flows may still come from institutions and developed countries, but the actual users may come from places like Lebanon, Turkey, Venezuela, and Indonesia.”

“Inflation is one of the factors that has and continues to drive adoption of Bitcoin and other crypto assets globally,” said Sean Stein Smith, assistant professor of economics and business at Lehman College.

But it's only one factor, and different regions have different factors, says Stein Smith. Investors and entrepreneurs increasingly recognize the benefits of crypto assets as an “instantaneously accessible, traceable, and cost-effective transaction option.” Other places promote crypto adoption due to “potential capital gains and returns”.

According to the report, “legal uncertainty around cryptocurrency,” tax questions, and a general education deficit could hinder adoption in Asia Pacific and Latin America. In Africa, 56% of respondents said more educational resources were needed to explain cryptocurrencies.

The driver is not only inflation but also empowering young people to live better than their parents, without fear of failure or allegiance to legacy financial markets or products, said Monica Singer, ConsenSys South Africa lead. Also, “the issue of cash and remittances is huge in Africa, as is the issue of social grants.”

Money's future?

The survey found that Brazil and Indonesia had the most cryptocurrency ownership. In each country, 41% of those polled said they owned crypto. Only 20% of Americans surveyed said they owned cryptocurrency.

These markets are more likely to see cryptocurrencies as the future of money. The survey found:

“The majority of respondents in Latin America (59%) and Africa (58%) say crypto is the future of money.”

Belief was strongest in Brazil (66%), Nigeria (63%), Indonesia (61%), and South Africa (57%). Europe and Australia had the fewest believers, with Denmark at 12%, Norway at 15%, and Australia at 17%.

Will the Ukraine conflict impact adoption?

The poll was taken before the war. Will the devastating conflict slow global crypto adoption growth?

With over $100 million in crypto donations directly requested by the Ukrainian government since the war began, Stein Smith says the war has certainly brought crypto into the mainstream conversation.

“This real-world demonstration of decentralized money's power could spur wider adoption, policy debate, and increased use of crypto as a medium of exchange.”

But the war may not affect all developing nations. “The Ukraine war has no impact on African demand for crypto,” Singer said. Other factors loom larger: “Yes, inflation, but also a lack of trust in government in many African countries, and a young demographic very familiar with mobile phones and the internet.”

A major success story like Mpesa in Kenya has influenced the continent and may help accelerate crypto adoption. Creating a plan when everyone you trust fails you is directly related to the African spirit, she said.

On the other hand, Ma views the Ukraine conflict as a sort of crisis check for cryptocurrencies. For those in emerging markets, the Ukraine-Russia war has served as a “stress test” for the cryptocurrency payment rail, he told Cointelegraph.

“These emerging markets may see the greatest future gains in crypto adoption.”

Inflation and currency devaluation are persistent global concerns. In such places, Bitcoin and other cryptocurrencies are now seen as the “future of money.” Not in the developed world, but that could change with better regulation and education. Inflation and its impact on cash holdings are waking up even Western nations.


CyberPunkMetalHead

3 years ago

It's all about the ego with Terra 2.0.

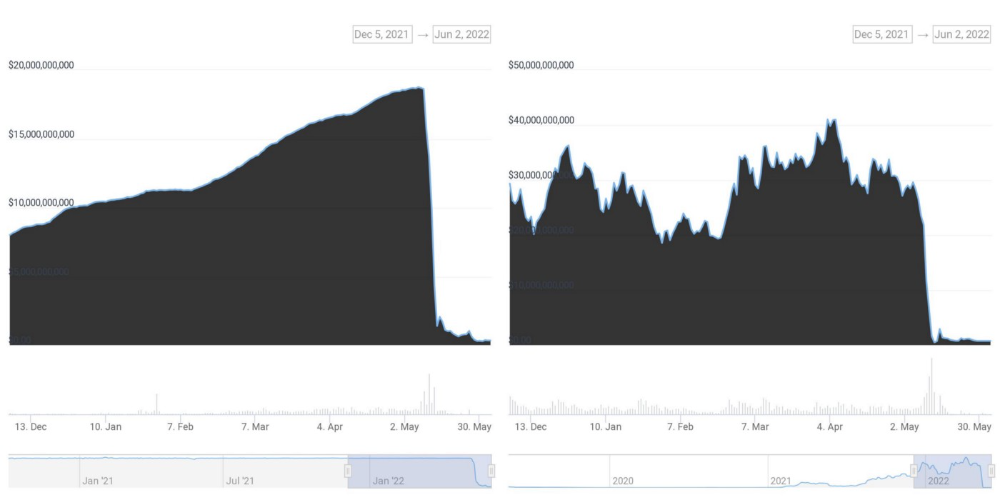

UST depegs and LUNA crashes 99.999% in a fraction of the time it takes the Moon to orbit the Earth.

Fat Man, a Terra whistle-blower, promises to expose Do Kwon's dirty secrets and shady deals.

The Terra community has voted to relaunch Terra LUNA on a new blockchain. The Terra 2.0 Phoenix-1 blockchain went live on May 28, 2022, and holders were airdropped the new token, which kept the name LUNA, while the old LUNA became LUNA Classic (LUNC).

Does LUNA deserve another chance? To answer this, or at least start a conversation about the Terra 2.0 chain's advantages and limitations, we must assess its fundamentals, ideology, and long-term vision.

Whatever the result, our analysis must be thorough and ruthless. A failure of this magnitude cannot happen again, so we must magnify every potential breaking point by 10.

Will UST and LUNA holders be compensated in full?

The obvious. First, and arguably most important, is to restore previous UST and LUNA holders' bags.

Terra 2.0 has 1,000,000,000 tokens to distribute.

Community pool: 25%

Pre-attack LUNA holders: 35%

Pre-attack aUST holders: 10%

Post-attack LUNA holders: 10%

Post-attack UST holders: 20%

Every LUNA and UST holder has been compensated according to the above proposal.

According to self-reported data, the new chain has 210,000,000 tokens and a $1.3bn marketcap. LUNC and UST alone lost $40bn. The new token must fill this gap. Since launch:

LUNA holders collectively own $1b worth of LUNA if we subtract the 25% community pool airdrop from the current market cap and assume airdropped LUNA was never sold.

At the current supply, the chain must grow 40 times to compensate holders, which means LUNA must reach about $240.
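These figures can be checked roughly from the numbers quoted above. The sketch below is a back-of-the-envelope calculation, not the author's own working; the function and field names are hypothetical, and it assumes the circulating supply stays fixed. The unrounded inputs land slightly above the article's rounded "40 times" and "$240".

```javascript
// Rough check of the "40 times" and "$240" figures using the quoted numbers.
// Assumption: supply stays at its current level while the price rises.
function recoveryTargets({ marketCapUsd, supply, communityPoolShare, lossesUsd }) {
  const price = marketCapUsd / supply;
  // Value held by airdrop recipients, excluding the community pool.
  const holdersValueUsd = marketCapUsd * (1 - communityPoolShare);
  // How many times that value must grow to match the losses.
  const growthMultiple = lossesUsd / holdersValueUsd;
  return { price, holdersValueUsd, growthMultiple, targetPrice: price * growthMultiple };
}

const targets = recoveryTargets({
  marketCapUsd: 1.3e9,      // $1.3bn market cap
  supply: 210e6,            // 210,000,000 tokens
  communityPoolShare: 0.25, // 25% community pool
  lossesUsd: 40e9,          // $40bn lost by LUNC and UST
});
console.log(targets);
```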

LUNA needs a full-on Bull Market to make LUNC and UST holders whole.

Who knows if you'll be whole? From the time you bought to the amount and price, there are too many variables to determine if Terra can cover individual losses.

The above distribution doesn't consider individual cases. Terra didn't solve individual cases. It would have been huge.

What does LUNA offer in terms of value?

UST's marketcap peaked at $18bn, while LUNC's was $41bn. LUNC and UST drove the Terra chain's value.

After it was confirmed (again) that algorithmic stablecoins are bad, Terra 2.0 will no longer support them.

Algorithmic stablecoins contributed greatly to Terra's growth and value proposition. Terra 2.0 has no product without algorithmic stablecoins.

Terra 2.0 has an identity crisis because it has no actual product. It's like Volkswagen faking carbon emission results and then stopping car production.

A project that has already lost the trust of its users and nearly all of its value cannot survive without a clear and in-demand use case.

Do Kwon, how about him?

Oh, the guy who calls people poor on Twitter? Who challenges crypto billionaires to break his LUNA chain? Who dissolved Terraform Labs' South Korean entity before the depeg? That arrogant guy?

That's not a good image for LUNA, especially when making amends. I think he should step down and let a nicer person be Terra 2.0's frontman.

The verdict

Terra has a terrific community with an arrogant, unlikeable leader. The new LUNA chain must grow 40 times before it can start making up its losses, and even then, not everyone's losses will be covered.

I won't invest in Terra 2.0 or other algorithmic stablecoins in the near future. I won't go within 100 miles of any Do Kwon-related project. My opinion.

Can Terra 2.0 be saved? Comment below.

You might also like

Adrien Book

3 years ago

What is Vitalik Buterin's newest concept, the Soulbound NFT?

Decentralizing Web3's soul

Our tech must reflect our non-transactional connections. Web3 arose from a lack of social links. It must strengthen these linkages to get widespread adoption. Soulbound NFTs help.

This NFT creates digital proofs of our social ties. It embodies G. Simmel's idea of identity, in which individuality emerges from social groups, just as social groups evolve from people.

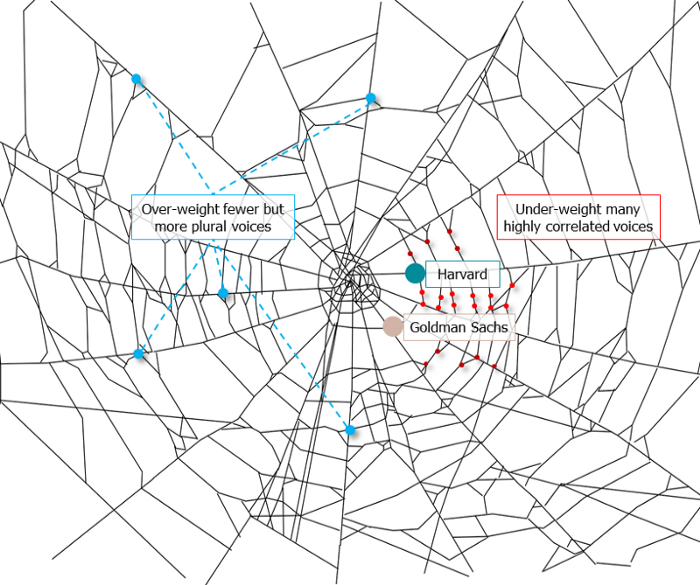

It's multipurpose. First, gather online our distinctive social features. Second, highlight and categorize social relationships between entities and people to create a spiderweb of networks.

1. 🌐 Reducing online manipulation: Only socially rich or respectable crypto wallets can participate in projects, ensuring that no one can create several wallets to influence decentralized project governance.

2. 🤝 Improving social links: Some sectors of society lack social context. Racism, sexism, and homophobia do that. Public wallets can help identify and connect distinct social groupings.

3. 👩❤️💋👨 Increasing pluralism: Soulbound tokens can ensure that socially connected wallets have less voting power online to increase pluralism. We can also overweight a minority of numerous voices.

4. 💰Making more informed decisions: Taking out an insurance policy requires a life review. Why not loans? Character isn't limited by income, and many people need a chance.

5. 🎶 Finding a community: Soulbound tokens are accessible to everyone. This means we can find people who are like us but also different. This is probably rare among your friends and family.

NFTs are dangerous, and I don't like them. Social credit score, privacy, lost wallet. We must stay informed and keep talking to innovators.

E. Glen Weyl, Puja Ohlhaver and Vitalik Buterin get all the credit for these ideas, having written the very accessible white paper “Decentralized Society: Finding Web3’s Soul”.

Navdeep Yadav

3 years ago

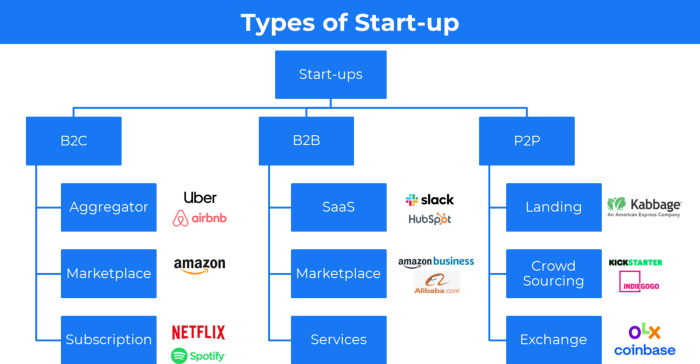

31 startup company models (with examples)

Many people find the internet's various business models bewildering.

This article summarizes 31 startup business models.

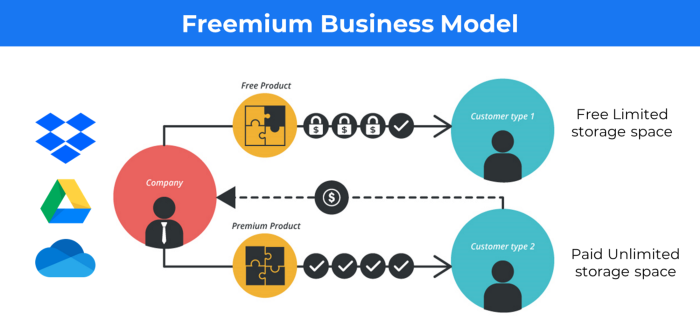

1. The freemium business model (free plus premium)

The freemium business model offers basic software, games, or services for free and charges for enhancements.

Examples include Slack, iCloud, and Google Drive

Provide a rudimentary, free version of your product or service to users.

Google Drive and Dropbox offer 15GB and 2GB of free space, respectively, but charge for more.


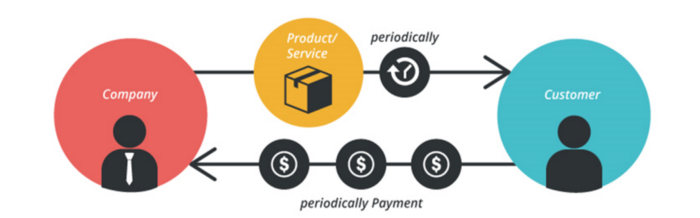

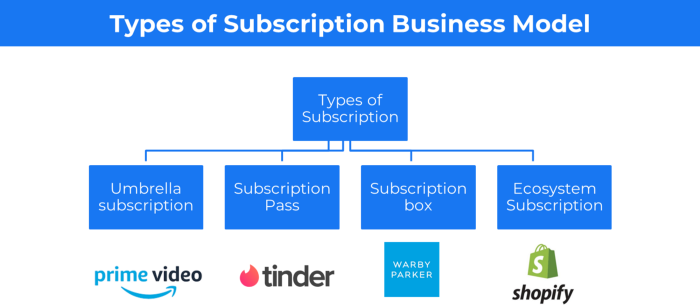

2. The Business Model of Subscription

Subscription business models sell a product or service for recurring monthly or yearly revenue.

Examples: Tinder, Netflix, Shopify, etc

It's the next step to Freemium if a customer wants to pay monthly for premium features.


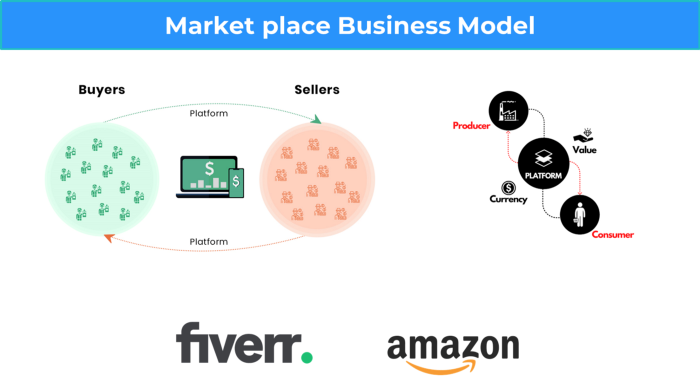

3. The marketplace business model

It's an e-commerce site or app where third-party sellers sell products or services.

Examples are Amazon and Fiverr.

On Amazon's marketplace, a third-party vendor sells a product.

Freelancers on Fiverr offer specialized skills like graphic design.

Marketplace's business concept is explained.

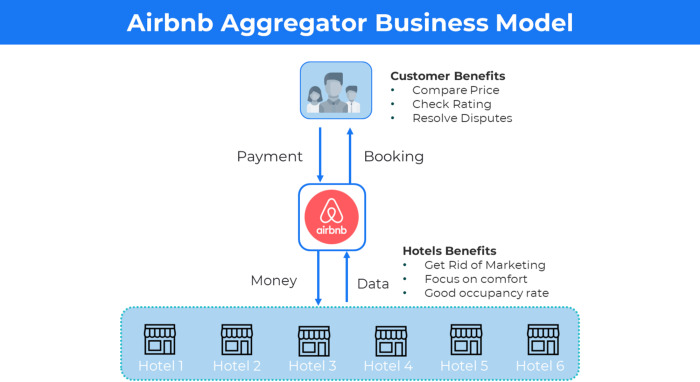

4. The aggregator business model

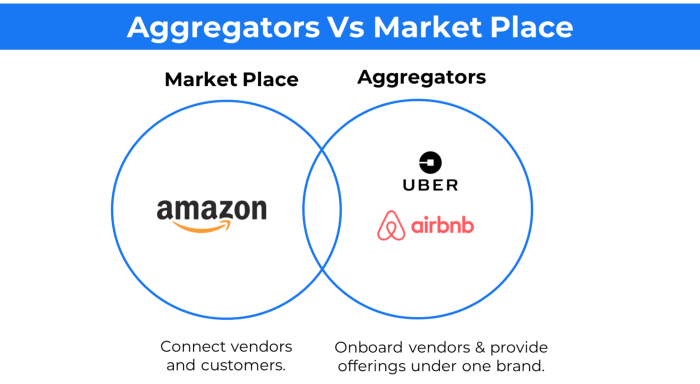

In the aggregator business model, the service is branded.

Uber, Airbnb, and other examples

Marketplace and aggregator business models differ.

Marketplaces such as Amazon and Fiverr link merchants and customers and take a 10-20% revenue split.

Aggregators such as Uber and Airbnb have providers join the platform and offer their services under the aggregator's own brand.

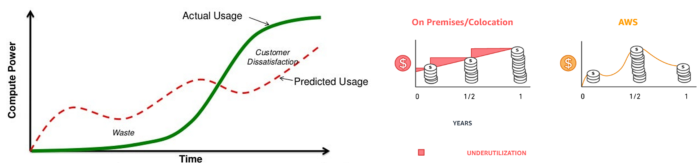

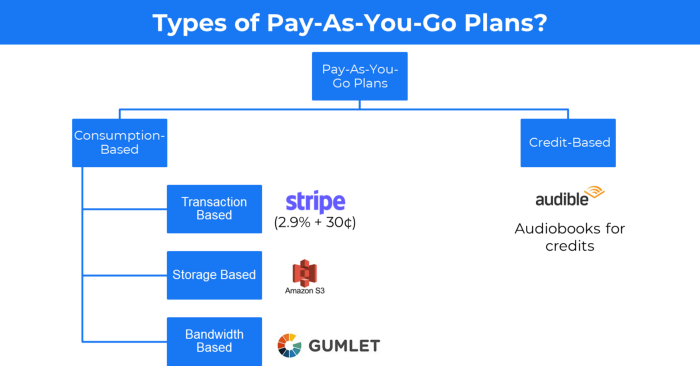

5. The pay-as-you-go concept of business

This is a consumption-based pricing system. Cloud companies use it.

Examples: Amazon Web Services (AWS) and Google Cloud Platform (GCP)

AWS, an Amazon subsidiary, offers over 200 pay-as-you-go cloud services.

“In short, the more you use the more you pay”

When it's difficult to divide clients into pricing levels, pay-as-you-go is employed.

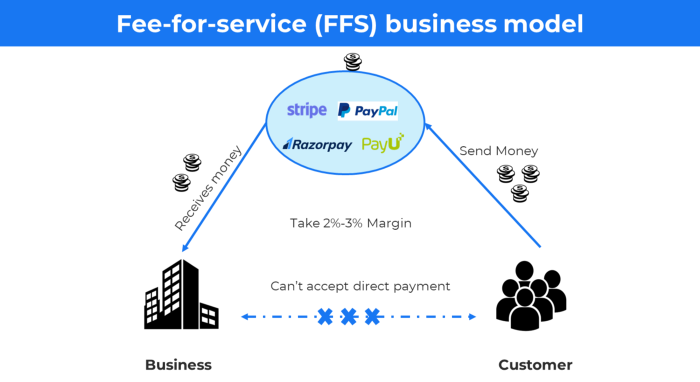

6. The business model known as fee-for-service (FFS)

FFS charges fixed and variable fees for each successful payment.

Examples include PayU, PayPal, and Stripe.

Stripe charges 2.9% + 30¢ per payment.

These firms offer a payment gateway to take consumer payments and deposit them to a business account.
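The fixed-plus-variable structure is easy to express in code. The sketch below uses the Stripe figures quoted above, assuming the fixed part is 30 cents; the function name is hypothetical, and the rounding rule is an assumption rather than any provider's documented behavior. Amounts are kept in integer cents to avoid floating-point drift.

```javascript
// Fee-for-service sketch: a percentage of the payment plus a fixed charge.
// Assumptions: 2.9% expressed as 290 basis points, 30 cents fixed, and
// round-to-nearest-cent on the variable part.
function processingFeeCents(amountCents, percentBasisPoints = 290, fixedCents = 30) {
  const variable = Math.round((amountCents * percentBasisPoints) / 10000);
  return variable + fixedCents;
}

const amount = 10000; // a $100.00 payment
const fee = processingFeeCents(amount);
console.log(`fee: ${fee} cents, merchant receives: ${amount - fee} cents`);
```

Because of the fixed part, small payments lose a larger share to fees than large ones.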

Fintech business model

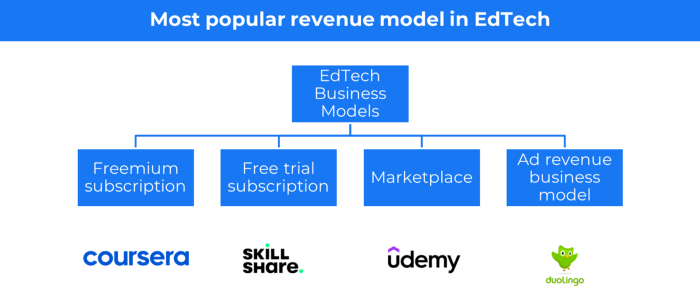

7. EdTech business strategy

In edtech, you generate money by selling material or teaching as a service.

edtech business models

Freemium: course content is free but certification isn't, e.g. Coursera.

Free trial: Skillshare offers free trials followed by monthly or annual subscriptions.

Self-serve marketplace: you pick what to learn.

Ad-revenue model: the company makes money by showing adverts to its huge user base.

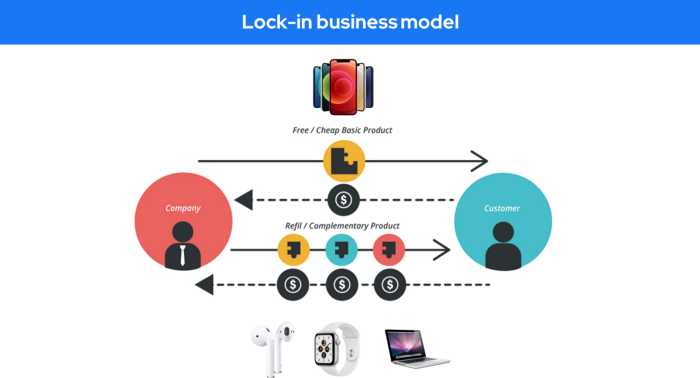

8. Lock-in business strategy

Lock in prevents customers from switching to a competitor's brand or offering.

It uses switching costs or the effort needed to transfer (soft lock-in), an improved brand experience, or incentives.

Apple, SAP, and other examples

Apple offers an iPhone and then locks you in with extra hardware (Watch, AirPods) and platform services (App Store, Apple Music, cloud, etc.).

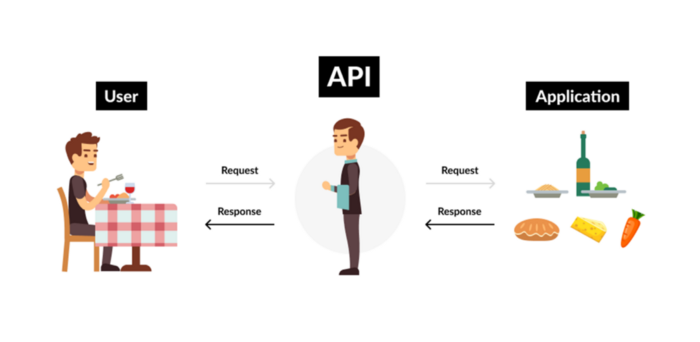

9. Business Model for API Licensing

APIs let third-party apps communicate with your service.

Uber and Airbnb use Google Maps APIs for app navigation.

Examples are Google Map APIs (Map), Sendgrid (Email), and Twilio (SMS).

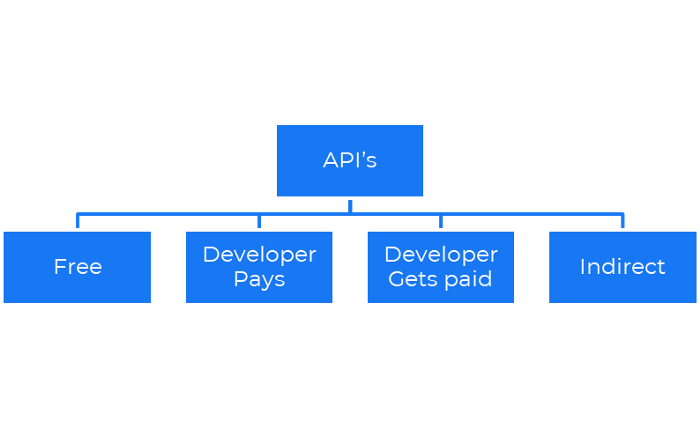

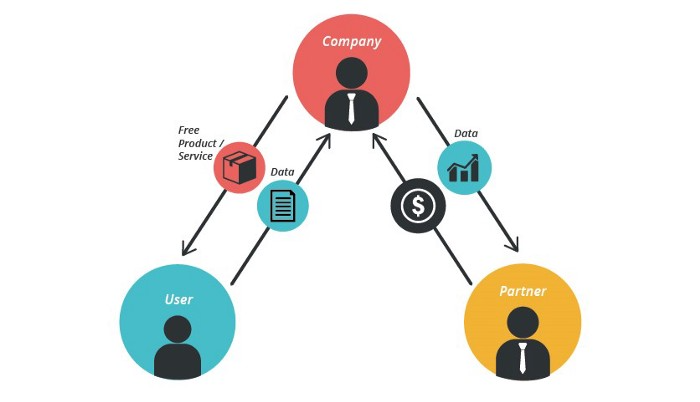

Business models for APIs

Free: The simplest API-driven business model that enables unrestricted API access for app developers. Google Translate and Facebook are two examples.

Developer Pays: Under this arrangement, service providers such as AWS, Twilio, Github, Stripe, and others must be paid by application developers.

Developer Gets Paid: Developers or content producers are compensated for distributing the API through their work. For example, Amazon's affiliate program.

10. Open-source enterprise

Open-source software can be inspected, modified, and improved by anybody.

Examples include Firefox, Java, and Android.

Google paid Mozilla $435.702 million to be its default search engine in 2018.

Open-source software can earn money in several ways.

Paid support: The project maintainer knows the codebase well and can charge for customization.

Software as a Service: A full database solution is available as a hosted service (MongoDB Atlas), with a fee for the monitoring tool.

Open core: RStudio is an improved GUI built on the open-source R language.

GitHub Sponsors: Sponsorships go to the developers in full.

Paid feature requests: Earn money by developing open-source add-ons for existing products.

Open-source business model

11. The business model for data

In this model, the software or algorithm collects client data to improve or monetize the system.

OpenAI's GPT-3 gets smarter with use.

Foursquare allows users to exchange check-in locations.

Later, it compiled large datasets to help retailers like Starbucks launch new outlets.

12. Business Model Using Blockchain

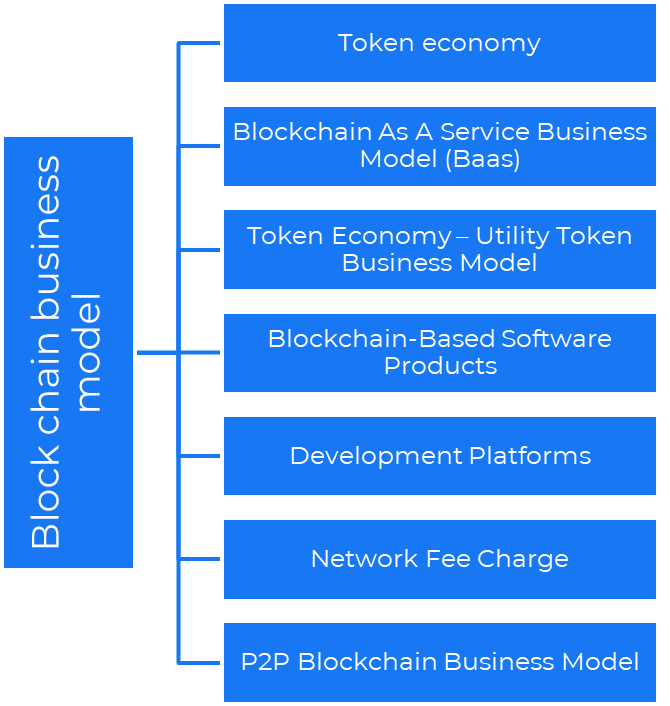

Blockchain is a distributed ledger technology that allows firms to deploy smart contracts without a central authority.

Examples include Alchemy, Solana, and Ethereum.

Business models using blockchain

Token or utility economy: A business using the token model issues some kind of token as one of the ways to compensate token holders or miners. For instance, Solana and Ethereum.

Bitcoin Cash P2P business model: Peer-to-peer (P2P) blockchain technology permits direct communication between end users, as in IPFS.

Enterprise Blockchain as a Service (BaaS): BaaS focuses on offering ecosystem services in the Web3 sector, similar to those offered by Amazon (AWS) and Microsoft (Azure). Example: Ethereum Blockchain as a Service with Bitcoin (EBaaS).

Blockchain-based aggregators: Like an AWS for blockchain, the service lets you reach your preferred blockchain by making an API call. As an illustration, Alchemy offers nodes for many blockchains.

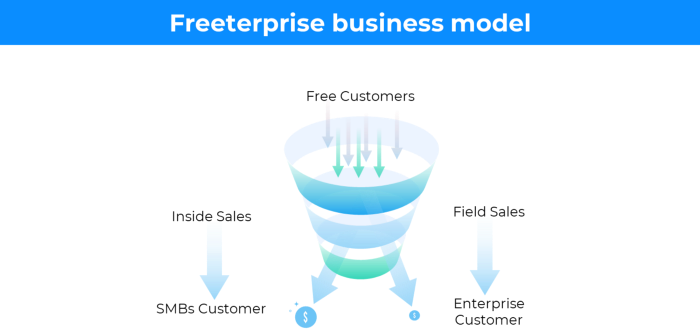

13. The freeterprise model

In the freeterprise business model, free professional accounts are led into the funnel by the free product and later become B2B/enterprise accounts.

For instance, Slack and Zoom

Freeterprise companies flourish through collaboration.

Start with a free professional account to build an enterprise.

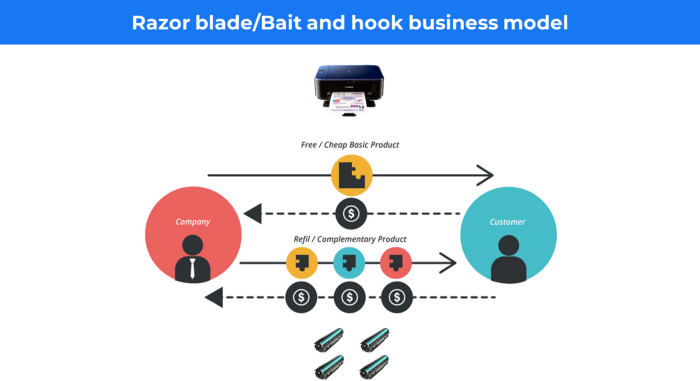

14. The razor-and-blades business model

It's employed in hardware where one piece is sold at a loss and profits are made through refills or add-ons.

Examples: Gillette razors and blades, coffee machines and beans, HP printers and cartridges, etc.

Sony sells the Playstation console at a loss but makes up for it by selling games and charging for online services.

Advantages of the Razor-Razorblade Method

Lowers the risk for a customer trying a product: buyers can test the goods and services without a high initial investment.

The product's ongoing revenue stream has the potential to generate sales that far outweigh the original investment.

Razor blade business model

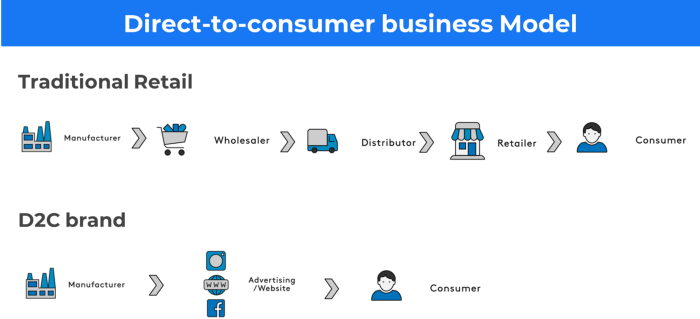

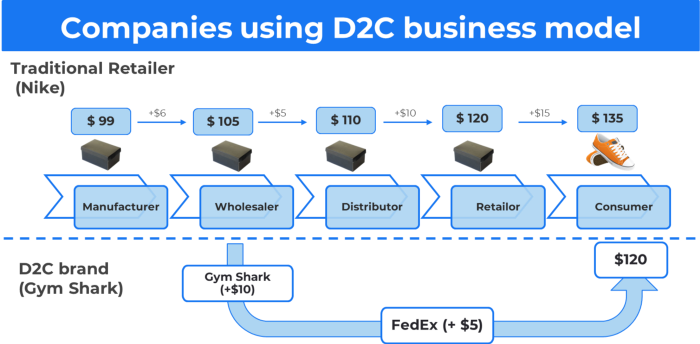

15. The business model of direct-to-consumer (D2C)

In D2C, the company sells directly to the end consumer through its website using a third-party logistic partner.

Examples include GymShark and Kylie Cosmetics.

D2C brands can only expand via websites, marketplaces (Amazon, eBay), etc.

D2C benefits

Lower reliance on middlemen = greater profitability

You now have access to more precise demographic and geographic customer data.

Additional space for product testing

Increased customisation throughout your entire product line

Less inventory

16. Business model: White Label vs. Private Label

Private label/White label products are made by a contract or third-party manufacturer.

Most Amazon electronics are made in China and white-labeled.

Examples: supplements and electronics sold on Amazon.

Contract manufacturers handle everything after brands choose the product, quantity, and label design.

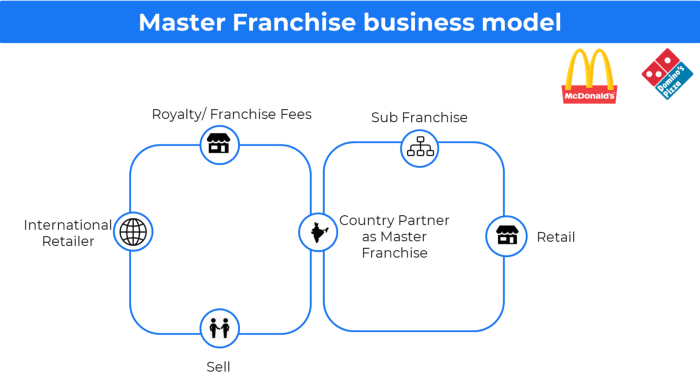

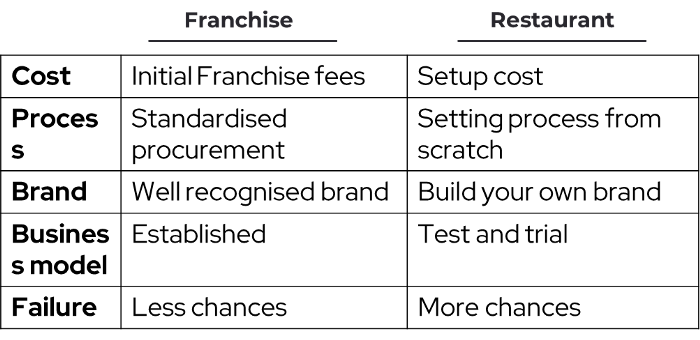

17. The franchise model

The franchisee uses the franchisor's trademark, branding, and business strategy (company).

For instance, KFC, Domino's, etc.

Subway, Domino's, Burger King, etc. use this business strategy.

Many people pick a franchise because opening a restaurant is risky.

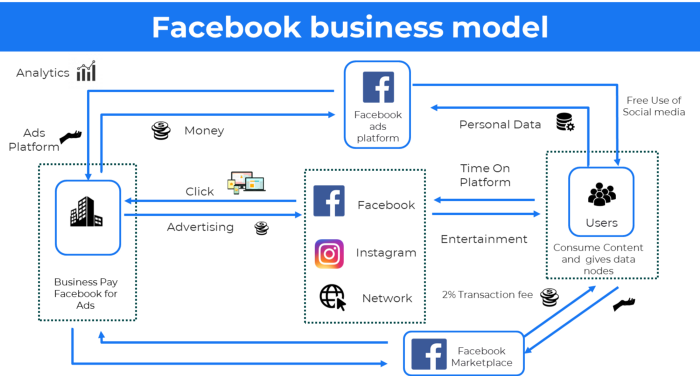

18. Ad-based business model

Social media and search engine giants exploit search and interest data to deliver adverts.

Google, Meta, TikTok, and Snapchat are some examples.

Users don't pay for the service or product given, e.g. Google users don't pay for searches.

In exchange, these companies collect data and hyper-personalize adverts to maximize revenue.

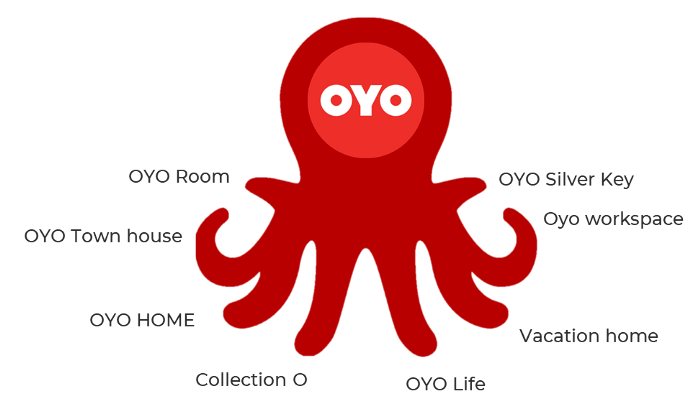

19. The octopus business model

Each business unit functions separately but is connected to the main body.

Instance: Oyo

OYO is Asia's Airbnb, operating hotels, co-working, co-living, and vacation houses.

20. Transactional business model

Sales to customers produce revenue.

E-commerce sites and online stores employ this model.

Examples: Goli and GymShark.

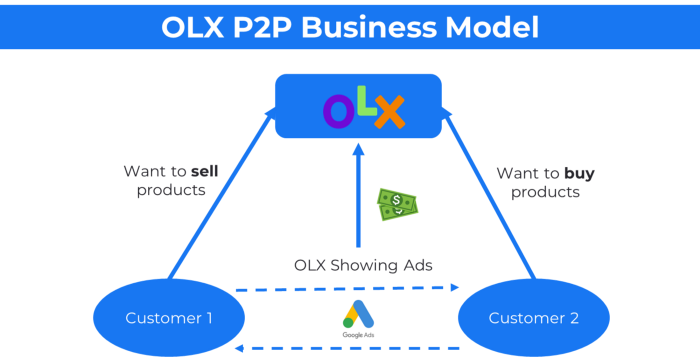

21. The peer-to-peer (P2P) business model

In P2P, two people buy and sell goods and services without a third party or platform.

Consider OLX.

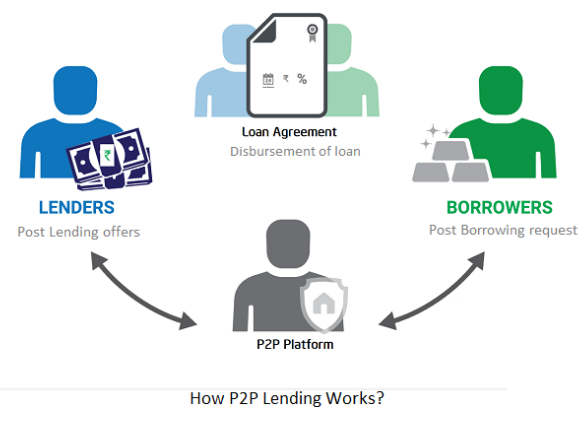

22. The P2P lending business model

In P2P lending, one private individual (the P2P lender) lends money to, or invests it with, another (the P2P borrower).

Instance: Kabbage

Social lending lets people lend and borrow money directly from each other without an intermediary financial institution.

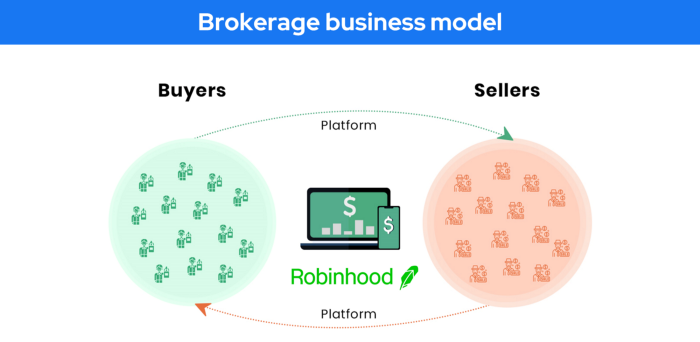

23. A business model for brokers

Brokerages charge a commission or fee for their services.

Examples include eBay, Coinbase, and Robinhood.

Brokerage businesses that operate on this model are common in real estate, finance, and online marketplaces.

Buy/sell matching model: Financial brokers, insurance brokers, and others match buy and sell transactions and charge a commission.

Classified-advertiser model: These brokers charge an advertiser a fee based on the date, place, size, or type of an advertisement. For instance, Craigslist.

24. The dropshipping business model

Dropshipping allows stores to sell things without holding physical inventories.

When a customer orders, the store uses a third-party supplier and logistics partners to fulfill it.

The retailer handles the product portfolio and customer experience; the fulfiller ships the order the consumer places.

Dropshipping advantages

Less money is needed (Low overhead-No Inventory or warehousing)

Simple to start (costs under $100)

Flexible work environment

New product testing is simpler

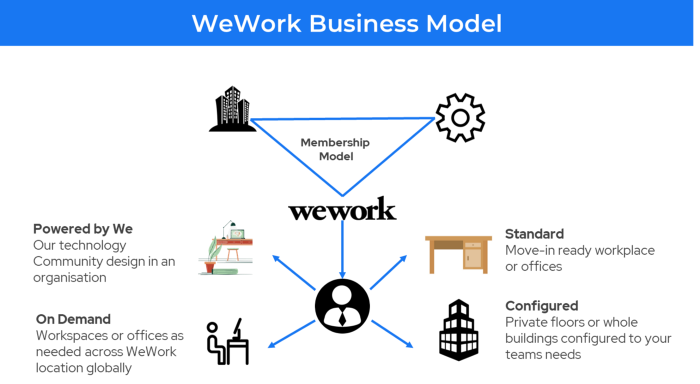

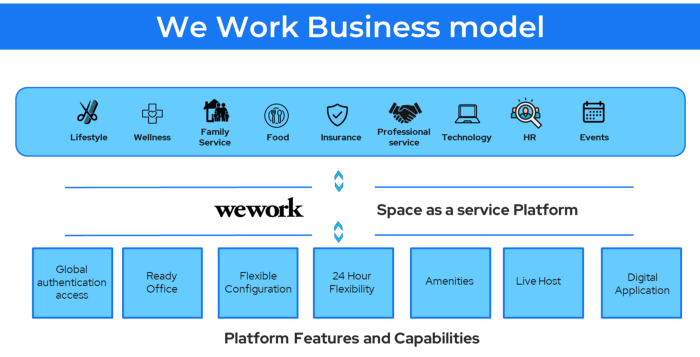

25. Business Model for Space as a Service

It's centered on a shared economy that lets millennials live or work in communal areas without ownership or lease.

Consider WeWork and Airbnb.

WeWork helps businesses with real estate, legal compliance, maintenance, and repair.

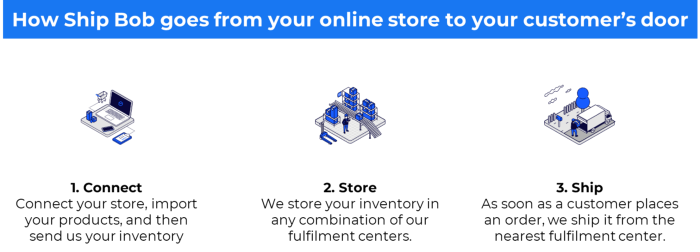

26. The business model for third-party logistics (3PL)

In 3PL, a business outsources product delivery, warehousing, and fulfillment to an external logistics company.

Examples include ShipBob, Amazon Fulfillment, and more.

3PL partners warehouse, fulfill, and return inbound and outbound items for a charge.

Inbound logistics involves bringing products from suppliers to your warehouse.

Outbound logistics involves moving products from a company's production line or warehouse to the customer.

27. The last-mile delivery paradigm as a commercial strategy

Last-mile delivery is the collection of supply chain actions that reach the end client.

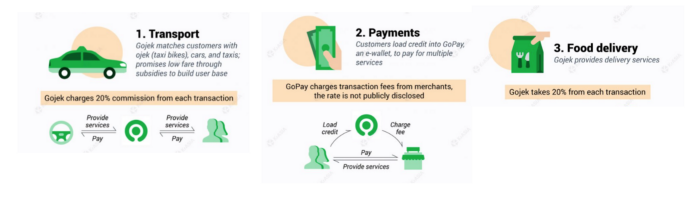

Examples include Rappi, Gojek, and Postmates.

Last-mile delivery is closely tied to on-demand services, and demand peaks at night.

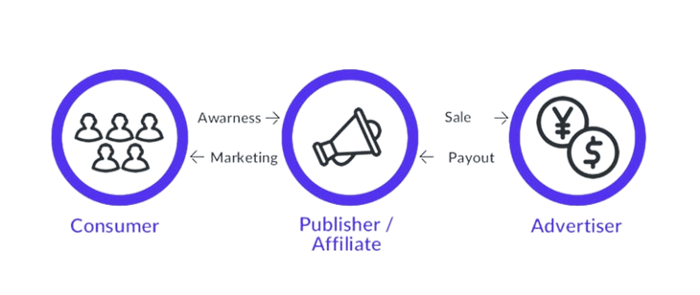

28. The use of affiliate marketing

Affiliate marketing involves promoting other companies' products and earning a commission on the resulting sales.

Examples include HubSpot, Amazon, and Skillshare.

Your favorite YouTube channel probably uses those short Amazon links to earn 5% of sales.
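The commission arithmetic can be sketched in a few lines of Python. This is only an illustration: the 5% rate is the figure mentioned above, while the function name and the sale amounts are hypothetical.

```python
def affiliate_commission(sale_amounts, rate=0.05):
    """Return the commission earned on a list of referred sales (in dollars)."""
    return sum(sale_amounts) * rate


# ASSUMPTION: three hypothetical purchases made through an affiliate link.
referred_sales = [40.00, 120.00, 40.00]

commission = affiliate_commission(referred_sales)
print(f"Commission on ${sum(referred_sales):.2f} of sales: ${commission:.2f}")
```

On $200 of referred sales, a 5% rate works out to $10 for the affiliate.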

Affiliate marketing's benefits

A company can have numerous independent marketers promote on its behalf in exchange for a success fee or commission.

The system stays transparent because each influencer gets a specific tracking link and an online dashboard for viewing earnings.

Affiliates learn about the newest deals and get access to promotional materials.

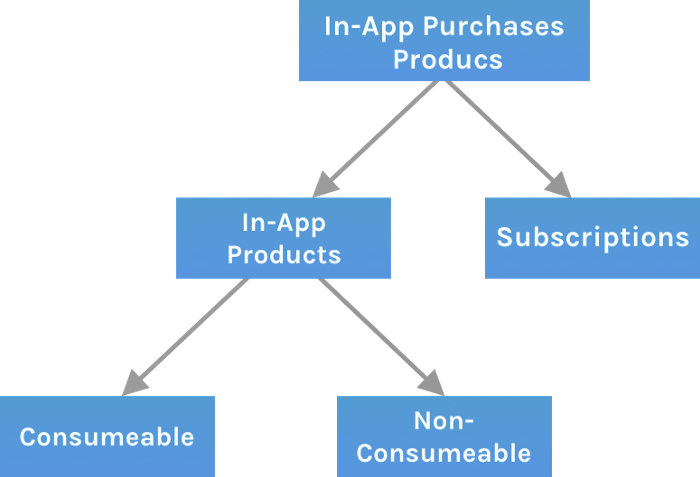

29. The business model for virtual goods

This is an in-app purchase for an intangible product.

Examples include PUBG, Roblox, and Candy Crush.

Consumables, such as in-game currency, get used up. Non-consumable products provide a permanent advantage without repeated purchases.

30. Business Models for Cloud Kitchens

Also known as ghost, dark, or black-box kitchens.

A cloud kitchen is a delivery-only restaurant: it offers no dine-in service.

Examples include NextBite and Faasos.

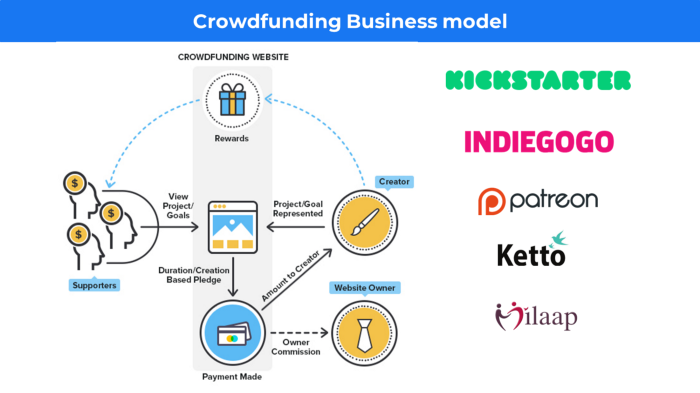

31. Crowdsourcing as a Business Model

Crowdsourcing = Using the crowd as a platform's source.

In crowdsourcing, you get support from people around the world without hiring them.

Crowdsourcing sites

Open-source software: the source code is openly available so that developers can edit or enhance it. Examples include the Firefox browser and the Linux operating system.

Crowdfunding: the Oculus headset is, in essence, an example of crowdfunding, in which backers contribute with no expectation of a return.

DANIEL CLERY

3 years ago

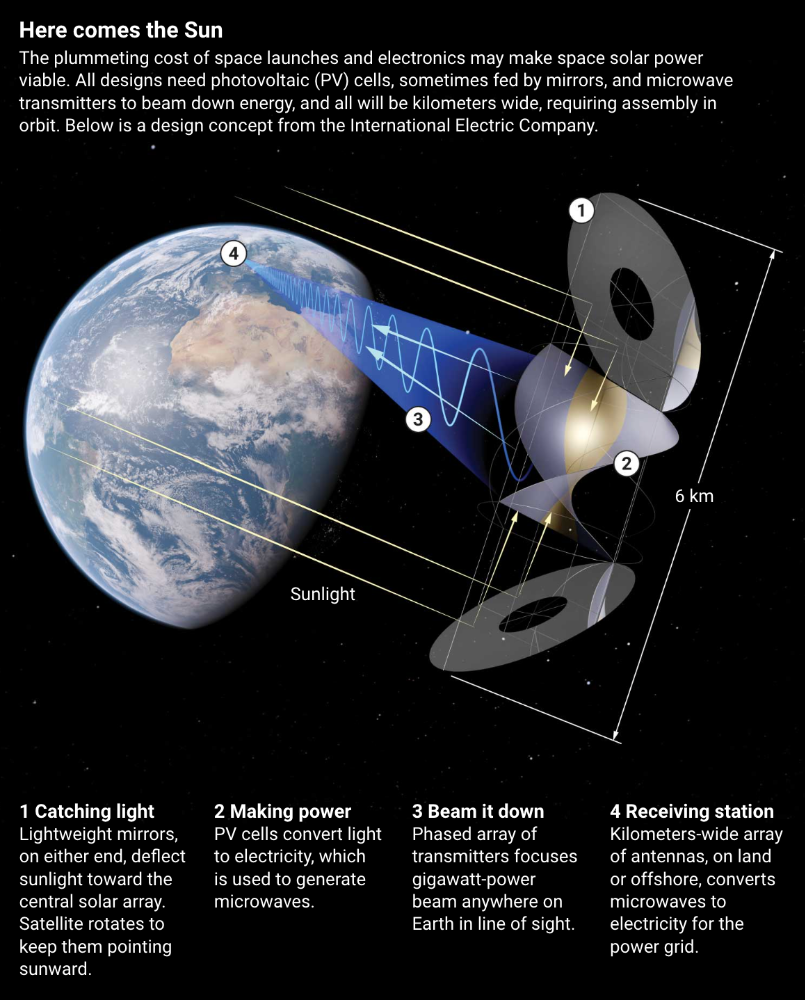

Can space-based solar power solve Earth's energy problems?

Better technology and lower launch costs revive science-fiction tech.

Airbus engineers gave a glimpse of a possible future for clean energy in Munich last month. They captured sunlight with solar panels, converted it into microwaves, and beamed the energy across an airplane hangar, where it lit up a model city. The test delivered only 2 kW across 36 meters, but it raised a serious question: Should we send enormous satellites into space to capture solar energy? In orbit, free of clouds and nighttime, they could generate power 24/7 and send it to Earth.

Airbus engineer Jean-Dominique Coste calls it an engineering problem. “But it’s never been done at [large] scale.”

Proponents of space solar power say the demand for green energy, cheaper access to space, and improved technology could change that. Once someone makes the commercial investment, they argue, the sector will grow. Former NASA researcher John Mankins says it could become a trillion-dollar industry.

Myriad uncertainties remain, including whether gigawatts of power can be beamed to Earth efficiently and without burning birds or people. But concept papers are giving way to ground and space tests. The European Space Agency (ESA), which supported the Munich demo, will propose ground tests to its member nations next month. The U.K. government offered £6 million this year to evaluate technologies. Agencies in China, Japan, South Korea, and the United States are also working on the idea. NASA policy analyst Nikolai Joseph, author of an upcoming assessment, says the tone of the conversation has changed. What formerly appeared unattainable may now be a matter of "bringing it all together."

NASA studied space solar power during the fuel crisis of the mid-1970s. But a proposed demonstration mission using 1970s technology would have cost $1 trillion. According to Mankins, the idea remains taboo at the agency.

Both space and solar power technology have advanced since then. The efficiency of photovoltaic (PV) solar cells has increased 25% over the past decade, Jones says. The telecom industry routinely uses microwave transmitters and receivers. Robots being designed to repair and refuel spacecraft might also assemble solar arrays.

Falling launch costs have also boosted the idea. A solar power satellite large enough to replace a nuclear or coal plant would require hundreds of launches. "It would require a massive construction complex in orbit," says ESA scientist Sanjay Vijendran.

SpaceX has made the idea more plausible. A launch on a SpaceX Falcon 9 rocket costs $2600 per kilogram of payload, less than 5% of what the Space Shuttle cost, and the company has promised $10 per kilogram for its giant Starship, slated to launch this year. "It changes the equation," Jones says. "Economics rules."
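To get a feel for how much those per-kilogram prices matter, the arithmetic can be sketched in Python. The two prices are the ones quoted above; the satellite mass is a purely hypothetical round number chosen for illustration, and the function name is an assumption as well.

```python
# Per-kilogram launch prices quoted in the article (USD).
FALCON9_USD_PER_KG = 2600
STARSHIP_USD_PER_KG = 10  # SpaceX's promised price, not a demonstrated one

# "Less than 5%" of the Shuttle's cost implies the Shuttle cost
# more than 20 times the Falcon 9 figure per kilogram.
SHUTTLE_MIN_USD_PER_KG = FALCON9_USD_PER_KG * 20


def launch_cost(mass_kg, usd_per_kg):
    """Return the cost in USD of lifting mass_kg at a given price per kilogram."""
    return mass_kg * usd_per_kg


# ASSUMPTION: a hypothetical 1000-tonne satellite, used only for illustration.
hypothetical_mass_kg = 1_000_000

falcon9_cost = launch_cost(hypothetical_mass_kg, FALCON9_USD_PER_KG)
starship_cost = launch_cost(hypothetical_mass_kg, STARSHIP_USD_PER_KG)
price_ratio = FALCON9_USD_PER_KG // STARSHIP_USD_PER_KG

print(f"Falcon 9: ${falcon9_cost:,}")
print(f"Starship: ${starship_cost:,}")
print(f"Falcon 9 costs {price_ratio}x the promised Starship price per kilogram")
```

At the assumed mass, the launch bill falls from $2.6 billion to $10 million, a factor of 260, if the promised Starship price materializes.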

Mass production also reduces the cost of space hardware. Satellites are typically one-offs made with pricey space-rated parts. The Mars rover Perseverance cost $2 million per kilogram. SpaceX's Starlink satellites cost less than $1000 per kilogram. Mankins has long contended that this strategy could work for massive space structures consisting of many identical low-cost components. Low-cost launches and "hypermodularity" make space solar power economical, he says.

Better engineering can also improve the economics. Coste says Airbus's Munich demonstration was 5% efficient, comparing the solar input with the electricity output. Ground-based solar arrays perform better, but only when the Sun shines. Studies show space solar could compete with existing energy sources on price if it reaches 20% efficiency.

Lighter parts reduce costs. "Sandwich panels" with PV cells on one side, electronics in the middle, and a microwave transmitter on the other could help. Thousands of them could make up a solar satellite without heavy wiring to move power around. In 2020, a team from the U.S. Naval Research Laboratory (NRL) flew one on the Air Force's X-37B space plane.

NRL project head Paul Jaffe says the experiment is still providing data. The panel converts solar power into microwaves at 8% efficiency, but it does not beam them to Earth. The Air Force expects to test a sandwich panel that can beam power down next year. The California Institute of Technology will launch its prototype panel with SpaceX in December.

As a satellite orbits, the PV side of sandwich panels sometimes faces away from the Sun since the microwave side must always face Earth. To maintain 24-hour power, a satellite needs mirrors to keep that side illuminated and focus light on the PV. In a 2012 NASA study by Mankins, a bowl-shaped device with thousands of thin-film mirrors focuses light onto the PV array.

International Electric Company's Ian Cash takes a different approach. His proposed satellite uses enormous fixed mirrors to redirect light onto a PV and microwave array while the structure spins (see graphic, above). One billion tiny perpendicular antennas act as a "phased array" to steer the beam electronically toward Earth, regardless of the satellite's orientation. This design, Cash argues, is "the most competitive economically."

If a space-based power plant ever flies, its power must be delivered safely and efficiently. Jaffe's team at NRL recently beamed 1.6 kW over 1 km, and teams in Japan, China, and South Korea have made comparable efforts. But current transmitters and receivers lose half their input power. Vijendran says space solar beaming needs 75% efficiency, "preferably 90%."

Beaming gigawatts through the atmosphere also demands testing. Most designs aim to produce a beam kilometers wide so that any ship, plane, human, or bird that strays into it receives only a tiny, hopefully harmless, portion of the 2-gigawatt transmission. Receiving antennas are cheap to build but require a lot of land, Jones adds. You could grow crops under them or place them offshore.

Europe's public agencies currently prioritize space solar power. "There's a devotion you don't see in the U.S.," Jones says. ESA commissioned two cost/benefit studies of space solar last year. Vijendran says space solar might match the cost of ground-based renewables. Even at a higher price, comparable to nuclear power, its 24/7 availability would make it competitive.

ESA will urge member states in November to fund a technical assessment. If the news is good, the agency will draw up plans for a full effort in 2025. With €15 billion to €20 billion, ESA could launch a megawatt-scale demonstration facility by 2030 and a gigawatt-scale facility by 2040. The effort would be a "moonshot."