More on Personal Growth

Tim Denning

3 years ago

In this recession, according to Mark Cuban, you need to outwork everyone

Here’s why that’s baloney

Mark Cuban popularized entrepreneurship.

Shark Tank (which made Mark famous) made starting a business glamorous to attract more entrepreneurs.

First off, this isn't an anti-billionaire rant.

Mark Cuban has done excellently. He's a smart, principled businessman. I enjoy his Web3 work. But Mark's work and productivity theories are absurd.

You don't need to outwork everyone in this recession to live well.

You won't be able to outwork me.

Yuck! Mark's words made me gag.

Why do boys think working is a football game where the winner wins a Super Bowl trophy? To outwork you.

Hard work doesn't equal intelligence.

Highly clever professionals spend 4 hours a day in a flow state, then go home to relax with family.

If you don't put forth the effort, someone else will.

- Mark.

He'll burn out. He's delusional and doesn't understand productivity. Boredom or disconnection spark our best thoughts.

TikTok outlaws boredom.

In a spare minute, we check our phones because we can't stand stillness.

All this work p*rn makes things worse. When is it okay to feel again? Because I can’t feel anything when I’m drowning in work and haven’t had a holiday in 2 years.

Your rivals are actively attempting to undermine you.

Ohhh please Mark…seriously.

This isn't a Tom Hanks war film. Relax. Not everyone is a rival. Your only competitor is yourself. To survive the recession, be better than you were a year ago.

If you get rich, great. If not, there's more to life than Lambos and angel investments.

Some want to relax and enjoy life. No competition. These are people with lives, trying to endure the recession and record-high prices.

This fictitious rival worsens life and work.

If you are truly talented, you will motivate others to work more diligently and effectively.

No Mark. Soz.

If you're a good leader, you won't brag about working hard and treating others like cogs. Treat them like humans. You'll have EQ.

Silly statements like this are caused by an out-of-control ego. I no longer watch Shark Tank.

Ego over humanity.

Good leaders will urge people to keep together during the recession. Good leaders support those who are laid off and need a reference.

Not harder, quicker, better. That created my mental health problems 10 years ago.

Truth: we want to work less.

The promotion of entrepreneurship is ludicrous.

Marvel superheroes. Seriously, relax Max.

I used to write about entrepreneurship, then I quit. Too many WeWork-style Adam Neumanns. Too much carelessness.

I now use the term "side hustle" when writing about online business or entrepreneurship. It humanizes it.

Stop glorifying. Thinking we'll all be Elon Musks who send rockets to Mars is delusional. Most of us won't create companies employing hundreds.

OK.

The true epidemic is glorification. Fewer selfies. Little birdy needs fewer bank account screenshots. Less Uber talk.

We're exhausted.

Fun, ego-free business can transform the world. Take a relax pill.

Work as if someone were attempting to take everything from you.

I've seen people lose everything.

Myself included. My 20s startup failed. I was almost bankrupt. I thought I'd never recover. Nope.

Best thing ever.

Losing everything reveals your true self. Unintelligent entrepreneur egos perish instantly. Regaining humility revitalizes relationships.

Money's significance shifts. Stop chasing it like a puppy with a bone.

Fearing loss is unfounded.

Here is a more effective approach than outworking everybody.

(You'll thrive in the recession and become wealthy.)

Smarter work

Overworking is donkey work.

You don't want to be a career-long overworker. Instead of wasting time, write down what you do. List tasks and processes.

Mark each item on the list as "keep doing" or "outsource." Document each task step by step. Keep systematizing.

Then recruit a digital employee like Zapier or a virtual assistant in the same country.

Work smart, not hard.

If your big break could burn in hell, diversify like it will.

People err by focusing on one chance.

Chances can vanish. All-in risky. Instead of working like a Mark Cuban groupie, diversify your income.

If you're employed, your customer is your employer.

Sell the same abilities twice and add 2-3 contract clients. Reduce your hours at your main job and take on more clients.

Leave brand loyalty behind

Mark desires his employees' worship.

That's stupid. When times are bad, layoffs multiply. The problem is the false belief that companies care. No. A business maximizes profit and pays you the least.

To care or overpay goes against capitalism (which runs the world). Be honest.

I was a banker. Then the bat virus hit and jobs disappeared faster than I urinate after a night of drinking.

Start being disloyal now since your company will cheerfully replace you with a better applicant. Meet recruiters and hiring managers on LinkedIn. Whenever something goes wrong at work, act.

Be loyal to yourself and your family. Nobody else.

Outwork this instead

Mark doesn't suggest outworking inflation instead of people.

Inflation erodes your time on earth. If you ignore inflation, you'll work harder for less pay every minute.

Financial literacy beats inflation.

Get a side job and earn money online

So you can stop outworking everyone.

The internet gives you leverage on your time. The same effort today yields exponential results later. There are still whole regions that aren't online yet.

Instead of working forever, generate money online.

Final Words

Overworking is stupid. Don't listen to wealthy football jocks.

Work isn't everything. Prioritize diversification, internet income streams, boredom, and financial knowledge throughout the recession.

That’s how to get wealthy rather than burnout-rich.

Matthew Royse

3 years ago

Ten words and phrases to avoid in presentations

Don't say this in public!

Want to wow your audience? Want to deliver a successful presentation? Do you want practical takeaways from your presentation?

Then avoid these phrases.

Public speaking is difficult. People fear public speaking, according to research.

"Public speaking is people's biggest fear, according to studies. Number two is death. Sounds right?" — Comedian Jerry Seinfeld

Yes, public speaking is scary. These words and phrases will make your presentation harder.

Using unnecessary words can weaken your message.

You may have prepared well for your presentation and feel confident. During your presentation, you may freeze up. You may blank or forget.

Effective delivery is even more important than skillful public speaking.

Here are 10 presentation pitfalls.

1. I or Me

Presentations are about the audience, not you. Replace "I" or "me" with "you," "we," or "us." Focus on your audience. Reward them with expertise and intriguing views about your topic.

Serve your audience actionable items during your presentation, and you'll do well. Your audience will have a harder time listening and engaging if you're self-centered.

2. Sorry if/for

Your presentation is fine. These phrases make you sound insecure and unprepared. Don't pressure the audience to tell you not to apologize. Your audience should focus on your presentation and essential messages.

3. Excuse the Eye Chart, or This slide's busy

Why add this slide if you're utilizing these phrases? If you don't like this slide, change it before presenting. After the presentation, extra data can be provided.

Don't apologize for unclear slides. Hide or delete a broken PowerPoint slide. If a slide is too busy, divide your message across multiple slides or remove the busy slide.

4. Sorry I'm Nervous

Some think admitting their nerves will win over the audience. Nerves are horrible, and even experienced public speakers get nervous.

But nerves usually aren't noticeable, so what's the point of announcing them? Let the audience judge your nervousness. Please don't make it obvious.

5. I'm not a speaker or I've never done this before.

These phrases destroy credibility. People won't listen and will check their phones or computers.

Why present if you use these phrases?

Good speakers aren't necessarily professional public speakers. Be confident in what you say. When you're confident, many people will like your presentation.

6. Our Key Differentiators Are

This term is overused. It seems "salesy," and your "key differentiators" are probably like a competitor's.

The phrase has lost its meaning; instead, say, "what makes us different is..."

7. Next Slide

Many slides or stories? Your presentation needs transitions. They help your viewers understand your argument.

You didn't transition well when you said "next slide." Think about organic transitions.

8. I Didn’t Have Enough Time, or I’m Running Out of Time

The phrase "I didn't have enough time" implies that you didn't care about your presentation. This shows the viewers you rushed and didn't care.

Saying "I'm out of time" shows poor time management. It means you didn't rehearse enough and plan your time well.

9. I've been asked to speak on

This phrase is used to emphasize your importance. This phrase conveys conceit.

When you say this sentence, you tell others you're intelligent, skilled, and appealing. Don't use this phrase; focus on your topic.

10. Moving On, or That's All I Have

These phrases don't consider your transitions or presentation's end. People recall a presentation's beginning and end.

How you end your discussion affects how people remember it. You must end your presentation strongly and use natural transitions.

Conclusion

To recap, here are the 10 phrases to avoid in a presentation: I or me; sorry if or sorry for; excuse the eye chart or this slide's busy; sorry I'm nervous; I'm not a speaker or I've never done this before; our key differentiators are; next slide; I didn't have enough time or I'm running out of time; I've been asked to speak on; and moving on or that's all I have.

We shouldn't make public speaking more difficult than it is. We shouldn't exacerbate a difficult issue. Better public speakers avoid these words and phrases.

“Remember not only to say the right thing in the right place, but far more difficult still, to leave unsaid the wrong thing at the tempting moment.” — Benjamin Franklin, Founding Father

This is a summary. See the original post here.

Patryk Nawrocki

3 years ago

7 things a new UX/UI designer should know

If I could tell my younger self a few rules, they would boost my career.

1. Treat design like medicine; don't get attached.

If a medicine doesn't help, you don't get angry; you try to improve it. Designers often blame others when the design isn't well received, but the rule is the same: we solve users' problems. You're not your design, and neither are they. Be humble with your work because your assumptions will often be wrong and users will behave differently.

2. Consider your design flawed.

Disagree with yourself, then defend your ideas. Most designers forget to dig deeper into a pattern, screen, button, or copywriting. If someone asked, "Have you considered alternatives? How does this design stack up?" would you have an answer? Here's a functional UX checklist to help you make design decisions.

3. Codeable solutions.

If your design requires more developer time, consider whether it's worth spending more money to code something with a small UX impact. Overthinking problems and designing abstract patterns is easy. Sometimes you see something on Dribbble or Behance and try to recreate it, but it's not worth it. Here's my article on it.

4. Communication changes careers

Designers often talk with users, clients, companies, developers, and other designers. How you talk and present yourself can land you a job. Like driving or swimming, practice it. Success requires being outgoing and friendly. If I hadn't said "hello" to a few people, I wouldn't be where I am now.

5. Ignorance of the law is not an excuse.

Copyright and taxation matter. How often have you used an icon without checking its license? If you use someone else's work in your project, the owner can cause you a lot of problems, and paying a lot of money isn't worth it. Spend a few hours reading about copyrights, client agreements, and taxes.

6. Always test your design

If nobody has seen or used my design, it's not finished. Ask friends about prototypes. Testing reveals how wrong your assumptions were. Steve Krug, one of the authorities on this topic, will tell you more about how to do testing.

7. Run workshops

A UX designer's job involves talking to people and figuring out what they need, which is difficult because they usually don't know. Organizing teamwork sessions is a powerful skill, but you must also be a good listener. Your job is to keep the group on track and to help a quiet, introverted developer express their solution. AJ&Smart has more on workshops here.

You might also like

Faisal Khan

3 years ago

4 typical methods of crypto market manipulation

Market fraud

Due to its decentralized and fragmented character, the crypto market has integrity difficulties.

Cryptocurrencies are an immature sector, so market manipulation is a bigger issue. Many studies have attempted to uncover these abuses. CryptoCompare's newest one highlights some of the industry's most typical scams.

Why are these concerns so common in the crypto market? First, even the largest centralized exchanges remain unregulated due to industry immaturity. Second, low liquidity in some market segments makes an attack more harmful. Finally, the absence of market surveillance solutions reduces transparency.

In CryptoCompare's latest exchange benchmark, 62.4% of assessed exchanges had a market surveillance system, although only 18.1% utilised an external solution. To address market integrity, this measure must improve dramatically. Before discussing the report's malpractices, note that this is not a full list of attacks and hacks.

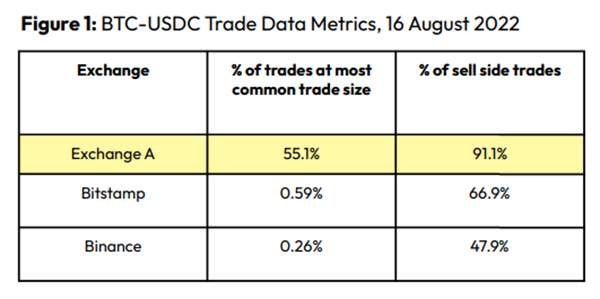

Wash Trading

An investor buys and sells the same asset concurrently to inflate the asset's trading volume and price. This misconduct appears on both centralized and decentralized exchanges. According to CryptoCompare, 23 exchanges had a volume-volatility correlation below 0.1 over the previous 100 days. In August 2022, Exchange A reported $2.5 trillion in artificial and/or erroneous volume, up from $33.8 billion the month before.
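To make the volume-volatility check concrete, here is a minimal sketch of how such a screen could work. The series below are made-up numbers, the function names are hypothetical, and the 0.1 threshold is simply the figure quoted above; the report's exact methodology is not described here, so treat this as an illustration only.

```python
import math


def pearson(xs, ys):
    """Pearson correlation between two equally long series."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two series of equal length, at least 2 points")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        # A perfectly flat series carries no co-movement at all.
        return 0.0
    return cov / math.sqrt(var_x * var_y)


def looks_suspicious(daily_volume, daily_volatility, threshold=0.1):
    """Flag an exchange whose volume barely moves with volatility.

    The threshold is an assumption taken from the figure quoted in the text.
    """
    return pearson(daily_volume, daily_volatility) < threshold


# Hypothetical data: organic volume tends to rise on volatile days.
organic_volume = [10, 12, 30, 11, 25]
organic_volatility = [0.010, 0.012, 0.040, 0.011, 0.030]

# Hypothetical data: volume that ignores volatility entirely.
odd_volume = [1, 2, 3, 4, 5]
odd_volatility = [2, 1, 3, 1, 2]

print(looks_suspicious(organic_volume, organic_volatility))
print(looks_suspicious(odd_volume, odd_volatility))
```

A low correlation is only a screening signal. It can point to artificial volume, but it does not prove it.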

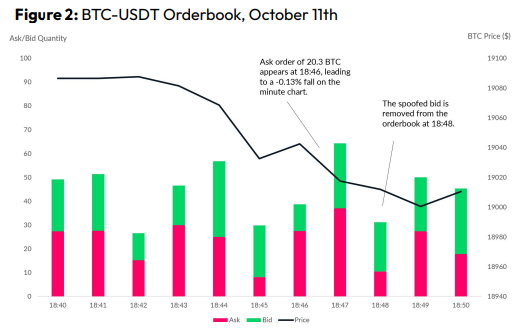

Spoofing

Criminals place fake orders and cancel them before they can be filled. Since manipulators can hide in larger trading volumes, larger exchanges have more spoofing. In one example, a trader placed a 20.8 BTC ask order at $19,036 when BTC was trading at $19,043. BTC declined 0.13% to $19,018 within a minute. At 18:48, the trader canceled the ask order without it being filled.
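The numbers in this example are easy to check. The short sketch below recomputes the price move and the size of the order from the figures quoted above; the variable names are ours and nothing else is assumed.

```python
def pct_change(before, after):
    """Percentage change from one price to another."""
    return (after - before) / before * 100.0


market_before = 19_043.0  # BTC price when the ask was placed
ask_price = 19_036.0      # price of the spoof ask order
market_after = 19_018.0   # BTC price about a minute later
order_size_btc = 20.8     # size of the ask order

move = pct_change(market_before, market_after)
discount = market_before - ask_price
notional = order_size_btc * ask_price

print(f"Price move: {move:.2f}%")
print(f"Ask sat ${discount:.0f} below the market price")
print(f"Order notional: about ${notional:,.0f}")
```

An ask of roughly $396,000 placed $7 under the market price looks like heavy selling pressure, which is the impression a spoofer wants to create before pulling the order.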

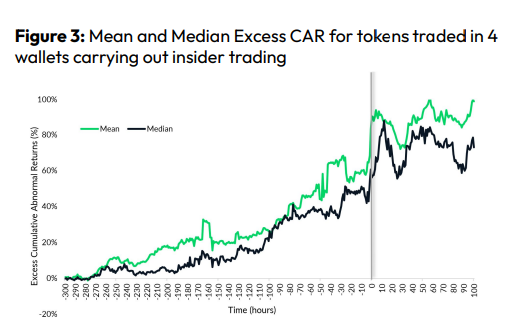

Front-Running

Most cryptocurrency front-running involves insider trading. Traditional stock markets forbid this. Since most digital asset information is public, this is harder. Retail traders could use bots to front-run.

CryptoCompare found digital wallets of people who traded like insiders on exchange listings. The figure below shows excess cumulative abnormal returns (CAR) before a coin listing on an exchange.
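For readers unfamiliar with CAR, here is a minimal sketch using the standard event-study definition: the abnormal return on each day is the asset's return minus a benchmark return, and CAR is the running total of those differences. The daily returns are invented, the function name is hypothetical, and the report may use a different benchmark or model.

```python
from itertools import accumulate


def cumulative_abnormal_returns(asset_returns, benchmark_returns):
    """Running sum of (asset return - benchmark return) per day."""
    if len(asset_returns) != len(benchmark_returns):
        raise ValueError("both series must cover the same days")
    abnormal = [a - b for a, b in zip(asset_returns, benchmark_returns)]
    return list(accumulate(abnormal))


# Hypothetical daily returns in the days before a listing announcement.
coin = [0.02, 0.03, 0.05]
market = [0.01, 0.01, 0.01]

car = cumulative_abnormal_returns(coin, market)
print([round(value, 4) for value in car])
```

A CAR that climbs steadily before the listing becomes public is the kind of pattern that suggests someone traded on the news early.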

Finally, layering is a sequence of spoofs in which successive orders are placed along a ladder of higher (layering offers) or lower (layering bids) prices. The report concludes with recommendations to mitigate market manipulation. Exchange data transparency, market surveillance, and regulatory oversight could reduce manipulative tactics.

Jayden Levitt

3 years ago

How to Explain NFTs to Your Grandmother, in Simple Terms

In simple terms, you probably don’t.

But try. Grandma didn't grow up with Facebook, but she eventually joined.

Perhaps the fear of being isolated outweighed the discomfort of learning the technology.

Grandmas are Facebook likers, sharers, and commenters.

There’s no stopping her.

Not even NFTs. Web3 is currently very complex.

It's difficult to explain what NFTs are, how they work, and why we might use them.

Three explanations.

1. Everything will be ours to own, both physically and digitally.

Why own something you can't touch? What's the point?

Blockchain technology proves digital ownership.

Untouchables need ownership proof. What?

Digital assets reduce friction, save time, and are better for the environment than physical goods.

Many valuable things are intangible. Take the feeling your favorite brands give you: you'll pay obscene prices for clothing that costs pennies to make.

2. NFTs Are Contracts. Agreements Have Value.

Blockchain technology will replace all contracts and intermediaries.

Every insurance contract, deed, marriage certificate, work contract, plane ticket, concert ticket, or sports event ticket will likely be an NFT.

We all have public wallets, like Grandma's Facebook page.

3. Your NFT Purchases Will Be Visible To Everyone.

Everyone can see your public wallet. What you buy says more about you than what you post online.

NFTs issued double as marketing collateral when seen on social media.

While I doubt Grandma knows who Snoop Dogg is, imagine him or another famous person holding your NFT in his public wallet and the attention that could bring to you, your company, or brand.

This Technical Section Is For You

The NFT is a contract; its founders can add value through access, events, tuition, and possibly royalties.

Imagine Elon Musk releasing an NFT that gives access to his network, or to yearly business consultations for three years.

Christ-alive.

It's worth millions.

Benefits like these determine an NFT's value.

Not some unsuspecting schmuck willing to buy your hot potato before it goes to zero. That's been the trend, though.

Overpriced NFTs for low-effort projects created a bubble that has burst.

During a market bubble, you can make money by buying overvalued assets and selling them later for a profit to a "greater fool," according to the Greater Fool Theory.

People are struggling. Some are ruined by collateralized loans and the gold rush.

Finances are ruined.

It's uncomfortable.

The same happened in 2018, during the ICO crash or in 1999/2000 when the dot com bubble burst. But the underlying technology hasn’t gone away.

Stephen Rivers

3 years ago

Because of regulations, the $3 million Mercedes-AMG ONE will not (officially) be available in the United States or Canada.

We asked Mercedes to clarify whether "customers" refers to people who have expressed interest in buying the AMG ONE but haven't made a down payment or paid in full for a production slot, and a company spokesperson told us that it's the latter: "Actual customers for AMG ONE in the United States and Canada."

The Mercedes-AMG ONE has finally arrived in production form after numerous delays. This may be the most complicated and magnificent hypercar ever to hit public roads, but according to Mercedes, those roads will not be in the United States or Canada.

Despite all of the well-deserved excitement around the gorgeous AMG ONE, there was no word on when US customers could expect their cars. Our Editor-in-Chief became aware of this and contacted Mercedes to clarify the matter. Mercedes-AMG's hypercar, with its F1-derived 1,049 HP 1.6-liter V6 engine, will not be homologated for the US market, they've confirmed.

As of today, June 1, 2022, Mercedes has informed its customers in the United States and Canada that the ONE will not be arriving in North America after all. The whole text of the letter is included below, so sit back and let Mercedes explain why we (or they) won't be getting (or seeing) the hypercar. Mercedes claims that all 275 cars it wants to produce have already been reserved, with net pricing in Europe starting at €2.75 million (about US$2.93 million at today's exchange rates), before country-specific taxes.

"The AMG ONE was created with one purpose in mind: to provide a direct technology transfer of the World Championship-winning Mercedes-AMG Petronas Formula 1 E PERFORMANCE drive unit to the road." It's the first time a complete Formula 1 drive unit has been integrated into a road car.

"Every component of the AMG ONE has been engineered to redefine high performance, with 1,000+ horsepower, four electric motors, and a blazing top speed of more than 217 mph. While the engine's beginnings are in competition, continuous research and refinement has left us with a difficult choice for the US market.

"In order to preserve the distinctive nature of its F1 powerplant, we determined that following US road requirements would considerably damage its performance and overall driving character. We've made the strategic choice to make the automobile available for road use in Europe, where it complies with all necessary rules."

If this is the first time US customers have heard about it, which it shouldn't be, we understand if it's a bit off-putting. The AMG ONE could very well be Mercedes' final internal combustion hypercar of this type.

Nonetheless, we wouldn't be surprised if a few make their way to the United States via the federal government's "Show or Display" exemption. This rule permits the importation of automobiles such as the AMG ONE, but limits them to 2,500 miles of road use per year.

The McLaren Speedtail, the Koenigsegg One:1, and the Bugatti EB110 are among the automobiles that have been imported under this special rule. We just hope we don't have to wait too long to see the ONE in the United States.