
Matthew Royse

3 years ago

7 ways to improve public speaking

How to overcome public speaking fear and give a killer presentation

"Public speaking is people's biggest fear, according to studies. Death's second. The average person is better off in the casket than delivering the eulogy." — American comedian, actor, writer, and producer Jerry Seinfeld

People fear public speaking, according to research. Public speaking can be intimidating.

Most professions require public speaking, whether to 5, 50, 500, or 5,000 people. Your career will require many presentations, whether in a small meeting, a company update, or an industry conference.

You can improve your public speaking skills. You can reduce your anxiety, improve your performance, and feel more comfortable speaking in public.

“If I returned to college, I'd focus on writing and public speaking. Effective communication is everything.” — 38th president Gerald R. Ford

You can deliver a great presentation despite your fear of public speaking. There are ways to stay calm while speaking and become a more effective public speaker.

Here are seven tips to improve your public speaking today. Let's help you overcome your fear (no pun intended).

1. Know your audience

"You are not being judged; the value of what you are bringing to the audience is being judged." — Entrepreneur, author, and speaker Seth Godin

Understand your audience before you prepare a presentation. Learn what they care about and what they find useful.

Your presentation may depend on where you're speaking. A classroom is different from a company meeting.

Determine your audience before developing your main messages. Learn everything about them. Knowing your audience helps you choose the right words, information (thought leadership vs. technical), and motivational message.

2. Be Observant

Observe others' speeches to improve your own. Watching free TED Talks on education, business, science, technology, and creativity can teach you a lot about public speaking.

What worked and what didn't?

What would you change?

What were their strengths?

How interesting or dull was the topic?

Note their techniques to learn more. Studying the best public speakers will amaze you.

Learn how their stage presence helped them communicate and captivated their audience. Please note their pauses, humor, and pacing.

3. Practice

"A speaker should prepare based on what he wants to learn, not say." — Author, speaker, and pastor Todd Stocker

Practice makes perfect when it comes to public speaking. By repeating your presentation, you can find your comfort zone.

When you've practiced your presentation many times, you'll feel natural and confident giving it. Preparation helps overcome fear and anxiety. Review notes and important messages.

When you know the material well, you can explain it better. Your presentation preparation starts before you go on stage.

Keep a notebook or journal of ideas, quotes, and examples. More content means better audience-targeting.

4. Self-record

Videotape your speeches. Check yourself. Body language, hands, pacing, and vocabulary should be reviewed.

The best public speakers evaluate their performance to improve.

Write down what you did best, what you could improve and what you should stop doing after watching a recording of yourself. Seeing yourself can be unsettling. This is how you improve.

5. Remove text from slides

"Humans can't read and comprehend screen text while listening to a speaker. Therefore, lots of text and long, complete sentences are bad, bad, bad.” —Communications expert Garr Reynolds

Presentation slides shouldn't have too much text. 100-slide presentations bore the audience. Your slides should preview what you'll say to the audience.

Use slides to emphasize your main point visually.

If you add text, use at least 40-point font. Your slides shouldn't require squinting to read. You want people to watch you, not your slides.

6. Body language

"Body language is powerful. We had body language before speech, and 80% of a conversation is read through the body, not the words." — Dancer, writer, and broadcaster Deborah Bull

Nonverbal communication dominates. Our bodies speak louder than words. Don't fidget, rock, lean, or pace.

Relax your body to communicate clearly and without distraction through nonverbal cues. Public speaking anxiety can cause tense body language.

Maintain posture and eye contact. Don’t put your hands in your pockets, cross your arms, or stare at your notes. Make purposeful hand gestures that match what you're saying.

7. Beginning/ending Strong

The beginning and the end are the most memorable parts. Your presentation must start and end strong. To engage your audience, don't sound robotic.

Begin with a story, stat, or quote. Conclude with a summary of key points. Focus on how you will start and end your speech.

You should memorize your presentation's opening and closing, well enough that they sound natural. Excellent presentations start and end strong because people won't remember the middle.

Bringing It All Together

Those are seven simple yet powerful ways to improve your public speaking: know your audience, study others, prepare and rehearse, record yourself, remove as much text as possible from slides, mind your body language, and start and end strong.

Follow these tips to improve your speaking and audience communication. Prepare, practice, and learn from great speakers to reduce your fear of public speaking.

"Speaking to one person or a thousand is public speaking." — Vocal coach Roger Love

Hudson Rennie

3 years ago

My Work at a $1.2 Billion Startup That Failed

Sometimes doing everything correctly isn't enough.

In 2020, I could fix my life.

After failing to start a business, I owed $40,000 and had no work.

A $1.2 billion startup on the cusp of going public pulled me up.

Ironically, it was getting ready for an epic fall — with the world watching.

Life sometimes helps. Without a base, even the strongest fall. A corporation that did everything right failed 3 months after going public.

First-row view.

Adore was created by Apple alumni.

Out of respect, I've altered the company and employees' names in this account, despite their failure.

Although, since it was a publicly traded company, its identity may become obvious.

We’ll call it “Adore” — a revolutionary concept in retail shopping.

Two Apple execs established Adore in 2014 with a focus on people-first purchasing.

Jon and Tim:

The concept for the stylish Apple retail locations you see today was developed by retail expert Jon Swanson, who collaborated closely with Steve Jobs.

Tim Cruiter is a graphic designer who produced the recognizable bouncing lamp video that appears at the start of every Pixar film.

The dynamic duo realized their vision.

“What if you could combine the convenience of online shopping with the confidence of the conventional brick-and-mortar store experience?”

Adore's mobile store concept combined traditional retail with online shopping.

Adore brought joy to 70+ cities and 4 countries over 7 years, including the US, Canada, and the UK.

Being employed on the ground floor, with world dominance and IPO on the horizon, was exciting.

I started as an Adore Expert.

I delivered cell phones, helped consumers set them up, and sold add-ons.

As the company grew, I became a Virtual Learning Facilitator and trained new employees across North America using Zoom.

In this capacity, I gained corporate insider knowledge. I worked with the creative team and Jon and Tim.

It's where I saw cracks in the company's foundation. Despite appearances, investors were concerned.

The business strategy was ground-breaking.

Even after seeing my employee stocks fall from a home down payment to $0 (when Adore filed for bankruptcy), it's hard to pinpoint what went wrong.

Solid business model, well-executed.

Jon and Tim's chase for public funding ended in glory.

Here’s the business model in a nutshell:

Buying cell phones is cumbersome. You have two choices:

Buy online, without knowing what plan you need or how to operate your device.

Go into a store, which can be inconvenient and stressful.

Apple, AT&T, and Rogers offered Adore as a free delivery add-on. Customers could:

Have their phone delivered by UPS or Canada Post in 1-2 weeks.

Alternatively, arrange for a person to visit them the same day (or sometimes even the same hour) to help them set up their phone and show them how to use it (transferring contacts, switching the SIM card, etc.).

Each Adore Expert brought a van with extra devices and accessories to customers.

Happy customers.

Here’s how Adore and its partners made money:

Adore's partners appreciated sending Experts to consumers' homes, since the visits improved customer satisfaction, raised the average sale, and reduced device returns.

Telecom enterprises have low customer satisfaction. The average NPS is 30/100. Adore's global NPS was 80.

Adore made money by:

a set cost for each delivery

commission on sold warranties and extras

Consumer product applications seemed infinite.

A proprietary scheduling system (“The Adore App”) allowed for same-day, even same-hour deliveries.

It set Adore apart.

They treated staff generously by:

Stock options

Health benefits

Sales incentives

High hourly pay

Experts set their own four-day workweeks.

Being hired early felt like joining Uber, Netflix, or Tesla. We hoped the company's stocks would rise.

Exciting times.

I smiled as I greeted more than 1,000 new staff.

I spent a decade in retail before joining Adore. I needed a change.

After a leap of faith, I needed a lifeline. So, I applied for retail sales jobs in the spring of 2019.

The universe typically offers you what you want after you accept what you need. I needed a job to settle my debt and reach $0 again.

And the universe listened.

After being hired as an Adore Expert, I became a Virtual Learning Facilitator. Enough said.

After weeks of economic damage from the pandemic, this job let me work from home. It also taught me excellent business skills.

I was active in brainstorming, onboarding new personnel, and expanding communication as we grew.

This job gave me vital skills and a regular paycheck during the pandemic.

It wasn’t until January of 2022 that I left of my own accord to try to work for myself again. This time, it’s going much better.

Adore was perfect. We valued:

Connection

Discovery

Empathy

Everything we did centered on compassion, and we held frequent Justice Calls to discuss diversity and work culture.

The last day of onboarding typically ended in tears as employees felt like they'd found a home, as I had.

Like all nice things, the wonderful vibes ended.

First indication of distress

My first day at the workplace was great.

It was fun and intuitive, and they wanted creative individuals, not salespeople.

While sales were important, the company's vision was more important.

“To deliver joy through life-changing mobile retail experiences.”

Thorough, forward-thinking training. We had a module on intuition. It gave us role ownership.

We were flown cross-country for training, gave feedback, and felt like we made a difference. Multiple contacts responded immediately and enthusiastically.

The atmosphere was genuine.

Making money was secondary, though. Incredible service was a priority.

Jon and Tim answered new hires' questions during Zoom calls during onboarding. CEOs seldom meet new hires this way, but they seemed to enjoy it.

All appeared well.

But in late 2021, things started changing.

Adore's leadership changed after its IPO. The focus shifted from core values to sales maximization. Communication broke down, and we were forced to fend for ourselves.

The training wheels came off.

It got tougher to get direction from those above me, and new employees told me their roles weren't as advertised.

External money-focused managers were hired.

Instead of creative types, we hired salespeople.

With a new focus on numbers, Adore's uniqueness began to crumble.

Via Zoom, hundreds of workers were let go.

So.

Early in 2022, mass Zoom firings were trending. A CEO firing 900 workers over Zoom went viral.

Adore was special to me, but it became a headline.

On 30 June 2022, Vice's Motherboard published "Watch as Adore's CEO Fires Hundreds."

It described a leaked video of Jon Swanson laying off all staff in Canada and the UK.

They called it a “notice of redundancy”.

The corporation couldn't pay its employees.

I loved Adore's underlying ideals, among other things. We called clients Adorers and sold solutions, not add-ons.

But, like anything, a company is only as strong as its weakest link. And obviously, the people-first focus wasn’t making enough money.

There were signs. The expansion was presumably a race against time and money.

Adore finally declared bankruptcy.

Adore declared bankruptcy 3 months after going public. It happened in waves, like any large-scale fall.

First, key players left.

Then, communication deteriorated.

Lastly, the corporate culture disintegrated.

Six months after leaving Adore, I received a letter in the mail from a law firm. It was about my stocks.

Adore filed Chapter 11. I had to sue to collect my worthless investments.

I hoped those stocks would be valuable someday. Nope.

Sad, I sighed.

$1.2 billion firm gone.

I left the workplace 3 months before starting a writing business. Despite being mediocre, I'm doing fine.

I got up as Adore fell.

Finally, can we scale kindness?

I trust my gut. Changes at Adore made me leave before it sank.

Adore's unceremonious slide from a top startup to bankruptcy is astonishing to me.

The company did everything perfectly, in my opinion.

It was first to market.

It provided excellent service.

It paid its staff handsomely.

It was responsible and attentive to criticism.

The company wasn't led by an egotistical eccentric. The team had centuries of cumulative experience in the space.

I'm optimistic about the future of work culture, but is compassion scalable?

Sad NoCoiner

3 years ago

Two Key Money Principles You Should Understand But Were Never Taught

Prudence is advised. Be debt-free. Be frugal. Spend less.

This advice sounds nice, but it rarely works.

Most people never learn these two money rules. Both approaches will impact how you see personal finance.

They may safeguard you from inflation or from being unable to save money.

Let’s dive in.

#1: Making long-term debt your ally

High-interest debt hurts consumers. Many credit cards carry 25% yearly interest (or more), so always pay on time. Otherwise, you’re losing money.

Some low-interest debt is good. Especially when buying an appreciating asset with borrowed money.

Inflation helps you.

If you borrow $800,000 at 3% interest and invest it at 7%, you'll make $32,000 a year: the 4% spread between the two rates.

As money loses value, fixed payments get cheaper. Your assets' value and cash flow rise.

The never-in-debt crowd doesn't know this. They lose money paying off mortgages and low-interest loans early when they could have bought assets instead.
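The arithmetic behind this rule can be sketched in a few lines of Python. This is a rough illustration, not a financial model: the function names, the $2,000 payment, and the 3% inflation rate are assumptions made up for the example, and it ignores taxes, fees, and the fact that investment returns vary from year to year.

```python
def annual_spread_profit(principal: float, borrow_rate: float, invest_rate: float) -> float:
    """Yearly gain from investing borrowed money at a higher rate than the loan costs."""
    return principal * (invest_rate - borrow_rate)


def real_value_of_payment(payment: float, inflation_rate: float, years: int) -> float:
    """Value, in today's money, of a fixed nominal payment made `years` from now."""
    return payment / (1 + inflation_rate) ** years


# The example from the text: borrow $800,000 at 3%, invest it at 7%.
profit = annual_spread_profit(800_000, 0.03, 0.07)
print(f"Annual profit from the 4% spread: ${profit:,.0f}")  # $32,000

# Hypothetical figures: a fixed $2,000 payment under an assumed 3% yearly inflation.
for years in (0, 10, 20):
    today_value = real_value_of_payment(2_000, 0.03, years)
    print(f"After {years} years the payment feels like ${today_value:,.2f} today")
```

The second function shows why fixed payments get cheaper: the nominal amount never changes, but each year of inflation shrinks what it is worth in today's money.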

#2: How To Buy Or Build Assets To Make Inflation Irrelevant

Dozens of studies demonstrate that real wage growth is flat; $2.50 in 1964 had the same purchasing power as $22.65 now.

These reports never give solutions unless they're selling gold.

But there is one.

Assets beat inflation.

$100 invested into the S&P 500 would have an inflation-adjusted return of 17,739.30%.

Likewise, you can build assets from nothing. Doing so is easy and quick. The returns can boost your income by 10% or more.

The people who obsess over inflation inadvertently make the problem worse for themselves. They wait for The Big Crash to buy assets. Or they moan about debt clocks and spending bills instead of seeking a solution.

Conclusion

Being ultra-prudent is like playing golf with a putter to avoid hitting the ball into the water. Sure, you might not slice a drive into the pond. But, you aren’t going to play well either. Or have very much fun.

Money has rules.

Avoiding debt or investment risks will limit your rewards. Long-term, being too cautious hurts your finances.

Disclaimer: This article is for entertainment purposes only. It is not financial advice; always do your own research.


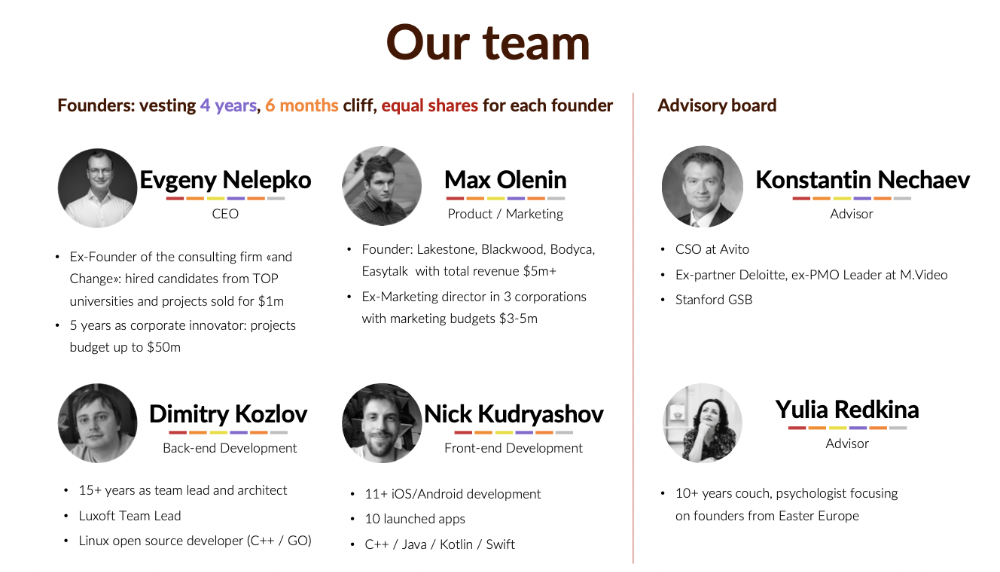

Evgenii Nelepko

3 years ago

My 3 biggest errors as a co-founder and CEO

Reflections on the closed company Hola! Dating app

I'll discuss my fuckups as an entrepreneur and CEO. All of them relate to the dating app Hola!, which I co-founded and ran as CEO.

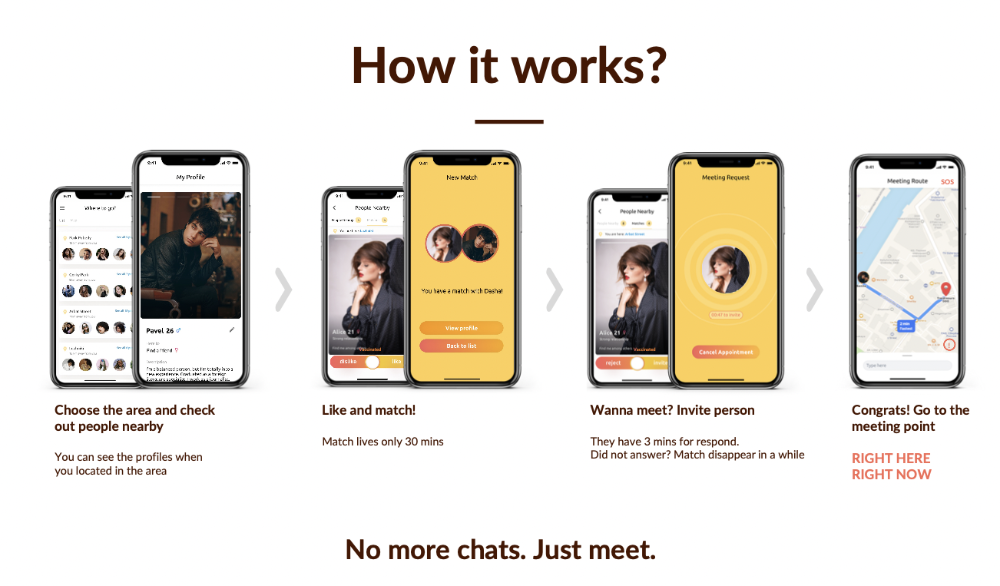

Spring 2021 was when we started. Two techies and two non-techies created a dating app. It combined Pokémon Go and Tinder.

Online dating is a business, and it takes two weeks from a like to a date. We asked online dating app users whether they had met anyone offline in the past year.

75% replied yes, 50% sometimes, 25% usually.

Offline dating is popular, yet people have concerns.

Men are reluctant to make mistakes in front of others.

Women are curious about the background of everyone who approaches them.

We designed unique mechanics that let people date after a match. No endless chitchat. Women would be safe while men felt like cowboys.

I want to highlight three mistakes that led to the founders' estrangement.

This detachment ultimately led to us shutting down the company.

1. The wrong technology stack

Situation

Instead of generating a faster MVP and designing an app in a universal stack for iOS and Android, I argued we should pilot the app separately for iOS and Android. Technical founders' expertise made this possible.

Self-reflection

It was a mistaken strategy. We lost time and resources developing two apps at once. We chose iOS first since it's more profitable. Apple removed the app after the release, citing Guideline 4.3 (Spam). After 4 months, we had nothing. We had a long way to go to get the app on Android and the Store.

I suggested creating a uniform platform for the company's growth. This makes parallel product development easier. The strategist's lack of experience and knowledge made it a piece of crap.

What would I have changed if I could?

We should have built on a universal stack, starting with Android. I expected Apple to have issues with a dating app.

Our approach should have been to launch something and subsequently improve it, but prejudice won.

The lesson

Discuss the IT stack with your CTO. It saves time and money. Choose the easiest MVP method.

2. A tardy search for investments

Situation

Though the universe and other founders encouraged me to locate investors first, I started pitching when we almost had an app.

When angels arrived, it was time to close. The app was banned, war broke out, I left the country, and the other co-founders stayed. We had no savings.

Self-reflection

I loved interviewing users. I'm proud of having done 1,000 interviews. I wanted to understand people's pain points and improve the product.

Interview results no longer affected the product. I was terrified to start pitching. I filled out accelerator applications and redid my presentation. You must go through that so you won't be terrified later.

What would I have changed if I could?

Get an external or internal mentor to help me with my first pitch as soon as possible. I'd be supported if criticized. He'd cheer with me if there was enthusiasm.

In 99% of cases, I'm comfortable jumping into the unknown, but there are exceptions. The mentor's encouragement would have prompted me to act sooner.

The lesson

Begin fundraising immediately. Months may pass. Show investors your pre-MVP project. Draw inferences from feedback.

3. Role ambiguity

Situation

My technical co-founders were also part-time lead developers, which produced communication issues. As co-founders, we communicated well and recognized the problems. Stakes, vesting, target markets, and approach were agreed upon.

We were behind schedule. Technical debt and strategic gap grew.

Bi-daily and weekly reviews didn't help. Each time, there were explanations. Inside, I was freaking out.

Self-reflection

I am a fairly easy person to talk to. I always try to stick to agreements; otherwise, my head gets stuffed with unnecessary information, interpretations, and emotions.

Sit down -> talk -> decide -> do -> evaluate the results. Repeat it.

If I don't get detailed comments, I start ruining everyone's mood. If there's a systematic violation of agreements without a good justification, I won't join the project or I'll end the collaboration.

What would I have changed if I could?

This is where it’s scariest to draw conclusions. Probably the most logical thing would have been not to start the project as we started it. But that was already a completely different project. So I would not have done anything differently and would have failed again.

But I drew conclusions for the future.

The lesson

First-time founders should find an adviser or team coach for a strategic session. It helps split the roles and responsibilities.

Tim Denning

3 years ago

Elon Musk’s Rich Life Is a Nightmare

I'm sure you haven't read about Elon's other side.

Elon divorced badly.

Nobody's surprised.

Imagine you're a parent and your partner isn't home year-round. What happens next?

That’s what happened to YOLO Elon.

He can do anything. He can intervene in wars, shoot his mouth off, bang anyone he wants, avoid tax, make cool tech, buy anything his ego desires, and live anywhere exotic.

Few know his billionaire backstory. I'll tell you so you don't worship his lifestyle. It’s a cult.

Only his career succeeds. His life is a nightmare otherwise.

A psychopath's schedule

Elon has said he works 120-hour weeks.

As he told the reporter about his job, he choked up, which was unusual for him.

His crazy workload and lack of sleep led him to snap at innocent Wall Street analysts. Later, he apologized.

In the same interview, he admitted he hadn't taken more than a week off since 2001, when he was bedridden with malaria. Elon only stays home after a near-death experience.

He's rarely outside.

Elon says he sometimes works 3 or 4 days straight.

He admits his crazy work schedule has cost him time with his kids and friends.

Elon's a slave

Elon's birthday description made him emotional.

Elon worked his entire birthday.

"No friends, nothing," he said, stuttering.

His brother's wedding in Catalonia was 48 hours after his birthday. That meant flying there from Tesla's factory prison.

He arrived two hours before the big moment, barely enough time to eat and change, let alone see his brother.

Elon had to leave after the bouquet was tossed to a crowd of billionaire lovers. He missed his brother's first dance with his wife.

Shocking.

He went straight to Tesla's prison.

The looming health crisis

Elon was asked if overworking affected his health.

Not great. Friends are worried.

Now you know why Elon tweets dumb things. Working so hard has probably caused him mental health issues.

Mental illness removed my reality filter. You do stupid things because you're tired.

Astronauts pelted Elon

Elon's overwork isn't the first time his life has made him emotional.

When asked about Neil Armstrong and Gene Cernan criticizing his SpaceX missions, he got emotional. They are Elon's heroes.

They're why he started the company, and they mocked his work. In another interview, we see how Elon’s business obsession has knifed him in the heart.

Once you have a company, you must feed, nurse, and care for it, even if it destroys you.

"Yep," Elon says, tearing up.

In the same interview, he's asked how Tesla survived the 2008 recession. Elon stopped the interview because he was crying. When Tesla and SpaceX nearly went bankrupt in 2008, he almost had a nervous breakdown. He called them his "children."

All the time, he's risking everything.

Jack Raines explains best:

Too much money makes you a slave to your net worth.

Elon's emotions are admirable. It's one of the few times he seems human, not like an alien cyborg.

Stop idealizing Elon's lifestyle

Building a side business that becomes a billion-dollar unicorn startup is a nightmare.

"Billionaire" means financially wealthy but otherwise broke. A rich life includes more than business and money.


Alex Mathers

2 years ago

How to Produce Enough for People to Not Neglect You

The internet's fantastic, right?

We've never had a better way to share our creativity.

I can now draw on my iPad and tweet or Instagram it to thousands. I may get some likes.

With such a great, free tool, you're not alone.

Millions more bright-eyed artists are sharing their work online.

The issue is getting innovative work noticed, not sharing it.

In a world where creators want attention, attention is valuable.

We build for attention.

Attention helps us establish a following, make money, get notoriety, and make a difference.

Most of us require attention to stay sane while creating wonderful things.

I know how hard it is to work hard and receive few views.

How do we receive more attention, more often, in a sea of talent?

Advertising and celebrity endorsements are options. These may work temporarily.

To attract true, organic, and long-term attention, you must create in high quality, high volume, and consistency.

To adapt Steve Martin's "Be so good they can't ignore you" (with a nod to Dan Norris, whose great book Create or Hate provided the reminder):

Create a lot.

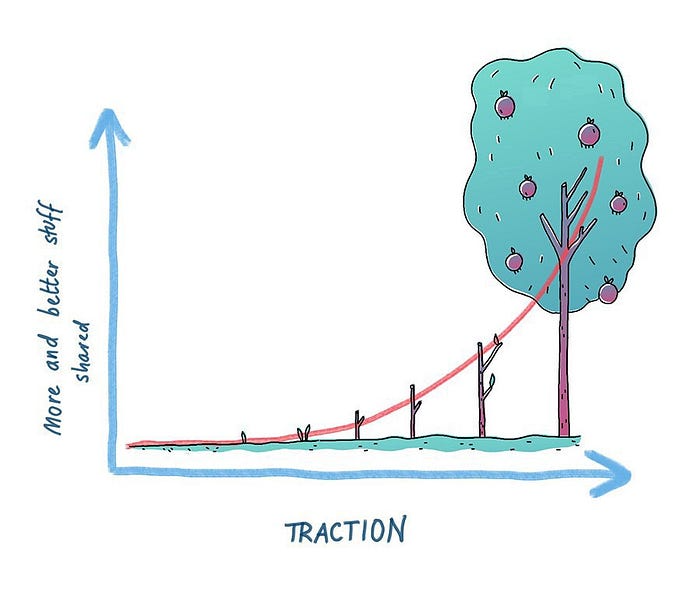

Eventually, your effort will gain traction.

Traction shows your work's influence.

Traction is when your product sells more. Traction is exponential user growth. Your work is shared more.

No matter how good your work is, it will have minimal impact on the world at first.

Your work can eventually make a dent, or even a puncture. Every day, people work to make their dent.

To achieve this tipping point, you must consistently produce exceptional work.

Expect traction after hundreds of outputs.

Dilbert creator Scott Adams says repetition persuades. If you don't stop, you can persuade practically anyone of anything.

Volume lends believability. So make more.

I worked as an illustrator for at least a year and a half without any recognition. After 150 illustrations on iStockphoto, my work started selling.

With 350 illustrations on iStock, I started getting decent client commissions.

Producing often will improve your craft and draw attention.

It's the only way to succeed. More creation means better results and greater attention.

Austin Kleon says you can improve your skill in relative anonymity before you become famous. Before obtaining traction, generate a lot and become excellent.

Most artists, even excellent ones, don't create consistently enough to get traction.

It may hurt. For makers who don't love and flow with their work, it's extremely difficult.

Your work must bring you to life.

To generate so much that others can't ignore you, decide what you'll accomplish every day (or most days).

Commit and be patient.

Prepare for zero-traction.

Anticipating this will help you persevere and create.

My online guru Grant Cardone says: Anything worth doing is worth doing every day.

Do.