Clean API Call With React Hooks

| Photo by Juanjo Jaramillo on Unsplash |

Calling APIs is the most common thing to do in any modern web application. When it comes to talking with an API then most of the time we need to do a lot of repetitive things like getting data from an API call, handling the success or error case, and so on.

When calling tens of hundreds of API calls we always have to do those tedious tasks. We can handle those things efficiently by putting a higher level of abstraction over those barebone API calls, whereas in some small applications, sometimes we don’t even care.

The problem comes when we start adding new features on top of the existing features without handling the API calls in an efficient and reusable manner. In that case for all of those API calls related repetitions, we end up with a lot of repetitive code across the whole application.

In React, we have different approaches for calling an API. Nowadays mostly we use React hooks. With React hooks, it’s possible to handle API calls in a very clean and consistent way throughout the application in spite of whatever the application size is. So let’s see how we can make a clean and reusable API calling layer using React hooks for a simple web application.

I’m using a code sandbox for this blog which you can get here.

import "./styles.css";

import React, { useEffect, useState } from "react";

import axios from "axios";

export default function App() {

const [posts, setPosts] = useState(null);

const [error, setError] = useState("");

const [loading, setLoading] = useState(false);

useEffect(() => {

handlePosts();

}, []);

const handlePosts = async () => {

setLoading(true);

try {

const result = await axios.get(

"https://jsonplaceholder.typicode.com/posts"

);

setPosts(result.data);

} catch (err) {

setError(err.message || "Unexpected Error!");

} finally {

setLoading(false);

}

};

return (

<div className="App">

<div>

<h1>Posts</h1>

{loading && <p>Posts are loading!</p>}

{error && <p>{error}</p>}

<ul>

{posts?.map((post) => (

<li key={post.id}>{post.title}</li>

))}

</ul>

</div>

</div>

);

}

I know the example above isn’t the best code but at least it’s working and it’s valid code. I will try to improve that later. For now, we can just focus on the bare minimum things for calling an API.

Here, you can try to get posts data from JsonPlaceholer. Those are the most common steps we follow for calling an API like requesting data, handling loading, success, and error cases.

If we try to call another API from the same component then how that would gonna look? Let’s see.

500: Internal Server Error

Now it’s going insane! For calling two simple APIs we’ve done a lot of duplication. On a top-level view, the component is doing nothing but just making two GET requests and handling the success and error cases. For each request, it’s maintaining three states which will periodically increase later if we’ve more calls.

Let’s refactor to make the code more reusable with fewer repetitions.

Step 1: Create a Hook for the Redundant API Request Codes

Most of the repetitions we have done so far are about requesting data, handing the async things, handling errors, success, and loading states. How about encapsulating those things inside a hook?

The only unique things we are doing inside handleComments and handlePosts are calling different endpoints. The rest of the things are pretty much the same. So we can create a hook that will handle the redundant works for us and from outside we’ll let it know which API to call.

500: Internal Server Error

Here, this request function is identical to what we were doing on the handlePosts and handleComments. The only difference is, it’s calling an async function apiFunc which we will provide as a parameter with this hook. This apiFunc is the only independent thing among any of the API calls we need.

With hooks in action, let’s change our old codes in App component, like this:

500: Internal Server Error

How about the current code? Isn’t it beautiful without any repetitions and duplicate API call handling things?

Let’s continue our journey from the current code. We can make App component more elegant. Now it knows a lot of details about the underlying library for the API call. It shouldn’t know that. So, here’s the next step…

Step 2: One Component Should Take Just One Responsibility

Our App component knows too much about the API calling mechanism. Its responsibility should just request the data. How the data will be requested under the hood, it shouldn’t care about that.

We will extract the API client-related codes from the App component. Also, we will group all the API request-related codes based on the API resource. Now, this is our API client:

import axios from "axios";

const apiClient = axios.create({

// Later read this URL from an environment variable

baseURL: "https://jsonplaceholder.typicode.com"

});

export default apiClient;

All API calls for comments resource will be in the following file:

import client from "./client";

const getComments = () => client.get("/comments");

export default {

getComments

};

All API calls for posts resource are placed in the following file:

import client from "./client";

const getPosts = () => client.get("/posts");

export default {

getPosts

};

Finally, the App component looks like the following:

import "./styles.css";

import React, { useEffect } from "react";

import commentsApi from "./api/comments";

import postsApi from "./api/posts";

import useApi from "./hooks/useApi";

export default function App() {

const getPostsApi = useApi(postsApi.getPosts);

const getCommentsApi = useApi(commentsApi.getComments);

useEffect(() => {

getPostsApi.request();

getCommentsApi.request();

}, []);

return (

<div className="App">

{/* Post List */}

<div>

<h1>Posts</h1>

{getPostsApi.loading && <p>Posts are loading!</p>}

{getPostsApi.error && <p>{getPostsApi.error}</p>}

<ul>

{getPostsApi.data?.map((post) => (

<li key={post.id}>{post.title}</li>

))}

</ul>

</div>

{/* Comment List */}

<div>

<h1>Comments</h1>

{getCommentsApi.loading && <p>Comments are loading!</p>}

{getCommentsApi.error && <p>{getCommentsApi.error}</p>}

<ul>

{getCommentsApi.data?.map((comment) => (

<li key={comment.id}>{comment.name}</li>

))}

</ul>

</div>

</div>

);

}

Now it doesn’t know anything about how the APIs get called. Tomorrow if we want to change the API calling library from axios to fetch or anything else, our App component code will not get affected. We can just change the codes form client.js This is the beauty of abstraction.

Apart from the abstraction of API calls, Appcomponent isn’t right the place to show the list of the posts and comments. It’s a high-level component. It shouldn’t handle such low-level data interpolation things.

So we should move this data display-related things to another low-level component. Here I placed those directly in the App component just for the demonstration purpose and not to distract with component composition-related things.

Final Thoughts

The React library gives the flexibility for using any kind of third-party library based on the application’s needs. As it doesn’t have any predefined architecture so different teams/developers adopted different approaches to developing applications with React. There’s nothing good or bad. We choose the development practice based on our needs/choices. One thing that is there beyond any choices is writing clean and maintainable codes.

More on Web3 & Crypto

Marco Manoppo

3 years ago

Failures of DCG and Genesis

Don't sleep with your own sister.

70% of lottery winners go broke within five years. You've heard the last one. People who got rich quickly without setbacks and hard work often lose it all. My father said, "Easy money is easily lost," and a wealthy friend who owns a family office said, "The first generation makes it, the second generation spends it, and the third generation blows it."

This is evident. Corrupt politicians in developing countries live lavishly, buying their third wives' fifth Hermès bag and celebrating New Year's at The Brando Resort. A successful businessperson from humble beginnings is more conservative with money. More so if they're atom-based, not bit-based. They value money.

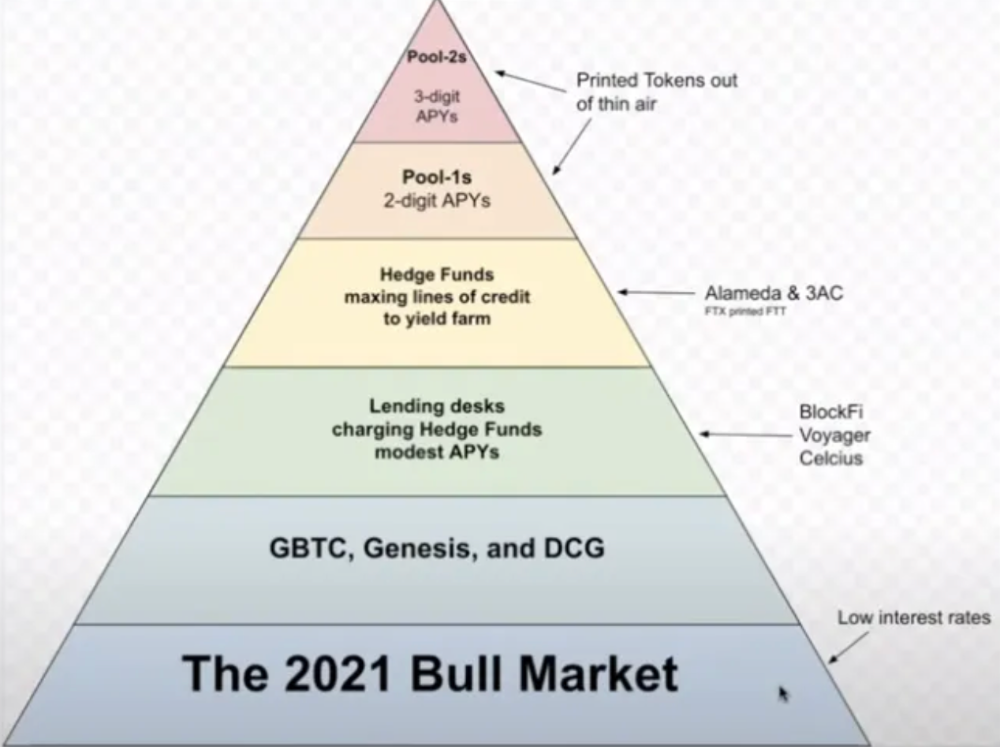

Crypto can "feel" easy. I have nothing against capital market investing. The global financial system is shady, but that's another topic. The problem started when those who took advantage of easy money started affecting other businesses. VCs did minimal due diligence on FTX because they needed deal flow and returns for their LPs. Lenders did minimum diligence and underwrote ludicrous loans to 3AC because they needed revenue.

Alameda (hence FTX) and 3AC made "easy money" Genesis and DCG aren't. Their businesses are more conventional, but they underestimated how "easy money" can hurt them.

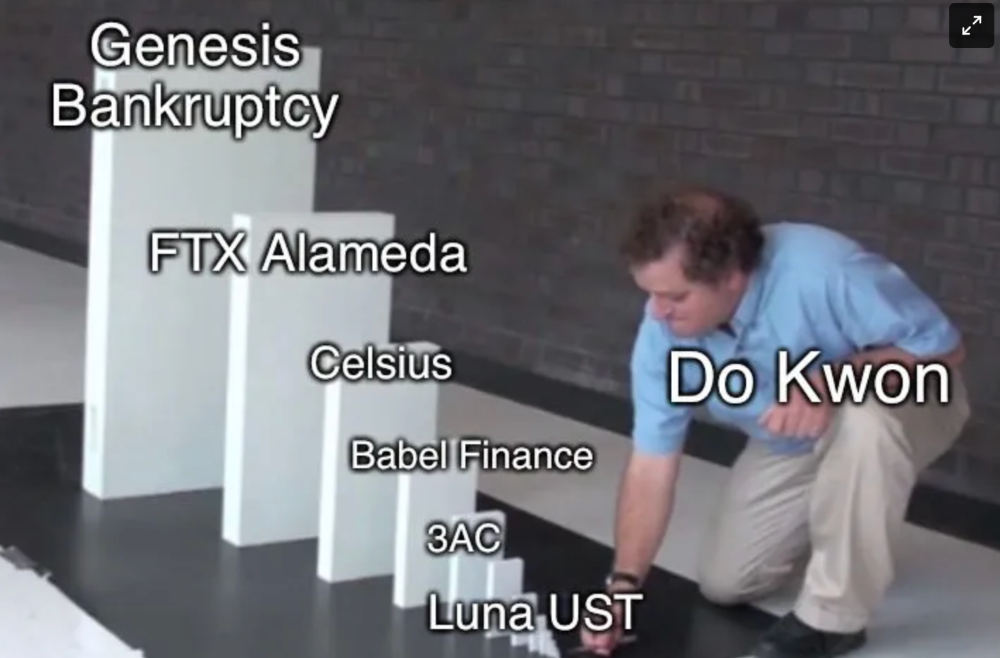

Genesis has been the victim of easy money hubris and insolvency, losing $1 billion+ to 3AC and $200M to FTX. We discuss the implications for the broader crypto market.

Here are the quick takeaways:

Genesis is one of the largest and most notable crypto lenders and prime brokerage firms.

DCG and Genesis have done related party transactions, which can be done right but is a bad practice.

Genesis owes DCG $1.5 billion+.

If DCG unwinds Grayscale's GBTC, $9-10 billion in BTC will hit the market.

DCG will survive Genesis.

What happened?

Let's recap the FTX shenanigan from two weeks ago. Shenanigans! Delphi's tweet sums up the craziness. Genesis has $175M in FTX.

Cred's timeline: I hate bad crisis management. Yes, admitting their balance sheet hole right away might've sparked more panic, and there's no easy way to convey your trouble, but no one ever learns.

By November 23, rumors circulated online that the problem could affect Genesis' parent company, DCG. To address this, Barry Silbert, Founder, and CEO of DCG released a statement to shareholders.

A few things are confirmed thanks to this statement.

DCG owes $1.5 billion+ to Genesis.

$500M is due in 6 months, and the rest is due in 2032 (yes, that’s not a typo).

Unless Barry raises new cash, his last-ditch efforts to repay the money will likely push the crypto market lower.

Half a year of GBTC fees is approximately $100M.

They can pay $500M with GBTC.

With profits, sell another port.

Genesis has hired a restructuring adviser, indicating it is in trouble.

Rehypothecation

Every crypto problem in the past year seems to be rehypothecation between related parties, excessive leverage, hubris, and the removal of the money printer. The Bankless guys provided a chart showing 2021 crypto yield.

In June 2022, @DataFinnovation published a great investigation about 3AC and DCG. Here's a summary.

3AC borrowed BTC from Genesis and pledged it to create Grayscale's GBTC shares.

3AC uses GBTC to borrow more money from Genesis.

This lets 3AC leverage their capital.

3AC's strategy made sense because GBTC had a premium, creating "free money."

GBTC's discount and LUNA's implosion caused problems.

3AC lost its loan money in LUNA.

Margin called on 3ACs' GBTC collateral.

DCG bought GBTC to avoid a systemic collapse and a larger discount.

Genesis lost too much money because 3AC can't pay back its loan. DCG "saved" Genesis, but the FTX collapse hurt Genesis further, forcing DCG and Genesis to seek external funding.

bruh…

Learning Experience

Co-borrowing. Unnecessary rehypothecation. Extra space. Governance disaster. Greed, hubris. Crypto has repeatedly shown it can recreate traditional financial system disasters quickly. Working in crypto is one of the best ways to learn crazy financial tricks people will do for a quick buck much faster than if you dabble in traditional finance.

Moving Forward

I think the crypto industry needs to consider its future. This is especially true for professionals. I'm not trying to scare you. In 2018 and 2020, I had doubts. No doubts now. Detailing the crypto industry's potential outcomes helped me gain certainty and confidence in its future. This includes VCs' benefits and talking points during the bull market, as well as what would happen if government regulations became hostile, etc. Even if that happens, I'm certain. This is permanent. I may write a post about that soon.

Sincerely,

M.

Vitalik

4 years ago

Fairness alternatives to selling below market clearing prices (or community sentiment, or fun)

When a seller has a limited supply of an item in high (or uncertain and possibly high) demand, they frequently set a price far below what "the market will bear." As a result, the item sells out quickly, with lucky buyers being those who tried to buy first. This has happened in the Ethereum ecosystem, particularly with NFT sales and token sales/ICOs. But this phenomenon is much older; concerts and restaurants frequently make similar choices, resulting in fast sell-outs or long lines.

Why do sellers do this? Economists have long wondered. A seller should sell at the market-clearing price if the amount buyers are willing to buy exactly equals the amount the seller has to sell. If the seller is unsure of the market-clearing price, they should sell at auction and let the market decide. So, if you want to sell something below market value, don't do it. It will hurt your sales and it will hurt your customers. The competitions created by non-price-based allocation mechanisms can sometimes have negative externalities that harm third parties, as we will see.

However, the prevalence of below-market-clearing pricing suggests that sellers do it for good reason. And indeed, as decades of research into this topic has shown, there often are. So, is it possible to achieve the same goals with less unfairness, inefficiency, and harm?

Selling at below market-clearing prices has large inefficiencies and negative externalities

An item that is sold at market value or at an auction allows someone who really wants it to pay the high price or bid high in the auction. So, if a seller sells an item below market value, some people will get it and others won't. But the mechanism deciding who gets the item isn't random, and it's not always well correlated with participant desire. It's not always about being the fastest at clicking buttons. Sometimes it means waking up at 2 a.m. (but 11 p.m. or even 2 p.m. elsewhere). Sometimes it's just a "auction by other means" that's more chaotic, less efficient, and has far more negative externalities.

There are many examples of this in the Ethereum ecosystem. Let's start with the 2017 ICO craze. For example, an ICO project would set the price of the token and a hard maximum for how many tokens they are willing to sell, and the sale would start automatically at some point in time. The sale ends when the cap is reached.

So what? In practice, these sales often ended in 30 seconds or less. Everyone would start sending transactions in as soon as (or just before) the sale started, offering higher and higher fees to encourage miners to include their transaction first. Instead of the token seller receiving revenue, miners receive it, and the sale prices out all other applications on-chain.

The most expensive transaction in the BAT sale set a fee of 580,000 gwei, paying a fee of $6,600 to get included in the sale.

Many ICOs after that tried various strategies to avoid these gas price auctions; one ICO notably had a smart contract that checked the transaction's gasprice and rejected it if it exceeded 50 gwei. But that didn't solve the issue. Buyers hoping to game the system sent many transactions hoping one would get through. An auction by another name, clogging the chain even more.

ICOs have recently lost popularity, but NFTs and NFT sales have risen in popularity. But the NFT space didn't learn from 2017; they do fixed-quantity sales just like ICOs (eg. see the mint function on lines 97-108 of this contract here). So what?

That's not the worst; some NFT sales have caused gas price spikes of up to 2000 gwei.

High gas prices from users fighting to get in first by sending higher and higher transaction fees. An auction renamed, pricing out all other applications on-chain for 15 minutes.

So why do sellers sometimes sell below market price?

Selling below market value is nothing new, and many articles, papers, and podcasts have written (and sometimes bitterly complained) about the unwillingness to use auctions or set prices to market-clearing levels.

Many of the arguments are the same for both blockchain (NFTs and ICOs) and non-blockchain examples (popular restaurants and concerts). Fairness and the desire not to exclude the poor, lose fans or create tension by being perceived as greedy are major concerns. The 1986 paper by Kahneman, Knetsch, and Thaler explains how fairness and greed can influence these decisions. I recall that the desire to avoid perceptions of greed was also a major factor in discouraging the use of auction-like mechanisms in 2017.

Aside from fairness concerns, there is the argument that selling out and long lines create a sense of popularity and prestige, making the product more appealing to others. Long lines should have the same effect as high prices in a rational actor model, but this is not the case in reality. This applies to ICOs and NFTs as well as restaurants. Aside from increasing marketing value, some people find the game of grabbing a limited set of opportunities first before everyone else is quite entertaining.

But there are some blockchain-specific factors. One argument for selling ICO tokens below market value (and one that persuaded the OmiseGo team to adopt their capped sale strategy) is community dynamics. The first rule of community sentiment management is to encourage price increases. People are happy if they are "in the green." If the price drops below what the community members paid, they are unhappy and start calling you a scammer, possibly causing a social media cascade where everyone calls you a scammer.

This effect can only be avoided by pricing low enough that post-launch market prices will almost certainly be higher. But how do you do this without creating a rush for the gates that leads to an auction?

Interesting solutions

It's 2021. We have a blockchain. The blockchain is home to a powerful decentralized finance ecosystem, as well as a rapidly expanding set of non-financial tools. The blockchain also allows us to reset social norms. Where decades of economists yelling about "efficiency" failed, blockchains may be able to legitimize new uses of mechanism design. If we could use our more advanced tools to create an approach that more directly solves the problems, with fewer side effects, wouldn't that be better than fiddling with a coarse-grained one-dimensional strategy space of selling at market price versus below market price?

Begin with the goals. We'll try to cover ICOs, NFTs, and conference tickets (really a type of NFT) all at the same time.

1. Fairness: don't completely exclude low-income people from participation; give them a chance. The goal of token sales is to avoid high initial wealth concentration and have a larger and more diverse initial token holder community.

2. Don’t create races: Avoid situations where many people rush to do the same thing and only a few get in (this is the type of situation that leads to the horrible auctions-by-another-name that we saw above).

3. Don't require precise market knowledge: the mechanism should work even if the seller has no idea how much demand exists.

4. Fun: The process of participating in the sale should be fun and game-like, but not frustrating.

5. Give buyers positive expected returns: in the case of a token (or an NFT), buyers should expect price increases rather than decreases. This requires selling below market value.

Let's start with (1). From Ethereum's perspective, there is a simple solution. Use a tool designed for the job: proof of personhood protocols! Here's one quick idea:

Mechanism 1 Each participant (verified by ID) can buy up to ‘’X’’ tokens at price P, with the option to buy more at an auction.

With the per-person mechanism, buyers can get positive expected returns for the portion sold through the per-person mechanism, and the auction part does not require sellers to understand demand levels. Is it race-free? The number of participants buying through the per-person pool appears to be high. But what if the per-person pool isn't big enough to accommodate everyone?

Make the per-person allocation amount dynamic.

Mechanism 2 Each participant can deposit up to X tokens into a smart contract to declare interest. Last but not least, each buyer receives min(X, N / buyers) tokens, where N is the total sold through the per-person pool (some other amount can also be sold by auction). The buyer gets their deposit back if it exceeds the amount needed to buy their allocation.

No longer is there a race condition based on the number of buyers per person. No matter how high the demand, it's always better to join sooner rather than later.

Here's another idea if you like clever game mechanics with fancy quadratic formulas.

Mechanism 3 Each participant can buy X units at a price P X 2 up to a maximum of C tokens per buyer. C starts low and gradually increases until enough units are sold.

The quantity allocated to each buyer is theoretically optimal, though post-sale transfers will degrade this optimality over time. Mechanisms 2 and 3 appear to meet all of the above objectives. They're not perfect, but they're good starting points.

One more issue. For fixed and limited supply NFTs, the equilibrium purchased quantity per participant may be fractional (in mechanism 2, number of buyers > N, and in mechanism 3, setting C = 1 may already lead to over-subscription). With fractional sales, you can offer lottery tickets: if there are N items available, you have a chance of N/number of buyers of getting the item, otherwise you get a refund. For a conference, groups could bundle their lottery tickets to guarantee a win or a loss. The certainty of getting the item can be auctioned.

The bottom tier of "sponsorships" can be used to sell conference tickets at market rate. You may end up with a sponsor board full of people's faces, but is that okay? After all, John Lilic was on EthCC's sponsor board!

Simply put, if you want to be reliably fair to people, you need an input that explicitly measures people. Authentication protocols do this (and if desired can be combined with zero knowledge proofs to ensure privacy). So we should combine the efficiency of market and auction-based pricing with the equality of proof of personhood mechanics.

Answers to possible questions

Q: Won't people who don't care about your project buy the item and immediately resell it?

A: Not at first. Meta-games take time to appear in practice. If they do, making them untradeable for a while may help mitigate the damage. Using your face to claim that your previous account was hacked and that your identity, including everything in it, should be moved to another account works because proof-of-personhood identities are untradeable.

Q: What if I want to make my item available to a specific community?

A: Instead of ID, use proof of participation tokens linked to community events. Another option, also serving egalitarian and gamification purposes, is to encrypt items within publicly available puzzle solutions.

Q: How do we know they'll accept? Strange new mechanisms have previously been resisted.

A: Having economists write screeds about how they "should" accept a new mechanism that they find strange is difficult (or even "equity"). However, abrupt changes in context effectively reset people's expectations. So the blockchain space is the best place to try this. You could wait for the "metaverse", but it's possible that the best version will run on Ethereum anyway, so start now.

Max Parasol

4 years ago

What the hell is Web3 anyway?

"Web 3.0" is a trendy buzzword with a vague definition. Everyone agrees it has to do with a blockchain-based internet evolution, but what is it?

Yet, the meaning and prospects for Web3 have become hot topics in crypto communities. Big corporations use the term to gain a foothold in the space while avoiding the negative connotations of “crypto.”

But it can't be evaluated without a definition.

Among those criticizing Web3's vagueness is Cobie:

“Despite the dominie's deluge of undistinguished think pieces, nobody really agrees on what Web3 is. Web3 is a scam, the future, tokenizing the world, VC exit liquidity, or just another name for crypto, depending on your tribe.

“Even the crypto community is split on whether Bitcoin is Web3,” he adds.

The phrase was coined by an early crypto thinker, and the community has had years to figure out what it means. Many ideologies and commercial realities have driven reverse engineering.

Web3 is becoming clearer as a concept. It contains ideas. It was probably coined by Ethereum co-founder Gavin Wood in 2014. His definition of Web3 included “trustless transactions” as part of its tech stack. Wood founded the Web3 Foundation and the Polkadot network, a Web3 alternative future.

The 2013 Ethereum white paper had previously allowed devotees to imagine a DAO, for example.

Web3 now has concepts like decentralized autonomous organizations, sovereign digital identity, censorship-free data storage, and data divided by multiple servers. They intertwine discussions about the “Web3” movement and its viability.

These ideas are linked by Cobie's initial Web3 definition. A key component of Web3 should be “ownership of value” for one's own content and data.

Noting that “late-stage capitalism greedcorps that make you buy a fractionalized micropayment NFT on Cardano to operate your electric toothbrush” may build the new web, he notes that “crypto founders are too rich to care anymore.”

Very Important

Many critics of Web3 claim it isn't practical or achievable. Web3 critics like Moxie Marlinspike (creator of sslstrip and Signal/TextSecure) can never see people running their own servers. Early in January, he argued that protocols are more difficult to create than platforms.

While this is true, some projects, like the file storage protocol IPFS, allow users to choose which jurisdictions their data is shared between.

But full decentralization is a difficult problem. Suhaza, replying to Moxie, said:

”People don't want to run servers... Companies are now offering API access to an Ethereum node as a service... Almost all DApps interact with the blockchain using Infura or Alchemy. In fact, when a DApp uses a wallet like MetaMask to interact with the blockchain, MetaMask is just calling Infura!

So, here are the questions: Web3: Is it a go? Is it truly decentralized?

Web3 history is shaped by Web2 failure.

This is the story of how the Internet was turned upside down...

Then came the vision. Everyone can create content for free. Decentralized open-source believers like Tim Berners-Lee popularized it.

Real-world data trade-offs for content creation and pricing.

A giant Wikipedia page married to a giant Craig's List. No ads, no logins, and a private web carve-up. For free usage, you give up your privacy and data to the algorithmic targeted advertising of Web 2.

Our data is centralized and savaged by giant corporations. Data localization rules and geopolitical walls like China's Great Firewall further fragment the internet.

The decentralized Web3 reflects Berners-original Lee's vision: "No permission is required from a central authority to post anything... there is no central controlling node and thus no single point of failure." Now he runs Solid, a Web3 data storage startup.

So Web3 starts with decentralized servers and data privacy.

Web3 begins with decentralized storage.

Data decentralization is a key feature of the Web3 tech stack. Web2 has closed databases. Large corporations like Facebook, Google, and others go to great lengths to collect, control, and monetize data. We want to change it.

Amazon, Google, Microsoft, Alibaba, and Huawei, according to Gartner, currently control 80% of the global cloud infrastructure market. Web3 wants to change that.

Decentralization enlarges power structures by giving participants a stake in the network. Users own data on open encrypted networks in Web3. This area has many projects.

Apps like Filecoin and IPFS have led the way. Data is replicated across multiple nodes in Web3 storage providers like Filecoin.

But the new tech stack and ideology raise many questions.

Giving users control over their data

According to Ryan Kris, COO of Verida, his “Web3 vision” is “empowering people to control their own data.”

Verida targets SDKs that address issues in the Web3 stack: identity, messaging, personal storage, and data interoperability.

A big app suite? “Yes, but it's a frontier technology,” he says. They are currently building a credentialing system for decentralized health in Bermuda.

By empowering individuals, how will Web3 create a fairer internet? Kris, who has worked in telecoms, finance, cyber security, and blockchain consulting for decades, admits it is difficult:

“The viability of Web3 raises some good business questions,” he adds. “How can users regain control over centralized personal data? How are startups motivated to build products and tools that support this transition? How are existing Web2 companies encouraged to pivot to a Web3 business model to compete with market leaders?

Kris adds that new technologies have regulatory and practical issues:

"On storage, IPFS is great for redundantly sharing public data, but not designed for securing private personal data. It is not controlled by the users. When data storage in a specific country is not guaranteed, regulatory issues arise."

Each project has varying degrees of decentralization. The diehards say DApps that use centralized storage are no longer “Web3” companies. But fully decentralized technology is hard to build.

Web2.5?

Some argue that we're actually building Web2.5 businesses, which are crypto-native but not fully decentralized. This is vital. For example, the NFT may be on a blockchain, but it is linked to centralized data repositories like OpenSea. A server failure could result in data loss.

However, according to Apollo Capital crypto analyst David Angliss, OpenSea is “not exactly community-led”. Also in 2021, much to the chagrin of crypto enthusiasts, OpenSea tried and failed to list on the Nasdaq.

This is where Web2.5 is defined.

“Web3 isn't a crypto segment. “Anything that uses a blockchain for censorship resistance is Web3,” Angliss tells us.

“Web3 gives users control over their data and identity. This is not possible in Web2.”

“Web2 is like feudalism, with walled-off ecosystems ruled by a few. For example, an honest user owned the Instagram account “Meta,” which Facebook rebranded and then had to make up a reason to suspend. Not anymore with Web3. If I buy ‘Ethereum.ens,' Ethereum cannot take it away from me.”

Angliss uses OpenSea as a Web2.5 business example. Too decentralized, i.e. censorship resistant, can be unprofitable for a large company like OpenSea. For example, OpenSea “enables NFT trading”. But it also stopped the sale of stolen Bored Apes.”

Web3 (or Web2.5, depending on the context) has been described as a new way to privatize internet.

“Being in the crypto ecosystem doesn't make it Web3,” Angliss says. The biggest risk is centralized closed ecosystems rather than a growing Web3.

LooksRare and OpenDAO are two community-led platforms that are more decentralized than OpenSea. LooksRare has even been “vampire attacking” OpenSea, indicating a Web3 competitor to the Web2.5 NFT king could find favor.

The addition of a token gives these new NFT platforms more options for building customer loyalty. For example, OpenSea charges a fee that goes nowhere. Stakeholders of LOOKS tokens earn 100% of the trading fees charged by LooksRare on every basic sale.

Maybe Web3's time has come.

So whose data is it?

Continuing criticisms of Web3 platforms' decentralization may indicate we're too early. Users want to own and store their in-game assets and NFTs on decentralized platforms like the Metaverse and play-to-earn games. Start-ups like Arweave, Sia, and Aleph.im propose an alternative.

To be truly decentralized, Web3 requires new off-chain models that sidestep cloud computing and Web2.5.

“Arweave and Sia emerged as formidable competitors this year,” says the Messari Report. They seek to reduce the risk of an NFT being lost due to a data breach on a centralized server.

Aleph.im, another Web3 cloud competitor, seeks to replace cloud computing with a service network. It is a decentralized computing network that supports multiple blockchains by retrieving and encrypting data.

“The Aleph.im network provides a truly decentralized alternative where it is most needed: storage and computing,” says Johnathan Schemoul, founder of Aleph.im. For reasons of consensus and security, blockchains are not designed for large storage or high-performance computing.

As a result, large data sets are frequently stored off-chain, increasing the risk for centralized databases like OpenSea

Aleph.im enables users to own digital assets using both blockchains and off-chain decentralized cloud technologies.

"We need to go beyond layer 0 and 1 to build a robust decentralized web. The Aleph.im ecosystem is proving that Web3 can be decentralized, and we intend to keep going.”

Aleph.im raised $10 million in mid-January 2022, and Ubisoft uses its network for NFT storage. This is the first time a big-budget gaming studio has given users this much control.

It also suggests Web3 could work as a B2B model, even if consumers aren't concerned about “decentralization.” Starting with gaming is common.

Can Tokenomics help Web3 adoption?

Web3 consumer adoption is another story. The average user may not be interested in all this decentralization talk. Still, how much do people value privacy over convenience? Can tokenomics solve the privacy vs. convenience dilemma?

Holon Global Investments' Jonathan Hooker tells us that human internet behavior will change. “Do you own Bitcoin?” he asks in his Web3 explanation. How does it feel to own and control your own sovereign wealth? Then:

“What if you could own and control your data like Bitcoin?”

“The business model must find what that person values,” he says. Putting their own health records on centralized systems they don't control?

“How vital are those medical records to that person at a critical time anywhere in the world? Filecoin and IPFS can help.”

Web3 adoption depends on NFT storage competition. A free off-chain storage of NFT metadata and assets was launched by Filecoin in April 2021.

Denationalization and blockchain technology have significant implications for data ownership and compensation for lending, staking, and using data.

Tokenomics can change human behavior, but many people simply sign into Web2 apps using a Facebook API without hesitation. Our data is already owned by Google, Baidu, Tencent, and Facebook (and its parent company Meta). Is it too late to recover?

Maybe. “Data is like fruit, it starts out fresh but ages,” he says. "Big Tech's data on us will expire."

Web3 founder Kris agrees with Hooker that “value for data is the issue, not privacy.” People accept losing their data privacy, so tokenize it. People readily give up data, so why not pay for it?

"Personalized data offering is valuable in personalization. “I will sell my social media data but not my health data.”

Purists and mass consumer adoption struggle with key management.

Others question data tokenomics' optimism. While acknowledging its potential, Box founder Aaron Levie questioned the viability of Web3 models in a Tweet thread:

“Why? Because data almost always works in an app. A product and APIs that moved quickly to build value and trust over time.”

Levie contends that tokenomics may complicate matters. In addition to community governance and tokenomics, Web3 ideals likely add a new negotiation vector.

“These are hard problems about human coordination, not software or blockchains,”. Using a Facebook API is simple. The business model and user interface are crucial.

For example, the crypto faithful have a common misconception about logging into Web3. It goes like this: Web 1 had usernames and passwords. Web 2 uses Google, Facebook, or Twitter APIs, while Web 3 uses your wallet. Pay with Ethereum on MetaMask, for example.

But Levie is correct. Blockchain key management is stressed in this meme. Even seasoned crypto enthusiasts have heart attacks, let alone newbies.

Web3 requires a better user experience, according to Kris, the company's founder. “How does a user recover keys?”

And at this point, no solution is likely to be completely decentralized. So Web3 key management can be improved. ”The moment someone loses control of their keys, Web3 ceases to exist.”

That leaves a major issue for Web3 purists. Put this one in the too-hard basket.

Is 2022 the Year of Web3?

Web3 must first solve a number of issues before it can be mainstreamed. It must be better and cheaper than Web2.5, or have other significant advantages.

Web3 aims for scalability without sacrificing decentralization protocols. But decentralization is difficult and centralized services are more convenient.

Ethereum co-founder Vitalik Buterin himself stated recently"

This is why (centralized) Binance to Binance transactions trump Ethereum payments in some places because they don't have to be verified 12 times."

“I do think a lot of people care about decentralization, but they're not going to take decentralization if decentralization costs $8 per transaction,” he continued.

“Blockchains need to be affordable for people to use them in mainstream applications... Not for 2014 whales, but for today's users."

For now, scalability, tokenomics, mainstream adoption, and decentralization believers seem to be holding Web3 hostage.

Much like crypto's past.

But stay tuned.

You might also like

Alison Randel

3 years ago

Raising the Bar on Your 1:1s

Managers spend much time in 1:1s. Most team members meet with supervisors regularly. 1:1s can help create relationships and tackle tough topics. Few appreciate the 1:1 format's potential. Most of the time, that potential is spent on small talk, surface-level updates, and ranting (Ugh, the marketing team isn’t stepping up the way I want them to).

What if you used that time to have deeper conversations and important insights? What if change was easy?

This post introduces a new 1:1 format to help you dive deeper, faster, and develop genuine relationships without losing impact.

A 1:1 is a chat, you would assume. Why use structure to talk to a coworker? Go! I know how to talk to people. I can write. I've always written. Also, This article was edited by Zoe.

Before you discard something, ask yourself if there's a good reason not to try anything new. Is the 1:1 only a talk, or do you want extra benefits? Try the steps below to discover more.

I. Reflection (5 minutes)

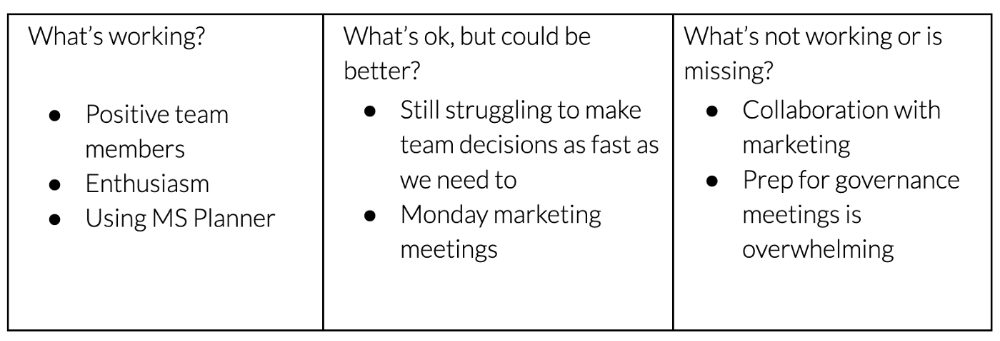

Context-free, broad comments waste time and are useless. Instead, give team members 5 minutes to write these 3 prompts.

What's effective?

What is decent but could be improved?

What is broken or missing?

Why these? They encourage people to be honest about all their experiences. Answering these questions helps people realize something isn't working. These prompts let people consider what's working.

Why take notes? Because you get more in less time. Will you feel awkward sitting quietly while your coworker writes? Probably. Persevere. Multi-task. Take a break from your afternoon meeting marathon. Any awkwardness will pay off.

What happens? After a few minutes of light conversation, create a template like the one given here and have team members fill in their replies. You can pre-share the template (with the caveat that this isn’t meant to take much prep time). Do this with your coworker: Answer the prompts. Everyone can benefit from pondering and obtaining guidance.

This step's output.

Part II: Talk (10-20 minutes)

Most individuals can explain what they see but not what's behind an answer. You don't like a meeting. Why not? Marketing partnership is difficult. What makes working with them difficult? I don't recommend slandering coworkers. Consider how your meetings, decisions, and priorities make work harder. The excellent stuff too. You want to know what's humming so you can reproduce the magic.

First, recognize some facts.

Real power dynamics exist. To encourage individuals to be honest, you must provide a safe environment and extend clear invites. Even then, it may take a few 1:1s for someone to feel secure enough to go there in person. It is part of your responsibility to admit that it is normal.

Curiosity and self-disclosure are crucial. Most leaders have received training to present themselves as the authorities. However, you will both benefit more from the dialogue if you can be open and honest about your personal experience, ask questions out of real curiosity, and acknowledge the pertinent sacrifices you're making as a leader.

Honesty without bias is difficult and important. Due to concern for the feelings of others, people frequently hold back. Or if they do point anything out, they do so in a critical manner. The key is to be open and unapologetic about what you observe while not presuming that your viewpoint is correct and that of the other person is incorrect.

Let's go into some prompts (based on genuine conversations):

“What do you notice across your answers?”

“What about the way you/we/they do X, Y, or Z is working well?”

“ Will you say more about item X in ‘What’s not working?’”

“I’m surprised there isn’t anything about Z. Why is that?”

“All of us tend to play some role in maintaining certain patterns. How might you/we be playing a role in this pattern persisting?”

“How might the way we meet, make decisions, or collaborate play a role in what’s currently happening?”

Consider the preceding example. What about the Monday meeting isn't working? Why? or What about the way we work with marketing makes collaboration harder? Remember to share your honest observations!

Third section: observe patterns (10-15 minutes)

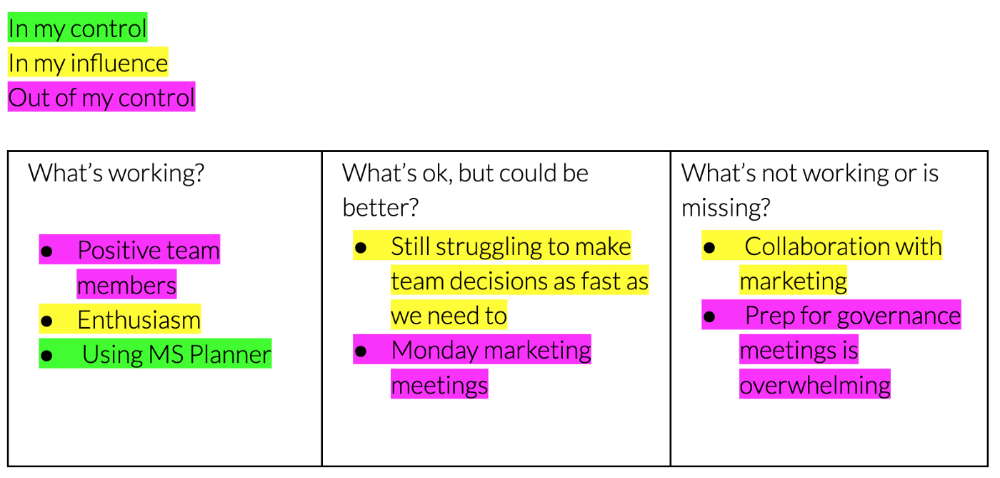

Leaders desire to empower their people but don't know how. We also have many preconceptions about what empowerment means to us and how it works. The next phase in this 1:1 format will assist you and your team member comprehend team power and empowerment. This understanding can help you support and shift your team member's behavior, especially where you disagree.

How to? After discussing the stated responses, ask each team member what they can control, influence, and not control. Mark their replies. You can do the same, adding colors where you disagree.

This step's output.

Next, consider the color constellation. Discuss these questions:

Is one color much more prevalent than the other? Why, if so?

Are the colors for the "what's working," "what's fine," and "what's not working" categories clearly distinct? Why, if so?

Do you have any disagreements? If yes, specifically where does your viewpoint differ? What activities do you object to? (Remember, there is no right or wrong in this. Give explicit details and ask questions with curiosity.)

Example: Based on the colors, you can ask, Is the marketing meeting's quality beyond your control? Were our marketing partners consulted? Are there any parts of team decisions we can control? We can't control people, but have we explored another decision-making method? How can we collaborate and generate governance-related information to reduce work, even if the requirement for prep can't be eliminated?

Consider the top one or two topics for this conversation. No 1:1 can cover everything, and that's OK. Focus on the present.

Part IV: Determine the next step (5 minutes)

Last, examine what this conversation means for you and your team member. It's easy to think we know the next moves when we don't.

Like what? You and your teammate answer these questions.

What does this signify moving ahead for me? What can I do to change this? Make requests, for instance, and see how people respond before thinking they won't be responsive.

What demands do I have on other people or my partners? What should I do first? E.g. Make a suggestion to marketing that we hold a monthly retrospective so we can address problems and exchange input more frequently. Include it on the meeting's agenda for next Monday.

Close the 1:1 by sharing what you noticed about the chat. Observations? Learn anything?

Yourself, you, and the 1:1

As a leader, you either reinforce or disrupt habits. Try this template if you desire greater ownership, empowerment, or creativity. Consider how you affect surrounding dynamics. How can you expect others to try something new in high-stakes scenarios, like meetings with cross-functional partners or senior stakeholders, if you won't? How can you expect deep thought and relationship if you don't encourage it in 1:1s? What pattern could this new format disrupt or reinforce?

Fight reluctance. First attempts won't be ideal, and that's OK. You'll only learn by trying.

Florian Wahl

3 years ago

An Approach to Product Strategy

I've been pondering product strategy and how to articulate it. Frameworks helped guide our thinking.

If your teams aren't working together or there's no clear path to victory, your product strategy may not be well-articulated or communicated (if you have one).

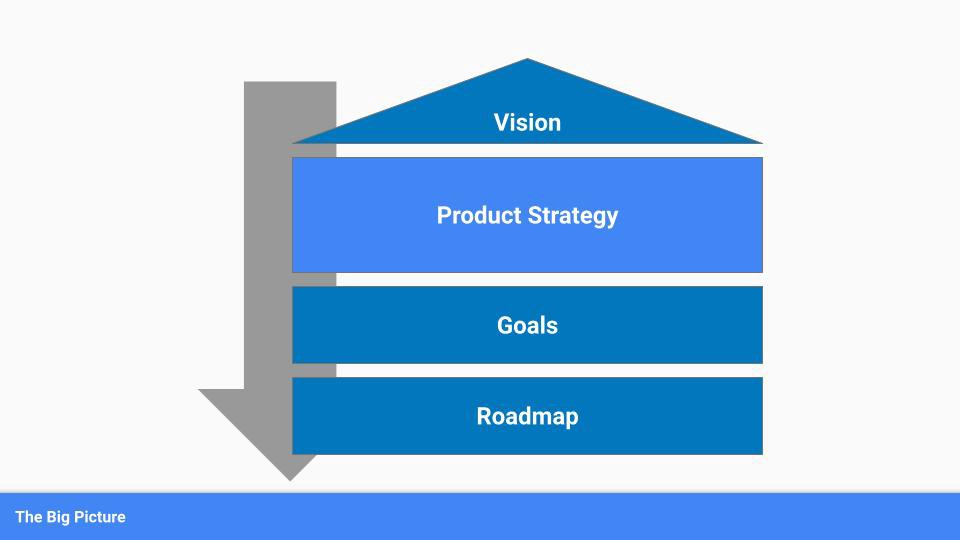

Before diving into a product strategy's details, it's important to understand its role in the bigger picture — the pieces that move your organization forward.

the overall picture

A product strategy is crucial, in my opinion. It's part of a successful product or business. It's the showpiece.

To simplify, we'll discuss four main components:

Vision

Product Management

Goals

Roadmap

Vision

Your company's mission? Your company/product in 35 years? Which headlines?

The vision defines everything your organization will do in the long term. It shows how your company impacted the world. It's your organization's rallying cry.

An ambitious but realistic vision is needed.

Without a clear vision, your product strategy may be inconsistent.

Product Management

Our main subject. Product strategy connects everything. It fulfills the vision.

In Part 2, we'll discuss product strategy.

Goals

This component can be goals, objectives, key results, targets, milestones, or whatever goal-tracking framework works best for your organization.

These product strategy metrics will help your team prioritize strategies and roadmaps.

Your company's goals should be unified. This fuels success.

Roadmap

The roadmap is your product strategy's timeline. It provides a prioritized view of your team's upcoming deliverables.

A roadmap is time-bound and includes measurable goals for your company. Your team's steps and capabilities for executing product strategy.

If your team has trouble prioritizing or defining a roadmap, your product strategy or vision is likely unclear.

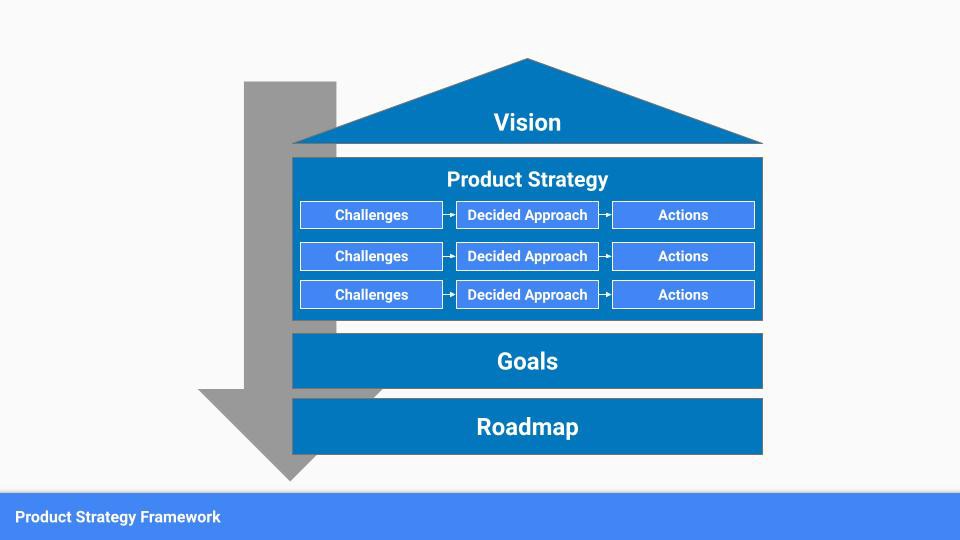

Formulation of a Product Strategy

Now that we've discussed where your product strategy fits in the big picture, let's look at a framework.

A product strategy should include challenges, an approach, and actions.

Challenges

First, analyze the problems/situations you're solving. It can be customer- or company-focused.

The analysis should explain the problems and why they're important. Try to simplify the situation and identify critical aspects.

Some questions:

What issues are we attempting to resolve?

What obstacles—internal or otherwise—are we attempting to overcome?

What is the opportunity, and why should we pursue it, in your opinion?

Decided Method

Second, describe your approach. This can be a set of company policies for handling the challenge. It's the overall approach to the first part's analysis.

The approach can be your company's bets, the solutions you've found, or how you'll solve the problems you've identified.

Again, these questions can help:

What is the value that we hope to offer to our clients?

Which market are we focusing on first?

What makes us stand out? Our benefit over rivals?

Actions

Third, identify actions that result from your approach. Second-part actions should be these.

Coordinate these actions. You may need to add products or features to your roadmap, acquire new capabilities through partnerships, or launch new marketing campaigns. Whatever fits your challenges and strategy.

Final questions:

What skills do we need to develop or obtain?

What is the chosen remedy? What are the main outputs?

What else ought to be added to our road map?

Put everything together

… and iterate!

Strategy isn't one-and-done. Changes occur. Economies change. Competitors emerge. Customer expectations change.

One unexpected event can make strategies obsolete quickly. Muscle it. Review, evaluate, and course-correct your strategies with your teams. Quarterly works. In a new or unstable industry, more often.

Pat Vieljeux

3 years ago

The three-year business plan is obsolete for startups.

If asked, run.

An entrepreneur asked me about her pitch deck. A Platform as a Service (PaaS).

She told me she hadn't done her 5-year forecasts but would soon.

I said, Don't bother. I added "time-wasting."

“I've been asked”, she said.

“Who asked?”

“a VC”

“5-year forecast?”

“Yes”

“Get another VC. If he asks, it's because he doesn't understand your solution or to waste your time.”

Some VCs are lagging. They're still using steam engines.

10-years ago, 5-year forecasts were requested.

Since then, we've adopted a 3-year plan.

But It's outdated.

Max one year.

What has happened?

Revolutionary technology. NO-CODE.

Revolution's consequences?

Product viability tests are shorter. Hugely. SaaS and PaaS.

Let me explain:

Building a minimum viable product (MVP) that works only takes a few months.

1 to 2 months for practical testing.

Your company plan can be validated or rejected in 4 months as a consequence.

After validation, you can ask for VC money. Even while a prototype can generate revenue, you may not require any.

Good VCs won't ask for a 3-year business plan in that instance.

One-year, though.

If you want, establish a three-year plan, but realize that the second year will be different.

You may have changed your business model by then.

A VC isn't interested in a three-year business plan because your solution may change.

Your ability to create revenue will be key.

But also, to pivot.

They will be interested in your value proposition.

They will want to know what differentiates you from other competitors and why people will buy your product over another.

What will interest them is your resilience, your ability to bounce back.

Not to mention your mindset. The fact that you won’t get discouraged at the slightest setback.

The grit you have when facing adversity, as challenges will surely mark your journey.

The authenticity of your approach. They’ll want to know that you’re not just in it for the money, let alone to show off.

The fact that you put your guts into it and that you are passionate about it. Because entrepreneurship is a leap of faith, a leap into the void.

They’ll want to make sure you are prepared for it because it’s not going to be a walk in the park.

They’ll want to know your background and why you got into it.

They’ll also want to know your family history.

And what you’re like in real life.

So a 5-year plan…. You can bet they won’t give a damn. Like their first pair of shoes.