17 Google Secrets 99 Percent of People Don't Know

What can't Google do?

Seriously, nothing! Google rocks.

Google is a major player in online tools and services. We use it for everything, from research to entertainment.

Did I say entertainment?

Yes! But with so many features and options, it can be hard to get the most out of Google.

#1. Drive Google Mad

You can make Google's homepage dance if you want to be silly.

Just type “Google Gravity” into Google.com, then click “I'm Feeling Lucky.”

Watch the page come unstuck and fall apart before your eyes!

#2. Play Games on Google

Google isn't just for work.

So have fun with it!

You can play games right in your search results. When you need a break, google “Solitaire” or “Tic Tac Toe”.

#3. Do a Barrel Roll

Need a little more excitement in your life? Want to see Google dance?

Type “Do a barrel roll” into the Google search bar.

Then relax and watch your screen do a 360.

#4. No Internet? No Problem!

This is a fun trick to use when you have no internet.

If Chrome shows a “No Internet” page, simply press Space.

Boom!

We have a dinosaur! Now use the arrow keys to save your pixelated T-Rex from extinction.

#5. Google Can Help You Decide

Play this Google coin flip game to see if you're lucky.

Enter “Flip a coin” into the search engine.

You'll see a coin-flipping animation that lands on heads or tails. Not happy with the result? Flip again.

#6. Think with Google

My favorite Google find so far is the “Think with Google” website.

Think with Google is a website that offers marketing insights, research, and case studies.

I highly recommend it to entrepreneurs, small business owners, and anyone interested in online marketing.

#7. Google Can Read Images!

This is a cool Google trick that few know about.

You can search for images by keyword or upload your own by clicking the camera icon on Google Images.

Google will then show you visually similar images.

Caution: Only upload images you'd be comfortable making public.

#8. Modify the Google Logo!

Clicking on the “I'm Feeling Lucky” button on Google.com takes you to a random Google Doodle.

Throughout the year, Google creates Doodles to commemorate holidays, anniversaries, and other occasions.

#9. What is my IP?

Simply type “What is my IP” into Google to find out.

Your IP address will appear on the results page.

#10. Send a Self-Destructing Email With Gmail

Create a new message in Gmail. Near the Send button, find the icon that looks like a lock with a clock. That's Confidential Mode.

Click it to set an expiration date for your email. Once the email expires, the recipient can no longer open it.

#11. Blink, Google Blink!

This is a unique Google trick.

Type “blink HTML” into Google. The matching words on the results page will flash on and off.

Each one is displayed for a split second, disappears, then reappears.

It's a nod to the old HTML “blink” tag, which made text flash on and off in early web browsers.

#12. The Answer To Everything

This is for all Douglas Adams fans.

Search for “the answer to life, the universe, and everything,” and Google answers 42.

It's an allusion to Douglas Adams' The Hitchhiker's Guide to the Galaxy, in which the supercomputer Deep Thought calculates that 42 is the Answer to the Ultimate Question of Life, the Universe, and Everything.

#13. Google in 1998

It's a blast!

Type “Google in 1998” into Google, then click “I'm Feeling Lucky.”

You'll be taken to an old-school Google homepage.

It's a nostalgic trip for long-time Google users.

#14. Scholarships and Internships

Google can help you find college funding!

Type “scholarships” or “internships” into Google.

The number of results will surprise you.

#15. OK, Google. Dice!

To roll a die, simply type “Roll a die” into Google.

The results page shows a virtual die that you can click to roll.

#16. Google has secret codes!

Click the nine-dot grid on the right side of the Google homepage, go to My Account, then open Personal info.

From there, you can add your favorite language under “General preferences for the web.”

#17. Google Terminal

You can feel like a true hacker.

Just type “Google Terminal” into Google.com, then click “I'm Feeling Lucky.”

Voilà!

You'll be taken to an old-school computer terminal-style page.

You can then type commands to see what happens.

Have you tried any of these activities? Tell me in the comments.


More on Productivity

Ellane W

3 years ago

The Last To-Do List Template I'll Ever Need, Years in the Making

The holy grail of plain text task management is finally within reach

Plain text task management? Are you serious?? Dedicated task managers exist for a reason, you know. Sheesh.

—Oh, I know. Believe me, I know! But hear me out.

I've managed projects and tasks in plain text for more than four years. Since I reorganized my to-do list, the holy grail of plain text task management finally feels within reach.

Data completely yours? One billion percent. Beef it up with coding? Be my guest.

Enter: The List

The answer? A list. That’s it!

Write down tasks. Obsidian, Notenik, Drafts, or iA Writer are good plain text note-taking apps.

List too long? Of course it is! A long list tells you what to do. Feel the itch and friction. Then fix it.

But I want to keep work and personal life separate! Make two lists.

But I need to know what to do first! Put those items at the top.

But some things keep coming up, and I need reminders! Put those in your calendar and set an alarm.

But I can't move forward until person X finishes task Y! Divide your list and add a Waiting section.

But I must know what I'm supposed to be doing right now! Read your list(s). Check your calendar. Think critically.

Before I start a new list, I remind myself that "Listory Never Repeats."

There’s no such thing as too many lists if all of them are needed. There is such a thing as too many lists if you make them before they’re needed: before the old list complains that its room is too small or too crowded, or needs a new light.

A list that feels too long has a voice; it’s telling you what to do next.

I use one Master List. It's a control panel that tells me what to focus on short-term. If something doesn't need semi-immediate attention, it goes on my Backlog list.

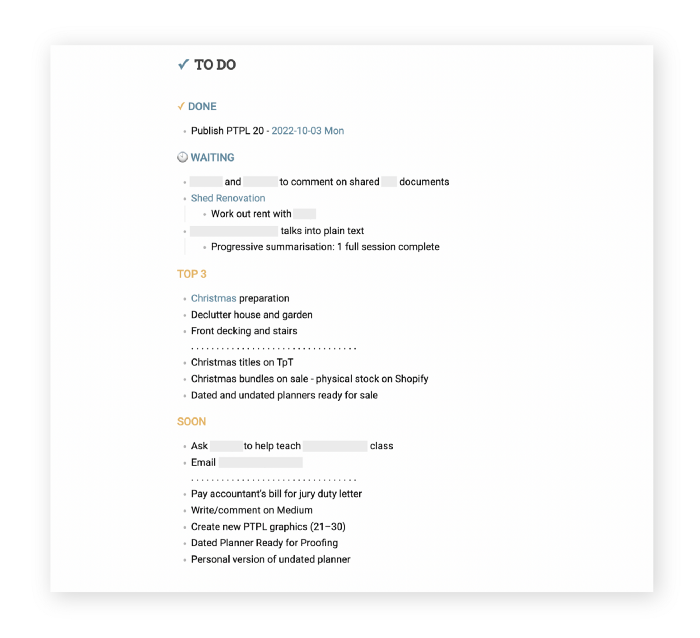

Todd Lewandowski's DWTS (Done, Waiting, Top 3, Soon) performance deserves praise. His DWTS to-do list structure has transformed my plain text task management. I hadn't realized my old list was upside down.

This is my take on it:

D = Done

Move finished items here. If they pile up, clear them out every week or month. I have a Done Archive folder.

W = Waiting

Things simmering in the background, awaiting action. Stir them occasionally so they don't burn.

T = Top 3

Three priorities. Personal comes first, then work. There will always be a top 3 (no more than 5) in every category. Projects, not chores, usually.

S = Soon

This part is action-oriented. It's for anything you can do to move one of the Top 3 toward completion, including thoughts and project lists. The only requirement is that they're short-term goals.
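Since plain text invites a bit of coding, here is a minimal Python sketch of the DWTS structure as plain data. It is an illustration, not Todd's or the author's tooling: the `MasterList` class, the `- [ ]` checkbox rendering, and the five-item cap on Top 3 (taken from "no more than 5" above) are all hypothetical choices.

```python
from dataclasses import dataclass, field

# Section order follows the DWTS acronym: Done, Waiting, Top 3, Soon
SECTIONS = ("Done", "Waiting", "Top 3", "Soon")
TOP_LIMIT = 5  # assumption: "no more than 5" items in Top 3


@dataclass
class MasterList:
    """Hypothetical plain-text master list with one bucket per DWTS section."""
    sections: dict[str, list[str]] = field(
        default_factory=lambda: {name: [] for name in SECTIONS}
    )

    def add(self, section: str, task: str) -> None:
        if section not in self.sections:
            raise KeyError(f"unknown section: {section}")
        if section == "Top 3" and len(self.sections[section]) >= TOP_LIMIT:
            raise ValueError("Top 3 is full; finish or demote something first")
        self.sections[section].append(task)

    def finish(self, task: str) -> None:
        """Move a task from whichever open section holds it into Done."""
        for name in SECTIONS[1:]:  # skip Done itself
            if task in self.sections[name]:
                self.sections[name].remove(task)
                self.sections["Done"].append(task)
                return
        raise KeyError(f"task not found: {task}")

    def render(self) -> str:
        """Render the list as Markdown headings with task checkboxes."""
        lines = []
        for name in SECTIONS:
            lines.append(f"## {name}")
            mark = "x" if name == "Done" else " "
            lines.extend(f"- [{mark}] {task}" for task in self.sections[name])
            lines.append("")
        return "\n".join(lines)


master = MasterList()
master.add("Top 3", "Publish newsletter")
master.add("Soon", "Outline newsletter sections")
master.add("Waiting", "Editor's feedback on draft")
master.finish("Outline newsletter sections")
print(master.render())
```

Keeping the order in one `SECTIONS` tuple means trying a different arrangement, such as `("Top 3", "Soon", "Waiting", "Done")`, is a one-line change.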

Some of you have probably concluded this isn't for you. Please read Todd's piece before you throw the baby out with the bathwater. You wouldn't want to miss a newborn.

As much as Dancing With The Stars helps me remember this method, I may try switching the order: TSWD. Drilling Tunnel Seismic? Serenity After Task?

Master List Showcase

My Master List lives alone in its own file, but sometimes appears in other places. It's included in my Weekly List template. Here's a (soon-to-be-updated) demo vault of my Obsidian planning setup to download for free.

Here's the code behind my weekly screenshot:

```markdown
## [[Master List - 2022|✓]] TO DO

![[Master List - 2022]]
```

FYI, I use the Minimal Theme in Obsidian, with a few tweaks.

You may notice I'm using a checkmark as a link. For me, that's easier than finding the right spot to click on the embed.

The blue Done and Waiting headings are links: Done links to the Done Archive page, and Waiting to a general waiting page.


Alex Mathers

3 years ago

8 guidelines to help you achieve your objectives 5x faster

If you waste time every day, even though you're ambitious, you're not alone.

Many of us could use some new time-management strategies, like these:

Focus on three things.

You're thinking about everything at once.

You're overwhelmed.

It's all in your head. We only ever have what's in front of us. So savor the moment's beauty.

Prioritize 1-3 things.

To be one of the most productive people you and I know, follow these steps.

Get comfortable with boredom.

Many of us grow bored, sweat, and turn on Netflix.

We shout, "I'm rarely bored! Look at me! I'm happy."

Shut it, Sally.

You're not making wonderful things for the world. Boredom matters.

If you can sit with it for a second, you'll get insight. Boredom? Breathe.

Go blank.

Then watch your creativity grow.

Check your MacroVision once more.

Part of why we waste time is that we don't know what to do with it.

Nobody else does, either. Jeff Bezos won't hand-deliver that crap to you.

Daily vision checks are required.

Also:

What are 5 things you'd love to create in the next 5 years?

You're soul-searching. It's food.

Return here regularly, and you'll adore the high you get from doing valuable work.

Improve your thinking.

What's Alex's latest nonsense?

I'm talking about overcoming our own thoughts. Worrying wastes so much time.

Too many of us are assaulted by lies, myths, and insecurity.

Stop letting your worries massage you into a worried coma like a Thai woman.

Optimizing your thoughts requires accepting what you can't control.

It means letting go of unhelpful thoughts and returning to the moment.

Keep your blood sugar level.

I gave up gluten, donuts, and sweets.

This has really boosted my energy.

Blood-sugar-spiking carbs make us irritable and tired.

These daily ups and downs hurt productivity. Avoiding them is crucial.

Know how your diet affects insulin levels. Now I have more energy and can do more without clenching my teeth.

Reduce harmful carbs to boost energy.

Create a focused setting for yourself.

When we optimize the mind, we have more energy and use our time better because we're not tense.

Changing our environment can also help us focus. Disabling alerts is one example.

When it's too hot, I procrastinate and get irritable.

List five items that hinder your productivity.

You may be amazed at how much you can improve by removing distractions.

Be accountable.

Accountability is a time-saver.

It creates an emotional pull to finish things.

Writing down our goals makes us accountable.

We can hire a coach or work with an accountability partner, so we'd feel horrible if we didn't show up and finish on time.

‘Hey Jake, I’m going to write 1000 words every day for 30 days — you need to make sure I do.’ ‘Sure thing, Nathan, I’ll be making sure you check in daily with me.’

Tick.

You might also blog about your ambitions to show your dedication.

Now you can't hide after promising to show up.

Develop a taste for boldness.

Boldness changes everything.

I sometimes feel lazy and wonder why. If my food and sleep are in order, I should assess my footing.

Most of us live on the back foot: doubtful, uncertain, governed by our feelings.

Living on the back foot isn't living. It's lame, and you'll soon melt. Live boldly now.

Be assertive.

Get disgustingly into everything. Expand.

Even if it's hard, stop being a b*tch.

Those who make Mr. Bold Bear their spirit animal benefit: they save time and maximize their impact.

Ethan Siegel

3 years ago

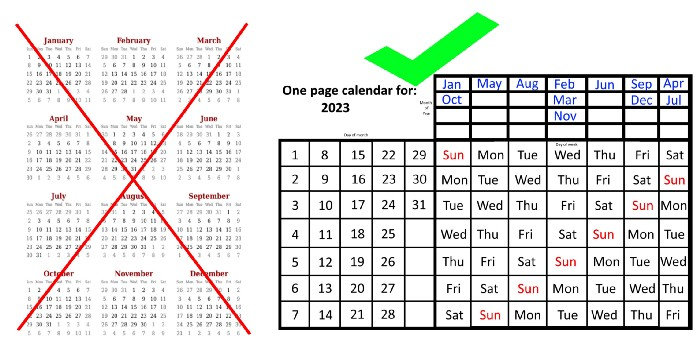

How you view the year will change after using this one-page calendar.

No other calendar is as simple, as small, or as reusable year after year. Here's how it works and how to use it.

Most of us discard and replace our calendars annually, and each month we flip ahead another page. So if we need to know which day of the week corresponds to a given day/month combination, we have to calculate it or flip forward or backward to the corresponding month. Think of questions like:

What day does this year's American Thanksgiving fall on?

Which months contain a Friday the thirteenth?

What day of the week is July 4th?

Or, what day of the week is Christmas?

They're hard to answer until you flip to the right month or look through all the months.

However, mathematically, the answers to these questions, or to any question that requires matching the day of the week with a day/month combination in a given year, are predictable, basic, and easy to work out. A one-page calendar, used instead of a 12-month calendar, lasts the whole year and is easy to adapt for future years. Let me explain.

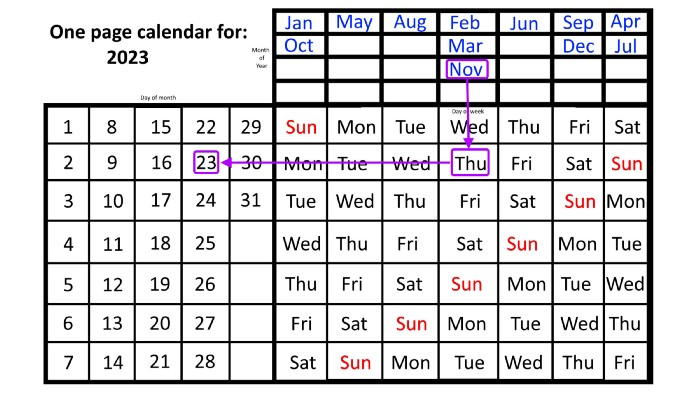

The 2023 one-page calendar is above. The days of the month are on the lower left, and they work for every month as long as you know that:

There are 31 days in January, March, May, July, August, October, and December.

April, June, September, and November have 30 days.

And depending on the year, February has either 28 days (in non-leap years) or 29 days (in leap years).

If you know this, this calendar makes it easy to match the day/month of the year to the weekday.
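For anyone who prefers to let code remember these rules, here is a minimal Python sketch. The helper names are hypothetical, and the leap-year test assumes the standard Gregorian rule (divisible by 4, except century years not divisible by 400), which is why 2000 was a leap year but 2100 will not be.

```python
def is_leap(year: int) -> bool:
    # Gregorian rule: divisible by 4, except century years not divisible by 400
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


def days_in_month(year: int, month: int) -> int:
    """Number of days in `month` (1 = January ... 12 = December) of `year`."""
    if month in (1, 3, 5, 7, 8, 10, 12):
        return 31
    if month in (4, 6, 9, 11):
        return 30
    if month == 2:
        return 29 if is_leap(year) else 28
    raise ValueError(f"month must be between 1 and 12, got {month}")


print([days_in_month(2023, m) for m in range(1, 13)])
print(days_in_month(2024, 2), days_in_month(2100, 2))  # 29 28
```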

Here are some examples. American Thanksgiving is always on the fourth Thursday of November. You'll always know the month and the day of the week, but the date (the day in November) changes each year.

On any other calendar, you'd have to flip to November to see when the fourth Thursday is. With this one-page calendar, you only need to:

start at November in the top-right corner,

drag your finger down until you reach Thursday,

then turn left and follow the monthly calendar until you reach the fourth Thursday.

It's obvious: American Thanksgiving 2023 falls on November 23rd. For any month and day-of-the-week combination, start at the month, drag your finger down to the desired day, and then move left to see which dates match.
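The finger-tracing steps amount to a small calculation: find the weekday of the 1st, step forward to the first Thursday, then add whole weeks. A minimal Python sketch using the standard `calendar` module; `nth_weekday` is a hypothetical helper name.

```python
import calendar


def nth_weekday(year: int, month: int, weekday: int, n: int) -> int:
    """Date of the n-th given weekday (calendar.MONDAY ... calendar.SUNDAY)."""
    first = calendar.weekday(year, month, 1)  # weekday of the 1st, 0 = Monday
    # Step forward from the 1st to the first matching weekday...
    offset = (weekday - first) % 7
    # ...then jump ahead whole weeks to reach the n-th occurrence
    day = 1 + offset + 7 * (n - 1)
    if day > calendar.monthrange(year, month)[1]:
        raise ValueError(f"month {month} of {year} has no occurrence #{n}")
    return day


# American Thanksgiving: the fourth Thursday of November
print(nth_weekday(2023, 11, calendar.THURSDAY, 4))  # → 23
```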

What if you knew the day of the week and the date of the month, but not the month(s)?

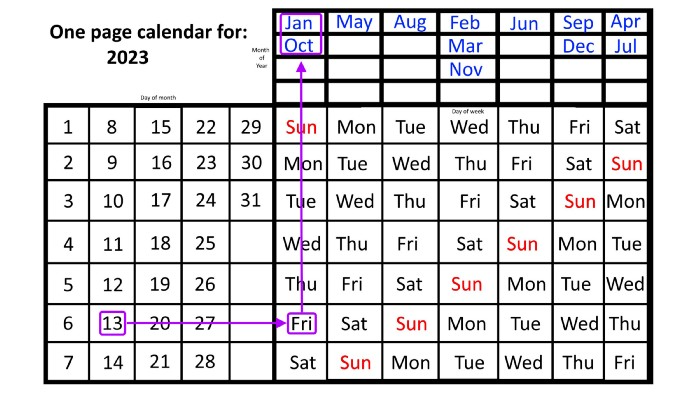

A different method using the same one-page calendar gives the answer. Which months have Friday the 13th this year? Just:

start at the 13th on the left, the date you're interested in,

then slide your finger right until you reach Friday,

and then move up to see which months have their Friday the 13th there.

One Friday the 13th occurred in January 2023, and another will occur in October.
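The same lookup is a short filter in code. A minimal Python sketch using the standard `datetime` module (the function name is a hypothetical helper). It also reflects a handy shortcut: the 13th is a Friday exactly when the 1st of that month is a Sunday, because the 13th comes 12 days (one week plus five days) after the 1st.

```python
import datetime

FRIDAY = 4  # date.weekday(): Monday == 0 ... Sunday == 6


def friday_13th_months(year: int) -> list[int]:
    """Months (1-12) of `year` in which the 13th falls on a Friday."""
    return [m for m in range(1, 13) if datetime.date(year, m, 13).weekday() == FRIDAY]


print(friday_13th_months(2023))  # → [1, 10]
```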

The most typical reason to consult a calendar is when you know the month/day combination but not the day of the week.

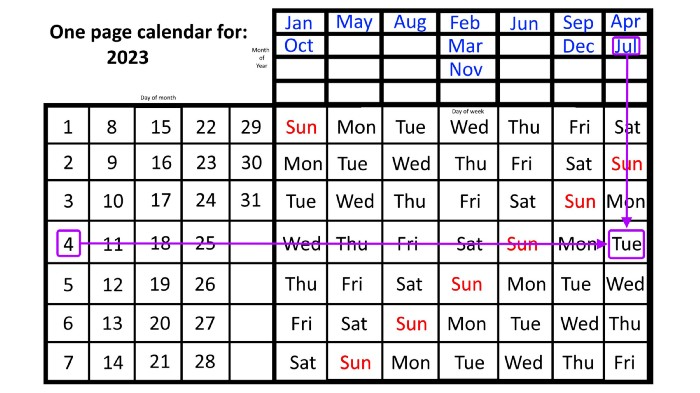

Compared to single-month calendars, the one-page calendar excels here. Take July 4th, for instance. To find its weekday:

start at the 4th of the month, on the left,

start at July, the month you know, in the upper right,

then slide your two fingers toward each other (the day finger right, the month finger down) until they meet: on a Tuesday in 2023.

That's how you find your selected day/month combination's weekday.

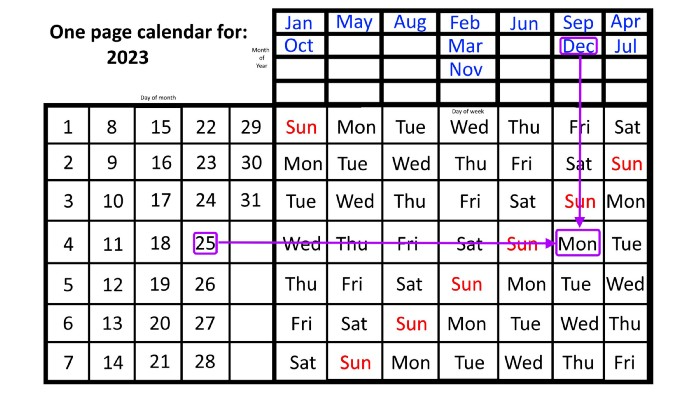

Another example: Christmas. Christmas Day is always December 25th, but unless your conventional calendar is open to December of the year in question, a question like "what day of the week is Christmas?" is difficult to answer.

Not so with the one-page calendar!

The days of the month are on the left; the months are at the top right. Put two fingers, one from each hand, on the date (25th) and the month (December). Slide the day hand to the right and the month hand down until they meet.

They meet on Monday, so December 25, 2023 is a Monday.
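Arithmetically, the two fingers do a small modular sum: the month finger supplies the weekday on which the month begins, and the day finger adds (date minus 1) days, where only the remainder after dividing by 7 matters. A minimal Python sketch of that idea; `day_of_week` is a hypothetical helper, and it reads the month's starting weekday from the standard `calendar` module instead of the printed chart.

```python
import calendar

DAY_NAMES = ["Monday", "Tuesday", "Wednesday", "Thursday",
             "Friday", "Saturday", "Sunday"]


def day_of_week(year: int, month: int, day: int) -> str:
    # "Month finger": the weekday on which the month begins (0 = Monday)
    month_start = calendar.weekday(year, month, 1)
    # "Day finger": the date sits (day - 1) days after the 1st, and
    # weekdays repeat every 7 days, so only the remainder matters
    return DAY_NAMES[(month_start + day - 1) % 7]


print(day_of_week(2023, 7, 4))    # → Tuesday
print(day_of_week(2023, 12, 25))  # → Monday
```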

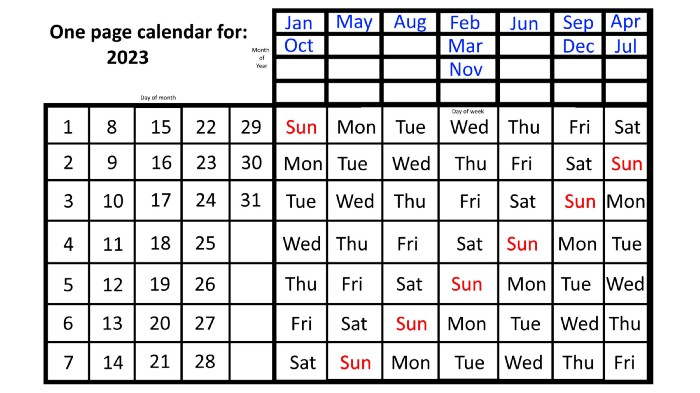

For 2023, that's fine, but what happens in 2024? Even worse, what if we want to know the day-of-the-week/day/month combo many years from now?

I think the one-page calendar shines here.

Except for the blue months in the upper-right corner of the one-page calendar, everything is the same year after year. The months also change in a consistent fashion.

Each non-leap year has 365 days, one more than a full 52 weeks (364 days). Since January 1, 2023 fell on a Sunday and 2023 has 365 days, we immediately know that December 31, 2023 also falls on a Sunday (which you can confirm using the one-page calendar) and that January 1, 2024 falls on a Monday. Then rearrange the months for 2024, keeping in mind that February has 29 days in a leap year.
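The one-or-two-day shift follows from 365 = 52 × 7 + 1 and 366 = 52 × 7 + 2. A minimal Python sketch that walks January 1st forward from the known 2023 starting day; `jan1_weekdays` is a hypothetical helper, and it assumes the standard Gregorian leap-year rule.

```python
DAY_NAMES = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]


def is_leap(year: int) -> bool:
    # Gregorian rule: divisible by 4, except century years not divisible by 400
    return year % 4 == 0 and (year % 100 != 0 or year % 400 == 0)


def jan1_weekdays(start_year: int, start_weekday: int, years: int) -> dict[int, str]:
    """Walk forward from one known January 1st (0 = Monday ... 6 = Sunday)."""
    result = {}
    weekday = start_weekday
    for year in range(start_year, start_year + years):
        result[year] = DAY_NAMES[weekday]
        # 365 % 7 == 1 and 366 % 7 == 2, so the next year starts 1 or 2 days later
        weekday = (weekday + (366 if is_leap(year) else 365)) % 7
    return result


# January 1, 2023 was a Sunday (index 6)
print(jan1_weekdays(2023, 6, 6))
# → {2023: 'Sun', 2024: 'Mon', 2025: 'Wed', 2026: 'Thu', 2027: 'Fri', 2028: 'Sat'}
```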

Note the differences between the 2023 and 2024 month placements. In 2023:

January and October began on the same day of the week, a Sunday,

May began on the following weekday, Monday,

August began on the next day, Tuesday,

February, March, and November began on the next weekday, Wednesday,

June began on the weekday after that, Thursday,

September and December began on the following weekday, Friday,

and, lastly, April and July began one day later still, on a Saturday.

Since 2024 is a leap year, February has 29 days, which disrupts the rhythm. The month placements change to:

January, April, and July begin on the same day of the week, a Monday,

October begins on the following day, Tuesday,

May begins on the next weekday, Wednesday,

February and August begin on the next weekday, Thursday,

March and November begin on the following weekday, Friday,

June begins on the weekday after that, Saturday,

and September and December begin one day later still, on a Sunday.
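Groupings like these can be generated rather than memorized. A minimal Python sketch (the `months_by_start_day` helper and the three-letter labels are illustrative choices, not part of the original calendar) that buckets each month by the weekday of its 1st:

```python
import calendar

DAY_NAMES = ["Mon", "Tue", "Wed", "Thu", "Fri", "Sat", "Sun"]
MONTHS = ["Jan", "Feb", "Mar", "Apr", "May", "Jun",
          "Jul", "Aug", "Sep", "Oct", "Nov", "Dec"]


def months_by_start_day(year: int) -> dict[str, list[str]]:
    """Group months by the weekday their 1st falls on, Monday first."""
    groups: dict[str, list[str]] = {day: [] for day in DAY_NAMES}
    for index, name in enumerate(MONTHS, start=1):
        # calendar.weekday returns 0 = Monday ... 6 = Sunday
        groups[DAY_NAMES[calendar.weekday(year, index, 1)]].append(name)
    return groups


for year in (2023, 2024):
    print(year, months_by_start_day(year))
```

For 2023 it files January and October under Sunday; for 2024 it files January, April, and July together under Monday.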

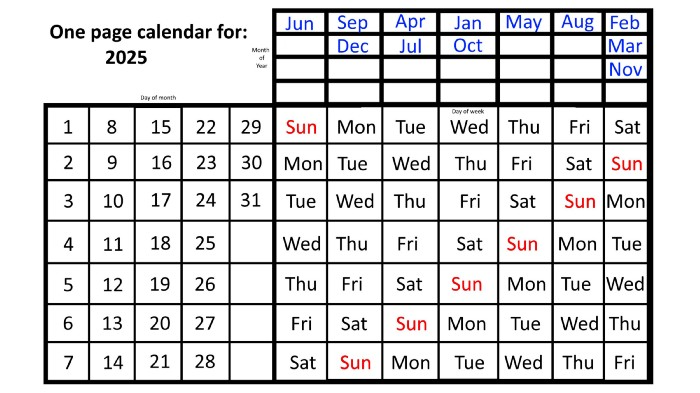

Because 2024 is a 366-day leap year, January 1, 2025 falls two weekdays later than January 1, 2024.

Looking at the 2025 calendar, you can see that the 2023 pattern of which months start on which days repeats! The only difference is a shift of three days of the week, because 2023 had one more day (365) than 52 full weeks (364), and 2024 had two more (366). Again:

January and October begin on the same day of the week, this time a Wednesday,

May begins on Thursday,

August begins on Friday,

February, March, and November all begin on a Saturday,

June begins on a Sunday,

September and December begin on Monday,

and April and July begin on Tuesday.

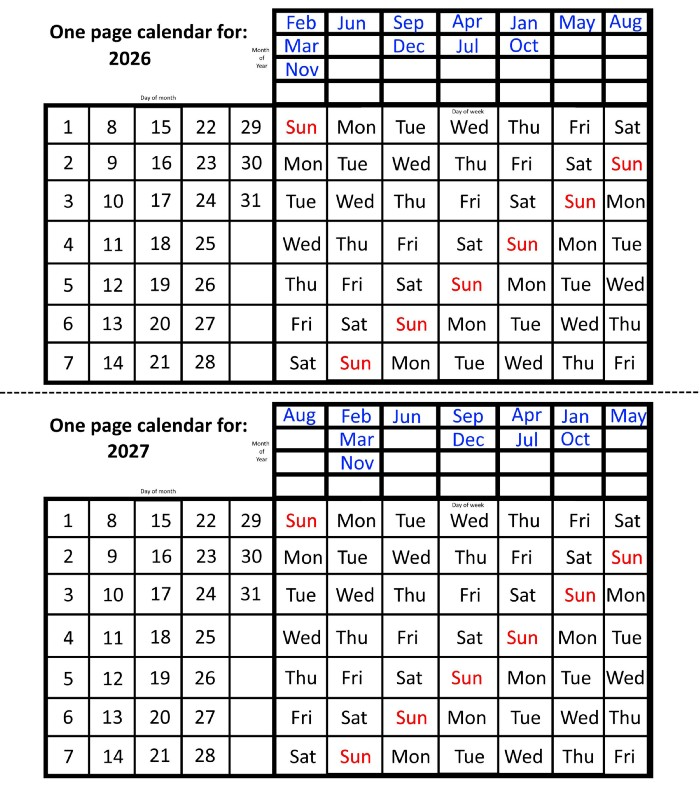

In 2026 and 2027, the year will commence on a Thursday and a Friday, respectively.

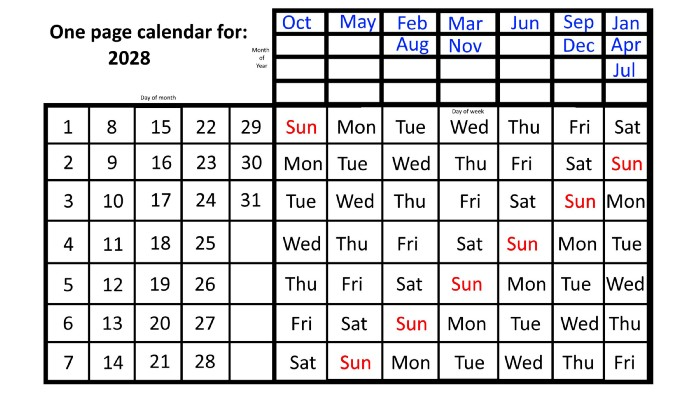

In 2028, we return to the leap-year arrangement of months. January 1, 2028 falls on a Saturday, and February, which begins three weekdays later on a Tuesday, will have 29 days. Thus:

January, April, and July all begin on a Saturday,

October begins on a Sunday,

May begins on a Monday,

February and August begin on a Tuesday,

March and November begin on a Wednesday,

June begins on a Thursday,

and September and December begin on a Friday.

This is great because there are only 14 calendar configurations: one for each of the seven non-leap years where January 1st begins on each of the seven days of the week, and one for each of the seven leap years where it begins on each day of the week.
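That count of 14 can be checked by brute force. A minimal Python sketch, using the standard `calendar` module and the fact that the Gregorian calendar repeats exactly every 400 years; the helper name `calendar_type` is illustrative.

```python
import calendar


def calendar_type(year: int) -> tuple[int, bool]:
    # January 1st's weekday (0 = Monday) plus leap status fixes every date in the year
    return calendar.weekday(year, 1, 1), calendar.isleap(year)


# The Gregorian calendar repeats exactly every 400 years (146,097 days is a
# whole number of weeks), so one full cycle shows every configuration.
configs = {calendar_type(year) for year in range(2001, 2401)}
print(len(configs))  # → 14
```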

The 2023 calendar will work again in 2034, 2045, 2051, 2062, 2073, 2079, 2090, 2102, 2113, and 2119. The gap between repeats is normally 6 or 11 years, but when it spans a non-leap year ending in 00, like 2100, it can stretch to 12 years or add an extra 6-year step.

The 2025 calendar repeats in 2031, 2042, 2053, 2059, 2070, 2081, 2087, 2098, 2110, and 2121.

Around 2100, the 2026 calendar repeats at 6-year intervals; overall, it recurs in 2037, 2043, 2054, 2065, 2071, 2082, 2093, 2099, 2105, 2111, and 2122.

The 2027 calendar repeats in 2038, 2049, 2055, 2066, 2077, 2083, 2094, 2100, 2106, and 2117, closely matching the 2026 pattern.

For leap years, the recurrence pattern is every 28 years when not passing a non-leap year ending in 00, or 12 or 40 years when we do. 2024's calendar repeats in 2052, 2080, 2120, 2148, 2176, and 2216; 2028's in 2056, 2084, 2124, 2152, 2180, and 2220.
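Repeat years like these can be found by stepping forward one year at a time and keeping the years whose January 1st weekday and leap status both match. A minimal Python sketch using the standard `calendar` module (`calendar_key` and `repeat_years` are hypothetical helpers, not from the original article):

```python
import calendar


def calendar_key(year: int) -> tuple[int, bool]:
    # Two years share a one-page calendar iff January 1st falls on the same
    # weekday and both are (or both are not) leap years
    return calendar.weekday(year, 1, 1), calendar.isleap(year)


def repeat_years(year: int, count: int) -> list[int]:
    """The next `count` years that can reuse `year`'s one-page calendar."""
    key = calendar_key(year)
    found: list[int] = []
    candidate = year + 1
    while len(found) < count:
        if calendar_key(candidate) == key:
            found.append(candidate)
        candidate += 1
    return found


print(repeat_years(2023, 10))
print(repeat_years(2024, 6))
```

Running `repeat_years(2026, 11)` is a handy way to audit hand-built lists: it returns 2037, 2043, 2054, 2065, 2071, 2082, 2093, 2099, 2105, 2111, and 2122.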

Knowing January 1st and whether it's a leap year lets you construct a one-page calendar for any year. Try it—you might find it easier than any other alternative!

You might also like

forkast

3 years ago

Three Arrows Capital collapse sends crypto tremors

Three Arrows Capital's Google search volume rose over 5,000%.

Three Arrows Capital, a Singapore-based cryptocurrency hedge fund, filed for Chapter 15 bankruptcy last Friday to protect its U.S. assets from creditors.

Three Arrows filed for bankruptcy on July 1 in New York.

Three Arrows was ordered liquidated by a British Virgin Islands court last week after defaulting on a $670 million loan from Voyager Digital. Three days later, the Singaporean government reprimanded Three Arrows for spreading misleading information and exceeding asset limits.

Three Arrows' troubles began with Terra's collapse in May, after it bought US$200 million worth of Terra's LUNA tokens in February, co-founder Kyle Davies told the Wall Street Journal. Three Arrows has failed to meet multiple margin calls since then, including from BlockFi and Genesis.

Three Arrows Capital, founded by Kyle Davies and Su Zhu in 2012, once managed $10 billion in crypto assets.

Bitcoin's price fell from US$20,600 to below US$19,200 after Three Arrows' bankruptcy petition. According to CoinMarketCap, BTC is now above US$20,000.

What does it mean?

Every action causes an equal and opposite reaction, per Newton's third law. Newtonian physics won't comfort Three Arrows investors, but future investors will thank them for their overconfidence.

Regulators are taking notice of crypto's meteoric rise and subsequent fall. Historically, authorities labeled the industry "high risk" to warn traditional investors against entering it. That attitude is changing. Regulators are moving quickly to regulate crypto to protect investors and prevent broader asset market busts.

The EU has reached a landmark deal that will regulate crypto asset sales and crypto markets across the 27-member bloc. The U.S. is close behind with a similar ruling, and smaller markets are also looking to improve safeguards.

For many, regulation is the only way to ensure the crypto industry survives the current winter.

Leah

3 years ago

The Burnout Recovery Secrets Nobody Is Talking About

What works and what’s just more toxic positivity

Just keep at it; you’ll get it.

I closed the Zoom call and immediately dropped my head. My open tabs were full of material on inspiration, burnout, and recovery.

I searched everywhere for ways to avoid burnout.

It wasn't that I needed to keep going, change my routine, employ 8D audio playlists, or come up with fresh ideas. I had several ideas and a schedule. I knew what to do.

I wasn't interested. I kept reading, changing my self-care and mental health routines, and writing even though it was tiring.

Since the World Health Organization recognized burnout as an occupational phenomenon in 2019, thousands have shared their experiences. It's spreading rapidly among writers.

What is the actual key to recovering from burnout?

Most top burnout articles emphasize prevention. Other lists offer repackaged self-care tips. Still more discuss mental health.

It's like the mid-2000s, when pink quotes about bubble baths saturated social media.

The self-care mania cost us all. Self-care is crucial, but using it to fix everything didn't work then and doesn't work now.

How can you recover from burnout?

Time

Are extended breaks actually good for you? Most people need a break every 62 days or so to avoid burnout.

Real-life burnout victims all took breaks. Perhaps not a long hiatus, but breaks nonetheless.

Burnout is slow and gradual. It takes little bits of your motivation and passion at a time. Sometimes it’s so slow that you barely notice or blame it on other things like stress and poor sleep.

Burnout doesn't come overnight; neither will recovery.

I don’t care what anyone else says the cure for burnout is. It has to be time because time is what gave us all burnout in the first place.

Steve QJ

4 years ago

Putin's War On Reality

The dictator's playbook.

Stalin's successor, Nikita Khrushchev, delivered a speech titled "On The Cult Of Personality And Its Consequences" in 1956, three years after Stalin’s death.

It was Stalin's grave abuse of power that caused untold harm to our party.

Stalin acted not by persuasion, explanation, or patient cooperation, but by imposing his ideas and demanding absolute obedience. […]

See where Stalin's mania for greatness led? He had lost all sense of reality.

The speech, which was not made public at the time, shook the Soviet Union and the Soviet Bloc. Once Stalin's "cult of personality" was exposed as a lie, only reality remained.

As I've watched the nightmare unfold in Ukraine, I've been reminded of that speech, mostly by Putin's repeated denials of reality.

There's his odd claim that Ukraine is run by drug addicts and Nazis (especially strange given that Volodymyr Zelenskyy, the Ukrainian president, is Jewish). Other claims portray Russia as a liberator rather than an occupier. For example, he portrays Luhansk and Donetsk as plucky, newly independent states when they have been totalitarian statelets for 8 years.

Putin seemed to have lost all sense of reality.

Maybe that's why his remarks to an oligarchs' gathering stood out:

Everything is a desperate measure. They gave us no choice. We couldn't do anything about their security risks. […] They could have put the country in jeopardy.

This is almost certainly true from Putin's perspective. But even to Putin, a Ukrainian military invasion of Russia must seem unlikely. So, what exactly is putting Russia's security in jeopardy? How could Ukraine's independence endanger Russia's existence?

The truth is the only thing that truly terrifies leaders like these.

Trump, the president of “alternative facts” and “fake news,” praised Putin's fabricated justifications for the Ukraine invasion. Russia tightened news censorship as news of its losses came in. It's no accident that modern dictatorships like Russia (and China and North Korea) restrict citizens' access to information.

Controlling what people see, hear, and think is the simplest way to control them. And Ukraine's recent efforts to join the European Union showed a country whose thoughts Putin couldn't control. With the Russian and Ukrainian peoples so close, he could not control their reality.

He appears to think this is a threat worth fighting NATO over.

It's easy to distance ourselves from history's great dictators, given the magnitude of the harm they caused. But the strategy they used is still in use today, albeit not to the same devastating effect.

The Kim dynasty has ruled North Korea for 74 years; Putin has ruled Russia for 19 years (using loopholes and even rewriting the constitution).

“Politicians and diapers must be changed frequently,” said Mark Twain, “and for the same reason.”

When their egos are threatened, they sabre-rattle, as in Kim Jong-un and Donald Trump's famous spat about the size of their... ahem, “nuclear buttons.” Or Putin's threats of mutual destruction this weekend.

Most importantly, they have cult-like control over their followers.

When a leader whose power is built on lies feels he is losing control of the narrative, things like Trump's Jan. 6 meltdown and Putin's current actions in Ukraine are unavoidable.

Leaders who try to control their people's reality will have to die to keep the illusion alive.
