More on Productivity

Pen Magnet

3 years ago

Why Google Staff Doesn't Work

Sundar Pichai unveiled Simplicity Sprint at Google's latest all-hands conference.

To boost employee efficiency.

Surprising, because few envisioned Google declaring a productivity drive.

Sundar Pichai's speech:

“There are real concerns that our productivity as a whole is not where it needs to be for the head count we have. Help me create a culture that is more mission-focused, more focused on our products, more customer focused. We should think about how we can minimize distractions and really raise the bar on both product excellence and productivity.”

The primary driver of Google's efficiency push:

Google's efficiency push follows a 13% quarterly revenue increase. In the same quarter last year, growth was 62%.

Market newcomers may argue that the previous year's figure was fuelled by post-Covid reopening and growing consumer spending. Investors aren't convinced. A promising company like Google can't afford to drop so quickly.

Google’s quarterly revenue growth stood at 13%, against 62% in the same quarter last year.

Google isn't alone. In my recent essay regarding 2025 programmers, I warned about the economic downturn's effects on FAAMG's workforce. Facebook had suspended hiring, and Microsoft had promised hefty bonuses for loyal staff.

In the same article, I predicted Google's troubles. Online advertising, especially the way Google and Facebook sell it using user data, is over.

Without a post-COVID revival, and amid uncertain global geopolitics, FAAMG and second-rung IT companies could be the first to fall.

Google has hardly ever discussed effectiveness:

At least not openly.

Amazon treats its employees like robots, even in software positions. It has significant turnover and a terrible reputation as a result. Because of this, it rarely loses money due to staff productivity.

Amazon trumps Google. In reality, it treats its employees poorly.

Google was the founding father of the modern-day open culture.

At Google, Larry and Sergey founded the IT industry's open culture. Silicon Valley called Google's internal democracy and transparency near anarchy. Management rarely imposed decisions on employees. Surveys and internal polls ensured everyone knew the company's direction and had a vote.

The 20% project allotment (weekly free time to build your own project) was Google's open-secret innovation component.

After Larry and Sergey's exit in 2019, this is Google's first profitability hurdle. Only Google insiders can answer these questions.

Would Google's investors compel the company's management to adopt an Amazon-style culture where the developers are treated like circus performers?

If so, would Google follow suit?

If so, how does Google go about doing it?

Before discussing Google's likely plan, let's examine programming productivity.

What determines a programmer's productivity is simple:

How would we answer Google's questions?

As a programmer, I'm more concerned about Simplicity Sprint's aftermath than its economic catalysts.

Large organizations don't care much about quarterly and annual productivity metrics. They have 10-year product-launch plans. If something seems horrible today, it's likely due to someone's lousy judgment 5 years ago who is no longer in the blame game.

Deconstruct our main question.

How exactly do you change the culture of the firm so that productivity increases?

How can you accomplish that without affecting your capacity to profit? There are countless ways to increase output without decreasing profit.

How can you accomplish this with little to no effect on employee motivation? (While not all employers care about it, in this case we are discussing the father of the open company culture.)

How do you do it for a 10-developer IT firm that is losing money versus a 170,000-developer organization with a trillion-dollar valuation?

When implementing a large-scale organizational change, success must be carefully measured.

The fastest way to do something is to do it right, no matter how long it takes.

You need clearly defined group/team/role segregation and solid pass/fail metrics so that:

Performers can be rewarded

Average employees can be inspired to improve

Underachievers can receive assistance or, in the worst-case scenario, rehabilitation

As a 20-year programmer, I associate productivity with greatness.

Doing something well, no matter how long it takes, is the fastest way to do it.

Let's discuss a programmer's productivity.

Why productivity is a strange term in programming:

Productivity is work per unit of time.

Time is money. This is an economic proverb. More hours worked, more pay. Longer projects cost more.

As a buyer, you desire a quick supply. As a business owner, you want employees who perform at full capacity, creating more products to transport and boosting your profits.

All economic metrics encourage production because of our obsession with it. Productivity is the only organic way a nation may increase its GDP.

"Time is money" is not just a proverb, but an economic fact.

Applying the same productivity theory to programming gets problematic. A computer is an automation machine. Its capacity depends on the software its master writes.

Today, a sophisticated program can process a billion records in a few hours. Creating one takes a competent coder and the necessary infrastructure. Learning, designing, coding, testing, and iterations take time.

Programming productivity isn't linear, unlike manufacturing and maintenance.

Average programmers produce code every day yet miss deadlines. Expert programmers go days without coding. End of sprint, they often surprise themselves by delivering fully working solutions.

Reversing the programming duties has no effect. Experts aren't needed for productivity.

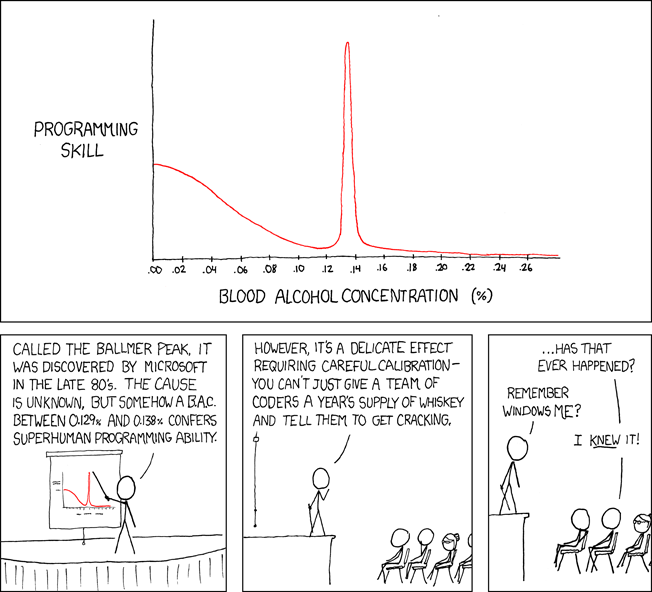

These patterns remind me of an XKCD comic.

Programming productivity depends on two factors:

The capacity of the programmer and his or her command of the principles of computer science

His or her productive bursts, how often they occur, and how long they last as they engineer the answer

At some point, productivity measurement becomes Schrödinger’s cat.

Product companies measure productivity using use cases, classes, functions, or LOCs (lines of code). In days of data-rich source control systems, programmers' merge requests and/or commits are the most preferred yardstick. Companies assess productivity by tickets closed.

Every organization eventually has trouble measuring productivity. Finer measurements create more chaos. Every measure compares apples to oranges (or worse, apples with aircraft.) On top of the measuring overhead, the endeavor causes tremendous and unnecessary stress on teams, lowering their productivity and defeating its purpose.

Macro productivity measurements make sense. Amazon's factory-era management has done it, but at great cost.

Google can pull it off if it wants to.

What Google meant in reality when it said that employee productivity has decreased:

When Google considers its employees unproductive, it doesn't mean they don't complete enough work in the allotted period.

They can't multiply their work's influence over time.

Programmers who produce excellent modules or products are unsure how to use them.

The best data scientists are unable to add the proper parameters in their models.

Despite having a great product backlog, managers struggle to recruit resources with the necessary skills.

Product designers who frequently develop and A/B test newer designs are unaware of why measures are inaccurate or whether they have already reached the saturation point.

Most ignorant: All of the aforementioned positions are aware of what to do with their deliverables, but neither their supervisors nor Google itself have given them sufficient authority.

So, Google employees aren't productive.

How to fix it?

Business analysis: White suits introducing novel items can interact with customers from all regions. Track analytics events proactively, especially the infrequent ones.

SOLID, DRY, TEST, and AUTOMATION: Do less + reuse. Use boilerplate code creation. If something already exists, don't implement it yourself.

Build feature-building capabilities: Average programmers create N features in N hours. Expert programmers produce 1 capability in N hours, and that capability lets average programmers build an endless number of features.

Work on projects that will have a positive impact: Use the same algorithm to search for images on YouTube rather than the Mars surface.

Avoid tasks that can only be measured in terms of time linearity at all costs (if a task can be completed in N minutes, then M copies of the same task would cost M*N minutes).

In conclusion:

Software development isn't linear. Why should the makers be measured?

The Big O Notation

I'm discussing a new way to quantify programmer productivity. (It applies to other professions, but that's another subject)

The Big O notation expresses the paradigm (the algorithmic performance concept programmers learn by rote to ace their Google interview)

Google (or any large corporation) can do this.

Sort organizational roles into categories and specify their impact vs. time objectives. A CXO role's time vs. effect function, for instance, has a complexity of O(log N), meaning that if a CEO raises his or her work time by 8x, the result only increases by 3x.

Plot each employee's time on the X axis and influence on the Y axis.

Add a multiplier for Y-axis values to the productivity equation to make business objectives matter. (Example values: Support = 5, Utility = 7, and Innovation = 10).

Compare employee scores within comparable categories (developers vs. developers, CXOs vs. CXOs, etc.) and reward or help employees based on whether they are ahead of or behind the pack.

After measuring every employee's inventiveness, it's straightforward to help underachievers and praise achievers.
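The four steps above can be sketched in a few lines of Python. This is only an illustration under assumptions, not a scoring system the article defines: the growth curves, the exponent 2 standing in for O(n^x), the `Employee` fields, and the way the multiplier is combined with measured impact are all hypothetical. Only the multiplier values (Support = 5, Utility = 7, Innovation = 10) come from the text.

```python
import math
from dataclasses import dataclass

# Assumed growth curves for impact as a function of time invested.
# The exponent 2 is an arbitrary stand-in for the O(n^x) category.
GROWTH = {
    "O(1)": lambda n: 1.0,
    "O(log n)": lambda n: math.log2(n),
    "O(n)": lambda n: float(n),
    "O(n log n)": lambda n: n * math.log2(n),
    "O(n^x)": lambda n: float(n) ** 2,
}

# Business-objective multipliers: the example values given in the text.
MULTIPLIER = {"Support": 5, "Utility": 7, "Innovation": 10}


@dataclass
class Employee:
    name: str
    category: str    # key into GROWTH
    objective: str   # key into MULTIPLIER
    hours: float     # X axis: time invested
    impact: float    # Y axis: measured impact (the unit is left open here)


def expected_impact(category: str, hours: float) -> float:
    """Impact that the role's curve predicts for the given time."""
    return GROWTH[category](hours)


def score(e: Employee) -> float:
    """Weighted impact relative to the role's expected curve."""
    expected = expected_impact(e.category, e.hours)
    if expected <= 0:
        raise ValueError("expected impact must be positive")
    return MULTIPLIER[e.objective] * e.impact / expected


def rank_by_category(employees):
    """Group employees by category, best score first, so like is compared with like."""
    groups = {}
    for e in employees:
        groups.setdefault(e.category, []).append(e)
    return {cat: sorted(members, key=score, reverse=True)
            for cat, members in groups.items()}
```

With a base-2 logarithm, `expected_impact("O(log n)", 8)` evaluates to 3, which is the "8x the time, 3x the result" figure used for the CXO role. Ranking only within a category keeps the comparison apples to apples.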

Example of a Big(O) Category:

If I ran Google (God forbid, its worst days are far off), here's how I'd classify it. You can categorize Google employees however you choose.

The Google interview truth:

O(1) < O(log n) < O(n) < O(n log n) < O(n^x) where all logarithmic bases are < n.

O(1): Customer service workers' hours have no impact on firm profitability or customer pleasure.

O(log n): CXOs. Most of their time is spent on travel, strategic meetings, parties, and/or meetings with minimal floor-level influence. They're good at launching new products but bad at pivoting without disaster. Their directions are being followed.

O(n): DevOps, UX designers, testers. Agile projects revolve around deployment. DevOps controls the levers. Their automation secures results in subsequent cycles.

UX/UI Designers must still prototype UI elements despite improved design tools.

All test cases are proportional to use cases/functional units, hence testers' work is O(N).

O(n log n): Architects. Their effort improves code quality. Their right/wrong interference affects product quality and rollout decisions even after the design is set.

O(n^x): Core developers. Only core developers can write code and own requirements. When people understand and own their labor, the output improves dramatically. A single character error can spread undetected throughout the SDLC and cost millions.

Core devs introduce/eliminate 1000x bugs, refactoring attempts, and regression. Following our earlier hypothesis.

The fastest way to do something is to do it right, no matter how long it takes.

Conclusion:

Google is at the liberal extreme of the employee-handling spectrum

Microsoft faced an existential crisis after 2000. It didn't choose Amazon's data-driven people management to revitalize itself.

Instead, it trusted its developers. It welcomed emerging technologies and opened up to open source, something it previously opposed.

Google is too lax in its employee-handling practices. With that foundation, it can only follow Amazon, no matter how carefully.

Any attempt to redefine people's measurements will affect the organization emotionally.

The more Google compares apples to apples, the higher its chances for future rebirth.

Jari Roomer

3 years ago

5 ways to never run out of article ideas

“Perfectionism is the enemy of the idea muscle. " — James Altucher

Writer's block is a typical explanation for low output. Success requires productivity.

In four years of writing, I've never had writer's block. And neither should you.

You'll never run out of content ideas if you follow a few tactics. No, I'm not overpromising.

Take Note of Ideas

Brains are strange machines. They go blank when it's time to write. Nothing. We get the best article ideas when we're away from our workstation.

In the shower

Driving

In our dreams

Walking

During dull chats

Meditating

In the gym

No accident. The best ideas come in the shower, in nature, or while exercising.

(Your workstation is the worst place for creativity.)

The brain has time and space to link 'dots' of information during rest. It's eureka! New idea.

If you're serious about writing, capture thoughts as they come.

Immediately write down a new thought. Capture it. Don't miss it. Your future self will thank you.

As a writer, entrepreneur, or creative, letting ideas slide is bad.

I recommend using Evernote, Notion, or your device's basic note-taking tool to capture article ideas.

It doesn't matter which app you use as long as you collect article ideas.

When you practice 'idea-capturing' enough, you'll have an unending list of article ideas when writer's block hits.

High-Quality Content

The more books, films, Medium pieces, and YouTube videos I consume, the more I'm inspired to write.

What you consume shapes who you are.

Celebrity gossip and fear-mongering news won't help your writing. It won't help you write regularly.

Instead, read expert-written books. Watch documentaries to improve your worldview. Follow amazing people online.

Develop Your 'Idea Muscle'

Daily creativity takes practice. The more you exercise your 'idea muscle,' the easier it is to generate article ideas.

I've trained my 'idea muscle' using James Altucher's exercise.

Write 10 ideas daily.

Write ten book ideas every day if you're an author. Write down 10 business ideas per day if you're an entrepreneur. Write down 10 investing ideas per day if you're an investor.

Write 10 article ideas per day. You become a content machine.

The exercise doesn't say you need ten amazing ideas. You don't need 10 great ideas. Just ten ideas, regardless of quality.

Like at the gym, reps are what matter. With each article idea, you gain creativity. Writer's block is no match for this workout.

Quit Perfectionism

Perfectionism is bad for writers. You'll have bad articles. You'll have bad ideas. That's OK. It's part of being creative.

Writing success requires prolificacy. You can't have 'perfect' articles.

“Perfectionism is the enemy of the idea muscle. Perfectionism is your brain trying to protect you from harm.” — James Altucher

Vincent van Gogh painted 900 pieces. The Starry Night is the most famous.

Thomas Edison invented 1093 things, but not all were as important as the lightbulb or the first movie camera.

Mozart composed nearly 600 compositions, but only Serenade No. 13 became popular.

Always do your best. Perfectionism shouldn't stop you from working. Write! Publish. Make. Even if imperfect.

Write Your Story

Living an interesting life gives you plenty to write about. If you travel a lot, share your stories or lessons learned.

Describe your business's successes and shortcomings.

Share your experiences with difficulties or addictions.

More experiences equal more writing material.

If you stay indoors, perusing social media, you won't be inspired to write.

Have fun. Travel. Strive. Build a business. Be bold. Live a life worth writing about, and you won't run out of material.

Todd Lewandowski

3 years ago

DWTS: How to Organize Your To-Do List Quickly

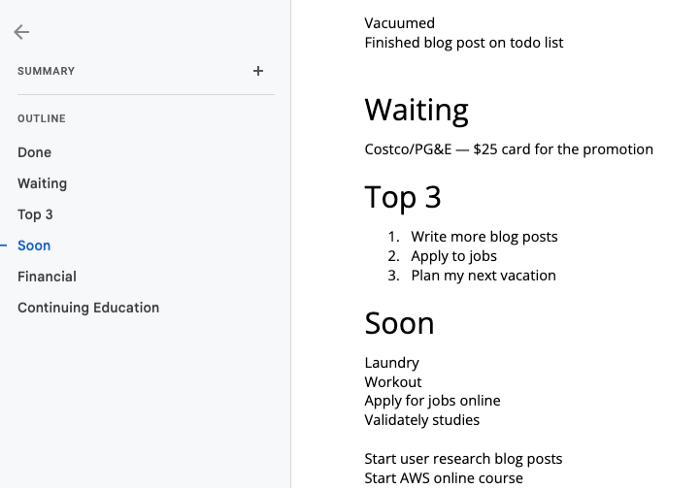

Don't overcomplicate to-do lists. DWTS (Done, Waiting, Top 3, Soon) organizes your to-dos.

How Are You Going to Manage Everything?

Modern America is busy. Work involves meetings. Slack messages arrive at any time. Many software tools offer @-mention notifications. Then there are emails.

Work obligations continue. At home, there are friends, family, bills, chores, and fun things.

How are you going to keep track of it all? Enter the todo list. It’s been around forever. It’s likely to stay forever in some way, shape, or form.

Everybody has their own system. You probably modified something from middle school. Post-its? Maybe it’s an app? Maybe both, another system, or none.

I suggest a format that has worked for me in 15 years of professional and personal life.

Try it out and see if it works for you. If not, no worries. You do you! Hopefully though you can learn a thing or two, and I from you too.

It is merely a Google Doc, yes.

It's a giant list. One task per line. Indent subtasks on a new line. Add or move new tasks as needed.

I recommend using Google Docs. It's easy to use and flexible for structuring.

Prioritizing these tasks is key. I organize them using DWTS (Done, Waiting, Top 3, Soon). Chronological order is good because it implicitly provides both a priority (high, medium, low) and an ETA (now, soon, later).

Yes, I recognize the similarities to DWTS (Dancing With The Stars) TV Show. Although I'm not a fan, it's entertaining. The acronym is easy to remember and adds fun to something dull.

What each section contains

Done

All tasks' endpoint. Finish here. Don't worry about it again.

Waiting

You're blocked and can't continue. Blocked tasks usually need someone. Write each entry as the person, then the task, so you know who you're waiting on.

Blocked tasks shouldn't stay blocked for long. After a while, remind the person kindly. If people don't help you out of kindness, they will if you're persistent.

Top 3

Mental focus areas. These can be short- to mid-term goals or recent accomplishments. 2 to 5 is a good number to stay focused.

Top 3 reminds us to prioritize. If they don't fit your Top 3 goals, delay them.

Every 1:1 at work is a project update. Another chance to list your top 3. You should know your Top 3 well and be able to discuss them confidently.

Soon

Here's your short-term to-do list. Rank them from highest to lowest.

I usually subdivide it with empty lines. First is what I have to do today, then week, then month. Subsections can be arranged however you like.

Backlog by Theme

Tasks that aren’t in your short or medium future go into the backlog.

Eventually you’ll complete these tasks, assign them to someone else, or mark them as “won’t do” (like done but in another sense).

Backlog tasks don't need to be organized chronologically because their timing and priority may change. Organize them by theme. When planning strategically, you can choose themes to focus on, and those become future Top 3 topics.
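If you prefer code to a Google Doc, the same structure can be sketched as a small Python class. This is only one possible modeling, with assumptions: the class and method names are made up for illustration, the "person: task" format for Waiting entries is my guess at a readable convention, and the backlog is kept as a plain theme-to-tasks mapping.

```python
from dataclasses import dataclass, field

# The four DWTS sections, in the order they appear in the list.
SECTIONS = ("Done", "Waiting", "Top 3", "Soon")


@dataclass
class TodoList:
    # One list of task strings per section; Soon is ordered by priority.
    sections: dict = field(default_factory=lambda: {s: [] for s in SECTIONS})
    # Backlog is grouped by theme rather than ordered by time.
    backlog: dict = field(default_factory=dict)

    def add(self, task: str, section: str = "Soon") -> None:
        self.sections[section].append(task)

    def _remove(self, task: str) -> None:
        for tasks in self.sections.values():
            if task in tasks:
                tasks.remove(task)
                return
        raise ValueError(f"unknown task: {task}")

    def block(self, task: str, person: str) -> None:
        """Move a task to Waiting and record who it is waiting on."""
        self._remove(task)
        self.sections["Waiting"].append(f"{person}: {task}")

    def finish(self, task: str) -> None:
        """Move a task to Done, the endpoint of every task."""
        self._remove(task)
        self.sections["Done"].append(task)

    def render(self) -> str:
        """One section name per line, with its tasks indented beneath it."""
        lines = []
        for name in SECTIONS:
            lines.append(name)
            lines.extend(f"  {task}" for task in self.sections[name])
        return "\n".join(lines)
```

A typical morning would be a few `add` calls, a `block` for anything stuck on someone else, and `finish` as the day goes on. The doc still wins on flexibility, but the sketch shows how little structure the system needs.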

More Tips on Todos

Decide Upon a Morning Goal

Morning routines are universal. Coffee and Wordle. My to-do list is next. Two things:

As needed, update the to-do list: based on the events of yesterday and any fresh priorities.

Pick a few tasks to complete today: Pick a few goals that you know you can complete today. Move them to the top of the Soon section and push the remainder below. I typically select a few tasks I am confident I can complete along with one stretch task that might extend into tomorrow.

Finally. By setting and achieving small goals every day, you feel accomplished and make steady progress on medium and long-term goals.

Tech companies call this a daily standup. Everyone shares what they did yesterday, what they're doing today, and any blockers. The name comes from a tradition of holding meetings while standing up to keep them short. Even though it's virtual, everyone still wants a quick meeting.

Your team may or may not need daily standups. Make a daily review a habit with your coffee.

Review Backwards & Forwards on a regular basis

While you're updating your to-do list daily, take time to review it.

Review your Done list. Remember things you're proud of and things that could have gone better. Your Done list can be long. Archive it so your main to-do list isn't overwhelming.

Future-gaze. What you considered important may no longer be. Reorder tasks. Backlog grooming is a workplace term.

Backwards-and-forwards reviews aren't required often. Every 3-6 months is fine. They help you see the forest as often as the trees.

Final Remarks

Keep your list simple. Done, Waiting, Top 3, Soon. These are the necessary sections. If you like, add more subsections; otherwise, keep it simple.

I recommend a morning review. By having clear goals and an action-oriented attitude, you'll be successful.

You might also like

Ryan Weeks

3 years ago

Terra fiasco raises TRON's stablecoin backstop

After Terra's algorithmic stablecoin collapsed in May, TRON announced a plan to increase the capital backing its own stablecoin.

USDD, a near-carbon copy of Terra's UST, arrived on the TRON blockchain on May 5. TRON founder Justin Sun says USDD will be overcollateralized after initially being pegged algorithmically to the US dollar.

A reserve of cryptocurrencies and stablecoins will be kept at 130 percent of total USDD issuance, he said. TRON described the collateral ratio as "guaranteed" and said it would begin publishing real-time updates on June 5.

Currently, the reserve contains 14,040 bitcoin (around $418 million), 140 million USDT, 1.9 billion TRX, and 8.29 billion TRX in a burning contract.

"We want to hybridize USDD," Sun said. "We have an algorithmic stablecoin and TRON DAO Reserve."

Algorithmic failure

USDD was designed to incentivize arbitrageurs to keep its price pegged to the US dollar by trading TRX, TRON's token, and USDD. Like Terra, TRON signaled its intent to establish a bitcoin and cryptocurrency reserve to support USDD in extreme market conditions.

Still, Terra's UST failed despite these safeguards. The stablecoin veered sharply away from its dollar peg in mid-May, bringing down Terra's LUNA and wiping out $40 billion in value in days. In a frantic attempt to restore the peg, billions of dollars in bitcoin were sold and unprecedented volumes of LUNA were issued.

Sun believes USDD, which has a total circulating supply of $667 million, can be backed up.

"Our reserve backing is diversified. Bitcoin and stablecoins are included. Circle's USDC will be a small part of the reserve," he said.

TRON's news release lists the reserve's assets as bitcoin, TRX, USDC, USDT, TUSD, and USDJ.

All Bitcoin addresses will be signed so everyone knows they belong to us, Sun said.
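To put the 130 percent target in perspective, here is a rough back-of-the-envelope sketch in Python using only the figures quoted above. It rests on assumptions: the bitcoin holding is taken at its quoted dollar value of about $418 million, USDT is counted at $1, only the 1.9 billion TRX held directly is counted (the TRX in the burning contract is left out), and the TRX price is treated as the unknown. None of this reflects how TRON itself computes the ratio.

```python
TARGET_RATIO = 1.30             # 130 percent of total USDD issuance
USDD_SUPPLY_USD = 667_000_000   # circulating supply quoted in the article
BTC_USD = 418_000_000           # 14,040 bitcoin at the quoted value
USDT_USD = 140_000_000          # 140 million USDT, assumed to be worth $1 each
TRX_HELD = 1_900_000_000        # excludes the burning contract (assumption)


def collateral_ratio(reserve_usd: float, supply_usd: float) -> float:
    """Reserve value divided by stablecoin supply."""
    return reserve_usd / supply_usd


def required_reserve(supply_usd: float, target: float = TARGET_RATIO) -> float:
    """Reserve value needed to meet the target ratio."""
    return supply_usd * target


def breakeven_trx_price() -> float:
    """TRX price at which the counted reserve just reaches the target."""
    shortfall = required_reserve(USDD_SUPPLY_USD) - (BTC_USD + USDT_USD)
    return shortfall / TRX_HELD


if __name__ == "__main__":
    print(f"Required reserve: ${required_reserve(USDD_SUPPLY_USD):,.0f}")
    print(f"Ratio without TRX: {collateral_ratio(BTC_USD + USDT_USD, USDD_SUPPLY_USD):.2%}")
    print(f"Break-even TRX price: ${breakeven_trx_price():.4f}")
```

Under these assumptions, bitcoin and USDT alone cover less than the full supply, so whether the reserve clears 130 percent depends on how TRX is valued and on which TRX holdings are counted.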

Not giving in

Sun said that the crypto industry needs "decentralized" stablecoins that regulators can't touch.

Sun said the Luna Foundation Guard, a Singapore-based non-profit that raised billions in cryptocurrency to buttress UST, mismanaged the situation by trying to sell to panicked investors.

"We must be ahead of the market," he said. "We want to stabilize the market and reduce volatility."

Currently, TRON finances most of its reserve directly, but Sun says the company hopes to add external capital soon.

Before its demise, UST holders could park the stablecoin in Terra's lending platform Anchor Protocol to earn 20% interest, which many deemed unsustainable. TRON's JustLend is similar. Sun hopes to raise annual interest rates from 17.67% to "around 30%."

This post is a summary.

Woo

3 years ago

How To Launch A Business Without Any Risk

> Say Hello To The Lean-Hedge Model

People think starting a business requires significant debt and investment. Like Shark Tank, you need a world-changing idea. I'm not saying to avoid investors or brilliant ideas.

Investing is essential to build a genuinely profitable company. Think Apple or Starbucks.

Entrepreneurship is risky because many people go bankrupt from debt. As beginners, we shouldn't take on that risk. Instead, use lean-hedge.

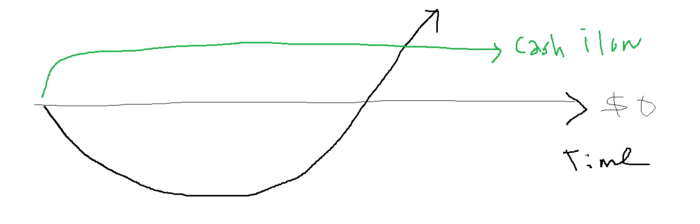

Simply defined, you construct a cash-flow business to hedge against long-term investment-heavy business expenses.

What the “fx!$rench-toast” is the lean-hedge model?

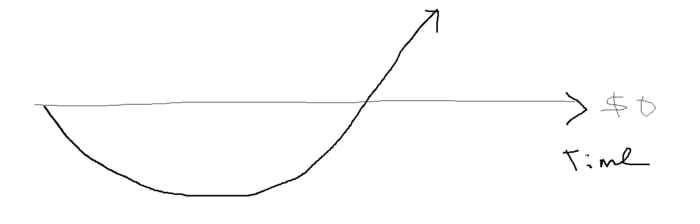

When you start a business, your money should move down, down, down, then up when it becomes profitable.

Many people don't survive the business's initial losses and debt. What if we created a cash-flow business BEFORE we started our Starbucks to hedge against its initial expenses?

Lean-hedge has two stages. First, start a cash-flow business. A cash-flow business takes minimal investment and usually involves sweat and time.

Let’s take a look at some examples:

A Translation company

Personal portfolio website (you make a site then you do cold e-mail marketing)

Freelancing (Upwork, Fiverr).

Educational business.

Infomarketing. (You design a knowledge-based product. You sell the info).

Online fitness/diet/health coaching ($50-$300/month, calls, training plan)

Amazon e-book publishing. (Medium writers do this)

YouTube, cash-flow channel

A web development agency (I'm a dev, but if you're not, a graphic design agency, etc.) (Sell your time.)

Digital Marketing

Online paralegal (A million lawyers work in the U.S).

Some dropshipping (organic TikTok dropshipping, where you create content to drive traffic to your Shopify store instead of spending money on ads).

(Disclaimer: My first two cash-flow enterprises, which were language teaching, failed terribly. My translation firm is now booming because B2B e-mail marketing is easy.)

Then crossover occurs: your long-term business starts earning more money than your cash-flow business.

My cash-flow business (freelancing, translation) makes $7k+/month.

I’ve decided to start a slightly more investment-heavy digital marketing agency

Here are the anticipated business's time- and money-intensive investments:

($$$) Figma/UI-UX design by a top front-end designer (in negotiation)

(Time) A little copywriting (I will do this myself)

($$) Creating an animated webpage with HTML (in negotiation)

(Time) Backend development (I'll do this myself using Laravel)

($$) Logo design

($) Logo intro video

(Time) Video intro (I’ll edit this myself with Premiere Pro)

etc.

Then evaluate product, place, price, and promotion. Consider promotion and pricing.

The lean-hedge model's point is:

Don't gamble. Avoid debt. First create a cash-flow project, then grow it steadily.

Check out my previous posts on “Nightmare Mode” (which teaches you how to make work as interesting as video games) and “Why most people can't escape a 9-5” to learn how to develop a cash-flow business.

Henrique Centieiro

4 years ago

DAO 101: Everything you need to know

Maybe you'll work for a DAO next! The Flamingo DAO holds over $1 billion in NFTs. Another DAO tried to buy the NFL team Denver Broncos. The UkraineDAO raised over $7 million for Ukraine. The PleasrDAO paid $4m for a Wu-Tang Clan album that belonged to the “pharma bro.”

DAOs move billions and employ thousands. So learn what a DAO is, how it works, and how to create one!

DAO? So, what? Why is it better?

DAO stands for Decentralized Autonomous Organization. Some people like to also refer to it as Digital Autonomous Organization, but I prefer the former.

They are virtual organizations. In the real world, you have organizations or companies right? These firms have shareholders and a board. Usually, anyone with authority makes decisions. It could be the CEO, the Board, or the HIPPO. If you own stock in that company, you may also be able to influence decisions. It's now possible to do something similar but much better and more equitable in the cryptocurrency world.

This article informs you:

What DAOs are, the most common types, and their advantages and disadvantages over traditional companies

Is a DAO legally recognized?

How secure is a DAO?

I’m ready whenever you are!

A DAO is a type of company that is operated by smart contracts on the blockchain. Smart contracts are computer code that self-executes our commands. Those contracts can be any. Most second-generation blockchains support smart contracts. Examples are Ethereum, Solana, Polygon, Binance Smart Chain, EOS, etc. I think I've gone off topic. Back on track. Now let's go!

Unlike traditional corporations, DAOs are governed by smart contracts. Unlike traditional company governance, DAO governance is fully transparent and auditable. That's one of the things that sets it apart. The clarity!

A DAO is like a traditional company with one major difference: it is decentralized. DAOs are more ‘democratic' than traditional companies because anyone can vote on decisions. Anyone! In a DAO, we (you and I) make the decisions, not the top-shots. We are the CEO and investors. A DAO gives its community members power. We get to decide.

As long as you are a stakeholder, i.e. own a portion of the DAO tokens, you can participate in the DAO. Tokens are open to all. It's just a matter of exchanging it. Ownership of DAO tokens entitles you to exclusive benefits such as governance, voting, and so on. You can vote for a move, a plan, or the DAO's next investment. You can even pitch for funding. Any ‘big' decision in a DAO requires a vote from all stakeholders. In this case, ‘token-holders'! In other words, they function like stock.
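To make the stock analogy concrete, here is a toy tally in Python. It is a sketch under assumptions, not how any particular DAO works: it assumes one token equals one vote and a simple majority of the tokens that voted, and the function name, holder names, and balances are made up. Real DAOs run this logic in smart contracts and often add quorums, delegation, or other rules.

```python
def proposal_passes(balances, votes, threshold=0.5):
    """Token-weighted vote: pass if 'yes' tokens exceed the threshold share.

    balances: holder -> number of governance tokens held
    votes:    holder -> True (yes) or False (no)
    Votes from addresses holding no tokens are ignored.
    """
    cast = sum(balances[h] for h in votes if h in balances)
    if cast == 0:
        return False  # nobody with tokens voted
    yes = sum(balances[h] for h, choice in votes.items()
              if choice and h in balances)
    return yes / cast > threshold


# Hypothetical token distribution, for illustration only.
balances = {"alice": 60, "bob": 30, "carol": 10}

# Two holders vote yes, but together they hold fewer tokens than alice.
print(proposal_passes(balances, {"alice": False, "bob": True, "carol": True}))
```

Under this scheme the head count doesn't decide the outcome, the token count does, which is exactly what it means for tokens to function like stock.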

What are the 5 DAO types?

Different DAOs exist. We will categorize decentralized autonomous organizations based on their mode of operation, structure, and even technology. Here are a few. You've probably heard of them:

1. DeFi DAO

These DAOs offer DeFi (decentralized finance) services via smart contract protocols. They use tokens to vote on protocol and financial changes. Uniswap, Aave, Maker DAO, and Olympus DAO are some examples. Most of these DAOs manage billions.

Maker DAO was one of the first protocols ever created. It is a decentralized organization on the Ethereum blockchain that allows cryptocurrency lending and borrowing without a middleman.

Maker DAO issues DAI, a stable coin. DAI is a top-rated USD-pegged stable coin.

Maker DAO has an MKR token. These token holders are in charge of adjusting the Dai stable coin policy. Simply put, MKR tokens represent DAO “shares”.

2. Investment DAO

Investors pool their funds and make investment decisions. Investing in new businesses or art is one example. Investment DAOs help DeFi operations pool capital. The Meta Cartel DAO is a community of people who want to invest in new projects built on the Ethereum blockchain. Instead of investing one by one, they want to pool their resources and share ideas on how to make better financial decisions.

Other investment DAOs include the LAO and Friends with Benefits.

3. Grant/Launchpad DAO

In a grant DAO, community members contribute funds to a grant pool and vote on how to allocate and distribute them. These DAOs fund new DeFi projects. Those in need only need to apply. The Moloch DAO is a great Grant DAO. The tokens are used to allocate capital. Also see Gitcoin and Seedify.

4. Collector DAO

I debated whether to put it under ‘Investment DAO' or leave it alone. It's a subset of investment DAOs. This group buys non-fungible tokens, artwork, and collectibles. The market for NFTs has recently exploded, and it's time to investigate. The Pleasr DAO is a collector DAO. One copy of Wu-Tang Clan's "Once Upon a Time in Shaolin" cost the Pleasr DAO $4 million. Pleasr DAO is known for buying Doge meme NFT. Collector DAOs include the Flamingo, Mutant Cats DAO, and Constitution DAOs. Don't underestimate their websites' "childish" style. They have millions.

5. Social DAO

These are social networking and interaction platforms. For example, Decentraland DAO and Friends With Benefits DAO.

What are the DAO Benefits?

Here are some of the benefits of a decentralized autonomous organization:

- They are trustless. You don’t need to trust a CEO or management team

- It can’t be shut down unless a majority of the token holders agree. The government can't shut it down because it isn't centralized.

- It's fully democratic

- It is open-source and fully transparent.

What about DAO drawbacks?

We've been saying DAOs are the bomb? But are they really the shit? What could go wrong with DAO?

DAOs may contain bugs. If they are hacked, the results can be catastrophic.

No trade secrets exist. Because the smart contract is transparent and coded on the blockchain, it can be copied. It may be used by another organization without credit. Maybe DAOs should use Secret, Oasis, or Horizen blockchain networks.

Are DAOs legally recognized??

In most countries, DAO regulation is nonexistent. It's unclear. Most DAOs don’t have a legal personality. The Howey Test and the Securities Act of 1933 determine whether DAO tokens are securities. Although most countries follow the US, this applies only in the US. Wyoming became the first state to recognize DAOs as legal entities in July 2021 after passing a DAO bill. DAOs registered in Wyoming are thus legally recognized as business entities in the US and receive the same legal protections as a Limited Liability Company.

In terms of cyber-security, how secure is a DAO?

Blockchains are secure. However, smart contracts may have security flaws or bugs. The risk can be reduced by third-party smart contract reviews, testing, and auditing.

Finally, Decentralized Autonomous Organizations are timely. Let us examine the current situation: the invasion of Ukraine. A DAO was formed to help Ukrainian troops fighting the Russians. It was named Ukraine DAO. Pleasr DAO, NFT studio Trippy Labs, and Russian art collective Pussy Riot organized this fundraiser. CoinDesk reports that over $3 million has been raised in Ethereum-based tokens. AidForUkraine, a DAO aimed at supporting Ukraine's defense efforts, has also launched and is accepting Solana token donations. These DAOs are fully transparent, uncensorable, and can’t be shut down or sanctioned.

DAOs are undeniably the future of blockchain. Everyone is paying attention. Personally, I believe traditional companies will soon have to choose between adapting or being left behind.

Long version of this post: https://medium.datadriveninvestor.com/dao-101-all-you-need-to-know-about-daos-275060016663