
Jano le Roux

3 years ago

My Top 11 Tools For Building A Modern Startup, With A Free Plan

The best free tools are probably unknown to you.

Modern startups are easy to build.

Start with free tools.

Let’s go.

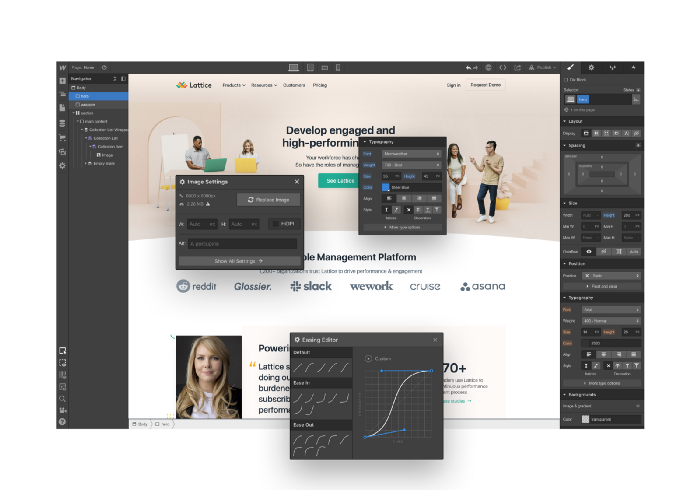

Web development — Webflow

Code-free HTML, CSS, and JS.

Webflow isn't like Squarespace, Wix, or Shopify.

It's a super-fast no-code tool for professionals to construct complex, highly-responsive websites and landing pages.

Webflow can help you add animations like those on Apple's website to your own site.

I made the jump from WordPress a few years ago and it changed my life.

No damn plugins. No damn errors. No damn updates.

Best of all, you can get started on Webflow for free.

Data tracking — Airtable

Spreadsheets with wings.

Airtable combines spreadsheet flexibility with database power without code.

Airtable is modern.

Airtable has modularity.

Scaling Airtable is simple.

Airtable, one of the most adaptable solutions on this list, is perfect for client data management.

Clients choose customized service packages. Airtable consolidates data so you can automate procedures like invoice management and focus on your strengths.

Airtable connects with so many tools that it rarely creates headaches. Airtable scales when you do.

Airtable's flexibility makes it a potential backend database.

Design — Figma

Better, faster, easier user interface design.

Figma rocks!

It’s fast.

It's free.

It's adaptable.

First, design in Figma.

Iterate.

Export development assets.

As your company grows, Figma lets you add team members so everyone can work on each iteration simultaneously.

Figma is web-based, so you don't need a powerful PC or Mac to start.

Task management — Trello

Unclog your jobs.

Tacky and terrifying task management products abound. Trello isn’t.

Those who follow Marie Kondo will appreciate Trello.

Everything is clean.

Nothing is complicated.

Everything has a place.

Compared to other task management solutions, Trello is limited. And that’s good. Too many buttons lead to too many decisions, which lead to too many wasted hours.

Trello is a must for teamwork.

Domain email — Zoho

Free domain email hosting.

Professional email is essential for startups. For too long, people have paid monthly for it. Nope.

Zoho offers 5 free professional emails.

It doesn't have Google's UI, but it works.

VPN — Proton VPN

Fast Swiss VPN protects your data and privacy.

Proton VPN is secure.

Proton doesn't record any data.

Proton is based in Switzerland.

Swiss privacy regulation is among the strictest in the world, so user data is protected. Switzerland isn't a Fourteen Eyes country.

Journalists and activists trust Proton to secure their identities while accessing and sharing information authoritarian governments don't want them to access.

Web host — Netlify

Free fast web hosting.

Netlify is a scalable platform that combines your favorite tools and APIs to develop high-performance sites, stores, and apps through GitHub.

Serverless functions and environment variables keep your API keys secret.

Netlify's free tier is unmissable.

100GB of free monthly bandwidth.

125k free serverless function invocations per site each month.

Database — MongoDB

Create a fast, scalable database.

MongoDB is for small and large databases. It's a fast and inexpensive database.

Free for the first million reads.

Then, for each million reads, you must pay $0.10.
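To make that pricing concrete, here is a rough estimate in Python. It's a sketch under stated assumptions: it uses only the rate quoted above (first million reads free, then $0.10 per million, assumed to be prorated), ignores storage, writes, and any other charges, and `monthly_read_cost` is a hypothetical helper, not part of any MongoDB API. Check MongoDB's current pricing before budgeting with it.

```python
FREE_READS = 1_000_000         # reads covered by the free allowance quoted above
PRICE_PER_MILLION = 0.10       # USD per additional million reads (assumed flat and prorated)


def monthly_read_cost(reads: int) -> float:
    """Estimate read charges in USD for a month with `reads` reads (hypothetical model)."""
    if reads < 0:
        raise ValueError("reads must be non-negative")
    billable = max(0, reads - FREE_READS)  # only reads past the free tier cost money
    return round(billable / 1_000_000 * PRICE_PER_MILLION, 2)


for reads in (500_000, 3_500_000, 50_000_000):
    print(f"{reads:>12,} reads -> ${monthly_read_cost(reads):.2f}")
```

Under these assumptions, even 50 million reads a month comes to under $5 in read charges.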

MongoDB's free plan has:

End-to-end encryption

Continuous authentication

Field-level client-side encryption

If you have a large database, you can easily connect MongoDB to Webflow to bypass CMS limits.

Automation — Zapier

Time-saving tip: automate repetitive chores.

Zapier simplifies life.

Zapier syncs and connects your favorite apps to do impossibly awesome things.

If your online store is connected to Zapier, a customer's purchase can trigger a number of automated actions, such as:

Adding the customer to an email sequence.

Putting the order information in your Airtable.

Sending a pre-programmed postcard to the customer.

Telling Alexa to set your smart lights to purple.

Zapier scales when you do.

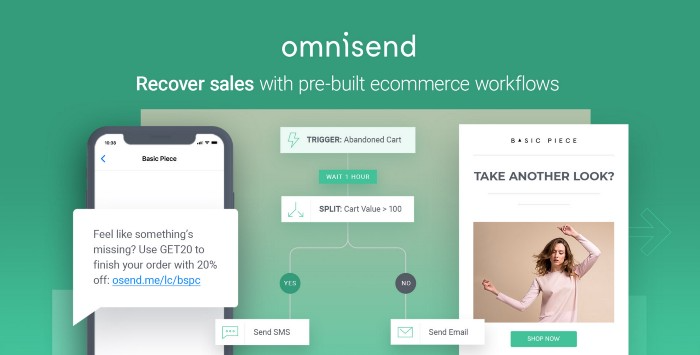

Email & SMS marketing — Omnisend

Email and SMS marketing campaigns.

This is an excellent Mailchimp alternative for magical emails. Omnisend's workflows simplify email automation.

I love the interface's cleanliness.

Omnisend's free tier includes web push notifications.

Send up to:

500 emails per month

60 SMS messages

500 web push notifications

Forms and surveys — Tally

Create flexible forms that people enjoy.

Typeform is clean but restrictive. Sometimes you need to add many questions, and that's when you need Tally.

Tally is flexible and cheaper than Typeform.

99% of Tally's features are free and unrestricted, including:

Unlimited forms

Unlimited submissions

Collect payments

File upload

Tally lets you see what people entered in a form before they submitted it, so you can spot where they get stuck.

Airtable and Zapier connectors automate things further. If you pay, you can apply custom CSS to fit your brand.

See?

Free tools are the greatest.

Let's use them to launch a startup.

Taher Batterywala

3 years ago

Do You Have Focus Issues? Use These 5 Simple Habits

Many people can't concentrate because they don't optimize the first 20% of their day.

Elon Musk, Tony Robbins, and Bill Gates share something:

Morning Routines.

A repeatable morning ritual saves time.

The result?

Time for hobbies.

I'll discuss 5 easy morning routines you can use.

1. Stop pressing snooze

Waking up starts the day. You disrupt your routine by hitting snooze.

One snooze becomes three. Your morning routine gets derailed.

Fix it:

Put your phone out of reach. You'll have to get up to silence the alarm, which defeats snooze and wakes you up.

Once awake, staying awake is 10x easier. Simple trick, big results.

2. Drink water

Chronic dehydration is common, especially among people who live and work in air-conditioned urban environments.

A 2% drop in the brain's hydration can cause short-term memory loss.

Dehydration shrinks brain cells.

Drink 3-4 liters of water daily to avoid this.

3. Improve your focus

How to focus better?

Meditation. It can:

Improve your mood

Enhance your memory

Increase mental clarity

Reduce blood pressure and stress

Apps like Headspace can help you build the habit.

Here's a simple meditation guide:

Sit comfortably.

Shut your eyes.

Concentrate on your breathing.

Breathe in through your nose.

Breathe out through your mouth.

Count to 5 on each inhale and 5 on each exhale.

Repeat for 1 to 20 minutes.


4. Workout

Exercise improves:

Mental health

Energy levels

Focus and memory

Spend 15-60 minutes on something fun:

Weightlifting

Running

Walking

Stretching and yoga

This helps you now and later.

5. Keep a journal

You have countless thoughts daily. Many quietly steal your focus.

Here’s how to clear these:

Write for 5-10 minutes.

You'll gain 2x more mental clarity.

Recap

5 morning practices for 5x more productivity:

Say no to snoozing

Hydrate

Improve your focus

Exercise

Journal

Conclusion

A journey of a thousand miles begins with a single step. If you have trouble concentrating or have too many thoughts, try these easy yet effective habits.

Start with one of these habits, then add the others. The results are astonishing and immediate.

Jari Roomer

3 years ago

Three Simple Daily Practices That Will Immediately Double Your Output

The most productive people are creatures of habit.

Do Important Tasks Early in the Day

In his best-selling book Eat That Frog, Brian Tracy advised starting the day with your hardest, most important activity.

Most individuals work best in the morning. Energy and willpower peak then.

Mornings are also ideal for memory, focus, and problem-solving.

Thus, the morning is ideal for your hardest chores.

It makes sense to do these things during your peak performance hours.

Additionally, your morning sets the tone for the day. According to Brian Tracy, the first hour of the workday steers the remainder.

After doing your most critical chores, you may feel accomplished, confident, and motivated for the remainder of the day, which boosts productivity.

Develop Your Essentialism

In Essentialism, Greg McKeown claims that trying to be everything to everyone leads to mediocrity and tiredness.

You'll either burn out, be spread too thin, or compromise your ideals.

Greg McKeown's answer is Essentialism:

Clarify what’s truly important in your life and eliminate the rest.

Eliminating non-essential duties, activities, and commitments frees up time and energy for what matters most.

According to Greg McKeown, Essentialists live by design, not default.

You'll be happier and more productive if you follow your essentials.

Follow these three steps to live a more essentialist life.

#1: Know Your Priorities

What matters most clarifies what matters less. List your most significant aims and values.

The clearer your priorities, the more you can focus on them.

In Essentialism, McKeown wrote: “The ultimate form of effectiveness is the ability to deliberately invest our time and energy in the few things that matter most.”

#2: Set Your Priorities in Order

Don't just know your priorities; rank them.

“If you don’t prioritize your life, someone else will.” — Greg McKeown

Planning each day and allocating enough time for your priorities is the best method to become more purposeful.

#3: Practice Saying “No”

If a request or demand conflicts with your aims or principles, you must learn to say no.

Saying no frees up space for our priorities.

Place Sleep Above All Else

Many believe they must forego sleep to be more productive. This is false.

A productive day starts with a good night's sleep.

Matthew Walker (Why We Sleep) says:

“Getting a good night’s sleep can improve cognitive performance, creativity, and overall productivity.”

Sleep helps us learn, remember, and repair.

Unfortunately, 35% of people don't get the recommended 7-9 hours of sleep per night.

Sleep deprivation can cause:

Increased risk of diabetes, heart disease, stroke, and obesity

Increased risk of depression, stress, and anxiety

Decreased general contentment

Declining cognitive function

To live an ideal, productive, and healthy life, you must prioritize sleep.

Follow these six sleep optimization strategies to obtain enough sleep:

Establish a nightly ritual to relax and prepare for sleep.

Avoid screens for an hour before bed, because the blue light they emit disrupts the production of melatonin, a hormone your body needs for sleep.

Keep a regular sleep schedule to regulate your body's biological clock (and optimize melatonin production).

Create a peaceful, dark, and cool sleeping environment.

Limit your intake of sweets and caffeine (especially in the hours leading up to bedtime).

Exercise regularly (but not right before bed, because your body temperature will be too high).

Sleep is one of the best ways to boost productivity.

Sleep is crucial, says Matthew Walker. It's the key to good health and longevity.


Nabil Alouani

3 years ago

Why Cryptocurrency Is Not Dead Despite the FTX Scam

A fraud, free-market, antifragility tale

Crypto's only rival is public opinion.

In less than a week, mainstream media, bloggers, and TikTokers turned on FTX's founder.

While some were surprised, almost everyone with a keyboard and a Twitter account now claims to have predicted the FTX collapse. These financial oracles should have warned the 1.2 million people Sam Bankman-Fried duped.

After the fact, unexpected events seem obvious to our brains. It's both a bug and a feature: it helps us cope with disasters, but it makes our reasoning suck.

Nobody predicted the FTX debacle. Not Bloomberg. Not politicians. Not ordinary investors. Not crypto experts. So who did?

When FTX imploded, taking billions of dollars with it, an outrage bomb went off, and the resulting shockwave threatens the crypto market's existence.

As someone who lost more than $78,000 in a crypto scam in 2020, I understand people’s reactions all too well. When the dust settles and rationality returns, we'll realize this is a natural occurrence in every free market.

What exactly happened with FTX? (Skip this part if you already know.)

FTX is a cryptocurrency exchange where customers can trade crypto with cash. The fastest-growing platform of its kind, it became the third-largest exchange in less than two years.

FTX's performance helped make SBF the crypto poster boy. Other reasons include his altruistic public image, his support for the Democrats, and his company Alameda Research.

Alameda Research made a fortune arbitraging Bitcoin.

Arbitrage trading exploits small price differences between two markets to make money. For example, if Bitcoin costs $20k in Japan and $21k in the US, you can buy in Japan and sell in the US for about $1k per coin. Alameda Research did that for months, making $1 million per day.
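Here is that arithmetic as a minimal Python sketch. The prices are the example $20k/$21k from above; `arbitrage_profit` and its `fee_rate` parameter are hypothetical, added for illustration, since real arbitrage also pays trading and transfer fees that eat into the spread.

```python
def arbitrage_profit(buy_price: float, sell_price: float, coins: float, fee_rate: float = 0.0) -> float:
    """Profit from buying `coins` on the cheaper market and selling them on the pricier one.

    fee_rate is a hypothetical flat fee charged on both the buy and the sell amount.
    """
    cost = buy_price * coins * (1 + fee_rate)        # what we pay, fees included
    revenue = sell_price * coins * (1 - fee_rate)    # what we receive, fees deducted
    return revenue - cost


# Buy 1 BTC in Japan at $20k and sell it in the US at $21k.
print(arbitrage_profit(20_000, 21_000, coins=1))                 # 1000.0
print(arbitrage_profit(20_000, 21_000, coins=1, fee_rate=0.01))
```

With a hypothetical 1% fee on each side, the same trade nets about $590, which is why arbitrage depends on low fees and high volume. At a $1k spread, $1 million a day would mean moving roughly 1,000 bitcoins a day before fees.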

Later, as its capital grew, Alameda expanded its trading activities and began investing in other companies.

Let's now discuss FTX.

SBF's diabolical master plan began when he used FTX-created FTT coins to inflate his trading company's balance sheets. He used inflated Alameda numbers to secure bank loans.

SBF used money he printed himself as collateral to borrow billions for capital. CoinDesk exposed him in a report.

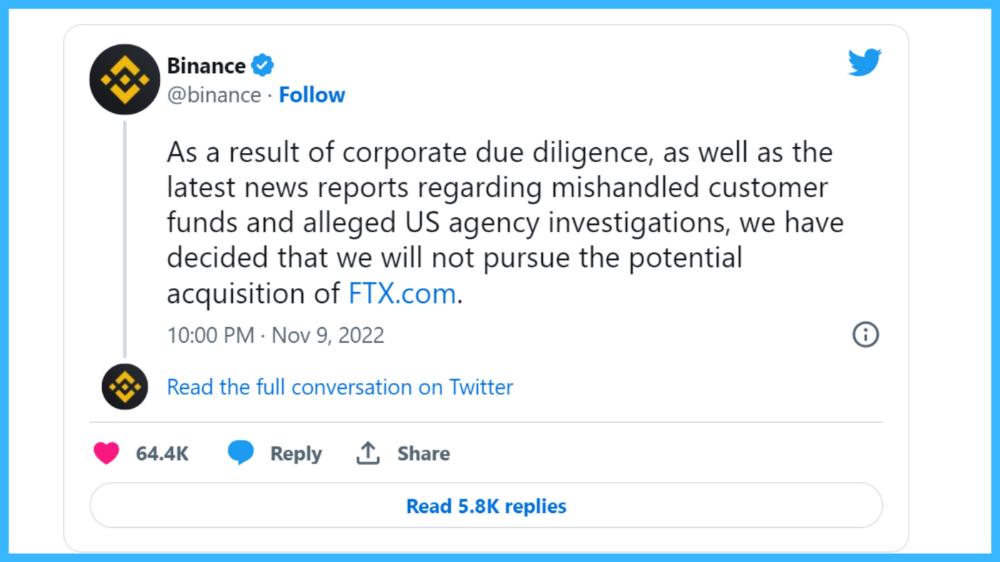

One of FTX's early investors tweeted that he planned to sell his FTT coins over the next few months. This would be a minor event if the investor wasn't Binance CEO Changpeng Zhao (CZ).

The crypto space saw a red WARNING sign when CZ cut ties with FTX. Everyone with an FTX account and a brain withdrew money. Two events followed. FTT fell from $20 to $4 in less than 72 hours, and FTX couldn't meet withdrawal requests, spreading panic.

SBF reassured FTX users on Twitter: “FTX is fine. Assets are fine.”

He lied.

SBF falsely claimed FTX merely had a liquidity crunch. At the time of his initial claims, FTX owed about $8 billion to its customers. Liquidity shortages are usually minor: to get cash, you sell assets. In FTX's case, however, the main asset was FTT coins it had printed itself.

Even at the slashed price (down from $20 to $4), selling every FTT wouldn't get Sam out of trouble. He'd flood the crypto market with his homemade coins, causing the price to crash.

SBF was trapped. He approached Binance about a buyout, which seemed good until Binance looked at FTX's books.

Binance's tweet ended SBF, and he had to apologize, resign as CEO, and file for bankruptcy.

Bloomberg estimated Sam's net worth to be zero by the end of that week. 0!

But that's not all. Twitter investigations exposed fraud at FTX and Alameda Research. SBF used customer funds to trade and invest in other companies.

Hats off to the independent Twitter reporters who made the mainstream press look amateurish. Some Twitter detectives didn't sleep for 30 hours to find answers. Others added to existing threads. The memes were hilarious.

One question kept repeating in my bald head as I scrolled through Twitter: Sam, WTF?

Then I understood.

SBF wanted FTX to become a bank.

Think about this. Imagine it's a few weeks ago and FTX seems healthy. You buy 2 bitcoins using FTX. You'd expect the platform to take your dollars and credit your wallet with 2 bitcoins, right?

No. They give you an I-Owe-You.

FTX records in its internal ledger that it owes you 2 bitcoins, but it doesn't necessarily hold them for you. Given SBF's tricks, I wouldn't bet on there being anything behind that entry.

What happens if they don't credit your account with 2 bitcoins? Your money goes into FTX's capital, which SBF and his friends invest in marketing, political endorsements, and buying other companies.

Over its two-year existence, FTX invested in 130 companies. Once those investments turn a profit, they'll pay you and keep the rest.

One detail makes their strategy dumb. If all FTX customers withdraw at once, everything collapses.

Financially savvy people think FTX's collapse resembles a bank run, and they're right. SBF designed FTX to operate like a bank.
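A toy model helps show why a run is fatal. This Python sketch is illustrative only: the `ToyExchange` class and all numbers are hypothetical, not FTX's real figures. Its ledger credits every deposit in full but keeps only a fraction in liquid reserves, so withdrawals succeed until the reserves run dry.

```python
from dataclasses import dataclass, field


@dataclass
class ToyExchange:
    """Hypothetical exchange whose internal ledger promises more than it holds."""
    reserves: float = 0.0                                  # liquid assets actually on hand
    owed: dict[str, float] = field(default_factory=dict)  # ledger of IOUs per customer

    def deposit(self, customer: str, amount: float, keep_fraction: float) -> None:
        # The ledger records the full amount as owed to the customer...
        self.owed[customer] = self.owed.get(customer, 0.0) + amount
        # ...but only part of it stays in reserve; the rest is assumed spent or invested.
        self.reserves += amount * keep_fraction

    def withdraw(self, customer: str) -> bool:
        """Pay out everything owed to `customer`; return False if reserves fall short."""
        amount = self.owed.get(customer, 0.0)
        if amount > self.reserves:
            return False
        self.reserves -= amount
        self.owed[customer] = 0.0
        return True


exchange = ToyExchange()
customers = [f"customer_{i}" for i in range(10)]
for name in customers:
    exchange.deposit(name, 1_000, keep_fraction=0.25)   # keeps $250 of every $1,000

# Everyone asks for their money back at once.
results = [exchange.withdraw(name) for name in customers]
print(sum(results), "of", len(customers), "withdrawals succeeded")  # 2 of 10 withdrawals succeeded
```

Any single customer could withdraw safely; it's the simultaneous requests that break the model, because the ledger promises $10,000 against $2,500 on hand.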

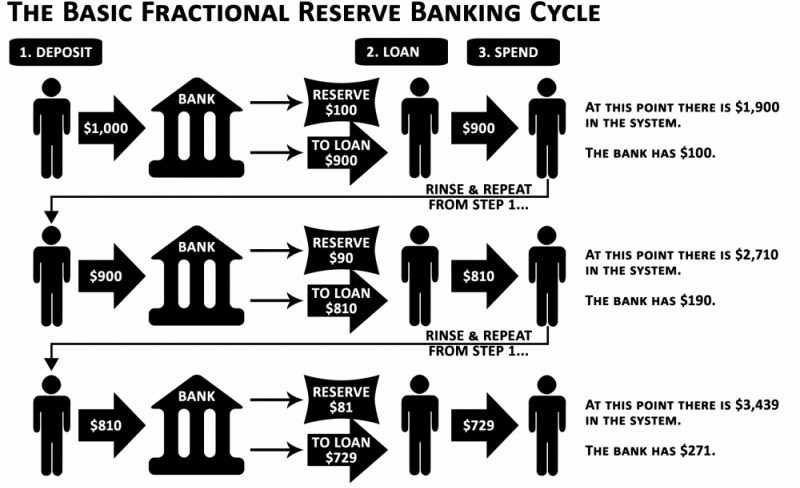

You expect your bank to open a drawer with your name on it and put $1,000 in it when you deposit $1,000. Instead, they put $100 in your drawer and create an I-Owe-You for the other $900. What happens to the $900?

Let's keep it short, because the details are boring and headache-inducing.

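When you deposit money in a bank, it can keep 10% and lend out the rest. This popular method is called fractional reserve banking, and it operates within and across banks: money one bank lends out ends up deposited in another bank, which again keeps a fraction and lends the rest.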

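Through this chain of relending, fractional reserve banking can turn every $1,000 deposited into up to $10,000 of deposits across the system ($1,000 / 0.10). The bet is that borrowers will pay off their debt plus interest.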

As long as banks work together and the economy grows, their model works well.
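The $10,000 figure is the textbook money multiplier: if every bank keeps a reserve ratio r and every loan is redeposited, an initial deposit D grows toward D / r in total deposits. Here's a minimal Python sketch of that simplified model; it assumes banks lend out everything above the reserve and that every loan comes back as a deposit, which real banking doesn't guarantee. `total_deposits` is a hypothetical helper for illustration.

```python
def total_deposits(initial: float, reserve_ratio: float, rounds: int) -> float:
    """Total deposits after `rounds` of keeping `reserve_ratio` and relending the rest."""
    if not 0 < reserve_ratio <= 1:
        raise ValueError("reserve_ratio must be in (0, 1]")
    total, deposit = 0.0, initial
    for _ in range(rounds):
        total += deposit                # this round's deposit lands in some bank
        deposit *= 1 - reserve_ratio    # the lent-out share becomes the next deposit
    return total


print(round(total_deposits(1_000, 0.10, rounds=10)))   # 6513
print(round(total_deposits(1_000, 0.10, rounds=500)))  # 10000, i.e. 1,000 / 0.10
```

After ten rounds of relending, the system holds about $6,513; the total only approaches $10,000 as the chain keeps going.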

SBF tried to replicate the system but forgot two details. First, traditional banks require verifiable collateral like real estate, jewelry, art, stocks, and bonds, not digital coupons. Second, traditional banks have a liquidity buffer: the Federal Reserve (or central bank) injects massive amounts of cash into troubled banks.

Massive cash injections come from taxpayers. You and I pay for bankers' mistakes and annual bonuses. Yes, you may think banking is rigged. It's rigged, but it's the best financial game we've had in 150 years. We accept its flaws, including bailouts for too-big-to-fail companies.

Anyway.

SBF wanted Binance's bailout. Binance said no, which was good for the crypto market.

Free markets are resilient.

Nassim Nicholas Taleb coined the term antifragility.

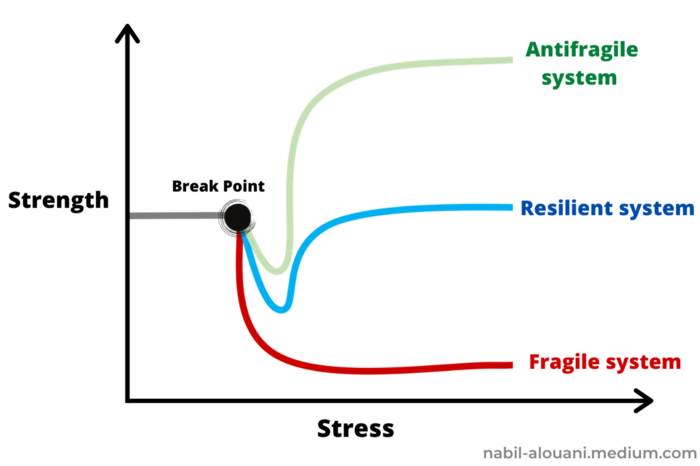

“Some things benefit from shocks; they thrive and grow when exposed to volatility, randomness, disorder, and stressors and love adventure, risk, and uncertainty. Yet, in spite of the ubiquity of the phenomenon, there is no word for the exact opposite of fragile. Let us call it antifragile. Antifragility is beyond resilience or robustness. The resilient resists shocks and stays the same; the antifragile gets better.”

The easiest way to understand how antifragile systems behave is to compare them with other types of systems.

A fragile system is like glass: it snaps when shocked.

A resilient system is like rubber: after a stressful episode, it bounces back.

An antifragile system is like a muscle: when it's torn in the gym, it grows back stronger.

Things that change over time are antifragile: culture, tech innovation, restaurants, revolutions, book sales, cuisine, economic success, and even muscle shape. These systems benefit from shocks and randomness in different ways, but they all pay a price for antifragility.

The same goes for the free market and financial institutions. Taleb's book uses restaurants as an example and ends with a reference to the 2008 crash.

“Restaurants are fragile. They compete with each other. But the collective of local restaurants is antifragile for that very reason. Had restaurants been individually robust, hence immortal, the overall business would be either stagnant or weak and would deliver nothing better than cafeteria food — and I mean Soviet-style cafeteria food. Further, it [the overall business] would be marred with systemic shortages, with once in a while a complete crisis and government bailout.”

Imagine the same thing with banks.

Independent banks would compete to offer the best services. If one of these banks fails, it will disappear. Customers and investors will suffer, but the market will recover from the dead banks' mistakes.

This idea underpins a free market, and it's the argument Bitcoin and other cryptocurrencies make when criticizing traditional banking.

The traditional banking system's components never die. When a bank fails, the Federal Reserve steps in with a big taxpayer-funded check. This hinders bank evolution. If you don't let banking cells die and be replaced, your financial system won't be antifragile.

The interdependence of banks (centralization) means that one bank's mistake can sink the entire fleet, which brings us to SBF's ultimate travesty with FTX.

FTX has left the cryptocurrency gene pool.

FTX should have been decentralized and independent. Instead, the superstar scammer invested in more than 130 crypto companies and linked them, creating a fragile banking-like structure. FTX seemed to say, "We exist because centralized banks are bad. But we'll be good, unlike the centralized banking system."

FTX saved several companies, including BlockFi and Voyager Digital.

FTX wanted to be a crypto bank conglomerate and Federal Reserve. SBF wanted to monopolize crypto markets. FTX wanted to be in bed with as many powerful people as possible, so SBF seduced politicians and celebrities.

The worst part? People who could see the flaws in SBF's plan praised him anyway. Experts, newspapers, and crypto fans praised FTX. When billions pour in, it's hard to realize FTX was acting against its nature.

Then, they act shocked when they realize FTX's fall triggered a domino effect. Some say the damage could wipe out the crypto market, but that's wrong.

Cell death is different from body death.

FTX is out of the game despite its size. Unfit, it fell victim to market natural selection.

Next?

The challengers keep coming. The crypto economy will improve with each failure.

Free markets are antifragile because their fragile parts compete, fostering evolution. With constructive feedback, evolution benefits customers and investors.

FTX shows that customers don't like being scammed, and the crypto market's health depends on them. Charlatans and con artists get eliminated, whether quickly or slowly.

Crypto isn't immune to collapse. Cryptocurrencies can go extinct like biological species. Antifragility isn't immortality. A few more decades of evolution may be enough for humans to figure out how to best handle money, whether it's bitcoin, traditional banking, gold, or something else.

Keep your BS detector on. Start by being skeptical of this article's finance-related claims. Whether or not you think you understand finance, join the conversation.

We build a better future through dialogue. So listen, ask, and share. When you think you can't find common ground with the opposing view, remember:

Sam Bankman-Fried lied.

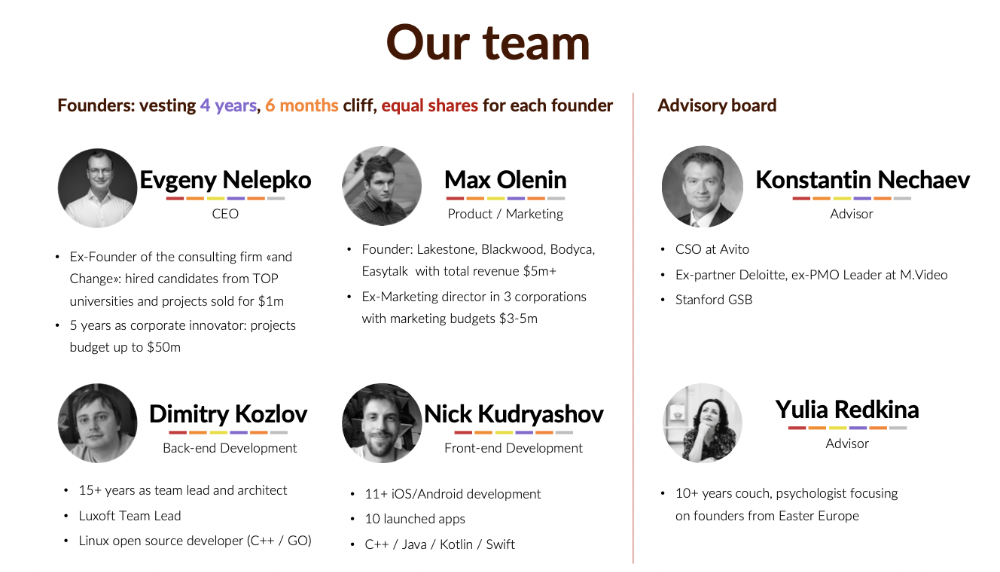

Evgenii Nelepko

3 years ago

My 3 biggest errors as a co-founder and CEO

Reflections on the closed company Hola! Dating app

I'll discuss my fuckups as an entrepreneur and CEO. All of them relate to Hola!, the dating app I co-founded and led.

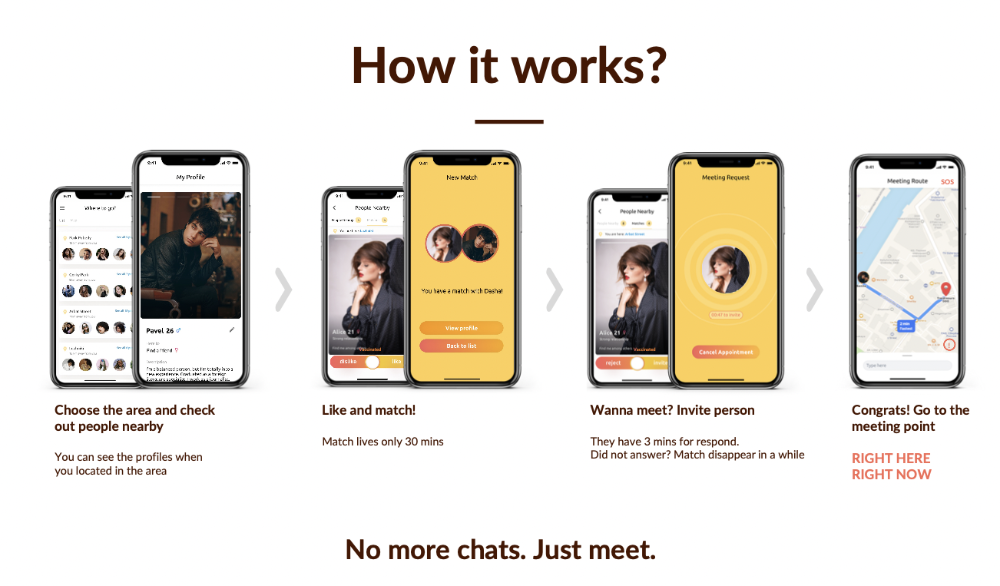

We started in spring 2021: two techies and two non-techies building a dating app that combined Pokémon Go and Tinder.

Online dating is big business, yet it takes two weeks to get from a like to a date. We asked online dating app users whether they had met anyone offline in the past year.

75% replied yes: 50% sometimes and 25% usually.

Offline dating is popular, yet people have concerns.

Men are reluctant to make mistakes in front of others.

Women are curious about the background of everyone who approaches them.

We designed unique mechanics that let people meet in person right after a match. No endless chitchat. Women would feel safe, while men felt like cowboys.

I want to highlight three mistakes that led to the founders drifting apart.

This detachment ultimately led to us shutting down the company.

1. The wrong technology stack

Situation

Instead of building a faster MVP on a universal, cross-platform stack for iOS and Android, I argued we should build and pilot separate apps for iOS and Android. The technical founders' expertise made this possible.

Self-reflection

It was the wrong strategy. We lost time and resources developing two apps at once. We launched on iOS first since it's more profitable. Apple removed us after the release, citing Guideline 4.3 (Spam). After 4 months, we had nothing, and we still had a long way to go to get the app onto Android and into the store.

I had suggested this approach as a uniform platform for the company's growth, one that would make parallel product development easier. But the strategist's lack of experience and knowledge made it a piece of crap.

What would I have changed if I could?

We should have built on a universal stack and started with Android. I had expected Apple to have issues with a dating app.

Our approach should have been to launch something and subsequently improve it, but prejudice won.

The lesson

Discuss the IT stack with your CTO. It saves time and money. Choose the easiest MVP method.

2. A late search for investment

Situation

Although the universe and my co-founders encouraged me to find investors first, I only started pitching when we almost had an app.

By the time angel investors showed up, it was time to shut down. The app was banned, war broke out, I left the country, and the other co-founders stayed. We had no savings.

Self-reflection

I loved interviewing users. I'm proud of having done 1,000 interviews. I wanted to understand people's pain points and improve the product.

At some point, the interview results no longer affected the product. I was terrified to start pitching, so I kept filling out accelerator applications and redoing my presentation. You have to go through pitching early so you won't be terrified later.

What would I have changed if I could?

I would have found an external or internal mentor to help me with my first pitch as soon as possible. They would have supported me through criticism and cheered with me when there was enthusiasm.

In 99% of cases, I'm comfortable jumping into the unknown, but there are exceptions. The mentor's encouragement would have prompted me to act sooner.

The lesson

Begin fundraising immediately. Months may pass. Show investors your pre-MVP project. Draw inferences from feedback.

3. Role ambiguity

Situation

My technical co-founders were also part-time lead developers, which produced communication issues. As co-founders, we communicated well and recognized the problems. Stakes, vesting, target markets, and approach were agreed upon.

We were behind schedule, and both the technical debt and the strategic gap grew.

Bi-daily and weekly reviews didn't help. Each time, there were explanations. Inside, I was freaking out.

Self-reflection

I am a fairly easy person to talk to. I always try to stick to agreements; otherwise, my head gets stuffed with unnecessary information, interpretations, and emotions.

Sit down -> talk -> decide -> do -> evaluate the results. Repeat it.

If I don't get detailed comments, I start ruining everyone's mood. If there's a systematic violation of agreements without a good justification, I won't join the project or I'll end the collaboration.

What would I have changed if I could?

This is where it’s scariest to draw conclusions. Probably the most logical thing would have been not to start the project as we started it. But that was already a completely different project. So I would not have done anything differently and would have failed again.

But I drew conclusions for the future.

The lesson

First-time founders should find an adviser or team coach for a strategic session. It helps split the roles and responsibilities.

Al Anany

3 years ago

Notion AI Might Destroy Grammarly and Jasper

The trick Notion could use is simply Facebook-ing the hell out of them.

*Time travel to fifteen years ago.*

Future-Me: “Hey! What are you up to?”

Old-Me: “I am proofreading an article. It’s taking a few hours, but I will be done soon.”

Future-Me: “You know, in the future, you will be using a Google Chrome plugin called Grammarly that will help you easily proofread articles in half that time.”

Old-Me: “What is… Google Chrome?”

Future-Me: “Gosh…”

I love Grammarly. It’s one of those products whose effects I personally feel. I mean, SpaceX is a great company. But I am not a rocket writing this article in space (or am I?)…

No, I’m not. So I don’t personally feel a connection to SpaceX. If it collapsed tomorrow morning, I might write about it, but I would have zero emotions about it.

Yet if Grammarly failed tomorrow, I would feel 1% emotionally distressed. So when you look at the title of this article, you’ll realize that I am betting against them. That’s how much I believe in the business model that’s taking over the world: Notion’s.

Notion

How frequently do you go through your notes?

Grammarly is everywhere, which helps its success. Grammarly is available when you update LinkedIn on Chrome. Grammarly prevents errors in Google Docs.

Notice where the emphasis falls in the previous paragraph. It's not on Grammarly; it's on Chrome, Google Docs, and LinkedIn. Without that base, Grammarly would be useless.

So, welcome to this business essay.

Grammarly solves one problem.

Jasper solves another.

Notion, however, wants to contain your entire existence.

Notion is the new Google Chrome, only offline: an all-purpose notepad (in the near future).

Starting a blog? Write it in Notion.

A LinkedIn update? Write it in Notion, and it might be posted there automatically (with a little help from another app).

An advanced thesis? You can brainstorm it with your coworkers.

This sounds like an ad! But I won't cry if Notion dies tomorrow.

Let me revisit the points above to illustrate why I think Notion could kill Grammarly and Jasper.

Notion is a fantastic app that incubates your work.

Smartly, they began with note-taking.

Ideally, all your work lives in Notion. But Grammarly and Jasper are still must-haves.

Grammarly proofreads your typing, while Jasper helps with copywriting and AI image generation.

They're the best, therefore you'll need them. Correct? Nah.

Notion might “Facebook” them: copy their best features into its own product.

Notion: “Hi Grammarly, do you want to sell your product to us?”

Grammarly: “Dude, we are more valuable than you are. We’ve even raised $400m, while you raised $343m. Our last valuation round put us at $13 billion, while yours put you at $10 billion. Go to hell.”

Notion: “Okay, we’ll speak again in five years.”

Notion: “Jasper, wanna sell?”

Jasper: “Nah, we’re deep into AI and the field. You can’t compete with our people.”

Notion: “How about you either sell or you turn into a Snapchat case?”

Jasper: “…”

Notion is your home. Grammarly is your neighbor. Jasper is your running track.

What if you grew enough vegetables in your backyard to avoid the supermarket? No more visits.

What if your home had a beautiful treadmill? You wouldn't rush outside as much. (I disagree with my own metaphor, but you get it.)

It's Facebooking. Instagram Stories reduced your Snapchat usage. Notion will reduce your need to use Grammarly.

The Final Piece of the AI Puzzle

Let's talk about Notion first, since you've probably read about it everywhere.

As mentioned earlier, they raised $343 million, and they've bought four businesses.

According to Forbes, Notion had more than 20 million users in 2022, up from 4 million in 2020.

Still, raising money isn't everything: FTX raised $1.8 billion and still fell.

This article compares Notion's core product to two others. Notion is an app you use all day long.

Notion has released Notion AI to support writers. It's early, so it's not as good as Jasper. But early Jasper wasn't today's Jasper either. In five years, Notion AI will be different.

With hard work, they may construct a Jasper-like writing assistant. They have resources and users.

At this point, it's all speculation. Jasper's copywriting is top-notch. Grammarly's proofreading is top-notch. But a business is constrained by what its users actually do.

If Notion's future business moves are strategic, it might become a blue ocean shark (or get acquired for an unbelievable amount).

I love business mental teasers, so tell me:

How do you feel? Are you a frequent Notion user?

Do you dispute my position? I enjoy hearing opposing viewpoints.

Ironically, I proofread this with Grammarly.