More on Economics & Investing

Desiree Peralta

3 years ago

How to Use the 2023 Recession to Grow Your Wealth Exponentially

This season's three best money moves.

“Millionaires are made in recessions.” — Time Capital

We're in a serious downturn, whether or not we're in a recession.

97% of business owners are decreasing costs by more than 10%, and all markets are down 30%.

If you know what you're doing and analyze the markets correctly, this is your chance to become a millionaire.

In any recession, there are always excellent opportunities to seize: real estate, crypto, stocks, businesses, and more.

What you do with your money could influence your future riches.

This article analyzes the three key markets, their circumstances for 2023, and how to profit from them.

Ways to make money on the stock market.

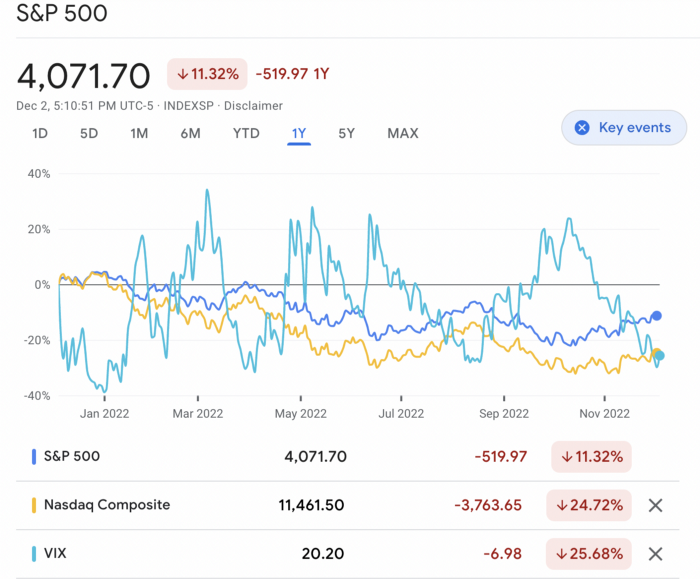

If you're conservative like me, you should invest in an index fund. Most of these funds are 10-30% below their all-time highs (ATH).

In earlier recessions, most index funds lost about 20%. After the downturn, they recovered and passed their ATH in the following months.

Now is a great moment to invest in index funds if you want to grow your money with a low-risk approach and aim for a 20% gain.

If you want to be risky but wise, pick companies that will get better next year but are struggling now.

Even while we can't be 100% confident of a company's future performance, we know some are strong and will have a fantastic year.

Microsoft (down 22%), JPMorgan Chase (15.6%), Amazon (45%), and Disney (33.8%).

These firms give dividends, so you can earn passively while you wait.

So I consider that a good strategy to make wealth in the current stock market is to create two portfolios: one based on index funds to earn 10% to 20% profit when the corrections end, and the other based on individual stocks of popular and strong companies to earn 20%-30% return and dividends while you wait.
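A quick piece of arithmetic helps when reading the percentages above: a stock that has fallen by some percentage needs a larger percentage gain to get back to where it was. The sketch below is only an illustration. It assumes the declines quoted earlier are measured from each stock's previous high, and the function name `gain_to_recover` is made up for this example.

```python
def gain_to_recover(drawdown_pct: float) -> float:
    """Percentage gain needed to return to the previous high after a drawdown."""
    if not 0 <= drawdown_pct < 100:
        raise ValueError("drawdown_pct must be in [0, 100)")
    remaining = 1 - drawdown_pct / 100  # fraction of the old price still left
    return (1 / remaining - 1) * 100


# Declines quoted in the article (assumed to be measured from the previous high).
drawdowns = {"Microsoft": 22.0, "JPMorgan Chase": 15.6, "Amazon": 45.0, "Disney": 33.8}
recovery = {name: round(gain_to_recover(dd), 1) for name, dd in drawdowns.items()}

for name, gain in recovery.items():
    print(f"{name}: down {drawdowns[name]}%, needs +{gain}% to reach its old high")
```

The asymmetry grows with the size of the drop, which is why a deeply discounted stock offers more upside on paper but also has further to climb.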

How to profit from the downturn in the real estate industry.

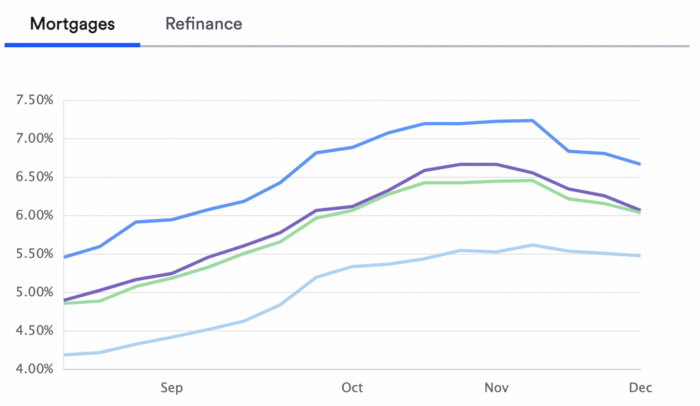

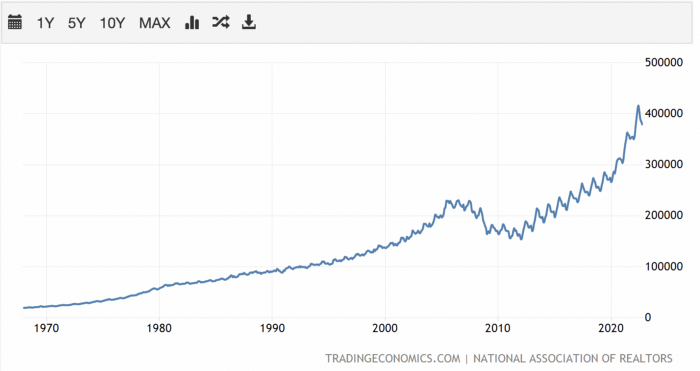

With rising mortgage rates, this looks like the worst moment to buy a home if you don't want to be eaten by banks. In the U.S., interest rates are double what they were three years ago, so buying now seems foolish.

But those same rates are pushing property prices down, and that won't last long because buyers will take advantage.

According to historical data, now is the ideal moment to buy a house for the next five years and perhaps forever.

If you can buy a house, do it. You can refinance the interest at a lower rate with acceptable credit, but not the house price.

Take advantage of the housing market prices now because you won't find a decent deal when rates normalize.
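To see why the purchase price matters more than the starting rate, it can help to run the standard fixed-rate mortgage payment formula. This is a minimal sketch, not advice: the loan amounts and rates below are hypothetical, and `monthly_payment` is a name chosen for this example.

```python
def monthly_payment(principal: float, annual_rate_pct: float, years: int = 30) -> float:
    """Fixed monthly payment for a fully amortizing loan."""
    r = annual_rate_pct / 100 / 12   # monthly interest rate
    n = years * 12                   # number of monthly payments
    if r == 0:
        return principal / n
    growth = (1 + r) ** n
    return principal * r * growth / (growth - 1)


# Hypothetical scenario: buy at a discounted price today at a high rate,
# then refinance the same loan amount later at a lower rate.
discounted_price_high_rate = monthly_payment(270_000, 7.0)
discounted_price_refinanced = monthly_payment(270_000, 4.0)
full_price_low_rate = monthly_payment(300_000, 4.0)

print(f"Discounted price, high rate:   {discounted_price_high_rate:,.2f}")
print(f"Discounted price, refinanced:  {discounted_price_refinanced:,.2f}")
print(f"Full price, low rate:          {full_price_low_rate:,.2f}")
```

The refinanced payment on the cheaper house stays below the payment on the more expensive one at the same rate, because the rate can change later while the price paid cannot. Refinancing costs and the remaining term are ignored here.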

How to profit from the cryptocurrency market.

This is the riskiest market to tackle right now, but it could offer the most opportunities if done appropriately.

The most powerful cryptocurrencies are down more than 60% from last year's all-time highs of $68,990 for BTC and $4,865 for ETH.

If you focus on those two coins, you can make 30%-60% without waiting for them to return to their ATH, and they're low enough to be a solid investment.

I don't encourage trying other altcoins because the crypto market is in crisis and you can lose everything if you're greedy.

Still, the main Cryptos are a good investment provided you store them in an external wallet and follow financial gurus' security advice.

Last thoughts

We can't be sure of a recession until it's over, and we can't forecast a market's or asset's lowest point, so waiting makes little sense.

If you want to develop your wealth, assess the money prospects on all the marketplaces and initiate long-term trades.

Many millionaires are made during recessions because they don't fear negative figures and use them to scale their money.

Sofien Kaabar, CFA

3 years ago

How to Make a Trading Heatmap

Python Heatmap Technical Indicator

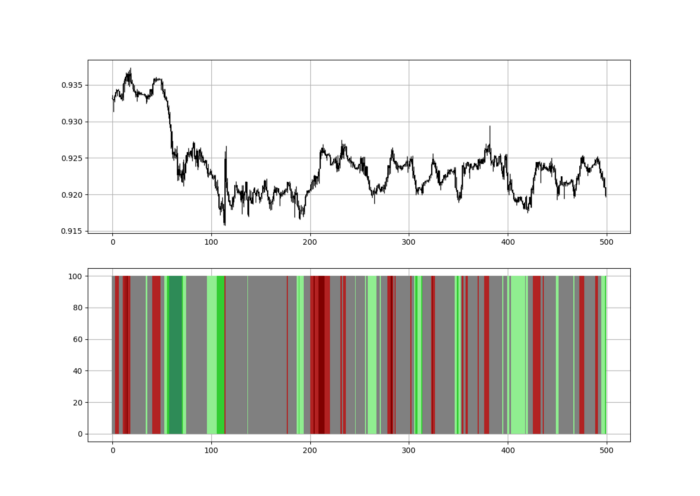

Heatmaps provide an instant overview. They can be used with correlations or to predict reactions or confirm the trend in trading. This article covers RSI heatmap creation.

The Market System

Market regime:

Bullish trend: The market tends to make higher highs, which indicates that the overall trend is upward.

Sideways: The market tends to fluctuate while staying within predetermined zones.

Bearish trend: The market has the propensity to make lower lows, indicating that the overall trend is downward.

Most tools detect the trend, but we cannot predict the next state. The best way to solve this problem is to assume the current state will continue and trade any reactions, preferably in the trend.

If the EURUSD is above its moving average and making higher highs, a trend-following strategy would be to wait for dips before buying and assuming the bullish trend will continue.
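The EURUSD example can be turned into a small check. The sketch below is one possible reading of it, not the author's rule: it treats "higher highs" as the latest high exceeding the highest high of the previous `lookback` bars, and the function name and sample prices are invented for illustration.

```python
import numpy as np


def bullish_regime(closes, highs, lookback=5):
    """True when the last close is above its moving average and the last
    high exceeds the highest high of the previous `lookback` bars."""
    closes = np.asarray(closes, dtype=float)
    highs = np.asarray(highs, dtype=float)
    if len(closes) != len(highs) or len(closes) < lookback + 1:
        raise ValueError("need matching series with at least lookback + 1 bars")
    moving_average = closes[-lookback:].mean()
    above_average = closes[-1] > moving_average
    higher_high = highs[-1] > highs[-lookback - 1:-1].max()
    return bool(above_average and higher_high)


# Synthetic prices, for illustration only.
rising = [1.00, 1.01, 1.02, 1.03, 1.04, 1.05]
rising_highs = [c + 0.005 for c in rising]
print(bullish_regime(rising, rising_highs))  # regime check on the rising series
```

A trend follower would only look for dips to buy while this check holds, and would stand aside or reassess once it fails.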

Indicator of Relative Strength

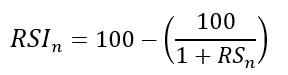

J. Welles Wilder Jr. introduced the RSI, a popular and versatile technical indicator. It is mostly used as a contrarian indicator to exploit extreme reactions. Calculating the default RSI usually involves these steps:

Determine the difference between the closing prices from the prior ones.

Distinguish between the positive and negative net changes.

Create a smoothed moving average for both the absolute values of the positive net changes and the negative net changes.

Divide the smoothed positive changes by the smoothed negative changes. We will call this ratio the Relative Strength (RS).

To obtain the RSI, use the normalization formula shown below for each time step.
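Written out, the normalization matches the last two lines of the `rsi` function in this article's code. The symbols $\overline{G}_t$ and $\overline{L}_t$ are notation introduced here for the smoothed positive changes and the smoothed absolute negative changes at time step $t$.

```latex
RS_t = \frac{\overline{G}_t}{\overline{L}_t},
\qquad
RSI_t = 100 - \frac{100}{1 + RS_t}
```

When gains dominate, $RS_t$ grows large and the RSI approaches 100. When losses dominate, $RS_t$ approaches 0 and so does the RSI. Equal smoothed gains and losses give $RS_t = 1$ and an RSI of 50.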

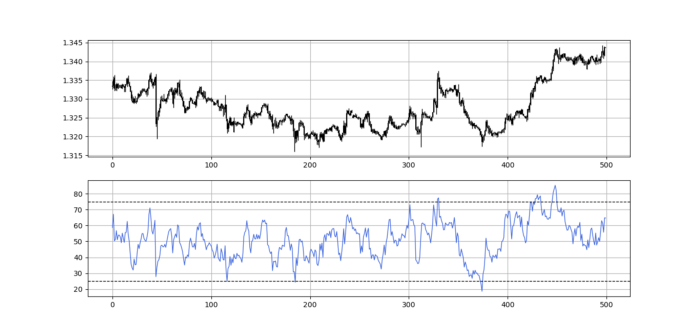

The chart above shows hourly GBPUSD values in black together with the 13-period RSI. The RSI tends to bounce near 25 and pause around 75. The Python code for the RSI expects a four-column OHLC array.

```python
import numpy as np

def add_column(data, times):
    # Append `times` zero-filled columns to the right of the array
    for i in range(1, times + 1):
        new = np.zeros((len(data), 1), dtype = float)
        data = np.append(data, new, axis = 1)
    return data

def delete_column(data, index, times):
    # Remove `times` consecutive columns starting at `index`
    for i in range(1, times + 1):
        data = np.delete(data, index, axis = 1)
    return data

def delete_row(data, number):
    # Drop the first `number` rows
    data = data[number:, ]
    return data

def ma(data, lookback, close, position):
    # Simple moving average of column `close`, written to column `position`
    data = add_column(data, 1)
    # Start once a full window exists; earlier rows are dropped below anyway
    for i in range(lookback - 1, len(data)):
        try:
            data[i, position] = (data[i - lookback + 1:i + 1, close].mean())
        except IndexError:
            pass
    data = delete_row(data, lookback)
    return data

def smoothed_ma(data, alpha, lookback, close, position):
    # Exponential smoothing seeded with a simple moving average
    lookback = (2 * lookback) - 1
    alpha = alpha / (lookback + 1.0)
    beta = 1 - alpha
    data = ma(data, lookback, close, position)
    data[lookback + 1, position] = (data[lookback + 1, close] * alpha) + (data[lookback, position] * beta)
    for i in range(lookback + 2, len(data)):
        try:
            data[i, position] = (data[i, close] * alpha) + (data[i - 1, position] * beta)
        except IndexError:
            pass
    return data

def rsi(data, lookback, close, position):
    data = add_column(data, 5)
    # Net change from the previous close (row 0 has no previous close)
    for i in range(1, len(data)):
        data[i, position] = data[i, close] - data[i - 1, close]
    # Split the changes into positive and (absolute) negative parts
    for i in range(len(data)):
        if data[i, position] > 0:
            data[i, position + 1] = data[i, position]
        elif data[i, position] < 0:
            data[i, position + 2] = abs(data[i, position])
    data = smoothed_ma(data, 2, lookback, position + 1, position + 3)
    data = smoothed_ma(data, 2, lookback, position + 2, position + 4)
    # Relative Strength, then the normalized RSI
    data[:, position + 5] = data[:, position + 3] / data[:, position + 4]
    data[:, position + 6] = (100 - (100 / (1 + data[:, position + 5])))
    # Keep only the RSI column
    data = delete_column(data, position, 6)
    data = delete_row(data, lookback)
    return data
```

Make sure to focus on the concepts and not the code. You can find the codes of most of my strategies in my books. The most important thing is to comprehend the techniques and strategies.
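For readers who want something compact to experiment with, here is a standalone sketch of the same idea on a one-dimensional array of closes. It is not the author's implementation: it uses Wilder-style smoothing with a factor of 1 / lookback (the same factor `smoothed_ma` ends up with when called with alpha = 2), seeds the averages with a simple mean, and treats a completely flat window as 50 by convention. The name `rsi_wilder` is made up for this example.

```python
import numpy as np


def rsi_wilder(closes, lookback=13):
    """RSI on a 1-D series of closes; the first `lookback` values are NaN."""
    closes = np.asarray(closes, dtype=float)
    if len(closes) <= lookback:
        raise ValueError("need more than `lookback` closes")
    changes = np.diff(closes)
    gains = np.where(changes > 0, changes, 0.0)
    losses = np.where(changes < 0, -changes, 0.0)

    out = np.full(len(closes), np.nan)
    # Seed the smoothed averages with a simple mean of the first window.
    avg_gain = gains[:lookback].mean()
    avg_loss = losses[:lookback].mean()
    for i in range(lookback, len(closes)):
        if i > lookback:
            # Wilder smoothing: new = (old * (n - 1) + latest) / n
            avg_gain = (avg_gain * (lookback - 1) + gains[i - 1]) / lookback
            avg_loss = (avg_loss * (lookback - 1) + losses[i - 1]) / lookback
        if avg_loss == 0:
            # No losses in the window: 100 if there were gains, else 50 (assumed convention)
            out[i] = 100.0 if avg_gain > 0 else 50.0
        else:
            rs = avg_gain / avg_loss
            out[i] = 100 - 100 / (1 + rs)
    return out


# Synthetic closes, for illustration only.
closes = 100 + np.cumsum(np.sin(np.arange(60) / 3.0))
values = rsi_wilder(closes, lookback=13)
print(values[-5:])
```

Because the seeding differs from the article's code, the first few values will not match it exactly, but both converge as more data is processed.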

My weekly market sentiment report uses complex and simple models to understand the current positioning and predict the future direction of several major markets. Check out the report here:

Using the Heatmap to Find the Trend

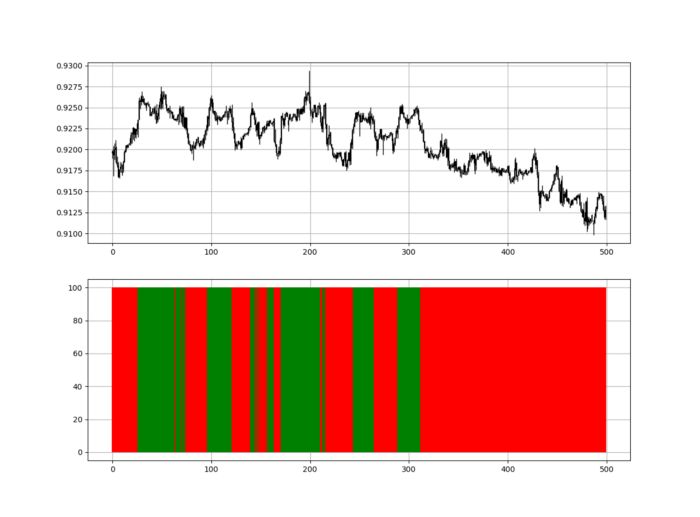

RSI trend detection is easy but useless. Bullish and bearish regimes are in effect when the RSI is above or below 50, respectively. The heatmap is built by drawing a colored vertical line at each time step according to these conditions:

When the RSI is higher than 50, a green vertical line is drawn.

When the RSI is lower than 50, a red vertical line is drawn.

Zooming out yields a basic heatmap, as shown below.

Plot code:

```python
import matplotlib.pyplot as plt

def indicator_plot(data, second_panel, window = 250):
    fig, ax = plt.subplots(2, figsize = (10, 5))
    sample = data[-window:, ]

    # Top panel: candlesticks (columns 0-3 hold open, high, low, close)
    for i in range(len(sample)):
        ax[0].vlines(x = i, ymin = sample[i, 2], ymax = sample[i, 1], color = 'black', linewidth = 1)
        if sample[i, 3] > sample[i, 0]:
            ax[0].vlines(x = i, ymin = sample[i, 0], ymax = sample[i, 3], color = 'black', linewidth = 1.5)
        if sample[i, 3] < sample[i, 0]:
            ax[0].vlines(x = i, ymin = sample[i, 3], ymax = sample[i, 0], color = 'black', linewidth = 1.5)
        if sample[i, 3] == sample[i, 0]:
            ax[0].vlines(x = i, ymin = sample[i, 3], ymax = sample[i, 0], color = 'black', linewidth = 1.5)
    ax[0].grid()

    # Bottom panel: green when the RSI is above 50, red when it is below
    for i in range(len(sample)):
        if sample[i, second_panel] > 50:
            ax[1].vlines(x = i, ymin = 0, ymax = 100, color = 'green', linewidth = 1.5)
        if sample[i, second_panel] < 50:
            ax[1].vlines(x = i, ymin = 0, ymax = 100, color = 'red', linewidth = 1.5)
    ax[1].grid()

# my_data is your OHLC array with the RSI in column index 4
indicator_plot(my_data, 4, window = 500)
```

Call the RSI function on your OHLC array so that the RSI lands in the fifth column (index 4). Adjusting the lookback parameter changes the trade-off between lag and false signals. Other indicators and conditions are possible.

Another suggestion is to develop an RSI Heatmap for Extreme Conditions.

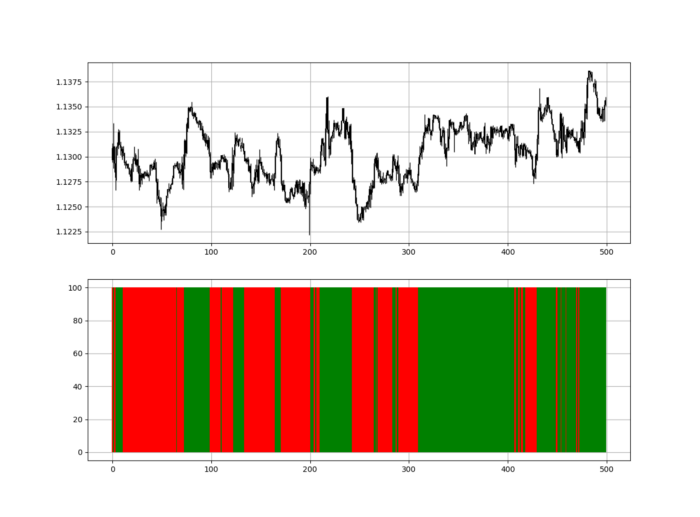

The RSI is a contrarian indicator. The following rules apply:

Whenever the RSI is approaching the upper values, the color approaches red.

The color tends toward green whenever the RSI is getting close to the lower values.

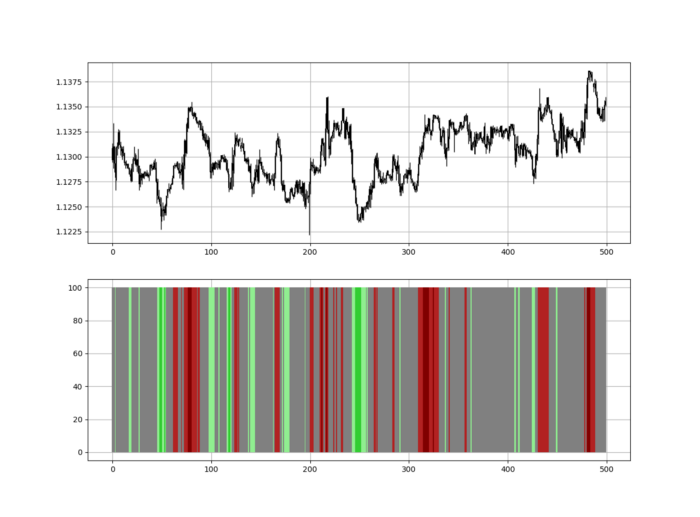

Zooming out yields a basic heatmap, as shown below.

Plot code:

```python
import matplotlib.pyplot as plt

def indicator_plot(data, second_panel, window = 250):
    fig, ax = plt.subplots(2, figsize = (10, 5))
    sample = data[-window:, ]

    # Top panel: candlesticks (columns 0-3 hold open, high, low, close)
    for i in range(len(sample)):
        ax[0].vlines(x = i, ymin = sample[i, 2], ymax = sample[i, 1], color = 'black', linewidth = 1)
        if sample[i, 3] > sample[i, 0]:
            ax[0].vlines(x = i, ymin = sample[i, 0], ymax = sample[i, 3], color = 'black', linewidth = 1.5)
        if sample[i, 3] < sample[i, 0]:
            ax[0].vlines(x = i, ymin = sample[i, 3], ymax = sample[i, 0], color = 'black', linewidth = 1.5)
        if sample[i, 3] == sample[i, 0]:
            ax[0].vlines(x = i, ymin = sample[i, 3], ymax = sample[i, 0], color = 'black', linewidth = 1.5)
    ax[0].grid()

    # Bottom panel: one color per 10-point RSI band, checked from the top down.
    # Each band is (lower bound, color); a value exactly on a boundary falls
    # into the band below it, so no reading is left undrawn.
    bands = [(90, 'red'), (80, 'darkred'), (70, 'maroon'), (60, 'firebrick'),
             (50, 'grey'), (40, 'grey'), (30, 'lightgreen'), (20, 'limegreen'),
             (10, 'seagreen'), (0, 'green')]
    for i in range(len(sample)):
        for lower, color in bands:
            if sample[i, second_panel] > lower:
                ax[1].vlines(x = i, ymin = 0, ymax = 100, color = color, linewidth = 1.5)
                break
    ax[1].grid()

# my_data is your OHLC array with the RSI in column index 4
indicator_plot(my_data, 4, window = 500)
```

Dark green and red areas indicate imminent bullish and bearish reactions, respectively. RSI around 50 is grey.
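The band lookup itself can be separated from the plotting, which makes it easy to check on its own. The sketch below is one way to do that with `np.digitize`; the edges and colors mirror the ten-step scheme in the plot code, while the names `EDGES`, `COLORS` and `rsi_colors` are invented for this example. It assumes finite RSI values, so drop any NaNs first.

```python
import numpy as np

# Upper edge of each band except the last; colors run from oversold to overbought.
EDGES = [10, 20, 30, 40, 50, 60, 70, 80, 90]
COLORS = ['green', 'seagreen', 'limegreen', 'lightgreen', 'grey',
          'grey', 'firebrick', 'maroon', 'darkred', 'red']


def rsi_colors(rsi_values):
    """Map each finite RSI value to the color of its 10-point band."""
    rsi_values = np.asarray(rsi_values, dtype=float)
    # right=True puts a value that sits exactly on an edge into the lower band
    band_index = np.digitize(rsi_values, EDGES, right=True)
    return [COLORS[b] for b in band_index]


print(rsi_colors([5, 15, 45, 55, 75, 95]))
```

The resulting list can be passed straight to a plotting call that accepts one color per bar, which avoids the long chain of conditions.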

Summary

To conclude, my goal is to contribute to objective technical analysis, which promotes more transparent methods and strategies that must be back-tested before implementation.

Technical analysis will lose its reputation as subjective and unscientific.

When you find a trading strategy or technique, follow these steps:

Put emotions aside and adopt a critical mindset.

Test it in the past under conditions and simulations taken from real life.

Try optimizing it and performing a forward test if you find any potential.

Transaction costs and any slippage simulation should always be included in your tests.

Risk management and position sizing should always be considered in your tests.

After checking the above, monitor the strategy because market dynamics may change and make it unprofitable.
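As a small illustration of the point about transaction costs, the sketch below subtracts a cost every time the position changes. It is a simplified model under stated assumptions: `positions[t]` is the position held during period `t`, the strategy starts flat, and the cost is a fixed fraction per unit of position traded. The function name and the numbers are hypothetical.

```python
import numpy as np


def net_returns(asset_returns, positions, cost_per_trade=0.0005):
    """Per-period strategy returns after a proportional cost on position changes."""
    asset_returns = np.asarray(asset_returns, dtype=float)
    positions = np.asarray(positions, dtype=float)
    if asset_returns.shape != positions.shape:
        raise ValueError("asset_returns and positions must have the same shape")
    gross = positions * asset_returns
    # Turnover is the absolute change in position; the first trade opens from flat.
    turnover = np.abs(np.diff(positions, prepend=0.0))
    return gross - turnover * cost_per_trade


# Hypothetical three-period example: long, long, then flat.
example = net_returns([0.01, -0.02, 0.03], [1, 1, 0], cost_per_trade=0.001)
print(example)
```

Strategies that flip positions often, such as contrarian RSI signals on short time frames, are the ones whose results change most once this term is included.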

Ray Dalio

3 years ago

The latest “bubble indicator” readings.

As you know, I like to turn my intuition into decision rules (principles) that can be back-tested and automated to create a portfolio of alpha bets. I use one for bubbles. Having seen many bubbles in my 50+ years of investing, I described what makes a bubble and how to identify them in markets—not just stocks.

A bubble market has a high degree of the following:

- High prices compared to traditional values (e.g., by taking the present value of their cash flows for the duration of the asset and comparing it with their interest rates).

- Conditions incompatible with long-term growth (e.g., extrapolating past revenue and earnings growth rates late in the cycle).

- Many new and inexperienced buyers were drawn in by the perceived hot market.

- Broad bullish sentiment.

- Debt financing a large portion of purchases.

- Lots of forward and speculative purchases to profit from price rises (e.g., inventories that are more than needed, contracted forward purchases, etc.).

I use these criteria to assess all markets for bubbles. I have periodically shown you these for stocks and the stock market.

What Was Shown in January Versus Now

I will first describe the picture in words, then show it in charts, and compare it to the last update in January.

As of January, the bubble indicator showed that a) the US equity market was in a moderate bubble, but not an extreme one (i.e., 70 percent of the way toward the highest bubble, which occurred in the late 1990s and late 1920s), and b) the emerging tech companies (i.e. As well, the unprecedented flood of liquidity post-COVID financed other bubbly behavior (e.g., SPACs, IPO boom, big pickup in options activity), making things bubbly. I showed which stocks were in bubbles and created an index of those stocks, which I call “bubble stocks.”

Those bubble stocks have popped. They fell by a third last year, while the S&P 500 remained flat. In light of these and other market developments, it is not necessarily true that now is a good time to buy emerging tech stocks.

The fact that they aren't at a bubble extreme doesn't mean they are safe or that it's a good time to get long. Our metrics still show that US stocks are overvalued. Once popped, bubbles tend to overcorrect to the downside rather than settle at “normal” prices.

The following charts paint the picture. The first shows the US equity market bubble gauge/indicator going back to 1900, currently at the 40th percentile. The charts also zoom in on the gauge in recent years, as well as the late 1920s and late 1990s bubbles (in both cases the gauge reached 100 percent).

The chart below depicts the average bubble gauge for the most bubbly companies in 2020. Those readings are down significantly.

The charts below compare the performance of a basket of emerging tech bubble stocks to the S&P 500. Prices have fallen noticeably, giving up most of their post-COVID gains.

The following charts show the price action of the bubble slice today and in the 1920s and 1990s. These charts show the same market dynamics and two key indicators. These are just two examples of how a lot of debt financing stock ownership coupled with a tightening typically leads to a bubble popping.

Everything driving the bubbles in this market segment is classic—the same drivers that drove the 1920s bubble and the 1990s bubble. For instance, in the last couple months, it was how tightening can act to prick the bubble. Review this case study of the 1920s stock bubble (starting on page 49) from my book Principles for Navigating Big Debt Crises to grasp these dynamics.

The following charts show the components of the US stock market bubble gauge. Since this is a proprietary indicator, I will only show you some of the sub-aggregate readings and some indicators.

Each of these six influences is measured using a number of stats. This is how I approach the stock market. These gauges are combined into aggregate indices by security and then for the market as a whole. The table below shows the current readings of these US equity market indicators. It compares current conditions for US equities to historical conditions. These readings suggest that we’re out of a bubble.
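To make the idea of a percentile reading concrete, here is a rough sketch of how a single gauge could be ranked against its own history and how several gauges could be averaged. It is not the proprietary indicator: the equal-weight average, the function names, and every number below are assumptions made up for illustration.

```python
import numpy as np


def percentile_rank(history, current):
    """Share of historical readings at or below the current one, in percent."""
    history = np.asarray(history, dtype=float)
    return float(100.0 * np.mean(history <= current))


def aggregate_gauge(percentiles):
    """Equal-weight average of gauge percentiles (an assumed weighting)."""
    return float(np.mean(list(percentiles.values())))


# Hypothetical history of one gauge and a hypothetical current reading.
history = np.arange(1, 101)
print(percentile_rank(history, 40))

# Hypothetical percentile readings for the six gauges.
hypothetical_gauges = {
    "prices": 50, "unsustainable_growth": 60, "new_buyers": 30,
    "sentiment": 20, "leverage": 50, "forward_purchases": 40,
}
print(aggregate_gauge(hypothetical_gauges))
```

Ranking each gauge against its own history puts very different statistics on a common 0 to 100 scale, which is what allows them to be combined at all.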

1. How High Are Prices Relatively?

This price gauge for US equities is currently around the 50th percentile.

2. Are Prices Discounting Unsustainable Conditions?

This measure calculates the earnings growth rate required to outperform bonds. This is calculated by adding up the readings of individual securities. This indicator is currently near the 60th percentile for the overall market, higher than some of our other readings. Profit growth discounted in stocks remains high.

This is even more so in the US software sector. Analysts' earnings growth expectations for this sector have slowed, but remain high historically. P/Es have reversed their COVID gains but also remain high historically.

3. How many new buyers (i.e., non-existing buyers) entered the market?

Expansion of new entrants is often indicative of a bubble. According to historical accounts, this was true in the 1990s equity bubble and the 1929 bubble (though our data for this and other gauges doesn't go back that far). A flood of new retail investors into popular stocks, which by other measures appeared to be in a bubble, pushed this gauge above the 90% mark in 2020. The pace of retail activity in the markets has recently slowed to pre-COVID levels.

4. How Broadly Bullish Is Sentiment?

The more people who have invested, the less resources they have to keep investing, and the more likely they are to sell. Market sentiment is now significantly negative.

5. Are Purchases Being Financed by High Leverage?

Leveraged purchases weaken the buying foundation and expose it to forced selling in a downturn. The leverage gauge, which considers option positions as a form of leverage, is now around the 50% mark.

6. To What Extent Have Buyers Made Exceptionally Extended Forward Purchases?

Looking at future purchases can help assess whether expectations have become overly optimistic. This indicator is particularly useful in commodity and real estate markets, where forward purchases are most obvious. In the equity markets, I look at indicators like capital expenditure, or how much businesses (and governments) invest in infrastructure, factories, etc. It reflects whether businesses are projecting future demand growth. Like other gauges, this one is at the 40th percentile.

What one does with it is a tactical choice. While the reversal has been significant, future earnings discounting remains high historically. In either case, bubbles tend to overcorrect (sell off more than the fundamentals suggest) rather than simply deflate. But I wanted to share these updated readings with you in light of recent market activity.

You might also like

Nikhil Vemu

3 years ago

7 Mac Apps That Are Exorbitantly Priced But Totally Worth It

Wish you more bang for your buck

By ‘Cost a Bomb’ I didn’t mean to exaggerate. It’s an idiom that means ‘To be very expensive’. In fact, no app on the planet costs a bomb lol.

So, to the point.

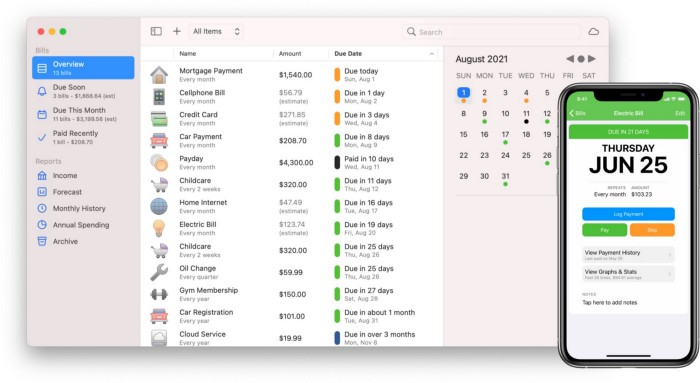

Chronicle

(Freemium. For Pro, $24.99 | Available on Setapp)

You probably have trouble keeping track of dozens of bills and subscriptions each month.

Try Chronicle.

Easy-to-use app

Add payment due dates and receive reminders,

Save payment documentation,

Analyze your spending by season, year, and month.

Observe expenditure trends and create new budgets.

Best of all, Chronicle features an integrated browser for fast payment and logging.

iOS and macOS sync.

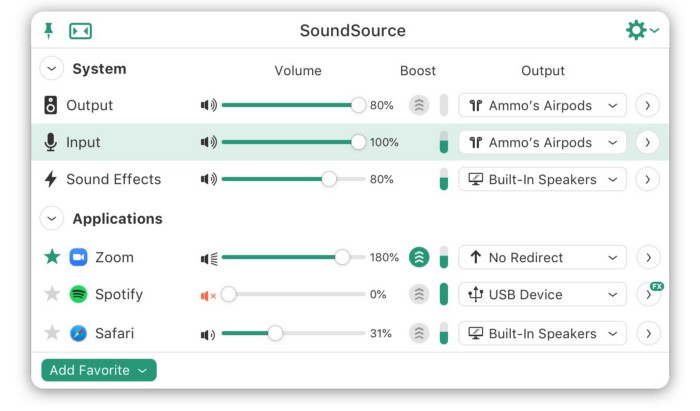

SoundSource

($39 for lifetime)

Background Music, a free macOS program, was featured in #6 of this post last month.

It controls per-app volume and stereo balance, and boosts audio beyond its max level.

SoundSource does everything Background Music does. Additionally, you can:

Connect various speakers to various apps (Wow! ),

change the audio sample rate for each app,

To facilitate access, add a floating SoundSource window.

Use its blocks in Shortcuts app,

On the menu bar, include meters for output/input devices and running programs.

PixelSnap

($39 for lifetime | Available on Setapp)

This software is heaven for UI designers.

It helps you:

Quickly measure screen distances (in pixels),

Drag an area around an object to determine its borders,

Measure the distances between additional guides,

Take pixel-perfect screenshots.

What’s more.

You can

Adapt your tolerance for items with poor contrast and shadows.

Use your Touch Bar to perform important tasks, if you have one.

Mate Translation

($3.99 a month / $29.99 a year | Available on Setapp)

Mate Translate resembles a roided-up version of BarTranslate, which I wrote about in #1 of this piece last month.

If you translate often, utilize Mate Translate on macOS and Safari.

I'm really vocal about it.

It stays on the menu bar, and is accessible with a click or ⌥+shift+T hotkey.

It lets you

Translate in 103 different languages,

To translate text, double-click or right-click on it.

Totally translate websites. Additionally, Netflix subtitles,

Listen to their pronunciation to see how close it is to human.

iPhone and Mac sync Mate-ing history.

Swish

($16 for lifetime | Available on Setapp)

Swish is awesome!

Swipe, squeeze, tap, and hold movements organize chaotic desktop windows. Swish operates with mouse and trackpad.

Some gestures:

• Pinch Once: Close an app

• Pinch Twice: Quit an app

• Swipe down once: Minimise an app

• Pinch Out: Enter fullscreen mode

• Tap, Hold, & Swipe: Arrange apps in grids

and many more...

After getting acquainted with the movements, your multitasking will improve.

Unite

($24.99 for lifetime | Available on Setapp)

It turns webapps into macOS apps. The end.

Unite's functionality is a million times better.

Provide extensive customization (incl. its icon, light and dark modes)

make menu bar applications,

Get badges for web notifications and automatically refresh websites,

Replace any dock icon in the window with it (Wow!) by selecting that portion of the window.

Use PiP (Picture-in-Picture) on video sites that support it.

Delete advertising,

Throughout macOS, use floating windows

and many more…

I feel $24.99 one-off for this tool is a great deal, considering all these features. What do you think?

CleanShot X

(Basic: $29 one-off. Pro: $8/month | Available on Setapp)

CleanShot X can achieve things the macOS screenshot tool cannot. Complete screenshot toolkit.

CleanShot X, like PixelSnap (#3), is fantastic.

It allows you to:

Scroll to capture a long page,

Record your screen,

• With webcam on,

• With mic and system audio,

• Highlighting mouse clicks and hotkeys.

Maintain floating screenshots for reference

While capturing, conceal desktop icons and notifications.

Recognize text in screenshots (OCR),

You may upload and share screenshots using the built-in cloud.

These are just 6 of 50+ features, and you’re already saying Wow!

Entreprogrammer

3 years ago

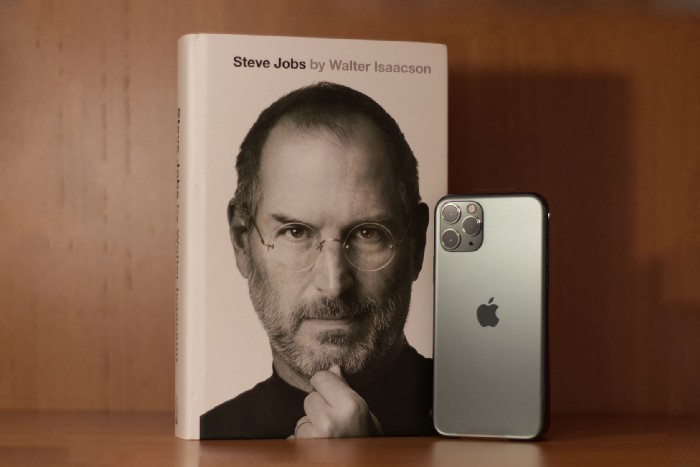

The Steve Jobs Formula: A Guide to Everything

A must-read for everyone

Jobs is well-known. You probably know the tall, thin guy who wore the same clothing every day. His influence is unavoidable. In fewer than 40 years, Jobs' innovations have impacted computers, movies, cellphones, music, and communication.

Steve Jobs may be more imaginative than the typical person, but if we can use some of his ingenuity, ambition, and good traits, we'll be successful. This essay explains how to follow his guidance and success secrets.

1. Repetition is necessary for success.

Be patient and diligent to master something. Practice makes perfect. This is why older workers are often more skilled.

How long should you repeat a task? Until you're confident and excited to share your product. That's when to stop tweaking and repeating.

Jobs stated he'd make the crowd sh** their pants with an iChat demo.

Use this in your daily life.

Start with the end in mind. You can put it in writing and be as detailed as you like with your plan's schedule and metrics. For instance, you have a goal of selling three coffee makers in a week.

Break it down, break the goal down into particular tasks you must complete, and then repeat those tasks. To sell your coffee maker, you might need to make 50 phone calls.

Be mindful of the amount of work necessary to produce the desired results. Continue doing this until you are happy with your product.

2. Acquire the ability to add and subtract.

How did Picasso invent cubism? Pablo Picasso was influenced by stylised, non-naturalistic African masks that depict a human figure.

Artists create, constantly seeking inspiration and thinking creatively about random objects. Jobs said creativity is just connecting things. Creative people feel a little guilty when asked how they achieved something unique, because they didn't really do it. They just saw something. They had mastered connecting and synthesizing experiences.

Use this in your daily life.

On your phone, there is a note-taking app. Ideas for what you desire to learn should be written down. It may be learning a new language, calligraphy, or anything else that inspires or intrigues you.

Note any ideas you have, quotations, or any information that strikes you as important.

Spend time with smart individuals, that is the most important thing. Jim Rohn, a well-known motivational speaker, has observed that we are the average of the five people with whom we spend the most time.

Learning alone won't get you very far. You need to put what you've learnt into practice. If you don't use your knowledge and skills, they are useless.

3. Develop the ability to refuse.

Steve Jobs deleted thousands of items when he created Apple's design ethic. Saying no to distractions meant upsetting customers and partners.

John Sculley, the former CEO of Apple, said something like this. According to Sculley, Steve’s methodology differs from others as he always believed that the most critical decisions are things you choose not to do.

Use this in your daily life.

Never be afraid to say "no," "I won't," or "I don't want to." Keep it simple. This method works well in some situations.

Give a different option. For instance: "I won't be able to do it, but X might be interested."

Control your top priority. Before saying yes to anything, make sure your work schedule and priority list are up to date.

4. Follow your passion

“Follow your passion” is the worst advice people can give you. Steve Jobs didn't start Apple because he suddenly loved computers. He wanted to help others attain their maximum potential.

Great things take a lot of work, so quitting makes sense if you're not passionate. Jobs learned from history that successful people were passionate about their work and persisted through challenges.

Use this in your daily life.

Don't throw yourself into your passion all at once. Allow it to develop day by day. Keep working at your 9-to-5 job while carefully gauging your level of desire and endurance. That way there is less risk.

The truth is that if you decide to work on a project by yourself rather than in a group, it will take you years to complete it instead of a week. Instead, network with others who have interests in common.

Prepare a fallback strategy in case things go wrong.

Success, this small two-syllable word eventually gives your life meaning, a perspective. What is success? For most, it's achieving their ambitions. However, there's a catch. Successful people aren't always happy.

Furthermore, where do people’s goals and achievements end? It’s a never-ending process. Success is a journey, not a destination. We wish you not to lose your way on this journey.

Theo Seeds

3 years ago

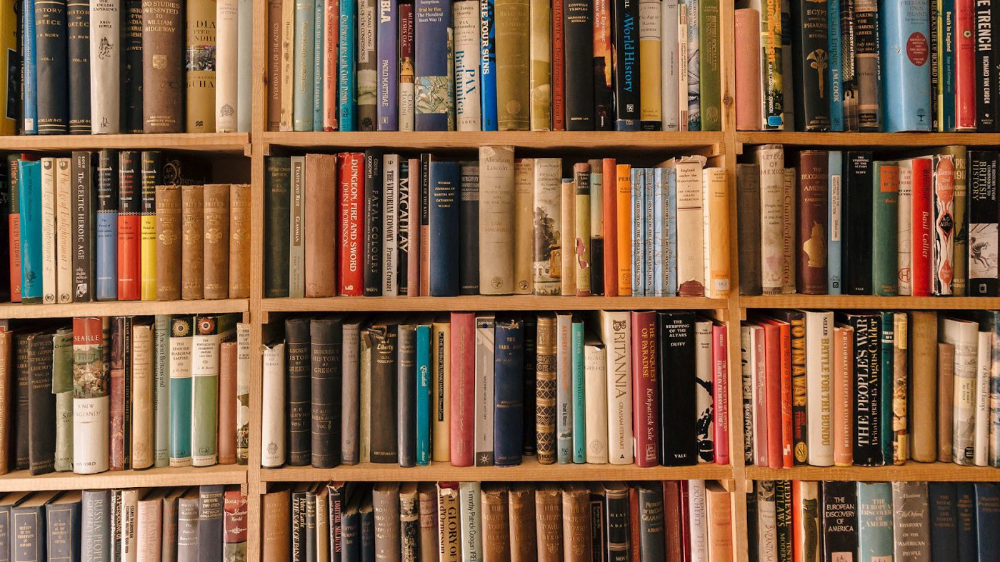

The nine books that have fundamentally altered the way I view the world

I read 53 books last year and hope to do so again.

Books are best if you love learning. You get a range of perspectives, unlike podcasts and YouTube channels where you get the same ones.

Book quality varies. I've read useless books. Still, most books teach me something.

These 9 books have changed my outlook in recent years. They've made me rethink what I believed or introduced me to a fresh perspective that changed my worldview.

You can order these books yourself. Or, read my summaries to learn what I've synthesized.

Enjoy!

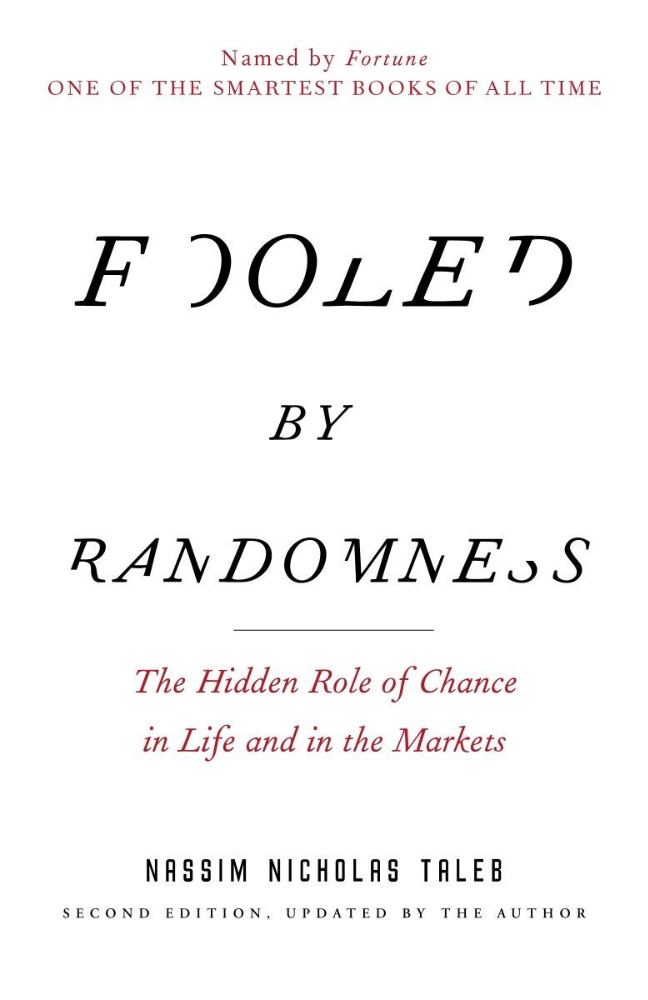

Fooled By Randomness

Nassim Taleb worked as a Wall Street trader. He used options trading to bet on unlikely events like stock market crashes.

Using financial models, investors predict stock prices. The models assume constant, predictable company growth.

These models base their assumptions on historical data, so they assume the future will be like the past.

Fooled By Randomness argues that the future won't be like the past. We often see impossible market crashes like 2008's housing market collapse. The world changes too quickly to use historical data: by the time we understand how it works, it's changed.

Most people don't live to see history unfold. We think our childhood world will last forever. That goes double for stable societies like the U.S., which hasn't seen major turbulence in anyone's lifetime.

Fooled By Randomness taught me to expect the unexpected. The world is deceptive and rarely works as we expect. You can't always trust your past successes or what you've learned.

Antifragile

More Taleb. Some things, like the restaurant industry and the human body, improve under conditions of volatility and turbulence.

We didn't have a word for this counterintuitive concept until Taleb wrote Antifragile. The human body (which responds to some stressors, like exercise, by getting stronger) and the restaurant industry both benefit long-term from disorder (when economic turbulence happens, bad restaurants go out of business, improving the industry as a whole).

Many human systems are designed to minimize short-term variance because humans don't understand it. By eliminating short-term variation, we increase the likelihood of a major disaster.

Once, we put out every forest fire we found. Then, dead wood piled up in forests, causing catastrophic fires.

We don't like price changes, so politicians prop up markets with stimulus packages and printing money. This leads to a bigger crash later. Two years ago, we printed a ton of money for stimulus checks, and now we have double-digit inflation.

Antifragile taught me how important Plan B is. A system with one or two major weaknesses will fail. Make large systems redundant, foolproof, and change-responsive.

Reality is broken

We dread work. Work is tedious. Right?

Wrong. Work gives many people purpose. People are happiest when working. (That's why some are workaholics.)

Factory work saps your soul, office work is boring, and working for a large company you don't believe in and that operates unethically isn't satisfying.

Jane McGonigal says in Reality Is Broken that meaningful work makes us happy. People love games because they simulate good work. McGonigal says work should be more fun.

Some think they'd be happy on a private island sipping cocktails all day. That's not true. Without anything to do, most people would be bored. Unemployed people are miserable. Many retirees reportedly die within two years of leaving work, far more than would otherwise be expected.

Instead of complaining, find meaningful work. If you don't like your job, it's because you're in the wrong environment. Find the right setting.

The Lean Startup

Before the airplane was invented, Harvard scientists researched flying machines. Who knew two bicycle-shop weirdos from Ohio, testing their machines in North Carolina, would beat them?

The Wright Brothers' approach to design was key. The Harvard researchers were mostly theoretical, designing an airplane on paper and trying to make it fly in theory. They'd build it, test it, and it wouldn't fly.

The Wright Brothers were different. They'd build a cheap plane, test it, and it'd crash. Then they'd learn from their mistakes, build another plane, and it'd crash.

They repeated this until they fixed all the problems and one of their planes stayed aloft.

Mistakes are considered bad. On the African savannah, one mistake meant death. Even today, if you make a costly mistake at work, you'll be fired as a scapegoat. Most people avoid failing.

In reality, making mistakes is the best way to learn.

Eric Ries offers an unintuitive recipe in The Lean Startup: come up with a hypothesis, test it, and fail. Then, try again with a new hypothesis. Keep trying, learning from each failure.

This is a great startup strategy. Startups are new businesses. Startups face uncertainty. Run lots of low-cost experiments to fail, learn, and succeed.

Don't fear failing. Low-cost failure is good because you learn more from it than you lose. As long as your worst-case scenario is acceptable, risk-taking is good.

The Sovereign Individual

Today, nation-states rule the world. The UN recognizes 195 countries, and they claim almost all land outside of Antarctica.

We take this for granted. Yet for most of the past 2,000 years, much of the world's territory was ungoverned.

Why today? Because technology has created incentives for nation-states for most of the past 500 years. The logic of violence favors nation-states, according to James Dale Davidson, co-author of The Sovereign Individual. Governments have a lot to gain by conquering as much territory as possible, so they do.

It wasn't always this way. During the Dark Ages, Europe was fragmented and had few central governments, partly because of armor. With armor, a sword, and a horse, you couldn't be stopped. Large states were hard to form because they rely on the threat of violence.

When gunpowder became popular in Europe, violence changed. In a world with guns, assembling large armies and conquering territory became cheaper.

James Dale Davidson says the internet will make nation-states obsolete. Most of the world's wealth will be online and in people's heads, making capital mobile.

Nation-states rely on predatory taxation of the rich to fund large militaries and welfare programs.

When capital is mobile, people can live anywhere in the world, Davidson says, making predatory taxation impossible. They're not bound by the location of their job, land, or factory. They can go wherever they're treated best.

Davidson says that over the next century, nation-states will collapse because they won't have enough money to operate as they do now. He imagines a world of small city-states, like Italy before its unification (or Singapore today).

We've already seen some movement toward a more Sovereign Individual-like world. The pandemic proved large-scale remote work is possible, freeing workers from their location. Many cities and countries offer remote workers incentives to relocate.

Many Western businesspeople live in tax havens, and more people are renouncing their US citizenship due to high taxes. Increasing globalization has led to poor economic conditions and resentment among average people in the West, which is why politicians like Trump and Sanders rose to popularity with angry rhetoric, whereas Obama had earlier risen to popularity with a more hopeful message.

The Sovereign Individual convinced me, much as Nassim Taleb's books did, that the future will look different from the present. Large countries like the U.S. will likely lose influence in the coming decades, while Portugal, Singapore, and Turkey will rise. If the trend toward less freedom continues, people may flee the West en masse.

So a traditional life of college, a big firm job, hard work, and corporate advancement may not be wise. Young people should learn as much as possible and develop flexible skills to adapt to the future.

Sapiens

Sapiens is a history of humanity, from proto-humans in Ethiopia to our internet society today, with some future speculation.

Sapiens views humans (specifically Homo sapiens) as a unique species on Earth. We were just another animal 100,000 years ago. We're slowly becoming gods, able to affect the climate, travel to every corner of the Earth (and the Moon), build weapons that can kill us all, and wipe out thousands of species.

Sapiens examines what makes Homo sapiens unique. Humans can believe in myths like religion, money, and human-made entities like countries and LLCs.

These myths facilitate large-scale cooperation. Ants cooperate only with ants from the same colony, but any two humans can trade. Even if they're not genetically related, large groups can bond over religion and nationality.

Combine that with intelligence, and you have a species capable of amazing feats.

Sapiens may make your head explode because it looks at the world without presupposing values, unlike most books. It questions things that aren't usually questioned and says provocative things.

It also shows how human history works. It may help you understand and predict the world. Maybe.

The 4-Hour Workweek

Things can be done better.

Tradition, laziness, bad bosses, or incentive structures cause complacency. If you're willing to make changes and not settle for the status quo, you can do whatever you do better and achieve more in less time.

The 4-Hour Workweek advocates this. Tim Ferriss explains how he made more sales in 2 hours than his 8-hour-a-day colleagues.

By firing 2 of his most annoying customers and empowering his customer service reps to make more decisions, he was able to leave his business and travel to Europe.

Ferriss shows how to escape your 9-to-5, outsource your life, develop a business that feeds you with little time, and go on mini-retirement adventures abroad.

Don't accept the status quo. Instead, level up. Find a way to improve your results. And try new things.

Why Nations Fail

Nogales, Arizona and Nogales, Mexico were once one town. The US/Mexico border was arbitrarily drawn through it.

Both towns have similar cultures and populations. Nogales, Arizona is well-developed and has a high standard of living. Nogales, Mexico is underdeveloped and has a low standard of living. Whoa!

Why Nations Fail explains how government-created institutions affect a country's development. Strong property rights, capitalism, and non-corrupt governments promote development. Countries without capitalism or strong property rights, or with corrupt governments, don't develop.

Successful countries must also embrace creative destruction. They must offer ordinary citizens a way to improve their lot by creating value for others, not reduce them to slaves, serfs, or peasants. The authors say that ordinary people could get rich on trading expeditions in 11th-century Venice.

East and West Germany and North and South Korea ended up with different economies because their citizens faced different incentives. This also explains why Chile, China, and Singapore grew so quickly after becoming market economies.

People have spent a lot of money on third-world poverty. According to Why Nations Fail, education and infrastructure aren't the answer. Developing nations must adopt free-market economic policies.

Elon Musk

Elon Musk is the world's richest man, but that's not a good way to describe him. It's like calling Steve Jobs a turtleneck-wearer or Benjamin Franklin a printer.

Elon Musk does cool sci-fi stuff to help humanity avoid existential threats.

Oil will run out. We've delayed this by developing better extraction methods. We only have so much nonrenewable oil.

Our society is doomed if it depends on oil. Elon Musk invested heavily in Tesla and SolarCity to speed the shift to renewable energy.

Musk worries about AI: we'll build machines smarter than us. We won't be able to stop these machines if something goes wrong, just like cows can't fight humans. That's the idea behind Neuralink: we need to be smarter to compete with AI when the time comes.

If Earth becomes uninhabitable, we need a backup plan. An asteroid or nuclear war could strike Earth at any moment. If it happens, we may have only days to react. We must build a new civilization while times are good and resources are plentiful.

Short-term problems dominate our politics, but long-term issues are more important. Long-term problems can cause mass casualties and homelessness. Musk demonstrates how to think long-term.

The main reason people are impressed by Elon Musk, and why Ashlee Vance's biography influenced me so much, is that he does impossible things.

Electric cars were once considered unprofitable, but Tesla has made them mainstream. SpaceX is the world's largest private space company.

People lack imagination and dismiss ideas they don't understand as impossible. Humanity is about pushing limits. Don't worry if your dreams seem impossible. Try anyway.

Thanks for reading.